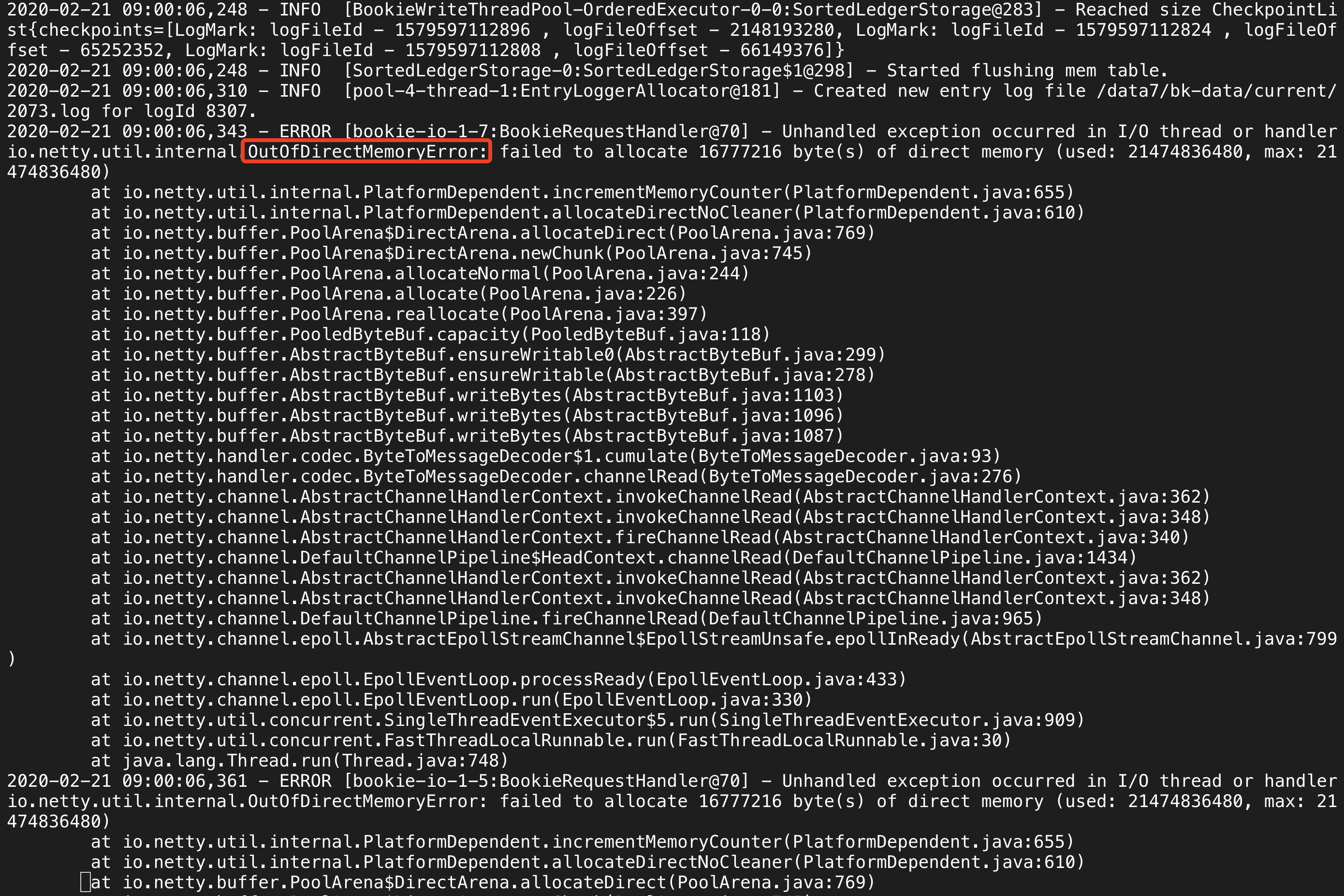

aloyszhang opened a new issue #2269: Bookie shutdown due to OutOfDirectMemoryError
URL: https://github.com/apache/bookkeeper/issues/2269

**BUG REPORT**

Bookie shutdown due to OutOfDirectMemoryError

***Describe the bug***

The bookie shuts down while Pulsar continuously sends messages.

```
ERROR [bookie-io-1-19:BookieRequestHandler@70] - Unhandled exception occurred in I/O thread or handler
io.netty.util.internal.OutOfDirectMemoryError: failed to allocate 16777216 byte(s) of direct memory (used: 21474836480, max: 21474836480)
	at io.netty.util.internal.PlatformDependent.incrementMemoryCounter(PlatformDependent.java:655)
	at io.netty.util.internal.PlatformDependent.allocateDirectNoCleaner(PlatformDependent.java:610)
	at io.netty.buffer.PoolArena$DirectArena.allocateDirect(PoolArena.java:769)
	at io.netty.buffer.PoolArena$DirectArena.newChunk(PoolArena.java:745)
	at io.netty.buffer.PoolArena.allocateNormal(PoolArena.java:244)
	at io.netty.buffer.PoolArena.allocate(PoolArena.java:226)
	at io.netty.buffer.PoolArena.reallocate(PoolArena.java:397)
	at io.netty.buffer.PooledByteBuf.capacity(PooledByteBuf.java:118)
	at io.netty.buffer.AbstractByteBuf.ensureWritable0(AbstractByteBuf.java:299)
	at io.netty.buffer.AbstractByteBuf.ensureWritable(AbstractByteBuf.java:278)
	at io.netty.buffer.AbstractByteBuf.writeBytes(AbstractByteBuf.java:1103)
	at io.netty.buffer.AbstractByteBuf.writeBytes(AbstractByteBuf.java:1096)
	at io.netty.buffer.AbstractByteBuf.writeBytes(AbstractByteBuf.java:1087)
	at io.netty.handler.codec.ByteToMessageDecoder$1.cumulate(ByteToMessageDecoder.java:93)
	at io.netty.handler.codec.ByteToMessageDecoder.channelRead(ByteToMessageDecoder.java:276)
	at io.netty.channel.AbstractChannelHandlerContext.invokeChannelRead(AbstractChannelHandlerContext.java:362)
	at io.netty.channel.AbstractChannelHandlerContext.invokeChannelRead(AbstractChannelHandlerContext.java:348)
	at io.netty.channel.AbstractChannelHandlerContext.fireChannelRead(AbstractChannelHandlerContext.java:340)
	at io.netty.channel.DefaultChannelPipeline$HeadContext.channelRead(DefaultChannelPipeline.java:1434)
	at io.netty.channel.AbstractChannelHandlerContext.invokeChannelRead(AbstractChannelHandlerContext.java:362)
	at io.netty.channel.AbstractChannelHandlerContext.invokeChannelRead(AbstractChannelHandlerContext.java:348)
	at io.netty.channel.DefaultChannelPipeline.fireChannelRead(DefaultChannelPipeline.java:965)
	at io.netty.channel.epoll.AbstractEpollStreamChannel$EpollStreamUnsafe.epollInReady(AbstractEpollStreamChannel.java:799)
	at io.netty.channel.epoll.EpollEventLoop.processReady(EpollEventLoop.java:433)
	at io.netty.channel.epoll.EpollEventLoop.run(EpollEventLoop.java:330)
	at io.netty.util.concurrent.SingleThreadEventExecutor$5.run(SingleThreadEventExecutor.java:909)
	at io.netty.util.concurrent.FastThreadLocalRunnable.run(FastThreadLocalRunnable.java:30)
	at java.lang.Thread.run(Thread.java:748)
```

***To Reproduce***

In my case, 4 bookies are started with the following configuration.

bkenv.sh

```
BOOKIE_MAX_HEAP_MEMORY=10g
BOOKIE_MIN_HEAP_MEMORY=10g
BOOKIE_MAX_DIRECT_MEMORY=20g
```

bk_server.sh

```
journalDirectories=/data2/bookkeeper/journal,/data3/bookkeeper/journal,/data4/bookkeeper/journal
ledgerDirectories=/data1/bk-data,/data5/bk-data,/data7/bk-data,/data8/bk-data,/data9/bk-data
```

The directories data1, data2, data3, data4, data5, data6, data7, data8 and data9 are on different disks, each with an individual disk driver.

Then start the client (actually Pulsar) to send messages using the pulsar-perf tool with the command:

`bin/pulsar-perf produce -threads 6 -u pulsar://10.56.204.197:6650,10.56.204.198:6650,10.56.205.196:6650 -o 10000 -n 1 -s 1000 -r 3000000 persistent://tenant-bk/namespace-bk/topic-bk`

***Expected behavior***

BookKeeper should have a rate-limiting mechanism to prevent OutOfDirectMemoryError from happening.

***Screenshots***
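
As a sanity check on the numbers in the log, here is a minimal sketch in Java. The class name `DirectMemoryCheck` and its helper methods are hypothetical and exist only for illustration. The limit check is an assumption about how the allocator counts memory (a request fails when `used + requested` would exceed `max`), not a copy of Netty's code. The three constants are taken from the error message above.

```java
// Hypothetical helper, for illustration only: checks the figures reported
// in the OutOfDirectMemoryError message against the bkenv.sh setting.
final class DirectMemoryCheck {
    // Values copied from the error message in the report.
    static final long REQUESTED_BYTES = 16_777_216L;
    static final long USED_BYTES = 21_474_836_480L;
    static final long MAX_BYTES = 21_474_836_480L;

    // Binary units, as used by JVM size suffixes such as "20g".
    static long mib(long n) { return n * 1024L * 1024L; }
    static long gib(long n) { return n * 1024L * 1024L * 1024L; }

    // Assumed limit check: the request fits only if it stays within max.
    static boolean canAllocate(long used, long max, long requested) {
        return used + requested <= max;
    }

    public static void main(String[] args) {
        System.out.println("max equals 20 GiB: " + (MAX_BYTES == gib(20)));
        System.out.println("request equals 16 MiB: " + (REQUESTED_BYTES == mib(16)));
        System.out.println("remaining bytes: " + (MAX_BYTES - USED_BYTES));
        System.out.println("request fits: "
                + canAllocate(USED_BYTES, MAX_BYTES, REQUESTED_BYTES));
    }
}
```

Under these assumptions, the reported `max` matches `BOOKIE_MAX_DIRECT_MEMORY=20g` exactly, the failed request is a single 16 MiB allocation (the trace passes through `PoolArena.newChunk`), and no direct memory was left when the request was made.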

----------------------------------------------------------------
This is an automated message from the Apache Git Service.
To respond to the message, please log on to GitHub and use the
URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:
[email protected]

With regards,
Apache Git Services