fsk119 commented on a change in pull request #14126:

URL: https://github.com/apache/flink/pull/14126#discussion_r527354544

##########

File path: docs/dev/table/connectors/upsert-kafka.zh.md

##########

@@ -29,36 +29,26 @@ under the License.

* This will be replaced by the TOC

{:toc}

-The Upsert Kafka connector allows for reading data from and writing data into

Kafka topics in the upsert fashion.

+Upsert Kafka 连接器支持以 upsert 方式从 Kafka topic 中读取数据并将数据写入 Kafka topic。

-As a source, the upsert-kafka connector produces a changelog stream, where

each data record represents

-an update or delete event. More precisely, the value in a data record is

interpreted as an UPDATE of

-the last value for the same key, if any (if a corresponding key doesn’t exist

yet, the update will

-be considered an INSERT). Using the table analogy, a data record in a

changelog stream is interpreted

-as an UPSERT aka INSERT/UPDATE because any existing row with the same key is

overwritten. Also, null

-values are interpreted in a special way: a record with a null value represents

a “DELETE”.

+作为 source,upsert-kafka 连接器生产 changelog 流,其中每条数据记录代表一个更新或删除事件。更准确地说,数据记录中的

value 被解释为同一 key 的最后一个 value 的 UPDATE,如果有这个 key(如果不存在相应的 key,则该更新被视为

INSERT)。用表来类比,更改日志流中的数据记录被解释为 UPSERT,也称为 INSERT/UPDATE,因为任何具有相同 key

的现有行都被覆盖。另外,value 为空的消息将会被视作为 DELETE 消息。

Review comment:

更改日志 -> changelog

##########

File path: docs/dev/table/connectors/upsert-kafka.zh.md

##########

@@ -29,36 +29,26 @@ under the License.

* This will be replaced by the TOC

{:toc}

-The Upsert Kafka connector allows for reading data from and writing data into

Kafka topics in the upsert fashion.

+Upsert Kafka 连接器支持以 upsert 方式从 Kafka topic 中读取数据并将数据写入 Kafka topic。

-As a source, the upsert-kafka connector produces a changelog stream, where

each data record represents

-an update or delete event. More precisely, the value in a data record is

interpreted as an UPDATE of

-the last value for the same key, if any (if a corresponding key doesn’t exist

yet, the update will

-be considered an INSERT). Using the table analogy, a data record in a

changelog stream is interpreted

-as an UPSERT aka INSERT/UPDATE because any existing row with the same key is

overwritten. Also, null

-values are interpreted in a special way: a record with a null value represents

a “DELETE”.

+作为 source,upsert-kafka 连接器生产 changelog 流,其中每条数据记录代表一个更新或删除事件。更准确地说,数据记录中的

value 被解释为同一 key 的最后一个 value 的 UPDATE,如果有这个 key(如果不存在相应的 key,则该更新被视为

INSERT)。用表来类比,更改日志流中的数据记录被解释为 UPSERT,也称为 INSERT/UPDATE,因为任何具有相同 key

的现有行都被覆盖。另外,value 为空的消息将会被视作为 DELETE 消息。

-As a sink, the upsert-kafka connector can consume a changelog stream. It will

write INSERT/UPDATE_AFTER

-data as normal Kafka messages value, and write DELETE data as Kafka messages

with null values

-(indicate tombstone for the key). Flink will guarantee the message ordering on

the primary key by

-partition data on the values of the primary key columns, so the

update/deletion messages on the same

-key will fall into the same partition.

+作为 sink,upsert-kafka 连接器可以消费 changelog 流。它会将 INSERT/UPDATE_AFTER 数据作为正常的 Kafka

消息写入,并将 DELETE 数据以 value 为空的 Kafka 消息写入(表示对应 key 的消息被删除)。Flink

将根据主键列的值对数据进行分区,从而保证主键上的消息有序,因此同一 key 上的更新/删除消息将落在同一分区中。

-Dependencies

+依赖

------------

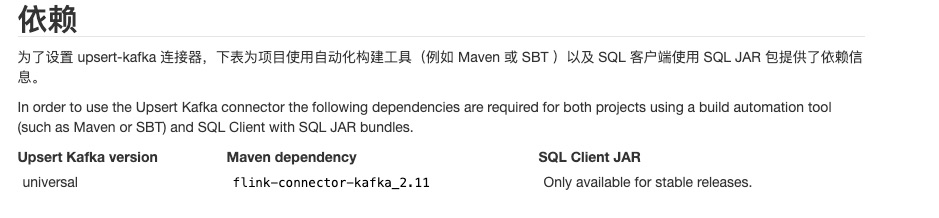

-In order to set up the upsert-kafka connector, the following table provide

dependency information for

-both projects using a build automation tool (such as Maven or SBT) and SQL

Client with SQL JAR bundles.

+为了设置 upsert-kafka 连接器,下表为项目使用自动化构建工具(例如 Maven 或 SBT )以及 SQL 客户端使用 SQL JAR

包提供了依赖信息。

Review comment:

Please also delete this, as you did in upsert-kafka.md. We should use

`sql-connector-download-table.html` to generate the introduction, but

currently we only have the English version of that page.

##########

File path: docs/dev/table/connectors/upsert-kafka.zh.md

##########

@@ -29,36 +29,26 @@ under the License.

* This will be replaced by the TOC

{:toc}

-The Upsert Kafka connector allows for reading data from and writing data into

Kafka topics in the upsert fashion.

+Upsert Kafka 连接器支持以 upsert 方式从 Kafka topic 中读取数据并将数据写入 Kafka topic。

-As a source, the upsert-kafka connector produces a changelog stream, where

each data record represents

-an update or delete event. More precisely, the value in a data record is

interpreted as an UPDATE of

-the last value for the same key, if any (if a corresponding key doesn’t exist

yet, the update will

-be considered an INSERT). Using the table analogy, a data record in a

changelog stream is interpreted

-as an UPSERT aka INSERT/UPDATE because any existing row with the same key is

overwritten. Also, null

-values are interpreted in a special way: a record with a null value represents

a “DELETE”.

+作为 source,upsert-kafka 连接器生产 changelog 流,其中每条数据记录代表一个更新或删除事件。更准确地说,数据记录中的

value 被解释为同一 key 的最后一个 value 的 UPDATE,如果有这个 key(如果不存在相应的 key,则该更新被视为

INSERT)。用表来类比,更改日志流中的数据记录被解释为 UPSERT,也称为 INSERT/UPDATE,因为任何具有相同 key

的现有行都被覆盖。另外,value 为空的消息将会被视作为 DELETE 消息。

-As a sink, the upsert-kafka connector can consume a changelog stream. It will

write INSERT/UPDATE_AFTER

-data as normal Kafka messages value, and write DELETE data as Kafka messages

with null values

-(indicate tombstone for the key). Flink will guarantee the message ordering on

the primary key by

-partition data on the values of the primary key columns, so the

update/deletion messages on the same

-key will fall into the same partition.

+作为 sink,upsert-kafka 连接器可以消费 changelog 流。它会将 INSERT/UPDATE_AFTER 数据作为正常的 Kafka

消息写入,并将 DELETE 数据以 value 为空的 Kafka 消息写入(表示对应 key 的消息被删除)。Flink

将根据主键列的值对数据进行分区,从而保证主键上的消息有序,因此同一 key 上的更新/删除消息将落在同一分区中。

Review comment:

> Flink 将根据主键列的值对数据进行分区,从而保证主键上的消息有序,因此同一 key 上的更新/删除消息将落在同一分区中

Flink 将根据主键列的值对数据进行分区,从而保证主键上的消息有序,因此同一主键上的更新/删除消息将落在同一分区中。

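Since the first half of the sentence uses the Chinese term 主键 (primary key), I think using the Chinese term in the second half as well would be more consistent.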

----------------------------------------------------------------

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

[email protected]