openinx commented on a change in pull request #2229:

URL: https://github.com/apache/iceberg/pull/2229#discussion_r591267913

##########

File path: flink/src/main/java/org/apache/iceberg/flink/IcebergTableSource.java

##########

@@ -100,70 +93,73 @@ public boolean isBounded() {

.build();

}

- @Override

- public TableSchema getTableSchema() {

- return schema;

- }

-

- @Override

- public DataType getProducedDataType() {

- return getProjectedSchema().toRowDataType().bridgedTo(RowData.class);

- }

-

private TableSchema getProjectedSchema() {

- TableSchema fullSchema = getTableSchema();

if (projectedFields == null) {

- return fullSchema;

+ return schema;

} else {

- String[] fullNames = fullSchema.getFieldNames();

- DataType[] fullTypes = fullSchema.getFieldDataTypes();

+ String[] fullNames = schema.getFieldNames();

+ DataType[] fullTypes = schema.getFieldDataTypes();

return TableSchema.builder().fields(

Arrays.stream(projectedFields).mapToObj(i -> fullNames[i]).toArray(String[]::new),

Arrays.stream(projectedFields).mapToObj(i -> fullTypes[i]).toArray(DataType[]::new)).build();

}

}

@Override

- public String explainSource() {

- String explain = "Iceberg table: " + loader.toString();

- if (projectedFields != null) {

- explain += ", ProjectedFields: " + Arrays.toString(projectedFields);

- }

-

- if (isLimitPushDown) {

- explain += String.format(", LimitPushDown : %d", limit);

- }

+ public void applyLimit(long newLimit) {

+ this.limit = newLimit;

+ }

- if (isFilterPushedDown()) {

- explain += String.format(", FilterPushDown: %s", COMMA.join(filters));

+ @Override

+ public Result applyFilters(List<ResolvedExpression> flinkFilters) {

+ List<ResolvedExpression> acceptedFilters = Lists.newArrayList();

+ List<Expression> expressions = Lists.newArrayList();

+

+ for (ResolvedExpression resolvedExpression : flinkFilters) {

+ Optional<Expression> icebergExpression = FlinkFilters.convert(resolvedExpression);

+ if (icebergExpression.isPresent()) {

+ expressions.add(icebergExpression.get());

+ acceptedFilters.add(resolvedExpression);

+ }

}

- return TableConnectorUtils.generateRuntimeName(getClass(), getTableSchema().getFieldNames()) + explain;

+ this.filters = expressions;

+ return Result.of(acceptedFilters, flinkFilters);

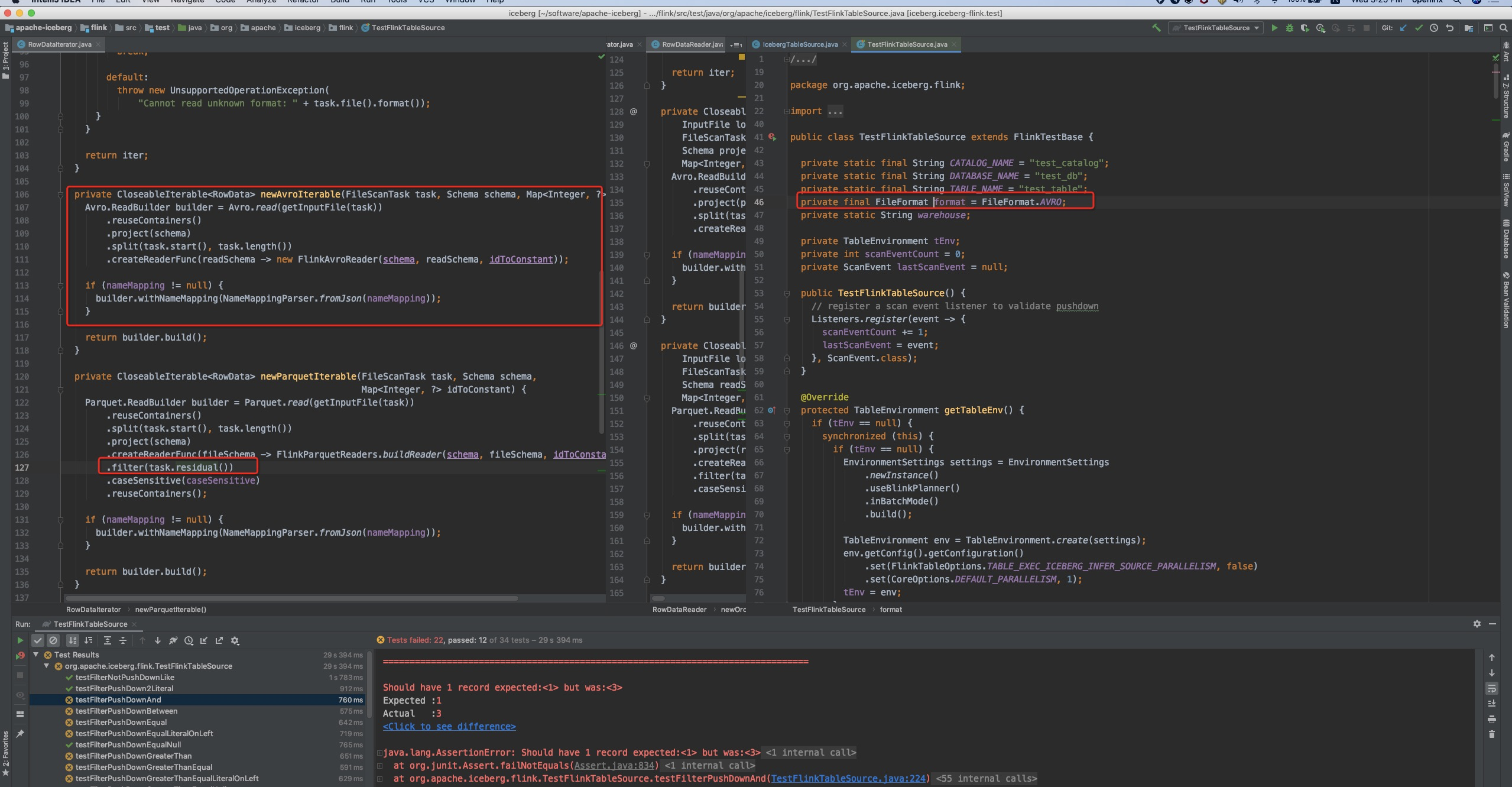

Review comment:

OK, after reading the Flink runtime and Iceberg filter pushdown code carefully, I found that Iceberg's Avro file reader does not push down the filters, so the source can still return rows that do not match the `acceptedFilters`.

So here we can only pass all of `flinkFilters` as the `remainingFilters`.

Let me resolve this comment.
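
To illustrate the reasoning, here is a minimal, self-contained sketch that does not use the Flink or Iceberg APIs. The class `RemainingFiltersSketch` and the methods `bestEffortScan` and `applyRemaining` are hypothetical stand-ins: the first models a reader that uses accepted filters only to skip whole files, the second models the planner re-applying whatever the source reports as remaining filters. It assumes integer rows and plain `Predicate` filters purely for illustration.

```java
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Collections;
import java.util.List;
import java.util.function.Predicate;
import java.util.stream.Collectors;

final class RemainingFiltersSketch {

  // Hypothetical best-effort reader: it uses the accepted filters only to skip
  // whole files, and returns every row of any file it decides to open.
  static List<Integer> bestEffortScan(List<List<Integer>> files, List<Predicate<Integer>> accepted) {
    List<Integer> rows = new ArrayList<>();
    for (List<Integer> file : files) {
      boolean mayMatch = file.stream()
          .anyMatch(row -> accepted.stream().allMatch(p -> p.test(row)));
      if (mayMatch) {
        rows.addAll(file);
      }
    }
    return rows;
  }

  // Stand-in for the planner re-applying the remaining filters row by row.
  static List<Integer> applyRemaining(List<Integer> rows, List<Predicate<Integer>> remaining) {
    return rows.stream()
        .filter(row -> remaining.stream().allMatch(p -> p.test(row)))
        .collect(Collectors.toList());
  }

  public static void main(String[] args) {
    List<List<Integer>> files = Arrays.asList(Arrays.asList(1, 2, 3), Arrays.asList(4, 5, 6));
    Predicate<Integer> greaterThanFour = x -> x > 4;
    List<Predicate<Integer>> filters = Collections.singletonList(greaterThanFour);

    List<Integer> scanned = bestEffortScan(files, filters);

    // Reporting no remaining filters leaks the non-matching row 4.
    System.out.println(applyRemaining(scanned, Collections.emptyList())); // prints [4, 5, 6]

    // Reporting every filter as remaining yields only matching rows.
    System.out.println(applyRemaining(scanned, filters)); // prints [5, 6]
  }
}
```

In this sketch the second call corresponds to `Result.of(acceptedFilters, flinkFilters)` in the diff: the source still benefits from file skipping, while the planner keeps the row-level filter.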
