hellochueng opened a new issue, #7224: URL: https://github.com/apache/iceberg/issues/7224

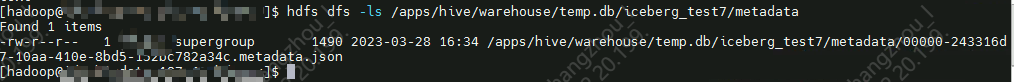

### Apache Iceberg version

1.2.0 (latest release)

### Query engine

Spark

### Please describe the bug 🐞

```sh
spark-sql --jars ../jars/iceberg-spark-runtime-3.2_2.12-1.2.0.jar \
  --conf spark.sql.extensions=org.apache.iceberg.spark.extensions.IcebergSparkSessionExtensions \
  --conf spark.sql.catalog.spark_catalog=org.apache.iceberg.spark.SparkSessionCatalog \
  --conf spark.sql.catalog.spark_catalog.type=hive \
  --conf spark.sql.catalog.hadoop_prod=org.apache.iceberg.spark.SparkCatalog \
  --conf spark.sql.catalog.hadoop_prod.type=hadoop \
  --conf spark.sql.catalog.hadoop_prod.warehouse=hdfs://***/apps/hive/warehouse/temp.db \
  --conf spark.sql.catalog.hive_prod=org.apache.iceberg.spark.SparkCatalog \
  --conf spark.sql.catalog.hive_prod.type=hive \
  --conf spark.sql.catalog.hive_prod.uri=thrift://*** \
  --conf iceberg.engine.hive.enabled=true
```

```sql
CREATE TABLE hadoop_prod.iceberg_test6 (id bigint COMMENT 'unique id', name string, data string) USING iceberg;
```

With `hadoop_prod` (a `SparkCatalog` with `type=hadoop`), creating the table writes `version-hint.text` into the table's `metadata` directory.

```sql
CREATE TABLE hive_prod.temp.iceberg_test7 (id bigint COMMENT 'unique id', name string, data string) USING iceberg;
```

With `hive_prod` (a `SparkCatalog` with `type=hive`), creating the table does not write a `version-hint.text` file.
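For context: in a Hadoop-catalog table, `version-hint.text` records the version number `N` of the current `vN.metadata.json` file. Iceberg's `HiveCatalog` does not use this file. It keeps the current metadata file's location in the Metastore table property `metadata_location`. The Python sketch below shows how a reader could use the hint. It is loosely modeled on Hadoop-table resolution, not copied from Iceberg's implementation. It reads the hint if one is present and otherwise falls back to the highest listed version. In both cases it then steps forward while newer metadata files exist. All helper names are hypothetical. The sketch only inspects a local directory, and it ignores compressed (`.gz`) metadata file names.

```python
import os
import re
from typing import Optional

# Hadoop-catalog metadata files are named like "v3.metadata.json".
# Assumption: compressed variants such as "v3.gz.metadata.json" are ignored.
_VERSION_FILE = re.compile(r"^v(\d+)\.metadata\.json$")


def _metadata_path(metadata_dir: str, version: int) -> str:
    return os.path.join(metadata_dir, f"v{version}.metadata.json")


def _read_hint(metadata_dir: str) -> Optional[int]:
    """Return the version stored in version-hint.text, or None if missing or unreadable."""
    try:
        with open(os.path.join(metadata_dir, "version-hint.text")) as f:
            return int(f.read().strip())
    except (OSError, ValueError):
        return None


def _max_listed_version(metadata_dir: str) -> Optional[int]:
    """Return the highest N among vN.metadata.json files, or None if there are none."""
    matches = (_VERSION_FILE.match(name) for name in os.listdir(metadata_dir))
    return max((int(m.group(1)) for m in matches if m), default=None)


def resolve_current_metadata(metadata_dir: str) -> Optional[str]:
    """Hypothetical helper: locate the current metadata file of a Hadoop-catalog table."""
    version = _read_hint(metadata_dir)
    if version is None:
        # No usable hint: fall back to listing the metadata directory.
        version = _max_listed_version(metadata_dir)
        if version is None:
            return None
    # A hint may lag behind the latest commit, so step forward while newer files exist.
    while os.path.isfile(_metadata_path(metadata_dir, version + 1)):
        version += 1
    path = _metadata_path(metadata_dir, version)
    # A hint pointing at a missing file yields None here (simplification).
    return path if os.path.isfile(path) else None
```

For a table such as `hadoop_prod.iceberg_test6`, `metadata_dir` would be the table's `metadata` directory under the warehouse path. Because the sketch uses `os` rather than an HDFS client, that directory would first have to be copied to local disk.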