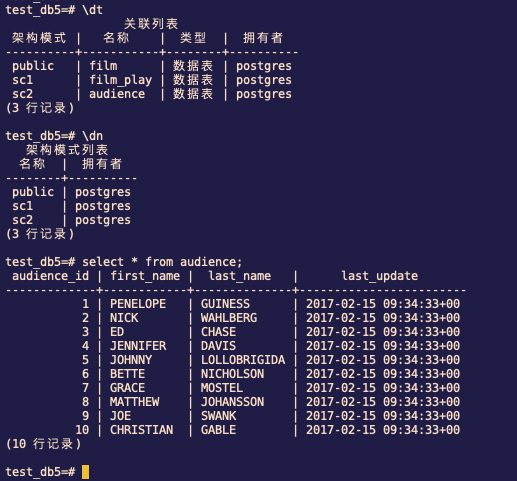

hit-lacus edited a comment on issue #902: KYLIN-3832 Query pushdown support postgresql URL: https://github.com/apache/kylin/pull/902#issuecomment-547393013 # Test Build ### Prepare data  ### Set project level config <img width="1128" alt="image" src="https://user-images.githubusercontent.com/14030549/67767483-a197f780-fa8b-11e9-90c4-1040df59e2b5.png";> ### Import table from pg datasource <img width="596" alt="image" src="https://user-images.githubusercontent.com/14030549/67767578-ce4c0f00-fa8b-11e9-84e2-f1e07ac1a348.png";> ### Create Model and Cube <img width="1062" alt="image" src="https://user-images.githubusercontent.com/14030549/67789595-3102d200-faaf-11e9-84f0-a666dadbd2ca.png";> ### Build Job Success <img width="1395" alt="image" src="https://user-images.githubusercontent.com/14030549/67789653-48da5600-faaf-11e9-8ec8-154d43114881.png";> ### Log of sqoop step ``` exe cmd:/opt/cloudera/parcels/CDH-5.7.6-1.cdh5.7.6.p0.6/bin/sqoop import -Dorg.apache.sqoop.splitter.allow_text_splitter=true -Dmapreduce.job.queuename=default --connect "jdbc:postgresql://10.1.2.42:54321/test_db5" --driver "org.postgresql.Driver" --username "postgres" --password "password" --query "SELECT \"film_play\".\"audience_id\" AS \"FILM_PLAY_AUDIENCE_ID\", \"film_play\".\"film_id\" AS \"FILM_PLAY_FILM_ID\", \"film_play\".\"watch_time\", \"film_play\".\"payment\" AS \"FILM_PLAY_PAYMENT\" FROM \"sc1\".\"film_play\" AS \"film_play\" LEFT JOIN \"public\".\"film\" AS \"film\" ON \"film_play\".\"film_id\" = \"film\".\"film_id\" LEFT JOIN \"sc2\".\"audience\" AS \"audience\" ON \"film_play\".\"audience_id\" = \"audience\".\"audience_id\" WHERE 1 = 1 AND (\"film_play\".\"watch_time\" >= '2017-04-01 00:00:00' AND \"film_play\".\"watch_time\" < '2017-08-01 00:00:00') AND \$CONDITIONS" --target-dir "hdfs://cdh-master:8020/kylin/postgresql_metadata/kylin-c517a543-b212-4a6f-f220-5eee731dea56/kylin_intermediate_playstatcube_a702211d_5192_902e_b4bd_fef7f7985ebe" --split-by "FILM_PLAY.WATCH_TIME" --boundary-query "SELECT MIN(\"film_play\".\"watch_time\"), MAX(\"film_play\".\"watch_time\") FROM \"sc1\".\"film_play\" AS \"film_play\"" --null-string '' --fields-terminated-by '|' --num-mappers 4 Warning: /opt/cloudera/parcels/CDH-5.7.6-1.cdh5.7.6.p0.6/bin/../lib/sqoop/../accumulo does not exist! Accumulo imports will fail. Please set $ACCUMULO_HOME to the root of your Accumulo installation. 19/10/30 00:44:47 INFO sqoop.Sqoop: Running Sqoop version: 1.4.6-cdh5.7.6 19/10/30 00:44:47 WARN tool.BaseSqoopTool: Setting your password on the command-line is insecure. Consider using -P instead. 19/10/30 00:44:47 WARN sqoop.ConnFactory: Parameter --driver is set to an explicit driver however appropriate connection manager is not being set (via --connection-manager). Sqoop is going to fall back to org.apache.sqoop.manager.GenericJdbcManager. Please specify explicitly which connection manager should be used next time. 19/10/30 00:44:47 INFO manager.SqlManager: Using default fetchSize of 1000 19/10/30 00:44:47 INFO tool.CodeGenTool: Beginning code generation 19/10/30 00:44:48 INFO manager.SqlManager: Executing SQL statement: SELECT "film_play"."audience_id" AS "FILM_PLAY_AUDIENCE_ID", "film_play"."film_id" AS "FILM_PLAY_FILM_ID", "film_play"."watch_time", "film_play"."payment" AS "FILM_PLAY_PAYMENT" FROM "sc1"."film_play" AS "film_play" LEFT JOIN "public"."film" AS "film" ON "film_play"."film_id" = "film"."film_id" LEFT JOIN "sc2"."audience" AS "audience" ON "film_play"."audience_id" = "audience"."audience_id" WHERE 1 = 1 AND ("film_play"."watch_time" >= '2017-04-01 00:00:00' AND "film_play"."watch_time" < '2017-08-01 00:00:00') AND (1 = 0) 19/10/30 00:44:48 INFO manager.SqlManager: Executing SQL statement: SELECT "film_play"."audience_id" AS "FILM_PLAY_AUDIENCE_ID", "film_play"."film_id" AS "FILM_PLAY_FILM_ID", "film_play"."watch_time", "film_play"."payment" AS "FILM_PLAY_PAYMENT" FROM "sc1"."film_play" AS "film_play" LEFT JOIN "public"."film" AS "film" ON "film_play"."film_id" = "film"."film_id" LEFT JOIN "sc2"."audience" AS "audience" ON "film_play"."audience_id" = "audience"."audience_id" WHERE 1 = 1 AND ("film_play"."watch_time" >= '2017-04-01 00:00:00' AND "film_play"."watch_time" < '2017-08-01 00:00:00') AND (1 = 0) 19/10/30 00:44:48 INFO orm.CompilationManager: HADOOP_MAPRED_HOME is /opt/cloudera/parcels/CDH/lib/hadoop-mapreduce 注: /tmp/sqoop-root/compile/d59f0d854a853a628b5823a7a5192262/QueryResult.java使用或覆盖了已过时的 API。 注: 有关详细信息, 请使用 -Xlint:deprecation 重新编译。 19/10/30 00:44:49 INFO orm.CompilationManager: Writing jar file: /tmp/sqoop-root/compile/d59f0d854a853a628b5823a7a5192262/QueryResult.jar 19/10/30 00:44:49 INFO mapreduce.ImportJobBase: Beginning query import. 19/10/30 00:44:49 INFO Configuration.deprecation: mapred.jar is deprecated. Instead, use mapreduce.job.jar 19/10/30 00:44:50 INFO Configuration.deprecation: mapred.map.tasks is deprecated. Instead, use mapreduce.job.maps 19/10/30 00:44:50 INFO client.RMProxy: Connecting to ResourceManager at cdh-master/10.1.3.90:8032 19/10/30 00:44:53 INFO db.DBInputFormat: Using read commited transaction isolation 19/10/30 00:44:53 INFO db.DataDrivenDBInputFormat: BoundingValsQuery: SELECT MIN("film_play"."watch_time"), MAX("film_play"."watch_time") FROM "sc1"."film_play" AS "film_play" 19/10/30 00:44:53 INFO db.IntegerSplitter: Split size: 3909600000; Num splits: 4 from: 1484474703000 to: 1500113103000 19/10/30 00:44:53 INFO mapreduce.JobSubmitter: number of splits:4 19/10/30 00:44:53 INFO mapreduce.JobSubmitter: Submitting tokens for job: job_1571054284123_0700 19/10/30 00:44:54 INFO impl.YarnClientImpl: Submitted application application_1571054284123_0700 19/10/30 00:44:54 INFO mapreduce.Job: The url to track the job: http://cdh-master:8088/proxy/application_1571054284123_0700/ 19/10/30 00:44:54 INFO mapreduce.Job: Running job: job_1571054284123_0700 19/10/30 00:45:00 INFO mapreduce.Job: Job job_1571054284123_0700 running in uber mode : false 19/10/30 00:45:00 INFO mapreduce.Job: map 0% reduce 0% 19/10/30 00:45:05 INFO mapreduce.Job: map 100% reduce 0% 19/10/30 00:45:05 INFO mapreduce.Job: Job job_1571054284123_0700 completed successfully 19/10/30 00:45:05 INFO mapreduce.Job: Counters: 30 File System Counters FILE: Number of bytes read=0 FILE: Number of bytes written=580620 FILE: Number of read operations=0 FILE: Number of large read operations=0 FILE: Number of write operations=0 HDFS: Number of bytes read=697 HDFS: Number of bytes written=812 HDFS: Number of read operations=16 HDFS: Number of large read operations=0 HDFS: Number of write operations=8 Job Counters Launched map tasks=4 Other local map tasks=4 Total time spent by all maps in occupied slots (ms)=39411 Total time spent by all reduces in occupied slots (ms)=0 Total time spent by all map tasks (ms)=13137 Total vcore-seconds taken by all map tasks=13137 Total megabyte-seconds taken by all map tasks=40356864 Map-Reduce Framework Map input records=28 Map output records=28 Input split bytes=697 Spilled Records=0 Failed Shuffles=0 Merged Map outputs=0 GC time elapsed (ms)=250 CPU time spent (ms)=3830 Physical memory (bytes) snapshot=1270038528 Virtual memory (bytes) snapshot=18745585664 Total committed heap usage (bytes)=3234332672 File Input Format Counters Bytes Read=0 File Output Format Counters Bytes Written=812 19/10/30 00:45:05 INFO mapreduce.ImportJobBase: Transferred 812 bytes in 14.9212 seconds (54.4191 bytes/sec) 19/10/30 00:45:05 INFO mapreduce.ImportJobBase: Retrieved 28 records. ```

---------------------------------------------------------------- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: [email protected] With regards, Apache Git Services