zzcclp edited a comment on pull request #1495: URL: https://github.com/apache/kylin/pull/1495#issuecomment-738628576

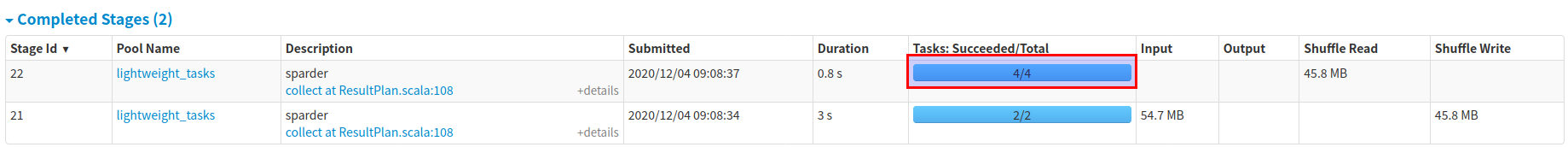

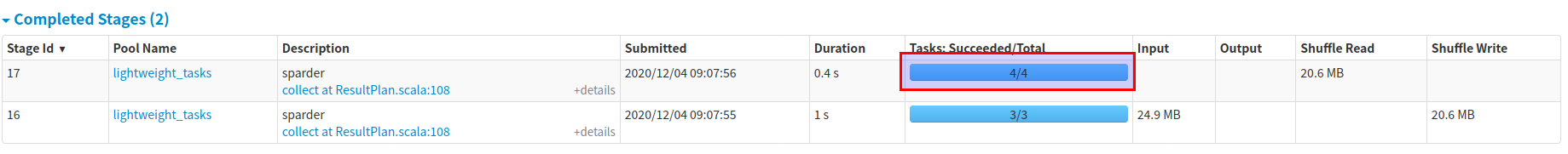

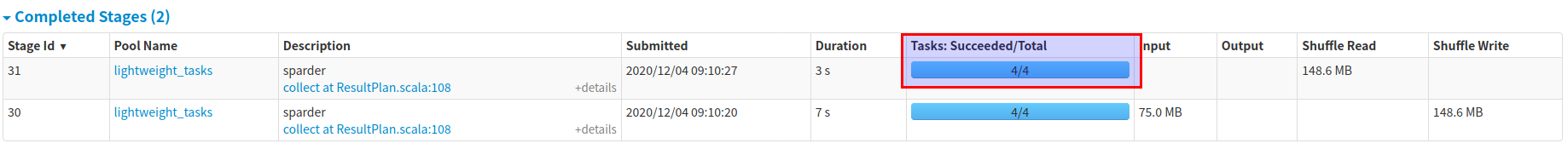

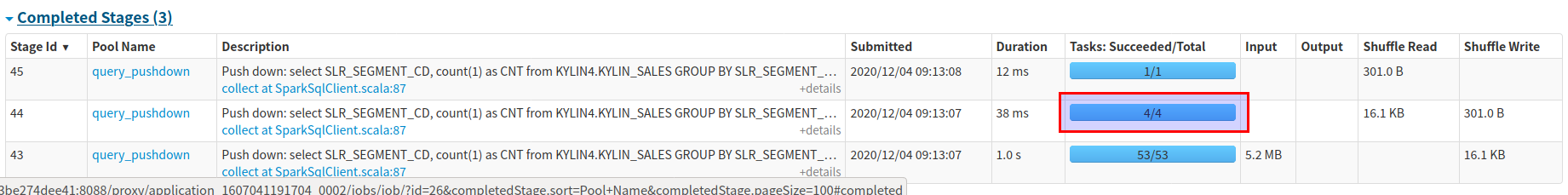

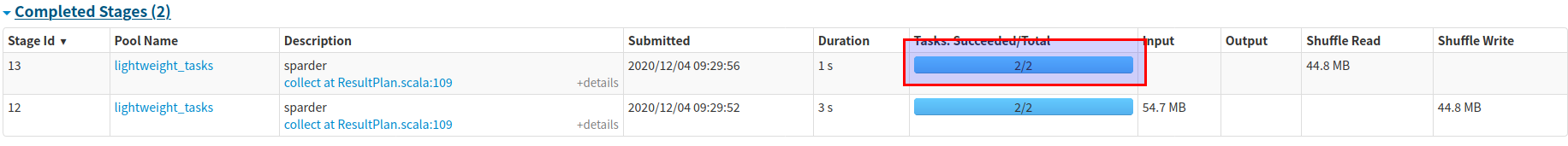

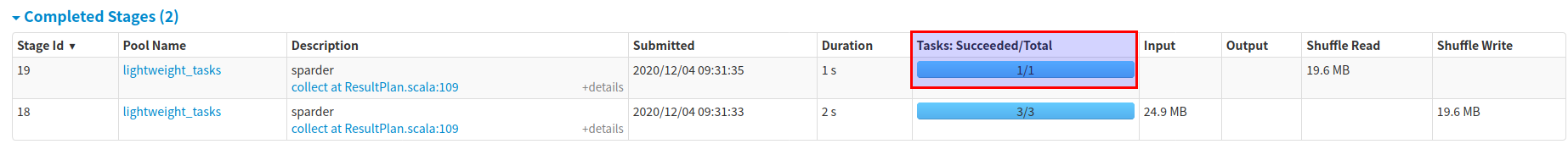

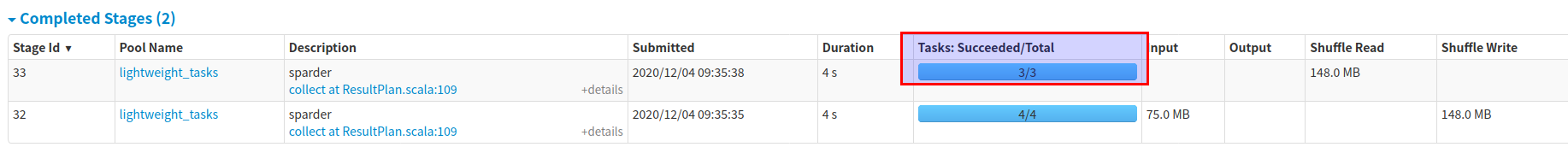

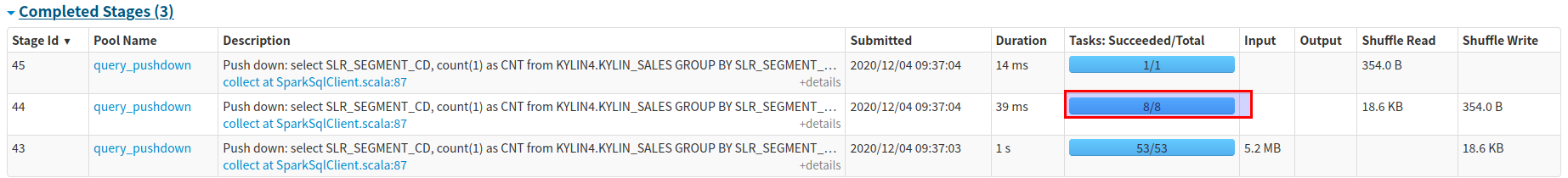

## The Results of Testing Manually

### Test Env

- Hadoop 2.7.0 on docker.
- Commit : [3b3786c5c](https://github.com/apache/kylin/commit/3b3786c5c9602838cd4abd0a6d40574550ec8622)
- Sparder Env :
  - `spark.executor.cores=1`
  - `spark.executor.instances=4`
  - `spark.executor.memory=2G`
  - `spark.executor.memoryOverhead=1G`
  - `spark.sql.shuffle.partitions=4`

### Before this patch

The shuffle partition number of every query is 4, which equals the total number of cores (4 executor instances with 1 core each).

### After this patch

The shuffle partition number of each query is calculated from the number of bytes that query scans.

The log message is shown below:

`2020-12-04 05:28:11,991 INFO [Query a5e841ba-c430-383b-dfa8-5694cd6d282b-122] datasource.FilePruner:51 : Set partition to 2, total bytes 92610534`

The log message is shown below:

`2020-12-04 05:28:12,112 INFO [Query 534a7afb-4857-6e0c-67b8-bd6a8da155a8-130] datasource.FilePruner:51 : Set partition to 1, total bytes 42133710`

The log message is shown below:

`2020-12-04 05:28:12,141 INFO [Query 744d34d1-0d06-11c8-fdee-1f260388117f-131] datasource.FilePruner:51 : Set partition to 3, total bytes 158775868`

The log message is shown below:

`2020-12-04 08:16:43,746 INFO [Query e117ceb8-53c1-959e-9cb0-75ee3901e271-126] pushdown.SparkSqlClient:68 : Auto set spark.sql.shuffle.partitions to 8, the total sources size is 415631445 b`

----------------------------------------------------------------

This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: [email protected]
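The three `datasource.FilePruner` log lines in the test results are consistent with a simple size-based rule: one shuffle partition per fixed-size chunk of scanned data, with a minimum of one. The sketch below illustrates that idea only. The helper name `estimate_shuffle_partitions` and the 64 MiB chunk size are assumptions, chosen because they reproduce the logged values; neither is taken from the patch itself. The `pushdown.SparkSqlClient` line (8 partitions for 415631445 bytes) does not fit this rule, so the pushdown path presumably uses a different calculation that is not modelled here.

```python
# Assumed target size per shuffle partition (64 MiB). This is a guess that
# happens to match the FilePruner log lines; it is not read from the patch.
ASSUMED_SPLIT_BYTES = 64 * 1024 * 1024


def estimate_shuffle_partitions(scanned_bytes: int,
                                split_bytes: int = ASSUMED_SPLIT_BYTES) -> int:
    """Hypothetical helper: one partition per split_bytes scanned, at least 1."""
    # Integer ceiling division avoids floating-point rounding.
    partitions = (scanned_bytes + split_bytes - 1) // split_bytes
    return max(1, partitions)


if __name__ == "__main__":
    # Scanned-byte totals copied from the FilePruner log lines.
    for scanned in (92610534, 42133710, 158775868):
        print(scanned, "->", estimate_shuffle_partitions(scanned))
    # 92610534 -> 2
    # 42133710 -> 1
    # 158775868 -> 3
```

With a fixed `spark.sql.shuffle.partitions=4`, all three of these queries would have used 4 partitions regardless of how little data they scanned.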