smengcl commented on a change in pull request #2417: URL: https://github.com/apache/ozone/pull/2417#discussion_r676903922

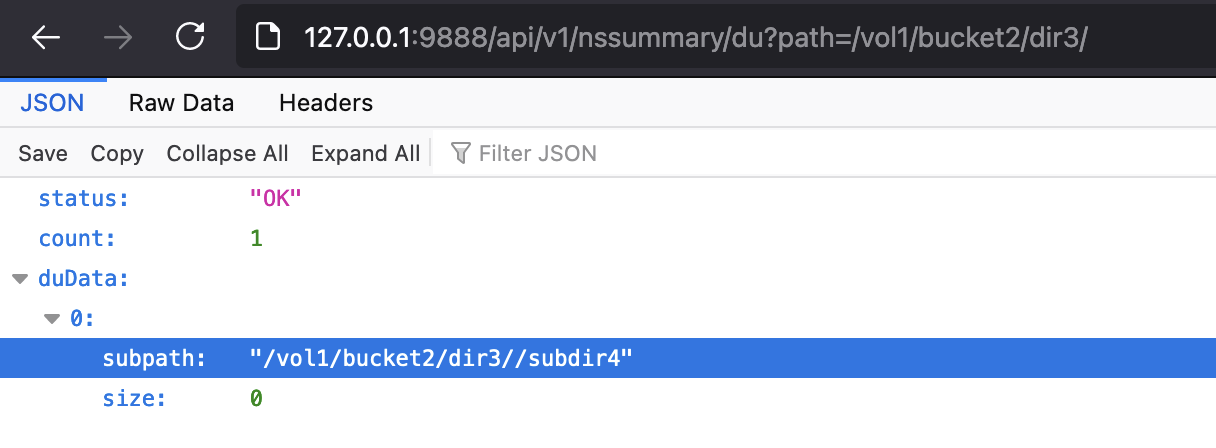

##########
File path: hadoop-ozone/recon/src/main/java/org/apache/hadoop/ozone/recon/api/NSSummaryEndpoint.java
##########
@@ -0,0 +1,640 @@
+/*
+ * Licensed to the Apache Software Foundation (ASF) under one
+ * or more contributor license agreements.  See the NOTICE file
+ * distributed with this work for additional information
+ * regarding copyright ownership.  The ASF licenses this file
+ * to you under the Apache License, Version 2.0 (the
+ * "License"); you may not use this file except in compliance
+ * with the License.  You may obtain a copy of the License at
+ * <p>
+ * http://www.apache.org/licenses/LICENSE-2.0
+ * <p>
+ * Unless required by applicable law or agreed to in writing, software
+ * distributed under the License is distributed on an "AS IS" BASIS,
+ * WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
+ * See the License for the specific language governing permissions and
+ * limitations under the License.
+ */
+
+package org.apache.hadoop.ozone.recon.api;
+
+import com.google.common.annotations.VisibleForTesting;
+import com.google.common.base.Strings;
+import org.apache.hadoop.hdds.utils.db.Table;
+import org.apache.hadoop.hdds.utils.db.TableIterator;
+import org.apache.hadoop.ozone.om.helpers.OmBucketInfo;
+import org.apache.hadoop.ozone.om.helpers.OmDirectoryInfo;
+import org.apache.hadoop.ozone.om.helpers.OmKeyInfo;
+import org.apache.hadoop.ozone.om.helpers.OmVolumeArgs;
+import org.apache.hadoop.ozone.recon.ReconConstants;
+import org.apache.hadoop.ozone.recon.api.types.BasicResponse;
+import org.apache.hadoop.ozone.recon.api.types.DUResponse;
+import org.apache.hadoop.ozone.recon.api.types.EntityType;
+import org.apache.hadoop.ozone.recon.api.types.FileSizeDistributionResponse;
+import org.apache.hadoop.ozone.recon.api.types.NSSummary;
+import org.apache.hadoop.ozone.recon.api.types.PathStatus;
+import org.apache.hadoop.ozone.recon.api.types.QuotaUsageResponse;
+import org.apache.hadoop.ozone.recon.recovery.ReconOMMetadataManager;
+import org.apache.hadoop.ozone.recon.spi.ReconNamespaceSummaryManager;
+
+import javax.inject.Inject;
+import javax.ws.rs.GET;
+import javax.ws.rs.Path;
+import javax.ws.rs.Produces;
+import javax.ws.rs.QueryParam;
+import javax.ws.rs.core.MediaType;
+import javax.ws.rs.core.Response;
+import java.io.IOException;
+import java.nio.file.Paths;
+import java.util.ArrayList;
+import java.util.Arrays;
+import java.util.Collections;
+import java.util.Iterator;
+import java.util.List;
+import java.util.Set;
+
+import static org.apache.hadoop.ozone.OzoneConsts.OM_KEY_PREFIX;
+import static org.apache.hadoop.ozone.om.helpers.OzoneFSUtils.removeTrailingSlashIfNeeded;
+import static org.apache.hadoop.ozone.om.lock.OzoneManagerLock.Resource.BUCKET_LOCK;
+
+/**
+ * REST APIs for namespace metadata summary.
+ */
+@Path("/nssummary")
+@Produces(MediaType.APPLICATION_JSON)
+public class NSSummaryEndpoint {
+  @Inject
+  private ReconNamespaceSummaryManager reconNamespaceSummaryManager;
+
+  @Inject
+  private ReconOMMetadataManager omMetadataManager;
+
+  /**
+   * This endpoint will return the entity type and aggregate count of objects.
+   * @param path the request path.
+   * @return HTTP response with basic info: entity type, num of objects
+   * @throws IOException IOE
+   */
+  @GET
+  @Path("/basic")
+  public Response getBasicInfo(
+      @QueryParam("path") String path) throws IOException {
+
+    String[] names = parseRequestPath(path);
+    EntityType type = getEntityType(names);
+
+    BasicResponse basicResponse = null;
+    switch (type) {
+    case VOLUME:
+      basicResponse = new BasicResponse(EntityType.VOLUME);
+      List<OmBucketInfo> buckets = listBucketsUnderVolume(names[0]);
+      basicResponse.setNumTotalBucket(buckets.size());
+      int totalDir = 0;
+      int totalKey = 0;
+
+      // iterate all buckets to collect the total object count.
+      for (OmBucketInfo bucket : buckets) {
+        long bucketObjectId = bucket.getObjectID();
+        totalDir += getTotalDirCount(bucketObjectId);
+        totalKey += getTotalKeyCount(bucketObjectId);
+      }
+      basicResponse.setNumTotalDir(totalDir);
+      basicResponse.setNumTotalKey(totalKey);
+      break;
+    case BUCKET:
+      basicResponse = new BasicResponse(EntityType.BUCKET);
+      assert (names.length == 2);
+      long bucketObjectId = getBucketObjectId(names);
+      basicResponse.setNumTotalDir(getTotalDirCount(bucketObjectId));
+      basicResponse.setNumTotalKey(getTotalKeyCount(bucketObjectId));
+      break;
+    case DIRECTORY:
+      // path should exist so we don't need any extra verification/null check
+      long dirObjectId = getDirObjectId(names);
+      basicResponse = new BasicResponse(EntityType.DIRECTORY);
+      basicResponse.setNumTotalDir(getTotalDirCount(dirObjectId));
+      basicResponse.setNumTotalKey(getTotalKeyCount(dirObjectId));
+      break;
+    case KEY:
+      basicResponse = new BasicResponse(EntityType.KEY);
+      break;
+    case UNKNOWN:
+      basicResponse = new BasicResponse(EntityType.UNKNOWN);
+      basicResponse.setStatus(PathStatus.PATH_NOT_FOUND);
+      break;
+    default:
+      break;
+    }
+    return Response.ok(basicResponse).build();
+  }
+
+  /**
+   * DU endpoint to return datasize for subdirectory (bucket for volume).
+   * @param path request path
+   * @return DU response
+   * @throws IOException
+   */
+  @GET
+  @Path("/du")
+  public Response getDiskUsage(@QueryParam("path") String path)
+      throws IOException {
+    String[] names = parseRequestPath(path);
+    EntityType type = getEntityType(names);
+    DUResponse duResponse = new DUResponse();
+    switch (type) {
+    case VOLUME:
+      String volName = names[0];
+      List<OmBucketInfo> buckets = listBucketsUnderVolume(volName);
+      duResponse.setCount(buckets.size());
+
+      // List of DiskUsage data for all buckets
+      List<DUResponse.DiskUsage> bucketDuData = new ArrayList<>();
+      for (OmBucketInfo bucket : buckets) {
+        String bucketName = bucket.getBucketName();
+        long bucketObjectID = bucket.getObjectID();
+        String subpath = omMetadataManager.getBucketKey(volName, bucketName);
+        DUResponse.DiskUsage diskUsage = new DUResponse.DiskUsage();
+        diskUsage.setSubpath(subpath);
+        long dataSize = getTotalSize(bucketObjectID);
+        diskUsage.setSize(dataSize);
+        bucketDuData.add(diskUsage);
+      }
+      duResponse.setDuData(bucketDuData);
+      break;
+    case BUCKET:
+      long bucketObjectId = getBucketObjectId(names);
+      NSSummary bucketNSSummary =
+          reconNamespaceSummaryManager.getNSSummary(bucketObjectId);
+
+      // get object IDs for all its subdirectories
+      Set<Long> bucketSubdirs = bucketNSSummary.getChildDir();
+      duResponse.setCount(bucketSubdirs.size());
+      List<DUResponse.DiskUsage> dirDUData = new ArrayList<>();
+      for (long subdirObjectId: bucketSubdirs) {
+        NSSummary subdirNSSummary = reconNamespaceSummaryManager
+            .getNSSummary(subdirObjectId);
+
+        // get directory's name and generate the next-level subpath.
+        String dirName = subdirNSSummary.getDirName();
+        String subpath = path + OM_KEY_PREFIX + dirName;

Review comment:
If `path` already has a trailing `OM_KEY_PREFIX`, don't append `OM_KEY_PREFIX` again. Otherwise it ends up with double `OM_KEY_PREFIX` like this: e.g. `path=/vol1/bucket2/dir3/`

--
This is an automated message from the Apache Git Service.
To respond to the message, please log on to GitHub and use the URL above to go to the specific comment.
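
To illustrate the review comment above, here is a minimal sketch of one way the subpath could be built so that a trailing separator on `path` is not doubled. It is not the change that was made in the pull request. The class `SubpathJoiner` and the method `buildSubpath` are hypothetical names, and the local `OM_KEY_PREFIX` constant is a stand-in assumed to be `"/"`, so the snippet compiles without Ozone on the classpath.

```java
public final class SubpathJoiner {
  // Local stand-in for OzoneConsts.OM_KEY_PREFIX; assumed to be "/" here.
  private static final String OM_KEY_PREFIX = "/";

  private SubpathJoiner() { }

  /**
   * Joins a request path and a child directory name with exactly one
   * separator between them, whether or not path ends with one already.
   * (Hypothetical helper, not part of the code under review.)
   */
  static String buildSubpath(String path, String dirName) {
    if (path.endsWith(OM_KEY_PREFIX)) {
      // Separator already present: append only the directory name.
      return path + dirName;
    }
    return path + OM_KEY_PREFIX + dirName;
  }

  public static void main(String[] args) {
    // Both calls print /vol1/bucket2/dir3/dir4
    System.out.println(buildSubpath("/vol1/bucket2/dir3/", "dir4"));
    System.out.println(buildSubpath("/vol1/bucket2/dir3", "dir4"));
  }
}
```

The file under review already imports `removeTrailingSlashIfNeeded` from `OzoneFSUtils`, so normalizing `path` once before the loop may be an alternative; whether that fits depends on how that helper treats the inputs this endpoint receives.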