[spark] branch master updated: [SPARK-37925][DOC] Update document to mention the workaround for YARN-11053

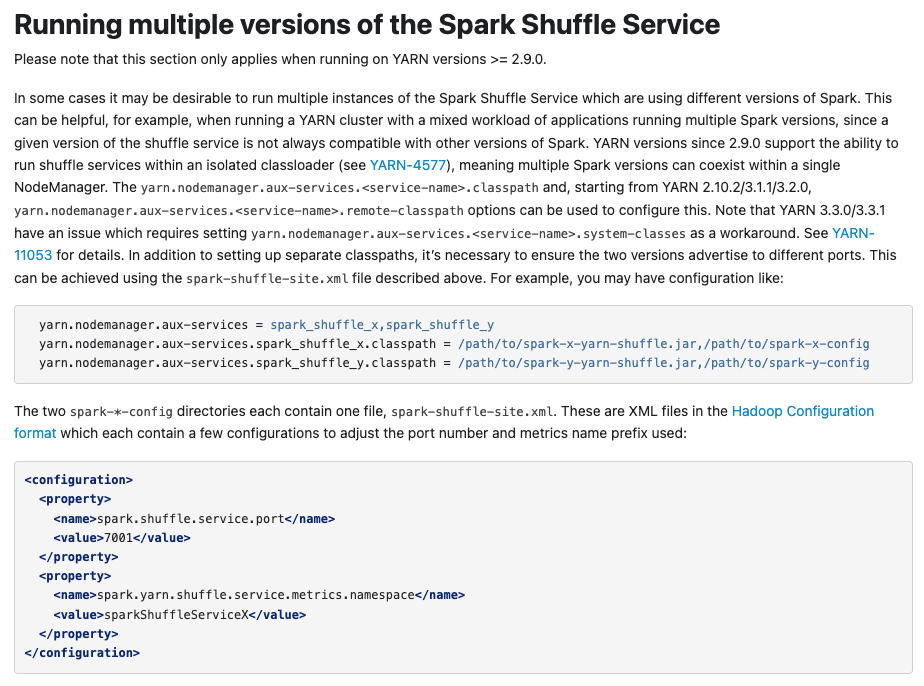

This is an automated email from the ASF dual-hosted git repository. srowen pushed a commit to branch master in repository https://gitbox.apache.org/repos/asf/spark.git The following commit(s) were added to refs/heads/master by this push: new 74ebef2 [SPARK-37925][DOC] Update document to mention the workaround for YARN-11053 74ebef2 is described below commit 74ebef243c18e7a8f32bf90ea75ab6afed9e3132 Author: Cheng Pan AuthorDate: Sat Feb 5 09:47:15 2022 -0600 [SPARK-37925][DOC] Update document to mention the workaround for YARN-11053 ### What changes were proposed in this pull request? Update document "Running multiple versions of the Spark Shuffle Service" to mention the workaround for YARN-11053 ### Why are the changes needed? User may stuck when they following the current document to deploy multi-versions Spark Shuffle Service on YARN because of [YARN-11053](https://issues.apache.org/jira/browse/YARN-11053) ### Does this PR introduce _any_ user-facing change? User document changes. ### How was this patch tested?  Closes #35223 from pan3793/SPARK-37925. Authored-by: Cheng Pan Signed-off-by: Sean Owen --- docs/running-on-yarn.md | 9 ++--- 1 file changed, 6 insertions(+), 3 deletions(-) diff --git a/docs/running-on-yarn.md b/docs/running-on-yarn.md index c55ce86..63c0376 100644 --- a/docs/running-on-yarn.md +++ b/docs/running-on-yarn.md @@ -916,9 +916,12 @@ support the ability to run shuffle services within an isolated classloader can coexist within a single NodeManager. The `yarn.nodemanager.aux-services..classpath` and, starting from YARN 2.10.2/3.1.1/3.2.0, `yarn.nodemanager.aux-services..remote-classpath` options can be used to configure -this. In addition to setting up separate classpaths, it's necessary to ensure the two versions -advertise to different ports. This can be achieved using the `spark-shuffle-site.xml` file described -above. For example, you may have configuration like: +this. Note that YARN 3.3.0/3.3.1 have an issue which requires setting +`yarn.nodemanager.aux-services..system-classes` as a workaround. See +[YARN-11053](https://issues.apache.org/jira/browse/YARN-11053) for details. In addition to setting +up separate classpaths, it's necessary to ensure the two versions advertise to different ports. +This can be achieved using the `spark-shuffle-site.xml` file described above. For example, you may +have configuration like: ```properties yarn.nodemanager.aux-services = spark_shuffle_x,spark_shuffle_y - To unsubscribe, e-mail: commits-unsubscr...@spark.apache.org For additional commands, e-mail: commits-h...@spark.apache.org

[spark-website] branch asf-site updated: Contribution guide to document actual guide for pull requests

This is an automated email from the ASF dual-hosted git repository. srowen pushed a commit to branch asf-site in repository https://gitbox.apache.org/repos/asf/spark-website.git The following commit(s) were added to refs/heads/asf-site by this push: new 991df19 Contribution guide to document actual guide for pull requests 991df19 is described below commit 991df1959e2381dfd32dadce39cbfa2be80ec0c6 Author: khalidmammadov AuthorDate: Fri Feb 4 17:07:55 2022 -0600 Contribution guide to document actual guide for pull requests Currently contribution guide does not reflect actual flow to raise a new PR and hence it's not clear (for a new contributors) what exactly needs to be done to make a PR for Spark repository and test it as per expectation. This PR addresses that by following: - It describes in the Pull request section of the Contributing page the actual procedure and takes a contributor through a step by step process. - It removes optional "Running tests in your forked repository" section on Developer Tools page which is obsolete now and doesn't reflect reality anymore i.e. it says we can test by clicking “Run workflow” button which is not available anymore as workflow does not use "workflow_dispatch" event trigger anymore and was removed in https://github.com/apache/spark/pull/32092 - Instead it documents the new procedure that above PR introduced i.e. contributors needs to use their own GitHub free workflow credits to test new changes they are purposing and a Spark Actions workflow will expect that to be completed before marking PR to be ready for a review. - Some general wording was copied from "Running tests in your forked repository" section on Developer Tools page but main content was rewritten to meet objective - Also fixed URL to developer-tools.html to be resolved by parser (that converted it into relative URI) instead of using hard coded absolute URL. Tested imperically with `bundle exec jekyll serve` and static files were generated with `bundle exec jekyll build` commands This closes https://issues.apache.org/jira/browse/SPARK-37996 Author: khalidmammadov Closes #378 from khalidmammadov/fix_contribution_workflow_guide. --- contributing.md| 21 +++-- developer-tools.md | 17 - images/running-tests-using-github-actions.png | Bin 312696 -> 0 bytes site/contributing.html | 18 +- site/developer-tools.html | 19 --- site/images/running-tests-using-github-actions.png | Bin 312696 -> 0 bytes 6 files changed, 28 insertions(+), 47 deletions(-) diff --git a/contributing.md b/contributing.md index d5f0142..b127afe 100644 --- a/contributing.md +++ b/contributing.md @@ -322,9 +322,16 @@ Example: `Fix typos in Foo scaladoc` Pull request +Before creating a pull request in Apache Spark, it is important to check if tests can pass on your branch because +our GitHub Actions workflows automatically run tests for your pull request/following commits +and every run burdens the limited resources of GitHub Actions in Apache Spark repository. +Below steps will take your through the process. + + 1. https://help.github.com/articles/fork-a-repo/";>Fork the GitHub repository at https://github.com/apache/spark";>https://github.com/apache/spark if you haven't already -1. Clone your fork, create a new branch, push commits to the branch. +1. Go to "Actions" tab on your forked repository and enable "Build and test" and "Report test results" workflows +1. Clone your fork and create a new branch 1. Consider whether documentation or tests need to be added or updated as part of the change, and add them as needed. 1. When you add tests, make sure the tests are self-descriptive. @@ -355,14 +362,16 @@ and add them as needed. ... ``` 1. Consider whether benchmark results should be added or updated as part of the change, and add them as needed by -https://spark.apache.org/developer-tools.html#github-workflow-benchmarks";>Running benchmarks in your forked repository +Running benchmarks in your forked repository to generate benchmark results. 1. Run all tests with `./dev/run-tests` to verify that the code still compiles, passes tests, and -passes style checks. Alternatively you can run the tests via GitHub Actions workflow by -https://spark.apache.org/developer-tools.html#github-workflow-tests";>Running tests in your forked repository. +passes style checks. If style checks fail, review the Code Style Guide below. +1. Push commits to your branch. This will trigger "Build and test" and "Report test results" workflows +on your forked repository an

[spark] branch master updated (7a613ec -> 54b11fa)

This is an automated email from the ASF dual-hosted git repository. srowen pushed a change to branch master in repository https://gitbox.apache.org/repos/asf/spark.git. from 7a613ec [SPARK-38100][SQL] Remove unused private method in `Decimal` add 54b11fa [MINOR] Remove unnecessary null check for exception cause No new revisions were added by this update. Summary of changes: .../src/main/java/org/apache/spark/network/shuffle/ErrorHandler.java | 4 ++-- .../org/apache/spark/network/shuffle/RetryingBlockTransferor.java | 2 +- 2 files changed, 3 insertions(+), 3 deletions(-) - To unsubscribe, e-mail: commits-unsubscr...@spark.apache.org For additional commands, e-mail: commits-h...@spark.apache.org

[spark] branch master updated: [SPARK-37984][SHUFFLE] Avoid calculating all outstanding requests to improve performance

This is an automated email from the ASF dual-hosted git repository.

srowen pushed a commit to branch master

in repository https://gitbox.apache.org/repos/asf/spark.git

The following commit(s) were added to refs/heads/master by this push:

new f8ff786 [SPARK-37984][SHUFFLE] Avoid calculating all outstanding

requests to improve performance

f8ff786 is described below

commit f8ff7863e792b833afb2ff603878f29d4a9888e6

Author: weixiuli

AuthorDate: Sun Jan 23 20:23:20 2022 -0600

[SPARK-37984][SHUFFLE] Avoid calculating all outstanding requests to

improve performance

### What changes were proposed in this pull request?

Avoid calculating all outstanding requests to improve performance.

### Why are the changes needed?

Follow the comment

(https://github.com/apache/spark/pull/34711#pullrequestreview-835520984) , we

can implement a "has outstanding requests" method in the response handler that

doesn't even need to get a count,let's do this with PR.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Exist unittests.

Closes #35276 from weixiuli/SPARK-37984.

Authored-by: weixiuli

Signed-off-by: Sean Owen

---

.../apache/spark/network/client/TransportResponseHandler.java | 10 --

.../apache/spark/network/server/TransportChannelHandler.java | 3 +--

2 files changed, 9 insertions(+), 4 deletions(-)

diff --git

a/common/network-common/src/main/java/org/apache/spark/network/client/TransportResponseHandler.java

b/common/network-common/src/main/java/org/apache/spark/network/client/TransportResponseHandler.java

index 576c088..261f205 100644

---

a/common/network-common/src/main/java/org/apache/spark/network/client/TransportResponseHandler.java

+++

b/common/network-common/src/main/java/org/apache/spark/network/client/TransportResponseHandler.java

@@ -140,7 +140,7 @@ public class TransportResponseHandler extends

MessageHandler {

@Override

public void channelInactive() {

-if (numOutstandingRequests() > 0) {

+if (hasOutstandingRequests()) {

String remoteAddress = getRemoteAddress(channel);

logger.error("Still have {} requests outstanding when connection from {}

is closed",

numOutstandingRequests(), remoteAddress);

@@ -150,7 +150,7 @@ public class TransportResponseHandler extends

MessageHandler {

@Override

public void exceptionCaught(Throwable cause) {

-if (numOutstandingRequests() > 0) {

+if (hasOutstandingRequests()) {

String remoteAddress = getRemoteAddress(channel);

logger.error("Still have {} requests outstanding when connection from {}

is closed",

numOutstandingRequests(), remoteAddress);

@@ -275,6 +275,12 @@ public class TransportResponseHandler extends

MessageHandler {

(streamActive ? 1 : 0);

}

+ /** Check if there are any outstanding requests (fetch requests + rpcs) */

+ public Boolean hasOutstandingRequests() {

+return streamActive || !outstandingFetches.isEmpty() ||

!outstandingRpcs.isEmpty() ||

+!streamCallbacks.isEmpty();

+ }

+

/** Returns the time in nanoseconds of when the last request was sent out. */

public long getTimeOfLastRequestNs() {

return timeOfLastRequestNs.get();

diff --git

a/common/network-common/src/main/java/org/apache/spark/network/server/TransportChannelHandler.java

b/common/network-common/src/main/java/org/apache/spark/network/server/TransportChannelHandler.java

index 275e64e..d197032 100644

---

a/common/network-common/src/main/java/org/apache/spark/network/server/TransportChannelHandler.java

+++

b/common/network-common/src/main/java/org/apache/spark/network/server/TransportChannelHandler.java

@@ -161,8 +161,7 @@ public class TransportChannelHandler extends

SimpleChannelInboundHandler

requestTimeoutNs;

if (e.state() == IdleState.ALL_IDLE && isActuallyOverdue) {

- boolean hasInFlightRequests =

responseHandler.numOutstandingRequests() > 0;

- if (hasInFlightRequests) {

+ if (responseHandler.hasOutstandingRequests()) {

String address = getRemoteAddress(ctx.channel());

logger.error("Connection to {} has been quiet for {} ms while

there are outstanding " +

"requests. Assuming connection is dead; please adjust" +

-

To unsubscribe, e-mail: commits-unsubscr...@spark.apache.org

For additional commands, e-mail: commits-h...@spark.apache.org

[spark] branch master updated: [SPARK-37934][BUILD] Upgrade Jetty version to 9.4.44

This is an automated email from the ASF dual-hosted git repository. srowen pushed a commit to branch master in repository https://gitbox.apache.org/repos/asf/spark.git The following commit(s) were added to refs/heads/master by this push: new 2e95c6f [SPARK-37934][BUILD] Upgrade Jetty version to 9.4.44 2e95c6f is described below commit 2e95c6f28d012c88c691ccd28cb04674461ff782 Author: Sajith Ariyarathna AuthorDate: Wed Jan 19 12:19:57 2022 -0600 [SPARK-37934][BUILD] Upgrade Jetty version to 9.4.44 ### What changes were proposed in this pull request? This PR upgrades Jetty version to `9.4.44.v20210927`. ### Why are the changes needed? We would like to have the fix for https://github.com/eclipse/jetty.project/issues/6973 in latest Spark. ### Does this PR introduce _any_ user-facing change? No ### How was this patch tested? CI Closes #35230 from this/upgrade-jetty-9.4.44. Authored-by: Sajith Ariyarathna Signed-off-by: Sean Owen --- dev/deps/spark-deps-hadoop-2-hive-2.3 | 2 +- dev/deps/spark-deps-hadoop-3-hive-2.3 | 4 ++-- pom.xml | 2 +- 3 files changed, 4 insertions(+), 4 deletions(-) diff --git a/dev/deps/spark-deps-hadoop-2-hive-2.3 b/dev/deps/spark-deps-hadoop-2-hive-2.3 index 0227c76..09b01a3 100644 --- a/dev/deps/spark-deps-hadoop-2-hive-2.3 +++ b/dev/deps/spark-deps-hadoop-2-hive-2.3 @@ -145,7 +145,7 @@ jersey-hk2/2.34//jersey-hk2-2.34.jar jersey-server/2.34//jersey-server-2.34.jar jetty-sslengine/6.1.26//jetty-sslengine-6.1.26.jar jetty-util/6.1.26//jetty-util-6.1.26.jar -jetty-util/9.4.43.v20210629//jetty-util-9.4.43.v20210629.jar +jetty-util/9.4.44.v20210927//jetty-util-9.4.44.v20210927.jar jetty/6.1.26//jetty-6.1.26.jar jline/2.14.6//jline-2.14.6.jar joda-time/2.10.12//joda-time-2.10.12.jar diff --git a/dev/deps/spark-deps-hadoop-3-hive-2.3 b/dev/deps/spark-deps-hadoop-3-hive-2.3 index afa4ba5..b7cc91f 100644 --- a/dev/deps/spark-deps-hadoop-3-hive-2.3 +++ b/dev/deps/spark-deps-hadoop-3-hive-2.3 @@ -133,8 +133,8 @@ jersey-container-servlet/2.34//jersey-container-servlet-2.34.jar jersey-hk2/2.34//jersey-hk2-2.34.jar jersey-server/2.34//jersey-server-2.34.jar jettison/1.1//jettison-1.1.jar -jetty-util-ajax/9.4.43.v20210629//jetty-util-ajax-9.4.43.v20210629.jar -jetty-util/9.4.43.v20210629//jetty-util-9.4.43.v20210629.jar +jetty-util-ajax/9.4.44.v20210927//jetty-util-ajax-9.4.44.v20210927.jar +jetty-util/9.4.44.v20210927//jetty-util-9.4.44.v20210927.jar jline/2.14.6//jline-2.14.6.jar joda-time/2.10.12//joda-time-2.10.12.jar jodd-core/3.5.2//jodd-core-3.5.2.jar diff --git a/pom.xml b/pom.xml index 5087113..4f53da0 100644 --- a/pom.xml +++ b/pom.xml @@ -138,7 +138,7 @@ 10.14.2.0 1.12.2 1.7.2 -9.4.43.v20210629 +9.4.44.v20210927 4.0.3 0.10.0 2.5.0 - To unsubscribe, e-mail: commits-unsubscr...@spark.apache.org For additional commands, e-mail: commits-h...@spark.apache.org

[spark] branch master updated (4c59a83 -> 0841579)

This is an automated email from the ASF dual-hosted git repository. srowen pushed a change to branch master in repository https://gitbox.apache.org/repos/asf/spark.git. from 4c59a83 [SPARK-37921][TESTS] Update OrcReadBenchmark to use Hive ORC reader as the basis add 0841579 [SPARK-37901] Upgrade Netty from 4.1.72 to 4.1.73 No new revisions were added by this update. Summary of changes: dev/deps/spark-deps-hadoop-2-hive-2.3 | 28 ++-- dev/deps/spark-deps-hadoop-3-hive-2.3 | 28 ++-- pom.xml | 2 +- 3 files changed, 29 insertions(+), 29 deletions(-) - To unsubscribe, e-mail: commits-unsubscr...@spark.apache.org For additional commands, e-mail: commits-h...@spark.apache.org

[spark] branch master updated: [SPARK-37876][CORE][SQL] Move `SpecificParquetRecordReaderBase.listDirectory` to `TestUtils`

This is an automated email from the ASF dual-hosted git repository.

srowen pushed a commit to branch master

in repository https://gitbox.apache.org/repos/asf/spark.git

The following commit(s) were added to refs/heads/master by this push:

new 7614472 [SPARK-37876][CORE][SQL] Move

`SpecificParquetRecordReaderBase.listDirectory` to `TestUtils`

7614472 is described below

commit 7614472950cb57ffefa0a51dd1163103c5d42df6

Author: yangjie01

AuthorDate: Sat Jan 15 09:01:55 2022 -0600

[SPARK-37876][CORE][SQL] Move

`SpecificParquetRecordReaderBase.listDirectory` to `TestUtils`

### What changes were proposed in this pull request?

`SpecificParquetRecordReaderBase.listDirectory` is used to return the list

of files at `path` recursively and the result will skips files that are ignored

normally by MapReduce.

This method is only used by tests in Spark now and the tests also includes

non-parquet test scenario, such as `OrcColumnarBatchReaderSuite`.

So this pr move this method from `SpecificParquetRecordReaderBase` to

`TestUtils` to make it as a test method.

### Why are the changes needed?

Refactoring: move test method to `TestUtils`.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Pass GA

Closes #35177 from LuciferYang/list-directory.

Authored-by: yangjie01

Signed-off-by: Sean Owen

---

.../src/main/scala/org/apache/spark/TestUtils.scala | 15 +++

.../parquet/SpecificParquetRecordReaderBase.java| 21 -

.../benchmark/DataSourceReadBenchmark.scala | 11 ++-

.../orc/OrcColumnarBatchReaderSuite.scala | 4 ++--

.../datasources/parquet/ParquetEncodingSuite.scala | 11 ++-

.../datasources/parquet/ParquetIOSuite.scala| 6 +++---

.../execution/datasources/parquet/ParquetTest.scala | 3 ++-

.../spark/sql/test/DataFrameReaderWriterSuite.scala | 5 ++---

8 files changed, 36 insertions(+), 40 deletions(-)

diff --git a/core/src/main/scala/org/apache/spark/TestUtils.scala

b/core/src/main/scala/org/apache/spark/TestUtils.scala

index d2af955..505b3ab 100644

--- a/core/src/main/scala/org/apache/spark/TestUtils.scala

+++ b/core/src/main/scala/org/apache/spark/TestUtils.scala

@@ -446,6 +446,21 @@ private[spark] object TestUtils {

current ++ current.filter(_.isDirectory).flatMap(recursiveList)

}

+ /**

+ * Returns the list of files at 'path' recursively. This skips files that

are ignored normally

+ * by MapReduce.

+ */

+ def listDirectory(path: File): Array[String] = {

+val result = ArrayBuffer.empty[String]

+if (path.isDirectory) {

+ path.listFiles.foreach(f => result.appendAll(listDirectory(f)))

+} else {

+ val c = path.getName.charAt(0)

+ if (c != '.' && c != '_') result.append(path.getAbsolutePath)

+}

+result.toArray

+ }

+

/** Creates a temp JSON file that contains the input JSON record. */

def createTempJsonFile(dir: File, prefix: String, jsonValue: JValue): String

= {

val file = File.createTempFile(prefix, ".json", dir)

diff --git

a/sql/core/src/main/java/org/apache/spark/sql/execution/datasources/parquet/SpecificParquetRecordReaderBase.java

b/sql/core/src/main/java/org/apache/spark/sql/execution/datasources/parquet/SpecificParquetRecordReaderBase.java

index e1a0607..07e35c1 100644

---

a/sql/core/src/main/java/org/apache/spark/sql/execution/datasources/parquet/SpecificParquetRecordReaderBase.java

+++

b/sql/core/src/main/java/org/apache/spark/sql/execution/datasources/parquet/SpecificParquetRecordReaderBase.java

@@ -19,10 +19,8 @@

package org.apache.spark.sql.execution.datasources.parquet;

import java.io.Closeable;

-import java.io.File;

import java.io.IOException;

import java.lang.reflect.InvocationTargetException;

-import java.util.ArrayList;

import java.util.Collections;

import java.util.HashMap;

import java.util.HashSet;

@@ -122,25 +120,6 @@ public abstract class SpecificParquetRecordReaderBase

extends RecordReader listDirectory(File path) {

-List result = new ArrayList<>();

-if (path.isDirectory()) {

- for (File f: path.listFiles()) {

-result.addAll(listDirectory(f));

- }

-} else {

- char c = path.getName().charAt(0);

- if (c != '.' && c != '_') {

-result.add(path.getAbsolutePath());

- }

-}

-return result;

- }

-

- /**

* Initializes the reader to read the file at `path` with `columns`

projected. If columns is

* null, all the columns are projected.

*

diff --git

a/sql/core/src/test/scala/org/apache/spark/sql/execution/benchmark/DataSourceReadBenchmark.scala

b/sql/core/src/test/scala/org/apache/spark/sql/execution/benchmark/DataSourceReadBenchmark.scala

index 31cee48..5094cdf 100644

---

a/sql/core/src/test/scala/org/apache/spark/sql/execution/benchmark/DataSo

[spark] branch master updated: [SPARK-37862][SQL] RecordBinaryComparator should fast skip the check of aligning with unaligned platform

This is an automated email from the ASF dual-hosted git repository.

srowen pushed a commit to branch master

in repository https://gitbox.apache.org/repos/asf/spark.git

The following commit(s) were added to refs/heads/master by this push:

new 8ae9707 [SPARK-37862][SQL] RecordBinaryComparator should fast skip

the check of aligning with unaligned platform

8ae9707 is described below

commit 8ae970790814a0080713857261a3b1c2e2b01dd7

Author: ulysses-you

AuthorDate: Sat Jan 15 08:59:56 2022 -0600

[SPARK-37862][SQL] RecordBinaryComparator should fast skip the check of

aligning with unaligned platform

### What changes were proposed in this pull request?

`RecordBinaryComparator` compare the entire row, so it need to check if the

platform is unaligned. #35078 had given the perf number to show the benefits.

So this PR aims to do the same thing that fast skip the check of aligning with

unaligned platform.

### Why are the changes needed?

Improve the performance.

### Does this PR introduce _any_ user-facing change?

no

### How was this patch tested?

Pass CI. And the perf number should be same with #35078

Closes #35161 from ulysses-you/unaligned.

Authored-by: ulysses-you

Signed-off-by: Sean Owen

---

.../java/org/apache/spark/sql/execution/RecordBinaryComparator.java | 5 +++--

1 file changed, 3 insertions(+), 2 deletions(-)

diff --git

a/sql/core/src/main/java/org/apache/spark/sql/execution/RecordBinaryComparator.java

b/sql/core/src/main/java/org/apache/spark/sql/execution/RecordBinaryComparator.java

index 1f24340..e91873a 100644

---

a/sql/core/src/main/java/org/apache/spark/sql/execution/RecordBinaryComparator.java

+++

b/sql/core/src/main/java/org/apache/spark/sql/execution/RecordBinaryComparator.java

@@ -24,6 +24,7 @@ import java.nio.ByteOrder;

public final class RecordBinaryComparator extends RecordComparator {

+ private static final boolean UNALIGNED = Platform.unaligned();

private static final boolean LITTLE_ENDIAN =

ByteOrder.nativeOrder().equals(ByteOrder.LITTLE_ENDIAN);

@@ -41,7 +42,7 @@ public final class RecordBinaryComparator extends

RecordComparator {

// we have guaranteed `leftLen` == `rightLen`.

// check if stars align and we can get both offsets to be aligned

-if ((leftOff % 8) == (rightOff % 8)) {

+if (!UNALIGNED && ((leftOff % 8) == (rightOff % 8))) {

while ((leftOff + i) % 8 != 0 && i < leftLen) {

final int v1 = Platform.getByte(leftObj, leftOff + i);

final int v2 = Platform.getByte(rightObj, rightOff + i);

@@ -52,7 +53,7 @@ public final class RecordBinaryComparator extends

RecordComparator {

}

}

// for architectures that support unaligned accesses, chew it up 8 bytes

at a time

-if (Platform.unaligned() || (((leftOff + i) % 8 == 0) && ((rightOff + i) %

8 == 0))) {

+if (UNALIGNED || (((leftOff + i) % 8 == 0) && ((rightOff + i) % 8 == 0))) {

while (i <= leftLen - 8) {

long v1 = Platform.getLong(leftObj, leftOff + i);

long v2 = Platform.getLong(rightObj, rightOff + i);

-

To unsubscribe, e-mail: commits-unsubscr...@spark.apache.org

For additional commands, e-mail: commits-h...@spark.apache.org

[spark] branch master updated: [SPARK-37854][CORE] Replace type check with pattern matching in Spark code

This is an automated email from the ASF dual-hosted git repository.

srowen pushed a commit to branch master

in repository https://gitbox.apache.org/repos/asf/spark.git

The following commit(s) were added to refs/heads/master by this push:

new c7c51bc [SPARK-37854][CORE] Replace type check with pattern matching

in Spark code

c7c51bc is described below

commit c7c51bcab5cb067d36bccf789e0e4ad7f37ffb7c

Author: yangjie01

AuthorDate: Sat Jan 15 08:54:16 2022 -0600

[SPARK-37854][CORE] Replace type check with pattern matching in Spark code

### What changes were proposed in this pull request?

There are many method use `isInstanceOf + asInstanceOf` for type

conversion in Spark code now, the main change of this pr is replace `type

check` with `pattern matching` for code simplification.

### Why are the changes needed?

Code simplification

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Pass GA

Closes #35154 from LuciferYang/SPARK-37854.

Authored-by: yangjie01

Signed-off-by: Sean Owen

---

.../main/scala/org/apache/spark/TestUtils.scala| 36 ++--

.../main/scala/org/apache/spark/api/r/SerDe.scala | 12 ++--

.../spark/internal/config/ConfigBuilder.scala | 18 +++---

.../scala/org/apache/spark/rdd/HadoopRDD.scala | 64 +++---

.../main/scala/org/apache/spark/rdd/PipedRDD.scala | 7 ++-

core/src/main/scala/org/apache/spark/rdd/RDD.scala | 8 ++-

.../main/scala/org/apache/spark/util/Utils.scala | 38 ++---

.../storage/ShuffleBlockFetcherIteratorSuite.scala | 10 ++--

.../org/apache/spark/util/FileAppenderSuite.scala | 17 +++---

.../scala/org/apache/spark/util/UtilsSuite.scala | 19 ---

.../apache/spark/examples/mllib/LDAExample.scala | 11 ++--

.../spark/mllib/api/python/PythonMLLibAPI.scala| 12 ++--

.../expressions/aggregate/Percentile.scala | 14 ++---

.../apache/spark/sql/catalyst/trees/TreeNode.scala | 7 +--

.../sql/catalyst/encoders/RowEncoderSuite.scala| 11 ++--

.../sql/execution/columnar/ColumnAccessor.scala| 10 ++--

.../spark/sql/execution/columnar/ColumnType.scala | 50 +

.../sql/execution/datasources/FileScanRDD.scala| 19 ---

.../org/apache/spark/sql/jdbc/H2Dialect.scala | 30 +-

.../spark/sql/SparkSessionExtensionSuite.scala | 57 +--

.../sql/execution/joins/BroadcastJoinSuite.scala | 13 ++---

.../apache/spark/sql/streaming/StreamTest.scala| 6 +-

.../sql/hive/client/IsolatedClientLoader.scala | 12 ++--

.../spark/streaming/scheduler/JobGenerator.scala | 10 ++--

.../org/apache/spark/streaming/util/StateMap.scala | 21 +++

25 files changed, 263 insertions(+), 249 deletions(-)

diff --git a/core/src/main/scala/org/apache/spark/TestUtils.scala

b/core/src/main/scala/org/apache/spark/TestUtils.scala

index 20159af..d2af955 100644

--- a/core/src/main/scala/org/apache/spark/TestUtils.scala

+++ b/core/src/main/scala/org/apache/spark/TestUtils.scala

@@ -337,22 +337,26 @@ private[spark] object TestUtils {

connection.setRequestMethod(method)

headers.foreach { case (k, v) => connection.setRequestProperty(k, v) }

-// Disable cert and host name validation for HTTPS tests.

-if (connection.isInstanceOf[HttpsURLConnection]) {

- val sslCtx = SSLContext.getInstance("SSL")

- val trustManager = new X509TrustManager {

-override def getAcceptedIssuers(): Array[X509Certificate] = null

-override def checkClientTrusted(x509Certificates:

Array[X509Certificate],

-s: String): Unit = {}

-override def checkServerTrusted(x509Certificates:

Array[X509Certificate],

-s: String): Unit = {}

- }

- val verifier = new HostnameVerifier() {

-override def verify(hostname: String, session: SSLSession): Boolean =

true

- }

- sslCtx.init(null, Array(trustManager), new SecureRandom())

-

connection.asInstanceOf[HttpsURLConnection].setSSLSocketFactory(sslCtx.getSocketFactory())

- connection.asInstanceOf[HttpsURLConnection].setHostnameVerifier(verifier)

+connection match {

+ // Disable cert and host name validation for HTTPS tests.

+ case httpConnection: HttpsURLConnection =>

+val sslCtx = SSLContext.getInstance("SSL")

+val trustManager = new X509TrustManager {

+ override def getAcceptedIssuers: Array[X509Certificate] = null

+

+ override def checkClientTrusted(x509Certificates:

Array[X509Certificate],

+ s: String): Unit = {}

+

+ override def checkServerTrusted(x509Certificates:

Array[X509Certificate],

+ s: String): Unit = {}

+}

+val verifier = new HostnameVerifier() {

+ override def verify(hostname: String, session: SSLSession): Boolean

= true

+}

+sslCtx.init(null, Array(trust

[spark] branch master updated (c68dcef -> f3eedaf4)

This is an automated email from the ASF dual-hosted git repository. srowen pushed a change to branch master in repository https://gitbox.apache.org/repos/asf/spark.git. from c68dcef [SPARK-37418][PYTHON][ML] Inline annotations for pyspark.ml.param.__init__.py add f3eedaf4 [SPARK-37805][TESTS] Refactor `TestUtils#configTestLog4j` method to use log4j2 api No new revisions were added by this update. Summary of changes: .../main/scala/org/apache/spark/TestUtils.scala| 30 -- .../test/scala/org/apache/spark/DriverSuite.scala | 2 +- .../org/apache/spark/deploy/SparkSubmitSuite.scala | 4 +-- .../WholeStageCodegenSparkSubmitSuite.scala| 2 +- .../spark/sql/hive/HiveSparkSubmitSuite.scala | 20 +++ 5 files changed, 30 insertions(+), 28 deletions(-) - To unsubscribe, e-mail: commits-unsubscr...@spark.apache.org For additional commands, e-mail: commits-h...@spark.apache.org

[spark] branch master updated (c68dcef -> f3eedaf4)

This is an automated email from the ASF dual-hosted git repository. srowen pushed a change to branch master in repository https://gitbox.apache.org/repos/asf/spark.git. from c68dcef [SPARK-37418][PYTHON][ML] Inline annotations for pyspark.ml.param.__init__.py add f3eedaf4 [SPARK-37805][TESTS] Refactor `TestUtils#configTestLog4j` method to use log4j2 api No new revisions were added by this update. Summary of changes: .../main/scala/org/apache/spark/TestUtils.scala| 30 -- .../test/scala/org/apache/spark/DriverSuite.scala | 2 +- .../org/apache/spark/deploy/SparkSubmitSuite.scala | 4 +-- .../WholeStageCodegenSparkSubmitSuite.scala| 2 +- .../spark/sql/hive/HiveSparkSubmitSuite.scala | 20 +++ 5 files changed, 30 insertions(+), 28 deletions(-) - To unsubscribe, e-mail: commits-unsubscr...@spark.apache.org For additional commands, e-mail: commits-h...@spark.apache.org

[spark] branch master updated (c68dcef -> f3eedaf4)

This is an automated email from the ASF dual-hosted git repository. srowen pushed a change to branch master in repository https://gitbox.apache.org/repos/asf/spark.git. from c68dcef [SPARK-37418][PYTHON][ML] Inline annotations for pyspark.ml.param.__init__.py add f3eedaf4 [SPARK-37805][TESTS] Refactor `TestUtils#configTestLog4j` method to use log4j2 api No new revisions were added by this update. Summary of changes: .../main/scala/org/apache/spark/TestUtils.scala| 30 -- .../test/scala/org/apache/spark/DriverSuite.scala | 2 +- .../org/apache/spark/deploy/SparkSubmitSuite.scala | 4 +-- .../WholeStageCodegenSparkSubmitSuite.scala| 2 +- .../spark/sql/hive/HiveSparkSubmitSuite.scala | 20 +++ 5 files changed, 30 insertions(+), 28 deletions(-) - To unsubscribe, e-mail: commits-unsubscr...@spark.apache.org For additional commands, e-mail: commits-h...@spark.apache.org

[spark] branch master updated (c68dcef -> f3eedaf4)

This is an automated email from the ASF dual-hosted git repository. srowen pushed a change to branch master in repository https://gitbox.apache.org/repos/asf/spark.git. from c68dcef [SPARK-37418][PYTHON][ML] Inline annotations for pyspark.ml.param.__init__.py add f3eedaf4 [SPARK-37805][TESTS] Refactor `TestUtils#configTestLog4j` method to use log4j2 api No new revisions were added by this update. Summary of changes: .../main/scala/org/apache/spark/TestUtils.scala| 30 -- .../test/scala/org/apache/spark/DriverSuite.scala | 2 +- .../org/apache/spark/deploy/SparkSubmitSuite.scala | 4 +-- .../WholeStageCodegenSparkSubmitSuite.scala| 2 +- .../spark/sql/hive/HiveSparkSubmitSuite.scala | 20 +++ 5 files changed, 30 insertions(+), 28 deletions(-) - To unsubscribe, e-mail: commits-unsubscr...@spark.apache.org For additional commands, e-mail: commits-h...@spark.apache.org

[spark] branch master updated: [SPARK-37256][SQL] Replace `ScalaObjectMapper` with `ClassTagExtensions` to fix compilation warning

This is an automated email from the ASF dual-hosted git repository.

srowen pushed a commit to branch master

in repository https://gitbox.apache.org/repos/asf/spark.git

The following commit(s) were added to refs/heads/master by this push:

new 3faced8 [SPARK-37256][SQL] Replace `ScalaObjectMapper` with

`ClassTagExtensions` to fix compilation warning

3faced8 is described below

commit 3faced8b2ab922c2a3f25bdf393aa0511d87ccc8

Author: yangjie01

AuthorDate: Tue Jan 11 09:25:49 2022 -0600

[SPARK-37256][SQL] Replace `ScalaObjectMapper` with `ClassTagExtensions` to

fix compilation warning

### What changes were proposed in this pull request?

There are some compilation warning log like follows:

```

[WARNING] [Warn]

/spark/sql/catalyst/src/main/scala/org/apache/spark/sql/catalyst/util/RebaseDateTime.scala:268:

[deprecation

org.apache.spark.sql.catalyst.util.RebaseDateTime.loadRebaseRecords.mapper.$anon

| origin=com.fasterxml.jackson.module.scala.ScalaObjectMapper |

version=2.12.1] trait ScalaObjectMapper in package scala is deprecated (since

2.12.1): ScalaObjectMapper is deprecated because Manifests are not supported in

Scala3

```

Refer to the recommendations of `jackson-module-scala`, this PR use

`ClassTagExtensions` instead of `ScalaObjectMapper` to fix this compilation

warning

### Why are the changes needed?

Fix compilation warning

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

Pass the Jenkins or GitHub Action

Closes #34532 from LuciferYang/fix-ScalaObjectMapper.

Authored-by: yangjie01

Signed-off-by: Sean Owen

---

.../scala/org/apache/spark/sql/catalyst/util/RebaseDateTime.scala | 4 ++--

.../org/apache/spark/sql/catalyst/util/RebaseDateTimeSuite.scala | 4 ++--

.../spark/sql/execution/streaming/state/RocksDBFileManager.scala | 4 ++--

3 files changed, 6 insertions(+), 6 deletions(-)

diff --git

a/sql/catalyst/src/main/scala/org/apache/spark/sql/catalyst/util/RebaseDateTime.scala

b/sql/catalyst/src/main/scala/org/apache/spark/sql/catalyst/util/RebaseDateTime.scala

index 72bb43b..dc1c4db 100644

---

a/sql/catalyst/src/main/scala/org/apache/spark/sql/catalyst/util/RebaseDateTime.scala

+++

b/sql/catalyst/src/main/scala/org/apache/spark/sql/catalyst/util/RebaseDateTime.scala

@@ -25,7 +25,7 @@ import java.util.Calendar.{DAY_OF_MONTH, DST_OFFSET, ERA,

HOUR_OF_DAY, MINUTE, M

import scala.collection.mutable.AnyRefMap

import com.fasterxml.jackson.databind.ObjectMapper

-import com.fasterxml.jackson.module.scala.{DefaultScalaModule,

ScalaObjectMapper}

+import com.fasterxml.jackson.module.scala.{ClassTagExtensions,

DefaultScalaModule}

import org.apache.spark.sql.catalyst.util.DateTimeConstants._

import org.apache.spark.sql.catalyst.util.DateTimeUtils._

@@ -273,7 +273,7 @@ object RebaseDateTime {

// it is 2 times faster in DateTimeRebaseBenchmark.

private[sql] def loadRebaseRecords(fileName: String): AnyRefMap[String,

RebaseInfo] = {

val file = Utils.getSparkClassLoader.getResource(fileName)

-val mapper = new ObjectMapper() with ScalaObjectMapper

+val mapper = new ObjectMapper() with ClassTagExtensions

mapper.registerModule(DefaultScalaModule)

val jsonRebaseRecords = mapper.readValue[Seq[JsonRebaseRecord]](file)

val anyRefMap = new AnyRefMap[String, RebaseInfo]((3 *

jsonRebaseRecords.size) / 2)

diff --git

a/sql/catalyst/src/test/scala/org/apache/spark/sql/catalyst/util/RebaseDateTimeSuite.scala

b/sql/catalyst/src/test/scala/org/apache/spark/sql/catalyst/util/RebaseDateTimeSuite.scala

index 428a0c0..0d3f681c 100644

---

a/sql/catalyst/src/test/scala/org/apache/spark/sql/catalyst/util/RebaseDateTimeSuite.scala

+++

b/sql/catalyst/src/test/scala/org/apache/spark/sql/catalyst/util/RebaseDateTimeSuite.scala

@@ -252,7 +252,7 @@ class RebaseDateTimeSuite extends SparkFunSuite with

Matchers with SQLHelper {

import scala.collection.mutable.ArrayBuffer

import com.fasterxml.jackson.databind.ObjectMapper

-import com.fasterxml.jackson.module.scala.{DefaultScalaModule,

ScalaObjectMapper}

+import com.fasterxml.jackson.module.scala.{ClassTagExtensions,

DefaultScalaModule}

case class RebaseRecord(tz: String, switches: Array[Long], diffs:

Array[Long])

val rebaseRecords = ThreadUtils.parmap(ALL_TIMEZONES, "JSON-rebase-gen",

16) { zid =>

@@ -296,7 +296,7 @@ class RebaseDateTimeSuite extends SparkFunSuite with

Matchers with SQLHelper {

}

val result = new ArrayBuffer[RebaseRecord]()

rebaseRecords.sortBy(_.tz).foreach(result.append(_))

-val mapper = (new ObjectMapper() with ScalaObjectMapper)

+val mapper = (new ObjectMapper() with ClassTagExtensions)

.registerModule(DefaultScalaModule)

.writerWithDefaultPrettyPrinter()

mapper.writeValue(

diff --git

a/sql/core/src/main/scala/org/apache/spark/sql/execution/st

[spark] branch master updated: [SPARK-37796][SQL] ByteArrayMethods arrayEquals should fast skip the check of aligning with unaligned platform

This is an automated email from the ASF dual-hosted git repository. srowen pushed a commit to branch master in repository https://gitbox.apache.org/repos/asf/spark.git The following commit(s) were added to refs/heads/master by this push: new 8c6d312 [SPARK-37796][SQL] ByteArrayMethods arrayEquals should fast skip the check of aligning with unaligned platform 8c6d312 is described below commit 8c6d3123086cf4def7e8be61214dfc9286578169 Author: ulysses-you AuthorDate: Wed Jan 5 09:30:05 2022 -0600 [SPARK-37796][SQL] ByteArrayMethods arrayEquals should fast skip the check of aligning with unaligned platform ### What changes were proposed in this pull request? The method `arrayEquals` in `ByteArrayMethods` is critical function which is used in `UTF8String.` `equals`, `indexOf`,`find` etc. After SPARK-16962, it add the complexity of aligned. It would be better to fast sikip the check of aligning if the platform is unaligned. ### Why are the changes needed? Improve the performance. ### Does this PR introduce _any_ user-facing change? no ### How was this patch tested? Pass CI. Run the benchmark using [unaligned-benchmark](https://github.com/ulysses-you/spark/commit/d14d4bfcfeddcf90ccfe7cc3f6cda426d6d6b7e5), and here is the benchmark result: [JDK8](https://github.com/ulysses-you/spark/actions/runs/1639852573) ``` byte array equals OpenJDK 64-Bit Server VM 1.8.0_312-b07 on Linux 5.11.0-1022-azure Intel(R) Xeon(R) Platinum 8272CL CPU 2.60GHz Byte Array equals:Best Time(ms) Avg Time(ms) Stdev(ms)Rate(M/s) Per Row(ns) Relative Byte Array equals fast 1322 NaN121.0 8.3 1.0X Byte Array equals 3378 3381 3 47.4 21.1 0.4X ``` [JDK11](https://github.com/ulysses-you/spark/actions/runs/1639853330) ``` byte array equals OpenJDK 64-Bit Server VM 11.0.13+8-LTS on Linux 5.11.0-1022-azure Intel(R) Xeon(R) Platinum 8272CL CPU 2.60GHz Byte Array equals:Best Time(ms) Avg Time(ms) Stdev(ms)Rate(M/s) Per Row(ns) Relative Byte Array equals fast 1860 1891 15 86.0 11.6 1.0X Byte Array equals 2913 2921 8 54.9 18.2 0.6X ``` [JDK17](https://github.com/ulysses-you/spark/actions/runs/1639853938) ``` byte array equals OpenJDK 64-Bit Server VM 17.0.1+12-LTS on Linux 5.11.0-1022-azure Intel(R) Xeon(R) Platinum 8171M CPU 2.60GHz Byte Array equals:Best Time(ms) Avg Time(ms) Stdev(ms)Rate(M/s) Per Row(ns) Relative Byte Array equals fast 1543 1602 39103.7 9.6 1.0X Byte Array equals 3027 3029 1 52.9 18.9 0.5X ``` Closes #35078 from ulysses-you/SPARK-37796. Authored-by: ulysses-you Signed-off-by: Sean Owen --- .../spark/unsafe/array/ByteArrayMethods.java | 2 +- .../ByteArrayBenchmark-jdk11-results.txt | 10 .../ByteArrayBenchmark-jdk17-results.txt | 10 sql/core/benchmarks/ByteArrayBenchmark-results.txt | 10 .../execution/benchmark/ByteArrayBenchmark.scala | 66 +- 5 files changed, 83 insertions(+), 15 deletions(-) diff --git a/common/unsafe/src/main/java/org/apache/spark/unsafe/array/ByteArrayMethods.java b/common/unsafe/src/main/java/org/apache/spark/unsafe/array/ByteArrayMethods.java index f3a59e3..5a7e32b 100644 --- a/common/unsafe/src/main/java/org/apache/spark/unsafe/array/ByteArrayMethods.java

[spark] branch master updated (4caface -> 88c7b6a)

This is an automated email from the ASF dual-hosted git repository. srowen pushed a change to branch master in repository https://gitbox.apache.org/repos/asf/spark.git. from 4caface [MINOR] Remove unused imports in Scala 2.13 Repl2Suite add 88c7b6a [SPARK-37719][BUILD] Remove the `-add-exports` compilation option introduced by SPARK-37070 No new revisions were added by this update. Summary of changes: dev/deps/spark-deps-hadoop-2-hive-2.3 | 2 +- dev/deps/spark-deps-hadoop-3-hive-2.3 | 2 +- mllib-local/pom.xml | 5 + mllib/pom.xml | 5 + pom.xml | 20 ++-- project/SparkBuild.scala | 6 +- 6 files changed, 23 insertions(+), 17 deletions(-) - To unsubscribe, e-mail: commits-unsubscr...@spark.apache.org For additional commands, e-mail: commits-h...@spark.apache.org

[spark] branch master updated (4fd1a0c -> 9b94081)

This is an automated email from the ASF dual-hosted git repository. srowen pushed a change to branch master in repository https://gitbox.apache.org/repos/asf/spark.git. from 4fd1a0c [SPARK-37739][BUILD] Upgrade Arrow to 6.0.1 add 9b94081 [MINOR][DOCS] Update pandas_pyspark.rst No new revisions were added by this update. Summary of changes: python/docs/source/development/contributing.rst| 2 +- .../source/user_guide/pandas_on_spark/best_practices.rst | 14 +++--- .../source/user_guide/pandas_on_spark/from_to_dbms.rst | 2 +- .../source/user_guide/pandas_on_spark/pandas_pyspark.rst | 2 +- .../source/user_guide/pandas_on_spark/transform_apply.rst | 8 5 files changed, 14 insertions(+), 14 deletions(-) - To unsubscribe, e-mail: commits-unsubscr...@spark.apache.org For additional commands, e-mail: commits-h...@spark.apache.org

[spark] branch master updated (805e3fb -> 64c79a7)

This is an automated email from the ASF dual-hosted git repository. srowen pushed a change to branch master in repository https://gitbox.apache.org/repos/asf/spark.git. from 805e3fb [SPARK-37462][CORE] Avoid unnecessary calculating the number of outstanding fetch requests and RPCS add 64c79a7 [SPARK-37718][MINOR][DOCS] Demo sql is incorrect No new revisions were added by this update. Summary of changes: docs/sql-ref-null-semantics.md | 2 +- 1 file changed, 1 insertion(+), 1 deletion(-) - To unsubscribe, e-mail: commits-unsubscr...@spark.apache.org For additional commands, e-mail: commits-h...@spark.apache.org

[spark] branch master updated (f6be769 -> 805e3fb)

This is an automated email from the ASF dual-hosted git repository. srowen pushed a change to branch master in repository https://gitbox.apache.org/repos/asf/spark.git. from f6be769 [SPARK-37668][PYTHON] 'Index' object has no attribute 'levels' in pyspark.pandas.frame.DataFrame.insert add 805e3fb [SPARK-37462][CORE] Avoid unnecessary calculating the number of outstanding fetch requests and RPCS No new revisions were added by this update. Summary of changes: .../java/org/apache/spark/network/server/TransportChannelHandler.java | 2 +- 1 file changed, 1 insertion(+), 1 deletion(-) - To unsubscribe, e-mail: commits-unsubscr...@spark.apache.org For additional commands, e-mail: commits-h...@spark.apache.org

[spark] branch master updated (57ca75f -> 6511dbb)

This is an automated email from the ASF dual-hosted git repository. srowen pushed a change to branch master in repository https://gitbox.apache.org/repos/asf/spark.git. from 57ca75f [SPARK-37695][CORE][SHUFFLE] Skip diagnosis ob merged blocks from push-based shuffle add 6511dbb [SPARK-37628][BUILD] Upgrade Netty from 4.1.68 to 4.1.72 No new revisions were added by this update. Summary of changes: dev/deps/spark-deps-hadoop-2-hive-2.3 | 16 +++- dev/deps/spark-deps-hadoop-3-hive-2.3 | 16 +++- pom.xml | 76 ++- project/SparkBuild.scala | 4 +- 4 files changed, 106 insertions(+), 6 deletions(-) - To unsubscribe, e-mail: commits-unsubscr...@spark.apache.org For additional commands, e-mail: commits-h...@spark.apache.org

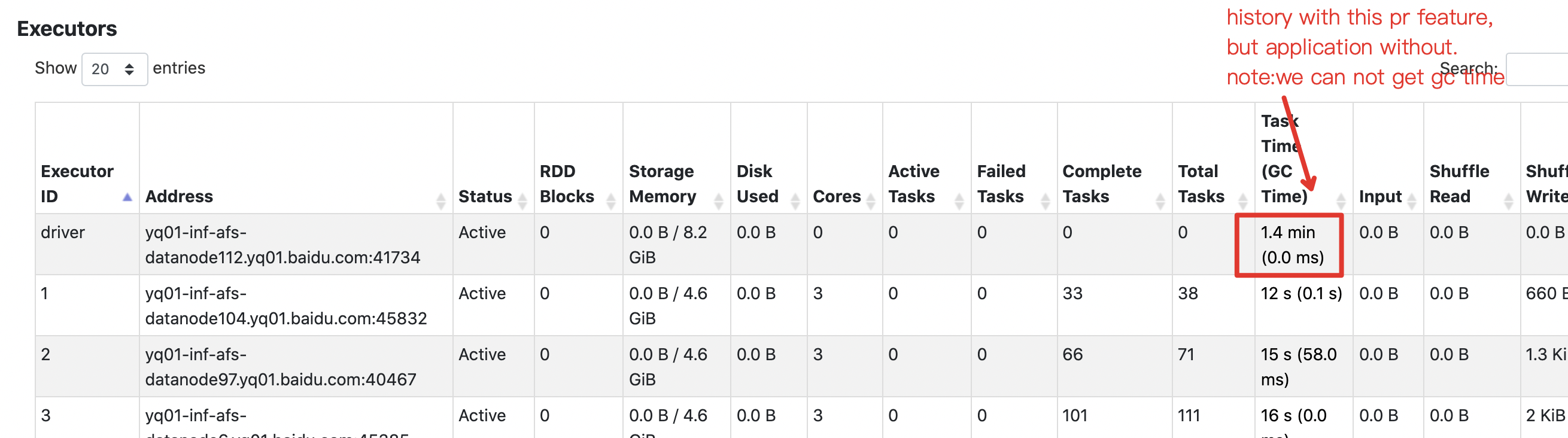

[spark] branch master updated: [SPARK-37493][CORE] show gc time and duration time of driver in executors page

This is an automated email from the ASF dual-hosted git repository.

srowen pushed a commit to branch master

in repository https://gitbox.apache.org/repos/asf/spark.git

The following commit(s) were added to refs/heads/master by this push:

new c2df895 [SPARK-37493][CORE] show gc time and duration time of driver

in executors page

c2df895 is described below

commit c2df895a8cbdfcbffc320e837ad1826933685912

Author: zhoubin11

AuthorDate: Mon Dec 13 08:47:01 2021 -0600

[SPARK-37493][CORE] show gc time and duration time of driver in executors

page

### What changes were proposed in this pull request?

show driver's gc time & duration time(equivalent to application time) of

driver in both driver side and history side UI

### Why are the changes needed?

help user to config driver's resource more appropriately

### Does this PR introduce _any_ user-facing change?

yes,user will see driver's gc time & duration time in executors page .

when `spark.eventLog.logStageExecutorMetrics` is enabled driver's gc time

can be logged.

before this change,user always get zero

### How was this patch tested?

unit tests

Closes #34749 from summaryzb/SPARK-37493.

Authored-by: zhoubin11

Signed-off-by: Sean Owen

---

.../apache/spark/metrics/ExecutorMetricType.scala | 9 ++--

.../org/apache/spark/status/AppStatusStore.scala | 54 +++-

.../complete_stage_list_json_expectation.json | 9 ++--

.../excludeOnFailure_for_stage_expectation.json| 9 ++--

...xcludeOnFailure_node_for_stage_expectation.json | 18 ---

.../executor_list_json_expectation.json| 7 +--

...ist_with_executor_metrics_json_expectation.json | 14 --

.../executor_memory_usage_expectation.json | 32 ++--

...executor_node_excludeOnFailure_expectation.json | 40 ---

...e_excludeOnFailure_unexcluding_expectation.json | 14 --

.../executor_resource_information_expectation.json | 2 +-

.../failed_stage_list_json_expectation.json| 3 +-

..._json_details_with_failed_task_expectation.json | 6 ++-

.../one_stage_attempt_json_expectation.json| 6 ++-

.../one_stage_json_expectation.json| 6 ++-

.../one_stage_json_with_details_expectation.json | 6 ++-

.../stage_list_json_expectation.json | 12 +++--

...age_list_with_accumulable_json_expectation.json | 3 +-

.../stage_list_with_peak_metrics_expectation.json | 9 ++--

.../stage_with_accumulable_json_expectation.json | 6 ++-

.../stage_with_peak_metrics_expectation.json | 9 ++--

...stage_with_speculation_summary_expectation.json | 17 ---

.../stage_with_summaries_expectation.json | 12 +++--

.../scheduler/EventLoggingListenerSuite.scala | 58 +++---

.../org/apache/spark/util/JsonProtocolSuite.scala | 36 +-

25 files changed, 260 insertions(+), 137 deletions(-)

diff --git

a/core/src/main/scala/org/apache/spark/metrics/ExecutorMetricType.scala

b/core/src/main/scala/org/apache/spark/metrics/ExecutorMetricType.scala

index 76e2813..a536919 100644

--- a/core/src/main/scala/org/apache/spark/metrics/ExecutorMetricType.scala

+++ b/core/src/main/scala/org/apache/spark/metrics/ExecutorMetricType.scala

@@ -109,7 +109,8 @@ case object GarbageCollectionMetrics extends

ExecutorMetricType with Logging {

"MinorGCCount",

"MinorGCTime",

"MajorGCCount",

-"MajorGCTime"

+"MajorGCTime",

+"TotalGCTime"

)

/* We builtin some common GC collectors which categorized as young

generation and old */

@@ -136,8 +137,10 @@ case object GarbageCollectionMetrics extends

ExecutorMetricType with Logging {

}

override private[spark] def getMetricValues(memoryManager: MemoryManager):

Array[Long] = {

-val gcMetrics = new Array[Long](names.length) // minorCount, minorTime,

majorCount, majorTime

-ManagementFactory.getGarbageCollectorMXBeans.asScala.foreach { mxBean =>

+val gcMetrics = new Array[Long](names.length)

+val mxBeans = ManagementFactory.getGarbageCollectorMXBeans.asScala

+gcMetrics(4) = mxBeans.map(_.getCollectionTime).sum

+mxBeans.foreach { mxBean =>

if (youngGenerationGarbageCollector.contains(mxBean.getName)) {

gcMetrics(0) = mxBean.getCollectionCount

gcMetrics(1) = mxBean.getCollectionTime

diff --git a/core/src/main/scala/org/apache/spark/status/AppStatusStore.scala

b/core/src/main/scala/org/apache/spark/status/AppStatusStore.scala

index

[spark] branch branch-3.2 updated: [SPARK-37594][SPARK-34399][SQL][TESTS] Make UT more stable

This is an automated email from the ASF dual-hosted git repository.

srowen pushed a commit to branch branch-3.2

in repository https://gitbox.apache.org/repos/asf/spark.git

The following commit(s) were added to refs/heads/branch-3.2 by this push:

new 0bd2dab [SPARK-37594][SPARK-34399][SQL][TESTS] Make UT more stable

0bd2dab is described below

commit 0bd2dab53baaf1ad19a4389e5bcbeb388693d11f

Author: Angerszh

AuthorDate: Fri Dec 10 10:53:31 2021 -0600

[SPARK-37594][SPARK-34399][SQL][TESTS] Make UT more stable

### What changes were proposed in this pull request?

There are some GA test failed caused by UT ` test("SPARK-34399: Add job

commit duration metrics for DataWritingCommand") ` such as

```

sbt.ForkMain$ForkError: org.scalatest.exceptions.TestFailedException: 0 was

not greater than 0

at

org.scalatest.Assertions.newAssertionFailedException(Assertions.scala:472)

at

org.scalatest.Assertions.newAssertionFailedException$(Assertions.scala:471)

at

org.scalatest.Assertions$.newAssertionFailedException(Assertions.scala:1231)

at

org.scalatest.Assertions$AssertionsHelper.macroAssert(Assertions.scala:1295)

at

org.apache.spark.sql.execution.metric.SQLMetricsSuite.$anonfun$new$87(SQLMetricsSuite.scala:810)

at

scala.runtime.java8.JFunction0$mcV$sp.apply(JFunction0$mcV$sp.scala:18)

at org.apache.spark.util.Utils$.tryWithSafeFinally(Utils.scala:1462)

at

org.apache.spark.sql.test.SQLTestUtilsBase.withTable(SQLTestUtils.scala:305)

at

org.apache.spark.sql.test.SQLTestUtilsBase.withTable$(SQLTestUtils.scala:303)

at

org.apache.spark.sql.execution.metric.SQLMetricsSuite.withTable(SQLMetricsSuite.scala:44)

at

org.apache.spark.sql.execution.metric.SQLMetricsSuite.$anonfun$new$86(SQLMetricsSuite.scala:800)

at

org.apache.spark.sql.catalyst.plans.SQLHelper.withSQLConf(SQLHelper.scala:54)

at

org.apache.spark.sql.catalyst.plans.SQLHelper.withSQLConf$(SQLHelper.scala:38)

at

org.apache.spark.sql.execution.metric.SQLMetricsSuite.org$apache$spark$sql$test$SQLTestUtilsBase$$super$withSQLConf(SQLMetricsSuite.scala:44)

at

org.apache.spark.sql.test.SQLTestUtilsBase.withSQLConf(SQLTestUtils.scala:246)

at

org.apache.spark.sql.test.SQLTestUtilsBase.withSQLConf$(SQLTestUtils.scala:244)

at

org.apache.spark.sql.execution.metric.SQLMetricsSuite.withSQLConf(SQLMetricsSuite.scala:44)

at

org.apache.spark.sql.execution.metric.SQLMetricsSuite.$anonfun$new$85(SQLMetricsSuite.scala:800)

at

scala.runtime.java8.JFunction0$mcV$sp.apply(JFunction0$mcV$sp.scala:18)

at

org.apache.spark.sql.execution.adaptive.DisableAdaptiveExecutionSuite.$anonfun$test$5(AdaptiveTestUtils.scala:65)

at

org.apache.spark.sql.catalyst.plans.SQLHelper.withSQLConf(SQLHelper.scala:54)

at

org.apache.spark.sql.catalyst.plans.SQLHelper.withSQLConf$(SQLHelper.scala:38)

at

org.apache.spark.sql.execution.metric.SQLMetricsSuite.org$apache$spark$sql$test$SQLTestUtilsBase$$super$withSQLConf(SQLMetricsSuite.scala:44)

at

org.apache.spark.sql.test.SQLTestUtilsBase.withSQLConf(SQLTestUtils.scala:246)

at

org.apache.spark.sql.test.SQLTestUtilsBase.withSQLConf$(SQLTestUtils.scala:244)

at

org.apache.spark.sql.execution.metric.SQLMetricsSuite.withSQLConf(SQLMetricsSuite.scala:44)

at

org.apache.spark.sql.execution.adaptive.DisableAdaptiveExecutionSuite.$anonfun$test$4(AdaptiveTestUtils.scala:65)

at

scala.runtime.java8.JFunction0$mcV$sp.apply(JFunction0$mcV$sp.scala:18)

at org.scalatest.OutcomeOf.outcomeOf(OutcomeOf.scala:85)

at org.scalatest.OutcomeOf.outcomeOf$(OutcomeOf.scala:83)

at org.scalatest.OutcomeOf$.outcomeOf(OutcomeOf.scala:104)

at org.scalatest.Transformer.apply(Transformer.scala:22)

at org.scalatest.Transformer.apply(Transformer.scala:20)

at

org.scalatest.funsuite.AnyFunSuiteLike$$anon$1.apply(AnyFunSuiteLike.scala:226)

at org.apache.spark.SparkFunSuite.withFixture(SparkFunSuite.scala:190)

at

org.scalatest.funsuite.AnyFunSuiteLike.invokeWithFixture$1(AnyFunSuiteLike.scala:224)

at

org.scalatest.funsuite.AnyFunSuiteLike.$anonfun$runTest$1(AnyFunSuiteLike.scala:236)

at org.scalatest.SuperEngine.runTestImpl(Engine.scala:306)

at

org.scalatest.funsuite.AnyFunSuiteLike.runTest(AnyFunSuiteLike.scala:236)

at

org.scalatest.funsuite.AnyFunSuiteLike.runTest$(AnyFunSuiteLike.scala:218)

at

org.apache.spark.SparkFunSuite.org$scalatest$BeforeAndAfterEach$$super$runTest(SparkFunSuite.scala:62)

at

org.scalatest.BeforeAndAfterEach.runTest(BeforeAndAfterEach.scala:234)

at

org.scalatest.BeforeAndAfterEach.runTest$(BeforeAndAfterEach.scala:227)

at org.apache.spark.SparkFunSuite.runTest(SparkFunSuite.scala:62)

at

org.scalatest.funsuite.AnyFunSuiteLike.$anonfun$

[spark] branch master updated: [SPARK-37594][SPARK-34399][SQL][TESTS] Make UT more stable

This is an automated email from the ASF dual-hosted git repository.

srowen pushed a commit to branch master

in repository https://gitbox.apache.org/repos/asf/spark.git

The following commit(s) were added to refs/heads/master by this push:

new 471a5b5 [SPARK-37594][SPARK-34399][SQL][TESTS] Make UT more stable

471a5b5 is described below

commit 471a5b55b80256ccd253c93623ff363add5f1985

Author: Angerszh

AuthorDate: Fri Dec 10 10:53:31 2021 -0600

[SPARK-37594][SPARK-34399][SQL][TESTS] Make UT more stable

### What changes were proposed in this pull request?

There are some GA test failed caused by UT ` test("SPARK-34399: Add job

commit duration metrics for DataWritingCommand") ` such as

```

sbt.ForkMain$ForkError: org.scalatest.exceptions.TestFailedException: 0 was

not greater than 0

at

org.scalatest.Assertions.newAssertionFailedException(Assertions.scala:472)

at

org.scalatest.Assertions.newAssertionFailedException$(Assertions.scala:471)

at

org.scalatest.Assertions$.newAssertionFailedException(Assertions.scala:1231)

at

org.scalatest.Assertions$AssertionsHelper.macroAssert(Assertions.scala:1295)

at

org.apache.spark.sql.execution.metric.SQLMetricsSuite.$anonfun$new$87(SQLMetricsSuite.scala:810)

at

scala.runtime.java8.JFunction0$mcV$sp.apply(JFunction0$mcV$sp.scala:18)

at org.apache.spark.util.Utils$.tryWithSafeFinally(Utils.scala:1462)

at

org.apache.spark.sql.test.SQLTestUtilsBase.withTable(SQLTestUtils.scala:305)

at

org.apache.spark.sql.test.SQLTestUtilsBase.withTable$(SQLTestUtils.scala:303)

at

org.apache.spark.sql.execution.metric.SQLMetricsSuite.withTable(SQLMetricsSuite.scala:44)

at

org.apache.spark.sql.execution.metric.SQLMetricsSuite.$anonfun$new$86(SQLMetricsSuite.scala:800)

at

org.apache.spark.sql.catalyst.plans.SQLHelper.withSQLConf(SQLHelper.scala:54)

at

org.apache.spark.sql.catalyst.plans.SQLHelper.withSQLConf$(SQLHelper.scala:38)

at

org.apache.spark.sql.execution.metric.SQLMetricsSuite.org$apache$spark$sql$test$SQLTestUtilsBase$$super$withSQLConf(SQLMetricsSuite.scala:44)

at

org.apache.spark.sql.test.SQLTestUtilsBase.withSQLConf(SQLTestUtils.scala:246)

at

org.apache.spark.sql.test.SQLTestUtilsBase.withSQLConf$(SQLTestUtils.scala:244)

at

org.apache.spark.sql.execution.metric.SQLMetricsSuite.withSQLConf(SQLMetricsSuite.scala:44)

at

org.apache.spark.sql.execution.metric.SQLMetricsSuite.$anonfun$new$85(SQLMetricsSuite.scala:800)

at

scala.runtime.java8.JFunction0$mcV$sp.apply(JFunction0$mcV$sp.scala:18)

at

org.apache.spark.sql.execution.adaptive.DisableAdaptiveExecutionSuite.$anonfun$test$5(AdaptiveTestUtils.scala:65)

at

org.apache.spark.sql.catalyst.plans.SQLHelper.withSQLConf(SQLHelper.scala:54)

at

org.apache.spark.sql.catalyst.plans.SQLHelper.withSQLConf$(SQLHelper.scala:38)

at

org.apache.spark.sql.execution.metric.SQLMetricsSuite.org$apache$spark$sql$test$SQLTestUtilsBase$$super$withSQLConf(SQLMetricsSuite.scala:44)

at

org.apache.spark.sql.test.SQLTestUtilsBase.withSQLConf(SQLTestUtils.scala:246)

at

org.apache.spark.sql.test.SQLTestUtilsBase.withSQLConf$(SQLTestUtils.scala:244)

at

org.apache.spark.sql.execution.metric.SQLMetricsSuite.withSQLConf(SQLMetricsSuite.scala:44)

at

org.apache.spark.sql.execution.adaptive.DisableAdaptiveExecutionSuite.$anonfun$test$4(AdaptiveTestUtils.scala:65)

at

scala.runtime.java8.JFunction0$mcV$sp.apply(JFunction0$mcV$sp.scala:18)

at org.scalatest.OutcomeOf.outcomeOf(OutcomeOf.scala:85)

at org.scalatest.OutcomeOf.outcomeOf$(OutcomeOf.scala:83)

at org.scalatest.OutcomeOf$.outcomeOf(OutcomeOf.scala:104)

at org.scalatest.Transformer.apply(Transformer.scala:22)

at org.scalatest.Transformer.apply(Transformer.scala:20)

at

org.scalatest.funsuite.AnyFunSuiteLike$$anon$1.apply(AnyFunSuiteLike.scala:226)

at org.apache.spark.SparkFunSuite.withFixture(SparkFunSuite.scala:190)

at

org.scalatest.funsuite.AnyFunSuiteLike.invokeWithFixture$1(AnyFunSuiteLike.scala:224)

at

org.scalatest.funsuite.AnyFunSuiteLike.$anonfun$runTest$1(AnyFunSuiteLike.scala:236)

at org.scalatest.SuperEngine.runTestImpl(Engine.scala:306)

at

org.scalatest.funsuite.AnyFunSuiteLike.runTest(AnyFunSuiteLike.scala:236)

at

org.scalatest.funsuite.AnyFunSuiteLike.runTest$(AnyFunSuiteLike.scala:218)

at

org.apache.spark.SparkFunSuite.org$scalatest$BeforeAndAfterEach$$super$runTest(SparkFunSuite.scala:62)

at

org.scalatest.BeforeAndAfterEach.runTest(BeforeAndAfterEach.scala:234)

at

org.scalatest.BeforeAndAfterEach.runTest$(BeforeAndAfterEach.scala:227)

at org.apache.spark.SparkFunSuite.runTest(SparkFunSuite.scala:62)

at

org.scalatest.funsuite.AnyFunSuiteLike.$anonfun$runTests$1(AnyFunSuite

[spark] branch master updated (12d3517 -> 16d1c68)

This is an automated email from the ASF dual-hosted git repository.

srowen pushed a change to branch master

in repository https://gitbox.apache.org/repos/asf/spark.git.

from 12d3517 [SPARK-34332][SQL][TEST][FOLLOWUP] Remove unnecessary test

for ALTER NAMESPACE .. SET LOCATION

add 16d1c68 [SPARK-37474][R][DOCS] Migrate SparkR docs to pkgdown

No new revisions were added by this update.

Summary of changes:

.github/workflows/build_and_test.yml | 5 +-

R/create-docs.sh | 14 +

R/pkg/.Rbuildignore| 3 +

R/pkg/.gitignore | 1 +

R/pkg/R/DataFrame.R| 31 +-

R/pkg/R/SQLContext.R | 29 +-

R/pkg/R/functions.R| 17 +-

R/pkg/R/jobj.R | 1 +

R/pkg/R/schema.R | 2 +

R/pkg/R/utils.R| 1 +

R/{ => pkg}/README.md | 0

R/pkg/pkgdown/_pkgdown_template.yml| 311 +

.../pkg/pkgdown/extra.css | 43 ++-

R/pkg/vignettes/sparkr-vignettes.Rmd | 175 ++--

dev/create-release/spark-rm/Dockerfile | 3 +

docs/README.md | 2 +

docs/_plugins/copy_api_dirs.rb | 7 +-

17 files changed, 503 insertions(+), 142 deletions(-)

create mode 100644 R/pkg/.gitignore

rename R/{ => pkg}/README.md (100%)

create mode 100644 R/pkg/pkgdown/_pkgdown_template.yml

copy

sql/catalyst/src/main/java/org/apache/spark/sql/connector/distributions/OrderedDistribution.java

=> R/pkg/pkgdown/extra.css (60%)

-

To unsubscribe, e-mail: commits-unsubscr...@spark.apache.org

For additional commands, e-mail: commits-h...@spark.apache.org

[spark] branch branch-3.0 updated: [SPARK-37556][SQL] Deser void class fail with Java serialization

This is an automated email from the ASF dual-hosted git repository.

srowen pushed a commit to branch branch-3.0

in repository https://gitbox.apache.org/repos/asf/spark.git

The following commit(s) were added to refs/heads/branch-3.0 by this push:

new 113f750 [SPARK-37556][SQL] Deser void class fail with Java

serialization

113f750 is described below

commit 113f75058f99465281cd2065c22c0456c344be71

Author: Daniel Dai

AuthorDate: Tue Dec 7 08:48:23 2021 -0600

[SPARK-37556][SQL] Deser void class fail with Java serialization

**What changes were proposed in this pull request?**

Change the deserialization mapping for primitive type void.

**Why are the changes needed?**

The void primitive type in Scala should be classOf[Unit] not classOf[Void].

Spark erroneously [map

it](https://github.com/apache/spark/blob/v3.2.0/core/src/main/scala/org/apache/spark/serializer/JavaSerializer.scala#L80)

differently than all other primitive types. Here is the code:

```

private object JavaDeserializationStream {

val primitiveMappings = Map[String, Class[_]](

"boolean" -> classOf[Boolean],

"byte" -> classOf[Byte],

"char" -> classOf[Char],

"short" -> classOf[Short],

"int" -> classOf[Int],

"long" -> classOf[Long],

"float" -> classOf[Float],

"double" -> classOf[Double],

"void" -> classOf[Void]

)

}

```

Spark code is Here is the demonstration:

```

scala> classOf[Long]

val res0: Class[Long] = long

scala> classOf[Double]

val res1: Class[Double] = double

scala> classOf[Byte]

val res2: Class[Byte] = byte

scala> classOf[Void]

val res3: Class[Void] = class java.lang.Void <--- this is wrong

scala> classOf[Unit]

val res4: Class[Unit] = void < this is right

```

It will result in Spark deserialization error if the Spark code contains

void primitive type:

`java.io.InvalidClassException: java.lang.Void; local class name

incompatible with stream class name "void"`

**Does this PR introduce any user-facing change?**

no

**How was this patch tested?**

Changed test, also tested e2e with the code results deserialization error

and it pass now.

Closes #34816 from daijyc/voidtype.

Authored-by: Daniel Dai

Signed-off-by: Sean Owen

(cherry picked from commit fb40c0e19f84f2de9a3d69d809e9e4031f76ef90)

Signed-off-by: Sean Owen

---

core/src/main/scala/org/apache/spark/serializer/JavaSerializer.scala | 4 ++--

.../test/scala/org/apache/spark/serializer/JavaSerializerSuite.scala | 2 +-

2 files changed, 3 insertions(+), 3 deletions(-)

diff --git

a/core/src/main/scala/org/apache/spark/serializer/JavaSerializer.scala

b/core/src/main/scala/org/apache/spark/serializer/JavaSerializer.scala

index 077b035..3c13401 100644

--- a/core/src/main/scala/org/apache/spark/serializer/JavaSerializer.scala

+++ b/core/src/main/scala/org/apache/spark/serializer/JavaSerializer.scala

@@ -87,8 +87,8 @@ private object JavaDeserializationStream {

"long" -> classOf[Long],

"float" -> classOf[Float],

"double" -> classOf[Double],

-"void" -> classOf[Void]

- )

+"void" -> classOf[Unit])

+

}

private[spark] class JavaSerializerInstance(

diff --git

a/core/src/test/scala/org/apache/spark/serializer/JavaSerializerSuite.scala

b/core/src/test/scala/org/apache/spark/serializer/JavaSerializerSuite.scala

index 6a6ea42..03349f8 100644

--- a/core/src/test/scala/org/apache/spark/serializer/JavaSerializerSuite.scala

+++ b/core/src/test/scala/org/apache/spark/serializer/JavaSerializerSuite.scala

@@ -47,5 +47,5 @@ private class ContainsPrimitiveClass extends Serializable {

val floatClass = classOf[Float]

val booleanClass = classOf[Boolean]

val byteClass = classOf[Byte]

- val voidClass = classOf[Void]

+ val voidClass = classOf[Unit]

}

-

To unsubscribe, e-mail: commits-unsubscr...@spark.apache.org

For additional commands, e-mail: commits-h...@spark.apache.org

[spark] branch branch-3.1 updated: [SPARK-37556][SQL] Deser void class fail with Java serialization

This is an automated email from the ASF dual-hosted git repository.

srowen pushed a commit to branch branch-3.1

in repository https://gitbox.apache.org/repos/asf/spark.git

The following commit(s) were added to refs/heads/branch-3.1 by this push:

new 2816017 [SPARK-37556][SQL] Deser void class fail with Java

serialization

2816017 is described below

commit 281601739de100521de6009b4a65efc3e922622a

Author: Daniel Dai

AuthorDate: Tue Dec 7 08:48:23 2021 -0600

[SPARK-37556][SQL] Deser void class fail with Java serialization

**What changes were proposed in this pull request?**

Change the deserialization mapping for primitive type void.

**Why are the changes needed?**

The void primitive type in Scala should be classOf[Unit] not classOf[Void].

Spark erroneously [map

it](https://github.com/apache/spark/blob/v3.2.0/core/src/main/scala/org/apache/spark/serializer/JavaSerializer.scala#L80)

differently than all other primitive types. Here is the code:

```

private object JavaDeserializationStream {

val primitiveMappings = Map[String, Class[_]](

"boolean" -> classOf[Boolean],

"byte" -> classOf[Byte],

"char" -> classOf[Char],

"short" -> classOf[Short],

"int" -> classOf[Int],

"long" -> classOf[Long],

"float" -> classOf[Float],

"double" -> classOf[Double],

"void" -> classOf[Void]

)

}

```

Spark code is Here is the demonstration:

```

scala> classOf[Long]

val res0: Class[Long] = long

scala> classOf[Double]

val res1: Class[Double] = double

scala> classOf[Byte]

val res2: Class[Byte] = byte

scala> classOf[Void]

val res3: Class[Void] = class java.lang.Void <--- this is wrong

scala> classOf[Unit]

val res4: Class[Unit] = void < this is right

```

It will result in Spark deserialization error if the Spark code contains

void primitive type:

`java.io.InvalidClassException: java.lang.Void; local class name

incompatible with stream class name "void"`

**Does this PR introduce any user-facing change?**

no

**How was this patch tested?**

Changed test, also tested e2e with the code results deserialization error

and it pass now.

Closes #34816 from daijyc/voidtype.

Authored-by: Daniel Dai

Signed-off-by: Sean Owen

(cherry picked from commit fb40c0e19f84f2de9a3d69d809e9e4031f76ef90)

Signed-off-by: Sean Owen

---

core/src/main/scala/org/apache/spark/serializer/JavaSerializer.scala | 4 ++--

.../test/scala/org/apache/spark/serializer/JavaSerializerSuite.scala | 2 +-

2 files changed, 3 insertions(+), 3 deletions(-)

diff --git

a/core/src/main/scala/org/apache/spark/serializer/JavaSerializer.scala

b/core/src/main/scala/org/apache/spark/serializer/JavaSerializer.scala

index 077b035..3c13401 100644

--- a/core/src/main/scala/org/apache/spark/serializer/JavaSerializer.scala

+++ b/core/src/main/scala/org/apache/spark/serializer/JavaSerializer.scala

@@ -87,8 +87,8 @@ private object JavaDeserializationStream {

"long" -> classOf[Long],

"float" -> classOf[Float],

"double" -> classOf[Double],

-"void" -> classOf[Void]

- )

+"void" -> classOf[Unit])

+

}

private[spark] class JavaSerializerInstance(

diff --git

a/core/src/test/scala/org/apache/spark/serializer/JavaSerializerSuite.scala

b/core/src/test/scala/org/apache/spark/serializer/JavaSerializerSuite.scala

index 6a6ea42..03349f8 100644

--- a/core/src/test/scala/org/apache/spark/serializer/JavaSerializerSuite.scala

+++ b/core/src/test/scala/org/apache/spark/serializer/JavaSerializerSuite.scala

@@ -47,5 +47,5 @@ private class ContainsPrimitiveClass extends Serializable {

val floatClass = classOf[Float]

val booleanClass = classOf[Boolean]

val byteClass = classOf[Byte]

- val voidClass = classOf[Void]

+ val voidClass = classOf[Unit]

}

-

To unsubscribe, e-mail: commits-unsubscr...@spark.apache.org

For additional commands, e-mail: commits-h...@spark.apache.org

[spark] branch branch-3.2 updated: [SPARK-37556][SQL] Deser void class fail with Java serialization

This is an automated email from the ASF dual-hosted git repository.

srowen pushed a commit to branch branch-3.2

in repository https://gitbox.apache.org/repos/asf/spark.git

The following commit(s) were added to refs/heads/branch-3.2 by this push:

new ce414f8 [SPARK-37556][SQL] Deser void class fail with Java

serialization

ce414f8 is described below

commit ce414f82eb69a1888f0a166ce8f3bd3f209b15a6

Author: Daniel Dai

AuthorDate: Tue Dec 7 08:48:23 2021 -0600

[SPARK-37556][SQL] Deser void class fail with Java serialization

**What changes were proposed in this pull request?**

Change the deserialization mapping for primitive type void.

**Why are the changes needed?**

The void primitive type in Scala should be classOf[Unit] not classOf[Void].

Spark erroneously [map

it](https://github.com/apache/spark/blob/v3.2.0/core/src/main/scala/org/apache/spark/serializer/JavaSerializer.scala#L80)

differently than all other primitive types. Here is the code:

```

private object JavaDeserializationStream {

val primitiveMappings = Map[String, Class[_]](

"boolean" -> classOf[Boolean],

"byte" -> classOf[Byte],

"char" -> classOf[Char],

"short" -> classOf[Short],

"int" -> classOf[Int],

"long" -> classOf[Long],

"float" -> classOf[Float],

"double" -> classOf[Double],

"void" -> classOf[Void]

)

}

```

Spark code is Here is the demonstration:

```

scala> classOf[Long]

val res0: Class[Long] = long

scala> classOf[Double]

val res1: Class[Double] = double

scala> classOf[Byte]

val res2: Class[Byte] = byte

scala> classOf[Void]

val res3: Class[Void] = class java.lang.Void <--- this is wrong

scala> classOf[Unit]

val res4: Class[Unit] = void < this is right

```

It will result in Spark deserialization error if the Spark code contains

void primitive type:

`java.io.InvalidClassException: java.lang.Void; local class name

incompatible with stream class name "void"`

**Does this PR introduce any user-facing change?**

no

**How was this patch tested?**

Changed test, also tested e2e with the code results deserialization error

and it pass now.

Closes #34816 from daijyc/voidtype.

Authored-by: Daniel Dai

Signed-off-by: Sean Owen

(cherry picked from commit fb40c0e19f84f2de9a3d69d809e9e4031f76ef90)

Signed-off-by: Sean Owen

---

core/src/main/scala/org/apache/spark/serializer/JavaSerializer.scala | 4 ++--

.../test/scala/org/apache/spark/serializer/JavaSerializerSuite.scala | 2 +-

2 files changed, 3 insertions(+), 3 deletions(-)

diff --git

a/core/src/main/scala/org/apache/spark/serializer/JavaSerializer.scala

b/core/src/main/scala/org/apache/spark/serializer/JavaSerializer.scala

index 077b035..3c13401 100644

--- a/core/src/main/scala/org/apache/spark/serializer/JavaSerializer.scala

+++ b/core/src/main/scala/org/apache/spark/serializer/JavaSerializer.scala

@@ -87,8 +87,8 @@ private object JavaDeserializationStream {

"long" -> classOf[Long],

"float" -> classOf[Float],

"double" -> classOf[Double],

-"void" -> classOf[Void]

- )

+"void" -> classOf[Unit])

+

}

private[spark] class JavaSerializerInstance(

diff --git

a/core/src/test/scala/org/apache/spark/serializer/JavaSerializerSuite.scala

b/core/src/test/scala/org/apache/spark/serializer/JavaSerializerSuite.scala

index 6a6ea42..03349f8 100644

--- a/core/src/test/scala/org/apache/spark/serializer/JavaSerializerSuite.scala

+++ b/core/src/test/scala/org/apache/spark/serializer/JavaSerializerSuite.scala

@@ -47,5 +47,5 @@ private class ContainsPrimitiveClass extends Serializable {

val floatClass = classOf[Float]

val booleanClass = classOf[Boolean]

val byteClass = classOf[Byte]

- val voidClass = classOf[Void]

+ val voidClass = classOf[Unit]

}

-

To unsubscribe, e-mail: commits-unsubscr...@spark.apache.org

For additional commands, e-mail: commits-h...@spark.apache.org

[spark] branch master updated: [SPARK-37556][SQL] Deser void class fail with Java serialization

This is an automated email from the ASF dual-hosted git repository.

srowen pushed a commit to branch master

in repository https://gitbox.apache.org/repos/asf/spark.git

The following commit(s) were added to refs/heads/master by this push:

new fb40c0e [SPARK-37556][SQL] Deser void class fail with Java

serialization

fb40c0e is described below

commit fb40c0e19f84f2de9a3d69d809e9e4031f76ef90

Author: Daniel Dai

AuthorDate: Tue Dec 7 08:48:23 2021 -0600

[SPARK-37556][SQL] Deser void class fail with Java serialization

**What changes were proposed in this pull request?**

Change the deserialization mapping for primitive type void.

**Why are the changes needed?**

The void primitive type in Scala should be classOf[Unit] not classOf[Void].

Spark erroneously [map

it](https://github.com/apache/spark/blob/v3.2.0/core/src/main/scala/org/apache/spark/serializer/JavaSerializer.scala#L80)

differently than all other primitive types. Here is the code:

```

private object JavaDeserializationStream {

val primitiveMappings = Map[String, Class[_]](

"boolean" -> classOf[Boolean],

"byte" -> classOf[Byte],

"char" -> classOf[Char],

"short" -> classOf[Short],

"int" -> classOf[Int],

"long" -> classOf[Long],

"float" -> classOf[Float],

"double" -> classOf[Double],

"void" -> classOf[Void]

)

}

```

Spark code is Here is the demonstration:

```

scala> classOf[Long]

val res0: Class[Long] = long

scala> classOf[Double]

val res1: Class[Double] = double

scala> classOf[Byte]

val res2: Class[Byte] = byte

scala> classOf[Void]

val res3: Class[Void] = class java.lang.Void <--- this is wrong

scala> classOf[Unit]

val res4: Class[Unit] = void < this is right

```

It will result in Spark deserialization error if the Spark code contains

void primitive type:

`java.io.InvalidClassException: java.lang.Void; local class name

incompatible with stream class name "void"`

**Does this PR introduce any user-facing change?**

no

**How was this patch tested?**

Changed test, also tested e2e with the code results deserialization error

and it pass now.

Closes #34816 from daijyc/voidtype.

Authored-by: Daniel Dai

Signed-off-by: Sean Owen

---

core/src/main/scala/org/apache/spark/serializer/JavaSerializer.scala| 2 +-

.../test/scala/org/apache/spark/serializer/JavaSerializerSuite.scala| 2 +-

2 files changed, 2 insertions(+), 2 deletions(-)

diff --git

a/core/src/main/scala/org/apache/spark/serializer/JavaSerializer.scala