[GitHub] [airflow] boring-cyborg[bot] commented on issue #17843: Traceback Error Resulting from Deleting a Dag

boring-cyborg[bot] commented on issue #17843: URL: https://github.com/apache/airflow/issues/17843#issuecomment-906105699 Thanks for opening your first issue here! Be sure to follow the issue template! -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: commits-unsubscr...@airflow.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [airflow] edctorr opened a new issue #17843: Traceback Error Resulting from Deleting a Dag

edctorr opened a new issue #17843: URL: https://github.com/apache/airflow/issues/17843 Python version: 3.8.10 Airflow version: 2.1.3 Hello, Whenever I try to delete a DAG, I receive a Traceback Error. I have tried setting load_examples = False inside airflow.cfg and resetting the airflow db, and still have no luck removing the sample DAGs. Any solutions?
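For context on the `load_examples` setting mentioned in this report: it belongs to the `[core]` section of `airflow.cfg`. The following is a minimal sketch, using only Python's standard `configparser`, of checking which value a given config text would yield. The helper name `examples_enabled` is hypothetical, and this is not how Airflow itself loads its configuration.

```python
import configparser


def examples_enabled(cfg_text: str) -> bool:
    """Return the value of [core] load_examples in the given config text.

    Falls back to True when the option is absent, which matches Airflow's
    documented default for this setting.
    """
    # interpolation=None avoids errors on literal '%' characters in a config.
    parser = configparser.ConfigParser(interpolation=None)
    parser.read_string(cfg_text)
    return parser.getboolean("core", "load_examples", fallback=True)


sample = """
[core]
load_examples = False
"""
print(examples_enabled(sample))  # False
```

If this prints `True` for the file that is actually in use, the edit likely went to a different `airflow.cfg` than the one being read, or the option was placed under another section.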

[GitHub] [airflow] anuj-condenast opened a new issue #17842: Add a config or variable which will schedule the DAG at the same time as given instead of end of that schedule.

anuj-condenast opened a new issue #17842: URL: https://github.com/apache/airflow/issues/17842

**Description**

We all have faced issues with Airflow scheduling, where it schedules a DAG at the end of the given schedule. We want a variable or config that could turn that end of schedule off so that if someone wishes, they could schedule at the exact time as mentioned.

**Use case / motivation**

So generally, if I schedule a task to start daily at 12:00 pm, the problem is it will start at the end of the schedule at 11:59 the next day. For daily, it's not that big of a deal, but when we are trying to run it monthly, we face issues in scheduling it exactly on the 1st of every month at a given time instead of the end of the schedule. We understand Airflow's intention of having the end of the schedule thing, but it would also be great if there is a variable that can be turned off or something in config that can be used to schedule at the exact time and date as mentioned in the schedule. By default, we can have the same thing that airflow still follows, but one extra variable could help many people.
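The "end of schedule" behaviour described in this issue can be sketched with plain `datetime` arithmetic. This is a simplified model that assumes a fixed-length interval; `run_starts_at` is a hypothetical helper, not Airflow's scheduler code.

```python
from datetime import datetime, timedelta


def run_starts_at(logical_date: datetime, interval: timedelta) -> datetime:
    """Wall-clock time at which the run labelled `logical_date` is triggered.

    Simplified model: a run covers [logical_date, logical_date + interval)
    and is only triggered once that interval has ended.
    """
    return logical_date + interval


daily = timedelta(days=1)
# The run labelled 2021-08-25 12:00 starts one full interval later.
print(run_starts_at(datetime(2021, 8, 25, 12, 0), daily))  # 2021-08-26 12:00:00
```

Under this model the gap between the label and the actual start equals the interval length, which is why the effect is more noticeable for monthly schedules than for daily ones.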

[GitHub] [airflow] boring-cyborg[bot] commented on issue #17842: Add a config or variable which will schedule the DAG at the same time as given instead of end of that schedule.

boring-cyborg[bot] commented on issue #17842: URL: https://github.com/apache/airflow/issues/17842#issuecomment-906101685 Thanks for opening your first issue here! Be sure to follow the issue template!

[GitHub] [airflow] aprettyloner edited a comment on issue #10288: gcs_to_bigquery using deprecated methods

aprettyloner edited a comment on issue #10288: URL: https://github.com/apache/airflow/issues/10288#issuecomment-906012109 My team is also seeing the deprecation warning for decorator in `gcs_to_bigquery` on all `GCSToBigQueryOperator` imports. This is noisy and is misleading some of our developers to think we need to make a code change. ``` /home/airflow/.local/lib/python3.8/site-packages/airflow/providers/google/cloud/transfers/gcs_to_bigquery.py:31 DeprecationWarning: This decorator is deprecated. In previous versions, all subclasses of BaseOperator must use apply_default decorator for the`default_args` feature to work properly. In current version, it is optional. The decorator is applied automatically using the metaclass. ```
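Until the provider stops using the deprecated decorator, one possible stopgap is to filter this specific message with Python's standard `warnings` module. This is a sketch of a workaround rather than a fix from the issue; the helper name `silence_apply_defaults_warning` is hypothetical, and the message prefix is copied from the warning text quoted above.

```python
import warnings


def silence_apply_defaults_warning() -> None:
    """Ignore only the 'This decorator is deprecated' DeprecationWarning.

    The `message` argument is a regular expression matched against the start
    of the warning text, so other DeprecationWarnings remain visible.
    """
    warnings.filterwarnings(
        "ignore",
        message=r"This decorator is deprecated",
        category=DeprecationWarning,
    )
```

The filter would need to be installed before the module that emits the warning is imported, since the warning is raised at import time.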

[GitHub] [airflow] aprettyloner edited a comment on issue #10288: gcs_to_bigquery using deprecated methods

aprettyloner edited a comment on issue #10288: URL: https://github.com/apache/airflow/issues/10288#issuecomment-906012109 My team is also seeing the deprecation warning for decorator in `gcs_to_bigquery` on all `GCSToBigQueryOperator` imports. This is noisy and leads some of our developers to think we need to make a code change. ``` /home/airflow/.local/lib/python3.8/site-packages/airflow/providers/google/cloud/transfers/gcs_to_bigquery.py:31 DeprecationWarning: This decorator is deprecated. In previous versions, all subclasses of BaseOperator must use apply_default decorator for the`default_args` feature to work properly. In current version, it is optional. The decorator is applied automatically using the metaclass. ```

[GitHub] [airflow] aprettyloner edited a comment on issue #10288: gcs_to_bigquery using deprecated methods

aprettyloner edited a comment on issue #10288: URL: https://github.com/apache/airflow/issues/10288#issuecomment-906012109 My team is also seeing the deprecation warning for decorator in `gcs_to_bigquery` on all `GCSToBigQueryOperator` imports. This is noisy as it is not coming from our own code. ``` /home/airflow/.local/lib/python3.8/site-packages/airflow/providers/google/cloud/transfers/gcs_to_bigquery.py:31 DeprecationWarning: This decorator is deprecated. ```

[GitHub] [airflow] aprettyloner edited a comment on issue #10288: gcs_to_bigquery using deprecated methods

aprettyloner edited a comment on issue #10288: URL: https://github.com/apache/airflow/issues/10288#issuecomment-906012109 My team is also seeing the deprecation warning. ``` /home/airflow/.local/lib/python3.8/site-packages/airflow/providers/google/cloud/transfers/gcs_to_bigquery.py:31 DeprecationWarning: This decorator is deprecated. ```

[GitHub] [airflow] aprettyloner commented on issue #10288: gcs_to_bigquery using deprecated methods

aprettyloner commented on issue #10288: URL: https://github.com/apache/airflow/issues/10288#issuecomment-906012109 My team is also seeing the deprecation warning

[GitHub] [airflow] andrewgodwin commented on issue #17834: Python Sensor cannot be turned into a Smart Sensor

andrewgodwin commented on issue #17834: URL: https://github.com/apache/airflow/issues/17834#issuecomment-906001148 It's not _quite_ that flexible sadly - Operators must have an async version written along with an accompanying trigger, and since the trigger runs on a different process it still suffers the same serialisation problem. I doubt we'll ever be able to make any generic operator (PythonOperator counting as the "most generic" of all operators) run well at scale unless we change the Operator contract to bake-in multi-tenancy and serializability from the get-go, which seems... unlikely. Deferrable Operators (AIP-40), and Smart Sensors to a similar extent, require that the operator that's being made "more efficient" have a central "delay portion" that can be done from anywhere - for example, waiting on an external system.
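The serialisation problem mentioned in this comment can be illustrated in general terms with the standard `pickle` module. This is only an analogy: Airflow's own serialisation of sensors and triggers works differently, and `survives_process_boundary` is a hypothetical helper. The point it sketches is that plain data describing "what to wait for" can be handed to another process, while an arbitrary Python callable generally cannot.

```python
import pickle


def survives_process_boundary(obj) -> bool:
    """Return True if `obj` can be round-tripped through pickle."""
    try:
        pickle.loads(pickle.dumps(obj))
        return True
    except (pickle.PicklingError, AttributeError, TypeError):
        return False


# Plain data describing an external wait condition serialises fine.
print(survives_process_boundary({"endpoint": "https://example.invalid/status", "poll_seconds": 30}))  # True

# An arbitrary callable, such as one passed to a Python-based sensor, does not.
print(survives_process_boundary(lambda: True))  # False
```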

[GitHub] [airflow] bbenshalom opened a new pull request #17839: Add DAG run abort endpoint

bbenshalom opened a new pull request #17839: URL: https://github.com/apache/airflow/pull/17839 Fixes #15888 The new endpoint will set the DAG run state and all of the task instances' states to FAILED. I couldn't find any tests for the endpoints directly, so did not add a unit test... Please let me know if I missed their location and I'll happily add them!
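The behaviour described in this pull request can be modelled with a few lines of plain Python. The classes and the `abort_dag_run` function below are hypothetical stand-ins written for illustration; they are neither Airflow's models nor the code from the PR.

```python
from dataclasses import dataclass, field
from typing import List

FAILED = "failed"


@dataclass
class TaskInstance:
    """Stand-in for a task instance; only tracks an id and a state."""
    task_id: str
    state: str = "running"


@dataclass
class DagRun:
    """Stand-in for a DAG run that owns its task instances."""
    run_id: str
    state: str = "running"
    task_instances: List[TaskInstance] = field(default_factory=list)


def abort_dag_run(dag_run: DagRun) -> DagRun:
    """Mark the run and every one of its task instances as failed."""
    dag_run.state = FAILED
    for ti in dag_run.task_instances:
        ti.state = FAILED
    return dag_run


run = DagRun("manual__2021-08-25", task_instances=[TaskInstance("extract"), TaskInstance("load")])
abort_dag_run(run)
print(run.state, [ti.state for ti in run.task_instances])  # failed ['failed', 'failed']
```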

[GitHub] [airflow] boring-cyborg[bot] commented on pull request #17839: Add DAG run abort endpoint

boring-cyborg[bot] commented on pull request #17839: URL: https://github.com/apache/airflow/pull/17839#issuecomment-905972040 Congratulations on your first Pull Request and welcome to the Apache Airflow community! If you have any issues or are unsure about anything please check our Contribution Guide (https://github.com/apache/airflow/blob/main/CONTRIBUTING.rst)

Here are some useful points:

- Pay attention to the quality of your code (flake8, mypy and type annotations). Our [pre-commits](https://github.com/apache/airflow/blob/main/STATIC_CODE_CHECKS.rst#prerequisites-for-pre-commit-hooks) will help you with that.
- In case of a new feature add useful documentation (in docstrings or in `docs/` directory). Adding a new operator? Check this short [guide](https://github.com/apache/airflow/blob/main/docs/apache-airflow/howto/custom-operator.rst) Consider adding an example DAG that shows how users should use it.
- Consider using [Breeze environment](https://github.com/apache/airflow/blob/main/BREEZE.rst) for testing locally, it’s a heavy docker but it ships with a working Airflow and a lot of integrations.
- Be patient and persistent. It might take some time to get a review or get the final approval from Committers.
- Please follow [ASF Code of Conduct](https://www.apache.org/foundation/policies/conduct) for all communication including (but not limited to) comments on Pull Requests, Mailing list and Slack.
- Be sure to read the [Airflow Coding style](https://github.com/apache/airflow/blob/main/CONTRIBUTING.rst#coding-style-and-best-practices).

Apache Airflow is a community-driven project and together we are making it better. In case of doubts contact the developers at: Mailing List: d...@airflow.apache.org Slack: https://s.apache.org/airflow-slack

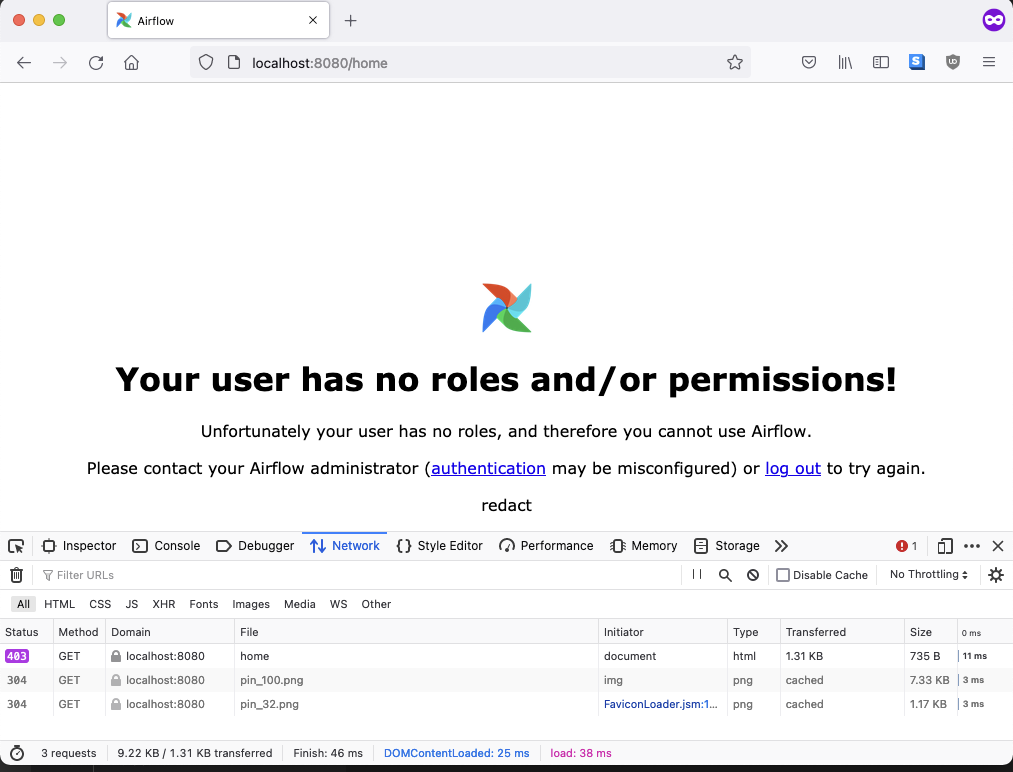

[GitHub] [airflow] jedcunningham opened a new pull request #17838: Avoid redirect loop for users with no permissions

jedcunningham opened a new pull request #17838: URL: https://github.com/apache/airflow/pull/17838 Like we recently did for users with no roles, also handle it gracefully when users have no permissions instead of letting them get stuck in a redirect loop. This also changes the approach to rendering the template as a 403 for the originally requested URI instead of redirecting to a separate endpoint.

[GitHub] [airflow] github-actions[bot] closed pull request #10606: Use private docker repository for K8S Operator & sidecar container

github-actions[bot] closed pull request #10606: URL: https://github.com/apache/airflow/pull/10606

[GitHub] [airflow] github-actions[bot] commented on issue #13853: Clearing of historic Task or DagRuns leads to failed DagRun

github-actions[bot] commented on issue #13853: URL: https://github.com/apache/airflow/issues/13853#issuecomment-905956858 This issue has been automatically marked as stale because it has been open for 30 days with no response from the author. It will be closed in next 7 days if no further activity occurs from the issue author.

[airflow] branch constraints-main updated: Updating constraints. Build id:1168386641

This is an automated email from the ASF dual-hosted git repository.

github-bot pushed a commit to branch constraints-main
in repository https://gitbox.apache.org/repos/asf/airflow.git

The following commit(s) were added to refs/heads/constraints-main by this push:
     new f8339ec Updating constraints. Build id:1168386641
f8339ec is described below

commit f8339ec5090ff8518a7b071a78778927a24c5039
Author: Automated GitHub Actions commit
AuthorDate: Wed Aug 25 23:46:33 2021 +

    Updating constraints. Build id:1168386641

    This update in constraints is automatically committed by the CI 'constraints-push' step based on
    HEAD of 'refs/heads/main' in 'apache/airflow'
    with commit sha 7e93828253bcea2fcf5e528a050b1e4b3194464c.

    All tests passed in this build so we determined we can push the updated constraints.

    See https://github.com/apache/airflow/blob/main/README.md#installing-from-pypi for details.
---
 constraints-3.6.txt | 2 +-
 constraints-3.7.txt | 2 +-
 constraints-3.8.txt | 2 +-
 constraints-3.9.txt | 2 +-
 4 files changed, 4 insertions(+), 4 deletions(-)

diff --git a/constraints-3.6.txt b/constraints-3.6.txt
index 02ce4e3..2dbc114 100644
--- a/constraints-3.6.txt
+++ b/constraints-3.6.txt
@@ -447,7 +447,7 @@ s3transfer==0.4.2
 sasl==0.3.1
 scrapbook==0.5.0
 semver==2.13.0
-sendgrid==6.8.0
+sendgrid==6.8.1
 sentinels==1.0.0
 sentry-sdk==1.3.1
 setproctitle==1.2.2
diff --git a/constraints-3.7.txt b/constraints-3.7.txt
index 602b294..681eda2 100644
--- a/constraints-3.7.txt
+++ b/constraints-3.7.txt
@@ -445,7 +445,7 @@ s3transfer==0.4.2
 sasl==0.3.1
 scrapbook==0.5.0
 semver==2.13.0
-sendgrid==6.8.0
+sendgrid==6.8.1
 sentinels==1.0.0
 sentry-sdk==1.3.1
 setproctitle==1.2.2
diff --git a/constraints-3.8.txt b/constraints-3.8.txt
index 602b294..681eda2 100644
--- a/constraints-3.8.txt
+++ b/constraints-3.8.txt
@@ -445,7 +445,7 @@ s3transfer==0.4.2
 sasl==0.3.1
 scrapbook==0.5.0
 semver==2.13.0
-sendgrid==6.8.0
+sendgrid==6.8.1
 sentinels==1.0.0
 sentry-sdk==1.3.1
 setproctitle==1.2.2
diff --git a/constraints-3.9.txt b/constraints-3.9.txt
index 5611a18..603f176 100644
--- a/constraints-3.9.txt
+++ b/constraints-3.9.txt
@@ -441,7 +441,7 @@ rsa==4.7.2
 s3transfer==0.4.2
 scrapbook==0.5.0
 semver==2.13.0
-sendgrid==6.8.0
+sendgrid==6.8.1
 sentinels==1.0.0
 sentry-sdk==1.3.1
 setproctitle==1.2.2

[GitHub] [airflow] jedcunningham commented on issue #17684: Role permissions and displaying dag load errors on webserver UI

jedcunningham commented on issue #17684: URL: https://github.com/apache/airflow/issues/17684#issuecomment-905944084 Thanks again for the report @kn6405, the fix will be in 2.2.0.

[airflow] branch main updated (ea49c96 -> 7e93828)

This is an automated email from the ASF dual-hosted git repository.

potiuk pushed a change to branch main
in repository https://gitbox.apache.org/repos/asf/airflow.git.

    from ea49c96 Fix typos in docs & ``bug_report`` template (#17809)
     add 7e93828 (docs): update README.md (#17806)

No new revisions were added by this update.

Summary of changes:
 README.md | 88 +++
 1 file changed, 43 insertions(+), 45 deletions(-)

[GitHub] [airflow] boring-cyborg[bot] commented on pull request #17806: (docs): update README.md

boring-cyborg[bot] commented on pull request #17806: URL: https://github.com/apache/airflow/pull/17806#issuecomment-905929533 Awesome work, congrats on your first merged pull request!

[GitHub] [airflow] potiuk merged pull request #17806: (docs): update README.md

potiuk merged pull request #17806: URL: https://github.com/apache/airflow/pull/17806

[GitHub] [airflow] github-actions[bot] commented on pull request #17806: (docs): update README.md

github-actions[bot] commented on pull request #17806: URL: https://github.com/apache/airflow/pull/17806#issuecomment-905929170 The PR is likely ready to be merged. No tests are needed as no important environment files, nor python files were modified by it. However, committers might decide that full test matrix is needed and add the 'full tests needed' label. Then you should rebase it to the latest main or amend the last commit of the PR, and push it with --force-with-lease.

[airflow] branch main updated (45e61a9 -> ea49c96)

This is an automated email from the ASF dual-hosted git repository.

kaxilnaik pushed a change to branch main
in repository https://gitbox.apache.org/repos/asf/airflow.git.

    from 45e61a9 Only show import errors for DAGs a user can access (#17835)
     add ea49c96 Fix typos in docs & ``bug_report`` template (#17809)

No new revisions were added by this update.

Summary of changes:
 .github/ISSUE_TEMPLATE/bug_report.md | 4 ++--
 BREEZE.rst | 8
 CONTRIBUTING.rst | 6 +++---
 README.md | 2 +-
 TESTING.rst | 2 +-
 5 files changed, 11 insertions(+), 11 deletions(-)

[GitHub] [airflow] boring-cyborg[bot] commented on pull request #17809: Fix typos in documentation and command example in comment in bug_report template

boring-cyborg[bot] commented on pull request #17809: URL: https://github.com/apache/airflow/pull/17809#issuecomment-905928284 Awesome work, congrats on your first merged pull request!

[GitHub] [airflow] kaxil merged pull request #17809: Fix typos in documentation and command example in comment in bug_report template

kaxil merged pull request #17809: URL: https://github.com/apache/airflow/pull/17809

[GitHub] [airflow] github-actions[bot] commented on pull request #17809: Fix typos in documentation and command example in comment in bug_report template

github-actions[bot] commented on pull request #17809: URL: https://github.com/apache/airflow/pull/17809#issuecomment-905928116 The PR is likely ready to be merged. No tests are needed as no important environment files, nor python files were modified by it. However, committers might decide that full test matrix is needed and add the 'full tests needed' label. Then you should rebase it to the latest main or amend the last commit of the PR, and push it with --force-with-lease.

[airflow] branch main updated: Only show import errors for DAGs a user can access (#17835)

This is an automated email from the ASF dual-hosted git repository.

kaxilnaik pushed a commit to branch main
in repository https://gitbox.apache.org/repos/asf/airflow.git

The following commit(s) were added to refs/heads/main by this push:
     new 45e61a9 Only show import errors for DAGs a user can access (#17835)
45e61a9 is described below

commit 45e61a965f64feffb18f6e064810a93b61a48c8a
Author: Jed Cunningham <66968678+jedcunning...@users.noreply.github.com>
AuthorDate: Wed Aug 25 16:50:40 2021 -0600

    Only show import errors for DAGs a user can access (#17835)

    For new DAGs (ones that have not previously parsed successfully), import
    errors will only be shown to users who can read all DAGs.

    Closes: #17684
---
 airflow/www/views.py | 12 ++-
 tests/www/views/test_views_base.py | 50 ---
 tests/www/views/test_views_home.py | 180 +
 3 files changed, 190 insertions(+), 52 deletions(-)

diff --git a/airflow/www/views.py b/airflow/www/views.py
index 5a45bda..ceb035f 100644
--- a/airflow/www/views.py
+++ b/airflow/www/views.py
@@ -694,8 +694,16 @@ class Airflow(AirflowBaseView):
         import_errors = session.query(errors.ImportError).order_by(errors.ImportError.id).all()
-        for import_error in import_errors:
-            flash("Broken DAG: [{ie.filename}] {ie.stacktrace}".format(ie=import_error), "dag_import_error")
+        if import_errors:
+            dag_filenames = {dag.fileloc for dag in dags}
+            all_dags_readable = (permissions.ACTION_CAN_READ, permissions.RESOURCE_DAG) in user_permissions
+
+            for import_error in import_errors:
+                if all_dags_readable or import_error.filename in dag_filenames:
+                    flash(
+                        "Broken DAG: [{ie.filename}] {ie.stacktrace}".format(ie=import_error),
+                        "dag_import_error",
+                    )
         from airflow.plugins_manager import import_errors as plugin_import_errors

diff --git a/tests/www/views/test_views_base.py b/tests/www/views/test_views_base.py
index 95b4319..1581588 100644
--- a/tests/www/views/test_views_base.py
+++ b/tests/www/views/test_views_base.py
@@ -18,15 +18,12 @@
 import datetime
 import json
-import flask
 import pytest
 from airflow import version
 from airflow.jobs.base_job import BaseJob
 from airflow.utils import timezone
 from airflow.utils.session import create_session
-from airflow.utils.state import State
-from airflow.www.views import FILTER_STATUS_COOKIE, FILTER_TAGS_COOKIE
 from tests.test_utils.asserts import assert_queries_count
 from tests.test_utils.config import conf_vars
 from tests.test_utils.www import check_content_in_response, check_content_not_in_response
@@ -51,29 +48,6 @@ def test_doc_urls(admin_client):
     check_content_in_response("/api/v1/ui", resp)
-def test_home(capture_templates, admin_client):
-    with capture_templates() as templates:
-        resp = admin_client.get('home', follow_redirects=True)
-        check_content_in_response('DAGs', resp)
-        val_state_color_mapping = (
-            'const STATE_COLOR = {'
-            '"deferred": "lightseagreen", "failed": "red", '
-            '"null": "lightblue", "queued": "gray", '
-            '"removed": "lightgrey", "restarting": "violet", "running": "lime", '
-            '"scheduled": "tan", "sensing": "lightseagreen", '
-            '"shutdown": "blue", "skipped": "pink", '
-            '"success": "green", "up_for_reschedule": "turquoise", '
-            '"up_for_retry": "gold", "upstream_failed": "orange"};'
-        )
-        check_content_in_response(val_state_color_mapping, resp)
-
-    assert len(templates) == 1
-    assert templates[0].name == 'airflow/dags.html'
-    state_color_mapping = State.state_color.copy()
-    state_color_mapping["null"] = state_color_mapping.pop(None)
-    assert templates[0].local_context['state_color'] == state_color_mapping
-
-
 @pytest.fixture()
 def heartbeat_healthy():
     # case-1: healthy scheduler status
@@ -395,30 +369,6 @@ def test_delete_user(app, admin_client, exist_username):
     check_content_in_response("Deleted Row", resp)
-def test_home_filter_tags(admin_client):
-    with admin_client:
-        admin_client.get('home?tags=example=data', follow_redirects=True)
-        assert 'example,data' == flask.session[FILTER_TAGS_COOKIE]
-
-        admin_client.get('home?reset_tags', follow_redirects=True)
-        assert flask.session[FILTER_TAGS_COOKIE] is None
-
-
-def test_home_status_filter_cookie(admin_client):
-    with admin_client:
-        admin_client.get('home', follow_redirects=True)
-        assert 'all' == flask.session[FILTER_STATUS_COOKIE]
-
-        admin_client.get('home?status=active', follow_redirects=True)
-        assert 'active' == flask.session[FILTER_STATUS_COOKIE]
-
-        admin_client.get('home?status=paused', follow_redirects=True)
-        assert 'paused' ==

[GitHub] [airflow] kaxil merged pull request #17835: Only show import errors for DAGs a user can access

kaxil merged pull request #17835: URL: https://github.com/apache/airflow/pull/17835

[GitHub] [airflow] kaxil closed issue #17684: Role permissions and displaying dag load errors on webserver UI

kaxil closed issue #17684: URL: https://github.com/apache/airflow/issues/17684

[GitHub] [airflow] jedcunningham commented on issue #17836: sqlalchemy.exc.ProgrammingError: (psycopg2.errors.UndefinedTable) relation "dag" does not exist

jedcunningham commented on issue #17836: URL: https://github.com/apache/airflow/issues/17836#issuecomment-905916378 @fe2906 please open another issue with details if you found a bug. Thanks.

[GitHub] [airflow] jedcunningham closed issue #17836: sqlalchemy.exc.ProgrammingError: (psycopg2.errors.UndefinedTable) relation "dag" does not exist

jedcunningham closed issue #17836: URL: https://github.com/apache/airflow/issues/17836

[GitHub] [airflow] msolano00 edited a comment on issue #16398: Enhance impersonation on Restful API

msolano00 edited a comment on issue #16398: URL: https://github.com/apache/airflow/issues/16398#issuecomment-905896893 @ashb Hi, thank you for the reply and sorry for the late response, I got pulled into something at work. Yes, that is exactly the behavior: have a property that allows authenticated users (like a service account) to run jobs as other users. `So first step on this: we need to come up with a design for letting some properties of the dag be overridden based on the DagRun.` I will take a look at the core modules of Airflow and reply back here once I have a better understanding of how it is set up.

[GitHub] [airflow] msolano00 commented on issue #16398: Enhance impersonation on Restful API

msolano00 commented on issue #16398: URL: https://github.com/apache/airflow/issues/16398#issuecomment-905896893 @ashb Hi, thank you for the reply! Yes, that is exactly the behavior: have a property that allows authenticated users (like a service account) to run jobs as other users.

[GitHub] [airflow] boring-cyborg[bot] commented on issue #17836: sqlalchemy.exc.ProgrammingError: (psycopg2.errors.UndefinedTable) relation "dag" does not exist

boring-cyborg[bot] commented on issue #17836: URL: https://github.com/apache/airflow/issues/17836#issuecomment-905887844 Thanks for opening your first issue here! Be sure to follow the issue template!

[GitHub] [airflow] fe2906 opened a new issue #17836: sqlalchemy.exc.ProgrammingError: (psycopg2.errors.UndefinedTable) relation "dag" does not exist

fe2906 opened a new issue #17836: URL: https://github.com/apache/airflow/issues/17836 **Apache Airflow version**: **OS**: **Apache Airflow Provider versions**: **Deployment**: **What happened**: **What you expected to happen**: **How to reproduce it**: **Anything else we need to know**: **Are you willing to submit a PR?**

[GitHub] [airflow] jedcunningham opened a new pull request #17835: Only show import errors for DAGs a user can access

jedcunningham opened a new pull request #17835: URL: https://github.com/apache/airflow/pull/17835 We should only show import errors for DAGs that the user can access. For new DAGs (ones that have not previously parsed successfully), import errors will only be shown to users who can read all DAGs. Closes: #17684

[GitHub] [airflow] mnojek commented on a change in pull request #17809: Fix typos in documentation and command example in comment in bug_report template

mnojek commented on a change in pull request #17809: URL: https://github.com/apache/airflow/pull/17809#discussion_r696084606 ## File path: .github/ISSUE_TEMPLATE/bug_report.md ## @@ -19,7 +19,7 @@ Please complete the next sections or the issue will be closed. **OS**: - + Review comment: Can I resolve this thread, @uranusjr ?

[GitHub] [airflow] potiuk edited a comment on pull request #17745: Add triggerer to `docker-compose.yaml` file

potiuk edited a comment on pull request #17745: URL: https://github.com/apache/airflow/pull/17745#issuecomment-905837509 Yep. Because we stopped using it. I am just about to delete the `apache/airflow-ci` images: we switched to `ghcr.io` GitHub images ~2 weeks ago, last week we disabled pushing the CI images to "airflow-ci", and I am just about to remove the whole "airflow-ci". This is the last "checkbox" to complete here: https://github.com/apache/airflow/issues/16555 (but we waited for PRs based on earlier versions of Airflow to catch up). You can find the latest CI images in `ghcr.io`; see https://github.com/apache/airflow/blob/main/IMAGES.rst#naming-conventions You should look at the `ghcr.io/apache/airflow/main/ci/python3.9:latest` image :)

[GitHub] [airflow] ephraimbuddy commented on a change in pull request #17819: Promptly handle task callback from _process_executor_events

ephraimbuddy commented on a change in pull request #17819: URL: https://github.com/apache/airflow/pull/17819#discussion_r696076214

## File path: tests/jobs/test_scheduler_job.py ##
@@ -207,17 +207,18 @@ def test_process_executor_events(self, mock_stats_incr, mock_task_callback, dag_
         session.commit()
         executor.event_buffer[ti1.key] = State.FAILED, None
-
         self.scheduler_job._process_executor_events(session=session)
         ti1.refresh_from_db()
-        assert ti1.state == State.FAILED
+
+        assert ti1.state == State.QUEUED
         mock_task_callback.assert_called_once_with(
             full_filepath='/test_path1/',
             simple_task_instance=mock.ANY,
             msg='Executor reports task instance '
             ' '
             'finished (failed) although the task says its queued. (Info: None) '
             'Was the task killed externally?',
+            task=task1,

Review comment: I'm not sure why this is failing. I would appreciate help here.

[GitHub] [airflow] potiuk commented on pull request #17745: Add triggerer to `docker-compose.yaml` file

potiuk commented on pull request #17745: URL: https://github.com/apache/airflow/pull/17745#issuecomment-905837509 Yep. Because we stopped using it. I am just about to delete the `apache/airflow-ci` images: we switched to `ghcr.io` GitHub images ~2 weeks ago, last week we disabled pushing the CI images to "airflow-ci", and I am just about to remove the whole "airflow-ci". This is the last "checkbox" to complete here: https://github.com/apache/airflow/issues/16555 (but we waited for PRs based on earlier versions of Airflow to catch up). You can find the latest CI images in `ghcr.io`; see https://github.com/apache/airflow/blob/main/IMAGES.rst#naming-conventions You should look at the `ghcr.io/apache/airflow/main/ci/python3.9:latest` image :)

[GitHub] [airflow] potiuk closed issue #17834: Python Sensor cannot be turned into a Smart Sensor

potiuk closed issue #17834: URL: https://github.com/apache/airflow/issues/17834

[GitHub] [airflow] jedcunningham closed pull request #17797: Fix broken MSSQL test

jedcunningham closed pull request #17797: URL: https://github.com/apache/airflow/pull/17797

[GitHub] [airflow] potiuk commented on issue #17834: Python Sensor cannot be turned into a Smart Sensor

potiuk commented on issue #17834: URL: https://github.com/apache/airflow/issues/17834#issuecomment-905831938 It's not supported because it has a "callable", which cannot be serialised. But FEAR NOUGHT! @andrewgodwin is working on implementing AIP-40 (https://cwiki.apache.org/confluence/pages/viewpage.action?pageId=177050929), which is a different (and much more comprehensive) approach to this problem, and there, as far as I understand it, any operator (including the Python Operator) will be able to run in "async" mode. The bad thing: it is going to be available in Airflow 2.2 at the earliest.
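A minimal sketch of why the registration fails, using only the standard library: `json.dumps` has no encoding for a function object, so a poke context that contains `python_callable` raises the same `TypeError` that appears in the issue's log. The names `check_ready` and `poke_context` below are illustrative assumptions, not Airflow code.

```python
import json


def check_ready():
    """Stand-in for a sensor's python_callable (hypothetical)."""
    return True


# Hypothetical poke context shaped like the one described in the issue.
poke_context = {"python_callable": check_ready, "op_kwargs": {}}

try:
    json.dumps(poke_context)
    error_message = None
except TypeError as exc:
    # The standard JSON encoder rejects function objects.
    error_message = str(exc)

# A context holding only plain data serializes without trouble.
encoded_plain = json.dumps({"op_kwargs": {"threshold": 3}})
```

Anything that must pass through `json.dumps` therefore has to be reducible to plain data (strings, numbers, lists, dicts), which a bare function is not.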

[GitHub] [airflow] jedcunningham closed pull request #17397: Update to Celery 5

jedcunningham closed pull request #17397: URL: https://github.com/apache/airflow/pull/17397

[GitHub] [airflow] atorres-eqrx commented on pull request #12809: Add 'headers' to template_fields in HttpSensor

atorres-eqrx commented on pull request #12809: URL: https://github.com/apache/airflow/pull/12809#issuecomment-905821908 > > How does one know in which release of airflow this change has been deployed? > > I'm on airflow 1.10.15 and it seems that `headers` is not included in `template_fields`. > > Probably because you are importing the sensor from `contrib` rather than from `providers`. > install https://pypi.org/project/apache-airflow-backport-providers-http/ > then import it as: > `from airflow.providers.http.sensors.http import HttpSensor` That did the trick. Thank you!

[GitHub] [airflow] eladkal commented on pull request #12809: Add 'headers' to template_fields in HttpSensor

eladkal commented on pull request #12809: URL: https://github.com/apache/airflow/pull/12809#issuecomment-905815868 > How does one know in which release of airflow this change has been deployed? > > I'm on airflow 1.10.15 and it seems that `headers` is not included in `template_fields`. Probably because you are importing the sensor from `contrib` rather than from `providers`. Install https://pypi.org/project/apache-airflow-backport-providers-http/ and then import it as: `from airflow.providers.http.sensors.http import HttpSensor`

[GitHub] [airflow] jbsilva edited a comment on pull request #17745: Add triggerer to `docker-compose.yaml` file

jbsilva edited a comment on pull request #17745: URL: https://github.com/apache/airflow/pull/17745#issuecomment-905808906 I read the 2.2.0 warning, but it seems that the most recent image on Docker Hub (_apache/airflow-ci:main-python3.9_) still does not have the _triggerer_ command: `airflow command error: argument GROUP_OR_COMMAND: invalid choice: 'triggerer' (choose from 'celery', 'cheat-sheet', 'config', 'connections', 'dags', 'db', 'info', 'jobs', 'kerberos', 'kubernetes', 'plugins', 'pools', 'providers', 'roles', 'rotate-fernet-key', 'scheduler', 'standalone', 'sync-perm', 'tasks', 'users', 'variables', 'version', 'webserver'), see help above.`

[GitHub] [airflow] jbsilva commented on pull request #17745: Add triggerer to `docker-compose.yaml` file

jbsilva commented on pull request #17745: URL: https://github.com/apache/airflow/pull/17745#issuecomment-905808906 I read the 2.2.0 warning, but it seems that the most recent Docker Hub image (_apache/airflow-ci:main-python3.9_) still does not have the _triggerer_ command: `airflow command error: argument GROUP_OR_COMMAND: invalid choice: 'triggerer' (choose from 'celery', 'cheat-sheet', 'config', 'connections', 'dags', 'db', 'info', 'jobs', 'kerberos', 'kubernetes', 'plugins', 'pools', 'providers', 'roles', 'rotate-fernet-key', 'scheduler', 'standalone', 'sync-perm', 'tasks', 'users', 'variables', 'version', 'webserver'), see help above.`

[GitHub] [airflow] atorres-eqrx commented on pull request #12809: Add 'headers' to template_fields in HttpSensor

atorres-eqrx commented on pull request #12809: URL: https://github.com/apache/airflow/pull/12809#issuecomment-905808828 How does one know in which release of airflow this change has been deployed? I'm on airflow 1.10.15 and it seems that `headers` is not included in `template_fields`.

[GitHub] [airflow] ephraimbuddy commented on a change in pull request #17819: Promptly handle task callback from _process_executor_events

ephraimbuddy commented on a change in pull request #17819: URL: https://github.com/apache/airflow/pull/17819#discussion_r695882206

## File path: tests/jobs/test_scheduler_job.py ##
@@ -207,17 +207,18 @@ def test_process_executor_events(self, mock_stats_incr, mock_task_callback, dag_
         session.commit()
         executor.event_buffer[ti1.key] = State.FAILED, None
-
         self.scheduler_job._process_executor_events(session=session)
         ti1.refresh_from_db()
-        assert ti1.state == State.FAILED
+
+        assert ti1.state == State.QUEUED
         mock_task_callback.assert_called_once_with(
             full_filepath='/test_path1/',
             simple_task_instance=mock.ANY,
             msg='Executor reports task instance '
             ' '
             'finished (failed) although the task says its queued. (Info: None) '
             'Was the task killed externally?',
+            task=task1,

Review comment: I don't know why the `mock_task_callback` `simple_task_instance` is no longer called with `mock.ANY` but with the real `SimpleTaskInstance` object, causing the test to fail. Any ideas?
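For readers following the question above, here is a small standard-library sketch of how `mock.ANY` behaves inside `assert_called_once_with`. It is not a diagnosis of the failing test; the callback and its arguments are stand-ins. `mock.ANY` compares equal to whatever object was passed, and the failure message always prints the real object in the "Actual" call, so a mismatch usually comes from a different argument, for example an expected keyword that the call never received.

```python
from unittest import mock

# Stand-in for the mocked callback; the arguments are illustrative only.
callback = mock.Mock()
real_instance = object()
callback(full_filepath="/test_path1/", simple_task_instance=real_instance, msg="failed")

# mock.ANY matches any value, so this assertion passes even though the
# callback received a real object for simple_task_instance.
callback.assert_called_once_with(
    full_filepath="/test_path1/",
    simple_task_instance=mock.ANY,
    msg="failed",
)

# Expecting an extra keyword that the call did not receive fails, and the
# error text shows the real object, not mock.ANY, in the "Actual" call.
try:
    callback.assert_called_once_with(
        full_filepath="/test_path1/",
        simple_task_instance=mock.ANY,
        msg="failed",
        task="task1",
    )
    extra_kwarg_mismatch = False
except AssertionError:
    extra_kwarg_mismatch = True
```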

[airflow] branch constraints-main updated: Updating constraints. Build id:1167561582

This is an automated email from the ASF dual-hosted git repository.

github-bot pushed a commit to branch constraints-main in repository https://gitbox.apache.org/repos/asf/airflow.git

The following commit(s) were added to refs/heads/constraints-main by this push:
     new 62166ec Updating constraints. Build id:1167561582
62166ec is described below

commit 62166ec1f6c0393478f538a2706b45f62698a6c5
Author: Automated GitHub Actions commit
AuthorDate: Wed Aug 25 18:49:13 2021 +

    Updating constraints. Build id:1167561582

    This update in constraints is automatically committed by the CI 'constraints-push' step based on HEAD of 'refs/heads/main' in 'apache/airflow' with commit sha a759499c8d741b8d679c683d1ee2757dd42e1cd2.

    All tests passed in this build so we determined we can push the updated constraints.

    See https://github.com/apache/airflow/blob/main/README.md#installing-from-pypi for details.
---
 constraints-3.6.txt | 2 +-
 constraints-3.7.txt | 2 +-
 constraints-3.8.txt | 2 +-
 constraints-3.9.txt | 2 +-
 4 files changed, 4 insertions(+), 4 deletions(-)

diff --git a/constraints-3.6.txt b/constraints-3.6.txt
index b4bd824..02ce4e3 100644
--- a/constraints-3.6.txt
+++ b/constraints-3.6.txt
@@ -235,7 +235,7 @@ google-cloud-audit-log==0.1.0
 google-cloud-automl==2.4.2
 google-cloud-bigquery-datatransfer==3.3.1
 google-cloud-bigquery-storage==2.6.3
-google-cloud-bigquery==2.25.0
+google-cloud-bigquery==2.25.1
 google-cloud-bigtable==1.7.0
 google-cloud-container==1.0.1
 google-cloud-core==1.7.2
diff --git a/constraints-3.7.txt b/constraints-3.7.txt
index 62368bc..602b294 100644
--- a/constraints-3.7.txt
+++ b/constraints-3.7.txt
@@ -233,7 +233,7 @@ google-cloud-audit-log==0.1.0
 google-cloud-automl==2.4.2
 google-cloud-bigquery-datatransfer==3.3.1
 google-cloud-bigquery-storage==2.6.3
-google-cloud-bigquery==2.25.0
+google-cloud-bigquery==2.25.1
 google-cloud-bigtable==1.7.0
 google-cloud-container==1.0.1
 google-cloud-core==1.7.2
diff --git a/constraints-3.8.txt b/constraints-3.8.txt
index 62368bc..602b294 100644
--- a/constraints-3.8.txt
+++ b/constraints-3.8.txt
@@ -233,7 +233,7 @@ google-cloud-audit-log==0.1.0
 google-cloud-automl==2.4.2
 google-cloud-bigquery-datatransfer==3.3.1
 google-cloud-bigquery-storage==2.6.3
-google-cloud-bigquery==2.25.0
+google-cloud-bigquery==2.25.1
 google-cloud-bigtable==1.7.0
 google-cloud-container==1.0.1
 google-cloud-core==1.7.2
diff --git a/constraints-3.9.txt b/constraints-3.9.txt
index 731032a..5611a18 100644
--- a/constraints-3.9.txt
+++ b/constraints-3.9.txt
@@ -230,7 +230,7 @@ google-cloud-audit-log==0.1.0
 google-cloud-automl==2.4.2
 google-cloud-bigquery-datatransfer==3.3.1
 google-cloud-bigquery-storage==2.6.3
-google-cloud-bigquery==2.25.0
+google-cloud-bigquery==2.25.1
 google-cloud-bigtable==1.7.0
 google-cloud-container==1.0.1
 google-cloud-core==1.7.2

[GitHub] [airflow] potiuk commented on issue #17833: AwsBaseHook isn't using connection.host, using connection.extra.host instead

potiuk commented on issue #17833: URL: https://github.com/apache/airflow/issues/17833#issuecomment-905773352 Nice one. @alwaysmpe - I can assign you this one. You can implement it so that it checks whether "host" is in extra and uses it from there if present (+ raises a deprecation warning), and falls back to `connection.host` otherwise. This will make it backwards compatible (and we will be able to remove it in the future).
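The fallback described above could be sketched roughly as follows. This is an illustration under stated assumptions, not the `AwsBaseHook` implementation: `resolve_host` is a hypothetical helper, and the connection is reduced to a host string plus an already-parsed `extra` dictionary.

```python
import warnings
from typing import Any, Dict, Optional


def resolve_host(connection_host: Optional[str], extra: Dict[str, Any]) -> Optional[str]:
    """Hypothetical helper: prefer the legacy extra["host"], else the connection host."""
    if "host" in extra:
        # Legacy location: still honoured for backwards compatibility,
        # but flagged so it can be removed in a later release.
        warnings.warn(
            "Reading 'host' from the connection extra is deprecated; "
            "set the connection host instead.",
            DeprecationWarning,
            stacklevel=2,
        )
        return extra["host"]
    return connection_host


with warnings.catch_warnings(record=True) as caught:
    warnings.simplefilter("always")
    legacy = resolve_host("https://new.example.com", {"host": "https://old.example.com"})
    current = resolve_host("https://new.example.com", {})
```

Checking the extra first keeps existing connections working unchanged, while the warning tells users to move the value to the connection's own host field.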

[GitHub] [airflow] potiuk edited a comment on issue #17828: Docker image entrypoint does not parse redis URLs correctly with no password

potiuk edited a comment on issue #17828: URL: https://github.com/apache/airflow/issues/17828#issuecomment-905761599 1. How do you set your broker variable? 2. Can you show us the result of `echo "'$(airflow config get-value celery broker_url)'"`? I added ' to see the boundaries of what it prints.

[GitHub] [airflow] potiuk commented on issue #17828: Docker image entrypoint does not parse redis URLs correctly with no password

potiuk commented on issue #17828: URL: https://github.com/apache/airflow/issues/17828#issuecomment-905761599 1. How do you set your broker variable? 2. Can you show us the result of `echo "'$(airflow config get-value celery broker_url)'"`? I added ' to see the boundaries of what it prints.

[GitHub] [airflow] potiuk edited a comment on issue #17828: Docker image entrypoint does not parse redis URLs correctly with no password

potiuk edited a comment on issue #17828: URL: https://github.com/apache/airflow/issues/17828#issuecomment-905758371 BTW, the regexp pattern is correct. It should nicely match `redis://redis-analytics-airflow.signal:6379/1`. I am just thinking that maybe it's about an end-of-line or " " returned by `airflow config get-value celery broker_url`.
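To illustrate the end-of-line hypothesis, here is a small sketch using Python's standard `urllib.parse` rather than the entrypoint's actual shell regexp (which is not reproduced here). The `raw_output` value simulates command output with a trailing newline and is an assumption; the URL itself is the one quoted in the comment.

```python
from urllib.parse import urlsplit

# Simulated output of the config command, with a trailing newline (assumption).
raw_output = "redis://redis-analytics-airflow.signal:6379/1\n"

# repr() makes invisible trailing characters visible, much like wrapping the
# value in quotes with echo does.
visible = repr(raw_output)

# Strip surrounding whitespace before splitting the URL into its parts.
parts = urlsplit(raw_output.strip())
scheme, host, port = parts.scheme, parts.hostname, parts.port

# A URL without credentials simply has no password component.
password = parts.password
```

If the raw value carries a newline or space, stripping it before matching is a cheap way to rule that cause in or out.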

[GitHub] [airflow] cablespaghetti edited a comment on pull request #17211: Chart: Use stable API versions where available

cablespaghetti edited a comment on pull request #17211: URL: https://github.com/apache/airflow/pull/17211#issuecomment-905692061 Ok hopefully I've done enough now. Also upgraded the default it tests against to 1.22. Rerunning everything locally to check this hasn't broken anything at the moment. edit: They passed

[GitHub] [airflow] potiuk commented on issue #17828: Docker image entrypoint does not parse redis URLs correctly with no password

potiuk commented on issue #17828: URL: https://github.com/apache/airflow/issues/17828#issuecomment-905758371 BTW, the regexp pattern is correct. It should nicely match `redis://redis-analytics-airflow.signal:6379/1`. I am just thinking that maybe it's about an end-of-line returned by `airflow config get-value celery broker_url`.

[airflow] branch main updated (96f7e3f -> a759499)

This is an automated email from the ASF dual-hosted git repository.

potiuk pushed a change to branch main in repository https://gitbox.apache.org/repos/asf/airflow.git.

    from 96f7e3f Increase width for Run column (#17817)
     add a759499 Update INTHEWILD.md (#17832)

No new revisions were added by this update.

Summary of changes:
 INTHEWILD.md | 1 +
 1 file changed, 1 insertion(+)

[GitHub] [airflow] boring-cyborg[bot] commented on pull request #17832: Update INTHEWILD.md

boring-cyborg[bot] commented on pull request #17832: URL: https://github.com/apache/airflow/pull/17832#issuecomment-905751884 Awesome work, congrats on your first merged pull request!

[GitHub] [airflow] potiuk merged pull request #17832: Update INTHEWILD.md

potiuk merged pull request #17832: URL: https://github.com/apache/airflow/pull/17832

[GitHub] [airflow] jeffvswanson opened a new issue #17834: Python Sensor cannot be turned into a Smart Sensor

jeffvswanson opened a new issue #17834:
URL: https://github.com/apache/airflow/issues/17834

**Apache Airflow version**: 2.02

**OS**: Amazon Linux AMI

**Apache Airflow Provider versions**: apache-airflow-providers-amazon, v2.1.0

**Deployment**: AWS MWAA

**What happened**: Setting up a Smart Python Sensor in AWS MWAA always reverts to a normal Python Sensor task. This is because the Sensor Instance cannot JSON serialize the python callable function.

Error message

```

[2021-08-25 16:41:57,686] {{taskinstance.py:1301}} WARNING - Failed to register in sensor service.Continue to run task in non smart sensor mode.
[2021-08-25 16:41:57,705] {{taskinstance.py:1303}} ERROR - Object of type function is not JSON serializable
Traceback (most recent call last):
  File "/usr/local/lib/python3.7/site-packages/airflow/models/taskinstance.py", line 1298, in _prepare_and_execute_task_with_callbacks
    registered = task_copy.register_in_sensor_service(self, context)
  File "/usr/local/lib/python3.7/site-packages/airflow/sensors/base.py", line 163, in register_in_sensor_service
    return SensorInstance.register(ti, poke_context, execution_context)
  File "/usr/local/lib/python3.7/site-packages/airflow/utils/session.py", line 70, in wrapper
    return func(*args, session=session, **kwargs)
  File "/usr/local/lib/python3.7/site-packages/airflow/models/sensorinstance.py", line 114, in register
    encoded_poke = json.dumps(poke_context)
  File "/usr/lib64/python3.7/json/__init__.py", line 231, in dumps
    return _default_encoder.encode(obj)
  File "/usr/lib64/python3.7/json/encoder.py", line 199, in encode
    chunks = self.iterencode(o, _one_shot=True)
  File "/usr/lib64/python3.7/json/encoder.py", line 257, in iterencode
    return _iterencode(o, 0)
  File "/usr/lib64/python3.7/json/encoder.py", line 179, in default
    raise TypeError(f'Object of type {o.__class__.__name__} '
TypeError: Object of type function is not JSON serializable
[2021-08-25 16:41:57,726] {{python.py:72}} INFO - Poking callable:

```

**What you expected to happen**: I expected a Python Sensor to be capable of being cast as a Smart Sensor with the appropriate legwork, similar to how the Amazon Airflow sensors can be classed as smart sensors.

**How to reproduce it**:

1. Set up the Apache Airflow environment configuration to support a `SmartPythonSensor` with `poke_context_fields` of `("python_callable", "op_kwargs")`.
2. Create a child class, `SmartPythonSensor`, that inherits from `PythonSensor` and is registered to be smart sensor compatible.
3. Write a test DAG with a `SmartPythonSensor` task with a simple python callable.
4. Watch the logs as the test DAG complains that the `SmartPythonSensor` cannot be registered with the sensor service because the python callable it relies upon cannot be JSON serialized.

**Anything else we need to know**:

Downgrading a registered smart sensor to a regular sensor task will occur every time a DAG with a `SmartPythonSensor` runs, because the `SensorInstance` cannot serialize a python callable.

My `SmartPythonSensor` class:

```

from airflow.sensors.python import PythonSensor
from airflow.utils.decorators import apply_defaults


class SmartPythonSensor(PythonSensor):
    poke_context_fields = ("python_callable", "op_kwargs")

    @apply_defaults
    def __init__(self, **kwargs):
        super().__init__(**kwargs)

    def is_smart_sensor_compatible(self):
        # Smart sensor cannot have on success callback
        self.on_success_callback = None
        # Smart sensor cannot have on retry callback
        self.on_retry_callback = None
        # Smart sensor cannot have on failure callback
        self.on_failure_callback = None
        # Smart sensor cannot mark the task as SKIPPED on failure
        self.soft_fail = False
        return super().is_smart_sensor_compatible()

```

**Are you willing to submit a PR?**


[GitHub] [airflow] boring-cyborg[bot] commented on issue #17834: Python Sensor cannot be turned into a Smart Sensor

boring-cyborg[bot] commented on issue #17834: URL: https://github.com/apache/airflow/issues/17834#issuecomment-905751259 Thanks for opening your first issue here! Be sure to follow the issue template!

[GitHub] [airflow] potiuk commented on pull request #15177: Import connections from a file

potiuk commented on pull request #15177: URL: https://github.com/apache/airflow/pull/15177#issuecomment-905751128 And answering your other question @mm-lehmann: in case you have not noticed, the "import" command is the counterpart of the "export" command (https://airflow.apache.org/docs/apache-airflow/stable/cli-and-env-variables-ref.html#export), whose output you can inspect to check the format. If you really think the format needs to be described, you are absolutely invited to contribute documentation for both export and import after reverse engineering it! I am not kidding. I think this might be a valuable contribution and a great way to repay all the countless, often unpaid, hours other contributors have spent building Airflow (which you can now use for free).

[GitHub] [airflow] subkanthi commented on pull request #17068: Influxdb Hook

subkanthi commented on pull request #17068: URL: https://github.com/apache/airflow/pull/17068#issuecomment-905734948 > LGTM and happy to merge as soon as you rebase @subkanthi - there were some unrelated errors in main (fixed since then). Can you please rebase? @potiuk, the docs are fixed now.

[GitHub] [airflow] potiuk edited a comment on issue #17828: Docker image entrypoint does not parse redis URLs correctly with no password

potiuk edited a comment on issue #17828: URL: https://github.com/apache/airflow/issues/17828#issuecomment-905724680 Hm, I somehow have a feeling (do not know yet) that it could be a side-effect of this change, which, instead of reading the `__CELERY__` env variable, uses `airflow config get-value`: https://github.com/apache/airflow/pull/17069 Do you maybe have some other way of defining the broker? A secret backend? Could you please tell us what `airflow config get-value celery broker_url` returns? In the meantime you can work around it by setting the ``CONNECTION_CHECK_MAX_COUNT`` env variable to 0.

[GitHub] [airflow] potiuk edited a comment on issue #17828: Docker image entrypoint does not parse redis URLs correctly with no password

potiuk edited a comment on issue #17828: URL: https://github.com/apache/airflow/issues/17828#issuecomment-905724680 Hm, I somehow have a feeling (do not know yet) that it could be a side effect of this change, which instead of reading the `__CELERY__` env variable uses `airflow get config` (https://github.com/apache/airflow/pull/17069). Do you maybe have some other way of defining the broker? A secret backend? Could you please tell what `airflow config get-value celery broker_url` returns? In the meantime you can work around it by setting the ``CONNECTION_CHECK_MAX_COUNT`` env variable to 0.

[GitHub] [airflow] potiuk commented on issue #17828: Docker image entrypoint does not parse redis URLs correctly with no password

potiuk commented on issue #17828: URL: https://github.com/apache/airflow/issues/17828#issuecomment-905724680 Hm, I somehow have a feeling (do not know yet) that it could be a side effect of this change, which instead of running the `__CELERY__` env variable uses `airflow get config` (https://github.com/apache/airflow/pull/17069). Do you maybe have some other way of defining the broker? A secret backend? Could you please tell what `airflow config get-value celery broker_url` returns? In the meantime you can work around it by setting the ``CONNECTION_CHECK_MAX_COUNT`` env variable to 0.
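To make the "no password" case in issue #17828 concrete, here is a minimal Python sketch of how a broker URL splits into parts. It is not the Docker entrypoint's actual logic; the function name `describe_broker_url` and the example URLs are assumptions made purely for illustration. The value to feed in would be whatever `airflow config get-value celery broker_url` returns.

```python
from urllib.parse import urlsplit


def describe_broker_url(url: str) -> dict:
    """Split a broker URL into the parts a connectivity check would need.

    Hypothetical helper for illustration only; not part of Airflow.
    """
    parts = urlsplit(url)
    return {
        "scheme": parts.scheme,
        "host": parts.hostname,
        "port": parts.port,
        # password is None when the URL carries no credentials at all
        "has_password": parts.password is not None,
    }


# Example URLs are made up; substitute your own broker_url value.
print(describe_broker_url("redis://redis:6379/0"))
print(describe_broker_url("redis://:secret@redis:6379/0"))
```

Both forms should yield the same host and port; a parser that assumes an `@` separator is always present would mis-handle the first one.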

[GitHub] [airflow] alwaysmpe opened a new issue #17833: AwsBaseHook isn't using connection.host, using connection.extra.host instead

alwaysmpe opened a new issue #17833: URL: https://github.com/apache/airflow/issues/17833

**Apache Airflow version**: Version: v2.1.2 Git Version: .release:2.1.2+d25854dd413aa68ea70fb1ade7fe01425f456192

**OS**: PRETTY_NAME="Debian GNU/Linux 10 (buster)" NAME="Debian GNU/Linux" VERSION_ID="10" VERSION="10 (buster)" VERSION_CODENAME=buster ID=debian HOME_URL="https://www.debian.org/" SUPPORT_URL="https://www.debian.org/support" BUG_REPORT_URL="https://bugs.debian.org/"

**Apache Airflow Provider versions**:

**Deployment**: Docker Compose, using the reference docker-compose file from here: https://airflow.apache.org/docs/apache-airflow/stable/start/docker.html

**What happened**: Connection specifications have a host field. This is declared as a member variable of the Connection class here: https://github.com/apache/airflow/blob/96f7e3fec76a78f49032fbc9a4ee9a5551f38042/airflow/models/connection.py#L101 It's correctly used in the BaseHook class, e.g. here: https://github.com/apache/airflow/blob/96f7e3fec76a78f49032fbc9a4ee9a5551f38042/airflow/hooks/base.py#L69 However, in AwsBaseHook it's expected to be in extra, here: https://github.com/apache/airflow/blob/96f7e3fec76a78f49032fbc9a4ee9a5551f38042/airflow/providers/amazon/aws/hooks/base_aws.py#L404-L406

**What you expected to happen**: AwsBaseHook should use the connection.host value, not connection.extra.host.

**How to reproduce it**: See the code linked above.

**Anything else we need to know**:

**Are you willing to submit a PR?** Probably. It'd also be a good issue for someone inexperienced.
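The behaviour requested in issue #17833 can be sketched roughly as follows. This is not the code in `AwsBaseHook`: `FakeConnection` is a hypothetical stand-in for Airflow's connection model, `resolve_endpoint` is an invented helper name, and falling back to the `host` key in `extra` is an assumption about how backward compatibility might be kept.

```python
import json
from dataclasses import dataclass
from typing import Optional


@dataclass
class FakeConnection:
    """Hypothetical stand-in for an Airflow connection; not the real class."""

    host: Optional[str] = None
    extra: str = "{}"  # JSON-encoded string of extra settings


def resolve_endpoint(conn: FakeConnection) -> Optional[str]:
    """Prefer conn.host; fall back to the 'host' key in the extra JSON."""
    if conn.host:
        return conn.host
    return json.loads(conn.extra or "{}").get("host")


# Example values are made up for illustration.
print(resolve_endpoint(FakeConnection(host="http://localhost:4566")))
print(resolve_endpoint(FakeConnection(extra='{"host": "http://minio:9000"}')))
```

Checking the dedicated field first and `extra` second would let existing connections that only set `extra.host` keep working, which is one plausible design; the issue itself only asks that `connection.host` be honoured.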

[GitHub] [airflow] boring-cyborg[bot] commented on issue #17833: AwsBaseHook isn't using connection.host, using connection.extra.host instead

boring-cyborg[bot] commented on issue #17833: URL: https://github.com/apache/airflow/issues/17833#issuecomment-905723673 Thanks for opening your first issue here! Be sure to follow the issue template!

[GitHub] [airflow] Jorricks commented on pull request #16634: Require can_edit on DAG privileges to modify TaskInstances and DagRuns

Jorricks commented on pull request #16634: URL: https://github.com/apache/airflow/pull/16634#issuecomment-905710216 CI pipeline still not running..

[GitHub] [airflow] cablespaghetti commented on a change in pull request #17211: Chart: Use stable API versions where available

cablespaghetti commented on a change in pull request #17211:

URL: https://github.com/apache/airflow/pull/17211#discussion_r695925270

##

File path: chart/values.yaml

##

@@ -120,24 +129,24 @@ ingress:

annotations: {}

# The path for the flower Ingress

-path: ""

+path: "/"

Review comment:

Both path and pathType are mandatory in the stable API. I don't think setting it by default is likely to break many people's config, but thought I'd point it out.
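As a rough illustration of the point about `path` and `pathType`, the sketch below builds one path entry in the shape used by the `networking.k8s.io/v1` Ingress API. It is not taken from the chart templates: the helper name `ingress_path_entry`, the service name `airflow-flower`, and port 5555 are assumptions for the example.

```python
# Path types accepted by the networking.k8s.io/v1 Ingress API.
VALID_PATH_TYPES = {"Exact", "Prefix", "ImplementationSpecific"}


def ingress_path_entry(
    service_name: str,
    port: int,
    path: str = "/",
    path_type: str = "ImplementationSpecific",
) -> dict:
    """Build a single v1-style Ingress path entry (hypothetical helper)."""
    if path_type not in VALID_PATH_TYPES:
        raise ValueError(f"unsupported pathType: {path_type!r}")
    if not path.startswith("/"):
        raise ValueError("path must start with '/'")
    return {
        "path": path,
        "pathType": path_type,
        "backend": {"service": {"name": service_name, "port": {"number": port}}},
    }


# Service name and port are illustrative only.
print(ingress_path_entry("airflow-flower", 5555))
```

Defaulting `path` to `"/"` here mirrors the change in the diff above, where an empty string was replaced by `"/"`.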


[GitHub] [airflow] cablespaghetti commented on pull request #17211: Chart: Use stable API versions where available

cablespaghetti commented on pull request #17211: URL: https://github.com/apache/airflow/pull/17211#issuecomment-905692061 Ok hopefully I've done enough now. Also upgraded the default it tests against to 1.22. Rerunning everything locally to check this hasn't broken anything at the moment.

[GitHub] [airflow] cablespaghetti edited a comment on pull request #17211: Chart: Use stable API versions where available

cablespaghetti edited a comment on pull request #17211: URL: https://github.com/apache/airflow/pull/17211#issuecomment-904661269 Really sorry this has taken so long, I have been super busy both at work and outside. I will put in some time to try and get this done tomorrow.

[GitHub] [airflow] potiuk edited a comment on pull request #15177: Import connections from a file

potiuk edited a comment on pull request #15177: URL: https://github.com/apache/airflow/pull/15177#issuecomment-905665622 If you really cared to ask this kind of question @MM-Lehmann, then I believe it is because you apparently wanted to hear the answer rather than show your superiority over those who volunteer their time so that you can get great FREE software. So here it is. My one answer is - because people are humans and when they try to improve something, they sometimes make mistakes (as opposed to people who do not do much, so they do not make mistakes either). Or maybe another answer - because you weren't around with a helpful comment like that when it was discussed @MM-Lehmann. I am sure if you were around such mistakes would not have happened. Please next time be sure to follow up on the changed PRs and catch such omissions early. Everyone can comment on Airflow PRs and suggest changes. Please do so next time before merging! You are most welcome.

[GitHub] [airflow] potiuk commented on pull request #15177: Import connections from a file

potiuk commented on pull request #15177: URL: https://github.com/apache/airflow/pull/15177#issuecomment-905665622 If you really cared to ask this kind of question @MM-Lehmann, then I believe it is because you apparently wanted to hear the answer rather than show your superiority over those who volunteer their time so that you can get great FREE software. So here it is. My one answer is - because people are humans and when they try to improve something, they sometimes make mistakes (as opposed to people who do not do much, so they do not make mistakes either). Or maybe another answer - because you weren't around with a helpful comment like that when it was discussed @MM-Lehmann. I am sure if you were around such mistakes would not have happened. Please next time be sure to follow up on the changed PRs and catch such omissions early. Everyone can comment on Airflow PRs and suggest changes. Please do so next time before merging! You are most welcome.

[GitHub] [airflow] mik-laj commented on a change in pull request #17741: Add Snowflake DQ Operators

mik-laj commented on a change in pull request #17741: URL: https://github.com/apache/airflow/pull/17741#discussion_r695897882 ## File path: airflow/providers/snowflake/operators/snowflake.py ## @@ -125,3 +126,284 @@ def execute(self, context: Any) -> None: if self.do_xcom_push: return execution_info + + +class _SnowflakeDbHookMixin: +def get_db_hook(self) -> SnowflakeHook: +""" +Create and return SnowflakeHook. +:return: a SnowflakeHook instance. Review comment: ```suggestion :return: a SnowflakeHook instance. ``` This is needed to render the documentation properly.

[GitHub] [airflow] mik-laj commented on a change in pull request #17741: Add Snowflake DQ Operators

mik-laj commented on a change in pull request #17741: URL: https://github.com/apache/airflow/pull/17741#discussion_r695897882 ## File path: airflow/providers/snowflake/operators/snowflake.py ## @@ -125,3 +126,284 @@ def execute(self, context: Any) -> None: if self.do_xcom_push: return execution_info + + +class _SnowflakeDbHookMixin: +def get_db_hook(self) -> SnowflakeHook: +""" +Create and return SnowflakeHook. +:return: a SnowflakeHook instance. Review comment: ```suggestion :return: a SnowflakeHook instance. ```

[GitHub] [airflow] KarthikRajashekaran closed issue #17831: MSSQL: Adaptive Server connection failed

KarthikRajashekaran closed issue #17831: URL: https://github.com/apache/airflow/issues/17831

[GitHub] [airflow] jedcunningham commented on a change in pull request #17797: Fix broken MSSQL test

jedcunningham commented on a change in pull request #17797:

URL: https://github.com/apache/airflow/pull/17797#discussion_r695890136

##

File path: tests/task/task_runner/test_standard_task_runner.py

##

@@ -181,40 +179,40 @@ def test_on_kill(self):

except OSError:

pass

-dagbag = models.DagBag(

+dagbag = DagBag(

dag_folder=TEST_DAG_FOLDER,

include_examples=False,

)

dag = dagbag.dags.get('test_on_kill')

task = dag.get_task('task1')

-session = settings.Session()

+with create_session() as session:

+dag.clear()

+dag.create_dagrun(

+run_id="test",

+state=State.RUNNING,