[GitHub] ankit0811 commented on issue #6967: NoClassDefFoundError when using druid-hdfs-storage

ankit0811 commented on issue #6967: NoClassDefFoundError when using druid-hdfs-storage URL: https://github.com/apache/incubator-druid/issues/6967#issuecomment-459941408 The `hadoop-client` is unable to pull in the dependency `hadoop-common` in `extension-core/druid-hdfs-storage` cos of the scope `provided` for `hadoop-common` in the main and `hadoop-indexing` pom One possible solution is to provide the dependency `hadoop-common` with scope as `compile` inside `druid-hdfs-storage` pom Let me know what you think @jon-wei This is an automated message from the Apache Git Service. To respond to the message, please log on GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] glasser opened a new issue #6989: Native batch ingestion didn't replace existing segment

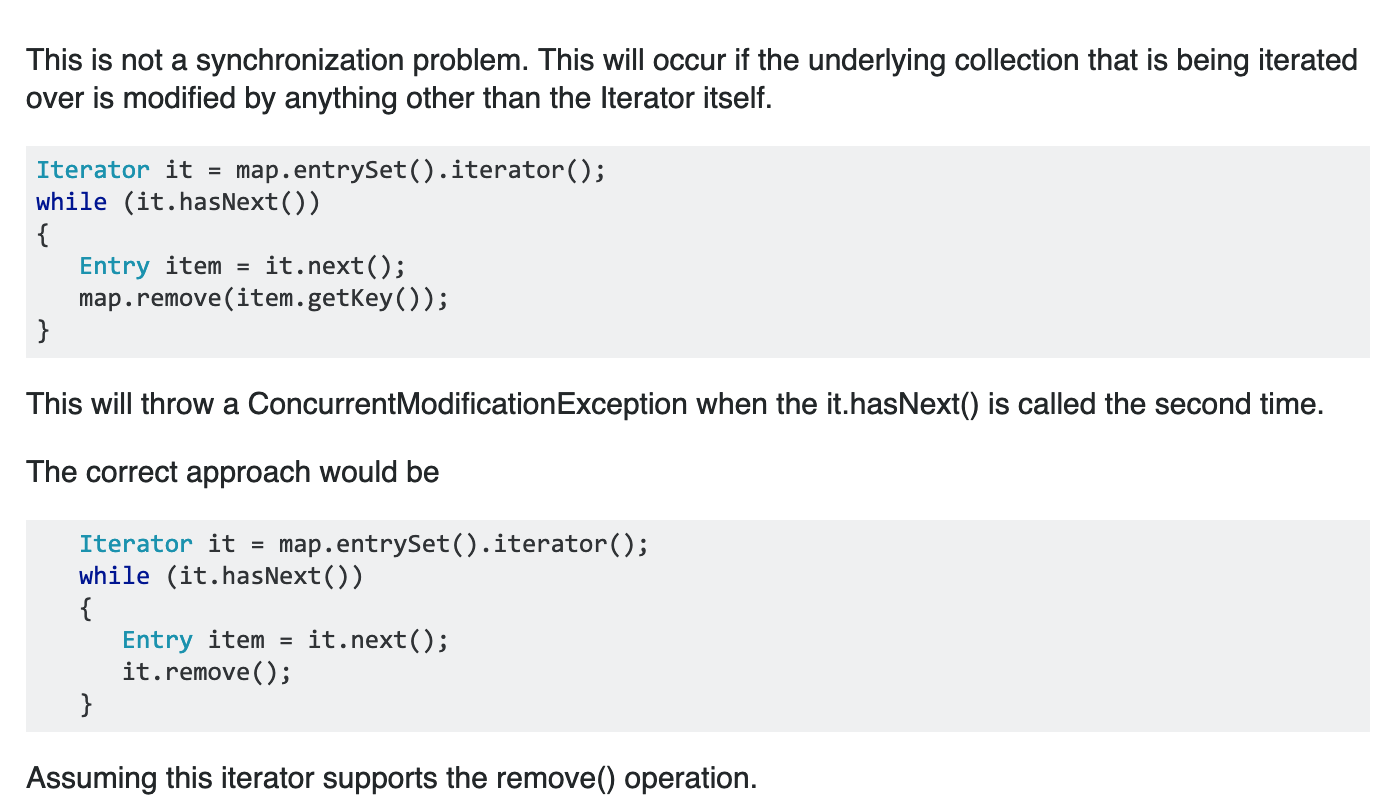

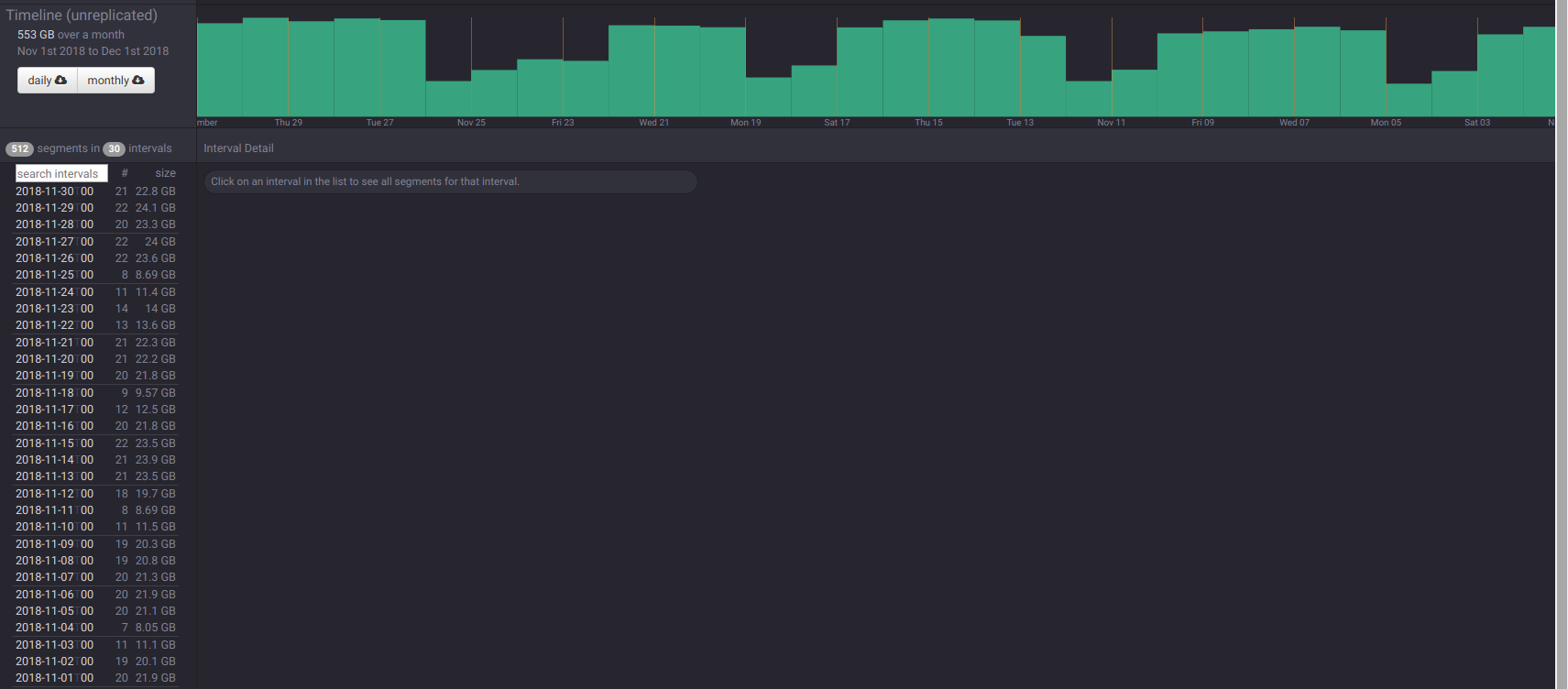

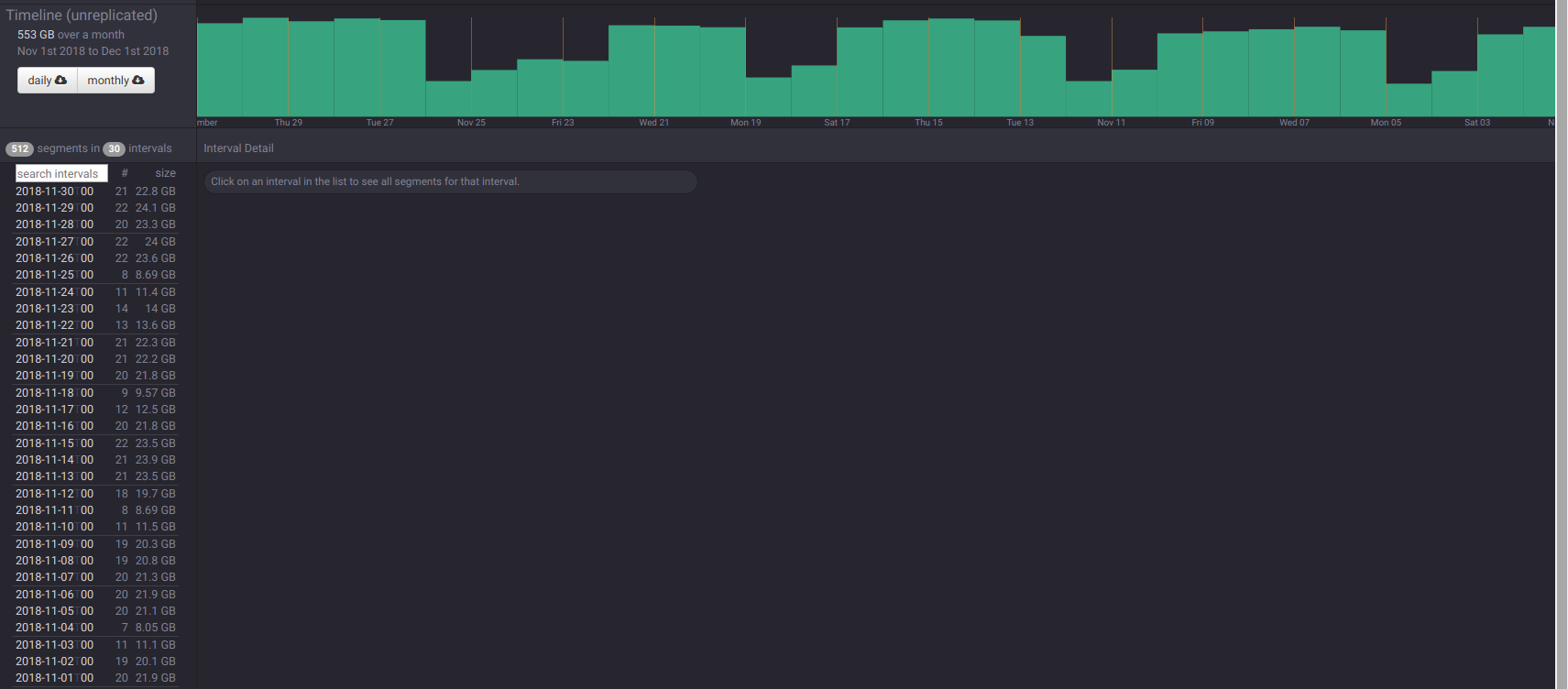

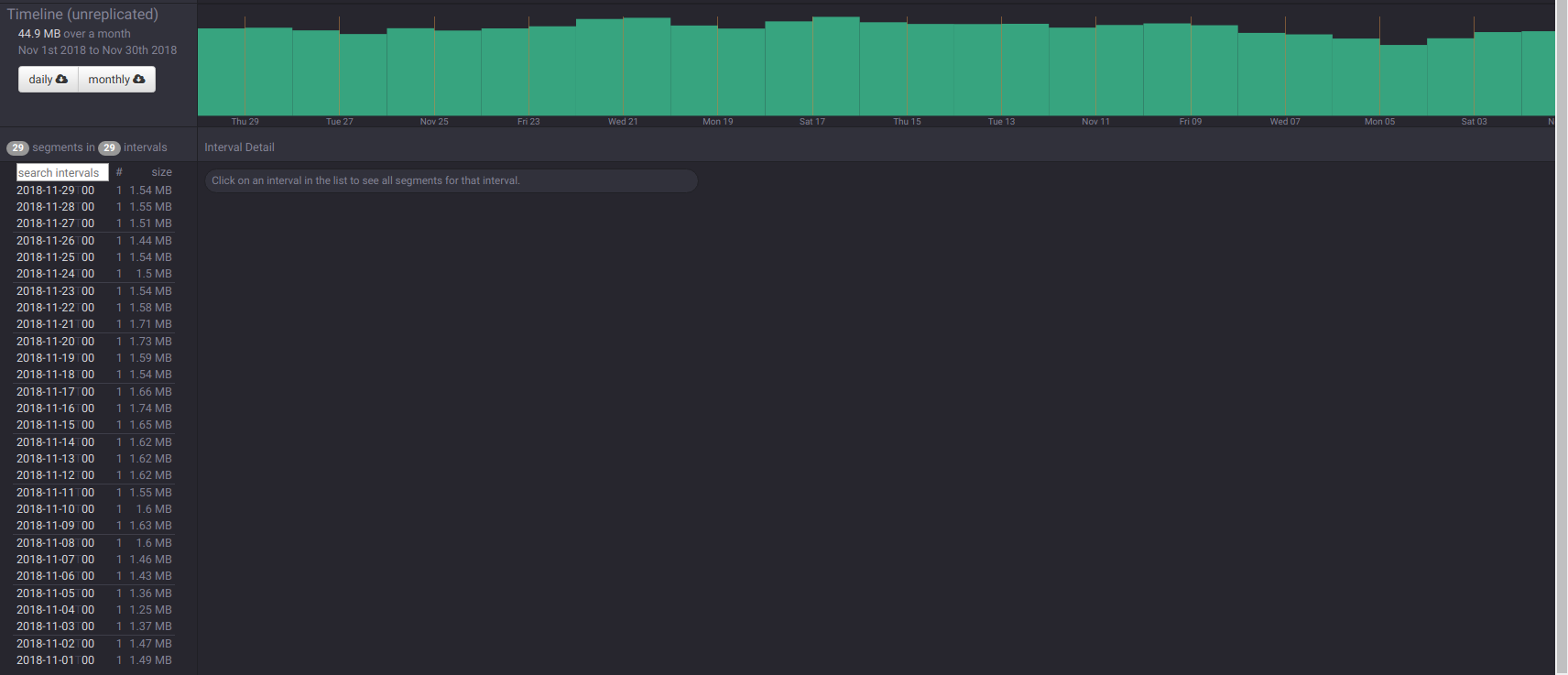

glasser opened a new issue #6989: Native batch ingestion didn't replace existing segment URL: https://github.com/apache/incubator-druid/issues/6989 We're experimenting with native batch ingestion on our 0.13-incubating cluster for the first time (with a custom firehose reading from files saved to GCS by Secor, with a custom InputRowParser). There was a period of a week where the data source had no data. We ran batch ingestion (index_parallel) over one particular hour (5am-6am on December 16th) and it successfully ingested that hour — a segment showed up in the coordinator, it could be queried, etc. (Our segment granularity is HOUR.) Then we ran it again on the entire 24 hours of December 16th. It ran 24 subtasks (our firehose divides up by hour) and ingested the full day, yay! Except when we look in the coordinator, it now lists 2 segments with identical sizes for the 5am hour that we first tested with. Also both of them are listed with the same version which was from the first batch ingestion, not the version that the other 23 segments have from the second batch ingestion. We did not explicitly specify appendToExisting in our ioConfig but I believe the default is false and looking at the task payload it is expanded to false. Are we doing something wrong if our goal is to replace existing segments? Isn't that what `appendToExisting: false` should do? The bad hour in the coordinator:  The good hour:  This is an automated message from the Apache Git Service. To respond to the message, please log on GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] pzhdfy edited a comment on issue #6690: bugfix: when building materialized-view, if taskCount>1, may cause concurrentModificationException

pzhdfy edited a comment on issue #6690: bugfix: when building

materialized-view, if taskCount>1, may cause concurrentModificationException

URL: https://github.com/apache/incubator-druid/pull/6690#issuecomment-459931967

@jihoonson

Yes, this is guarded by taskLock .

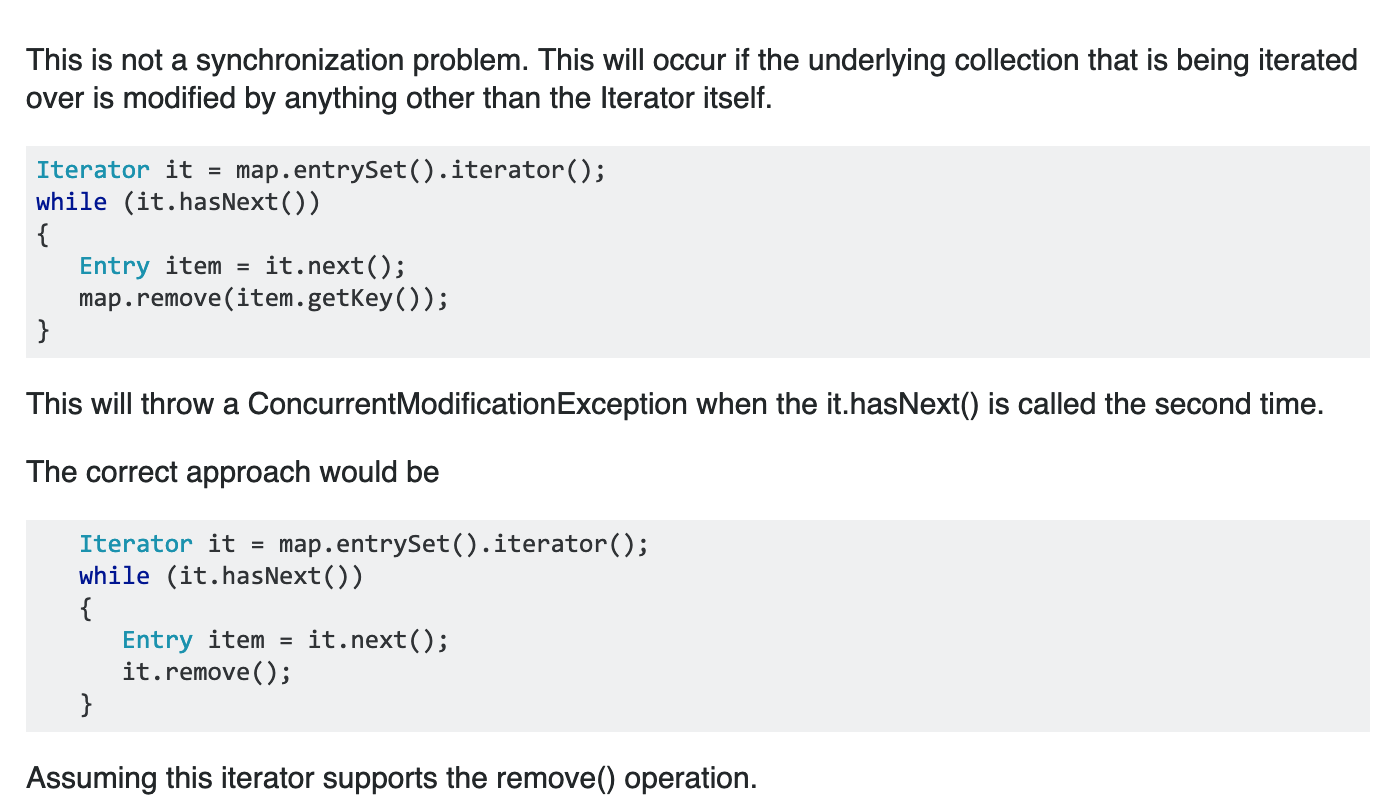

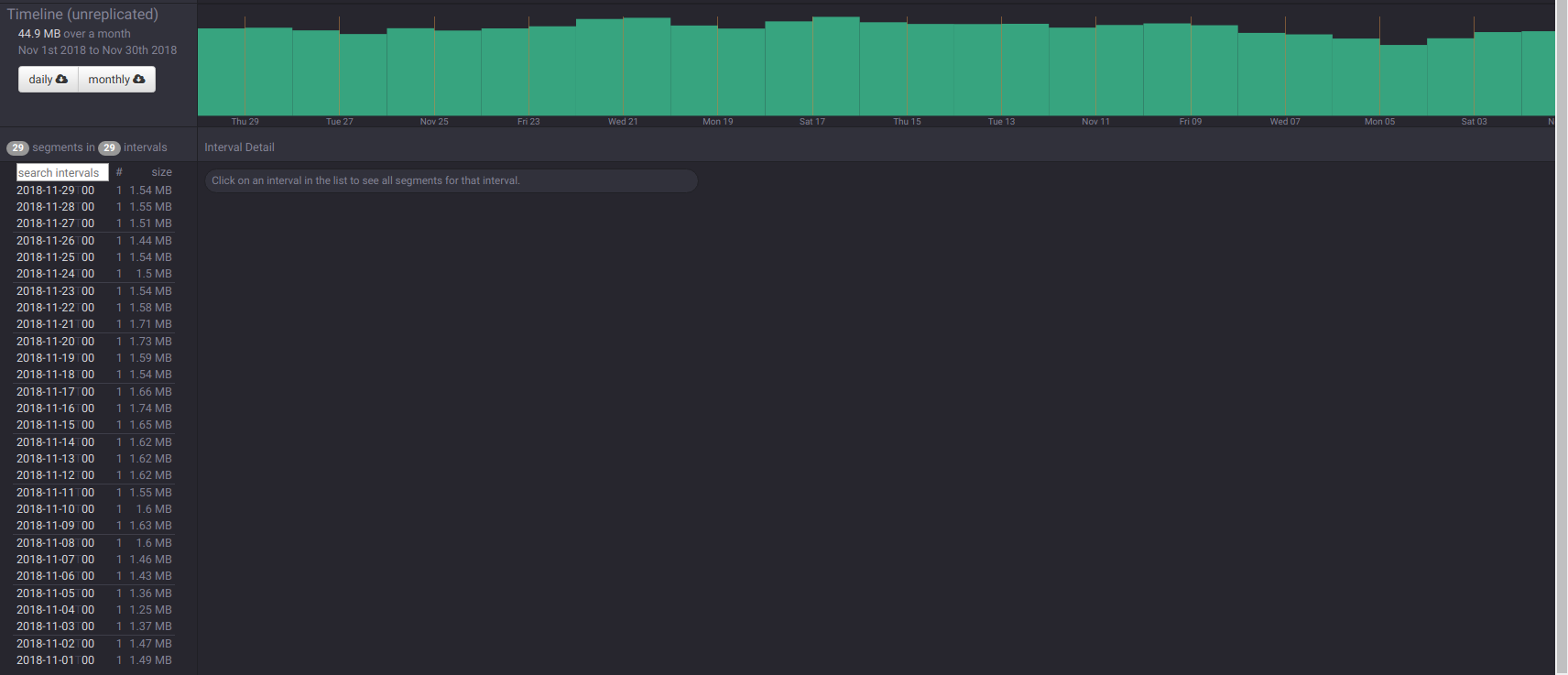

This is just the WRONG USE of hashmap.

if we remove an entry from a hashmap by remove(key), while iterating it,

this will throw a concurrentModificationException, even in a single thread.

ref:https://stackoverflow.com/questions/602636/concurrentmodificationexception-and-a-hashmap

```java

for (Map.Entry entry : runningTasks.entrySet()) {

Optional taskStatus =

taskStorage.getStatus(entry.getValue().getId());

if (!taskStatus.isPresent() || !taskStatus.get().isRunnable()) {

runningTasks.remove(entry.getKey());

runningVersion.remove(entry.getKey());

}

}

```

This is an automated message from the Apache Git Service.

To respond to the message, please log on GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services

-

To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org

For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] pzhdfy commented on issue #6690: bugfix: when building materialized-view, if taskCount>1, may cause concurrentModificationException

pzhdfy commented on issue #6690: bugfix: when building materialized-view, if

taskCount>1, may cause concurrentModificationException

URL: https://github.com/apache/incubator-druid/pull/6690#issuecomment-459931967

Yes, this is guarded by taskLock .

This is just the WRONG USE of hashmap.

if we remove an entry from a hashmap by remove(key), while iterating it,

this will throw a concurrentModificationException, even in a single thread.

ref:https://stackoverflow.com/questions/602636/concurrentmodificationexception-and-a-hashmap

```java

for (Map.Entry entry : runningTasks.entrySet()) {

Optional taskStatus =

taskStorage.getStatus(entry.getValue().getId());

if (!taskStatus.isPresent() || !taskStatus.get().isRunnable()) {

runningTasks.remove(entry.getKey());

runningVersion.remove(entry.getKey());

}

}

```

This is an automated message from the Apache Git Service.

To respond to the message, please log on GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services

-

To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org

For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] pzhdfy opened a new pull request #6988: [Improvement] historical fast restart by lazy load columns metadata

pzhdfy opened a new pull request #6988: [Improvement] historical fast restart by lazy load columns metadata URL: https://github.com/apache/incubator-druid/pull/6988 We have large data in druid, historical (12 * 2T SATA HDD) will have over 10k segments and 10TB size. When we want to restart a historical to change configuration or after OOM, it will take 40 minutes , that is too slow. We profile the restart progress, and make a flame graph.  We can see io.druid.segment.IndexIO$V9IndexLoader.deserializeColumn cost most time. So we can make columns metadata lazy load , until it gets first used. After optimize, historical restart will only spend 2 minutes( 20X faster). And the flame graph after optimize is below, we can see load metadata spend little time.  we add a new config druid.segmentCache.lazyLoadOnStart (default is false), whether to do this optimize. This is an automated message from the Apache Git Service. To respond to the message, please log on GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] jihoonson commented on issue #6987: ParallelIndexSupervisorTask: don't warn about a default value

jihoonson commented on issue #6987: ParallelIndexSupervisorTask: don't warn about a default value URL: https://github.com/apache/incubator-druid/pull/6987#issuecomment-459931226 Thanks. LGTM after CI. This is an automated message from the Apache Git Service. To respond to the message, please log on GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] leerho commented on issue #6869: [Proposal] Deprecating "approximate histogram" in favor of new sketches

leerho commented on issue #6869: [Proposal] Deprecating "approximate histogram" in favor of new sketches URL: https://github.com/apache/incubator-druid/issues/6869#issuecomment-459925200 Next up is the Moment Sketch ... :) This is an automated message from the Apache Git Service. To respond to the message, please log on GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] leerho commented on issue #6869: [Proposal] Deprecating "approximate histogram" in favor of new sketches

leerho commented on issue #6869: [Proposal] Deprecating "approximate histogram" in favor of new sketches URL: https://github.com/apache/incubator-druid/issues/6869#issuecomment-459925039 FYI I just finished an accuracy study of the Approximate Histogram. It is really bad! You can find it [here](https://datasketches.github.io/docs/Quantiles/DruidApproxHistogramStudy.html). This is an automated message from the Apache Git Service. To respond to the message, please log on GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] surekhasaharan commented on a change in pull request #6901: Introduce published segment cache in broker

surekhasaharan commented on a change in pull request #6901: Introduce published

segment cache in broker

URL: https://github.com/apache/incubator-druid/pull/6901#discussion_r253248599

##

File path:

sql/src/main/java/org/apache/druid/sql/calcite/schema/MetadataSegmentView.java

##

@@ -0,0 +1,258 @@

+/*

+ * Licensed to the Apache Software Foundation (ASF) under one

+ * or more contributor license agreements. See the NOTICE file

+ * distributed with this work for additional information

+ * regarding copyright ownership. The ASF licenses this file

+ * to you under the Apache License, Version 2.0 (the

+ * "License"); you may not use this file except in compliance

+ * with the License. You may obtain a copy of the License at

+ *

+ * http://www.apache.org/licenses/LICENSE-2.0

+ *

+ * Unless required by applicable law or agreed to in writing,

+ * software distributed under the License is distributed on an

+ * "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY

+ * KIND, either express or implied. See the License for the

+ * specific language governing permissions and limitations

+ * under the License.

+ */

+

+package org.apache.druid.sql.calcite.schema;

+

+import com.fasterxml.jackson.core.type.TypeReference;

+import com.fasterxml.jackson.databind.JavaType;

+import com.fasterxml.jackson.databind.ObjectMapper;

+import com.google.common.base.Preconditions;

+import com.google.common.util.concurrent.ListenableFuture;

+import com.google.inject.Inject;

+import org.apache.druid.client.BrokerSegmentWatcherConfig;

+import org.apache.druid.client.DataSegmentInterner;

+import org.apache.druid.client.JsonParserIterator;

+import org.apache.druid.client.coordinator.Coordinator;

+import org.apache.druid.concurrent.LifecycleLock;

+import org.apache.druid.discovery.DruidLeaderClient;

+import org.apache.druid.guice.ManageLifecycle;

+import org.apache.druid.java.util.common.DateTimes;

+import org.apache.druid.java.util.common.ISE;

+import org.apache.druid.java.util.common.StringUtils;

+import org.apache.druid.java.util.common.concurrent.Execs;

+import org.apache.druid.java.util.common.lifecycle.LifecycleStart;

+import org.apache.druid.java.util.common.lifecycle.LifecycleStop;

+import org.apache.druid.java.util.emitter.EmittingLogger;

+import org.apache.druid.java.util.http.client.Request;

+import org.apache.druid.server.coordinator.BytesAccumulatingResponseHandler;

+import org.apache.druid.sql.calcite.planner.PlannerConfig;

+import org.apache.druid.timeline.DataSegment;

+import org.jboss.netty.handler.codec.http.HttpMethod;

+import org.joda.time.DateTime;

+

+import javax.annotation.Nullable;

+import java.io.IOException;

+import java.io.InputStream;

+import java.util.Iterator;

+import java.util.Set;

+import java.util.concurrent.ConcurrentHashMap;

+import java.util.concurrent.ConcurrentMap;

+import java.util.concurrent.ScheduledExecutorService;

+import java.util.concurrent.TimeUnit;

+

+/**

+ * This class polls the coordinator in background to keep the latest published

segments.

+ * Provides {@link #getPublishedSegments()} for others to get segments in

metadata store.

+ */

+@ManageLifecycle

+public class MetadataSegmentView

+{

+

+ private static final EmittingLogger log = new

EmittingLogger(MetadataSegmentView.class);

+

+ private final DruidLeaderClient coordinatorDruidLeaderClient;

+ private final ObjectMapper jsonMapper;

+ private final BytesAccumulatingResponseHandler responseHandler;

+ private final BrokerSegmentWatcherConfig segmentWatcherConfig;

+

+ private final boolean isCacheEnabled;

+ @Nullable

+ private final ConcurrentMap publishedSegments;

+ private final ScheduledExecutorService scheduledExec;

+ private final long pollPeriodinMS;

+ private final LifecycleLock lifecycleLock = new LifecycleLock();

+

+ @Inject

+ public MetadataSegmentView(

+ final @Coordinator DruidLeaderClient druidLeaderClient,

+ final ObjectMapper jsonMapper,

+ final BytesAccumulatingResponseHandler responseHandler,

+ final BrokerSegmentWatcherConfig segmentWatcherConfig,

+ final PlannerConfig plannerConfig

+ )

+ {

+Preconditions.checkNotNull(plannerConfig, "plannerConfig");

+this.coordinatorDruidLeaderClient = druidLeaderClient;

+this.jsonMapper = jsonMapper;

+this.responseHandler = responseHandler;

+this.segmentWatcherConfig = segmentWatcherConfig;

+this.isCacheEnabled = plannerConfig.isMetadataSegmentCacheEnable();

+this.pollPeriodinMS = plannerConfig.getMetadataSegmentPollPeriod();

+this.publishedSegments = isCacheEnabled ? new ConcurrentHashMap<>(1000) :

null;

+this.scheduledExec =

Execs.scheduledSingleThreaded("MetadataSegmentView-Cache--%d");

+ }

+

+ @LifecycleStart

+ public void start()

+ {

+if (!lifecycleLock.canStart()) {

+ throw new ISE("can't start.");

+}

+try {

+ if (isCacheEnabled) {

+try {

+ poll();

+}

+finally {

+ scheduledExec.schedule(new PollTask(), 0, Time

[GitHub] glasser opened a new pull request #6987: ParallelIndexSupervisorTask: don't warn about a default value

glasser opened a new pull request #6987: ParallelIndexSupervisorTask: don't warn about a default value URL: https://github.com/apache/incubator-druid/pull/6987 Native batch indexing doesn't yet support the maxParseExceptions, maxSavedParseExceptions, and logParseExceptions tuning config options, so ParallelIndexSupervisorTask logs if these are set. But the default value for maxParseExceptions is Integer.MAX_VALUE, which means that you'll get the maxParseExceptions flavor of this warning even if you don't configure maxParseExceptions. This PR changes all three warnings to occur if you change the settings from the default; this mostly affects the maxParseExceptions warning. This is an automated message from the Apache Git Service. To respond to the message, please log on GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] surekhasaharan commented on a change in pull request #6901: Introduce published segment cache in broker

surekhasaharan commented on a change in pull request #6901: Introduce published

segment cache in broker

URL: https://github.com/apache/incubator-druid/pull/6901#discussion_r253245250

##

File path:

sql/src/main/java/org/apache/druid/sql/calcite/schema/MetadataSegmentView.java

##

@@ -106,10 +106,15 @@ public void start()

}

try {

if (isCacheEnabled) {

-scheduledExec.schedule(new PollTask(), 0, TimeUnit.MILLISECONDS);

-lifecycleLock.started();

-log.info("MetadataSegmentView Started.");

+try {

+ poll();

+}

+finally {

+ scheduledExec.schedule(new PollTask(), 0, TimeUnit.MILLISECONDS);

Review comment:

yeah, changed to use `pollPeriodinMS`

This is an automated message from the Apache Git Service.

To respond to the message, please log on GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services

-

To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org

For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] surekhasaharan commented on a change in pull request #6901: Introduce published segment cache in broker

surekhasaharan commented on a change in pull request #6901: Introduce published

segment cache in broker

URL: https://github.com/apache/incubator-druid/pull/6901#discussion_r253245195

##

File path:

sql/src/main/java/org/apache/druid/sql/calcite/schema/MetadataSegmentView.java

##

@@ -106,10 +106,15 @@ public void start()

}

try {

if (isCacheEnabled) {

-scheduledExec.schedule(new PollTask(), 0, TimeUnit.MILLISECONDS);

-lifecycleLock.started();

-log.info("MetadataSegmentView Started.");

+try {

+ poll();

+}

+finally {

Review comment:

added catch block

This is an automated message from the Apache Git Service.

To respond to the message, please log on GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services

-

To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org

For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] jon-wei commented on a change in pull request #6901: Introduce published segment cache in broker

jon-wei commented on a change in pull request #6901: Introduce published

segment cache in broker

URL: https://github.com/apache/incubator-druid/pull/6901#discussion_r253244563

##

File path:

sql/src/main/java/org/apache/druid/sql/calcite/schema/MetadataSegmentView.java

##

@@ -0,0 +1,258 @@

+/*

+ * Licensed to the Apache Software Foundation (ASF) under one

+ * or more contributor license agreements. See the NOTICE file

+ * distributed with this work for additional information

+ * regarding copyright ownership. The ASF licenses this file

+ * to you under the Apache License, Version 2.0 (the

+ * "License"); you may not use this file except in compliance

+ * with the License. You may obtain a copy of the License at

+ *

+ * http://www.apache.org/licenses/LICENSE-2.0

+ *

+ * Unless required by applicable law or agreed to in writing,

+ * software distributed under the License is distributed on an

+ * "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY

+ * KIND, either express or implied. See the License for the

+ * specific language governing permissions and limitations

+ * under the License.

+ */

+

+package org.apache.druid.sql.calcite.schema;

+

+import com.fasterxml.jackson.core.type.TypeReference;

+import com.fasterxml.jackson.databind.JavaType;

+import com.fasterxml.jackson.databind.ObjectMapper;

+import com.google.common.base.Preconditions;

+import com.google.common.util.concurrent.ListenableFuture;

+import com.google.inject.Inject;

+import org.apache.druid.client.BrokerSegmentWatcherConfig;

+import org.apache.druid.client.DataSegmentInterner;

+import org.apache.druid.client.JsonParserIterator;

+import org.apache.druid.client.coordinator.Coordinator;

+import org.apache.druid.concurrent.LifecycleLock;

+import org.apache.druid.discovery.DruidLeaderClient;

+import org.apache.druid.guice.ManageLifecycle;

+import org.apache.druid.java.util.common.DateTimes;

+import org.apache.druid.java.util.common.ISE;

+import org.apache.druid.java.util.common.StringUtils;

+import org.apache.druid.java.util.common.concurrent.Execs;

+import org.apache.druid.java.util.common.lifecycle.LifecycleStart;

+import org.apache.druid.java.util.common.lifecycle.LifecycleStop;

+import org.apache.druid.java.util.emitter.EmittingLogger;

+import org.apache.druid.java.util.http.client.Request;

+import org.apache.druid.server.coordinator.BytesAccumulatingResponseHandler;

+import org.apache.druid.sql.calcite.planner.PlannerConfig;

+import org.apache.druid.timeline.DataSegment;

+import org.jboss.netty.handler.codec.http.HttpMethod;

+import org.joda.time.DateTime;

+

+import javax.annotation.Nullable;

+import java.io.IOException;

+import java.io.InputStream;

+import java.util.Iterator;

+import java.util.Set;

+import java.util.concurrent.ConcurrentHashMap;

+import java.util.concurrent.ConcurrentMap;

+import java.util.concurrent.ScheduledExecutorService;

+import java.util.concurrent.TimeUnit;

+

+/**

+ * This class polls the coordinator in background to keep the latest published

segments.

+ * Provides {@link #getPublishedSegments()} for others to get segments in

metadata store.

+ */

+@ManageLifecycle

+public class MetadataSegmentView

+{

+

+ private static final EmittingLogger log = new

EmittingLogger(MetadataSegmentView.class);

+

+ private final DruidLeaderClient coordinatorDruidLeaderClient;

+ private final ObjectMapper jsonMapper;

+ private final BytesAccumulatingResponseHandler responseHandler;

+ private final BrokerSegmentWatcherConfig segmentWatcherConfig;

+

+ private final boolean isCacheEnabled;

+ @Nullable

+ private final ConcurrentMap publishedSegments;

+ private final ScheduledExecutorService scheduledExec;

+ private final long pollPeriodinMS;

+ private final LifecycleLock lifecycleLock = new LifecycleLock();

+

+ @Inject

+ public MetadataSegmentView(

+ final @Coordinator DruidLeaderClient druidLeaderClient,

+ final ObjectMapper jsonMapper,

+ final BytesAccumulatingResponseHandler responseHandler,

+ final BrokerSegmentWatcherConfig segmentWatcherConfig,

+ final PlannerConfig plannerConfig

+ )

+ {

+Preconditions.checkNotNull(plannerConfig, "plannerConfig");

+this.coordinatorDruidLeaderClient = druidLeaderClient;

+this.jsonMapper = jsonMapper;

+this.responseHandler = responseHandler;

+this.segmentWatcherConfig = segmentWatcherConfig;

+this.isCacheEnabled = plannerConfig.isMetadataSegmentCacheEnable();

+this.pollPeriodinMS = plannerConfig.getMetadataSegmentPollPeriod();

+this.publishedSegments = isCacheEnabled ? new ConcurrentHashMap<>(1000) :

null;

+this.scheduledExec =

Execs.scheduledSingleThreaded("MetadataSegmentView-Cache--%d");

+ }

+

+ @LifecycleStart

+ public void start()

+ {

+if (!lifecycleLock.canStart()) {

+ throw new ISE("can't start.");

+}

+try {

+ if (isCacheEnabled) {

+try {

+ poll();

+}

+finally {

+ scheduledExec.schedule(new PollTask(), 0, TimeUnit.MI

[GitHub] jon-wei commented on a change in pull request #6901: Introduce published segment cache in broker

jon-wei commented on a change in pull request #6901: Introduce published

segment cache in broker

URL: https://github.com/apache/incubator-druid/pull/6901#discussion_r253244563

##

File path:

sql/src/main/java/org/apache/druid/sql/calcite/schema/MetadataSegmentView.java

##

@@ -0,0 +1,258 @@

+/*

+ * Licensed to the Apache Software Foundation (ASF) under one

+ * or more contributor license agreements. See the NOTICE file

+ * distributed with this work for additional information

+ * regarding copyright ownership. The ASF licenses this file

+ * to you under the Apache License, Version 2.0 (the

+ * "License"); you may not use this file except in compliance

+ * with the License. You may obtain a copy of the License at

+ *

+ * http://www.apache.org/licenses/LICENSE-2.0

+ *

+ * Unless required by applicable law or agreed to in writing,

+ * software distributed under the License is distributed on an

+ * "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY

+ * KIND, either express or implied. See the License for the

+ * specific language governing permissions and limitations

+ * under the License.

+ */

+

+package org.apache.druid.sql.calcite.schema;

+

+import com.fasterxml.jackson.core.type.TypeReference;

+import com.fasterxml.jackson.databind.JavaType;

+import com.fasterxml.jackson.databind.ObjectMapper;

+import com.google.common.base.Preconditions;

+import com.google.common.util.concurrent.ListenableFuture;

+import com.google.inject.Inject;

+import org.apache.druid.client.BrokerSegmentWatcherConfig;

+import org.apache.druid.client.DataSegmentInterner;

+import org.apache.druid.client.JsonParserIterator;

+import org.apache.druid.client.coordinator.Coordinator;

+import org.apache.druid.concurrent.LifecycleLock;

+import org.apache.druid.discovery.DruidLeaderClient;

+import org.apache.druid.guice.ManageLifecycle;

+import org.apache.druid.java.util.common.DateTimes;

+import org.apache.druid.java.util.common.ISE;

+import org.apache.druid.java.util.common.StringUtils;

+import org.apache.druid.java.util.common.concurrent.Execs;

+import org.apache.druid.java.util.common.lifecycle.LifecycleStart;

+import org.apache.druid.java.util.common.lifecycle.LifecycleStop;

+import org.apache.druid.java.util.emitter.EmittingLogger;

+import org.apache.druid.java.util.http.client.Request;

+import org.apache.druid.server.coordinator.BytesAccumulatingResponseHandler;

+import org.apache.druid.sql.calcite.planner.PlannerConfig;

+import org.apache.druid.timeline.DataSegment;

+import org.jboss.netty.handler.codec.http.HttpMethod;

+import org.joda.time.DateTime;

+

+import javax.annotation.Nullable;

+import java.io.IOException;

+import java.io.InputStream;

+import java.util.Iterator;

+import java.util.Set;

+import java.util.concurrent.ConcurrentHashMap;

+import java.util.concurrent.ConcurrentMap;

+import java.util.concurrent.ScheduledExecutorService;

+import java.util.concurrent.TimeUnit;

+

+/**

+ * This class polls the coordinator in background to keep the latest published

segments.

+ * Provides {@link #getPublishedSegments()} for others to get segments in

metadata store.

+ */

+@ManageLifecycle

+public class MetadataSegmentView

+{

+

+ private static final EmittingLogger log = new

EmittingLogger(MetadataSegmentView.class);

+

+ private final DruidLeaderClient coordinatorDruidLeaderClient;

+ private final ObjectMapper jsonMapper;

+ private final BytesAccumulatingResponseHandler responseHandler;

+ private final BrokerSegmentWatcherConfig segmentWatcherConfig;

+

+ private final boolean isCacheEnabled;

+ @Nullable

+ private final ConcurrentMap publishedSegments;

+ private final ScheduledExecutorService scheduledExec;

+ private final long pollPeriodinMS;

+ private final LifecycleLock lifecycleLock = new LifecycleLock();

+

+ @Inject

+ public MetadataSegmentView(

+ final @Coordinator DruidLeaderClient druidLeaderClient,

+ final ObjectMapper jsonMapper,

+ final BytesAccumulatingResponseHandler responseHandler,

+ final BrokerSegmentWatcherConfig segmentWatcherConfig,

+ final PlannerConfig plannerConfig

+ )

+ {

+Preconditions.checkNotNull(plannerConfig, "plannerConfig");

+this.coordinatorDruidLeaderClient = druidLeaderClient;

+this.jsonMapper = jsonMapper;

+this.responseHandler = responseHandler;

+this.segmentWatcherConfig = segmentWatcherConfig;

+this.isCacheEnabled = plannerConfig.isMetadataSegmentCacheEnable();

+this.pollPeriodinMS = plannerConfig.getMetadataSegmentPollPeriod();

+this.publishedSegments = isCacheEnabled ? new ConcurrentHashMap<>(1000) :

null;

+this.scheduledExec =

Execs.scheduledSingleThreaded("MetadataSegmentView-Cache--%d");

+ }

+

+ @LifecycleStart

+ public void start()

+ {

+if (!lifecycleLock.canStart()) {

+ throw new ISE("can't start.");

+}

+try {

+ if (isCacheEnabled) {

+try {

+ poll();

+}

+finally {

+ scheduledExec.schedule(new PollTask(), 0, TimeUnit.MI

[GitHub] jihoonson commented on a change in pull request #6901: Introduce published segment cache in broker

jihoonson commented on a change in pull request #6901: Introduce published

segment cache in broker

URL: https://github.com/apache/incubator-druid/pull/6901#discussion_r253242662

##

File path:

sql/src/main/java/org/apache/druid/sql/calcite/schema/MetadataSegmentView.java

##

@@ -106,10 +106,15 @@ public void start()

}

try {

if (isCacheEnabled) {

-scheduledExec.schedule(new PollTask(), 0, TimeUnit.MILLISECONDS);

-lifecycleLock.started();

-log.info("MetadataSegmentView Started.");

+try {

+ poll();

+}

+finally {

+ scheduledExec.schedule(new PollTask(), 0, TimeUnit.MILLISECONDS);

Review comment:

Please set the proper wait time instead of starting poll() immediately.

This is an automated message from the Apache Git Service.

To respond to the message, please log on GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services

-

To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org

For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] justinborromeo opened a new pull request #6986: Create Scan Benchmark

justinborromeo opened a new pull request #6986: Create Scan Benchmark URL: https://github.com/apache/incubator-druid/pull/6986 This PR creates a Scan query benchmark to act as a baseline for future changes to the Scan query (specifically #6088 ). The queries are fairly simple and if anyone has any suggestions for better queries to do, lmk. The ScanQuery builder was also moved from the ScanQuery class to the Druids class to match the pattern of all the other query builders. Not sure if this matters since this PR doesn't involve any changes to the query code but here's the results of the benchmark:  This is an automated message from the Apache Git Service. To respond to the message, please log on GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] jihoonson commented on a change in pull request #6901: Introduce published segment cache in broker

jihoonson commented on a change in pull request #6901: Introduce published

segment cache in broker

URL: https://github.com/apache/incubator-druid/pull/6901#discussion_r253242562

##

File path:

sql/src/main/java/org/apache/druid/sql/calcite/schema/MetadataSegmentView.java

##

@@ -106,10 +106,15 @@ public void start()

}

try {

if (isCacheEnabled) {

-scheduledExec.schedule(new PollTask(), 0, TimeUnit.MILLISECONDS);

-lifecycleLock.started();

-log.info("MetadataSegmentView Started.");

+try {

+ poll();

+}

+finally {

Review comment:

Please add a catch block to emit exceptions.

This is an automated message from the Apache Git Service.

To respond to the message, please log on GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services

-

To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org

For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] jon-wei commented on a change in pull request #6951: Add more sketch aggregator support in Druid SQL

jon-wei commented on a change in pull request #6951: Add more sketch aggregator

support in Druid SQL

URL: https://github.com/apache/incubator-druid/pull/6951#discussion_r253238633

##

File path:

extensions-core/datasketches/src/test/java/org/apache/druid/query/aggregation/datasketches/hll/sql/HllSketchSqlAggregatorTest.java

##

@@ -0,0 +1,402 @@

+/*

+ * Licensed to the Apache Software Foundation (ASF) under one

+ * or more contributor license agreements. See the NOTICE file

+ * distributed with this work for additional information

+ * regarding copyright ownership. The ASF licenses this file

+ * to you under the Apache License, Version 2.0 (the

+ * "License"); you may not use this file except in compliance

+ * with the License. You may obtain a copy of the License at

+ *

+ * http://www.apache.org/licenses/LICENSE-2.0

+ *

+ * Unless required by applicable law or agreed to in writing,

+ * software distributed under the License is distributed on an

+ * "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY

+ * KIND, either express or implied. See the License for the

+ * specific language governing permissions and limitations

+ * under the License.

+ */

+

+package org.apache.druid.query.aggregation.datasketches.hll.sql;

+

+import com.fasterxml.jackson.databind.Module;

+import com.google.common.collect.ImmutableList;

+import com.google.common.collect.ImmutableMap;

+import com.google.common.collect.ImmutableSet;

+import com.google.common.collect.Iterables;

+import org.apache.druid.common.config.NullHandling;

+import org.apache.druid.java.util.common.Pair;

+import org.apache.druid.java.util.common.granularity.Granularities;

+import org.apache.druid.java.util.common.io.Closer;

+import org.apache.druid.query.Druids;

+import org.apache.druid.query.Query;

+import org.apache.druid.query.QueryDataSource;

+import org.apache.druid.query.QueryRunnerFactoryConglomerate;

+import org.apache.druid.query.aggregation.CountAggregatorFactory;

+import org.apache.druid.query.aggregation.DoubleSumAggregatorFactory;

+import org.apache.druid.query.aggregation.FilteredAggregatorFactory;

+import org.apache.druid.query.aggregation.LongSumAggregatorFactory;

+import

org.apache.druid.query.aggregation.datasketches.hll.HllSketchBuildAggregatorFactory;

+import

org.apache.druid.query.aggregation.datasketches.hll.HllSketchMergeAggregatorFactory;

+import org.apache.druid.query.aggregation.datasketches.hll.HllSketchModule;

+import org.apache.druid.query.aggregation.post.ArithmeticPostAggregator;

+import org.apache.druid.query.aggregation.post.FieldAccessPostAggregator;

+import

org.apache.druid.query.aggregation.post.FinalizingFieldAccessPostAggregator;

+import org.apache.druid.query.dimension.DefaultDimensionSpec;

+import org.apache.druid.query.expression.TestExprMacroTable;

+import org.apache.druid.query.groupby.GroupByQuery;

+import org.apache.druid.query.spec.MultipleIntervalSegmentSpec;

+import org.apache.druid.segment.IndexBuilder;

+import org.apache.druid.segment.QueryableIndex;

+import org.apache.druid.segment.column.ValueType;

+import org.apache.druid.segment.incremental.IncrementalIndexSchema;

+import org.apache.druid.segment.virtual.ExpressionVirtualColumn;

+import

org.apache.druid.segment.writeout.OffHeapMemorySegmentWriteOutMediumFactory;

+import org.apache.druid.server.security.AuthTestUtils;

+import org.apache.druid.server.security.AuthenticationResult;

+import org.apache.druid.sql.SqlLifecycle;

+import org.apache.druid.sql.SqlLifecycleFactory;

+import org.apache.druid.sql.calcite.BaseCalciteQueryTest;

+import org.apache.druid.sql.calcite.filtration.Filtration;

+import org.apache.druid.sql.calcite.planner.DruidOperatorTable;

+import org.apache.druid.sql.calcite.planner.PlannerConfig;

+import org.apache.druid.sql.calcite.planner.PlannerContext;

+import org.apache.druid.sql.calcite.planner.PlannerFactory;

+import org.apache.druid.sql.calcite.schema.DruidSchema;

+import org.apache.druid.sql.calcite.schema.SystemSchema;

+import org.apache.druid.sql.calcite.util.CalciteTestBase;

+import org.apache.druid.sql.calcite.util.CalciteTests;

+import org.apache.druid.sql.calcite.util.QueryLogHook;

+import org.apache.druid.sql.calcite.util.SpecificSegmentsQuerySegmentWalker;

+import org.apache.druid.timeline.DataSegment;

+import org.apache.druid.timeline.partition.LinearShardSpec;

+import org.junit.After;

+import org.junit.AfterClass;

+import org.junit.Assert;

+import org.junit.Before;

+import org.junit.BeforeClass;

+import org.junit.Rule;

+import org.junit.Test;

+import org.junit.rules.TemporaryFolder;

+

+import java.io.IOException;

+import java.util.Arrays;

+import java.util.Collections;

+import java.util.List;

+import java.util.Map;

+

+public class HllSketchSqlAggregatorTest extends CalciteTestBase

+{

+ private static final String DATA_SOURCE = "foo";

+

+ private static QueryRunnerFactoryConglomerate conglomerate;

+ private static Closer resourceCloser;

+ private static AuthenticationResult authenticationR

[GitHub] jon-wei commented on a change in pull request #6951: Add more sketch aggregator support in Druid SQL

jon-wei commented on a change in pull request #6951: Add more sketch aggregator

support in Druid SQL

URL: https://github.com/apache/incubator-druid/pull/6951#discussion_r253238585

##

File path:

extensions-core/datasketches/src/test/java/org/apache/druid/query/aggregation/datasketches/hll/sql/HllSketchSqlAggregatorTest.java

##

@@ -0,0 +1,402 @@

+/*

+ * Licensed to the Apache Software Foundation (ASF) under one

+ * or more contributor license agreements. See the NOTICE file

+ * distributed with this work for additional information

+ * regarding copyright ownership. The ASF licenses this file

+ * to you under the Apache License, Version 2.0 (the

+ * "License"); you may not use this file except in compliance

+ * with the License. You may obtain a copy of the License at

+ *

+ * http://www.apache.org/licenses/LICENSE-2.0

+ *

+ * Unless required by applicable law or agreed to in writing,

+ * software distributed under the License is distributed on an

+ * "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY

+ * KIND, either express or implied. See the License for the

+ * specific language governing permissions and limitations

+ * under the License.

+ */

+

+package org.apache.druid.query.aggregation.datasketches.hll.sql;

+

+import com.fasterxml.jackson.databind.Module;

+import com.google.common.collect.ImmutableList;

+import com.google.common.collect.ImmutableMap;

+import com.google.common.collect.ImmutableSet;

+import com.google.common.collect.Iterables;

+import org.apache.druid.common.config.NullHandling;

+import org.apache.druid.java.util.common.Pair;

+import org.apache.druid.java.util.common.granularity.Granularities;

+import org.apache.druid.java.util.common.io.Closer;

+import org.apache.druid.query.Druids;

+import org.apache.druid.query.Query;

+import org.apache.druid.query.QueryDataSource;

+import org.apache.druid.query.QueryRunnerFactoryConglomerate;

+import org.apache.druid.query.aggregation.CountAggregatorFactory;

+import org.apache.druid.query.aggregation.DoubleSumAggregatorFactory;

+import org.apache.druid.query.aggregation.FilteredAggregatorFactory;

+import org.apache.druid.query.aggregation.LongSumAggregatorFactory;

+import

org.apache.druid.query.aggregation.datasketches.hll.HllSketchBuildAggregatorFactory;

+import

org.apache.druid.query.aggregation.datasketches.hll.HllSketchMergeAggregatorFactory;

+import org.apache.druid.query.aggregation.datasketches.hll.HllSketchModule;

+import org.apache.druid.query.aggregation.post.ArithmeticPostAggregator;

+import org.apache.druid.query.aggregation.post.FieldAccessPostAggregator;

+import

org.apache.druid.query.aggregation.post.FinalizingFieldAccessPostAggregator;

+import org.apache.druid.query.dimension.DefaultDimensionSpec;

+import org.apache.druid.query.expression.TestExprMacroTable;

+import org.apache.druid.query.groupby.GroupByQuery;

+import org.apache.druid.query.spec.MultipleIntervalSegmentSpec;

+import org.apache.druid.segment.IndexBuilder;

+import org.apache.druid.segment.QueryableIndex;

+import org.apache.druid.segment.column.ValueType;

+import org.apache.druid.segment.incremental.IncrementalIndexSchema;

+import org.apache.druid.segment.virtual.ExpressionVirtualColumn;

+import

org.apache.druid.segment.writeout.OffHeapMemorySegmentWriteOutMediumFactory;

+import org.apache.druid.server.security.AuthTestUtils;

+import org.apache.druid.server.security.AuthenticationResult;

+import org.apache.druid.sql.SqlLifecycle;

+import org.apache.druid.sql.SqlLifecycleFactory;

+import org.apache.druid.sql.calcite.BaseCalciteQueryTest;

+import org.apache.druid.sql.calcite.filtration.Filtration;

+import org.apache.druid.sql.calcite.planner.DruidOperatorTable;

+import org.apache.druid.sql.calcite.planner.PlannerConfig;

+import org.apache.druid.sql.calcite.planner.PlannerContext;

+import org.apache.druid.sql.calcite.planner.PlannerFactory;

+import org.apache.druid.sql.calcite.schema.DruidSchema;

+import org.apache.druid.sql.calcite.schema.SystemSchema;

+import org.apache.druid.sql.calcite.util.CalciteTestBase;

+import org.apache.druid.sql.calcite.util.CalciteTests;

+import org.apache.druid.sql.calcite.util.QueryLogHook;

+import org.apache.druid.sql.calcite.util.SpecificSegmentsQuerySegmentWalker;

+import org.apache.druid.timeline.DataSegment;

+import org.apache.druid.timeline.partition.LinearShardSpec;

+import org.junit.After;

+import org.junit.AfterClass;

+import org.junit.Assert;

+import org.junit.Before;

+import org.junit.BeforeClass;

+import org.junit.Rule;

+import org.junit.Test;

+import org.junit.rules.TemporaryFolder;

+

+import java.io.IOException;

+import java.util.Arrays;

+import java.util.Collections;

+import java.util.List;

+import java.util.Map;

+

+public class HllSketchSqlAggregatorTest extends CalciteTestBase

+{

+ private static final String DATA_SOURCE = "foo";

+

+ private static QueryRunnerFactoryConglomerate conglomerate;

+ private static Closer resourceCloser;

+ private static AuthenticationResult authenticationR

[GitHub] michael-trelinski commented on a change in pull request #6740: Zookeeper loss

michael-trelinski commented on a change in pull request #6740: Zookeeper loss

URL: https://github.com/apache/incubator-druid/pull/6740#discussion_r253238507

##

File path: server/src/main/java/org/apache/druid/curator/CuratorConfig.java

##

@@ -109,4 +113,14 @@ public String getAuthScheme()

return authScheme;

}

+ public boolean getTerminateDruidProcessOnConnectFail()

+ {

+return terminateDruidProcessOnConnectFail;

+ }

+

+ public void setTerminateDruidProcessOnConnectFail(Boolean

terminateDruidProcessOnConnectFail)

+ {

+this.terminateDruidProcessOnConnectFail =

terminateDruidProcessOnConnectFail == null ? false :

terminateDruidProcessOnConnectFail;

Review comment:

Fixed

This is an automated message from the Apache Git Service.

To respond to the message, please log on GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services

-

To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org

For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] michael-trelinski commented on a change in pull request #6740: Zookeeper loss

michael-trelinski commented on a change in pull request #6740: Zookeeper loss

URL: https://github.com/apache/incubator-druid/pull/6740#discussion_r253238376

##

File path: server/src/main/java/org/apache/druid/curator/CuratorModule.java

##

@@ -127,6 +154,29 @@ public EnsembleProvider

makeEnsembleProvider(CuratorConfig config, ExhibitorConf

return new FixedEnsembleProvider(config.getZkHosts());

}

+RetryPolicy retryPolicy;

+if (config.getTerminateDruidProcess()) {

+ final Function exitFunction = new Function()

+ {

+@Override

+public Void apply(Void aVoid)

+{

+ log.error("Zookeeper can't be reached, forcefully stopping virtual

machine...");

+ System.exit(1);

Review comment:

Switched to this pattern. I didn't think it was necessary since I've never

seen a logger kill a JVM.

This is an automated message from the Apache Git Service.

To respond to the message, please log on GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services

-

To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org

For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] michael-trelinski commented on a change in pull request #6740: Zookeeper loss

michael-trelinski commented on a change in pull request #6740: Zookeeper loss

URL: https://github.com/apache/incubator-druid/pull/6740#discussion_r253238297

##

File path: server/src/main/java/org/apache/druid/curator/CuratorModule.java

##

@@ -79,10 +80,24 @@ public CuratorFramework makeCurator(CuratorConfig config,

EnsembleProvider ensem

StringUtils.format("%s:%s", config.getZkUser(),

config.getZkPwd()).getBytes(StandardCharsets.UTF_8)

);

}

+

+final Function exitFunction = new Function()

+{

+ @Override

+ public Void apply(Void someVoid)

+ {

+log.error("Zookeeper can't be reached, forcefully stopping

lifecycle...");

+lifecycle.stop();

Review comment:

It is clearly logged:

makeCurator()'s exit will look like this:

> Zookeeper can't be reached, forcefully stopping lifecycle...

> Zookeeper can't be reached, forcefully stopping virtual machine...

makeEnsembleProvider()'s exit will look like this:

> Zookeeper can't be reached, forcefully stopping virtual machine...

This is an automated message from the Apache Git Service.

To respond to the message, please log on GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services

-

To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org

For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] jihoonson commented on issue #6690: bugfix: when building materialized-view, if taskCount>1, may cause concurrentModificationException

jihoonson commented on issue #6690: bugfix: when building materialized-view, if

taskCount>1, may cause concurrentModificationException

URL: https://github.com/apache/incubator-druid/pull/6690#issuecomment-459907344

@pzhdfy thanks for the patch and sorry for late review. Do you know what

threads can read and remove at the same time? It's currently guarded by

`taskLock`.

```java

synchronized (taskLock) {

for (Map.Entry entry :

runningTasks.entrySet()) {

Optional taskStatus =

taskStorage.getStatus(entry.getValue().getId());

if (!taskStatus.isPresent() || !taskStatus.get().isRunnable()) {

runningTasks.remove(entry.getKey());

runningVersion.remove(entry.getKey());

}

}

if (runningTasks.size() == maxTaskCount) {

//if the number of running tasks reach the max task count,

supervisor won't submit new tasks.

return;

}

Pair, Map>>

toBuildIntervalAndBaseSegments = checkSegments();

SortedMap sortedToBuildVersion =

toBuildIntervalAndBaseSegments.lhs;

Map> baseSegments =

toBuildIntervalAndBaseSegments.rhs;

missInterval = sortedToBuildVersion.keySet();

submitTasks(sortedToBuildVersion, baseSegments);

}

```

This is an automated message from the Apache Git Service.

To respond to the message, please log on GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services

-

To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org

For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] jihoonson commented on a change in pull request #6951: Add more sketch aggregator support in Druid SQL

jihoonson commented on a change in pull request #6951: Add more sketch

aggregator support in Druid SQL

URL: https://github.com/apache/incubator-druid/pull/6951#discussion_r253233621

##

File path:

extensions-core/datasketches/src/test/java/org/apache/druid/query/aggregation/datasketches/hll/sql/HllSketchSqlAggregatorTest.java

##

@@ -0,0 +1,402 @@

+/*

+ * Licensed to the Apache Software Foundation (ASF) under one

+ * or more contributor license agreements. See the NOTICE file

+ * distributed with this work for additional information

+ * regarding copyright ownership. The ASF licenses this file

+ * to you under the Apache License, Version 2.0 (the

+ * "License"); you may not use this file except in compliance

+ * with the License. You may obtain a copy of the License at

+ *

+ * http://www.apache.org/licenses/LICENSE-2.0

+ *

+ * Unless required by applicable law or agreed to in writing,

+ * software distributed under the License is distributed on an

+ * "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY

+ * KIND, either express or implied. See the License for the

+ * specific language governing permissions and limitations

+ * under the License.

+ */

+

+package org.apache.druid.query.aggregation.datasketches.hll.sql;

+

+import com.fasterxml.jackson.databind.Module;

+import com.google.common.collect.ImmutableList;

+import com.google.common.collect.ImmutableMap;

+import com.google.common.collect.ImmutableSet;

+import com.google.common.collect.Iterables;

+import org.apache.druid.common.config.NullHandling;

+import org.apache.druid.java.util.common.Pair;

+import org.apache.druid.java.util.common.granularity.Granularities;

+import org.apache.druid.java.util.common.io.Closer;

+import org.apache.druid.query.Druids;

+import org.apache.druid.query.Query;

+import org.apache.druid.query.QueryDataSource;

+import org.apache.druid.query.QueryRunnerFactoryConglomerate;

+import org.apache.druid.query.aggregation.CountAggregatorFactory;

+import org.apache.druid.query.aggregation.DoubleSumAggregatorFactory;

+import org.apache.druid.query.aggregation.FilteredAggregatorFactory;

+import org.apache.druid.query.aggregation.LongSumAggregatorFactory;

+import

org.apache.druid.query.aggregation.datasketches.hll.HllSketchBuildAggregatorFactory;

+import

org.apache.druid.query.aggregation.datasketches.hll.HllSketchMergeAggregatorFactory;

+import org.apache.druid.query.aggregation.datasketches.hll.HllSketchModule;

+import org.apache.druid.query.aggregation.post.ArithmeticPostAggregator;

+import org.apache.druid.query.aggregation.post.FieldAccessPostAggregator;

+import

org.apache.druid.query.aggregation.post.FinalizingFieldAccessPostAggregator;

+import org.apache.druid.query.dimension.DefaultDimensionSpec;

+import org.apache.druid.query.expression.TestExprMacroTable;

+import org.apache.druid.query.groupby.GroupByQuery;

+import org.apache.druid.query.spec.MultipleIntervalSegmentSpec;

+import org.apache.druid.segment.IndexBuilder;

+import org.apache.druid.segment.QueryableIndex;

+import org.apache.druid.segment.column.ValueType;

+import org.apache.druid.segment.incremental.IncrementalIndexSchema;

+import org.apache.druid.segment.virtual.ExpressionVirtualColumn;

+import

org.apache.druid.segment.writeout.OffHeapMemorySegmentWriteOutMediumFactory;

+import org.apache.druid.server.security.AuthTestUtils;

+import org.apache.druid.server.security.AuthenticationResult;

+import org.apache.druid.sql.SqlLifecycle;

+import org.apache.druid.sql.SqlLifecycleFactory;

+import org.apache.druid.sql.calcite.BaseCalciteQueryTest;

+import org.apache.druid.sql.calcite.filtration.Filtration;

+import org.apache.druid.sql.calcite.planner.DruidOperatorTable;

+import org.apache.druid.sql.calcite.planner.PlannerConfig;

+import org.apache.druid.sql.calcite.planner.PlannerContext;

+import org.apache.druid.sql.calcite.planner.PlannerFactory;

+import org.apache.druid.sql.calcite.schema.DruidSchema;

+import org.apache.druid.sql.calcite.schema.SystemSchema;

+import org.apache.druid.sql.calcite.util.CalciteTestBase;

+import org.apache.druid.sql.calcite.util.CalciteTests;

+import org.apache.druid.sql.calcite.util.QueryLogHook;

+import org.apache.druid.sql.calcite.util.SpecificSegmentsQuerySegmentWalker;

+import org.apache.druid.timeline.DataSegment;

+import org.apache.druid.timeline.partition.LinearShardSpec;

+import org.junit.After;

+import org.junit.AfterClass;

+import org.junit.Assert;

+import org.junit.Before;

+import org.junit.BeforeClass;

+import org.junit.Rule;

+import org.junit.Test;

+import org.junit.rules.TemporaryFolder;

+

+import java.io.IOException;

+import java.util.Arrays;

+import java.util.Collections;

+import java.util.List;

+import java.util.Map;

+

+public class HllSketchSqlAggregatorTest extends CalciteTestBase

+{

+ private static final String DATA_SOURCE = "foo";

+

+ private static QueryRunnerFactoryConglomerate conglomerate;

+ private static Closer resourceCloser;

+ private static AuthenticationResult authenticatio

[GitHub] jihoonson commented on a change in pull request #6951: Add more sketch aggregator support in Druid SQL

jihoonson commented on a change in pull request #6951: Add more sketch

aggregator support in Druid SQL

URL: https://github.com/apache/incubator-druid/pull/6951#discussion_r253233599

##

File path:

extensions-core/datasketches/src/test/java/org/apache/druid/query/aggregation/datasketches/hll/sql/HllSketchSqlAggregatorTest.java

##

@@ -0,0 +1,402 @@

+/*

+ * Licensed to the Apache Software Foundation (ASF) under one

+ * or more contributor license agreements. See the NOTICE file

+ * distributed with this work for additional information

+ * regarding copyright ownership. The ASF licenses this file

+ * to you under the Apache License, Version 2.0 (the

+ * "License"); you may not use this file except in compliance

+ * with the License. You may obtain a copy of the License at

+ *

+ * http://www.apache.org/licenses/LICENSE-2.0

+ *

+ * Unless required by applicable law or agreed to in writing,

+ * software distributed under the License is distributed on an

+ * "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY

+ * KIND, either express or implied. See the License for the

+ * specific language governing permissions and limitations

+ * under the License.

+ */

+

+package org.apache.druid.query.aggregation.datasketches.hll.sql;

+

+import com.fasterxml.jackson.databind.Module;

+import com.google.common.collect.ImmutableList;

+import com.google.common.collect.ImmutableMap;

+import com.google.common.collect.ImmutableSet;

+import com.google.common.collect.Iterables;

+import org.apache.druid.common.config.NullHandling;

+import org.apache.druid.java.util.common.Pair;

+import org.apache.druid.java.util.common.granularity.Granularities;

+import org.apache.druid.java.util.common.io.Closer;

+import org.apache.druid.query.Druids;

+import org.apache.druid.query.Query;

+import org.apache.druid.query.QueryDataSource;

+import org.apache.druid.query.QueryRunnerFactoryConglomerate;

+import org.apache.druid.query.aggregation.CountAggregatorFactory;

+import org.apache.druid.query.aggregation.DoubleSumAggregatorFactory;

+import org.apache.druid.query.aggregation.FilteredAggregatorFactory;

+import org.apache.druid.query.aggregation.LongSumAggregatorFactory;

+import

org.apache.druid.query.aggregation.datasketches.hll.HllSketchBuildAggregatorFactory;

+import

org.apache.druid.query.aggregation.datasketches.hll.HllSketchMergeAggregatorFactory;

+import org.apache.druid.query.aggregation.datasketches.hll.HllSketchModule;

+import org.apache.druid.query.aggregation.post.ArithmeticPostAggregator;

+import org.apache.druid.query.aggregation.post.FieldAccessPostAggregator;

+import

org.apache.druid.query.aggregation.post.FinalizingFieldAccessPostAggregator;

+import org.apache.druid.query.dimension.DefaultDimensionSpec;

+import org.apache.druid.query.expression.TestExprMacroTable;

+import org.apache.druid.query.groupby.GroupByQuery;

+import org.apache.druid.query.spec.MultipleIntervalSegmentSpec;

+import org.apache.druid.segment.IndexBuilder;

+import org.apache.druid.segment.QueryableIndex;

+import org.apache.druid.segment.column.ValueType;

+import org.apache.druid.segment.incremental.IncrementalIndexSchema;

+import org.apache.druid.segment.virtual.ExpressionVirtualColumn;

+import

org.apache.druid.segment.writeout.OffHeapMemorySegmentWriteOutMediumFactory;

+import org.apache.druid.server.security.AuthTestUtils;

+import org.apache.druid.server.security.AuthenticationResult;

+import org.apache.druid.sql.SqlLifecycle;

+import org.apache.druid.sql.SqlLifecycleFactory;

+import org.apache.druid.sql.calcite.BaseCalciteQueryTest;

+import org.apache.druid.sql.calcite.filtration.Filtration;

+import org.apache.druid.sql.calcite.planner.DruidOperatorTable;

+import org.apache.druid.sql.calcite.planner.PlannerConfig;

+import org.apache.druid.sql.calcite.planner.PlannerContext;

+import org.apache.druid.sql.calcite.planner.PlannerFactory;

+import org.apache.druid.sql.calcite.schema.DruidSchema;

+import org.apache.druid.sql.calcite.schema.SystemSchema;

+import org.apache.druid.sql.calcite.util.CalciteTestBase;

+import org.apache.druid.sql.calcite.util.CalciteTests;

+import org.apache.druid.sql.calcite.util.QueryLogHook;

+import org.apache.druid.sql.calcite.util.SpecificSegmentsQuerySegmentWalker;

+import org.apache.druid.timeline.DataSegment;

+import org.apache.druid.timeline.partition.LinearShardSpec;

+import org.junit.After;

+import org.junit.AfterClass;

+import org.junit.Assert;

+import org.junit.Before;

+import org.junit.BeforeClass;

+import org.junit.Rule;

+import org.junit.Test;

+import org.junit.rules.TemporaryFolder;

+

+import java.io.IOException;

+import java.util.Arrays;

+import java.util.Collections;

+import java.util.List;

+import java.util.Map;

+

+public class HllSketchSqlAggregatorTest extends CalciteTestBase

+{

+ private static final String DATA_SOURCE = "foo";

+

+ private static QueryRunnerFactoryConglomerate conglomerate;

+ private static Closer resourceCloser;

+ private static AuthenticationResult authenticatio

[GitHub] ankit0811 commented on issue #6967: NoClassDefFoundError when using druid-hdfs-storage

ankit0811 commented on issue #6967: NoClassDefFoundError when using druid-hdfs-storage URL: https://github.com/apache/incubator-druid/issues/6967#issuecomment-459896068 can take a look to check if its related to #6828 This is an automated message from the Apache Git Service. To respond to the message, please log on GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

[incubator-druid] branch master updated: lol (#6985)

This is an automated email from the ASF dual-hosted git repository. fjy pushed a commit to branch master in repository https://gitbox.apache.org/repos/asf/incubator-druid.git The following commit(s) were added to refs/heads/master by this push: new 6430ef8 lol (#6985) 6430ef8 is described below commit 6430ef8e1b62515576f5c7ec090bcd79da7eb8ee Author: Justin Borromeo AuthorDate: Fri Feb 1 14:21:13 2019 -0800 lol (#6985) --- docs/content/ingestion/schema-design.md | 2 +- 1 file changed, 1 insertion(+), 1 deletion(-) diff --git a/docs/content/ingestion/schema-design.md b/docs/content/ingestion/schema-design.md index 0408a39..58f0fae 100644 --- a/docs/content/ingestion/schema-design.md +++ b/docs/content/ingestion/schema-design.md @@ -86,7 +86,7 @@ rollup and load your existing data as-is. Rollup in Druid is similar to creating (Like OpenTSDB or InfluxDB.) Similar to time series databases, Druid's data model requires a timestamp. Druid is not a timeseries database, but -it is a natural choice for storing timeseries data. Its flexible data mdoel allows it to store both timeseries and +it is a natural choice for storing timeseries data. Its flexible data model allows it to store both timeseries and non-timeseries data, even in the same datasource. To achieve best-case compression and query performance in Druid for timeseries data, it is important to partition and - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] fjy merged pull request #6985: Fix typo in schema design doc

fjy merged pull request #6985: Fix typo in schema design doc URL: https://github.com/apache/incubator-druid/pull/6985 This is an automated message from the Apache Git Service. To respond to the message, please log on GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] justinborromeo opened a new pull request #6985: Fix typo in schema design doc

justinborromeo opened a new pull request #6985: Fix typo in schema design doc URL: https://github.com/apache/incubator-druid/pull/6985 This is an automated message from the Apache Git Service. To respond to the message, please log on GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] jon-wei opened a new issue #6984: Need to fix LICENSE and NOTICE for next release

jon-wei opened a new issue #6984: Need to fix LICENSE and NOTICE for next release URL: https://github.com/apache/incubator-druid/issues/6984 As brought up during the vote for 0.13.0-incubating, we will need to correct our LICENSE and NOTICE files for the next release. This is an automated message from the Apache Git Service. To respond to the message, please log on GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] surekhasaharan commented on a change in pull request #6901: Introduce published segment cache in broker

surekhasaharan commented on a change in pull request #6901: Introduce published

segment cache in broker

URL: https://github.com/apache/incubator-druid/pull/6901#discussion_r253214293

##

File path:

sql/src/main/java/org/apache/druid/sql/calcite/schema/MetadataSegmentView.java

##

@@ -0,0 +1,243 @@

+/*

+ * Licensed to the Apache Software Foundation (ASF) under one

+ * or more contributor license agreements. See the NOTICE file

+ * distributed with this work for additional information

+ * regarding copyright ownership. The ASF licenses this file

+ * to you under the Apache License, Version 2.0 (the

+ * "License"); you may not use this file except in compliance

+ * with the License. You may obtain a copy of the License at

+ *

+ * http://www.apache.org/licenses/LICENSE-2.0

+ *

+ * Unless required by applicable law or agreed to in writing,

+ * software distributed under the License is distributed on an

+ * "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY

+ * KIND, either express or implied. See the License for the

+ * specific language governing permissions and limitations

+ * under the License.

+ */

+

+package org.apache.druid.sql.calcite.schema;

+

+import com.fasterxml.jackson.core.type.TypeReference;

+import com.fasterxml.jackson.databind.JavaType;

+import com.fasterxml.jackson.databind.ObjectMapper;

+import com.google.common.base.Preconditions;

+import com.google.common.util.concurrent.ListenableFuture;

+import com.google.inject.Inject;

+import org.apache.druid.client.BrokerSegmentWatcherConfig;

+import org.apache.druid.client.DataSegmentInterner;

+import org.apache.druid.client.JsonParserIterator;

+import org.apache.druid.client.coordinator.Coordinator;

+import org.apache.druid.concurrent.LifecycleLock;

+import org.apache.druid.discovery.DruidLeaderClient;

+import org.apache.druid.guice.ManageLifecycle;

+import org.apache.druid.java.util.common.DateTimes;

+import org.apache.druid.java.util.common.ISE;

+import org.apache.druid.java.util.common.StringUtils;

+import org.apache.druid.java.util.common.concurrent.Execs;

+import org.apache.druid.java.util.common.lifecycle.LifecycleStart;

+import org.apache.druid.java.util.common.lifecycle.LifecycleStop;

+import org.apache.druid.java.util.emitter.EmittingLogger;

+import org.apache.druid.java.util.http.client.Request;

+import org.apache.druid.server.coordinator.BytesAccumulatingResponseHandler;

+import org.apache.druid.sql.calcite.planner.PlannerConfig;

+import org.apache.druid.timeline.DataSegment;

+import org.jboss.netty.handler.codec.http.HttpMethod;

+import org.joda.time.DateTime;

+

+import java.io.IOException;

+import java.io.InputStream;

+import java.util.Iterator;

+import java.util.Set;

+import java.util.concurrent.ConcurrentHashMap;

+import java.util.concurrent.ConcurrentMap;

+import java.util.concurrent.ScheduledExecutorService;

+import java.util.concurrent.TimeUnit;

+

+/**

+ * This class polls the coordinator in background to keep the latest published

segments.

+ * Provides {@link #getPublishedSegments()} for others to get segments in

metadata store.

+ */

+@ManageLifecycle

+public class MetadataSegmentView

+{

+

+ private static final EmittingLogger log = new

EmittingLogger(MetadataSegmentView.class);

+

+ private final DruidLeaderClient coordinatorDruidLeaderClient;

+ private final ObjectMapper jsonMapper;

+ private final BytesAccumulatingResponseHandler responseHandler;

+ private final BrokerSegmentWatcherConfig segmentWatcherConfig;

+

+ private final boolean isCacheEnabled;

+ private final ConcurrentMap publishedSegments;

+ private final ScheduledExecutorService scheduledExec;

+ private final long pollPeriodinMS;

+ private LifecycleLock lifecycleLock = new LifecycleLock();

+

+ @Inject

+ public MetadataSegmentView(

+ final @Coordinator DruidLeaderClient druidLeaderClient,

+ final ObjectMapper jsonMapper,

+ final BytesAccumulatingResponseHandler responseHandler,

+ final BrokerSegmentWatcherConfig segmentWatcherConfig,

+ final PlannerConfig plannerConfig

+ )

+ {

+Preconditions.checkNotNull(plannerConfig, "plannerConfig");

+this.coordinatorDruidLeaderClient = druidLeaderClient;

+this.jsonMapper = jsonMapper;

+this.responseHandler = responseHandler;

+this.segmentWatcherConfig = segmentWatcherConfig;

+this.isCacheEnabled = plannerConfig.isMetadataSegmentCacheEnable();

+this.pollPeriodinMS = plannerConfig.getMetadataSegmentPollPeriod();

+this.publishedSegments = isCacheEnabled ? new ConcurrentHashMap<>(1000) :

null;

+this.scheduledExec =

Execs.scheduledSingleThreaded("MetadataSegmentView-Cache--%d");

+ }

+

+ @LifecycleStart

+ public void start()

+ {

+if (!lifecycleLock.canStart()) {

+ throw new ISE("can't start.");

+}

+try {

+ if (isCacheEnabled) {

+scheduledExec.schedule(new PollTask(), 0, TimeUnit.MILLISECONDS);

+lifecycleLock.started();

+log.info("MetadataSegmentView Started.");

+ }

+}

[incubator-druid] branch master updated: Fix node path for building the unified console (#6981)

This is an automated email from the ASF dual-hosted git repository. cwylie pushed a commit to branch master in repository https://gitbox.apache.org/repos/asf/incubator-druid.git The following commit(s) were added to refs/heads/master by this push: new 7d4cc28 Fix node path for building the unified console (#6981) 7d4cc28 is described below commit 7d4cc287306e9aa576e29b722a0d74f6a86fdab8 Author: Jihoon Son AuthorDate: Fri Feb 1 13:57:17 2019 -0800 Fix node path for building the unified console (#6981) --- web-console/script/build | 8 ++-- 1 file changed, 2 insertions(+), 6 deletions(-) diff --git a/web-console/script/build b/web-console/script/build index 52e35f5..147f0e6 100755 --- a/web-console/script/build +++ b/web-console/script/build @@ -18,19 +18,15 @@ set -e -#pushd web-console - echo "Copying coordinator console in..." cp -r ./node_modules/druid-console/coordinator-console . cp -r ./node_modules/druid-console/pages . cp ./node_modules/druid-console/index.html . echo "Transpiling ReactTable CSS..." -./node_modules/.bin/stylus lib/react-table.styl -o lib/react-table.css +PATH="./target/node:$PATH" ./node_modules/.bin/stylus lib/react-table.styl -o lib/react-table.css echo "Webpacking everything..." -./node_modules/.bin/webpack -c webpack.config.js +PATH="./target/node:$PATH" ./node_modules/.bin/webpack -c webpack.config.js echo "Done! Have a good day." - -#popd - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] clintropolis merged pull request #6981: Fix node path for building the unified console

clintropolis merged pull request #6981: Fix node path for building the unified console URL: https://github.com/apache/incubator-druid/pull/6981 This is an automated message from the Apache Git Service. To respond to the message, please log on GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

[GitHub] jon-wei merged pull request #6950: bloom filter sql aggregator

jon-wei merged pull request #6950: bloom filter sql aggregator URL: https://github.com/apache/incubator-druid/pull/6950 This is an automated message from the Apache Git Service. To respond to the message, please log on GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services - To unsubscribe, e-mail: commits-unsubscr...@druid.apache.org For additional commands, e-mail: commits-h...@druid.apache.org

[incubator-druid] branch master updated: bloom filter sql aggregator (#6950)

This is an automated email from the ASF dual-hosted git repository.

jonwei pushed a commit to branch master

in repository https://gitbox.apache.org/repos/asf/incubator-druid.git

The following commit(s) were added to refs/heads/master by this push:

new 7a5827e bloom filter sql aggregator (#6950)

7a5827e is described below

commit 7a5827e12eb65eef80e08fe86ef76604019d6af8

Author: Clint Wylie

AuthorDate: Fri Feb 1 13:54:46 2019 -0800

bloom filter sql aggregator (#6950)

* adds sql aggregator for bloom filter, adds complex value serde for sql

results

* fix tests

* checkstyle

* fix copy-paste

---

docs/content/configuration/index.md| 1 +

.../development/extensions-core/bloom-filter.md| 25 +-

docs/content/querying/sql.md | 1 +

.../druid/guice/BloomFilterExtensionModule.java| 3 +-

.../bloom/sql/BloomFilterSqlAggregator.java| 212 +++

.../apache/druid/query/filter/BloomKFilter.java| 2 +-

.../bloom/sql/BloomFilterSqlAggregatorTest.java| 642 +

.../druid/sql/calcite/planner/PlannerConfig.java | 14 +-

.../apache/druid/sql/calcite/rel/QueryMaker.java | 14 +-

.../druid/sql/calcite/BaseCalciteQueryTest.java| 8 +

.../apache/druid/sql/calcite/CalciteQueryTest.java | 17 +-

.../druid/sql/calcite/http/SqlResourceTest.java| 9 +-

12 files changed, 936 insertions(+), 12 deletions(-)

diff --git a/docs/content/configuration/index.md

b/docs/content/configuration/index.md

index 990f9ce..221cccd 100644

--- a/docs/content/configuration/index.md

+++ b/docs/content/configuration/index.md

@@ -1418,6 +1418,7 @@ The Druid SQL server is configured through the following

properties on the Broke

|`druid.sql.planner.useFallback`|Whether to evaluate operations on the Broker

when they cannot be expressed as Druid queries. This option is not recommended

for production since it can generate unscalable query plans. If false, SQL

queries that cannot be translated to Druid queries will fail.|false|

|`druid.sql.planner.requireTimeCondition`|Whether to require SQL to have

filter conditions on __time column so that all generated native queries will

have user specified intervals. If true, all queries wihout filter condition on

__time column will fail|false|

|`druid.sql.planner.sqlTimeZone`|Sets the default time zone for the server,

which will affect how time functions and timestamp literals behave. Should be a

time zone name like "America/Los_Angeles" or offset like "-08:00".|UTC|

+|`druid.sql.planner.serializeComplexValues`|Whether to serialize "complex"

output values, false will return the class name instead of the serialized

value.|true|

Broker Caching

diff --git a/docs/content/development/extensions-core/bloom-filter.md