[GitHub] [flink] flinkbot edited a comment on pull request #12994: [FLINK-18728][network] Make initialCredit of RemoteInputChannel final

flinkbot edited a comment on pull request #12994:
URL: https://github.com/apache/flink/pull/12994#issuecomment-664206728

## CI report:

* 707c67f554cf59d2ba1e848e05cef0aea1fe7a89 Azure: [SUCCESS](https://dev.azure.com/apache-flink/98463496-1af2-4620-8eab-a2ecc1a2e6fe/_build/results?buildId=5280)
* 370e0fc44226f5a616c459cc68d51cf6a3686991 Azure: [PENDING](https://dev.azure.com/apache-flink/98463496-1af2-4620-8eab-a2ecc1a2e6fe/_build/results?buildId=5314)
* 453eabc11a5acfbf6cca97959b6bf6e57a93b0e9 Azure: [PENDING](https://dev.azure.com/apache-flink/98463496-1af2-4620-8eab-a2ecc1a2e6fe/_build/results?buildId=5315)

Bot commands
The @flinkbot bot supports the following commands:

- `@flinkbot run travis` re-run the last Travis build
- `@flinkbot run azure` re-run the last Azure build

This is an automated message from the Apache Git Service.
To respond to the message, please log on to GitHub and use the URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:
us...@infra.apache.org

[GitHub] [flink] flinkbot edited a comment on pull request #12994: [FLINK-18728][network] Make initialCredit of RemoteInputChannel final

flinkbot edited a comment on pull request #12994:
URL: https://github.com/apache/flink/pull/12994#issuecomment-664206728

## CI report:

* 707c67f554cf59d2ba1e848e05cef0aea1fe7a89 Azure: [SUCCESS](https://dev.azure.com/apache-flink/98463496-1af2-4620-8eab-a2ecc1a2e6fe/_build/results?buildId=5280)
* 370e0fc44226f5a616c459cc68d51cf6a3686991 Azure: [PENDING](https://dev.azure.com/apache-flink/98463496-1af2-4620-8eab-a2ecc1a2e6fe/_build/results?buildId=5314)
* 453eabc11a5acfbf6cca97959b6bf6e57a93b0e9 UNKNOWN

[GitHub] [flink] zenfenan commented on a change in pull request #12823: FLINK-18013: Refactor Hadoop utils to a single module

zenfenan commented on a change in pull request #12823:

URL: https://github.com/apache/flink/pull/12823#discussion_r467537692

##

File path:

flink-hadoop-utils/src/main/java/org/apache/flink/hadoop/utils/HadoopUtils.java

##

@@ -16,7 +16,7 @@

* limitations under the License.

*/

-package org.apache.flink.runtime.util;

+package org.apache.flink.hadoop.utils;

import org.apache.flink.api.java.tuple.Tuple2;

import org.apache.flink.configuration.ConfigConstants;

Review comment:

Makes sense. I feel it is better to separate out the utility classes at the functionality level rather than having an uber HadoopUtils class. That would also avoid confusion. I will do that, but do we have util classes for Hadoop security that should now be moved under this new module?

##

File path:

flink-hadoop-utils/src/main/java/org/apache/flink/hadoop/utils/HadoopUtils.java

##

@@ -16,7 +16,7 @@

* limitations under the License.

*/

-package org.apache.flink.runtime.util;

+package org.apache.flink.hadoop.utils;

import org.apache.flink.api.java.tuple.Tuple2;

import org.apache.flink.configuration.ConfigConstants;

Review comment:

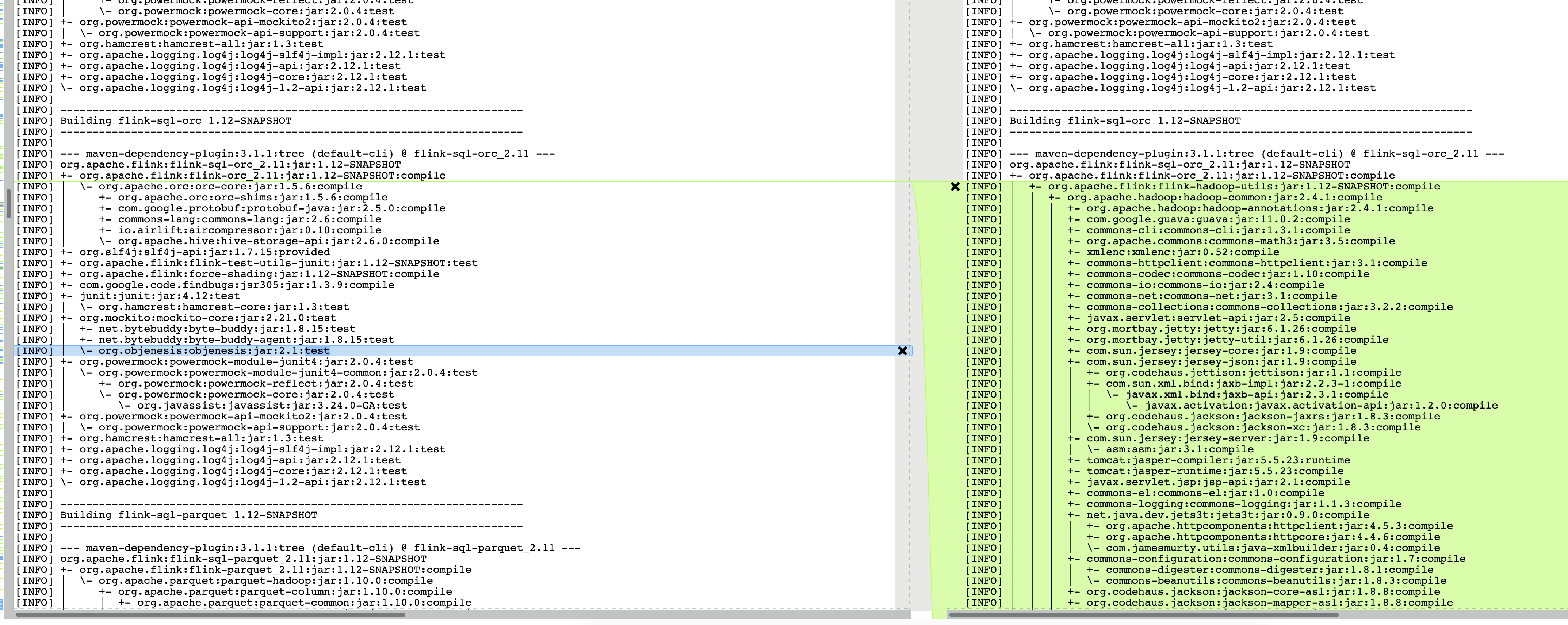

> It seems that this change is affecting the maven dependency tree quite a bit.
> For example, here:
>
> This is problematic, as it might modify Flink's own dependencies (in particular in modules connecting to external systems), and we might "accidentally" include dependencies in our dependency shading.
> Maybe we can use a wildcard exclude for the Hadoop dependencies?
> I was also wondering if we can get rid of the `hadoop-hdfs` dependency. It only seems to be there because of `HdfsConfiguration`. Maybe we can work around that (by manually calling `Configuration.addDefaultResource("hdfs-default.xml"); Configuration.addDefaultResource("hdfs-site.xml");`?)

Aah, good that you caught that. I should have compared the dependency trees myself. I will work on getting this resolved.


[GitHub] [flink] flinkbot edited a comment on pull request #13090: [FLINK-18822] [umbrella] Improve and complete Change Data Capture formats

flinkbot edited a comment on pull request #13090:
URL: https://github.com/apache/flink/pull/13090#issuecomment-670818966

## CI report:

* 3faa1b539faff3a59454cf9710ea69f4dd395e05 Azure: [FAILURE](https://dev.azure.com/apache-flink/98463496-1af2-4620-8eab-a2ecc1a2e6fe/_build/results?buildId=5305) Azure: [SUCCESS](https://dev.azure.com/apache-flink/98463496-1af2-4620-8eab-a2ecc1a2e6fe/_build/results?buildId=5308)

[GitHub] [flink] flinkbot edited a comment on pull request #12994: [FLINK-18728][network] Make initialCredit of RemoteInputChannel final

flinkbot edited a comment on pull request #12994:
URL: https://github.com/apache/flink/pull/12994#issuecomment-664206728

## CI report:

* 707c67f554cf59d2ba1e848e05cef0aea1fe7a89 Azure: [SUCCESS](https://dev.azure.com/apache-flink/98463496-1af2-4620-8eab-a2ecc1a2e6fe/_build/results?buildId=5280)
* 370e0fc44226f5a616c459cc68d51cf6a3686991 Azure: [PENDING](https://dev.azure.com/apache-flink/98463496-1af2-4620-8eab-a2ecc1a2e6fe/_build/results?buildId=5314)

[GitHub] [flink] flinkbot edited a comment on pull request #13090: [FLINK-18822] [umbrella] Improve and complete Change Data Capture formats

flinkbot edited a comment on pull request #13090:
URL: https://github.com/apache/flink/pull/13090#issuecomment-670818966

## CI report:

* 3faa1b539faff3a59454cf9710ea69f4dd395e05 Azure: [SUCCESS](https://dev.azure.com/apache-flink/98463496-1af2-4620-8eab-a2ecc1a2e6fe/_build/results?buildId=5308) Azure: [FAILURE](https://dev.azure.com/apache-flink/98463496-1af2-4620-8eab-a2ecc1a2e6fe/_build/results?buildId=5305)

[GitHub] [flink] flinkbot edited a comment on pull request #12994: [FLINK-18728][network] Make initialCredit of RemoteInputChannel final

flinkbot edited a comment on pull request #12994:
URL: https://github.com/apache/flink/pull/12994#issuecomment-664206728

## CI report:

* 707c67f554cf59d2ba1e848e05cef0aea1fe7a89 Azure: [SUCCESS](https://dev.azure.com/apache-flink/98463496-1af2-4620-8eab-a2ecc1a2e6fe/_build/results?buildId=5280)
* 370e0fc44226f5a616c459cc68d51cf6a3686991 UNKNOWN

[GitHub] [flink] lonelyGhostisdog commented on pull request #13090: [FLINK-18822] [umbrella] Improve and complete Change Data Capture formats

lonelyGhostisdog commented on pull request #13090:
URL: https://github.com/apache/flink/pull/13090#issuecomment-671003019

@flinkbot run travis

[jira] [Updated] (FLINK-18766) Support add_sink() for Python DataStream API

[ https://issues.apache.org/jira/browse/FLINK-18766?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

Hequn Cheng updated FLINK-18766:
Component/s: API / Python

> Support add_sink() for Python DataStream API
>
> Key: FLINK-18766
> URL: https://issues.apache.org/jira/browse/FLINK-18766
> Project: Flink
> Issue Type: Sub-task
> Components: API / Python
> Reporter: Hequn Cheng
> Priority: Major

--
This message was sent by Atlassian Jira
(v8.3.4#803005)

[jira] [Updated] (FLINK-18765) Support map() and flat_map() for Python DataStream API

[ https://issues.apache.org/jira/browse/FLINK-18765?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

Hequn Cheng updated FLINK-18765:
Component/s: API / Python

> Support map() and flat_map() for Python DataStream API
>
> Key: FLINK-18765
> URL: https://issues.apache.org/jira/browse/FLINK-18765
> Project: Flink
> Issue Type: Sub-task
> Components: API / Python
> Reporter: Hequn Cheng
> Priority: Major
> Labels: pull-request-available

[jira] [Updated] (FLINK-18763) Support basic TypeInformation for Python DataStream API

[ https://issues.apache.org/jira/browse/FLINK-18763?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

Hequn Cheng updated FLINK-18763:
Component/s: API / Python

> Support basic TypeInformation for Python DataStream API
>
> Key: FLINK-18763
> URL: https://issues.apache.org/jira/browse/FLINK-18763
> Project: Flink
> Issue Type: Sub-task
> Components: API / Python
> Reporter: Hequn Cheng
> Assignee: Shuiqiang Chen
> Priority: Major
> Labels: pull-request-available
>
> Supports basic TypeInformation including BasicTypeInfo, LocalTimeTypeInfo, PrimitiveArrayTypeInfo, RowTypeInfo.
> Types.ROW()/Types.ROW_NAMED()/Types.PRIMITIVE_ARRAY() should also be supported.

[jira] [Updated] (FLINK-18765) Support map() and flat_map() for Python DataStream API

[ https://issues.apache.org/jira/browse/FLINK-18765?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

Hequn Cheng updated FLINK-18765:
Fix Version/s: 1.12.0

> Support map() and flat_map() for Python DataStream API
>
> Key: FLINK-18765
> URL: https://issues.apache.org/jira/browse/FLINK-18765
> Project: Flink
> Issue Type: Sub-task
> Components: API / Python
> Reporter: Hequn Cheng
> Priority: Major
> Labels: pull-request-available
> Fix For: 1.12.0

[jira] [Updated] (FLINK-18763) Support basic TypeInformation for Python DataStream API

[ https://issues.apache.org/jira/browse/FLINK-18763?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

Hequn Cheng updated FLINK-18763:
Fix Version/s: 1.12.0

> Support basic TypeInformation for Python DataStream API
>
> Key: FLINK-18763
> URL: https://issues.apache.org/jira/browse/FLINK-18763
> Project: Flink
> Issue Type: Sub-task
> Components: API / Python
> Reporter: Hequn Cheng
> Assignee: Shuiqiang Chen
> Priority: Major
> Labels: pull-request-available
> Fix For: 1.12.0
>
> Supports basic TypeInformation including BasicTypeInfo, LocalTimeTypeInfo, PrimitiveArrayTypeInfo, RowTypeInfo.
> Types.ROW()/Types.ROW_NAMED()/Types.PRIMITIVE_ARRAY() should also be supported.

[jira] [Closed] (FLINK-18764) Support from_collection for Python DataStream API

[ https://issues.apache.org/jira/browse/FLINK-18764?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

Hequn Cheng closed FLINK-18764.
Resolution: Resolved

> Support from_collection for Python DataStream API
>
> Key: FLINK-18764
> URL: https://issues.apache.org/jira/browse/FLINK-18764
> Project: Flink
> Issue Type: Sub-task
> Components: API / Python
> Reporter: Hequn Cheng
> Priority: Major
> Labels: pull-request-available
> Fix For: 1.12.0

[jira] [Updated] (FLINK-18764) Support from_collection for Python DataStream API

[ https://issues.apache.org/jira/browse/FLINK-18764?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

Hequn Cheng updated FLINK-18764:
Component/s: API / Python

> Support from_collection for Python DataStream API
>
> Key: FLINK-18764
> URL: https://issues.apache.org/jira/browse/FLINK-18764
> Project: Flink
> Issue Type: Sub-task
> Components: API / Python
> Reporter: Hequn Cheng
> Priority: Major
> Labels: pull-request-available
> Fix For: 1.12.0

[jira] [Commented] (FLINK-18764) Support from_collection for Python DataStream API

[ https://issues.apache.org/jira/browse/FLINK-18764?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel=17173738#comment-17173738 ]

Hequn Cheng commented on FLINK-18764:

Resolved in 1.12.0 via c966182ee051e4f21d8490a19f357abe86952af2

> Support from_collection for Python DataStream API
>
> Key: FLINK-18764
> URL: https://issues.apache.org/jira/browse/FLINK-18764
> Project: Flink
> Issue Type: Sub-task
> Reporter: Hequn Cheng
> Priority: Major
> Labels: pull-request-available

[jira] [Updated] (FLINK-18764) Support from_collection for Python DataStream API

[ https://issues.apache.org/jira/browse/FLINK-18764?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

Hequn Cheng updated FLINK-18764:
Fix Version/s: 1.12.0

> Support from_collection for Python DataStream API
>
> Key: FLINK-18764
> URL: https://issues.apache.org/jira/browse/FLINK-18764
> Project: Flink
> Issue Type: Sub-task
> Reporter: Hequn Cheng
> Priority: Major
> Labels: pull-request-available
> Fix For: 1.12.0

[jira] [Closed] (FLINK-18763) Support basic TypeInformation for Python DataStream API

[ https://issues.apache.org/jira/browse/FLINK-18763?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

Hequn Cheng closed FLINK-18763.
Resolution: Resolved

> Support basic TypeInformation for Python DataStream API
>
> Key: FLINK-18763
> URL: https://issues.apache.org/jira/browse/FLINK-18763
> Project: Flink
> Issue Type: Sub-task
> Reporter: Hequn Cheng
> Assignee: Shuiqiang Chen
> Priority: Major
> Labels: pull-request-available
>
> Supports basic TypeInformation including BasicTypeInfo, LocalTimeTypeInfo, PrimitiveArrayTypeInfo, RowTypeInfo.
> Types.ROW()/Types.ROW_NAMED()/Types.PRIMITIVE_ARRAY() should also be supported.

[jira] [Commented] (FLINK-18763) Support basic TypeInformation for Python DataStream API

[ https://issues.apache.org/jira/browse/FLINK-18763?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel=17173737#comment-17173737 ]

Hequn Cheng commented on FLINK-18763:

Resolved in 1.12.0 via ea9f44985ab387f1a234f25e9a74ce697a6cb982

> Support basic TypeInformation for Python DataStream API
>
> Key: FLINK-18763
> URL: https://issues.apache.org/jira/browse/FLINK-18763
> Project: Flink
> Issue Type: Sub-task
> Reporter: Hequn Cheng
> Assignee: Shuiqiang Chen
> Priority: Major
> Labels: pull-request-available
>
> Supports basic TypeInformation including BasicTypeInfo, LocalTimeTypeInfo, PrimitiveArrayTypeInfo, RowTypeInfo.
> Types.ROW()/Types.ROW_NAMED()/Types.PRIMITIVE_ARRAY() should also be supported.

[jira] [Closed] (FLINK-18765) Support map() and flat_map() for Python DataStream API

[ https://issues.apache.org/jira/browse/FLINK-18765?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

Hequn Cheng closed FLINK-18765.
Resolution: Resolved

> Support map() and flat_map() for Python DataStream API
>
> Key: FLINK-18765
> URL: https://issues.apache.org/jira/browse/FLINK-18765
> Project: Flink
> Issue Type: Sub-task
> Reporter: Hequn Cheng
> Priority: Major
> Labels: pull-request-available

[jira] [Assigned] (FLINK-18849) Improve the code tabs of the Flink documents

[ https://issues.apache.org/jira/browse/FLINK-18849?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

Dian Fu reassigned FLINK-18849:
Assignee: Wei Zhong

> Improve the code tabs of the Flink documents
>
> Key: FLINK-18849
> URL: https://issues.apache.org/jira/browse/FLINK-18849
> Project: Flink
> Issue Type: Improvement
> Components: Documentation
> Reporter: Wei Zhong
> Assignee: Wei Zhong
> Priority: Minor
> Labels: pull-request-available
>
> Currently there are some minor problems with the code tabs of the Flink documents:
> # Some tabs have labels like `data-lang="Java/Scala"`, which do not switch synchronously with tabs labeled `data-lang="Java"` and `data-lang="Scala"`.
> # Labels are case-sensitive. If one code tab on a page has the label `data-lang="java"` and another has the label `data-lang="Java"`, they do not switch synchronously.
> # Duplicated content. Much of the content in the "Java" tab is the same as in the "Scala" tab.
> I would like to improve the situation in the following way:
> 1. When parsing a label like `data-lang="Java/Scala"`, clone the tab content so that one copy has the label `data-lang="Java"` and the other has the label `data-lang="Scala"`.
> 2. Then force the first character of the data-lang value to be upper case, i.e. if the label is `data-lang="java"`, it is changed to `data-lang="Java"`.
> This way we can remove the duplicated content by merging it into one element with a `data-lang="Java/Scala"` label, and all the tabs switch synchronously when they are clicked.
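The two-step proposal in FLINK-18849 (split a combined `data-lang` label into one cloned tab per language, then upper-case the first character of every label) can be sketched as follows. This is a hypothetical Java illustration under stated assumptions, not the code from pull request #13085: the class `CodeTabNormalizer`, the `Tab` type and both methods are invented names, and tabs are modeled as plain (label, content) pairs rather than page elements.

```java
import java.util.ArrayList;
import java.util.List;

// Hypothetical sketch of the FLINK-18849 proposal; not the actual docs-site code.
public class CodeTabNormalizer {

    /** A code tab: the value of its data-lang attribute and its body (assumed model). */
    public static final class Tab {
        public final String dataLang;
        public final String content;

        public Tab(String dataLang, String content) {
            this.dataLang = dataLang;
            this.content = content;
        }
    }

    /** Step 2: force the first character of a data-lang value to upper case. */
    public static String normalizeLang(String lang) {
        if (lang.isEmpty()) {
            return lang;
        }
        return Character.toUpperCase(lang.charAt(0)) + lang.substring(1);
    }

    /** Steps 1 and 2: clone each combined tab once per language, normalizing every label. */
    public static List<Tab> expand(List<Tab> tabs) {
        List<Tab> result = new ArrayList<>();
        for (Tab tab : tabs) {
            // "Java/Scala" yields ["Java", "Scala"]; a plain "java" yields ["java"].
            for (String lang : tab.dataLang.split("/")) {
                // Every clone shares the same content, so it is written only once in the source.
                result.add(new Tab(normalizeLang(lang.trim()), tab.content));
            }
        }
        return result;
    }

    public static void main(String[] args) {
        List<Tab> tabs = List.of(
                new Tab("Java/Scala", "env.execute();"),
                new Tab("python", "env.execute()"));
        for (Tab tab : expand(tabs)) {
            System.out.println(tab.dataLang + ": " + tab.content);
        }
    }
}
```

Running `main` prints `Java: env.execute();`, `Scala: env.execute();` and `Python: env.execute()`. The Java and Scala tabs therefore share one source block, and every label ends up in the same case, which is what lets tabs with matching labels switch together.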

[jira] [Commented] (FLINK-18765) Support map() and flat_map() for Python DataStream API

[ https://issues.apache.org/jira/browse/FLINK-18765?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel=17173735#comment-17173735 ]

Hequn Cheng commented on FLINK-18765:

Resolved in 1.12.0 via 862c9eb0f48f57f8f420d00ac20987e1f8412215

> Support map() and flat_map() for Python DataStream API
>
> Key: FLINK-18765
> URL: https://issues.apache.org/jira/browse/FLINK-18765
> Project: Flink
> Issue Type: Sub-task
> Reporter: Hequn Cheng
> Priority: Major
> Labels: pull-request-available

[GitHub] [flink] hequn8128 merged pull request #13066: [FLINK-18765][python] Support map() and flat_map() for Python DataStream API.

hequn8128 merged pull request #13066:
URL: https://github.com/apache/flink/pull/13066

[GitHub] [flink] dianfu edited a comment on pull request #13085: [FLINK-18849][docs] Improve the code tabs of the Flink documents.

dianfu edited a comment on pull request #13085:
URL: https://github.com/apache/flink/pull/13085#issuecomment-671000990

What about also updating the existing documentation to use the functionality provided in this PR? It could provide an example of how to use the functionality provided in this PR, and help to understand and verify this PR.

[GitHub] [flink] dianfu commented on pull request #13085: [FLINK-18849][docs] Improve the code tabs of the Flink documents.

dianfu commented on pull request #13085:
URL: https://github.com/apache/flink/pull/13085#issuecomment-671000990

What about also updating the existing documentation to use the functionality provided in this PR? It could provide an example of how to use the functionality provided in this PR and could also help to understand why this PR is needed.

[jira] [Commented] (FLINK-16720) Maven gets stuck downloading artifacts on Azure

[ https://issues.apache.org/jira/browse/FLINK-16720?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel=17173734#comment-17173734 ]

Dian Fu commented on FLINK-16720:

[https://dev.azure.com/apache-flink/apache-flink/_build/results?buildId=5312=logs=fc5181b0-e452-5c8f-68de-1097947f6483=6b04ca5f-0b52-511d-19c9-52bf0d9fbdfa]

> Maven gets stuck downloading artifacts on Azure

> ---

>

> Key: FLINK-16720

> URL: https://issues.apache.org/jira/browse/FLINK-16720

> Project: Flink

> Issue Type: Bug

> Components: Build System / Azure Pipelines

>Affects Versions: 1.11.0

>Reporter: Robert Metzger

>Assignee: Robert Metzger

>Priority: Critical

> Labels: test-stability

> Fix For: 1.11.0

>

>

> Logs:

> https://dev.azure.com/rmetzger/Flink/_build/results?buildId=6509=logs=fc5181b0-e452-5c8f-68de-1097947f6483=27d1d645-cbce-54e2-51c4-d8b45fe24607

> {code}

> 2020-03-23T08:43:28.4128014Z [INFO]

>

> 2020-03-23T08:43:28.4128557Z [INFO] Building flink-avro-confluent-registry

> 1.11-SNAPSHOT

> 2020-03-23T08:43:28.4129129Z [INFO]

>

> 2020-03-23T08:48:47.6591333Z

> ==

> 2020-03-23T08:48:47.6594540Z Maven produced no output for 300 seconds.

> 2020-03-23T08:48:47.6595164Z

> ==

> 2020-03-23T08:48:47.6605370Z

> ==

> 2020-03-23T08:48:47.6605803Z The following Java processes are running (JPS)

> 2020-03-23T08:48:47.6606173Z

> ==

> 2020-03-23T08:48:47.7710037Z 920 Jps

> 2020-03-23T08:48:47.7778561Z 238 Launcher

> 2020-03-23T08:48:47.9270289Z

> ==

> 2020-03-23T08:48:47.9270832Z Printing stack trace of Java process 967

> 2020-03-23T08:48:47.9271199Z

> ==

> 2020-03-23T08:48:48.0165945Z 967: No such process

> 2020-03-23T08:48:48.0218260Z

> ==

> 2020-03-23T08:48:48.0218736Z Printing stack trace of Java process 238

> 2020-03-23T08:48:48.0219075Z

> ==

> 2020-03-23T08:48:48.3404066Z 2020-03-23 08:48:48

> 2020-03-23T08:48:48.3404828Z Full thread dump OpenJDK 64-Bit Server VM

> (25.242-b08 mixed mode):

> 2020-03-23T08:48:48.3405064Z

> 2020-03-23T08:48:48.3405445Z "Attach Listener" #370 daemon prio=9 os_prio=0

> tid=0x7fe130001000 nid=0x452 waiting on condition [0x]

> 2020-03-23T08:48:48.3405868Zjava.lang.Thread.State: RUNNABLE

> 2020-03-23T08:48:48.3411202Z

> 2020-03-23T08:48:48.3413171Z "resolver-5" #105 daemon prio=5 os_prio=0

> tid=0x7fe1ec2ad800 nid=0x177 waiting on condition [0x7fe1872d9000]

> 2020-03-23T08:48:48.3414175Zjava.lang.Thread.State: WAITING (parking)

> 2020-03-23T08:48:48.3414560Z at sun.misc.Unsafe.park(Native Method)

> 2020-03-23T08:48:48.3415451Z - parking to wait for <0x0003d5a9f828> (a

> java.util.concurrent.locks.AbstractQueuedSynchronizer$ConditionObject)

> 2020-03-23T08:48:48.3416180Z at

> java.util.concurrent.locks.LockSupport.park(LockSupport.java:175)

> 2020-03-23T08:48:48.3416825Z at

> java.util.concurrent.locks.AbstractQueuedSynchronizer$ConditionObject.await(AbstractQueuedSynchronizer.java:2039)

> 2020-03-23T08:48:48.3417602Z at

> java.util.concurrent.LinkedBlockingQueue.take(LinkedBlockingQueue.java:442)

> 2020-03-23T08:48:48.3418250Z at

> java.util.concurrent.ThreadPoolExecutor.getTask(ThreadPoolExecutor.java:1074)

> 2020-03-23T08:48:48.3418930Z at

> java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1134)

> 2020-03-23T08:48:48.3419900Z at

> java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)

> 2020-03-23T08:48:48.3420395Z at java.lang.Thread.run(Thread.java:748)

> 2020-03-23T08:48:48.3420648Z

> 2020-03-23T08:48:48.3421424Z "resolver-4" #104 daemon prio=5 os_prio=0

> tid=0x7fe1ec2ad000 nid=0x176 waiting on condition [0x7fe1863dd000]

> 2020-03-23T08:48:48.3421914Zjava.lang.Thread.State: WAITING (parking)

> 2020-03-23T08:48:48.3422233Z at sun.misc.Unsafe.park(Native Method)

> 2020-03-23T08:48:48.3422919Z - parking to wait for <0x0003d5a9f828> (a

> java.util.concurrent.locks.AbstractQueuedSynchronizer$ConditionObject)

> 2020-03-23T08:48:48.3423447Z at

>

[jira] [Commented] (FLINK-16947) ArtifactResolutionException: Could not transfer artifact. Entry [...] has not been leased from this pool

[ https://issues.apache.org/jira/browse/FLINK-16947?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel=17173733#comment-17173733 ]

Dian Fu commented on FLINK-16947:

[https://dev.azure.com/apache-flink/apache-flink/_build/results?buildId=5300=logs=51fed01c-4eb0-5511-d479-ed5e8b9a7820=662e1729-9aac-55e2-8268-b965b3860e1f]

> ArtifactResolutionException: Could not transfer artifact. Entry [...] has

> not been leased from this pool

> -

>

> Key: FLINK-16947

> URL: https://issues.apache.org/jira/browse/FLINK-16947

> Project: Flink

> Issue Type: Bug

> Components: Build System / Azure Pipelines

>Reporter: Piotr Nowojski

>Assignee: Robert Metzger

>Priority: Blocker

> Labels: test-stability

> Fix For: 1.12.0

>

>

> https://dev.azure.com/rmetzger/Flink/_build/results?buildId=6982=logs=c88eea3b-64a0-564d-0031-9fdcd7b8abee=1e2bbe5b-4657-50be-1f07-d84bfce5b1f5

> Build of flink-metrics-availability-test failed with:

> {noformat}

> [ERROR] Failed to execute goal

> org.apache.maven.plugins:maven-surefire-plugin:2.22.1:test (end-to-end-tests)

> on project flink-metrics-availability-test: Unable to generate classpath:

> org.apache.maven.artifact.resolver.ArtifactResolutionException: Could not

> transfer artifact org.apache.maven.surefire:surefire-grouper:jar:2.22.1

> from/to google-maven-central

> (https://maven-central-eu.storage-download.googleapis.com/maven2/): Entry

> [id:13][route:{s}->https://maven-central-eu.storage-download.googleapis.com:443][state:null]

> has not been leased from this pool

> [ERROR] org.apache.maven.surefire:surefire-grouper:jar:2.22.1

> [ERROR]

> [ERROR] from the specified remote repositories:

> [ERROR] google-maven-central

> (https://maven-central-eu.storage-download.googleapis.com/maven2/,

> releases=true, snapshots=false),

> [ERROR] apache.snapshots (https://repository.apache.org/snapshots,

> releases=false, snapshots=true)

> [ERROR] Path to dependency:

> [ERROR] 1) dummy:dummy:jar:1.0

> [ERROR] 2) org.apache.maven.surefire:surefire-junit47:jar:2.22.1

> [ERROR] 3) org.apache.maven.surefire:common-junit48:jar:2.22.1

> [ERROR] 4) org.apache.maven.surefire:surefire-grouper:jar:2.22.1

> [ERROR] -> [Help 1]

> [ERROR]

> [ERROR] To see the full stack trace of the errors, re-run Maven with the -e

> switch.

> [ERROR] Re-run Maven using the -X switch to enable full debug logging.

> [ERROR]

> [ERROR] For more information about the errors and possible solutions, please

> read the following articles:

> [ERROR] [Help 1]

> http://cwiki.apache.org/confluence/display/MAVEN/MojoExecutionException

> [ERROR]

> [ERROR] After correcting the problems, you can resume the build with the

> command

> [ERROR] mvn -rf :flink-metrics-availability-test

> {noformat}


[jira] [Commented] (FLINK-17274) Maven: Premature end of Content-Length delimited message body

[ https://issues.apache.org/jira/browse/FLINK-17274?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel=17173730#comment-17173730 ]

Dian Fu commented on FLINK-17274:

[https://dev.azure.com/apache-flink/apache-flink/_build/results?buildId=5300=logs=d47ab8d2-10c7-5d9e-8178-ef06a797a0d8=dbd54e26-95e0-584b-4a47-190a8df6e3ac]

> Maven: Premature end of Content-Length delimited message body

> -

>

> Key: FLINK-17274

> URL: https://issues.apache.org/jira/browse/FLINK-17274

> Project: Flink

> Issue Type: Bug

> Components: Build System / Azure Pipelines

>Reporter: Robert Metzger

>Assignee: Robert Metzger

>Priority: Critical

> Labels: test-stability

> Fix For: 1.12.0

>

>

> CI:

> https://dev.azure.com/rmetzger/Flink/_build/results?buildId=7786=logs=52b61abe-a3cc-5bde-cc35-1bbe89bb7df5=54421a62-0c80-5aad-3319-094ff69180bb

> {code}

> [ERROR] Failed to execute goal on project

> flink-connector-elasticsearch7_2.11: Could not resolve dependencies for

> project

> org.apache.flink:flink-connector-elasticsearch7_2.11:jar:1.11-SNAPSHOT: Could

> not transfer artifact org.apache.lucene:lucene-sandbox:jar:8.3.0 from/to

> alicloud-mvn-mirror

> (http://mavenmirror.alicloud.dak8s.net:/repository/maven-central/): GET

> request of: org/apache/lucene/lucene-sandbox/8.3.0/lucene-sandbox-8.3.0.jar

> from alicloud-mvn-mirror failed: Premature end of Content-Length delimited

> message body (expected: 289920; received: 239832 -> [Help 1]

> {code}


[jira] [Updated] (FLINK-18859) ExecutionGraphNotEnoughResourceTest.testRestartWithSlotSharingAndNotEnoughResources failed with "Condition was not met in given timeout."

[ https://issues.apache.org/jira/browse/FLINK-18859?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

Dian Fu updated FLINK-18859:
Labels: test-stability (was: )

> ExecutionGraphNotEnoughResourceTest.testRestartWithSlotSharingAndNotEnoughResources

> failed with "Condition was not met in given timeout."

> -

>

> Key: FLINK-18859

> URL: https://issues.apache.org/jira/browse/FLINK-18859

> Project: Flink

> Issue Type: Bug

> Components: Runtime / Coordination, Tests

>Affects Versions: 1.11.0

>Reporter: Dian Fu

>Priority: Major

> Labels: test-stability

>

> [https://dev.azure.com/apache-flink/apache-flink/_build/results?buildId=5300=logs=d89de3df-4600-5585-dadc-9bbc9a5e661c=66b5c59a-0094-561d-0e44-b149dfdd586d]

> {code}

> [ERROR] Tests run: 1, Failures: 0, Errors: 1, Skipped: 0, Time elapsed: 5.673

> s <<< FAILURE! - in

> org.apache.flink.runtime.executiongraph.ExecutionGraphNotEnoughResourceTest

> [ERROR]

> testRestartWithSlotSharingAndNotEnoughResources(org.apache.flink.runtime.executiongraph.ExecutionGraphNotEnoughResourceTest)

> Time elapsed: 3.158 s <<< ERROR!

> java.util.concurrent.TimeoutException: Condition was not met in given timeout.

> at

> org.apache.flink.runtime.testutils.CommonTestUtils.waitUntilCondition(CommonTestUtils.java:129)

> at

> org.apache.flink.runtime.testutils.CommonTestUtils.waitUntilCondition(CommonTestUtils.java:119)

> at

> org.apache.flink.runtime.executiongraph.ExecutionGraphNotEnoughResourceTest.testRestartWithSlotSharingAndNotEnoughResources(ExecutionGraphNotEnoughResourceTest.java:130)

> {code}


[jira] [Created] (FLINK-18859) ExecutionGraphNotEnoughResourceTest.testRestartWithSlotSharingAndNotEnoughResources failed with "Condition was not met in given timeout."

Dian Fu created FLINK-18859:

---

Summary:

ExecutionGraphNotEnoughResourceTest.testRestartWithSlotSharingAndNotEnoughResources

failed with "Condition was not met in given timeout."

Key: FLINK-18859

URL: https://issues.apache.org/jira/browse/FLINK-18859

Project: Flink

Issue Type: Bug

Components: Runtime / Coordination, Tests

Affects Versions: 1.11.0

Reporter: Dian Fu

[https://dev.azure.com/apache-flink/apache-flink/_build/results?buildId=5300=logs=d89de3df-4600-5585-dadc-9bbc9a5e661c=66b5c59a-0094-561d-0e44-b149dfdd586d]

{code}

[ERROR] Tests run: 1, Failures: 0, Errors: 1, Skipped: 0, Time elapsed: 5.673 s <<< FAILURE! - in org.apache.flink.runtime.executiongraph.ExecutionGraphNotEnoughResourceTest
[ERROR] testRestartWithSlotSharingAndNotEnoughResources(org.apache.flink.runtime.executiongraph.ExecutionGraphNotEnoughResourceTest)  Time elapsed: 3.158 s  <<< ERROR!
java.util.concurrent.TimeoutException: Condition was not met in given timeout.
    at org.apache.flink.runtime.testutils.CommonTestUtils.waitUntilCondition(CommonTestUtils.java:129)
    at org.apache.flink.runtime.testutils.CommonTestUtils.waitUntilCondition(CommonTestUtils.java:119)
    at org.apache.flink.runtime.executiongraph.ExecutionGraphNotEnoughResourceTest.testRestartWithSlotSharingAndNotEnoughResources(ExecutionGraphNotEnoughResourceTest.java:130)

{code}


[GitHub] [flink] flinkbot edited a comment on pull request #13027: [FLINK-16245] Decoupling user classloader from context classloader.

flinkbot edited a comment on pull request #13027: URL: https://github.com/apache/flink/pull/13027#issuecomment-666225612 ## CI report: * 30c7fa20d57633b62a3e28ddf4256193e6439ec2 Azure: [SUCCESS](https://dev.azure.com/apache-flink/98463496-1af2-4620-8eab-a2ecc1a2e6fe/_build/results?buildId=5310) Bot commands The @flinkbot bot supports the following commands: - `@flinkbot run travis` re-run the last Travis build - `@flinkbot run azure` re-run the last Azure build This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [flink] flinkbot edited a comment on pull request #13091: [FLINK-18856][checkpointing] Don't ignore minPauseBetweenCheckpoints

flinkbot edited a comment on pull request #13091: URL: https://github.com/apache/flink/pull/13091#issuecomment-670949094 ## CI report: * 6339ff6f9b1acdb6f7cd163c9f866f77fd65c708 Azure: [SUCCESS](https://dev.azure.com/apache-flink/98463496-1af2-4620-8eab-a2ecc1a2e6fe/_build/results?buildId=5309)

[GitHub] [flink] flinkbot edited a comment on pull request #13027: [FLINK-16245] Decoupling user classloader from context classloader.

flinkbot edited a comment on pull request #13027: URL: https://github.com/apache/flink/pull/13027#issuecomment-666225612 ## CI report: * f85e2aed8e736a6b384063518d72ca0cb44869ad Azure: [FAILURE](https://dev.azure.com/apache-flink/98463496-1af2-4620-8eab-a2ecc1a2e6fe/_build/results?buildId=5303) * 30c7fa20d57633b62a3e28ddf4256193e6439ec2 Azure: [PENDING](https://dev.azure.com/apache-flink/98463496-1af2-4620-8eab-a2ecc1a2e6fe/_build/results?buildId=5310)

[GitHub] [flink] flinkbot edited a comment on pull request #13027: [FLINK-16245] Decoupling user classloader from context classloader.

flinkbot edited a comment on pull request #13027: URL: https://github.com/apache/flink/pull/13027#issuecomment-666225612 ## CI report: * f85e2aed8e736a6b384063518d72ca0cb44869ad Azure: [FAILURE](https://dev.azure.com/apache-flink/98463496-1af2-4620-8eab-a2ecc1a2e6fe/_build/results?buildId=5303) * 30c7fa20d57633b62a3e28ddf4256193e6439ec2 UNKNOWN

[GitHub] [flink] flinkbot edited a comment on pull request #13090: [FLINK-18822] [umbrella] Improve and complete Change Data Capture formats

flinkbot edited a comment on pull request #13090: URL: https://github.com/apache/flink/pull/13090#issuecomment-670818966 ## CI report: * 3faa1b539faff3a59454cf9710ea69f4dd395e05 Azure: [SUCCESS](https://dev.azure.com/apache-flink/98463496-1af2-4620-8eab-a2ecc1a2e6fe/_build/results?buildId=5308) Azure: [FAILURE](https://dev.azure.com/apache-flink/98463496-1af2-4620-8eab-a2ecc1a2e6fe/_build/results?buildId=5305)

[GitHub] [flink] flinkbot edited a comment on pull request #13091: [FLINK-18856][checkpointing] Don't ignore minPauseBetweenCheckpoints

flinkbot edited a comment on pull request #13091: URL: https://github.com/apache/flink/pull/13091#issuecomment-670949094 ## CI report: * 6339ff6f9b1acdb6f7cd163c9f866f77fd65c708 Azure: [PENDING](https://dev.azure.com/apache-flink/98463496-1af2-4620-8eab-a2ecc1a2e6fe/_build/results?buildId=5309)

[GitHub] [flink] flinkbot commented on pull request #13091: [FLINK-18856][checkpointing] Don't ignore minPauseBetweenCheckpoints

flinkbot commented on pull request #13091: URL: https://github.com/apache/flink/pull/13091#issuecomment-670949094 ## CI report: * 6339ff6f9b1acdb6f7cd163c9f866f77fd65c708 UNKNOWN

[GitHub] [flink] flinkbot commented on pull request #13091: [FLINK-18856][checkpointing] Don't ignore minPauseBetweenCheckpoints

flinkbot commented on pull request #13091: URL: https://github.com/apache/flink/pull/13091#issuecomment-670947770

Thanks a lot for your contribution to the Apache Flink project. I'm the @flinkbot. I help the community review your pull request. We will use this comment to track the progress of the review.

## Automated Checks

Last check on commit 6339ff6f9b1acdb6f7cd163c9f866f77fd65c708 (Sat Aug 08 16:29:25 UTC 2020)

**Warnings:**
* No documentation files were touched! Remember to keep the Flink docs up to date!

Mention the bot in a comment to re-run the automated checks.

## Review Progress

* ❓ 1. The [description] looks good.
* ❓ 2. There is [consensus] that the contribution should go into Flink.
* ❓ 3. Needs [attention] from.
* ❓ 4. The change fits into the overall [architecture].
* ❓ 5. Overall code [quality] is good.

Please see the [Pull Request Review Guide](https://flink.apache.org/contributing/reviewing-prs.html) for a full explanation of the review process.

The Bot is tracking the review progress through labels. Labels are applied according to the order of the review items. For consensus, approval by a Flink committer or PMC member is required.

Bot commands

The @flinkbot bot supports the following commands:
- `@flinkbot approve description` to approve one or more aspects (aspects: `description`, `consensus`, `architecture` and `quality`)
- `@flinkbot approve all` to approve all aspects
- `@flinkbot approve-until architecture` to approve everything until `architecture`
- `@flinkbot attention @username1 [@username2 ..]` to require somebody's attention
- `@flinkbot disapprove architecture` to remove an approval you gave earlier

[jira] [Updated] (FLINK-18856) CheckpointCoordinator ignores checkpointing.min-pause

[ https://issues.apache.org/jira/browse/FLINK-18856?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

ASF GitHub Bot updated FLINK-18856:

Labels: pull-request-available (was: )

> CheckpointCoordinator ignores checkpointing.min-pause

> -

>

> Key: FLINK-18856

> URL: https://issues.apache.org/jira/browse/FLINK-18856

> Project: Flink

> Issue Type: Bug

> Components: Runtime / Checkpointing

>Affects Versions: 1.11.1

>Reporter: Roman Khachatryan

>Assignee: Roman Khachatryan

>Priority: Major

> Labels: pull-request-available

> Fix For: 1.12.0, 1.11.2

>

> See discussion:

> http://mail-archives.apache.org/mod_mbox/flink-dev/202008.mbox/%3cca+5xao2enuzfyq+e-mmr2luuueu_zfjjoabjtqxow6tkgdr...@mail.gmail.com%3e

[GitHub] [flink] rkhachatryan opened a new pull request #13091: [FLINK-18856][checkpointing] Don't ignore minPauseBetweenCheckpoints

rkhachatryan opened a new pull request #13091: URL: https://github.com/apache/flink/pull/13091

## What is the purpose of the change

`CheckpointRequestDecider` uses `lastCheckpointCompletionTime` and `pendingRequests.size()` to make a decision. While the latter is currently checked under a lock, the former is not. So the decider can see "no pending checkpoints" (a fresh value of `pendingRequests.size()`) together with "last checkpoint completed a long time ago" (a stale value of `lastCheckpointCompletionTime`). It therefore decides to execute the request, effectively ignoring the `minPauseBetweenCheckpoints` setting. This increases checkpoint frequency and, in some cases, decreases throughput (see the ML thread).

This PR replaces the function argument for `lastCheckpointCompletionTime` with a supplier, which is called under the lock (similar to `pendingRequests.size()`).

## Verifying this change

Added unit test: `CheckpointCoordinatorTest.testMinCheckpointPause`.

## Does this pull request potentially affect one of the following parts:

- Dependencies (does it add or upgrade a dependency): no
- The public API, i.e., is any changed class annotated with `@Public(Evolving)`: no
- The serializers: no
- The runtime per-record code paths (performance sensitive): no
- Anything that affects deployment or recovery: JobManager (and its components), Checkpointing, Kubernetes/Yarn/Mesos, ZooKeeper: yes, Checkpointing
- The S3 file system connector: no

## Documentation

- Does this pull request introduce a new feature? no
- If yes, how is the feature documented? not applicable
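To make the race concrete, here is a minimal, self-contained Java sketch of the idea behind the fix. It is not Flink's `CheckpointRequestDecider`: the class `MinPauseDecider`, its constructor and `canTriggerNow` are hypothetical. It illustrates that both inputs to the decision, the pending-request count and the last completion time, are read inside the same `synchronized` block, with the completion time passed as a `LongSupplier` that is invoked only while holding the lock rather than as a value the caller captured beforehand.

```java
import java.util.function.IntSupplier;
import java.util.function.LongSupplier;

/** Hypothetical sketch: decide whether a checkpoint request may be executed now. */
public class MinPauseDecider {
    private final Object lock;
    private final long minPauseMillis;
    private final IntSupplier pendingCount;
    private final LongSupplier lastCompletionMillis;

    public MinPauseDecider(Object lock, long minPauseMillis,
                           IntSupplier pendingCount, LongSupplier lastCompletionMillis) {
        this.lock = lock;
        this.minPauseMillis = minPauseMillis;
        this.pendingCount = pendingCount;
        this.lastCompletionMillis = lastCompletionMillis;
    }

    public boolean canTriggerNow(long nowMillis) {
        synchronized (lock) {
            // Both values are read while holding the lock, so they form one consistent snapshot.
            if (pendingCount.getAsInt() > 0) {
                return false;
            }
            // The completion time is fetched here, not passed in as a possibly stale argument.
            return nowMillis - lastCompletionMillis.getAsLong() >= minPauseMillis;
        }
    }

    public static void main(String[] args) {
        final Object lock = new Object();
        final int[] pending = {0};
        final long[] lastCompletion = {1_000L};
        MinPauseDecider decider =
                new MinPauseDecider(lock, 500L, () -> pending[0], () -> lastCompletion[0]);

        System.out.println(decider.canTriggerNow(1_200L)); // false: only 200 ms since the last completion
        System.out.println(decider.canTriggerNow(1_600L)); // true: 600 ms >= 500 ms

        synchronized (lock) {
            // A checkpoint completes; the writer updates the timestamp under the same lock.
            lastCompletion[0] = 1_600L;
        }
        System.out.println(decider.canTriggerNow(1_700L)); // false: the minimum pause applies again
    }
}
```

The design choice is the one the PR description names: by deferring the read to a supplier, the stale-value window between "caller reads the timestamp" and "decider takes the lock" disappears, because the writer and the decider synchronize on the same monitor.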

[GitHub] [flink] flinkbot edited a comment on pull request #13090: [FLINK-18822] [umbrella] Improve and complete Change Data Capture formats

flinkbot edited a comment on pull request #13090: URL: https://github.com/apache/flink/pull/13090#issuecomment-670818966 ## CI report: * 3faa1b539faff3a59454cf9710ea69f4dd395e05 Azure: [PENDING](https://dev.azure.com/apache-flink/98463496-1af2-4620-8eab-a2ecc1a2e6fe/_build/results?buildId=5308) Azure: [FAILURE](https://dev.azure.com/apache-flink/98463496-1af2-4620-8eab-a2ecc1a2e6fe/_build/results?buildId=5305)

[GitHub] [flink] lonelyGhostisdog commented on pull request #13090: [FLINK-18822] [umbrella] Improve and complete Change Data Capture formats

lonelyGhostisdog commented on pull request #13090: URL: https://github.com/apache/flink/pull/13090#issuecomment-670932013 @flinkbot run azure

[GitHub] [flink] flinkbot edited a comment on pull request #13090: [FLINK-18822] [umbrella] Improve and complete Change Data Capture formats

flinkbot edited a comment on pull request #13090: URL: https://github.com/apache/flink/pull/13090#issuecomment-670818966 ## CI report: * 3faa1b539faff3a59454cf9710ea69f4dd395e05 Azure: [FAILURE](https://dev.azure.com/apache-flink/98463496-1af2-4620-8eab-a2ecc1a2e6fe/_build/results?buildId=5305) Azure: [PENDING](https://dev.azure.com/apache-flink/98463496-1af2-4620-8eab-a2ecc1a2e6fe/_build/results?buildId=5308)

[jira] [Updated] (FLINK-18858) Kinesis Flink SQL Connector

[

https://issues.apache.org/jira/browse/FLINK-18858?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel

]

Waldemar Hummer updated FLINK-18858:

Description:

Hi all,

as far as I can see in the [list of

connectors|https://github.com/apache/flink/tree/master/flink-connectors], we

have a

{{[flink-connector-kinesis|https://github.com/apache/flink/tree/master/flink-connectors/flink-connector-kinesis]}}

for *programmatic access* to Kinesis streams, but there does not yet seem to

exist a *Kinesis SQL connector* (something like

{{flink-sql-connector-kinesis}}, analogous to {{flink-sql-connector-kafka}}).

Our use case would be to enable SQL queries with direct access to Kinesis

sources (and potentially sinks), to enable something like the following Flink

SQL queries:

{code:java}

$ bin/sql-client.sh embedded

...

Flink SQL> CREATE TABLE Orders(`user` string, amount int, rowtime TIME) WITH

('connector' = 'kinesis', ...);

...

Flink SQL> SELECT * FROM Orders ...;

...{code}

I was wondering if this is something that has been considered, or is already

actively being worked on? If one of you can provide some guidance, we may be

able to work on a PoC implementation to add this functionality.

(Wasn't able to find an existing issue in the backlog - if this is a duplicate,

then please let me know as well.)

Thanks!

was:

Hi all,

as far as I can see in the [list of

connectors|https://github.com/apache/flink/tree/master/flink-connectors], we

have a

{{[flink-connector-kinesis|https://github.com/apache/flink/tree/master/flink-connectors/flink-connector-kinesis]}}

for *programmatic access* to Kinesis streams, but there does not yet seem to

exist a *Kinesis SQL connector* (something like

{{flink-sql-connector-kinesis}}, analogous to {{flink-sql-connector-kafka}}).

Our use case would be to enable SQL queries with direct access to Kinesis

sources (and potentially sinks), to enable something like the following Flink

SQL queries:

{code:java}

$ bin/sql-client.sh embedded

...

Flink SQL> CREATE TABLE Orders(`user` string, amount int, rowtime TIME) WITH

('connector' = 'kinesis', ...);

...

Flink SQL> SELECT * FROM Orders ...;

...{code}

I was wondering if this is something that has been considered, or is already

actively being worked on? If one of you can provide some guidance, we may be

able to work on a PoC implementation to add this functionality.

Thanks!

> Kinesis Flink SQL Connector

> ---

>

> Key: FLINK-18858

> URL: https://issues.apache.org/jira/browse/FLINK-18858

> Project: Flink

> Issue Type: Improvement

>Reporter: Waldemar Hummer

>Priority: Major

>

> Hi all,

> as far as I can see in the [list of

> connectors|https://github.com/apache/flink/tree/master/flink-connectors], we

> have a

> {{[flink-connector-kinesis|https://github.com/apache/flink/tree/master/flink-connectors/flink-connector-kinesis]}}

> for *programmatic access* to Kinesis streams, but there does not yet seem to

> exist a *Kinesis SQL connector* (something like

> {{flink-sql-connector-kinesis}}, analogous to {{flink-sql-connector-kafka}}).

> Our use case would be to enable SQL queries with direct access to Kinesis

> sources (and potentially sinks), to enable something like the following Flink

> SQL queries:

> {code:java}

> $ bin/sql-client.sh embedded

> ...

> Flink SQL> CREATE TABLE Orders(`user` string, amount int, rowtime TIME) WITH

> ('connector' = 'kinesis', ...);

> ...

> Flink SQL> SELECT * FROM Orders ...;

> ...{code}

>

> I was wondering if this is something that has been considered, or is already

> actively being worked on? If one of you can provide some guidance, we may be

> able to work on a PoC implementation to add this functionality.

>

> (Wasn't able to find an existing issue in the backlog - if this is a

> duplicate, then please let me know as well.)

> Thanks!


[jira] [Updated] (FLINK-18858) Kinesis Flink SQL Connector

[

https://issues.apache.org/jira/browse/FLINK-18858?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel

]

Waldemar Hummer updated FLINK-18858:

Summary: Kinesis Flink SQL Connector (was: Kinesis SQL Connector)

> Kinesis Flink SQL Connector

> ---

>

> Key: FLINK-18858

> URL: https://issues.apache.org/jira/browse/FLINK-18858

> Project: Flink

> Issue Type: Improvement

>Reporter: Waldemar Hummer

>Priority: Major

>

> Hi all,

> as far as I can see in the [list of

> connectors|https://github.com/apache/flink/tree/master/flink-connectors], we

> have a

> {{[flink-connector-kinesis|https://github.com/apache/flink/tree/master/flink-connectors/flink-connector-kinesis]}}

> for *programmatic access* to Kinesis streams, but there does not yet seem to

> exist a *Kinesis SQL connector* (something like

> {{flink-sql-connector-kinesis}}, analogous to {{flink-sql-connector-kafka}}).

> Our use case would be to enable SQL queries with direct access to Kinesis

> sources (and potentially sinks), to enable something like the following Flink

> SQL queries:

> {code:java}

> $ bin/sql-client.sh embedded

> ...

> Flink SQL> CREATE TABLE Orders(`user` string, amount int, rowtime TIME) WITH

> ('connector' = 'kinesis', ...);

> ...

> Flink SQL> SELECT * FROM Orders ...;

> ...{code}

>

>

> I was wondering if this is something that has been considered, or is already

> actively being worked on? If one of you can provide some guidance, we may be

> able to work on a PoC implementation to add this functionality.

> Thanks!


[jira] [Created] (FLINK-18858) Kinesis SQL Connector

Waldemar Hummer created FLINK-18858:

---

Summary: Kinesis SQL Connector

Key: FLINK-18858

URL: https://issues.apache.org/jira/browse/FLINK-18858

Project: Flink

Issue Type: Improvement

Reporter: Waldemar Hummer

Hi all,

as far as I can see in the [list of

connectors|https://github.com/apache/flink/tree/master/flink-connectors], we

have a

{{[flink-connector-kinesis|https://github.com/apache/flink/tree/master/flink-connectors/flink-connector-kinesis]}}

for *programmatic access* to Kinesis streams, but there does not yet seem to

exist a *Kinesis SQL connector* (something like

{{flink-sql-connector-kinesis}}, analogous to {{flink-sql-connector-kafka}}).

Our use case would be to enable SQL queries with direct access to Kinesis

sources (and potentially sinks), to enable something like the following Flink

SQL queries:

{code:java}

$ bin/sql-client.sh embedded

...

Flink SQL> CREATE TABLE Orders(`user` string, amount int, rowtime TIME) WITH

('connector' = 'kinesis', ...);

...

Flink SQL> SELECT * FROM Orders ...;

...{code}

I was wondering if this is something that has been considered, or is already

actively being worked on? If one of you can provide some guidance, we may be

able to work on a PoC implementation to add this functionality.

Thanks!


[GitHub] [flink] flinkbot edited a comment on pull request #13071: [FLINK-18751][Coordination] Implement SlotSharingExecutionSlotAllocator

flinkbot edited a comment on pull request #13071: URL: https://github.com/apache/flink/pull/13071#issuecomment-669310861 ## CI report: * 2233727954b8a6272c4a772a03bccc08b5e97720 UNKNOWN * 07fd40233580c1641fda58742e015144d767c2fe UNKNOWN * 0f6489c64935c086c4cc980bc7f5cf858f8544c4 Azure: [SUCCESS](https://dev.azure.com/apache-flink/98463496-1af2-4620-8eab-a2ecc1a2e6fe/_build/results?buildId=5304)

[GitHub] [flink] flinkbot edited a comment on pull request #13090: [FLINK-18822] [umbrella] Improve and complete Change Data Capture formats

flinkbot edited a comment on pull request #13090: URL: https://github.com/apache/flink/pull/13090#issuecomment-670818966 ## CI report: * 3faa1b539faff3a59454cf9710ea69f4dd395e05 Azure: [FAILURE](https://dev.azure.com/apache-flink/98463496-1af2-4620-8eab-a2ecc1a2e6fe/_build/results?buildId=5305)

[GitHub] [flink] flinkbot edited a comment on pull request #13090: [FLINK-18822] [umbrella] Improve and complete Change Data Capture formats

flinkbot edited a comment on pull request #13090: URL: https://github.com/apache/flink/pull/13090#issuecomment-670818966 ## CI report: * 14d53ae063d5875b5b4b104b4b6005b2f755446e Azure: [PENDING](https://dev.azure.com/apache-flink/98463496-1af2-4620-8eab-a2ecc1a2e6fe/_build/results?buildId=5302) * 3faa1b539faff3a59454cf9710ea69f4dd395e05 Azure: [FAILURE](https://dev.azure.com/apache-flink/98463496-1af2-4620-8eab-a2ecc1a2e6fe/_build/results?buildId=5305)

[GitHub] [flink] flinkbot edited a comment on pull request #13090: [FLINK-18822] [umbrella] Improve and complete Change Data Capture formats

flinkbot edited a comment on pull request #13090: URL: https://github.com/apache/flink/pull/13090#issuecomment-670818966 ## CI report: * abe0926d30fc18a2b0ba2834ba7ff74c4e669743 Azure: [FAILURE](https://dev.azure.com/apache-flink/98463496-1af2-4620-8eab-a2ecc1a2e6fe/_build/results?buildId=5301) * 14d53ae063d5875b5b4b104b4b6005b2f755446e Azure: [PENDING](https://dev.azure.com/apache-flink/98463496-1af2-4620-8eab-a2ecc1a2e6fe/_build/results?buildId=5302) * 3faa1b539faff3a59454cf9710ea69f4dd395e05 Azure: [PENDING](https://dev.azure.com/apache-flink/98463496-1af2-4620-8eab-a2ecc1a2e6fe/_build/results?buildId=5305)

[jira] [Closed] (FLINK-17503) Make memory configuration logging more user-friendly

[

https://issues.apache.org/jira/browse/FLINK-17503?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel

]

Till Rohrmann closed FLINK-17503.

-

Resolution: Fixed

Fixed via

master: b856047f554856c1d910f34db01343076aa2f7d4

1.11.2: fab025d84a3b0ba24d89888e977dd05066e6dbcb

1.10.2: 5696cf280707e358f0ad21dee1b9028177ed9e5d

> Make memory configuration logging more user-friendly

>

>

> Key: FLINK-17503

> URL: https://issues.apache.org/jira/browse/FLINK-17503

> Project: Flink

> Issue Type: Improvement

> Components: Runtime / Coordination

>Affects Versions: 1.10.0

>Reporter: Till Rohrmann

>Assignee: Matthias

>Priority: Major

> Labels: pull-request-available, usability

> Fix For: 1.10.2, 1.12.0, 1.11.2

>

>

> The newly introduced memory configuration logs some output when using the

> Mini Cluster (or local environment):

> {code}

> 2020-05-04 11:50:05,984 INFO org.apache.flink.runtime.taskexecutor.TaskExecutorResourceUtils [] - The configuration option Key: 'taskmanager.cpu.cores' , default: null (fallback keys: []) required for local execution is not set, setting it to its default value 1.7976931348623157E308
> 2020-05-04 11:50:05,989 INFO org.apache.flink.runtime.taskexecutor.TaskExecutorResourceUtils [] - The configuration option Key: 'taskmanager.memory.task.heap.size' , default: null (fallback keys: []) required for local execution is not set, setting it to its default value 9223372036854775807 bytes
> 2020-05-04 11:50:05,989 INFO org.apache.flink.runtime.taskexecutor.TaskExecutorResourceUtils [] - The configuration option Key: 'taskmanager.memory.task.off-heap.size' , default: 0 bytes (fallback keys: []) required for local execution is not set, setting it to its default value 9223372036854775807 bytes
> 2020-05-04 11:50:05,990 INFO org.apache.flink.runtime.taskexecutor.TaskExecutorResourceUtils [] - The configuration option Key: 'taskmanager.memory.network.min' , default: 64 mb (fallback keys: [{key=taskmanager.network.memory.min, isDeprecated=true}]) required for local execution is not set, setting it to its default value 64 mb
> 2020-05-04 11:50:05,990 INFO org.apache.flink.runtime.taskexecutor.TaskExecutorResourceUtils [] - The configuration option Key: 'taskmanager.memory.network.max' , default: 1 gb (fallback keys: [{key=taskmanager.network.memory.max, isDeprecated=true}]) required for local execution is not set, setting it to its default value 64 mb
> 2020-05-04 11:50:05,991 INFO org.apache.flink.runtime.taskexecutor.TaskExecutorResourceUtils [] - The configuration option Key: 'taskmanager.memory.managed.size' , default: null (fallback keys: [{key=taskmanager.memory.size, isDeprecated=true}]) required for local execution is not set, setting it to its default value 128 mb

> {code}

> This logging output could be made more user-friendly in the following way:

> * Print only the key string of a {{ConfigOption}}, not the config option object with all the deprecated keys.

> * Skip the lines for {{taskmanager.memory.task.heap.size}} and {{taskmanager.memory.task.off-heap.size}}: we don't really set them (they are JVM parameters), and printing long max looks strange (users would have to know these are placeholders without effect).

> * Maybe similarly skip the CPU cores value; this looks the strangest (double max).
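A minimal sketch of the first and last suggestions, under stated assumptions: `AppliedDefault` below is a hypothetical stand-in for Flink's `ConfigOption` (it is not Flink's API), values are simplified to plain numbers and strings rather than memory-size objects, and the message wording is illustrative. The odd numbers in the log above are `Double.MAX_VALUE` (1.7976931348623157E308) and `Long.MAX_VALUE` (9223372036854775807), so the sketch treats exactly those two values as placeholders to skip and prints only the key string for everything else.

```java
import java.util.ArrayList;
import java.util.List;

public class LocalExecutionDefaultsLogger {

    /** Hypothetical stand-in for a config option: just a key and the value being applied. */
    static final class AppliedDefault {
        final String key;
        final Object value;

        AppliedDefault(String key, Object value) {
            this.key = key;
            this.value = value;
        }
    }

    /** Long.MAX_VALUE / Double.MAX_VALUE are placeholders that carry no information for users. */
    static boolean isPlaceholder(Object value) {
        return Long.valueOf(Long.MAX_VALUE).equals(value)
                || Double.valueOf(Double.MAX_VALUE).equals(value);
    }

    /** Builds one line per meaningful default, printing only the key string. */
    static List<String> formatMessages(List<AppliedDefault> defaults) {
        List<String> lines = new ArrayList<>();
        for (AppliedDefault d : defaults) {
            if (isPlaceholder(d.value)) {
                continue; // e.g. task heap/off-heap size and CPU cores in local execution
            }
            lines.add("The configuration option " + d.key
                    + " required for local execution is not set, setting it to its default value "
                    + d.value + ".");
        }
        return lines;
    }

    public static void main(String[] args) {
        List<AppliedDefault> defaults = List.of(
                new AppliedDefault("taskmanager.cpu.cores", Double.MAX_VALUE),
                new AppliedDefault("taskmanager.memory.task.heap.size", Long.MAX_VALUE),
                new AppliedDefault("taskmanager.memory.network.min", "64 mb"),
                new AppliedDefault("taskmanager.memory.managed.size", "128 mb"));
        // Prints two lines: one for network.min and one for managed.size.
        formatMessages(defaults).forEach(System.out::println);
    }
}
```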


[GitHub] [flink] tillrohrmann closed pull request #13086: [FLINK-17503][runtime] [logs] Refactored log output.

tillrohrmann closed pull request #13086: URL: https://github.com/apache/flink/pull/13086

[GitHub] [flink] flinkbot edited a comment on pull request #13090: [FLINK-18822] [umbrella] Improve and complete Change Data Capture formats

flinkbot edited a comment on pull request #13090: URL: https://github.com/apache/flink/pull/13090#issuecomment-670818966 ## CI report: * abe0926d30fc18a2b0ba2834ba7ff74c4e669743 Azure: [FAILURE](https://dev.azure.com/apache-flink/98463496-1af2-4620-8eab-a2ecc1a2e6fe/_build/results?buildId=5301) * 14d53ae063d5875b5b4b104b4b6005b2f755446e Azure: [PENDING](https://dev.azure.com/apache-flink/98463496-1af2-4620-8eab-a2ecc1a2e6fe/_build/results?buildId=5302) * 3faa1b539faff3a59454cf9710ea69f4dd395e05 UNKNOWN

[jira] [Commented] (FLINK-15719) Exceptions when using scala types directly with the State Process API

[

https://issues.apache.org/jira/browse/FLINK-15719?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel=17173617#comment-17173617

]

Ying Z commented on FLINK-15719:

Hi, [~tzulitai], I'd like to help update the doc [1] to make it less error-prone; is that OK? Here is my test code for keyed state in Scala:

# stateful process function to generate state

# inputs: 1 2 3 4 5 6

{code:java}

import org.apache.flink.api.common.state.{ListState, ListStateDescriptor, ValueState, ValueStateDescriptor}
import org.apache.flink.api.common.typeinfo.Types
import org.apache.flink.configuration.Configuration
import org.apache.flink.runtime.state.filesystem.FsStateBackend
import org.apache.flink.streaming.api.environment.CheckpointConfig.ExternalizedCheckpointCleanup
import org.apache.flink.streaming.api.functions.KeyedProcessFunction
import org.apache.flink.streaming.api.scala._
import org.apache.flink.util.Collector

class StatefulFunctionWithTime extends KeyedProcessFunction[Int, Int, Void] {

  // Per-key value state: the last input value plus one.
  var state: ValueState[Int] = _
  // Per-key list state: the timestamp of every update.
  var updateTimes: ListState[Long] = _

  @throws[Exception]
  override def open(parameters: Configuration): Unit = {
    val stateDescriptor = new ValueStateDescriptor("state", createTypeInformation[Int])
    //val stateDescriptor = new ValueStateDescriptor("state", Types.INT)
    state = getRuntimeContext().getState(stateDescriptor)

    val updateDescriptor = new ListStateDescriptor("times", createTypeInformation[Long])
    //val updateDescriptor = new ListStateDescriptor("times", Types.LONG)
    updateTimes = getRuntimeContext().getListState(updateDescriptor)
  }

  @throws[Exception]
  override def processElement(value: Int, ctx: KeyedProcessFunction[Int, Int, Void]#Context, out: Collector[Void]): Unit = {
    state.update(value + 1)
    updateTimes.add(System.currentTimeMillis)
  }
}

object KeyedStateSample extends App {
  val env = StreamExecutionEnvironment.getExecutionEnvironment
  val fsStateBackend = new FsStateBackend("file:///tmp/chk_dir")
  env.setStateBackend(fsStateBackend)
  env.enableCheckpointing(6)
  // Keep the checkpoint on cancellation so the state can be read afterwards.
  env.getCheckpointConfig.enableExternalizedCheckpoints(ExternalizedCheckpointCleanup.RETAIN_ON_CANCELLATION)

  env.socketTextStream("127.0.0.1", 8010)
    .map(_.toInt)
    .keyBy(i => i)
    .process(new StatefulFunctionWithTime)
    .uid("my-uid")

  env.execute()
}

{code}
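The state that is read back follows directly from processElement: the stream is keyed by the element itself, so each input from 1 to 6 gets its own key, the value state holds value + 1, and the list state collects one timestamp per update. The following is a minimal plain-Java model of those per-key semantics, assuming a hypothetical KeyedStateModel class that uses no Flink API at all and a fake clock in place of System.currentTimeMillis. It reproduces the KeyedState(key,value,List(times)) shape:

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.HashMap;
import java.util.List;
import java.util.Map;
import java.util.stream.Collectors;

// Hypothetical plain-Java model of the keyed state written by StatefulFunctionWithTime.
// It only mimics the per-key semantics; no Flink classes are involved.
public class KeyedStateModel {

    // Stand-in for the ValueState[Int] registered as "state", one entry per key.
    private final Map<Integer, Integer> state = new HashMap<>();
    // Stand-in for the ListState[Long] registered as "times", one list per key.
    private final Map<Integer, List<Long>> updateTimes = new HashMap<>();

    // Mirrors processElement after keyBy(i => i): the element is its own key.
    public void processElement(int value, long timestampMillis) {
        int key = value;
        state.put(key, value + 1);
        updateTimes.computeIfAbsent(key, k -> new ArrayList<>()).add(timestampMillis);
    }

    // Renders one key the way the Scala case class KeyedState prints it;
    // Scala's List separates elements with ", ".
    public String describe(int key) {
        List<Long> times = updateTimes.getOrDefault(key, Collections.emptyList());
        String joined = times.stream().map(String::valueOf).collect(Collectors.joining(", "));
        return "KeyedState(" + key + "," + state.get(key) + ",List(" + joined + "))";
    }

    public static void main(String[] args) {
        KeyedStateModel model = new KeyedStateModel();
        // Deterministic fake clock instead of System.currentTimeMillis.
        long fakeClock = 1_000L;
        for (int input = 1; input <= 6; input++) {
            model.processElement(input, fakeClock++);
        }
        for (int key = 1; key <= 6; key++) {
            System.out.println(model.describe(key));
        }
    }
}
```

Running main prints KeyedState(1,2,List(1000)) through KeyedState(6,7,List(1005)) in key order. The real job's read-back output is not sorted by key and carries wall-clock timestamps.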

# Read the state generated by the code above, which outputs:

KeyedState(3,4,List(1596878053283))
KeyedState(5,6,List(1596878055023))
KeyedState(2,3,List(1596878052359))
KeyedState(4,5,List(1596878054098))
KeyedState(6,7,List(1596878056151))
KeyedState(1,2,List(1596878051332))

{code:java}
import org.apache.flink.api.common.state.{ListState, ListStateDescriptor, ValueState, ValueStateDescriptor}
import org.apache.flink.api.java.ExecutionEnvironment
import org.apache.flink.api.scala._
import org.apache.flink.configuration.Configuration
import org.apache.flink.runtime.state.memory.MemoryStateBackend
import org.apache.flink.state.api.Savepoint
import org.apache.flink.state.api.functions.KeyedStateReaderFunction
import org.apache.flink.util.Collector

import scala.collection.JavaConverters._

object TestReadState extends App {
  val bEnv = ExecutionEnvironment.getExecutionEnvironment

  // Load the retained checkpoint written by the job above
  val savepoint = Savepoint.load(bEnv,
    "file:///tmp/chk_dir/f988137ef1df4597bebc596ef7c76626/chk-2", new MemoryStateBackend)

  // "my-uid" must match the uid of the operator that wrote the state
  val keyedState = savepoint.readKeyedState("my-uid", new ReaderFunction)
  keyedState.print()

  case class KeyedState(key: Int, value: Int, times: List[Long])

  // The key type is declared as the boxed java.lang.Integer
  class ReaderFunction extends KeyedStateReaderFunction[java.lang.Integer, KeyedState] {

    var state: ValueState[Int] = _
    var updateTimes: ListState[Long] = _

    @throws[Exception]
    override def open(parameters: Configuration): Unit = {
      // Descriptor names and types mirror those used by StatefulFunctionWithTime
      val stateDescriptor = new ValueStateDescriptor("state", createTypeInformation[Int])
      state = getRuntimeContext().getState(stateDescriptor)

      val updateDescriptor = new ListStateDescriptor("times", createTypeInformation[Long])
      updateTimes = getRuntimeContext().getListState(updateDescriptor)
    }

    override def readKey(key: java.lang.Integer,
                         ctx: KeyedStateReaderFunction.Context,
                         out: Collector[KeyedState]): Unit = {
      // Emit one record per key with its value state and all recorded update times
      val data = KeyedState(
        key,
        state.value(),
        updateTimes.get().asScala.toList)
      out.collect(data)
    }
  }
}
{code}

[1] https://ci.apache.org/projects/flink/flink-docs-master/dev/libs/state_processor_api.html#keyed-state

> Exceptions when using scala types directly with the State Process API

> -

>

> Key: FLINK-15719

> URL: https://issues.apache.org/jira/browse/FLINK-15719

> Project: Flink

> Issue Type: Bug

>

[GitHub] [flink] flinkbot edited a comment on pull request #13027: [FLINK-16245] Decoupling user classloader from context classloader.

flinkbot edited a comment on pull request #13027: URL: https://github.com/apache/flink/pull/13027#issuecomment-666225612 ## CI report: * f85e2aed8e736a6b384063518d72ca0cb44869ad Azure: [FAILURE](https://dev.azure.com/apache-flink/98463496-1af2-4620-8eab-a2ecc1a2e6fe/_build/results?buildId=5303)

[GitHub] [flink] flinkbot edited a comment on pull request #13071: [FLINK-18751][Coordination] Implement SlotSharingExecutionSlotAllocator

flinkbot edited a comment on pull request #13071: URL: https://github.com/apache/flink/pull/13071#issuecomment-669310861 ## CI report: * 2233727954b8a6272c4a772a03bccc08b5e97720 UNKNOWN * 07fd40233580c1641fda58742e015144d767c2fe UNKNOWN * 97ee7a057155f9317a7890d405cb1f2ad363c271 Azure: [FAILURE](https://dev.azure.com/apache-flink/98463496-1af2-4620-8eab-a2ecc1a2e6fe/_build/results?buildId=5290) * 0f6489c64935c086c4cc980bc7f5cf858f8544c4 Azure: [PENDING](https://dev.azure.com/apache-flink/98463496-1af2-4620-8eab-a2ecc1a2e6fe/_build/results?buildId=5304)

[GitHub] [flink] flinkbot edited a comment on pull request #13027: [FLINK-16245] Decoupling user classloader from context classloader.

flinkbot edited a comment on pull request #13027: URL: https://github.com/apache/flink/pull/13027#issuecomment-666225612 ## CI report: * cccf1bd4390323e70e998f819975fa9c29e0e4e5 Azure: [SUCCESS](https://dev.azure.com/apache-flink/98463496-1af2-4620-8eab-a2ecc1a2e6fe/_build/results?buildId=5190) * f85e2aed8e736a6b384063518d72ca0cb44869ad Azure: [PENDING](https://dev.azure.com/apache-flink/98463496-1af2-4620-8eab-a2ecc1a2e6fe/_build/results?buildId=5303)

[GitHub] [flink] flinkbot edited a comment on pull request #13090: [FLINK-18822] [umbrella] Improve and complete Change Data Capture formats

flinkbot edited a comment on pull request #13090: URL: https://github.com/apache/flink/pull/13090#issuecomment-670818966 ## CI report: * abe0926d30fc18a2b0ba2834ba7ff74c4e669743 Azure: [FAILURE](https://dev.azure.com/apache-flink/98463496-1af2-4620-8eab-a2ecc1a2e6fe/_build/results?buildId=5301) * 14d53ae063d5875b5b4b104b4b6005b2f755446e Azure: [PENDING](https://dev.azure.com/apache-flink/98463496-1af2-4620-8eab-a2ecc1a2e6fe/_build/results?buildId=5302)

[GitHub] [flink] flinkbot edited a comment on pull request #13027: [FLINK-16245] Decoupling user classloader from context classloader.

flinkbot edited a comment on pull request #13027: URL: https://github.com/apache/flink/pull/13027#issuecomment-666225612 ## CI report: * cccf1bd4390323e70e998f819975fa9c29e0e4e5 Azure: [SUCCESS](https://dev.azure.com/apache-flink/98463496-1af2-4620-8eab-a2ecc1a2e6fe/_build/results?buildId=5190) * f85e2aed8e736a6b384063518d72ca0cb44869ad UNKNOWN

[jira] [Updated] (FLINK-18857) Invalid lambda deserialization when using ElasticsearchSink#Builder to build ElasticsearchSink

[ https://issues.apache.org/jira/browse/FLINK-18857?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

Shengkai Fang updated FLINK-18857:

--

Description:

Currently, when we use the code below

{code:java}
new ElasticsearchSink.Builder(...).build()
{code}

we get an "Invalid lambda deserialization" error if the user doesn't call setRestClientFactory explicitly. The reason behind this bug was identified in FLINK-18006: it is caused by Maven. However, we only fixed the behaviour in ElasticSearchDynamicSink, so users who build the ES sink themselves will still get the error.
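The error message comes from Java's serializable-lambda mechanism. A lambda assigned to a Serializable interface is written as a java.lang.invoke.SerializedLambda. When it is read back, a synthetic $deserializeLambda$ method that javac generates in the capturing class rebuilds it, and that method throws IllegalArgumentException("Invalid lambda deserialization") when the serialized form matches none of its lambdas. A named class, by contrast, is serialized as an ordinary object. The JDK-only sketch below contrasts the two paths using a hypothetical ClientConfigurer interface, which is a stand-in and not Flink's RestClientFactory. It does not reproduce the Maven-related cause described in FLINK-18006. It only illustrates why supplying a factory explicitly can avoid depending on a default lambda:

```java
import java.io.ByteArrayInputStream;
import java.io.ByteArrayOutputStream;
import java.io.IOException;
import java.io.ObjectInputStream;
import java.io.ObjectOutputStream;
import java.io.Serializable;
import java.lang.invoke.SerializedLambda;
import java.lang.reflect.Method;

public class LambdaSerializationDemo {

    // Hypothetical stand-in for a serializable callback such as a REST client
    // factory. This is NOT Flink's RestClientFactory interface.
    public interface ClientConfigurer extends Serializable {
        String configure(String settings);
    }

    // Named implementation: serialized as an ordinary object, so reading it back
    // only needs this class on the classpath.
    public static final class NoOpConfigurer implements ClientConfigurer {
        private static final long serialVersionUID = 1L;

        @Override
        public String configure(String settings) {
            return settings;
        }
    }

    // Serializable lambda: written as a SerializedLambda and rebuilt on read by the
    // $deserializeLambda$ method javac generates in LambdaSerializationDemo.
    public static ClientConfigurer lambdaConfigurer() {
        return settings -> settings;
    }

    // Java-serializes an object and reads it back, roughly what happens when a
    // user function is shipped to the cluster.
    public static Object roundTrip(Object value) {
        try {
            ByteArrayOutputStream bytes = new ByteArrayOutputStream();
            try (ObjectOutputStream out = new ObjectOutputStream(bytes)) {
                out.writeObject(value);
            }
            try (ObjectInputStream in =
                    new ObjectInputStream(new ByteArrayInputStream(bytes.toByteArray()))) {
                return in.readObject();
            }
        } catch (IOException | ClassNotFoundException e) {
            throw new IllegalStateException("round trip failed", e);
        }
    }

    // Returns the class that must provide $deserializeLambda$ for this lambda.
    public static String capturingClassOf(Object serializableLambda) {
        try {
            Method writeReplace = serializableLambda.getClass().getDeclaredMethod("writeReplace");
            writeReplace.setAccessible(true);
            SerializedLambda form = (SerializedLambda) writeReplace.invoke(serializableLambda);
            return form.getCapturingClass();
        } catch (ReflectiveOperationException e) {
            throw new IllegalStateException("not a serializable lambda", e);
        }
    }

    public static void main(String[] args) {
        System.out.println("lambda captured by: " + capturingClassOf(lambdaConfigurer()));
        ClientConfigurer fromLambda = (ClientConfigurer) roundTrip(lambdaConfigurer());
        ClientConfigurer fromClass = (ClientConfigurer) roundTrip(new NoOpConfigurer());
        System.out.println(fromLambda.configure("ok") + " " + fromClass.configure("ok"));
    }
}
```

Both round trips succeed here because the capturing class is unchanged between writing and reading. The lambda path, however, depends on that class's generated $deserializeLambda$ method, while the named class does not.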

was:

Currently, when we use the code below

{code:java}
new ElasticsearchSink.Builder(...).build()
{code}

users get an "Invalid lambda deserialization" error if they don't call setRestClientFactory explicitly. The reason behind this bug was identified in FLINK-18006: it is caused by Maven. However, we only fixed the behaviour in ElasticSearchDynamicSink, so users who build the ES sink themselves will still get the error.

> Invalid lambda deserialization when using ElasticsearchSink#Builder to build ElasticsearchSink

>

>

> Key: FLINK-18857

> URL: https://issues.apache.org/jira/browse/FLINK-18857

> Project: Flink

> Issue Type: Bug

> Components: Connectors / ElasticSearch, Table SQL / API

>Affects Versions: 1.10.0, 1.11.0

>Reporter: Shengkai Fang

>Priority: Major


--

This message was sent by Atlassian Jira

(v8.3.4#803005)

[GitHub] [flink] flinkbot edited a comment on pull request #13090: [FLINK-18822] [umbrella] Improve and complete Change Data Capture formats

flinkbot edited a comment on pull request #13090: URL: https://github.com/apache/flink/pull/13090#issuecomment-670818966 ## CI report: * abe0926d30fc18a2b0ba2834ba7ff74c4e669743 Azure: [FAILURE](https://dev.azure.com/apache-flink/98463496-1af2-4620-8eab-a2ecc1a2e6fe/_build/results?buildId=5301) * 14d53ae063d5875b5b4b104b4b6005b2f755446e UNKNOWN