[GitHub] [hadoop-ozone] bharatviswa504 commented on a change in pull request #1219: HDDS-3985. Update proto.lock files.

bharatviswa504 commented on a change in pull request #1219: URL: https://github.com/apache/hadoop-ozone/pull/1219#discussion_r456862135 ## File path: hadoop-hdds/interface-client/src/main/proto/proto.lock ## @@ -1935,4 +1935,4 @@ } } ] -} +} Review comment: This is a generated file by proto-backwards-compatibility. This is a related change. This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: ozone-issues-unsubscr...@hadoop.apache.org For additional commands, e-mail: ozone-issues-h...@hadoop.apache.org

[jira] [Assigned] (HDDS-3985) Update proto.lock files

[ https://issues.apache.org/jira/browse/HDDS-3985?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ] Bharat Viswanadham reassigned HDDS-3985: Assignee: Bharat Viswanadham (was: Vivek Ratnavel Subramanian) > Update proto.lock files > --- > > Key: HDDS-3985 > URL: https://issues.apache.org/jira/browse/HDDS-3985 > Project: Hadoop Distributed Data Store > Issue Type: Task > Components: Ozone Datanode, Ozone Manager >Affects Versions: 0.6.0 >Reporter: Vivek Ratnavel Subramanian >Assignee: Bharat Viswanadham >Priority: Major > Labels: pull-request-available > > HDDS-3807 and HDDS-3612 introduced new additions to proto files but failed to > update proto.lock files. -- This message was sent by Atlassian Jira (v8.3.4#803005) - To unsubscribe, e-mail: ozone-issues-unsubscr...@hadoop.apache.org For additional commands, e-mail: ozone-issues-h...@hadoop.apache.org

[jira] [Updated] (HDDS-3985) Update proto.lock files

[ https://issues.apache.org/jira/browse/HDDS-3985?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ] Bharat Viswanadham updated HDDS-3985: - Status: Patch Available (was: Open) > Update proto.lock files > --- > > Key: HDDS-3985 > URL: https://issues.apache.org/jira/browse/HDDS-3985 > Project: Hadoop Distributed Data Store > Issue Type: Task > Components: Ozone Datanode, Ozone Manager >Affects Versions: 0.6.0 >Reporter: Vivek Ratnavel Subramanian >Assignee: Vivek Ratnavel Subramanian >Priority: Major > Labels: pull-request-available > > HDDS-3807 and HDDS-3612 introduced new additions to proto files but failed to > update proto.lock files.

[jira] [Updated] (HDDS-3985) Update proto.lock files

[ https://issues.apache.org/jira/browse/HDDS-3985?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ] Bharat Viswanadham updated HDDS-3985: - Reporter: Bharat Viswanadham (was: Vivek Ratnavel Subramanian) > Update proto.lock files > --- > > Key: HDDS-3985 > URL: https://issues.apache.org/jira/browse/HDDS-3985 > Project: Hadoop Distributed Data Store > Issue Type: Task > Components: Ozone Datanode, Ozone Manager >Affects Versions: 0.6.0 >Reporter: Bharat Viswanadham >Assignee: Bharat Viswanadham >Priority: Major > Labels: pull-request-available > > HDDS-3807 and HDDS-3612 introduced new additions to proto files but failed to > update proto.lock files.

[jira] [Updated] (HDDS-3985) Update proto.lock files

[ https://issues.apache.org/jira/browse/HDDS-3985?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ] Bharat Viswanadham updated HDDS-3985: - Component/s: (was: Ozone CLI) Ozone Manager > Update proto.lock files > --- > > Key: HDDS-3985 > URL: https://issues.apache.org/jira/browse/HDDS-3985 > Project: Hadoop Distributed Data Store > Issue Type: Task > Components: Ozone Datanode, Ozone Manager >Affects Versions: 0.6.0 >Reporter: Vivek Ratnavel Subramanian >Assignee: Vivek Ratnavel Subramanian >Priority: Major > Labels: pull-request-available > > HDDS-3807 and HDDS-3612 introduced new additions to proto files but failed to > update proto.lock files.

[GitHub] [hadoop-ozone] bharatviswa504 opened a new pull request #1219: HDDS-3985. Update proto.lock files.

bharatviswa504 opened a new pull request #1219: URL: https://github.com/apache/hadoop-ozone/pull/1219 ## What changes were proposed in this pull request? update proto.lock files that were missed in HDDS-3807 and HDDS-3612 ## What is the link to the Apache JIRA https://issues.apache.org/jira/browse/HDDS-3985 ## How was this patch tested? No testing needed.

[jira] [Updated] (HDDS-3985) Update proto.lock files

[ https://issues.apache.org/jira/browse/HDDS-3985?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ] Bharat Viswanadham updated HDDS-3985: - Description: HDDS-3807 and HDDS-3612 introduced new additions to proto files but failed to update proto.lock files. (was: HDDS-3807 introduced new additions to proto files but failed to update proto.lock files. ) > Update proto.lock files > --- > > Key: HDDS-3985 > URL: https://issues.apache.org/jira/browse/HDDS-3985 > Project: Hadoop Distributed Data Store > Issue Type: Task > Components: Ozone CLI, Ozone Datanode >Affects Versions: 0.6.0 >Reporter: Vivek Ratnavel Subramanian >Assignee: Vivek Ratnavel Subramanian >Priority: Major > Labels: pull-request-available > > HDDS-3807 and HDDS-3612 introduced new additions to proto files but failed to > update proto.lock files.

[jira] [Created] (HDDS-3985) Update proto.lock files

Bharat Viswanadham created HDDS-3985: Summary: Update proto.lock files Key: HDDS-3985 URL: https://issues.apache.org/jira/browse/HDDS-3985 Project: Hadoop Distributed Data Store Issue Type: Task Components: Ozone CLI, Ozone Datanode Affects Versions: 0.6.0 Reporter: Vivek Ratnavel Subramanian Assignee: Vivek Ratnavel Subramanian HDDS-426 introduced new additions to proto files but failed to update proto.lock files.

[jira] [Updated] (HDDS-3985) Update proto.lock files

[ https://issues.apache.org/jira/browse/HDDS-3985?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ] Bharat Viswanadham updated HDDS-3985: - Description: HDDS-3807 introduced new additions to proto files but failed to update proto.lock files. (was: HDDS-426 introduced new additions to proto files but failed to update proto.lock files. ) > Update proto.lock files > --- > > Key: HDDS-3985 > URL: https://issues.apache.org/jira/browse/HDDS-3985 > Project: Hadoop Distributed Data Store > Issue Type: Task > Components: Ozone CLI, Ozone Datanode >Affects Versions: 0.6.0 >Reporter: Vivek Ratnavel Subramanian >Assignee: Vivek Ratnavel Subramanian >Priority: Major > Labels: pull-request-available > > HDDS-3807 introduced new additions to proto files but failed to update > proto.lock files.

[GitHub] [hadoop-ozone] umamaheswararao edited a comment on pull request #1215: HDDS-3982. Disable moveToTrash in o3fs and ofs temporarily

umamaheswararao edited a comment on pull request #1215:

URL: https://github.com/apache/hadoop-ozone/pull/1215#issuecomment-660398646

@smengcl Thank you for working on it. This is a good idea.

- But could the log message below confuse people?

```
fs.rename(path, trashPath, Rename.TO_TRASH);
LOG.info("Moved: '" + path + "' to trash at: " + trashPath);
```

I don't see a way to avoid it, though. :-(

Perhaps we could add a generic statement to the previous log message, such as "We will not retain any data in trash." That may reduce the confusion of thinking the data was moved when it was actually deleted immediately. :-)

Your logs would then probably look like:

INFO ozone.BasicOzoneFileSystem: Move to trash is disabled for o3fs, deleting instead: o3fs://bucket2.volume1.om/dir3/key5. Files/dirs will not be retained in Trash.

INFO fs.TrashPolicyDefault: Moved: 'o3fs://bucket2.volume1.om/dir3/key5' to trash at: /.Trash/hadoop/Current/dir3/key5

I am not sure whether this is even more confusing. Think about a generic message that conveys that the next message is just fake.

- I think we need to add a test case to make sure no files are moved under the trash folder.
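A minimal, self-contained sketch of the kind of check suggested above, assuming an in-memory set of paths stands in for the file system. `FakeFs`, `moveToTrash` and `deleteInsteadOfTrash` are hypothetical names; a real test would exercise o3fs/ofs against a running cluster instead of this model. The point is the final property: after the delete-instead-of-move path runs, nothing exists under `/.Trash`.

```java
import java.util.Set;
import java.util.TreeSet;

public class TrashCheckSketch {

  /** Hypothetical stand-in for a file system: just a set of absolute paths. */
  static final class FakeFs {
    final Set<String> paths = new TreeSet<>();
  }

  /** Models the default behaviour: rename the path under /.Trash/USER/Current. */
  static void moveToTrash(FakeFs fs, String path, String user) {
    if (fs.paths.remove(path)) {
      fs.paths.add("/.Trash/" + user + "/Current" + path);
    }
  }

  /** Models the temporary o3fs/ofs behaviour under discussion: delete instead of moving. */
  static void deleteInsteadOfTrash(FakeFs fs, String path) {
    fs.paths.remove(path);
  }

  /** The property the test would assert: no entry lives under /.Trash. */
  static boolean trashIsEmpty(FakeFs fs) {
    return fs.paths.stream().noneMatch(p -> p.startsWith("/.Trash/"));
  }

  public static void main(String[] args) {
    FakeFs fs = new FakeFs();
    fs.paths.add("/dir3/key5");
    deleteInsteadOfTrash(fs, "/dir3/key5");
    // Expect: the key is gone and the trash folder stayed empty.
    System.out.println(fs.paths.isEmpty() && trashIsEmpty(fs)); // true
  }
}
```

Keeping `moveToTrash` in the model lets the same assertion fail for the default behaviour, which is a cheap way to confirm the check actually detects files landing in trash.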

One other thought (I am not proposing to do it now, just for discussion):

Currently, on the Hadoop side, the trash policy config is common to all kinds of file systems.

```
Class<? extends TrashPolicy> trashClass = conf.getClass(
    "fs.trash.classname", TrashPolicyDefault.class, TrashPolicy.class);
TrashPolicy trash = ReflectionUtils.newInstance(trashClass, conf);
trash.initialize(conf, fs); // initialize TrashPolicy
return trash;
```

If users want different policies based on their file systems' behavior, that is not possible. We could instead have a config like

> `fs.SCHEME.trash.classname`

and use TrashPolicyDefault.class by default. Users who want to could then configure a policy specific to each file system.

If the config had been defined this way, our life would be easier now: we could have configured our own policy that simply deletes the files.

Anyway, what we are doing is temporary until we have proper cleanup, so this option will not help, as it needs changes on the Hadoop side.
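To illustrate the per-scheme idea, here is a sketch of the lookup order it implies, assuming a plain `Map` stands in for Hadoop's `Configuration`. The key `fs.SCHEME.trash.classname` is only the proposal from this comment, not an existing Hadoop setting, and `example.DeletingTrashPolicy` is a hypothetical class name.

```java
import java.util.HashMap;
import java.util.Map;

public class TrashPolicyKeySketch {

  static final String GLOBAL_KEY = "fs.trash.classname";
  static final String DEFAULT_POLICY = "org.apache.hadoop.fs.TrashPolicyDefault";

  /** Proposed order: fs.SCHEME.trash.classname, then fs.trash.classname, then the default. */
  static String resolvePolicyClass(Map<String, String> conf, String scheme) {
    String perScheme = conf.get("fs." + scheme + ".trash.classname");
    if (perScheme != null) {
      return perScheme;
    }
    return conf.getOrDefault(GLOBAL_KEY, DEFAULT_POLICY);
  }

  public static void main(String[] args) {
    Map<String, String> conf = new HashMap<>();
    // Hypothetical policy class configured only for o3fs.
    conf.put("fs.o3fs.trash.classname", "example.DeletingTrashPolicy");
    System.out.println(resolvePolicyClass(conf, "o3fs")); // example.DeletingTrashPolicy
    System.out.println(resolvePolicyClass(conf, "hdfs")); // org.apache.hadoop.fs.TrashPolicyDefault
  }
}
```

Falling back to `fs.trash.classname` keeps existing deployments unchanged; only schemes that set the more specific key would get a different policy.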


[GitHub] [hadoop-ozone] vivekratnavel commented on pull request #1218: HDDS-3984. Support filter and search the columns in recon UI

vivekratnavel commented on pull request #1218: URL: https://github.com/apache/hadoop-ozone/pull/1218#issuecomment-660537728 @runitao Thanks for working on this! I tested your changes locally and it looks good.

[GitHub] [hadoop-ozone] vivekratnavel commented on a change in pull request #1218: HDDS-3984. Support filter and search the columns in recon UI

vivekratnavel commented on a change in pull request #1218:

URL: https://github.com/apache/hadoop-ozone/pull/1218#discussion_r456824126

##

File path:

hadoop-ozone/recon/src/main/resources/webapps/recon/ozone-recon-web/src/components/columnSearch/columnSearch.tsx

##

@@ -0,0 +1,74 @@

+/*

+ * Licensed to the Apache Software Foundation (ASF) under one

+ * or more contributor license agreements. See the NOTICE file

+ * distributed with this work for additional information

+ * regarding copyright ownership. The ASF licenses this file

+ * to you under the Apache License, Version 2.0 (the

+ * "License"); you may not use this file except in compliance

+ * with the License. You may obtain a copy of the License at

+ *

+ * http://www.apache.org/licenses/LICENSE-2.0

+ *

+ * Unless required by applicable law or agreed to in writing, software

+ * distributed under the License is distributed on an "AS IS" BASIS,

+ * WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+ * See the License for the specific language governing permissions and

+ * limitations under the License.

+ */

+

+import React from 'react';

+import {Input, Button, Icon} from 'antd';

+

+class ColumnSearch extends React.Component {

Review comment:

This can be a React.PureComponent, since no state change is involved.

```suggestion

class ColumnSearch extends React.PureComponent {

```

##

File path:

hadoop-ozone/recon/src/main/resources/webapps/recon/ozone-recon-web/src/components/columnSearch/columnSearch.tsx

##

@@ -0,0 +1,74 @@


+

+import React from 'react';

+import {Input, Button, Icon} from 'antd';

+

+class ColumnSearch extends React.Component {

+ getColumnSearchProps = (dataIndex: string) => ({

+filterDropdown: ({setSelectedKeys, selectedKeys, confirm, clearFilters}) => (

+

+ {

+this.searchInput = node;

+ }}

+ placeholder={`Search ${dataIndex}`}

+ value={selectedKeys[0]}

+ style={{width: 188, marginBottom: 8, display: 'block'}}

+ onChange={e => setSelectedKeys(e.target.value ? [e.target.value] : [])}

+ onPressEnter={() => this.handleSearch(selectedKeys, confirm)}

+/>

+ this.handleSearch(selectedKeys, confirm)}

+>

+ Search

+

+

this.handleReset(clearFilters)}>

+ Reset

+

+

+),

+filterIcon: filtered => (

+

+),

+onFilter: (value, record) =>

Review comment:

Add types to params

##

File path:

hadoop-ozone/recon/src/main/resources/webapps/recon/ozone-recon-web/src/components/columnSearch/columnSearch.tsx

##

@@ -0,0 +1,74 @@

+/*

Review comment:

Please move this file to `utils/columnSearch.tsx`

##

File path:

hadoop-ozone/recon/src/main/resources/webapps/recon/ozone-recon-web/src/components/columnSearch/columnSearch.tsx

##

@@ -0,0 +1,74 @@


+

+import React from 'react';

+import {Input, Button, Icon} from 'antd';

+

+class ColumnSearch extends React.Component {

+ getColumnSearchProps = (dataIndex: string) => ({

+filterDropdown: ({setSelectedKeys, selectedKeys, confirm, clearFilters}) => (

Review comment:

Add types for the parameters:

```suggestion

filterDropdown: ({setSelectedKeys, selectedKeys, confirm, clearFilters}
```

[jira] [Updated] (HDDS-3975) Use Duration for time in RatisClientConfig

[ https://issues.apache.org/jira/browse/HDDS-3975?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]
Attila Doroszlai updated HDDS-3975:
---
Status: Patch Available (was: In Progress)
> Use Duration for time in RatisClientConfig
> --
>
> Key: HDDS-3975
> URL: https://issues.apache.org/jira/browse/HDDS-3975
> Project: Hadoop Distributed Data Store
> Issue Type: Improvement
>Reporter: Attila Doroszlai
>Assignee: Attila Doroszlai
>Priority: Major
> Labels: pull-request-available
>
> Change parameter and return type of time-related config methods in
> {{RatisClientConfig}} to {{Duration}}. This results in more readable
> parameter values and type safety.


[jira] [Updated] (HDDS-3984) Support filter and search the columns in recon UI

[ https://issues.apache.org/jira/browse/HDDS-3984?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ] ASF GitHub Bot updated HDDS-3984: - Labels: pull-request-available (was: ) > Support filter and search the columns in recon UI > - > > Key: HDDS-3984 > URL: https://issues.apache.org/jira/browse/HDDS-3984 > Project: Hadoop Distributed Data Store > Issue Type: Improvement > Components: Ozone Recon >Reporter: HuangTao >Assignee: HuangTao >Priority: Major > Labels: pull-request-available > > Add the functions of filter and search in recon UI. People can filter/search > the columns.

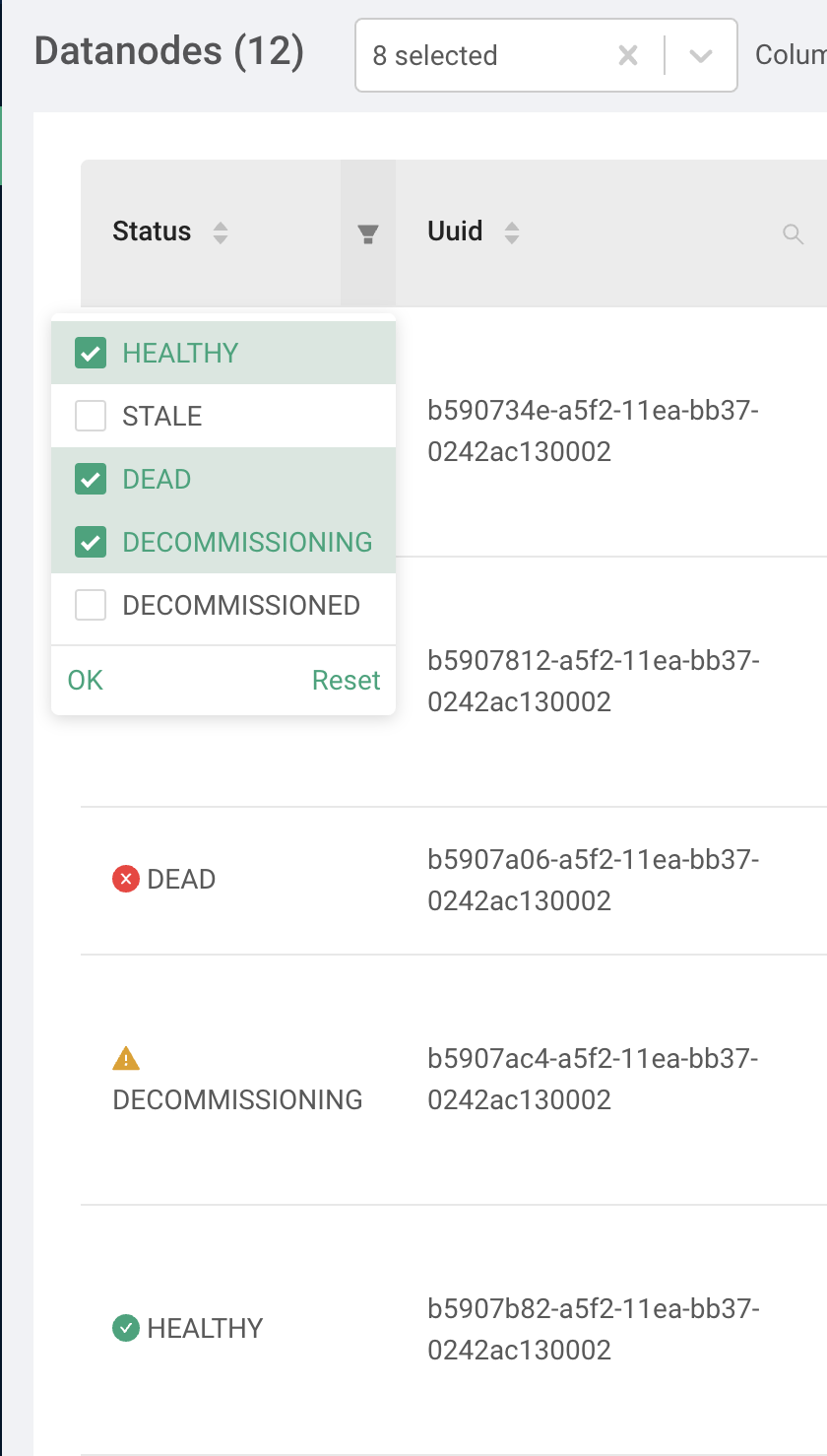

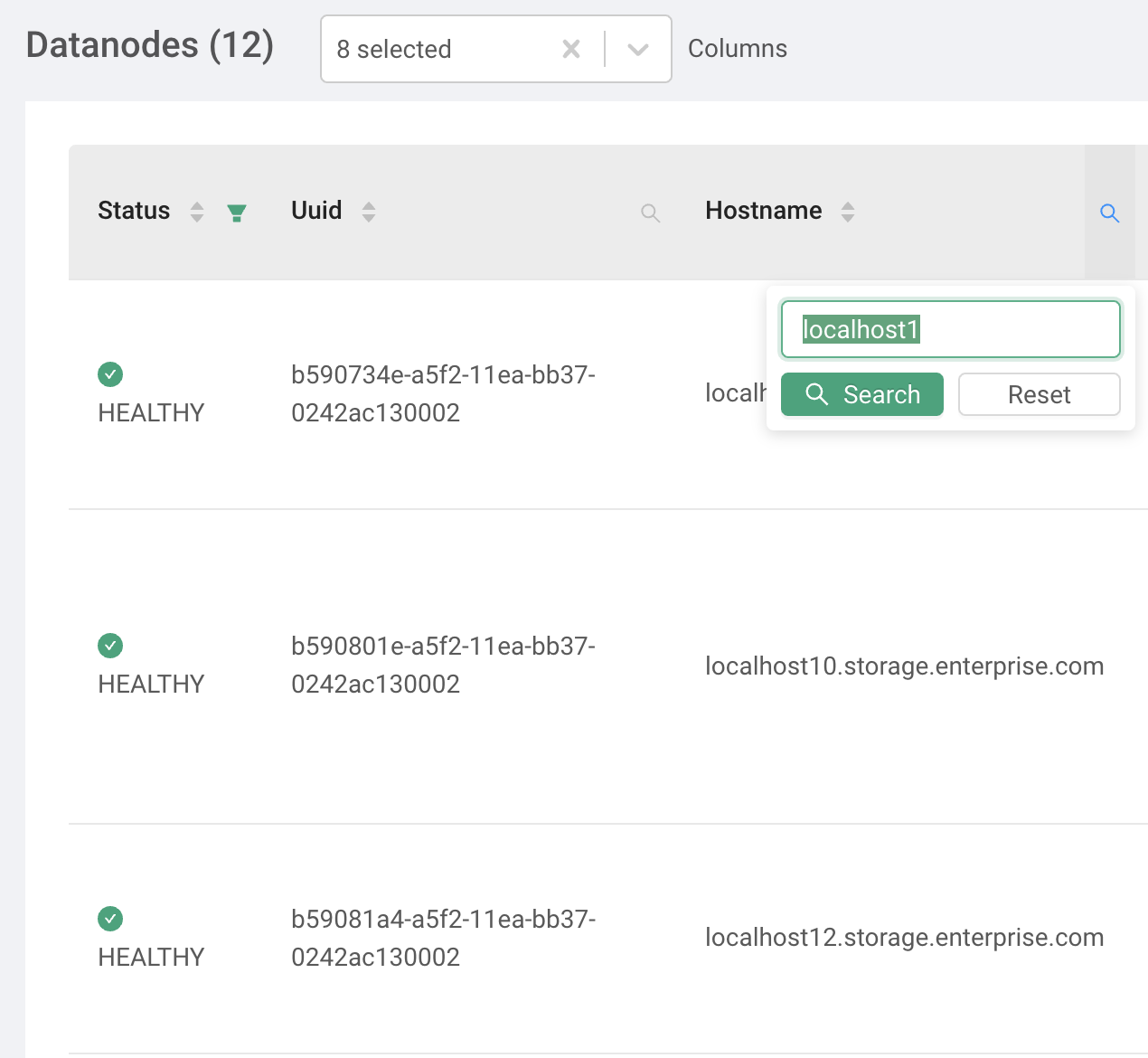

[GitHub] [hadoop-ozone] runitao opened a new pull request #1218: HDDS-3984. Support filter and search the columns in recon UI

runitao opened a new pull request #1218: URL: https://github.com/apache/hadoop-ozone/pull/1218 ## What changes were proposed in this pull request? Add the functions of filter and search in recon UI. People can filter/search the columns. ## What is the link to the Apache JIRA https://issues.apache.org/jira/browse/HDDS-3984 ## How was this patch tested? Linted with `yarn run lint:fix` and tested with `pnpm run dev`. This picture shows how filtering works. And this picture shows how search works.

[jira] [Updated] (HDDS-3955) Unable to list intermediate paths on keys created using S3G.

[ https://issues.apache.org/jira/browse/HDDS-3955?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ] Arpit Agarwal updated HDDS-3955: Resolution: Fixed Status: Resolved (was: Patch Available) +1 merged via Gerrit. Thanks for the contribution [~bharat]. > Unable to list intermediate paths on keys created using S3G. > > > Key: HDDS-3955 > URL: https://issues.apache.org/jira/browse/HDDS-3955 > Project: Hadoop Distributed Data Store > Issue Type: Bug > Components: Ozone Manager >Reporter: Aravindan Vijayan >Assignee: Bharat Viswanadham >Priority: Blocker > Labels: pull-request-available > > Keys created via the S3 Gateway currently use the createKey OM API to create > the ozone key. Hence, when using a hdfs client to list intermediate > directories in the key, OM returns key not found error. This was encountered > while using fluentd to write Hive logs to Ozone via the s3 gateway. > cc [~bharat]
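As the description explains, a key written through `createKey` exists only under its full name, so a parent such as `a/b/` has no entry of its own and a filesystem-style listing of it fails. The sketch below is a hypothetical illustration, not the fix merged in PR #1196. It assumes a directory is just a key prefix ending in `/`, and shows which parent prefixes a key implies and how a listing could treat a prefix as a directory when some stored key lives under it.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;

public class IntermediateDirsSketch {

  /** Parent "directory" prefixes implied by a key: "a/b/c/d" -> [a/, a/b/, a/b/c/]. */
  static List<String> parentPrefixes(String key) {
    List<String> parents = new ArrayList<>();
    // Each '/' before the last character ends a parent prefix; a leading '/' is skipped.
    for (int i = key.indexOf('/'); i >= 0 && i < key.length() - 1; i = key.indexOf('/', i + 1)) {
      if (i > 0) {
        parents.add(key.substring(0, i + 1));
      }
    }
    return parents;
  }

  /** True if some stored key lives strictly under dirPrefix (which should end with '/'). */
  static boolean isImpliedDirectory(List<String> keys, String dirPrefix) {
    return keys.stream().anyMatch(k -> k.startsWith(dirPrefix) && !k.equals(dirPrefix));
  }

  public static void main(String[] args) {
    System.out.println(parentPrefixes("a/b/c/d")); // [a/, a/b/, a/b/c/]
    List<String> keys = Collections.singletonList("a/b/c/d");
    System.out.println(isImpliedDirectory(keys, "a/b/")); // true
  }
}
```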

[GitHub] [hadoop-ozone] arp7 commented on a change in pull request #1196: HDDS-3955. Unable to list intermediate paths on keys created using S3G.

arp7 commented on a change in pull request #1196:

URL: https://github.com/apache/hadoop-ozone/pull/1196#discussion_r456809378

##

File path:

hadoop-ozone/ozone-manager/src/test/java/org/apache/hadoop/ozone/om/request/TestNormalizePaths.java

##

@@ -0,0 +1,109 @@

+/*

+ * Licensed to the Apache Software Foundation (ASF) under one

+ * or more contributor license agreements. See the NOTICE file

+ * distributed with this work for additional information

+ * regarding copyright ownership. The ASF licenses this file

+ * to you under the Apache License, Version 2.0 (the

+ * "License"); you may not use this file except in compliance

+ * with the License. You may obtain a copy of the License at

+ *

+ * http://www.apache.org/licenses/LICENSE-2.0

+ *

+ * Unless required by applicable law or agreed to in writing, software

+ * distributed under the License is distributed on an "AS IS" BASIS,

+ * WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+ * See the License for the specific language governing permissions and

+ * limitations under the License.

+ *

+ */

+

+package org.apache.hadoop.ozone.om.request;

+

+import org.apache.hadoop.ozone.om.exceptions.OMException;

+import org.junit.Assert;

+import org.junit.Rule;

+import org.junit.Test;

+import org.junit.rules.ExpectedException;

+

+import static org.apache.hadoop.ozone.om.request.OMClientRequest.validateAndNormalizeKey;

+import static org.junit.Assert.fail;

+

+/**

+ * Class to test normalize paths.

+ */

+public class TestNormalizePaths {

+

+ @Rule

+ public ExpectedException exceptionRule = ExpectedException.none();

+

+ @Test

+ public void testNormalizePathsEnabled() throws Exception {

+

+Assert.assertEquals("a/b/c/d",

+validateAndNormalizeKey(true, "a/b/c/d"));

+Assert.assertEquals("a/b/c/d",

+validateAndNormalizeKey(true, "/a/b/c/d"));

+Assert.assertEquals("a/b/c/d",

+validateAndNormalizeKey(true, "a/b/c/d"));

+Assert.assertEquals("a/b/c/d",

+validateAndNormalizeKey(true, "a/b/c/d"));

+Assert.assertEquals("a/b/c/./d",

+validateAndNormalizeKey(true, "a/b/c/./d"));

Review comment:

Right, I got confused.
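For readers following the test above, here is a minimal sketch of a normalizer that satisfies only the assertions visible in the quoted hunk: a leading `/` is dropped, and, as the assertions read, a `.` segment is left untouched. Collapsing repeated slashes is an added assumption. `normalizeKey` is a hypothetical name; the real `validateAndNormalizeKey` may behave differently, and the `OMException`/`ExpectedException` imports suggest it also rejects some inputs, which this sketch does not model.

```java
public class NormalizeKeySketch {

  /**
   * Hypothetical normalizer: collapse runs of '/' and drop a leading '/'.
   * Segments such as "." are deliberately left untouched.
   */
  static String normalizeKey(String key) {
    String collapsed = key.replaceAll("/+", "/");
    return collapsed.startsWith("/") ? collapsed.substring(1) : collapsed;
  }

  public static void main(String[] args) {
    System.out.println(normalizeKey("/a/b/c/d"));   // a/b/c/d
    System.out.println(normalizeKey("a//b///c/d")); // a/b/c/d
    System.out.println(normalizeKey("a/b/c/./d"));  // a/b/c/./d
  }
}
```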


[GitHub] [hadoop-ozone] arp7 merged pull request #1196: HDDS-3955. Unable to list intermediate paths on keys created using S3G.

arp7 merged pull request #1196: URL: https://github.com/apache/hadoop-ozone/pull/1196

[jira] [Updated] (HDDS-3975) Use Duration for time in RatisClientConfig

[ https://issues.apache.org/jira/browse/HDDS-3975?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]
ASF GitHub Bot updated HDDS-3975:
-
Labels: pull-request-available (was: )
> Use Duration for time in RatisClientConfig
> --
>
> Key: HDDS-3975
> URL: https://issues.apache.org/jira/browse/HDDS-3975
> Project: Hadoop Distributed Data Store
> Issue Type: Improvement
>Reporter: Attila Doroszlai
>Assignee: Attila Doroszlai
>Priority: Major
> Labels: pull-request-available
>
> Change parameter and return type of time-related config methods in
> {{RatisClientConfig}} to {{Duration}}. This results in more readable
> parameter values and type safety.


[GitHub] [hadoop-ozone] adoroszlai opened a new pull request #1217: HDDS-3975. Use Duration for time in RatisClientConfig

adoroszlai opened a new pull request #1217: URL: https://github.com/apache/hadoop-ozone/pull/1217 ## What changes were proposed in this pull request? Change parameter and return type of time-related config methods in `RatisClientConfig` to `Duration`. The benefit is more readable parameter values and better type safety. https://issues.apache.org/jira/browse/HDDS-3975 ## How was this patch tested? Added unit test. https://github.com/adoroszlai/hadoop-ozone/runs/884817688
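To show why a `Duration` parameter reads better than a bare number, here is a sketch contrasting the two setter styles. `TimeoutConfigSketch` and its methods are hypothetical and are not the actual `RatisClientConfig` API; only `java.time.Duration` is real.

```java
import java.time.Duration;
import java.util.Objects;

/** Hypothetical config holder; not the real RatisClientConfig. */
public class TimeoutConfigSketch {

  private Duration requestTimeout = Duration.ZERO;

  // Before: a bare long hides the unit, so a caller can pass seconds by mistake.
  public void setRequestTimeoutMillis(long millis) {
    this.requestTimeout = Duration.ofMillis(millis);
  }

  // After: the unit travels with the value, so the call site is self-describing.
  public void setRequestTimeout(Duration timeout) {
    this.requestTimeout = Objects.requireNonNull(timeout, "timeout");
  }

  public Duration getRequestTimeout() {
    return requestTimeout;
  }

  public static void main(String[] args) {
    TimeoutConfigSketch conf = new TimeoutConfigSketch();
    conf.setRequestTimeout(Duration.ofMinutes(2));
    System.out.println(conf.getRequestTimeout().toMillis()); // 120000
    conf.setRequestTimeoutMillis(1500);
    System.out.println(conf.getRequestTimeout()); // PT1.5S
  }
}
```

A call like `setRequestTimeout(Duration.ofMinutes(2))` cannot be misread, whereas `setRequestTimeoutMillis(120)` compiles just as happily when the caller meant seconds.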

[jira] [Created] (HDDS-3984) Support filter and search in recon UI

HuangTao created HDDS-3984: -- Summary: Support filter and search in recon UI Key: HDDS-3984 URL: https://issues.apache.org/jira/browse/HDDS-3984 Project: Hadoop Distributed Data Store Issue Type: Improvement Components: Ozone Recon Reporter: HuangTao Add the functions of filter and search in recon UI. People can filter/search the columns.

[jira] [Assigned] (HDDS-3984) Support filter and search in recon UI

[ https://issues.apache.org/jira/browse/HDDS-3984?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ] HuangTao reassigned HDDS-3984: -- Assignee: HuangTao > Support filter and search in recon UI > - > > Key: HDDS-3984 > URL: https://issues.apache.org/jira/browse/HDDS-3984 > Project: Hadoop Distributed Data Store > Issue Type: Improvement > Components: Ozone Recon >Reporter: HuangTao >Assignee: HuangTao >Priority: Major > > Add the functions of filter and search in recon UI. People can filter/search > the columns.

[jira] [Updated] (HDDS-3984) Support filter and search the columns in recon UI

[ https://issues.apache.org/jira/browse/HDDS-3984?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ] HuangTao updated HDDS-3984: --- Summary: Support filter and search the columns in recon UI (was: Support filter and search in recon UI) > Support filter and search the columns in recon UI > - > > Key: HDDS-3984 > URL: https://issues.apache.org/jira/browse/HDDS-3984 > Project: Hadoop Distributed Data Store > Issue Type: Improvement > Components: Ozone Recon >Reporter: HuangTao >Assignee: HuangTao >Priority: Major > > Add the functions of filter and search in recon UI. People can filter/search > the columns.

[GitHub] [hadoop-ozone] adoroszlai commented on a change in pull request #1150: HDDS-3903. OzoneRpcClient support batch rename keys.

adoroszlai commented on a change in pull request #1150:

URL: https://github.com/apache/hadoop-ozone/pull/1150#discussion_r456491277

##

File path:

hadoop-ozone/ozone-manager/src/main/java/org/apache/hadoop/ozone/om/request/key/OMKeysRenameRequest.java

##

@@ -0,0 +1,315 @@

+/**

+ * Licensed to the Apache Software Foundation (ASF) under one

+ * or more contributor license agreements. See the NOTICE file

+ * distributed with this work for additional information

+ * regarding copyright ownership. The ASF licenses this file

+ * to you under the Apache License, Version 2.0 (the

+ * "License"); you may not use this file except in compliance

+ * with the License. You may obtain a copy of the License at

+ *

+ * http://www.apache.org/licenses/LICENSE-2.0

+ *

+ * Unless required by applicable law or agreed to in writing, software

+ * distributed under the License is distributed on an "AS IS" BASIS,

+ * WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+ * See the License for the specific language governing permissions and

+ * limitations under the License.

+ */

+

+package org.apache.hadoop.ozone.om.request.key;

+

+import com.google.common.base.Optional;

+import com.google.common.base.Preconditions;

+import org.apache.hadoop.hdds.utils.db.Table;

+import org.apache.hadoop.hdds.utils.db.cache.CacheKey;

+import org.apache.hadoop.hdds.utils.db.cache.CacheValue;

+import org.apache.hadoop.ozone.audit.AuditLogger;

+import org.apache.hadoop.ozone.audit.OMAction;

+import org.apache.hadoop.ozone.om.OMMetadataManager;

+import org.apache.hadoop.ozone.om.OMMetrics;

+import org.apache.hadoop.ozone.om.OmRenameKeyInfo;

+import org.apache.hadoop.ozone.om.OzoneManager;

+import org.apache.hadoop.ozone.om.helpers.OmKeyInfo;

+import org.apache.hadoop.ozone.om.ratis.utils.OzoneManagerDoubleBufferHelper;

+import org.apache.hadoop.ozone.om.request.util.OmResponseUtil;

+import org.apache.hadoop.ozone.om.response.OMClientResponse;

+import org.apache.hadoop.ozone.om.response.key.OMKeysRenameResponse;

+import org.apache.hadoop.ozone.protocol.proto.OzoneManagerProtocolProtos;

+import org.apache.hadoop.ozone.protocol.proto.OzoneManagerProtocolProtos.KeyArgs;
+import org.apache.hadoop.ozone.protocol.proto.OzoneManagerProtocolProtos.OMRequest;
+import org.apache.hadoop.ozone.protocol.proto.OzoneManagerProtocolProtos.OMResponse;
+import org.apache.hadoop.ozone.protocol.proto.OzoneManagerProtocolProtos.RenameKeyArgs;
+import org.apache.hadoop.ozone.protocol.proto.OzoneManagerProtocolProtos.RenameKeyRequest;
+import org.apache.hadoop.ozone.protocol.proto.OzoneManagerProtocolProtos.RenameKeysRequest;
+import org.apache.hadoop.ozone.protocol.proto.OzoneManagerProtocolProtos.RenameKeysResponse;

+import org.apache.hadoop.ozone.security.acl.IAccessAuthorizer;

+import org.apache.hadoop.ozone.security.acl.OzoneObj;

+import org.apache.hadoop.util.Time;

+import org.slf4j.Logger;

+import org.slf4j.LoggerFactory;

+

+import java.io.IOException;

+import java.util.ArrayList;

+import java.util.HashMap;

+import java.util.List;

+import java.util.Map;

+

+import static org.apache.hadoop.ozone.protocol.proto.OzoneManagerProtocolProtos.Status.OK;
+import static org.apache.hadoop.ozone.protocol.proto.OzoneManagerProtocolProtos.Status.PARTIAL_RENAME;
+import static org.apache.hadoop.ozone.OzoneConsts.BUCKET;
+import static org.apache.hadoop.ozone.OzoneConsts.RENAMED_KEYS_MAP;
+import static org.apache.hadoop.ozone.OzoneConsts.UNRENAMED_KEYS_MAP;
+import static org.apache.hadoop.ozone.OzoneConsts.VOLUME;
+import static org.apache.hadoop.ozone.om.lock.OzoneManagerLock.Resource.BUCKET_LOCK;

+
+/**
+ * Handles rename keys request.
+ */
+public class OMKeysRenameRequest extends OMKeyRequest {
+
+  private static final Logger LOG =
+      LoggerFactory.getLogger(OMKeysRenameRequest.class);
+
+  public OMKeysRenameRequest(OMRequest omRequest) {
+    super(omRequest);
+  }
+
+  @Override
+  public OMRequest preExecute(OzoneManager ozoneManager) throws IOException {
+
+    RenameKeysRequest renameKeys = getOmRequest().getRenameKeysRequest();
+    Preconditions.checkNotNull(renameKeys);
+
+    List<RenameKeyRequest> renameKeyList = new ArrayList<>();
+    for (RenameKeyRequest renameKey : renameKeys.getRenameKeyRequestList()) {
+      // Set modification time.
+      KeyArgs.Builder newKeyArgs = renameKey.getKeyArgs().toBuilder()
+          .setModificationTime(Time.now());
+      // Protobuf messages are immutable: build a new RenameKeyRequest so the
+      // updated modification time is not silently discarded.
+      renameKeyList.add(renameKey.toBuilder().setKeyArgs(newKeyArgs).build());
+    }
+    RenameKeysRequest renameKeysRequest = RenameKeysRequest
+        .newBuilder().addAllRenameKeyRequest(renameKeyList).build();
+    return getOmRequest().toBuilder().setRenameKeysRequest(renameKeysRequest)
+        .setUserInfo(getUserInfo()).build();
+  }

+
+  @Override
+  @SuppressWarnings("methodlength")
+  public OMClientResponse validateAndUpdateCache(OzoneManager ozoneManager,
+      long trxnLogIndex, OzoneManagerDoubleBufferHelper omDoubleBufferHelper) {
+
+

[GitHub] [hadoop-ozone] umamaheswararao edited a comment on pull request #1215: HDDS-3982. Disable moveToTrash in o3fs and ofs temporarily

umamaheswararao edited a comment on pull request #1215:

URL: https://github.com/apache/hadoop-ozone/pull/1215#issuecomment-660448738

@smengcl yes, the fs.trash.classname configuration applies to all HCFS implementations.

If we want different implementations for different HCFSs, we may need to introduce a new advanced config, such as 'fs.SCHEME.trash.classname'.

The implementation could look something like this:

```
public static TrashPolicy getInstance(Configuration conf, FileSystem fs) {
  // Cluster-wide policy class; defaults to TrashPolicyDefault.
  Class<? extends TrashPolicy> defaultTrashClass = conf.getClass(
      "fs.trash.classname", TrashPolicyDefault.class, TrashPolicy.class);
  // Per-scheme override (e.g. fs.ofs.trash.classname); falls back to the
  // cluster-wide class when unset.
  Class<? extends TrashPolicy> trashClass = conf.getClass(String.format(
      "fs.%s.trash.classname", fs.getScheme()),
      defaultTrashClass, TrashPolicy.class);
  TrashPolicy trash = ReflectionUtils.newInstance(trashClass, conf);
  trash.initialize(conf, fs); // initialize TrashPolicy
  return trash;
}
```

```
@Test
public void testPluggableFileSystemSpecificTrash() throws IOException {
  Configuration conf = new Configuration();
  // Test plugged TrashPolicy
  conf.setClass("fs.file.trash.classname", TestTrashPolicy.class,
      TrashPolicy.class);
  Trash trash = new Trash(conf);
  assertTrue(trash.getTrashPolicy().getClass().equals(TestTrashPolicy.class));
}
```

So, by default there is no change in behavior. If someone wants to override the behavior for a specific FS, they can provide their TrashPolicy class name in the config. For example, to provide a separate TrashPolicy for ofs, configure:

fs.ofs.trash.classname = OfsTrashPolicyDefault.class.getName();

Otherwise the same TrashPolicyDefault class will be used.
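The resolution order described above (per-scheme key, then the global fs.trash.classname, then TrashPolicyDefault) can be sketched without any Hadoop dependencies. This is a minimal illustration under stated assumptions: a plain Map stands in for Hadoop's Configuration, class names are returned as strings rather than instantiated, and TrashPolicyResolver and com.example.OfsTrashPolicy are hypothetical names, not part of Hadoop or Ozone.

```java
import java.util.HashMap;
import java.util.Map;

/**
 * Hypothetical sketch of the proposed per-scheme trash policy lookup.
 * A Map<String, String> stands in for Hadoop's Configuration (assumption).
 */
public class TrashPolicyResolver {

  // Fallback when neither key is set, mirroring TrashPolicyDefault.
  static final String DEFAULT_POLICY =
      "org.apache.hadoop.fs.TrashPolicyDefault";

  /**
   * Resolves the policy class name for a file system scheme, checking
   * fs.SCHEME.trash.classname, then fs.trash.classname, then the default.
   */
  static String resolve(Map<String, String> conf, String scheme) {
    String global = conf.getOrDefault("fs.trash.classname", DEFAULT_POLICY);
    return conf.getOrDefault(
        String.format("fs.%s.trash.classname", scheme), global);
  }

  public static void main(String[] args) {
    Map<String, String> conf = new HashMap<>();
    // Hypothetical ofs-specific policy; o3fs has no override.
    conf.put("fs.ofs.trash.classname", "com.example.OfsTrashPolicy");

    System.out.println(resolve(conf, "ofs"));  // com.example.OfsTrashPolicy
    System.out.println(resolve(conf, "o3fs")); // org.apache.hadoop.fs.TrashPolicyDefault
  }
}
```

Because the per-scheme key is optional, a cluster that only sets fs.trash.classname (or nothing at all) resolves exactly as before, which is the "no change in behavior by default" property the comment relies on.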

