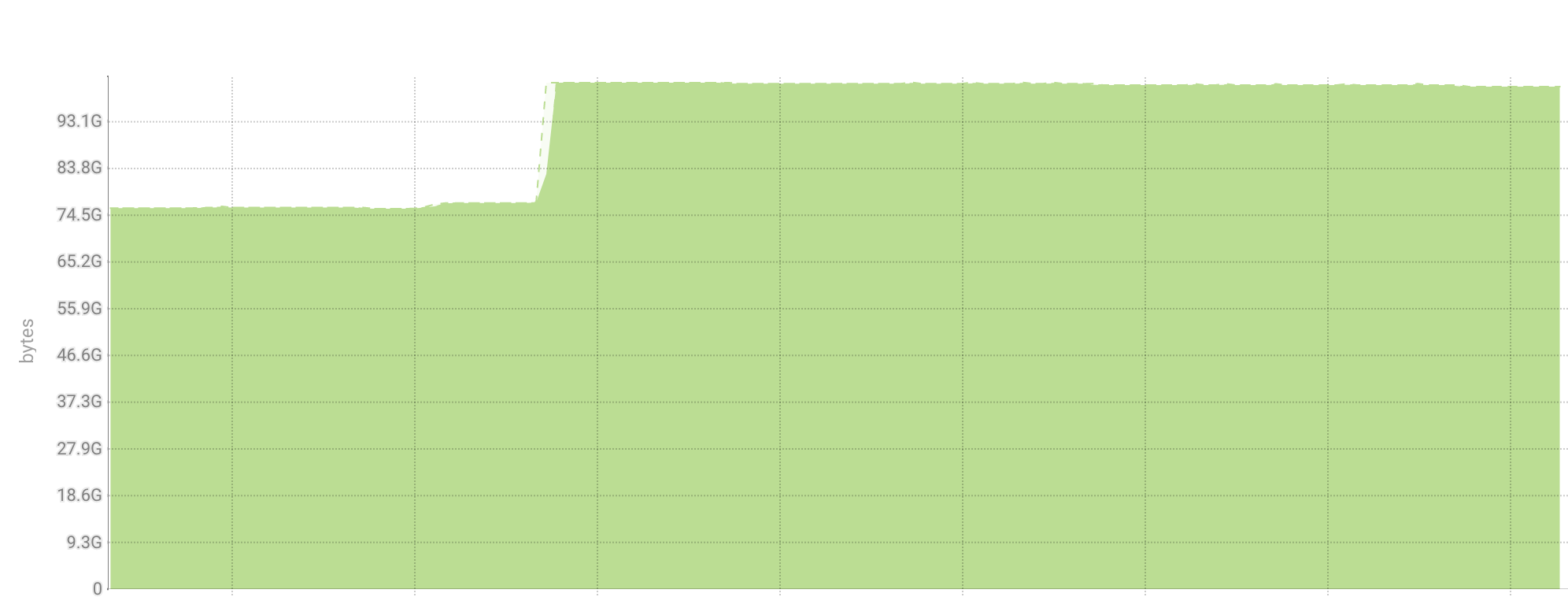

akashdw opened a new issue #5427: [Proposal] Config to allow loading segments into physical memory on load

URL: https://github.com/apache/incubator-druid/issues/5427

Some of the segments (roughly 30%) in the in-memory tier are not loaded into memory as buffer cache, which causes page faults at **query time**. In our setup, the in-memory tier is backed by non-SSD disks, so loading a segment into memory sometimes takes ~100-600 ms.

After debugging, I found there is no explicit `MappedByteBuffer.load()` call here: https://github.com/druid-io/druid/blob/master/java-util/src/main/java/io/druid/java/util/common/io/smoosh/SmooshedFileMapper.java#L132. I believe the decision not to load segments explicitly was made because loading could evict a lot of other data from memory, and it would load the full segment even if only one column is ever actually used. But for an **in-memory tier**, it would be nice to have a configuration, defaulting to `false`, that calls `MappedByteBuffer.load()` to load new segments into memory.

Server details: running an in-memory tier, in-memory server `maxSize`=100G, memory available to map ~120G.
VM options:

```
vm.dirty_ratio = 80
vm.swappiness = 0
vm.dirty_background_ratio = 5
```

Java version:

```
java version "1.8.0_121"
Java(TM) SE Runtime Environment (build 1.8.0_121-b13)
Java HotSpot(TM) 64-Bit Server VM (build 25.121-b13, mixed mode)
```

Running Java historicals with the following JVM args:

```
-Xmx6144M
-XX:MaxDirectMemorySize=133120M
-XX:+HeapDumpOnOutOfMemoryError
-XX:HeapDumpPath=/tmp/CD-DRUID-RxOxjYtF_CD-Da1880e55-DRUID_HISTORICAL-1805d8a436c0f10c0f5e57a824d10507_pid46815.hprof
-XX:OnOutOfMemoryError=/usr/lib64/cmf/service/common/killparent.sh
-server
-Djava.net.preferIPv4Stack=true
-Duser.timezone=UTC
-Dfile.encoding=UTF-8
-Djava.io.tmpdir=/run/cloudera-scm-agent/process/2708-druid-DRUID_HISTORICAL/tmp
-Djava.util.logging.manager=org.apache.logging.log4j.jul.LogManager
-XX:MaxPermSize=256m
-XX:+UseG1GC
-XX:+PrintGCDetails
-XX:+PrintGCDateStamps
-Djute.maxbuffer=0xffffff
```

I wrote a simple Java program to debug this (passing segment files as arguments):

```java
import java.io.File;
import java.io.RandomAccessFile;
import java.nio.MappedByteBuffer;
import java.nio.channels.FileChannel;

public class MemoryMappedFile {
    public static void main(String[] args) throws Exception {
        for (String path : args) {
            File file = new File(path);
            // Open the file channel in read-only mode; try-with-resources closes it.
            // The mapping stays valid after the channel is closed.
            try (RandomAccessFile raf = new RandomAccessFile(file, "r");
                 FileChannel fileChannel = raf.getChannel()) {
                MappedByteBuffer buf = fileChannel.map(FileChannel.MapMode.READ_ONLY, 0, fileChannel.size());
                System.out.println(buf.isLoaded()); // hint: is the content resident in physical memory?
                System.out.println(buf.capacity()); // segment file size in bytes
                buf = buf.load();                   // best effort: read every page into physical memory
                System.out.println(buf.isLoaded()); // residency hint after load()
            }
        }
    }
}
```

Produces:

```
false
262157890
true
true
358945508
true
false
284802939
true
true
311227380
true
```

Output after rerunning the same program:

```
true
262157890
true
true
358945508
true
true
284802939
true
true
311227380
true
```

Buffer/cache graph after running the above on one of the historicals.
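A minimal sketch of what the proposed opt-in could look like, assuming a boolean flag checked right after a segment file is mapped. `SegmentMapper` and its `loadOnMap` constructor argument are hypothetical names standing in for the proposed config (defaulting to `false`); this is not Druid's actual `SmooshedFileMapper` code. Only `FileChannel.map()` and `MappedByteBuffer.load()` are real JDK calls.

```java
import java.io.File;
import java.io.IOException;
import java.io.RandomAccessFile;
import java.nio.MappedByteBuffer;
import java.nio.channels.FileChannel;

// Hypothetical sketch, not Druid's SmooshedFileMapper.
public class SegmentMapper {
    // Hypothetical stand-in for the proposed config; false keeps today's lazy behavior.
    private final boolean loadOnMap;

    public SegmentMapper(boolean loadOnMap) {
        this.loadOnMap = loadOnMap;
    }

    public MappedByteBuffer map(File file) throws IOException {
        try (RandomAccessFile raf = new RandomAccessFile(file, "r");
             FileChannel channel = raf.getChannel()) {
            // A single mapping is limited to Integer.MAX_VALUE bytes.
            MappedByteBuffer buf = channel.map(FileChannel.MapMode.READ_ONLY, 0, channel.size());
            if (loadOnMap) {
                // Best effort: read all pages now instead of faulting them in at query time.
                buf.load();
            }
            // The mapping remains valid after the channel is closed.
            return buf;
        }
    }

    public static void main(String[] args) throws IOException {
        SegmentMapper mapper = new SegmentMapper(true);
        for (String path : args) {
            MappedByteBuffer buf = mapper.map(new File(path));
            System.out.println(path + " capacity=" + buf.capacity() + " isLoaded=" + buf.isLoaded());
        }
    }
}
```

With the flag left at `false`, pages are still faulted in lazily on first access, so tiers where loading whole segments would evict useful data keep the current behavior. `load()` is only a best-effort hint: the kernel can still evict the pages later under memory pressure.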

----------------------------------------------------------------
This is an automated message from the Apache Git Service.