[GitHub] [carbondata] CarbonDataQA1 commented on pull request #3932: [CARBONDATA-3994] Skip Order by for map task if it is a first sort column and use limit pushdown for array_contains filter

CarbonDataQA1 commented on pull request #3932: URL: https://github.com/apache/carbondata/pull/3932#issuecomment-714223959 Build Failed with Spark 2.3.4, Please check CI http://121.244.95.60:12545/job/ApacheCarbonPRBuilder2.3/4601/ This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [carbondata] CarbonDataQA1 commented on pull request #3932: [CARBONDATA-3994] Skip Order by for map task if it is a first sort column and use limit pushdown for array_contains filter

CarbonDataQA1 commented on pull request #3932: URL: https://github.com/apache/carbondata/pull/3932#issuecomment-714224032 Build Failed with Spark 2.4.5, Please check CI http://121.244.95.60:12545/job/ApacheCarbon_PR_Builder_2.4.5/2849/

[GitHub] [carbondata] CarbonDataQA1 commented on pull request #3993: [TEST] run CI4

CarbonDataQA1 commented on pull request #3993: URL: https://github.com/apache/carbondata/pull/3993#issuecomment-714221995 Build Failed with Spark 2.3.4, Please check CI http://121.244.95.60:12545/job/ApacheCarbonPRBuilder2.3/4604/

[GitHub] [carbondata] CarbonDataQA1 commented on pull request #3991: [TEST] run CI2

CarbonDataQA1 commented on pull request #3991: URL: https://github.com/apache/carbondata/pull/3991#issuecomment-714221901 Build Failed with Spark 2.3.4, Please check CI http://121.244.95.60:12545/job/ApacheCarbonPRBuilder2.3/4606/

[GitHub] [carbondata] CarbonDataQA1 commented on pull request #3993: [TEST] run CI4

CarbonDataQA1 commented on pull request #3993: URL: https://github.com/apache/carbondata/pull/3993#issuecomment-714222032 Build Failed with Spark 2.4.5, Please check CI http://121.244.95.60:12545/job/ApacheCarbon_PR_Builder_2.4.5/2854/

[GitHub] [carbondata] CarbonDataQA1 commented on pull request #3992: [TEST] run CI3

CarbonDataQA1 commented on pull request #3992: URL: https://github.com/apache/carbondata/pull/3992#issuecomment-714221908 Build Failed with Spark 2.3.4, Please check CI http://121.244.95.60:12545/job/ApacheCarbonPRBuilder2.3/4605/

[GitHub] [carbondata] CarbonDataQA1 commented on pull request #3990: [TEST] run CI1

CarbonDataQA1 commented on pull request #3990: URL: https://github.com/apache/carbondata/pull/3990#issuecomment-714221663 Build Failed with Spark 2.4.5, Please check CI http://121.244.95.60:12545/job/ApacheCarbon_PR_Builder_2.4.5/2853/

[GitHub] [carbondata] CarbonDataQA1 commented on pull request #3992: [TEST] run CI3

CarbonDataQA1 commented on pull request #3992: URL: https://github.com/apache/carbondata/pull/3992#issuecomment-714221092 Build Failed with Spark 2.4.5, Please check CI http://121.244.95.60:12545/job/ApacheCarbon_PR_Builder_2.4.5/2851/

[GitHub] [carbondata] CarbonDataQA1 commented on pull request #3991: [TEST] run CI2

CarbonDataQA1 commented on pull request #3991: URL: https://github.com/apache/carbondata/pull/3991#issuecomment-714221242 Build Failed with Spark 2.4.5, Please check CI http://121.244.95.60:12545/job/ApacheCarbon_PR_Builder_2.4.5/2852/

[GitHub] [carbondata] CarbonDataQA1 commented on pull request #3990: [TEST] run CI1

CarbonDataQA1 commented on pull request #3990: URL: https://github.com/apache/carbondata/pull/3990#issuecomment-714220219 Build Failed with Spark 2.3.4, Please check CI http://121.244.95.60:12545/job/ApacheCarbonPRBuilder2.3/4603/

[GitHub] [carbondata] ajantha-bhat commented on pull request #3932: [CARBONDATA-3994] Skip Order by for map task if it is a first sort column and use limit pushdown for array_contains filter

ajantha-bhat commented on pull request #3932: URL: https://github.com/apache/carbondata/pull/3932#issuecomment-714214698 retest this please

[GitHub] [carbondata] CarbonDataQA1 commented on pull request #3991: [TEST] run CI2

CarbonDataQA1 commented on pull request #3991: URL: https://github.com/apache/carbondata/pull/3991#issuecomment-714213757

[GitHub] [carbondata] CarbonDataQA1 commented on pull request #3993: [TEST] run CI4

CarbonDataQA1 commented on pull request #3993: URL: https://github.com/apache/carbondata/pull/3993#issuecomment-714213753 Build Failed with Spark 2.4.5, Please check CI http://121.244.95.60:12545/job/ApacheCarbon_PR_Builder_2.4.5/2843/

[GitHub] [carbondata] CarbonDataQA1 commented on pull request #3990: [TEST] run CI1

CarbonDataQA1 commented on pull request #3990: URL: https://github.com/apache/carbondata/pull/3990#issuecomment-714213752 Build Success with Spark 2.3.4, Please check CI http://121.244.95.60:12545/job/ApacheCarbonPRBuilder2.3/4598/

[GitHub] [carbondata] CarbonDataQA1 commented on pull request #3992: [TEST] run CI3

CarbonDataQA1 commented on pull request #3992: URL: https://github.com/apache/carbondata/pull/3992#issuecomment-714213754

[GitHub] [carbondata] CarbonDataQA1 commented on pull request #3986: [CARBONDATA-4034] Improve the time-consuming of Horizontal Compaction for update

CarbonDataQA1 commented on pull request #3986: URL: https://github.com/apache/carbondata/pull/3986#issuecomment-714213749

[GitHub] [carbondata] CarbonDataQA1 commented on pull request #3993: [TEST] run CI4

CarbonDataQA1 commented on pull request #3993: URL: https://github.com/apache/carbondata/pull/3993#issuecomment-714206736 Build Failed with Spark 2.3.4, Please check CI http://121.244.95.60:12545/job/ApacheCarbonPRBuilder2.3/4595/

[GitHub] [carbondata] CarbonDataQA1 commented on pull request #3990: [TEST] run CI1

CarbonDataQA1 commented on pull request #3990: URL: https://github.com/apache/carbondata/pull/3990#issuecomment-714185552 Build Failed with Spark 2.4.5, Please check CI http://121.244.95.60:12545/job/ApacheCarbon_PR_Builder_2.4.5/2846/

[GitHub] [carbondata] CarbonDataQA1 commented on pull request #3875: [CARBONDATA-3934]Support write transactional table with presto.

CarbonDataQA1 commented on pull request #3875: URL: https://github.com/apache/carbondata/pull/3875#issuecomment-713853801 Build Failed with Spark 2.3.4, Please check CI http://121.244.95.60:12545/job/ApacheCarbonPRBuilder2.3/4594/

[GitHub] [carbondata] CarbonDataQA1 commented on pull request #3875: [CARBONDATA-3934]Support write transactional table with presto.

CarbonDataQA1 commented on pull request #3875: URL: https://github.com/apache/carbondata/pull/3875#issuecomment-713820234 Build Failed with Spark 2.4.5, Please check CI http://121.244.95.60:12545/job/ApacheCarbon_PR_Builder_2.4.5/2842/

[GitHub] [carbondata] CarbonDataQA1 commented on pull request #3992: [TEST] run CI3

CarbonDataQA1 commented on pull request #3992: URL: https://github.com/apache/carbondata/pull/3992#issuecomment-713653611

[GitHub] [carbondata] CarbonDataQA1 commented on pull request #3991: [TEST] run CI2

CarbonDataQA1 commented on pull request #3991: URL: https://github.com/apache/carbondata/pull/3991#issuecomment-713653608

[GitHub] [carbondata] CarbonDataQA1 commented on pull request #3993: [TEST] run CI4

CarbonDataQA1 commented on pull request #3993: URL: https://github.com/apache/carbondata/pull/3993#issuecomment-713653621 Build Success with Spark 2.4.5, Please check CI http://121.244.95.60:12545/job/ApacheCarbon_PR_Builder_2.4.5/2839/

[GitHub] [carbondata] CarbonDataQA1 commented on pull request #3993: [TEST] run CI4

CarbonDataQA1 commented on pull request #3993: URL: https://github.com/apache/carbondata/pull/3993#issuecomment-713633142 Build Failed with Spark 2.3.4, Please check CI http://121.244.95.60:12545/job/ApacheCarbonPRBuilder2.3/4589/

[GitHub] [carbondata] CarbonDataQA1 commented on pull request #3990: [TEST] run CI1

CarbonDataQA1 commented on pull request #3990: URL: https://github.com/apache/carbondata/pull/3990#issuecomment-713622528 Build Failed with Spark 2.4.5, Please check CI http://121.244.95.60:12545/job/ApacheCarbon_PR_Builder_2.4.5/2838/

[GitHub] [carbondata] CarbonDataQA1 commented on pull request #3990: [TEST] run CI1

CarbonDataQA1 commented on pull request #3990: URL: https://github.com/apache/carbondata/pull/3990#issuecomment-713616580 Build Success with Spark 2.3.4, Please check CI http://121.244.95.60:12545/job/ApacheCarbonPRBuilder2.3/4588/

[jira] [Updated] (CARBONDATA-3938) In Hive read table, we are unable to read a projection column or read a full scan - select * query. Even the aggregate queries are not working.

[https://issues.apache.org/jira/browse/CARBONDATA-3938?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel]

Prasanna Ravichandran updated CARBONDATA-3938:

--

Description:

In Hive read table, we are unable to read a projection column or run a full scan query. Even the aggregate queries are not working.

Test query:

--spark beeline;

drop table if exists uniqdata;

drop table if exists uniqdata1;

CREATE TABLE uniqdata(CUST_ID int,CUST_NAME String,ACTIVE_EMUI_VERSION string,
DOB timestamp, DOJ timestamp, BIGINT_COLUMN1 bigint,BIGINT_COLUMN2 bigint,
DECIMAL_COLUMN1 decimal(30,10), DECIMAL_COLUMN2 decimal(36,10),
Double_COLUMN1 double, Double_COLUMN2 double,INTEGER_COLUMN1 int) stored as carbondata;

LOAD DATA INPATH 'hdfs://hacluster/user/prasanna/2000_UniqData.csv' into table uniqdata OPTIONS('DELIMITER'=',',
'QUOTECHAR'='"','BAD_RECORDS_ACTION'='FORCE','FILEHEADER'='CUST_ID,CUST_NAME,ACTIVE_EMUI_VERSION,DOB,DOJ,BIGINT_COLUMN1,BIGINT_COLUMN2,DECIMAL_COLUMN1,DECIMAL_COLUMN2,Double_COLUMN1,Double_COLUMN2,INTEGER_COLUMN1');

CREATE TABLE IF NOT EXISTS uniqdata1 (CUST_ID int,CUST_NAME String,ACTIVE_EMUI_VERSION string,
DOB timestamp, DOJ timestamp, BIGINT_COLUMN1 bigint,BIGINT_COLUMN2 bigint,
DECIMAL_COLUMN1 decimal(30,10), DECIMAL_COLUMN2 decimal(36,10),
Double_COLUMN1 double, Double_COLUMN2 double,INTEGER_COLUMN1 int)
ROW FORMAT SERDE 'org.apache.carbondata.hive.CarbonHiveSerDe'
WITH SERDEPROPERTIES ('mapreduce.input.carboninputformat.databaseName'='default','mapreduce.input.carboninputformat.tableName'='uniqdata')
STORED AS INPUTFORMAT 'org.apache.carbondata.hive.MapredCarbonInputFormat'
OUTPUTFORMAT 'org.apache.carbondata.hive.MapredCarbonOutputFormat'
LOCATION 'hdfs://hacluster/user/hive/warehouse/uniqdata';

select count(*) from uniqdata1;

--Hive Beeline;

select count(*) from uniqdata1; --not working, returning 0 rows, even though 2000 rows are there; --Issue 1 on Hive read format table;

select * from uniqdata1; --Returns no rows; --Issue 2-a) full scan on Hive read format table;

select cust_id from uniqdata1 limit 5; --Returns no rows; --Issue 2-b) select query with projection not working, returning no rows;

The logs are attached for your reference.

With the Hive write table, the aggregate & filter queries are not working, but select * full scan queries are working.

All 3 issues (full scan - select *, filter queries and aggregate queries) occur on the Hive read format table.

This issue also exists when a normal carbon table (created through stored as carbondata) is created in Spark and its data is read through a select query from Hive beeline.

was:

In Hive read table, we are unable to read a projection column or full scan query. But the aggregate queries are working fine.

Test query:

--spark beeline;

drop table if exists uniqdata;

drop table if exists uniqdata1;

CREATE TABLE uniqdata(CUST_ID int,CUST_NAME String,ACTIVE_EMUI_VERSION string,
DOB timestamp, DOJ timestamp, BIGINT_COLUMN1 bigint,BIGINT_COLUMN2 bigint,
DECIMAL_COLUMN1 decimal(30,10), DECIMAL_COLUMN2 decimal(36,10),
Double_COLUMN1 double, Double_COLUMN2 double,INTEGER_COLUMN1 int) stored as carbondata;

LOAD DATA INPATH 'hdfs://hacluster/user/prasanna/2000_UniqData.csv' into table uniqdata OPTIONS('DELIMITER'=',',
'QUOTECHAR'='"','BAD_RECORDS_ACTION'='FORCE','FILEHEADER'='CUST_ID,CUST_NAME,ACTIVE_EMUI_VERSION,DOB,DOJ,BIGINT_COLUMN1,BIGINT_COLUMN2,DECIMAL_COLUMN1,DECIMAL_COLUMN2,Double_COLUMN1,Double_COLUMN2,INTEGER_COLUMN1');

CREATE TABLE IF NOT EXISTS uniqdata1 (CUST_ID int,CUST_NAME String,ACTIVE_EMUI_VERSION string,
DOB timestamp, DOJ timestamp, BIGINT_COLUMN1 bigint,BIGINT_COLUMN2 bigint,
DECIMAL_COLUMN1 decimal(30,10), DECIMAL_COLUMN2 decimal(36,10),
Double_COLUMN1 double, Double_COLUMN2 double,INTEGER_COLUMN1 int)
ROW FORMAT SERDE 'org.apache.carbondata.hive.CarbonHiveSerDe'
WITH SERDEPROPERTIES ('mapreduce.input.carboninputformat.databaseName'='default','mapreduce.input.carboninputformat.tableName'='uniqdata')
STORED AS INPUTFORMAT 'org.apache.carbondata.hive.MapredCarbonInputFormat'
OUTPUTFORMAT 'org.apache.carbondata.hive.MapredCarbonOutputFormat'
LOCATION 'hdfs://hacluster/user/hive/warehouse/uniqdata';

select count(*) from uniqdata1;

--Hive Beeline;

select count(*) from uniqdata1; --not working, returning 0 rows, even though 2000 rows are there; --Issue 1 on Hive read format table;

select * from uniqdata1; --Returns no rows; --Issue 2-a) full scan on Hive read format table;

select cust_id from uniqdata1 limit 5; --Returns no rows; --Issue 2-b) select query with projection not working, returning no rows;

The logs are attached for your reference. With the Hive write table this issue is not seen; the issue is only seen in the Hive read format table.

This issue also exists when a normal carbon table is created in Spark and read through Hive beeline.

> In Hive read table, we are unable to read a projection

[GitHub] [carbondata] vikramahuja1001 commented on pull request #3917: [CARBONDATA-3978] Clean Files Refactor and support for trash folder in carbondata

vikramahuja1001 commented on pull request #3917: URL: https://github.com/apache/carbondata/pull/3917#issuecomment-713591443 retest this please

[GitHub] [carbondata] Karan980 commented on pull request #3970: [CARBONDATA-4007] Fix multiple issues in SDK

Karan980 commented on pull request #3970: URL: https://github.com/apache/carbondata/pull/3970#issuecomment-713574419 retest this please

[GitHub] [carbondata] QiangCai opened a new pull request #3993: [TEST] run CI4

QiangCai opened a new pull request #3993: URL: https://github.com/apache/carbondata/pull/3993 ### Why is this PR needed? ### What changes were proposed in this PR? ### Does this PR introduce any user interface change? - No - Yes. (please explain the change and update document) ### Is any new testcase added? - No - Yes

[GitHub] [carbondata] QiangCai opened a new pull request #3992: [TEST] run CI3

QiangCai opened a new pull request #3992: URL: https://github.com/apache/carbondata/pull/3992 ### Why is this PR needed? ### What changes were proposed in this PR? ### Does this PR introduce any user interface change? - No - Yes. (please explain the change and update document) ### Is any new testcase added? - No - Yes

[GitHub] [carbondata] QiangCai opened a new pull request #3991: [TEST] run CI2

QiangCai opened a new pull request #3991: URL: https://github.com/apache/carbondata/pull/3991 ### Why is this PR needed? run CI ### What changes were proposed in this PR? ### Does this PR introduce any user interface change? - No - Yes. (please explain the change and update document) ### Is any new testcase added? - No - Yes

[GitHub] [carbondata] QiangCai opened a new pull request #3990: [TEST] run CI

QiangCai opened a new pull request #3990: URL: https://github.com/apache/carbondata/pull/3990 ### Why is this PR needed? run CI ### What changes were proposed in this PR? ### Does this PR introduce any user interface change? - No - Yes. (please explain the change and update document) ### Is any new testcase added? - No - Yes

[GitHub] [carbondata] dependabot[bot] commented on pull request #3447: Bump dep.jackson.version from 2.6.5 to 2.10.1 in /store/sdk

dependabot[bot] commented on pull request #3447: URL: https://github.com/apache/carbondata/pull/3447#issuecomment-713538518 Dependabot tried to update this pull request, but something went wrong. We're looking into it, but in the meantime you can retry the update by commenting `@dependabot rebase`.

[GitHub] [carbondata] dependabot[bot] commented on pull request #3456: Bump solr.version from 6.3.0 to 8.3.0 in /datamap/lucene

dependabot[bot] commented on pull request #3456: URL: https://github.com/apache/carbondata/pull/3456#issuecomment-713538495 Dependabot tried to update this pull request, but something went wrong. We're looking into it, but in the meantime you can retry the update by commenting `@dependabot rebase`.

[GitHub] [carbondata] asfgit closed pull request #3950: [CARBONDATA-3889] Enable scalastyle check for all scala test code

asfgit closed pull request #3950: URL: https://github.com/apache/carbondata/pull/3950

[GitHub] [carbondata] ajantha-bhat commented on pull request #3950: [CARBONDATA-3889] Enable scalastyle check for all scala test code

ajantha-bhat commented on pull request #3950: URL: https://github.com/apache/carbondata/pull/3950#issuecomment-713528503 Merging it.

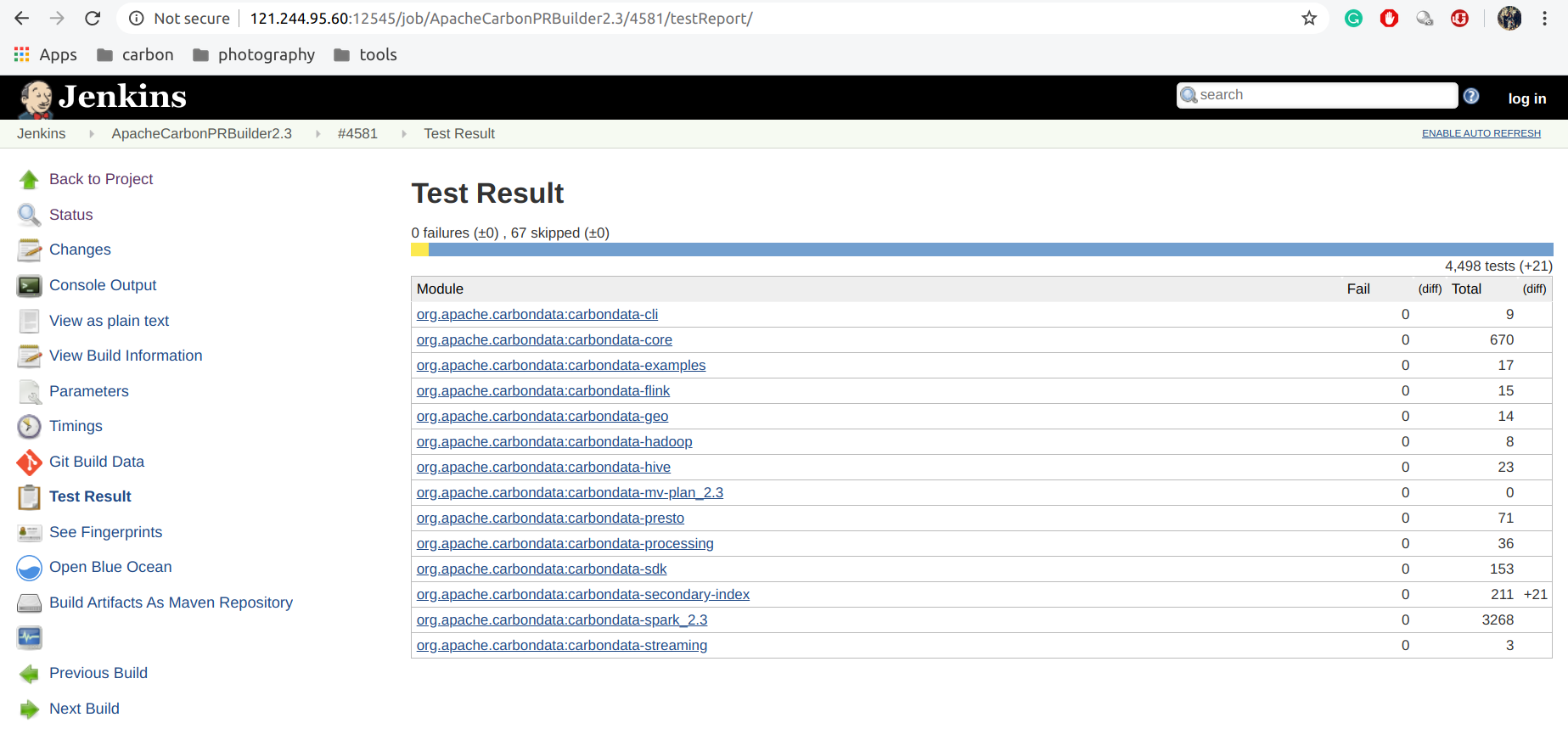

[GitHub] [carbondata] CarbonDataQA1 commented on pull request #3950: [CARBONDATA-3889] Enable scalastyle check for all scala test code

CarbonDataQA1 commented on pull request #3950: URL: https://github.com/apache/carbondata/pull/3950#issuecomment-713528043 Build Success with Spark 2.3.4, Please check CI http://121.244.95.60:12545/job/ApacheCarbonPRBuilder2.3/4581/

[GitHub] [carbondata] ajantha-bhat commented on pull request #3950: [CARBONDATA-3889] Enable scalastyle check for all scala test code

ajantha-bhat commented on pull request #3950: URL: https://github.com/apache/carbondata/pull/3950#issuecomment-713526336 OK, I verified it against the latest build. Because people are restarting CI to check random failures, the report is not being posted here.

[GitHub] [carbondata] QiangCai commented on pull request #3950: [CARBONDATA-3889] Enable scalastyle check for all scala test code

QiangCai commented on pull request #3950: URL: https://github.com/apache/carbondata/pull/3950#issuecomment-713523908 http://121.244.95.60:12545/job/ApacheCarbonPRBuilder2.3/4581/

[GitHub] [carbondata] QiangCai edited a comment on pull request #3950: [CARBONDATA-3889] Enable scalastyle check for all scala test code

QiangCai edited a comment on pull request #3950: URL: https://github.com/apache/carbondata/pull/3950#issuecomment-713523908 it passed http://121.244.95.60:12545/job/ApacheCarbonPRBuilder2.3/4581/

[GitHub] [carbondata] akashrn5 edited a comment on pull request #3986: [CARBONDATA-4034] Improve the time-consuming of Horizontal Compaction for update

akashrn5 edited a comment on pull request #3986: URL: https://github.com/apache/carbondata/pull/3986#issuecomment-713522116 > Have you ever tested this optimization? Could you pls give a comparison result for this change? As @Zhangshunyu said, it's better if you can take the readings and update them in the PR; it would be great. Thanks

[GitHub] [carbondata] ajantha-bhat commented on pull request #3950: [CARBONDATA-3889] Enable scalastyle check for all scala test code

ajantha-bhat commented on pull request #3950: URL: https://github.com/apache/carbondata/pull/3950#issuecomment-713521651 LGTM. Appreciate your concern on clean code and making code more readable and maintainable !! I will merge once 2.3 build is passed.

[GitHub] [carbondata] akashrn5 commented on pull request #3986: [CARBONDATA-4034] Improve the time-consuming of Horizontal Compaction for update

akashrn5 commented on pull request #3986: URL: https://github.com/apache/carbondata/pull/3986#issuecomment-713522116 > Have you ever tested this optimization? Could you pls give a comparison result for this change? As @Zhangshunyu said, it's better if you can take the readings and update them in the PR; it would be great. Thanks

[GitHub] [carbondata] akashrn5 commented on a change in pull request #3986: [CARBONDATA-4034] Improve the time-consuming of Horizontal Compaction for update

akashrn5 commented on a change in pull request #3986:

URL: https://github.com/apache/carbondata/pull/3986#discussion_r509221753

##
File path: processing/src/main/java/org/apache/carbondata/processing/merger/CarbonDataMergerUtil.java
##
@@ -1039,22 +1039,24 @@ private static boolean isSegmentValid(LoadMetadataDetails seg) {
     if (CompactionType.IUD_DELETE_DELTA == compactionTypeIUD) {
       int numberDeleteDeltaFilesThreshold =
           CarbonProperties.getInstance().getNoDeleteDeltaFilesThresholdForIUDCompaction();
-      List deleteSegments = new ArrayList<>();
-      for (Segment seg : segments) {
-        if (checkDeleteDeltaFilesInSeg(seg, segmentUpdateStatusManager,
-            numberDeleteDeltaFilesThreshold)) {
-          deleteSegments.add(seg);
+
+      // firstly find the valid segments which are updated from SegmentUpdateDetails,
+      // in order to reduce the segment list for behind traversal
+      List segmentsPresentInSegmentUpdateDetails = new ArrayList<>();

Review comment:

You don't need to delete the old code; it already enters that logic when the `updateDetails` are present. So you can just put the `getDeleteDeltaFilesInSeg` method inside `checkDeleteDeltaFilesInSeg`. There is no need to completely modify `getSegListIUDCompactionQualified`, and it will be cleaner.


[GitHub] [carbondata] marchpure commented on pull request #3982: [CARBONDATA-4032] Fix drop partition command clean data issue

marchpure commented on pull request #3982: URL: https://github.com/apache/carbondata/pull/3982#issuecomment-713513371 retest this please

[GitHub] [carbondata] CarbonDataQA1 commented on pull request #3987: [CARBONDATA-4039] Support Local dictionary for Presto complex datatypes

CarbonDataQA1 commented on pull request #3987: URL: https://github.com/apache/carbondata/pull/3987#issuecomment-713509134 Build Failed with Spark 2.3.4, Please check CI http://121.244.95.60:12545/job/ApacheCarbonPRBuilder2.3/4584/

[GitHub] [carbondata] CarbonDataQA1 commented on pull request #3989: [TEST] CI

CarbonDataQA1 commented on pull request #3989: URL: https://github.com/apache/carbondata/pull/3989#issuecomment-713507326 Build Success with Spark 2.3.4, Please check CI http://121.244.95.60:12545/job/ApacheCarbonPRBuilder2.3/4579/

[GitHub] [carbondata] akashrn5 commented on a change in pull request #3986: [CARBONDATA-4034] Improve the time-consuming of Horizontal Compaction for update

akashrn5 commented on a change in pull request #3986:

URL: https://github.com/apache/carbondata/pull/3986#discussion_r509204221

##

File path:

core/src/main/java/org/apache/carbondata/core/statusmanager/SegmentUpdateStatusManager.java

##

@@ -415,44 +415,38 @@ public boolean accept(CarbonFile pathName) {

}

/**

- * Return all delta file for a block.

- * @param segmentId

- * @param blockName

- * @return

+ * Get all delete delta files of the block of specified segment.

+ * Actually, delete delta file name is generated from each SegmentUpdateDetails.

+ *

+ * @param seg the segment which is to find block and its delete delta files

+ * @param blockName the specified block of the segment

+ * @return delete delta file list of the block

*/

- public CarbonFile[] getDeleteDeltaFilesList(final Segment segmentId, final String blockName) {

+ public List getDeleteDeltaFilesList(final Segment seg, final String blockName) {

+

+List deleteDeltaFileList = new ArrayList<>();

String segmentPath = CarbonTablePath.getSegmentPath(

-identifier.getTablePath(), segmentId.getSegmentNo());

-CarbonFile segDir =

-FileFactory.getCarbonFile(segmentPath);

+identifier.getTablePath(), seg.getSegmentNo());

+

for (SegmentUpdateDetails block : updateDetails) {

if ((block.getBlockName().equalsIgnoreCase(blockName)) &&

- (block.getSegmentName().equalsIgnoreCase(segmentId.getSegmentNo()))

- && !CarbonUpdateUtil.isBlockInvalid((block.getSegmentStatus()))) {

+ (block.getSegmentName().equalsIgnoreCase(seg.getSegmentNo())) &&

+ !CarbonUpdateUtil.isBlockInvalid(block.getSegmentStatus())) {

final long deltaStartTimestamp =

getStartTimeOfDeltaFile(CarbonCommonConstants.DELETE_DELTA_FILE_EXT, block);

final long deltaEndTimeStamp =

getEndTimeOfDeltaFile(CarbonCommonConstants.DELETE_DELTA_FILE_EXT,

block);

-

-return segDir.listFiles(new CarbonFileFilter() {

-

- @Override

- public boolean accept(CarbonFile pathName) {

-String fileName = pathName.getName();

-if (pathName.getSize() > 0

-&& fileName.endsWith(CarbonCommonConstants.DELETE_DELTA_FILE_EXT)) {

- String blkName = fileName.substring(0, fileName.lastIndexOf("-"));

- long timestamp =

- Long.parseLong(CarbonTablePath.DataFileUtil.getTimeStampFromFileName(fileName));

- return blockName.equals(blkName) && timestamp <= deltaEndTimeStamp

- && timestamp >= deltaStartTimestamp;

-}

-return false;

- }

-});

+Set deleteDeltaFiles = new HashSet<>();

Review comment:

Instead of this, you can follow the approach below:

1. From `SegmentUpdateDetails`, call the `getDeltaFileStamps` method and check that the result is not null and its size is > 0. If so, directly take the list of delta timestamps and prepare the final list with the block name, which we already know.

2. If `getDeltaFileStamps` returns null, there is only one valid delta timestamp. In this case `SegmentUpdateDetails` has the same delta start and end timestamp, so you can take one of them, form the delete delta file name, and return only that, since only one file exists. There is no need to create a list just to add one element, so create the list only in the first case.
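The two cases above can be sketched as follows. This assumes `getDeltaFileStamps()` yields a set of timestamp strings (or null), and that the separator, hyphen and delete delta extension are `/`, `-` and `.deletedelta`; the class and helper names are hypothetical. The file name shape (block name, hyphen, timestamp, extension) follows the file name construction proposed in this PR.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;
import java.util.Set;

// Hypothetical helper for illustration; not CarbonData's real API.
final class DeleteDeltaFileNames {
  // Assumed values; in CarbonData these would come from CarbonCommonConstants.
  static final String FILE_SEPARATOR = "/";
  static final String HYPHEN = "-";
  static final String DELETE_DELTA_FILE_EXT = ".deletedelta";

  private DeleteDeltaFileNames() {}

  static String deltaFilePath(String segmentPath, String blockName, String timestamp) {
    return segmentPath + FILE_SEPARATOR + blockName + HYPHEN + timestamp + DELETE_DELTA_FILE_EXT;
  }

  /**
   * deltaFileStamps: what getDeltaFileStamps() returned, possibly null.
   * deltaStartTimestamp: used only in the single-file case, where start == end is assumed.
   */
  static List<String> deleteDeltaFiles(String segmentPath, String blockName,
      Set<String> deltaFileStamps, String deltaStartTimestamp) {
    if (deltaFileStamps != null && !deltaFileStamps.isEmpty()) {
      // Case 1: several valid delta files, one per recorded timestamp.
      List<String> files = new ArrayList<>(deltaFileStamps.size());
      for (String stamp : deltaFileStamps) {
        files.add(deltaFilePath(segmentPath, blockName, stamp));
      }
      return files;
    }
    // Case 2: exactly one valid delta file, so no growable list is needed.
    return Collections.singletonList(deltaFilePath(segmentPath, blockName, deltaStartTimestamp));
  }
}
```

Returning `Collections.singletonList` in the single-timestamp case matches the suggestion to create a list only in the first case.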

[GitHub] [carbondata] akashrn5 commented on a change in pull request #3986: [CARBONDATA-4034] Improve the time-consuming of Horizontal Compaction for update

akashrn5 commented on a change in pull request #3986:

URL: https://github.com/apache/carbondata/pull/3986#discussion_r509202313

##

File path:

core/src/main/java/org/apache/carbondata/core/statusmanager/SegmentUpdateStatusManager.java

##

@@ -415,44 +415,38 @@ public boolean accept(CarbonFile pathName) {

}

/**

- * Return all delta file for a block.

- * @param segmentId

- * @param blockName

- * @return

+ * Get all delete delta files of the block of specified segment.

+ * Actually, delete delta file name is generated from each SegmentUpdateDetails.

+ *

+ * @param seg the segment which is to find block and its delete delta files

+ * @param blockName the specified block of the segment

+ * @return delete delta file list of the block

*/

- public CarbonFile[] getDeleteDeltaFilesList(final Segment segmentId, final String blockName) {

+ public List getDeleteDeltaFilesList(final Segment seg, final String blockName) {

+

+List deleteDeltaFileList = new ArrayList<>();

String segmentPath = CarbonTablePath.getSegmentPath(

-identifier.getTablePath(), segmentId.getSegmentNo());

-CarbonFile segDir =

-FileFactory.getCarbonFile(segmentPath);

+identifier.getTablePath(), seg.getSegmentNo());

+

for (SegmentUpdateDetails block : updateDetails) {

if ((block.getBlockName().equalsIgnoreCase(blockName)) &&

- (block.getSegmentName().equalsIgnoreCase(segmentId.getSegmentNo()))

- && !CarbonUpdateUtil.isBlockInvalid((block.getSegmentStatus()))) {

+ (block.getSegmentName().equalsIgnoreCase(seg.getSegmentNo())) &&

+ !CarbonUpdateUtil.isBlockInvalid(block.getSegmentStatus())) {

final long deltaStartTimestamp =

getStartTimeOfDeltaFile(CarbonCommonConstants.DELETE_DELTA_FILE_EXT, block);

final long deltaEndTimeStamp =

getEndTimeOfDeltaFile(CarbonCommonConstants.DELETE_DELTA_FILE_EXT,

block);

-

-return segDir.listFiles(new CarbonFileFilter() {

-

- @Override

- public boolean accept(CarbonFile pathName) {

-String fileName = pathName.getName();

-if (pathName.getSize() > 0

-&& fileName.endsWith(CarbonCommonConstants.DELETE_DELTA_FILE_EXT)) {

- String blkName = fileName.substring(0, fileName.lastIndexOf("-"));

- long timestamp =

- Long.parseLong(CarbonTablePath.DataFileUtil.getTimeStampFromFileName(fileName));

- return blockName.equals(blkName) && timestamp <= deltaEndTimeStamp

- && timestamp >= deltaStartTimestamp;

-}

-return false;

- }

-});

+Set deleteDeltaFiles = new HashSet<>();

+deleteDeltaFiles.add(segmentPath + CarbonCommonConstants.FILE_SEPARATOR +

+blockName + CarbonCommonConstants.HYPHEN + deltaStartTimestamp +

+CarbonCommonConstants.DELETE_DELTA_FILE_EXT);

+deleteDeltaFiles.add(segmentPath + CarbonCommonConstants.FILE_SEPARATOR +

+blockName + CarbonCommonConstants.HYPHEN + deltaEndTimeStamp +

+CarbonCommonConstants.DELETE_DELTA_FILE_EXT);

Review comment:

Why are you adding it twice here, with both the start and end timestamps? This is wrong, please check.

[GitHub] [carbondata] PurujitChaugule commented on a change in pull request #3917: [CARBONDATA-3978] Clean Files Refactor and support for trash folder in carbondata

PurujitChaugule commented on a change in pull request #3917: URL: https://github.com/apache/carbondata/pull/3917#discussion_r509201958 ## File path: docs/dml-of-carbondata.md ## @@ -552,3 +553,50 @@ CarbonData DML statements are documented here,which includes: ``` CLEAN FILES FOR TABLE carbon_table ``` + +## CLEAN FILES + + Clean files command is used to remove the Compacted and Marked + For Delete Segments from the store. Carbondata also supports Trash + Folder where all the stale data is moved to after clean files + is called + + There are several types of compaction + + ``` + CLEAN FILES ON TABLE TableName + ``` Review comment: The clean files syntax needs to be changed from "clean files on table tablename" to "clean files for table tablename", as the test cases use the latter.

[GitHub] [carbondata] CarbonDataQA1 commented on pull request #3950: [CARBONDATA-3889] Enable scalastyle check for all scala test code

CarbonDataQA1 commented on pull request #3950: URL: https://github.com/apache/carbondata/pull/3950#issuecomment-713503264 Build Success with Spark 2.4.5, Please check CI http://121.244.95.60:12545/job/ApacheCarbon_PR_Builder_2.4.5/2829/

[GitHub] [carbondata] CarbonDataQA1 commented on pull request #3875: [CARBONDATA-3934]Support write transactional table with presto.

CarbonDataQA1 commented on pull request #3875: URL: https://github.com/apache/carbondata/pull/3875#issuecomment-713498847 Build Failed with Spark 2.3.4, Please check CI http://121.244.95.60:12545/job/ApacheCarbonPRBuilder2.3/4586/

[GitHub] [carbondata] CarbonDataQA1 commented on pull request #3875: [CARBONDATA-3934]Support write transactional table with presto.

CarbonDataQA1 commented on pull request #3875: URL: https://github.com/apache/carbondata/pull/3875#issuecomment-713498928 Build Failed with Spark 2.4.5, Please check CI http://121.244.95.60:12545/job/ApacheCarbon_PR_Builder_2.4.5/2836/

[GitHub] [carbondata] akashrn5 commented on a change in pull request #3986: [CARBONDATA-4034] Improve the time-consuming of Horizontal Compaction for update

akashrn5 commented on a change in pull request #3986:

URL: https://github.com/apache/carbondata/pull/3986#discussion_r509190649

##

File path:

integration/spark/src/main/scala/org/apache/spark/sql/execution/command/mutation/HorizontalCompaction.scala

##

@@ -125,12 +125,18 @@ object HorizontalCompaction {

segLists: util.List[Segment]): Unit = {

val db = carbonTable.getDatabaseName

val table = carbonTable.getTableName

+val startTime = System.nanoTime()

+

// get the valid segments qualified for update compaction.

val validSegList =

CarbonDataMergerUtil.getSegListIUDCompactionQualified(segLists,

absTableIdentifier,

segmentUpdateStatusManager,

compactionTypeIUD)

+val endTime = System.nanoTime()

+LOG.info(s"time taken to get segment list for Horizontal Update Compaction is" +

Review comment:

I don't think the time in this log will help us; only the segment list can help in some way, not the time.

[GitHub] [carbondata] akashrn5 commented on a change in pull request #3986: [CARBONDATA-4034] Improve the time-consuming of Horizontal Compaction for update

akashrn5 commented on a change in pull request #3986:

URL: https://github.com/apache/carbondata/pull/3986#discussion_r509190746

##

File path:

integration/spark/src/main/scala/org/apache/spark/sql/execution/command/mutation/HorizontalCompaction.scala

##

@@ -173,11 +179,17 @@ object HorizontalCompaction {

val db = carbonTable.getDatabaseName

val table = carbonTable.getTableName

+val startTime = System.nanoTime()

+

val deletedBlocksList =

CarbonDataMergerUtil.getSegListIUDCompactionQualified(segLists,

absTableIdentifier,

segmentUpdateStatusManager,

compactionTypeIUD)

+val endTime = System.nanoTime()

+LOG.info(s"time taken to get deleted block list for Horizontal Delete Compaction is" +

Review comment:

same as above

[GitHub] [carbondata] chetandb commented on a change in pull request #3917: [CARBONDATA-3978] Clean Files Refactor and support for trash folder in carbondata

chetandb commented on a change in pull request #3917:

URL: https://github.com/apache/carbondata/pull/3917#discussion_r509160183

##

File path: docs/cleanfiles.md

##

@@ -0,0 +1,78 @@

+

+

+

+## CLEAN FILES

+

+Clean files command is used to remove the Compacted, Marked For Delete ,In Progress which are stale and Partial(Segments which are missing from the table status file but their data is present)

+ segments from the store.

+

+ Clean Files Command

+ ```

+ CLEAN FILES ON TABLE TABLE_NAME

+ ```

+

+

+### TRASH FOLDER

+

+ Carbondata supports a Trash Folder which is used as a redundant folder where all the unnecessary files and folders are moved to during clean files operation.

+ This trash folder is mantained inside the table path. It is a hidden folder(.Trash). The segments that are moved to the trash folder are mantained under a timestamp

+ subfolder(timestamp at which clean files operation is called). This helps the user to list down segments by timestamp. By default all the timestamp sub-directory have an expiration

+ time of (3 days since that timestamp) and it can be configured by the user using the following carbon property

+ ```

+ carbon.trash.expiration.time = "Number of days"

+ ```

+ Once the timestamp subdirectory is expired as per the configured expiration day value, the subdirectory is deleted from the trash folder in the subsequent clean files command.

+

+

+

+

+### DRY RUN

+ Support for dry run is provided before the actual clean files operation. This dry run operation will list down all the segments which are going to be manipulated during

+ the clean files operation. The dry run result will show the current location of the segment(it can be in FACT folder, Partition folder or trash folder) and where that segment

+ will be moved(to the trash folder or deleted from store) once the actual operation will be called.

+

+

+ ```

+ CLEAN FILES ON TABLE TABLE_NAME options('dry_run'='true')

+ ```

+

+### FORCE DELETE TRASH

+The force option with clean files command deletes all the files and folders from the trash folder.

+

+ ```

+ CLEAN FILES ON TABLE TABLE_NAME options('force'='true')

+ ```

+

+### DATA RECOVERY FROM THE TRASH FOLDER

+

+The segments from can be recovered from the trash folder by creating an external table from the desired segment location

Review comment:

Change "The segments from" to "The segments"
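The proposed docs/cleanfiles.md above describes trash subfolders named after the timestamp at which clean files ran, expiring after `carbon.trash.expiration.time` days (3 by default) and deleted by the next clean files run. A minimal sketch of that expiry check, assuming the subfolder name is an epoch-millisecond timestamp (the doc does not state the unit); `TrashExpiry` and `isExpired` are hypothetical names.

```java
import java.util.concurrent.TimeUnit;

// Hypothetical helper; assumes trash subfolders are named with epoch milliseconds.
final class TrashExpiry {
  private TrashExpiry() {}

  /**
   * Returns true when the timestamp subfolder is older than expirationDays, i.e. when
   * the next clean files run would delete it from the trash folder.
   */
  static boolean isExpired(String timestampFolderName, long expirationDays, long nowMillis) {
    long createdMillis = Long.parseLong(timestampFolderName);
    return nowMillis - createdMillis > TimeUnit.DAYS.toMillis(expirationDays);
  }
}
```

With the documented default of 3 days, a subfolder created 4 days before `nowMillis` is reported as expired, while one created 1 day before is kept.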

[GitHub] [carbondata] akkio-97 commented on a change in pull request #3987: [CARBONDATA-4039] Support Local dictionary for Presto complex datatypes

akkio-97 commented on a change in pull request #3987:

URL: https://github.com/apache/carbondata/pull/3987#discussion_r509152696

##

File path:

core/src/main/java/org/apache/carbondata/core/datastore/chunk/store/impl/LocalDictDimensionDataChunkStore.java

##

@@ -64,6 +66,14 @@ public void fillVector(int[] invertedIndex, int[] invertedIndexReverse, byte[] d

int columnValueSize = dimensionDataChunkStore.getColumnValueSize();

int rowsNum = dataLength / columnValueSize;

CarbonColumnVector vector = vectorInfo.vector;

+if (vector.getType().isComplexType()) {

+ vector = vectorInfo.vectorStack.peek();

Review comment:

We call that right below, after creating dictionaryVector. Also, on line 69 the vector is initialized as sliceStreamReader before we create dictionaryVector; otherwise dictionaryVector would be null, which would lead to an NPE. So I think it is better to keep it above.

[GitHub] [carbondata] akkio-97 commented on a change in pull request #3987: [CARBONDATA-4039] Support Local dictionary for Presto complex datatypes

akkio-97 commented on a change in pull request #3987:

URL: https://github.com/apache/carbondata/pull/3987#discussion_r509158326

##

File path:

core/src/main/java/org/apache/carbondata/core/datastore/chunk/reader/dimension/v3/DimensionChunkReaderV3.java

##

@@ -296,6 +297,12 @@ protected DimensionColumnPage decodeDimension(DimensionRawColumnChunk rawColumnP

}

}

BitSet nullBitSet = QueryUtil.getNullBitSet(pageMetadata.presence,

this.compressor);

+ // store rawColumnChunk for local dictionary

+ if (vectorInfo != null && !vectorInfo.vectorStack.isEmpty()) {

Review comment:

done

##

File path:

core/src/main/java/org/apache/carbondata/core/scan/result/vector/impl/CarbonColumnVectorImpl.java

##

@@ -81,6 +82,8 @@

private List childElementsForEachRow;

+ public DimensionRawColumnChunk rawColumnChunk;

Review comment:

done

[GitHub] [carbondata] akkio-97 commented on a change in pull request #3987: [CARBONDATA-4039] Support Local dictionary for Presto complex datatypes

akkio-97 commented on a change in pull request #3987:

URL: https://github.com/apache/carbondata/pull/3987#discussion_r509157828

##

File path:

integration/presto/src/test/scala/org/apache/carbondata/presto/integrationtest/PrestoTestNonTransactionalTableFiles.scala

##

@@ -36,6 +36,7 @@ import org.apache.carbondata.presto.server.{PrestoServer, PrestoTestUtil}

import org.apache.carbondata.sdk.file.{CarbonWriter, Schema}

+

Review comment:

done

[GitHub] [carbondata] akkio-97 commented on a change in pull request #3987: [CARBONDATA-4039] Support Local dictionary for Presto complex datatypes

akkio-97 commented on a change in pull request #3987:

URL: https://github.com/apache/carbondata/pull/3987#discussion_r509156660

##

File path:

core/src/main/java/org/apache/carbondata/core/scan/result/vector/impl/CarbonColumnVectorImpl.java

##

@@ -156,6 +159,14 @@ public void setNumberOfChildElementsForStruct(byte[] parentPageData, int pageSiz

setNumberOfChildElementsInEachRow(childElementsForEachRow);

}

+ public void setPositionCount(int positionCount) {

+

Review comment:

SliceStreamReader belongs to the presto module, so doing that would require adding a dependency on that module. Instead, I have moved this as a default implementation into CarbonColumnVector.java.
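The approach described above (a default method on the core interface, which a presto-side class can override, so the core module needs no presto dependency) can be sketched as below. `ColumnVectorSketch`, `CoreVector` and `PrestoSideReader` are hypothetical stand-ins for `CarbonColumnVector`, a core vector implementation and `SliceStreamReader`; only the method name `setPositionCount` comes from the diff.

```java
// Hypothetical, simplified stand-ins; not the real CarbonData or Presto classes.
interface ColumnVectorSketch {
  void putInt(int rowId, int value);

  // Default no-op: core implementations need not override it, and the core
  // module does not have to depend on the presto module.
  default void setPositionCount(int positionCount) {
  }
}

// A core-side vector that simply inherits the no-op default.
final class CoreVector implements ColumnVectorSketch {
  private final int[] data;

  CoreVector(int size) {
    data = new int[size];
  }

  @Override
  public void putInt(int rowId, int value) {
    data[rowId] = value;
  }

  int get(int rowId) {
    return data[rowId];
  }
}

// A presto-side reader that overrides the default to track its position count.
final class PrestoSideReader implements ColumnVectorSketch {
  private int positionCount;

  @Override
  public void putInt(int rowId, int value) {
    // Values are ignored in this sketch; only the position count matters here.
  }

  @Override
  public void setPositionCount(int positionCount) {
    this.positionCount = positionCount;
  }

  int getPositionCount() {
    return positionCount;
  }
}
```

Callers in core can invoke `setPositionCount` on any vector; only implementations that care about it pay for the override.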

[GitHub] [carbondata] chetandb commented on a change in pull request #3917: [CARBONDATA-3978] Clean Files Refactor and support for trash folder in carbondata

chetandb commented on a change in pull request #3917: URL: https://github.com/apache/carbondata/pull/3917#discussion_r509157185 ## File path: docs/cleanfiles.md ## @@ -0,0 +1,78 @@ + + + +## CLEAN FILES + +Clean files command is used to remove the Compacted, Marked For Delete ,In Progress which are stale and Partial(Segments which are missing from the table status file but their data is present) + segments from the store. + + Clean Files Command + ``` + CLEAN FILES ON TABLE TABLE_NAME Review comment: The test cases in TestCleanFileCommand use the syntax "clean files for table tablename", whereas here it is given as "clean files on table tablename".

[GitHub] [carbondata] akkio-97 commented on a change in pull request #3987: [CARBONDATA-4039] Support Local dictionary for Presto complex datatypes

akkio-97 commented on a change in pull request #3987:

URL: https://github.com/apache/carbondata/pull/3987#discussion_r509156660

##

File path:

core/src/main/java/org/apache/carbondata/core/scan/result/vector/impl/CarbonColumnVectorImpl.java

##

@@ -156,6 +159,14 @@ public void setNumberOfChildElementsForStruct(byte[] parentPageData, int pageSiz

setNumberOfChildElementsInEachRow(childElementsForEachRow);

}

+ public void setPositionCount(int positionCount) {

+

Review comment:

Moved this as a default implementation to CarbonColumnVector.java

[GitHub] [carbondata] vikramahuja1001 commented on a change in pull request #3917: [CARBONDATA-3978] Clean Files Refactor and support for trash folder in carbondata

vikramahuja1001 commented on a change in pull request #3917:

URL: https://github.com/apache/carbondata/pull/3917#discussion_r509138245

##

File path:

core/src/main/java/org/apache/carbondata/core/util/path/TrashUtil.java

##

@@ -0,0 +1,114 @@

+/*

+ * Licensed to the Apache Software Foundation (ASF) under one or more

+ * contributor license agreements. See the NOTICE file distributed with

+ * this work for additional information regarding copyright ownership.

+ * The ASF licenses this file to You under the Apache License, Version 2.0

+ * (the "License"); you may not use this file except in compliance with

+ * the License. You may obtain a copy of the License at

+ *

+ *http://www.apache.org/licenses/LICENSE-2.0

+ *

+ * Unless required by applicable law or agreed to in writing, software

+ * distributed under the License is distributed on an "AS IS" BASIS,

+ * WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+ * See the License for the specific language governing permissions and

+ * limitations under the License.

+ */

+

+package org.apache.carbondata.core.util.path;

+

+import java.io.File;

+import java.io.IOException;

+import java.util.List;

+

+import org.apache.carbondata.common.logging.LogServiceFactory;

+import org.apache.carbondata.core.constants.CarbonCommonConstants;

+import org.apache.carbondata.core.datastore.filesystem.CarbonFile;

+import org.apache.carbondata.core.datastore.impl.FileFactory;

+import org.apache.carbondata.core.util.CarbonUtil;

+

+import org.apache.commons.io.FileUtils;

+

+import org.apache.hadoop.fs.permission.FsAction;

+import org.apache.hadoop.fs.permission.FsPermission;

+

+import org.apache.log4j.Logger;

+

+public final class TrashUtil {

+

+ /**

+ * Attribute for Carbon LOGGER

+ */

+ private static final Logger LOGGER =

+ LogServiceFactory.getLogService(CarbonUtil.class.getName());

+

+ private TrashUtil() {

+

+ }

+

+ public static void copyDataToTrashFolder(String carbonTablePath, String pathOfFileToCopy,

+ String suffixToAdd) throws IOException {

+String trashFolderPath = carbonTablePath + CarbonCommonConstants.FILE_SEPARATOR +

+CarbonCommonConstants.CARBON_TRASH_FOLDER_NAME + CarbonCommonConstants.FILE_SEPARATOR

++ suffixToAdd;

+try {

+ if (new File(pathOfFileToCopy).exists()) {

+if (!FileFactory.isFileExist(trashFolderPath)) {

+ LOGGER.info("Creating Trash folder at:" + trashFolderPath);

+ FileFactory.createDirectoryAndSetPermission(trashFolderPath,

+ new FsPermission(FsAction.ALL, FsAction.ALL, FsAction.ALL));

+}

+FileUtils.copyFileToDirectory(new File(pathOfFileToCopy),

+new File(trashFolderPath));

+ }

+} catch (IOException e) {

+ LOGGER.error("Unable to copy " + pathOfFileToCopy + " to the trash folder");

+}

+ }

+

+ public static void copyDataRecursivelyToTrashFolder(CarbonFile path, String carbonTablePath,

+ String segmentNo) throws IOException {

+if (!path.isDirectory()) {

+ // copy data to trash

+ copyDataToTrashFolder(carbonTablePath, path.getAbsolutePath(), segmentNo);

+ return;

+}

+CarbonFile[] files = path.listFiles();

Review comment:

changed logic

[GitHub] [carbondata] akkio-97 commented on a change in pull request #3987: [CARBONDATA-4039] Support Local dictionary for Presto complex datatypes

akkio-97 commented on a change in pull request #3987:

URL: https://github.com/apache/carbondata/pull/3987#discussion_r509152696

##

File path:

core/src/main/java/org/apache/carbondata/core/datastore/chunk/store/impl/LocalDictDimensionDataChunkStore.java

##

@@ -64,6 +66,14 @@ public void fillVector(int[] invertedIndex, int[] invertedIndexReverse, byte[] d

int columnValueSize = dimensionDataChunkStore.getColumnValueSize();

int rowsNum = dataLength / columnValueSize;

CarbonColumnVector vector = vectorInfo.vector;

+if (vector.getType().isComplexType()) {

+ vector = vectorInfo.vectorStack.peek();

Review comment:

We call that right below, after creating dictionaryVector. On line 69, the vector is initialized as sliceStreamReader before we create dictionaryVector; otherwise dictionaryVector would be null, which would lead to an NPE.

[GitHub] [carbondata] vikramahuja1001 commented on a change in pull request #3917: [CARBONDATA-3978] Clean Files Refactor and support for trash folder in carbondata

vikramahuja1001 commented on a change in pull request #3917:

URL: https://github.com/apache/carbondata/pull/3917#discussion_r509135611

##

File path:

core/src/main/java/org/apache/carbondata/core/util/path/TrashUtil.java

##

@@ -0,0 +1,114 @@

+/*

+ * Licensed to the Apache Software Foundation (ASF) under one or more

+ * contributor license agreements. See the NOTICE file distributed with

+ * this work for additional information regarding copyright ownership.

+ * The ASF licenses this file to You under the Apache License, Version 2.0

+ * (the "License"); you may not use this file except in compliance with

+ * the License. You may obtain a copy of the License at

+ *

+ *http://www.apache.org/licenses/LICENSE-2.0

+ *

+ * Unless required by applicable law or agreed to in writing, software

+ * distributed under the License is distributed on an "AS IS" BASIS,

+ * WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+ * See the License for the specific language governing permissions and

+ * limitations under the License.

+ */

+

+package org.apache.carbondata.core.util.path;

+

+import java.io.File;

+import java.io.IOException;

+import java.util.List;

+

+import org.apache.carbondata.common.logging.LogServiceFactory;

+import org.apache.carbondata.core.constants.CarbonCommonConstants;

+import org.apache.carbondata.core.datastore.filesystem.CarbonFile;

+import org.apache.carbondata.core.datastore.impl.FileFactory;

+import org.apache.carbondata.core.util.CarbonUtil;

+

+import org.apache.commons.io.FileUtils;

+

+import org.apache.hadoop.fs.permission.FsAction;

+import org.apache.hadoop.fs.permission.FsPermission;

+

+import org.apache.log4j.Logger;

+

+public final class TrashUtil {

+

+ /**

+ * Attribute for Carbon LOGGER

+ */

+ private static final Logger LOGGER =

+ LogServiceFactory.getLogService(CarbonUtil.class.getName());

+

+ private TrashUtil() {

+

+ }

+

+ public static void copyDataToTrashFolder(String carbonTablePath, String pathOfFileToCopy,

+ String suffixToAdd) throws IOException {

+String trashFolderPath = carbonTablePath + CarbonCommonConstants.FILE_SEPARATOR +

+CarbonCommonConstants.CARBON_TRASH_FOLDER_NAME + CarbonCommonConstants.FILE_SEPARATOR

++ suffixToAdd;

+try {

+ if (new File(pathOfFileToCopy).exists()) {

+if (!FileFactory.isFileExist(trashFolderPath)) {

+ LOGGER.info("Creating Trash folder at:" + trashFolderPath);

+ FileFactory.createDirectoryAndSetPermission(trashFolderPath,

+ new FsPermission(FsAction.ALL, FsAction.ALL, FsAction.ALL));

+}

+FileUtils.copyFileToDirectory(new File(pathOfFileToCopy),

Review comment:
Using copy because, if anything crashes while the files are being moved, they cannot be
recovered. So all the files of a segment are copied first, and they are deleted only after
the copy succeeds.
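The copy-then-delete approach described in this reply can be sketched with plain `java.nio.file` calls. This is a minimal local-filesystem illustration, not the PR's `TrashUtil` code: the class and method names (`CopyThenDeleteSketch`, `moveToTrash`) are hypothetical, and it assumes a flat segment directory without subfolders.

```java
import java.io.IOException;
import java.nio.file.DirectoryStream;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.StandardCopyOption;
import java.util.ArrayList;
import java.util.List;

// Hypothetical sketch: not CarbonData's TrashUtil API.
public class CopyThenDeleteSketch {

  /**
   * Copies every regular file in segmentDir into trashDir and deletes the
   * originals only after all copies have succeeded. If any copy throws, the
   * exception propagates before a single original is deleted, so the source
   * segment stays intact.
   */
  public static List<Path> moveToTrash(Path segmentDir, Path trashDir) throws IOException {
    Files.createDirectories(trashDir);
    List<Path> copied = new ArrayList<>();
    // Phase 1: copy only; nothing is deleted yet.
    try (DirectoryStream<Path> files = Files.newDirectoryStream(segmentDir)) {
      for (Path file : files) {
        if (Files.isRegularFile(file)) {
          Files.copy(file, trashDir.resolve(file.getFileName()),
              StandardCopyOption.REPLACE_EXISTING);
          copied.add(file);
        }
      }
    }
    // Phase 2: reached only when every copy succeeded.
    for (Path file : copied) {
      Files.delete(file);
    }
    return copied;
  }
}
```

If the process dies during the delete phase, some files exist in both places; that is a duplicate rather than a loss, which is the trade-off the comment argues for over a plain move.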

##
File path:
processing/src/main/java/org/apache/carbondata/processing/loading/TableProcessingOperations.java
##
@@ -152,6 +123,41 @@ public static void deletePartialLoadDataIfExist(CarbonTable carbonTable,
     }
   }
+  public static HashMap getStaleSegments(LoadMetadataDetails[] details,
Review comment:
done

##
File path:
integration/spark/src/main/scala/org/apache/spark/sql/execution/command/mutation/CarbonTruncateCommand.scala
##
@@ -45,9 +45,11 @@ case class CarbonTruncateCommand(child: TruncateTableCommand) extends DataComman
       throw new MalformedCarbonCommandException(
         "Unsupported truncate table with specified partition")
     }
+    val optionList = List.empty[(String, String)]
+
     CarbonCleanFilesCommand(
       databaseNameOp = Option(dbName),
-      tableName = Option(tableName),
+      tableName = Option(tableName), Option(optionList),
Review comment:
done

##
File path:
integration/spark/src/main/scala/org/apache/spark/sql/execution/command/mutation/CarbonTruncateCommand.scala
##
@@ -45,9 +45,11 @@ case class CarbonTruncateCommand(child: TruncateTableCommand) extends DataComman
       throw new MalformedCarbonCommandException(
         "Unsupported truncate table with specified partition")
     }
+    val optionList = List.empty[(String, String)]
Review comment:
done

##
File path:
integration/spark/src/main/scala/org/apache/spark/sql/execution/command/management/CarbonLoadDataCommand.scala
##
@@ -108,7 +108,7 @@ case class CarbonLoadDataCommand(databaseNameOp: Option[String],
       // Delete stale segment folders that are not in table status but are physically present in
       // the Fact folder
       LOGGER.info(s"Deleting stale folders if present for table $dbName.$tableName")
-      TableProcessingOperations.deletePartialLoadDataIfExist(table, false)
+      // TableProcessingOperations.deletePartialLoadDataIfExist(table, false)
Review comment:
done
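The stale-folder cleanup referred to in the hunk above (segment folders physically present under the Fact folder but absent from the table status) amounts to a set difference. The sketch below is hypothetical and simplified: `StaleSegmentSketch` and `findStaleSegmentFolders` are illustrative names, it assumes segment folders are named `Segment_<id>`, and it assumes the caller has already read the valid segment ids from the table status. It does not handle partitioned tables or in-progress loads.

```java
import java.io.IOException;
import java.nio.file.DirectoryStream;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;
import java.util.Set;

// Hypothetical sketch: not the PR's getStaleSegments implementation.
public class StaleSegmentSketch {
  // Assumed folder-name prefix for segment directories.
  static final String SEGMENT_PREFIX = "Segment_";

  /**
   * Returns the names of segment folders under factPartDir whose segment id
   * is not among the ids recorded in the table status.
   */
  public static List<String> findStaleSegmentFolders(Path factPartDir, Set<String> statusSegmentIds)
      throws IOException {
    List<String> stale = new ArrayList<>();
    try (DirectoryStream<Path> entries = Files.newDirectoryStream(factPartDir)) {
      for (Path entry : entries) {
        String name = entry.getFileName().toString();
        // Only directories that look like segment folders are candidates.
        if (Files.isDirectory(entry) && name.startsWith(SEGMENT_PREFIX)) {
          String segmentId = name.substring(SEGMENT_PREFIX.length());
          if (!statusSegmentIds.contains(segmentId)) {
            stale.add(name);
          }
        }
      }
    }
    Collections.sort(stale); // directory listing order is not guaranteed
    return stale;
  }
}
```

Returning the stale names instead of deleting them keeps detection separate from the destructive step, so the same result could feed either a dry run or a copy to trash.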

[GitHub] [carbondata] vikramahuja1001 commented on a change in pull request #3917: [CARBONDATA-3978] Clean Files Refactor and support for trash folder in carbondata

vikramahuja1001 commented on a change in pull request #3917:
URL: https://github.com/apache/carbondata/pull/3917#discussion_r509138090
##
File path:
core/src/main/java/org/apache/carbondata/core/util/path/TrashUtil.java
##
@@ -0,0 +1,114 @@
+/*
+ * Licensed to the Apache Software Foundation (ASF) under one or more
+ * contributor license agreements. See the NOTICE file distributed with
+ * this work for additional information regarding copyright ownership.
+ * The ASF licenses this file to You under the Apache License, Version 2.0
+ * (the "License"); you may not use this file except in compliance with
+ * the License. You may obtain a copy of the License at
+ *
+ *    http://www.apache.org/licenses/LICENSE-2.0
+ *
+ * Unless required by applicable law or agreed to in writing, software
+ * distributed under the License is distributed on an "AS IS" BASIS,
+ * WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
+ * See the License for the specific language governing permissions and
+ * limitations under the License.
+ */
+
+package org.apache.carbondata.core.util.path;
+
+import java.io.File;
+import java.io.IOException;
+import java.util.List;
+
+import org.apache.carbondata.common.logging.LogServiceFactory;
+import org.apache.carbondata.core.constants.CarbonCommonConstants;
+import org.apache.carbondata.core.datastore.filesystem.CarbonFile;
+import org.apache.carbondata.core.datastore.impl.FileFactory;
+import org.apache.carbondata.core.util.CarbonUtil;
+
+import org.apache.commons.io.FileUtils;
+
+import org.apache.hadoop.fs.permission.FsAction;
+import org.apache.hadoop.fs.permission.FsPermission;
+
+import org.apache.log4j.Logger;
+
+public final class TrashUtil {
+
+  /**
+   * Attribute for Carbon LOGGER
+   */
Review comment:
done

[GitHub] [carbondata] akkio-97 commented on a change in pull request #3987: [CARBONDATA-4039] Support Local dictionary for Presto complex datatypes

akkio-97 commented on a change in pull request #3987:
URL: https://github.com/apache/carbondata/pull/3987#discussion_r509152696
##
File path:
core/src/main/java/org/apache/carbondata/core/datastore/chunk/store/impl/LocalDictDimensionDataChunkStore.java
##
@@ -64,6 +66,14 @@ public void fillVector(int[] invertedIndex, int[] invertedIndexReverse, byte[] d
     int columnValueSize = dimensionDataChunkStore.getColumnValueSize();
     int rowsNum = dataLength / columnValueSize;
     CarbonColumnVector vector = vectorInfo.vector;
+    if (vector.getType().isComplexType()) {
+      vector = vectorInfo.vectorStack.peek();
Review comment:
We call that right below, after creating the dictionary block.
On line 69, the vector is initialized as the sliceStreamReader before we create the
dictionaryBlock; otherwise dictionaryBlock would be null, which would lead to an NPE.
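The ordering argument in this reply can be illustrated with a simplified model. `Vector`, `resolveTarget` and `fill` below are hypothetical stand-ins, not CarbonData's `CarbonColumnVector` API or the Presto reader: the sketch only shows that the target vector (the child on top of `vectorStack` for a complex type) has to be resolved before the dictionary is attached, so the dictionary lands on the same vector that is read later instead of leaving it null.

```java
import java.util.ArrayDeque;
import java.util.Deque;

// Hypothetical sketch: simplified model, not CarbonData's vector API.
public class VectorSelectionSketch {

  // Heavily simplified stand-in for a column vector.
  public static class Vector {
    public final boolean complex;
    // Stays null until a dictionary is attached; dereferencing it then would NPE.
    public Object dictionaryBlock;

    public Vector(boolean complex) {
      this.complex = complex;
    }
  }

  // For a complex parent, values go to the child vector on top of the stack.
  public static Vector resolveTarget(Vector vector, Deque<Vector> vectorStack) {
    if (vector.complex) {
      Vector child = vectorStack.peek();
      if (child == null) {
        throw new IllegalStateException("complex vector with an empty vector stack");
      }
      return child;
    }
    return vector;
  }

  // Resolve the target first, then attach the dictionary to that same vector.
  public static Vector fill(Vector vector, Deque<Vector> vectorStack, Object dictionary) {
    Vector target = resolveTarget(vector, vectorStack);
    target.dictionaryBlock = dictionary;
    return target;
  }

  public static void main(String[] args) {
    Vector parent = new Vector(true);
    Deque<Vector> stack = new ArrayDeque<>();
    stack.push(new Vector(false));
    Vector target = fill(parent, stack, "dict");
    System.out.println(target == stack.peek()); // prints true
  }
}
```

Had the dictionary been attached to `parent` before swapping, the child vector that actually holds the values would still have a null `dictionaryBlock`.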

[GitHub] [carbondata] chetandb commented on a change in pull request #3917: [CARBONDATA-3978] Clean Files Refactor and support for trash folder in carbondata

chetandb commented on a change in pull request #3917:
URL: https://github.com/apache/carbondata/pull/3917#discussion_r509154365
##
File path:
integration/spark/src/test/scala/org/apache/carbondata/spark/testsuite/cleanfiles/TestCleanFileCommand.scala
##
@@ -0,0 +1,484 @@
+/*
+ * Licensed to the Apache Software Foundation (ASF) under one or more
+ * contributor license agreements. See the NOTICE file distributed with
+ * this work for additional information regarding copyright ownership.
+ * The ASF licenses this file to You under the Apache License, Version 2.0
+ * (the "License"); you may not use this file except in compliance with
+ * the License. You may obtain a copy of the License at
+ *
+ *    http://www.apache.org/licenses/LICENSE-2.0
+ *
+ * Unless required by applicable law or agreed to in writing, software
+ * distributed under the License is distributed on an "AS IS" BASIS,
+ * WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
+ * See the License for the specific language governing permissions and
+ * limitations under the License.
+ *
+ */
+
+package org.apache.carbondata.spark.testsuite.cleanfiles
+
+import java.io.{File, PrintWriter}
+import java.util
+import java.util.List
+
+import org.apache.carbondata.cleanfiles.CleanFilesUtil
+import org.apache.carbondata.core.constants.CarbonCommonConstants
+import org.apache.carbondata.core.datastore.filesystem.CarbonFile
+import org.apache.carbondata.core.datastore.impl.FileFactory
+import org.apache.carbondata.core.util.CarbonUtil
+import org.apache.spark.sql.{CarbonEnv, Row}
+import org.apache.spark.sql.test.util.QueryTest
+import org.scalatest.BeforeAndAfterAll
+
+import scala.io.Source
+
+class TestCleanFileCommand extends QueryTest with BeforeAndAfterAll {
+
+  var count = 0
+
+  test("clean up table and test trash folder with In Progress segments") {
+    sql("""DROP TABLE IF EXISTS CLEANTEST""")
+    sql("""DROP TABLE IF EXISTS CLEANTEST1""")
+    sql(
+      """
+        | CREATE TABLE cleantest (name String, id Int)
+        | STORED AS carbondata
+      """.stripMargin)
+    sql(s"""INSERT INTO CLEANTEST SELECT "abc", 1""")
+    sql(s"""INSERT INTO CLEANTEST SELECT "abc", 1""")
+    sql(s"""INSERT INTO CLEANTEST SELECT "abc", 1""")
+    // run a select query before deletion
+    checkAnswer(sql(s"""select count(*) from cleantest"""),
+      Seq(Row(3)))
+
+    val path = CarbonEnv.getCarbonTable(Some("default"), "cleantest")(sqlContext.sparkSession)
+      .getTablePath
+    val tableStatusFilePath = path + CarbonCommonConstants.FILE_SEPARATOR + "Metadata" +
+      CarbonCommonConstants.FILE_SEPARATOR + "tableStatus"
+    editTableStatusFile(path)
+    val trashFolderPath = path + CarbonCommonConstants.FILE_SEPARATOR +
+      CarbonCommonConstants.CARBON_TRASH_FOLDER_NAME
+
+    assert(!FileFactory.isFileExist(trashFolderPath))
+    val dryRun = sql(s"CLEAN FILES FOR TABLE cleantest OPTIONS('isDryRun'='true')").count()
+    // dry run shows 3 segments to move to trash
+    assert(dryRun == 3)
+
+    sql(s"CLEAN FILES FOR TABLE cleantest").show
+
+    checkAnswer(sql(s"""select count(*) from cleantest"""),
+      Seq(Row(0)))
+    assert(FileFactory.isFileExist(trashFolderPath))
+    var list = getFileCountInTrashFolder(trashFolderPath)
+    assert(list == 6)
+
+    val dryRun1 = sql(s"CLEAN FILES FOR TABLE cleantest OPTIONS('isDryRun'='true')").count()
+    sql(s"CLEAN FILES FOR TABLE cleantest").show
+
+    count = 0
+    list = getFileCountInTrashFolder(trashFolderPath)
+    // no carbondata file is added to the trash
+    assert(list == 6)
+
+
+    val timeStamp = getTimestampFolderName(trashFolderPath)
+
+    // recovering data from trash folder
+    sql(
+      """
+        | CREATE TABLE cleantest1 (name String, id Int)
+        | STORED AS carbondata
+      """.stripMargin)
+
+    val segment0Path = trashFolderPath + CarbonCommonConstants.FILE_SEPARATOR + timeStamp +
+      CarbonCommonConstants.FILE_SEPARATOR + CarbonCommonConstants.LOAD_FOLDER + '0'
+    val segment1Path = trashFolderPath + CarbonCommonConstants.FILE_SEPARATOR + timeStamp +
+      CarbonCommonConstants.FILE_SEPARATOR + CarbonCommonConstants.LOAD_FOLDER + '1'
+    val segment2Path = trashFolderPath + CarbonCommonConstants.FILE_SEPARATOR + timeStamp +