[GitHub] jjrodrig commented on issue #1139: Fix for issue #1136 - Error 500 deleting DB without quorum

jjrodrig commented on issue #1139: Fix for issue #1136 - Error 500 deleting DB without quorum
URL: https://github.com/apache/couchdb/pull/1139#issuecomment-403727481

@janl I've updated the DB deletion tests to cover the new behaviour. Thanks.

This is an automated message from the Apache Git Service. To respond to the message, please log on GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services

[GitHub] jjrodrig commented on issue #1139: Fix for issue #1136 - Error 500 deleting DB without quorum

jjrodrig commented on issue #1139: Fix for issue #1136 - Error 500 deleting DB without quorum
URL: https://github.com/apache/couchdb/pull/1139#issuecomment-377358283

@wohali I'll modify the condition to respond with `200 OK` only if we have a response from all the nodes. If we have a positive response from only some of them, `202 Accepted` is returned. The problem I see is that this behaviour is not consistent with database creation, where the quorum is considered, but it is better than the current situation, which returns a `500 Error`.
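The rule described in this comment can be sketched roughly as follows. This is only an illustration in Python, not the code in the PR: the function name `delete_status` and its parameters are hypothetical, and returning 500 when no node answers positively is an assumption, since the comment does not cover that case.

```python
def delete_status(ok_responses: int, total_nodes: int) -> int:
    """Hypothetical helper: map positive node responses to an HTTP status.

    200 only when every node confirmed the deletion, 202 when only
    some of them did. The 500 fallback for zero positive responses is
    an assumption made for this sketch.
    """
    if total_nodes <= 0 or not 0 <= ok_responses <= total_nodes:
        raise ValueError("ok_responses must be between 0 and total_nodes")
    if ok_responses == total_nodes:
        return 200  # all nodes responded positively
    if ok_responses > 0:
        return 202  # accepted by some nodes, the rest catch up later
    return 500  # assumption: nothing accepted the request


if __name__ == "__main__":
    for ok in range(4):
        print(ok, "of 3 nodes ->", delete_status(ok, 3))
```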

[GitHub] jjrodrig commented on issue #1139: Fix for issue #1136 - Error 500 deleting DB without quorum

jjrodrig commented on issue #1139: Fix for issue #1136 - Error 500 deleting DB without quorum
URL: https://github.com/apache/couchdb/pull/1139#issuecomment-370928857

Thanks @kocolosk for your comments.

I see that the orphaned files question is a different issue. We are facing it as part of a cleanup process that we have implemented in our system. Databases are periodically transferred to a temporary database and then moved back to the work database. It is like a purging procedure that allows us to keep the databases small. During this process we remove and create databases, and orphaned files are produced. **+1 for the orphaned files cleanup process enhancement.**

With respect to the main issue of this PR, I think the main problem is responding with a `500 Error` when the operation has been accepted and the database has been deleted on the nodes that received the request. The response is an error, but the database is deleted on the active nodes, and the deletion is later propagated to the rest of the nodes once they are accessible. It seems to me that this behaviour is more akin to a `202 Accepted` result.

The idea of responding `200 Ok` when the quorum is met is mainly to keep the API consistent with other operations where the quorum is considered, but I see that this can have some drawbacks.
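For comparison, the quorum-based variant discussed here could look something like the sketch below. It is an illustration only: `quorum_size` and `delete_status_with_quorum` are hypothetical names, the quorum is assumed to be a simple majority of the nodes, and the 500 fallback for zero positive responses is also an assumption.

```python
def quorum_size(total_nodes: int) -> int:
    """Assumed quorum: a simple majority of the nodes."""
    if total_nodes <= 0:
        raise ValueError("total_nodes must be positive")
    return total_nodes // 2 + 1


def delete_status_with_quorum(ok_responses: int, total_nodes: int) -> int:
    """Hypothetical helper: 200 when the quorum is met, 202 otherwise.

    Returning 500 when no node accepted the deletion is an assumption
    made for this sketch.
    """
    if not 0 <= ok_responses <= total_nodes:
        raise ValueError("ok_responses must be between 0 and total_nodes")
    if ok_responses >= quorum_size(total_nodes):
        return 200  # quorum met
    if ok_responses > 0:
        return 202  # deleted on the reachable nodes, without quorum
    return 500  # assumption: no node accepted the request


if __name__ == "__main__":
    for ok in range(4):
        print(ok, "of 3 nodes ->", delete_status_with_quorum(ok, 3))
```

With three nodes, two positive responses already yield 200 under this rule, which is the consistency with database creation mentioned in the comment.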

[GitHub] jjrodrig commented on issue #1139: Fix for issue #1136 - Error 500 deleting DB without quorum

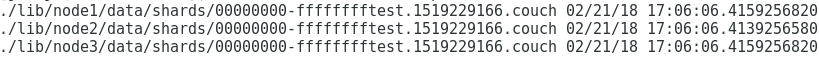

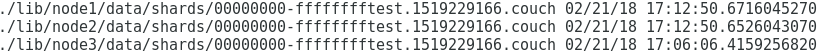

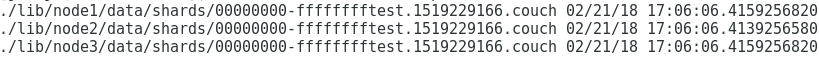

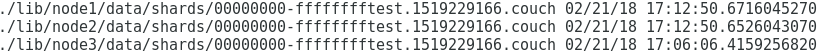

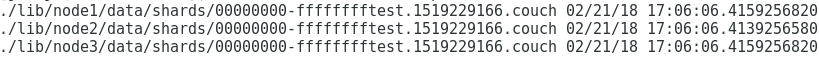

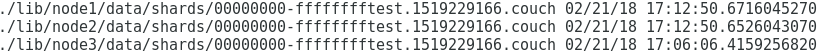

jjrodrig commented on issue #1139: Fix for issue #1136 - Error 500 deleting DB without quorum
URL: https://github.com/apache/couchdb/pull/1139#issuecomment-367381444

@rnewson I've done some more testing on the deletion under different cluster conditions. It seems that some .couch files remain orphaned if a database is deleted while one of the nodes of the cluster is stopped.

- Start a 3-node cluster and create a test db:

```
./dev/run -n 3 --with-admin-party-please
curl -X PUT http://127.0.0.1:15984/test?q=1
```

*One .couch file is created per node*

- Stop 1 node in the cluster and delete the database:

```
./dev/run -n 3 --with-admin-party-please --degrade-cluster 1
curl -X DELETE http://127.0.0.1:15984/test
```

*The .couch file is removed from the running nodes and persists on the stopped node, as expected*

- Start the complete cluster and check whether the database is deleted:

```
./dev/run -n 3 --with-admin-party-please
curl -X HEAD http://127.0.0.1:15984/test -v
```

*The database is not found, but the .couch file is propagated from the restarted node to the rest*

It seems that .couch files remain orphaned in the system after a deletion with at least one node stopped. This PR does not modify this behavior. Do you think this is an issue?
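A check for the leftover files described above could be sketched like this. It is a hedged illustration, not part of the PR: the function name `orphaned_shard_files` is hypothetical, and the shard file naming `<db>.<timestamp>.couch` is an assumption about the on-disk layout that should be verified against the actual data directory. Database names containing `/` are not handled.

```python
import re
from pathlib import PurePosixPath

# Assumed shard file naming: "<db name>.<numeric timestamp>.couch"
SHARD_FILE = re.compile(r"^(?P<db>.+)\.(?P<ts>\d+)\.couch$")


def orphaned_shard_files(paths, live_dbs):
    """Return the paths whose database name is not in live_dbs.

    paths: iterable of file paths found under a node's data directory.
    live_dbs: set of database names the cluster still reports.
    Files that do not match the assumed naming pattern are ignored.
    """
    orphans = []
    for path in paths:
        match = SHARD_FILE.match(PurePosixPath(path).name)
        if match and match.group("db") not in live_dbs:
            orphans.append(path)
    return orphans


if __name__ == "__main__":
    # Example paths are made up for illustration.
    found = [
        "shards/00000000-ffffffff/test.1519300000.couch",
        "shards/00000000-ffffffff/other.1519300001.couch",
        "_dbs.couch",
    ]
    print(orphaned_shard_files(found, {"other"}))
```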
