Jorricks opened a new issue, #26068: URL: https://github.com/apache/airflow/issues/26068

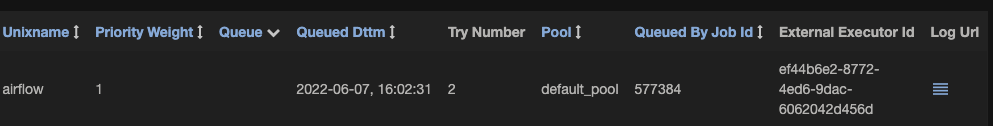

### Apache Airflow version

2.3.4

### What happened

Some tasks do not have a `queue` value defined, so the field is `None`. When you load the Grid view for these DAGs, you get a 502 error and the following error appears in the logs:

```
https://airflow-webserver-hdp-beta-b.is.adyen.com/api/v1/dags/shopper_connect_report/tasks validation error: None is not of type 'string'

Failed validating 'type' in schema['properties']['tasks']['items']['properties']['queue']:
    {'readOnly': True, 'type': 'string'}

On instance['tasks'][4]['queue']:
    None
```

### What you think should happen instead

The API should return a successful 200 response with the data. The schema should allow `queue` to be nullable. Currently it does not, as you can see here:

```
    retries:
      type: number
      readOnly: true
    queue:
      type: string
      readOnly: true
    ...
    execution_timeout:
      $ref: '#/components/schemas/TimeDelta'
      nullable: true
```

We should add `nullable: true` to the `queue` field. Currently a `None` value crashes the webserver with the error above.

### How to reproduce

Create some TaskInstances. Then make the `queue` field empty in the database. You can check this in the TaskInstance view.

Then open the Grid view.

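To illustrate why `nullable: true` would resolve the validation error, here is a minimal sketch of the check in plain Python. It is a simplified stand-in written for this issue, not Airflow's or connexion's actual validator; the helper name `validate_field` and the two schema dictionaries are hypothetical and only mirror the `queue` entry quoted above.

```python
from typing import Any, List

# Simplified stand-in for a response validator (assumption: NOT Airflow's real code).
_TYPE_MAP = {"string": str, "number": (int, float), "boolean": bool}


def validate_field(value: Any, schema: dict) -> List[str]:
    """Return validation errors for one value against a tiny OpenAPI-like schema."""
    if value is None:
        # OpenAPI 3.0 allows null only when the schema sets `nullable: true`.
        if schema.get("nullable", False):
            return []
        return [f"None is not of type {schema['type']!r}"]
    if not isinstance(value, _TYPE_MAP[schema["type"]]):
        return [f"{value!r} is not of type {schema['type']!r}"]
    return []


# Hypothetical dictionaries mirroring the `queue` schema entry.
current_schema = {"type": "string", "readOnly": True}
proposed_schema = {"type": "string", "readOnly": True, "nullable": True}

print(validate_field(None, current_schema))        # ["None is not of type 'string'"]
print(validate_field(None, proposed_schema))       # []
print(validate_field("default", proposed_schema))  # []
```

With the current schema a `None` queue is rejected, while the proposed schema accepts both `None` and ordinary string values.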
### Operating System

CentOS Linux 7 (Core)

### Versions of Apache Airflow Providers

```
apache-airflow-providers-apache-druid==2.0.2
    # via apache-airflow
apache-airflow-providers-apache-hdfs==2.1.1
    # via apache-airflow
apache-airflow-providers-apache-hive==2.0.2
    # via apache-airflow
apache-airflow-providers-apache-spark==2.0.1
    # via apache-airflow
apache-airflow-providers-celery==2.1.0
    # via
    #   -r adyen_airflow/requirements/requirements.txt
    #   apache-airflow
apache-airflow-providers-ftp==2.0.1
    # via apache-airflow
apache-airflow-providers-http==2.0.1
    # via apache-airflow
apache-airflow-providers-imap==2.0.1
    # via apache-airflow
apache-airflow-providers-pagerduty==2.0.1
    # via apache-airflow
apache-airflow-providers-postgres==2.3.0
    # via apache-airflow
apache-airflow-providers-sqlite==2.0.1
    # via apache-airflow
```

### Deployment

Other

### Deployment details

Deployed on CentOS boxes without virtualisation.

### Anything else

This problem occurs only on specific DAGs. I do not know why we have tasks without a queue specified. My guess is that new tasks were added and were then set to success by marking the DAGRun as success.

### Are you willing to submit PR?

- [X] Yes I am willing to submit a PR!

### Code of Conduct

- [X] I agree to follow this project's [Code of Conduct](https://github.com/apache/airflow/blob/main/CODE_OF_CONDUCT.md)