Firefly4H commented on issue #13903:

URL:

https://github.com/apache/dolphinscheduler/issues/13903#issuecomment-1515743337

> > > > `{ "reader": { "parameter": { "password": "*******", "column": [

"RowKey", "DataId", "DataType", "ChannelIndex", "FlowName", "SubFlowName",

"ChannelTag1", "ChannelTag2", "CaptureType", "CaptureAngle", "Inputer",

"DeviceSN", "DeviceIndex", "StartEventTime", "EventTime", "CaptureTime",

"LPRClass", "LPR", "LPRColor", "VehicleClass", "VehicleType" ], "connection": [

{ "jdbcUrl": [ "jdbc:mysql://170.31.11.193:3306/enshi_its" ], "table": [

"`VehiclePassCPT_t`" ] } ], "splitPk": "", "username": "root" }, "name":

"mysqlreader" }, "writer": { "parameter": { "path":

"/vision01/user/hive/warehouse/dcm_first.db/ods_vehiclepasscpt_t_vv5/dt=$[yyyyMMdd]",

"fileName": "ods_vehiclepasscpt_t_vv5", "hadoopConfig": {

"dfs.namenode.rpc-address.maxvision01.nn2": "170.31.11.202:8020",

"dfs.namenode.rpc-address.maxvision01.nn1": "170.31.11.201:8020",

"dfs.client.failover.proxy.provider.maxvision01":

"org.apache.hadoop.hdfs.server.namenode.ha.ConfiguredFailoverProxyProvider",

"dfs.nameservices": "maxvision01", "dfs.ha.namenodes.maxvision01": "nn1,nn2" }, "column": [ {

"name": "rowkey", "type": "string" }, { "name": "dataid", "type": "string" }, {

"name": "datatype", "type": "int" }, { "name": "channelindex", "type": "int" },

{ "name": "flowname", "type": "string" }, { "name": "subflowname", "type":

"string" }, { "name": "channeltag1", "type": "string" }, { "name":

"channeltag2", "type": "string" }, { "name": "capturetype", "type": "int" }, {

"name": "captureangle", "type": "int" }, { "name": "inputer", "type": "string"

}, { "name": "devicesn", "type": "string" }, { "name": "deviceindex", "type":

"int" }, { "name": "starteventtime", "type": "string" }, { "name": "eventtime",

"type": "string" }, { "name": "capturetime", "type": "string" }, { "name":

"lprclass", "type": "int" }, { "name": "lpr", "type": "string" }, { "name":

"lprcolor", "type": "int" }, { "name": "vehicleclass", "type": "int" }, {

"name": "vehicletype", "type": "string" } ], "defaultFS": "hdfs://vision01",

"writeMode": "truncate", "fieldDelimiter": "|", "fileType": "text" }, "name":

"hdfswriter" } }`

> > >

> > >

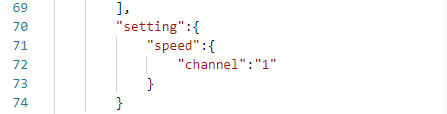

> > > Can you show the `setting`?

> > >

> >

> >

> >

> > Testing showed that the problem lies with the MySQL database and MySQL

driver version used by DolphinScheduler. After downgrading MySQL, the task

executes normally. The problematic MySQL version is 8.0.32.

>

> Hi @Firefly4H, I think this is because the `DataX` component uses the 5.x

jar package by default, not because of DS. <img alt="image" width="1423"

src="https://user-images.githubusercontent.com/33984497/233016316-e9172680-1a9e-489c-9728-3beafd2af38c.png">

I don't think so. Running the task with DataX alone works fine; the problem

only occurs when DataX is used together with DS.
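
One way to narrow this down might be to list the MySQL connector jars that each side ships and compare their versions. The following is only a minimal sketch: the function name `find_mysql_connector_jars` is made up for illustration, and it assumes the jars follow the usual `mysql-connector-java-<version>.jar` or `mysql-connector-j-<version>.jar` naming. The directories to scan depend on the deployment and are passed on the command line.

```python
import re
import sys
from pathlib import Path

# Assumption: connector jars are named "mysql-connector-java-<version>.jar"
# or "mysql-connector-j-<version>.jar", optionally with a suffix such as "-bin".
JAR_PATTERN = re.compile(r"mysql-connector-(?:java|j)-(\d+(?:\.\d+)*)[^/]*\.jar$")


def find_mysql_connector_jars(root):
    """Return a sorted list of (version, path) for connector jars under root."""
    found = []
    for jar in Path(root).rglob("*.jar"):
        match = JAR_PATTERN.search(jar.name)
        if match:
            found.append((match.group(1), str(jar)))
    return sorted(found)


if __name__ == "__main__":
    # Each argument is a directory to scan, e.g. the DataX install directory
    # and the DS worker's library directory (both are deployment-specific).
    for root in sys.argv[1:]:
        for version, path in find_mysql_connector_jars(root):
            print(f"{root}: {version}  {path}")
```

If the two directories report different major versions (for example 5.x on one side and 8.x on the other), that would be consistent with a driver mismatch, though it does not show which jar is actually loaded at runtime.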
