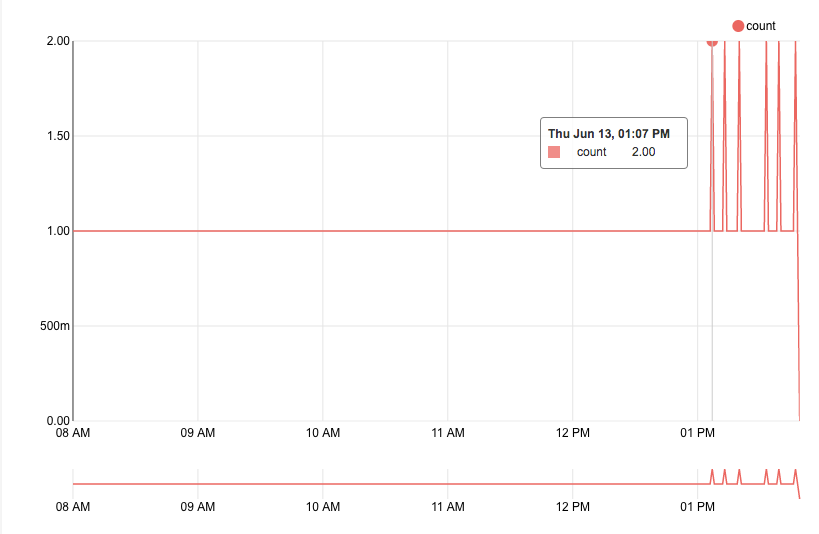

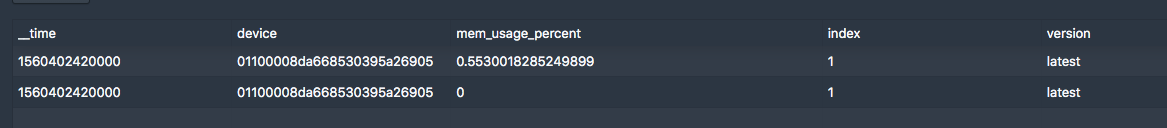

z1q1q7 opened a new issue #7877: scan return non-exist data from peon
URL: https://github.com/apache/incubator-druid/issues/7877

I have a Tranquility process ingesting data from Kafka. While the task is still running, I aggregate the data through the broker (using the **count** aggregator) and find that the latest data is not accurate: the count for some timestamps seems to be doubled.

datasource: docker_container
dimensions: device
metric: mem_usage_percent

The query parameters are as follows:

    {
      "queryType": "timeseries",
      "dataSource": "docker_container",
      "aggregations": [
        { "type": "count", "name": "count" }
      ],
      "granularity": {
        "type": "period",
        "timeZone": "CST",
        "period": "PT1M"
      },
      "postAggregations": [],
      "intervals": "2019-06-13T08:00:00+08:00/2019-06-13T14:00:00+08:00",
      "filter": {
        "type": "and",
        "fields": [
          { "type": "selector", "dimension": "device", "value": "01100008da" }
        ]
      }
    }

In Superset:

Then I scanned one of the doubled timestamps as follows:

    {
      "queryType": "scan",
      "dataSource": "docker_container",
      "resultFormat": "list",
      "granularity": "minute",
      "columns": ["__time", "mem_usage_percent"],
      "filter": {
        "type": "and",
        "fields": [
          { "type": "selector", "dimension": "device", "value": "01100008da" }
        ]
      },
      "intervals": "2019-06-13T05:07:00+00:00/2019-06-13T05:08:00+00:00",
      "context": { "skipEmptyBuckets": "true" }
    }

It returns the following:

For the same dimension and the same timestamp, two rows are returned: one has a metric value of 0 and the other is correct. Is the zero being filled in? I also noticed that the interval between the doubled points seems to match the segment persist interval. Once the task ends, the result for the same timestamp is correct.

Any ideas? Has anyone met the same problem? Hoping for a reply!
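One way to narrow this down is to group the scan results by `__time` and see which segment each duplicate row comes from. The following is a minimal Python sketch, not part of the original report. It assumes the scan response with `"resultFormat": "list"` is a list of batches, each carrying a `segmentId` and an `events` list of row objects. The function name `find_duplicate_timestamps` and the sample data (segment names and values) are made up for illustration.

```python
from collections import defaultdict


def find_duplicate_timestamps(scan_result):
    """Group scan events by __time and return timestamps seen more than once.

    Each returned entry maps a timestamp to a list of
    (segmentId, mem_usage_percent) pairs, in the order they were encountered.
    """
    seen = defaultdict(list)
    for batch in scan_result:
        segment_id = batch.get("segmentId")
        for event in batch.get("events", []):
            seen[event["__time"]].append(
                (segment_id, event.get("mem_usage_percent"))
            )
    # Keep only timestamps that appear in more than one row.
    return {ts: rows for ts, rows in seen.items() if len(rows) > 1}


# Hypothetical sample shaped like a list-format scan response;
# the segment ids and metric values are invented for this example.
sample = [
    {
        "segmentId": "segment_a",
        "columns": ["__time", "mem_usage_percent"],
        "events": [{"__time": 1560402420000, "mem_usage_percent": 0}],
    },
    {
        "segmentId": "segment_b",
        "columns": ["__time", "mem_usage_percent"],
        "events": [
            {"__time": 1560402420000, "mem_usage_percent": 37.5},
            {"__time": 1560402430000, "mem_usage_percent": 38.0},
        ],
    },
]

duplicates = find_duplicate_timestamps(sample)
for ts, rows in duplicates.items():
    print(ts, rows)
```

If the duplicated rows consistently carry different segment ids, that would be consistent with the same timestamp being served from two places while the task is running, though this sketch alone cannot confirm the cause.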

----------------------------------------------------------------
This is an automated message from the Apache Git Service.
To respond to the message, please log on to GitHub and use the
URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:
[email protected]

With regards,
Apache Git Services

---------------------------------------------------------------------
To unsubscribe, e-mail: [email protected]
For additional commands, e-mail: [email protected]