waywtdcc opened a new issue #4868: URL: https://github.com/apache/hudi/issues/4868

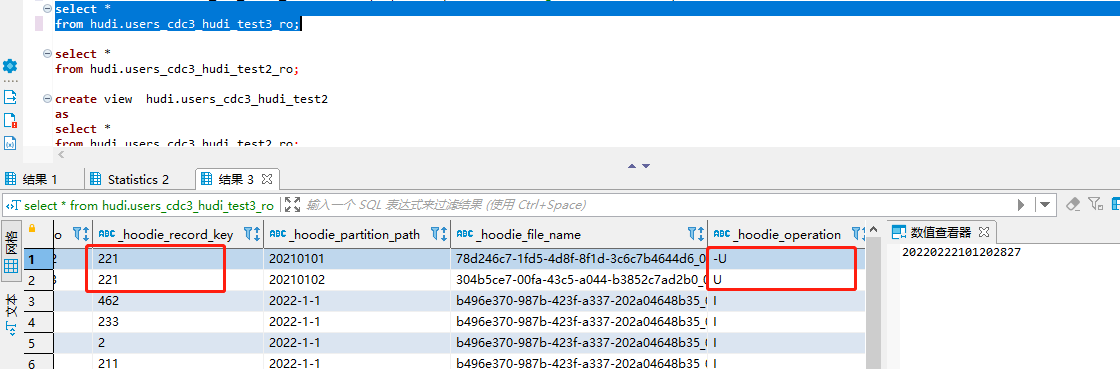

After changelog mode is enabled for writes, a Spark batch read returns repeated data, and multiple `-U` operation records appear.

    CREATE TABLE `hudi.users_cdc3_hudi_test3`( )
    ROW FORMAT SERDE
      'org.apache.hadoop.hive.serde2.lazy.LazySimpleSerDe'
    STORED AS INPUTFORMAT
      'org.apache.hadoop.mapred.TextInputFormat'
    OUTPUTFORMAT
      'org.apache.hadoop.hive.ql.io.IgnoreKeyTextOutputFormat'
    LOCATION
      'hdfs://test/user/hive/warehouse/hudi.db/users_cdc3_hudi_test3'
    TBLPROPERTIES (
      'flink.changelog.enabled'='true',
      'flink.compaction.async.enabled'='true',
      'flink.compaction.delta_commits'='1',
      'flink.compaction.tasks'='1',
      'flink.compaction.trigger.strategy'='num_or_time',
      'flink.connector'='hudi',
      'flink.hive_sync.db'='hudi',
      'flink.hive_sync.enable'='true',
      'flink.hive_sync.metastore.uris'='thrift://pmaster:53083,thrift://pnode3:53083,thrift://pnode1:53083',
      'flink.hive_sync.mode'='hms',
      'flink.hive_sync.skip_ro_suffix'='false',
      'flink.hive_sync.table'='users_cdc3_hudi_test3',
      'flink.hoodie.datasource.write.recordkey.field'='id',
      'flink.index.bootstrap.enabled'='true',
      'flink.index.global.enabled'='true',
      'flink.index.state.ttl'='0',
      'flink.partition.keys.0.name'='date_str',
      'flink.path'='test',
      'flink.read.streaming.enabled'='false',
      'flink.schema.0.data-type'='BIGINT NOT NULL',
      'flink.schema.0.name'='id',
      'flink.schema.1.data-type'='VARCHAR(2147483647)',
      'flink.schema.1.name'='name3',
      'flink.schema.2.data-type'='TIMESTAMP(3)',
      'flink.schema.2.name'='birthday3',
      'flink.schema.3.data-type'='TIMESTAMP(3)',
      'flink.schema.3.name'='ts3',
      'flink.schema.4.data-type'='VARCHAR(2147483647)',
      'flink.schema.4.name'='date_str',
      'flink.schema.primary-key.columns'='id',
      'flink.schema.primary-key.name'='PK_3386',
      'flink.table.type'='MERGE_ON_READ',
      'flink.write.tasks'='1')

**Environment Description**

* Hudi version : 0.10.0
* Spark version : 2.4.7
* Flink version : 1.13.5
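To make the reported symptom concrete, here is a minimal plain-Python sketch (not Hudi or Spark code) of what a batch reader would presumably need to do with changelog rows: replay them in order and keep one current image per record key. The column name `_hoodie_operation`, the sample rows, and the helper `collapse_changelog` are assumptions for illustration; only the `-U` flag and the `id` / `name3` columns come from the report above.

```python
from typing import Dict, Iterable, List

# Assumed name of the column that carries the changelog flag.
OP_FIELD = "_hoodie_operation"
# Flags that remove the current image: "-U" (update-before) and "D" (delete).
RETRACTIONS = {"-U", "D"}


def collapse_changelog(rows: Iterable[Dict], key: str = "id") -> List[Dict]:
    """Replay changelog rows in order and keep one current image per key."""
    current: Dict[object, Dict] = {}
    for row in rows:
        op = row.get(OP_FIELD, "I")
        if op in RETRACTIONS:
            # A retraction drops whatever image is currently held for the key.
            current.pop(row[key], None)
        else:
            # "I" (insert) and "U" (update-after) carry the new image.
            current[row[key]] = {k: v for k, v in row.items() if k != OP_FIELD}
    return list(current.values())


# Hypothetical rows resembling the report: several -U rows for the same id.
rows = [
    {"id": 1, "name3": "a", OP_FIELD: "I"},
    {"id": 1, "name3": "a", OP_FIELD: "-U"},
    {"id": 1, "name3": "b", OP_FIELD: "U"},
    {"id": 1, "name3": "b", OP_FIELD: "-U"},
    {"id": 1, "name3": "c", OP_FIELD: "U"},
    {"id": 2, "name3": "x", OP_FIELD: "I"},
]
result = collapse_changelog(rows)
```

In this sketch the six input rows collapse to two, one per `id`. A batch read that instead surfaces the intermediate `-U` rows would show the duplication described in this issue.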