imrewang commented on issue #9513:

URL: https://github.com/apache/hudi/issues/9513#issuecomment-1696992219

# I. Snapshot

### 1. MySQL `snapshot data` is synchronized to Hudi

```sql
CREATE TABLE sink_scho
(
    `name`    STRING,
    `address` STRING,
    CONSTRAINT `PRIMARY` PRIMARY KEY (`name`) NOT ENFORCED
)
WITH (
    'connector' = 'hudi',
    'compaction.max_memory' = '1024',
    'write.task.max.size' = '2048',
    'write.merge.max_memory' = '1024',
    'write.operation' = 'insert',
    'index.bootstrap.enabled' = 'true',
    'path' = 'hdfs://132.178.4.190:8020/user/hive/warehouse/test.db/scho',
    'write.tasks' = '1',
    'hive_sync.enable' = 'true',
    'hive_sync.mode' = 'hms',
    'hive_sync.metastore.uris' = 'thrift://132.178.4.190:9083',
    'hive_sync.table' = 'scho',
    'hive_sync.db' = 'test',
    'hive_sync.username' = '',
    'hive_sync.password' = ''
)
```

# II. Incremental

### 1. Flink CDC captures MySQL change data

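```java
// Imports assume the Flink CDC 2.x package layout (com.ververica.*).
import com.ververica.cdc.connectors.mysql.MySqlSource;
import com.ververica.cdc.connectors.mysql.table.StartupOptions;
import com.ververica.cdc.debezium.JsonDebeziumDeserializationSchema;
import org.apache.flink.streaming.api.functions.source.SourceFunction;

SourceFunction<String> mySqlSource = MySqlSource.<String>builder()
        .hostname("123:23:23:34")
        .port(123)
        .username("ss")
        .password("111") // password() takes a String, not an int
        .databaseList("db")
        .tableList("tab")
        .deserializer(new JsonDebeziumDeserializationSchema())
        // This is the incremental stage, so use latest.
        .startupOptions(StartupOptions.latest())
        .build();
```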

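> My incremental SQL (that is, the SQL that applies the [ **delete**, update, insert ] changes captured above). It is the same as the snapshot DDL except that `write.operation` is `upsert`: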

>

> ```sql
> CREATE TABLE sink_scho
> (
>     `name`    STRING,
>     `address` STRING,
>     CONSTRAINT `PRIMARY` PRIMARY KEY (`name`) NOT ENFORCED
> )
> WITH (
>     'connector' = 'hudi',
>     'compaction.max_memory' = '1024',
>     'write.task.max.size' = '2048',
>     'write.merge.max_memory' = '1024',
>     'write.operation' = 'upsert',
>     'index.bootstrap.enabled' = 'true',
>     'path' = 'hdfs://132.178.4.190:8020/user/hive/warehouse/test.db/scho',
>     'write.tasks' = '1',
>     'hive_sync.enable' = 'true',
>     'hive_sync.mode' = 'hms',
>     'hive_sync.metastore.uris' = 'thrift://132.178.4.190:9083',
>     'hive_sync.table' = 'scho',
>     'hive_sync.db' = 'test',
>     'hive_sync.username' = '',
>     'hive_sync.password' = ''
> )
> ```

### 2. Test -D, +I, -U, +U
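
For readers unfamiliar with the notation: `+I`, `-U`, `+U` and `-D` are Flink's changelog row kinds (insert, update-before, update-after, delete). The sketch below shows how the `op` field of a Debezium change event is conventionally mapped onto them. It is plain Java with no Flink dependency; the class and method names (`ChangelogKinds`, `rowKindsFor`) are hypothetical and only illustrate the mapping, not the code path this job actually runs.

```java
import java.util.List;

public class ChangelogKinds {

    /**
     * Returns the changelog row kinds that one Debezium event expands to.
     * "r" = snapshot read, "c" = create, "u" = update, "d" = delete.
     */
    static List<String> rowKindsFor(String op) {
        switch (op) {
            case "r":
            case "c":
                return List.of("+I");       // new row, taken from "after"
            case "u":
                return List.of("-U", "+U"); // old row from "before", new row from "after"
            case "d":
                return List.of("-D");       // deleted row, taken from "before"
            default:
                throw new IllegalArgumentException("unknown op: " + op);
        }
    }

    public static void main(String[] args) {
        for (String op : new String[] {"r", "c", "u", "d"}) {
            System.out.println(op + " -> " + rowKindsFor(op));
        }
    }
}
```

So the test in this section exercises one delete (`-D`), one insert (`+I`) and one update (`-U` followed by `+U`) against the table.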

> ### Are you sure row-level deletion of snapshot data is supported?😖

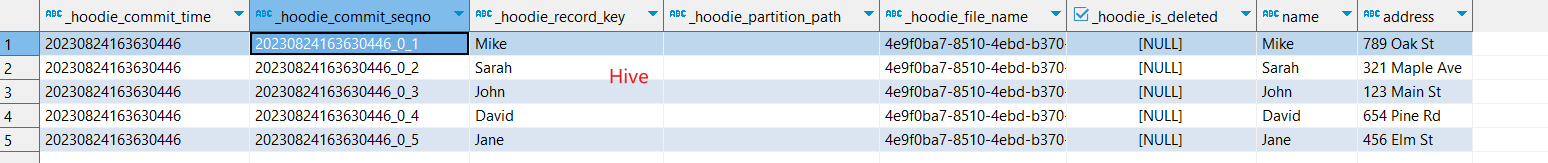

> The Hudi `snapshot data` that was synchronized to the Hive table:

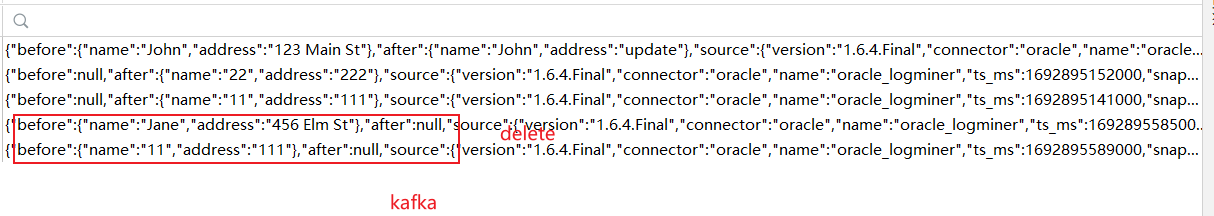

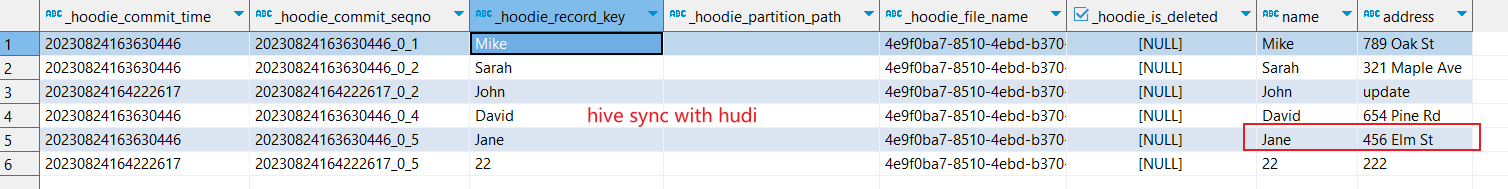

> Update one row of `snapshot data` (`John update`) and insert two rows of `incremental data` (`11 111` and `22 222`). _The UPDATE and INSERT succeed._ Now delete one `snapshot data` row (`Jane 456 Elm st`) and one `incremental data` row (`11 111`):

> Deleting the **`snapshot data`** row `Jane 456 Elm st` **failed**, while deleting the **`incremental data`** row `11 111` **succeeded**.
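
The result expected from this test can be modeled as a table keyed by `name`, where `+I`/`+U` overwrite the row and `-D` removes it. The sketch below replays the operations described in this comment against such a model. It is only an illustration of the expected semantics, not Hudi's implementation; the class and method names are hypothetical, only the rows named in this comment are modeled, and John's original address is a placeholder because it appears only in the screenshot.

```java
import java.util.LinkedHashMap;
import java.util.Map;

public class ExpectedUpsertState {

    /** Applies one changelog row to a table keyed by `name`. */
    static void apply(Map<String, String> table, String kind, String name, String address) {
        switch (kind) {
            case "+I":
            case "+U":
                table.put(name, address); // insert or overwrite by primary key
                break;
            case "-U":
                break;                    // the +U that follows overwrites the same key
            case "-D":
                table.remove(name);       // row-level delete by primary key
                break;
            default:
                throw new IllegalArgumentException("unknown row kind: " + kind);
        }
    }

    /** Replays the snapshot stage and then the incremental changes from this comment. */
    static Map<String, String> replay() {
        Map<String, String> table = new LinkedHashMap<>();
        // Snapshot stage ("<original address>" is a placeholder, not real data).
        apply(table, "+I", "John", "<original address>");
        apply(table, "+I", "Jane", "456 Elm st");
        // Incremental stage: one update, two inserts, two deletes.
        apply(table, "-U", "John", "<original address>");
        apply(table, "+U", "John", "update");
        apply(table, "+I", "11", "111");
        apply(table, "+I", "22", "222");
        apply(table, "-D", "Jane", "456 Elm st");
        apply(table, "-D", "11", "111");
        return table;
    }

    public static void main(String[] args) {
        System.out.println(replay()); // prints {John=update, 22=222}
    }
}
```

Under this model neither `Jane` nor `11` should remain. In the run described above, `11` is gone but `Jane` is still present, which is the behavior being questioned.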
