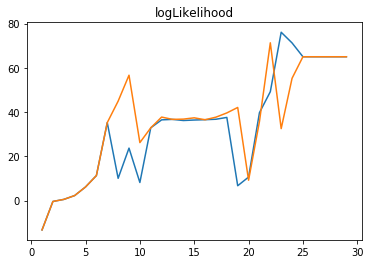

zhengruifeng commented on issue #27519: [SPARK-30770][ML] avoid vector conversion in GMM.transform
URL: https://github.com/apache/spark/pull/27519#issuecomment-591871256

I used the following code to compare the convergence:

```python
# Run in the pyspark shell, where `spark` and `sc` are predefined.
from pyspark.ml.linalg import Vectors
from pyspark.ml.clustering import GaussianMixture

data = [(Vectors.dense([-0.1, -0.05]),),
        (Vectors.dense([-0.01, -0.1]),),
        (Vectors.dense([0.9, 0.8]),),
        (Vectors.dense([0.75, 0.935]),),
        (Vectors.dense([-0.83, -0.68]),),
        (Vectors.dense([-0.91, -0.76]),)]
df = spark.createDataFrame(sc.parallelize(data, 2), ["features"])

gm = GaussianMixture(k=3, tol=0.0001, seed=10)
# Log-likelihood of the fitted model for each iteration cap
curve = [gm.setMaxIter(k).fit(df).summary.logLikelihood for k in range(0, 30)]
```

The blue curve is master, and the orange one is https://github.com/apache/spark/commit/87472a41aa1f08474e03341f9e5fe09b594cab77. The result is the same as with https://github.com/apache/spark/pull/26735. With maxIter=30, both curves converge to `65.02945125241477`. I also checked the coefficients of the models, and they are the same.
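The curve comparison above was done visually from the plot. As a rough sketch, it could also be checked numerically once `curve` has been collected on each build. The helper names `curves_match` and `first_divergence` and the tolerances are hypothetical, not part of the PR or of Spark:

```python
import math


def curves_match(curve_a, curve_b, rel_tol=1e-9, abs_tol=1e-12):
    """Return True if two log-likelihood curves agree point by point.

    The tolerances are assumed values for illustration, not taken from the PR.
    """
    if len(curve_a) != len(curve_b):
        return False
    return all(
        math.isclose(a, b, rel_tol=rel_tol, abs_tol=abs_tol)
        for a, b in zip(curve_a, curve_b)
    )


def first_divergence(curve_a, curve_b, rel_tol=1e-9, abs_tol=1e-12):
    """Return the first index where the curves differ, or None if none does.

    Only the overlapping prefix of the two curves is compared.
    """
    for i, (a, b) in enumerate(zip(curve_a, curve_b)):
        if not math.isclose(a, b, rel_tol=rel_tol, abs_tol=abs_tol):
            return i
    return None
```

With the list from master stored as, say, `curve_master` and the one from the patched build as `curve_patch`, `curves_match(curve_master, curve_patch)` would confirm the overlap seen in the plot, and `first_divergence` would point at the iteration cap where they split if they did not match.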
