dongjoon-hyun commented on a change in pull request #29303:

URL: https://github.com/apache/spark/pull/29303#discussion_r464729021

##########
File path: sql/hive-thriftserver/src/test/scala/org/apache/spark/sql/hive/thriftserver/ThriftServerWithSparkContextSuite.scala
##########
@@ -101,6 +104,135 @@ trait ThriftServerWithSparkContextSuite extends SharedThriftServer {
       }
     }
   }

+
+  test("check results from get columns operation from thrift server") {
+    val schemaName = "default"
+    val tableName = "spark_get_col_operation"
+    val schema = new StructType()
+      .add("c0", "boolean", nullable = false, "0")
+      .add("c1", "tinyint", nullable = true, "1")
+      .add("c2", "smallint", nullable = false, "2")
+      .add("c3", "int", nullable = true, "3")
+      .add("c4", "long", nullable = false, "4")
+      .add("c5", "float", nullable = true, "5")
+      .add("c6", "double", nullable = false, "6")
+      .add("c7", "decimal(38, 20)", nullable = true, "7")
+      .add("c8", "decimal(10, 2)", nullable = false, "8")
+      .add("c9", "string", nullable = true, "9")
+      .add("c10", "array<long>", nullable = false, "10")
+      .add("c11", "array<string>", nullable = true, "11")
+      .add("c12", "map<smallint, tinyint>", nullable = false, "12")
+      .add("c13", "date", nullable = true, "13")
+      .add("c14", "timestamp", nullable = false, "14")
+      .add("c15", "struct<X: bigint,Y: double>", nullable = true, "15")
+      .add("c16", "binary", nullable = false, "16")
+
+    val ddl =
+      s"""
+         |CREATE TABLE $schemaName.$tableName (
+         | ${schema.toDDL}
+         |)
+         |using parquet""".stripMargin
+
+    withCLIServiceClient { client =>
+      val sessionHandle = client.openSession(user, "")
+      val confOverlay = new java.util.HashMap[java.lang.String, java.lang.String]
+      val opHandle = client.executeStatement(sessionHandle, ddl, confOverlay)
+      var status = client.getOperationStatus(opHandle)
+      while (!status.getState.isTerminal) {
+        Thread.sleep(10)
+        status = client.getOperationStatus(opHandle)
+      }
+      val getCol = client.getColumns(sessionHandle, "", schemaName, tableName, null)
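The wait loop in this hunk polls `client.getOperationStatus` every 10 ms until `status.getState.isTerminal`, with no upper bound, so a statement that never reaches a terminal state would hang the suite instead of failing it. A minimal sketch of a bounded variant, assuming a hypothetical helper named `PollUntilTerminal` (not Spark or Hive API); the state fetch and terminal check are passed in as plain functions so the sketch runs without a Thrift client. It does not address the ClassCastException reported in the review comment.

```scala
import scala.annotation.tailrec

// Hypothetical helper, not part of Spark or Hive: polls `fetchState` until
// `isTerminal` holds or `timeoutMs` elapses, sleeping `intervalMs` between polls.
// Returns Some(finalState) when a terminal state is seen, None on timeout.
object PollUntilTerminal {
  def poll[S](
      fetchState: () => S,
      isTerminal: S => Boolean,
      intervalMs: Long = 10L,
      timeoutMs: Long = 60000L): Option[S] = {
    val deadline = System.nanoTime() + timeoutMs * 1000000L

    @tailrec
    def loop(): Option[S] = {
      val state = fetchState()
      if (isTerminal(state)) Some(state)
      // Compare via subtraction so the check stays correct if nanoTime wraps.
      else if (System.nanoTime() - deadline >= 0) None
      else {
        Thread.sleep(intervalMs)
        loop()
      }
    }

    loop()
  }

  def main(args: Array[String]): Unit = {
    // Simulated operation that reports "RUNNING" twice, then "FINISHED".
    var polls = 0
    val result = poll[String](
      () => { polls += 1; if (polls >= 3) "FINISHED" else "RUNNING" },
      _ == "FINISHED")
    println(s"$result after $polls polls")
  }
}
```

In the test, `fetchState` would presumably wrap `client.getOperationStatus(opHandle)` and `isTerminal` would be `_.getState.isTerminal`, with a `None` result turned into a test failure; that wiring is an assumption and has not been tried against the suite.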

Review comment:

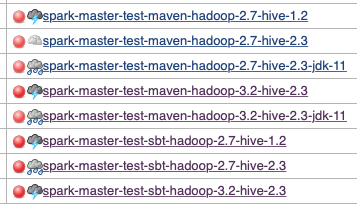

Hi, @yaooqinn and @cloud-fan. I'm not sure, but this new test case seems to break all Jenkins jobs on the `master` branch. Could you take a look?

```
org.apache.hive.service.cli.HiveSQLException: java.lang.RuntimeException: org.apache.hadoop.hive.ql.metadata.HiveException: java.lang.ClassCastException: org.apache.hadoop.hive.ql.security.HadoopDefaultAuthenticator cannot be cast to org.apache.hadoop.hive.ql.security.HiveAuthenticationProvider
	at org.apache.hive.service.cli.thrift.ThriftCLIServiceClient.checkStatus(ThriftCLIServiceClient.java:42)
	at org.apache.hive.service.cli.thrift.ThriftCLIServiceClient.getColumns(ThriftCLIServiceClient.java:275)
	at org.apache.spark.sql.hive.thriftserver.ThriftServerWithSparkContextSuite.$anonfun$$init$$17(ThriftServerWithSparkContextSuite.scala:146)
```

----------------------------------------------------------------

This is an automated message from the Apache Git Service.
To respond to the message, please log on to GitHub and use the
URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:
[email protected]
