AngersZhuuuu commented on pull request #30139:

URL: https://github.com/apache/spark/pull/30139#issuecomment-716263003

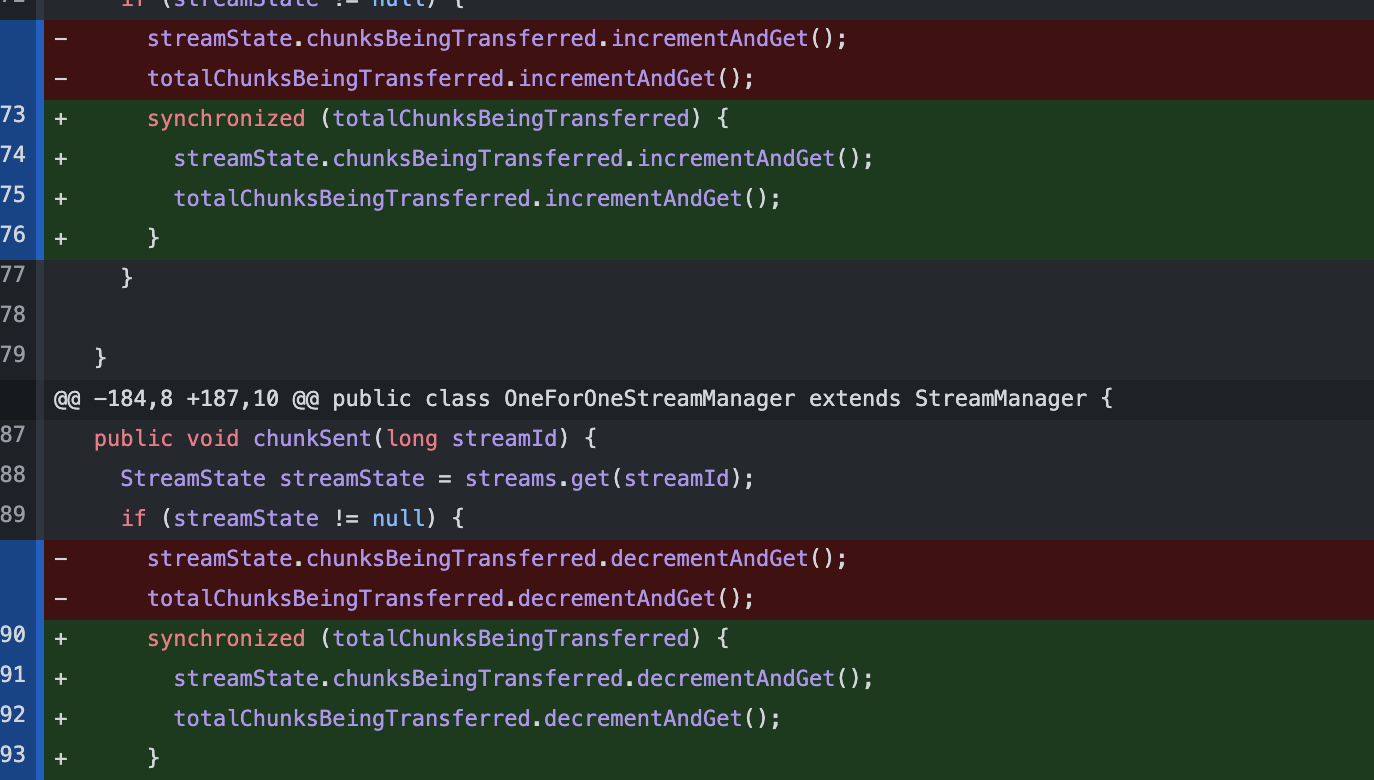

> I am concerned that the `totalChunksBeingTransferred` can diverge over
> time from state of `streams` when there are concurrent updates. Either updates
> to both should be within the same critical section, or we should carefully
> ensure there is no potential for divergence. Can you relook and ensure this is
> not the case ?
>
> For example, can there be concurrent execution between `chunkBeingSent`,
> `chunkSent` and `connectionTerminated` ? If yes, can we ensure the state
> remains consistent.

The original code just uses a concurrent hash map (`streams`), and there is
no strong locking mechanism there either. Also, `chunksBeingTransferred` is
only used to check against the configured limit:

```
long chunksBeingTransferred = streamManager.chunksBeingTransferred();
if (chunksBeingTransferred >= maxChunksBeingTransferred) {
  logger.warn("The number of chunks being transferred {} is above {}, close the connection.",
    chunksBeingTransferred, maxChunksBeingTransferred);
  channel.close();
  return;
}
```
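To make the point about the original, lock-free approach concrete, here is a minimal sketch of how such a count behaves. It is not Spark's actual class: `LockFreeChunkCounter` and `registerStream` are hypothetical names, and only `streams`, `chunkBeingSent`, `chunkSent`, `connectionTerminated` and `chunksBeingTransferred` are borrowed from the discussion above.

```java
import java.util.concurrent.ConcurrentHashMap;
import java.util.concurrent.atomic.AtomicLong;

// Hypothetical stand-in for a stream manager; only the counting logic is modelled.
final class LockFreeChunkCounter {
  // streamId -> number of chunks of that stream currently in flight
  private final ConcurrentHashMap<Long, AtomicLong> streams = new ConcurrentHashMap<>();

  void registerStream(long streamId) {
    streams.putIfAbsent(streamId, new AtomicLong(0L));
  }

  void chunkBeingSent(long streamId) {
    AtomicLong inFlight = streams.get(streamId);
    if (inFlight != null) {
      inFlight.incrementAndGet();
    }
  }

  void chunkSent(long streamId) {
    AtomicLong inFlight = streams.get(streamId);
    if (inFlight != null) {
      inFlight.decrementAndGet();
    }
  }

  void connectionTerminated(long streamId) {
    // Dropping the stream also drops whatever it still had in flight.
    streams.remove(streamId);
  }

  // Sums the per-stream counters without taking any lock. Iteration over a
  // ConcurrentHashMap is weakly consistent, so under concurrent updates the
  // result is an approximate snapshot rather than an exact total.
  long chunksBeingTransferred() {
    long sum = 0L;
    for (AtomicLong inFlight : streams.values()) {
      sum += inFlight.get();
    }
    return sum;
  }
}
```

Because the value only feeds a threshold check like the one above, a slightly stale sum here can at worst close a connection a little early or a little late.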

IMO, we can add a lock to keep the value of `totalChunksBeingTransferred`
strongly consistent, such as
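As a hypothetical illustration only (this is not the code from the PR), one way such locking could look is to update the per-stream state and the running total inside the same critical section. `LockedChunkCounter`, `registerStream` and the `lock` field are assumed names; the real stream manager tracks more state than this.

```java
import java.util.HashMap;
import java.util.Map;

// Hypothetical sketch: every update to `streams` and to the running total
// happens under the same lock, so the two cannot diverge.
final class LockedChunkCounter {
  private final Object lock = new Object();
  // streamId -> chunks in flight for that stream; guarded by `lock`
  private final Map<Long, Long> streams = new HashMap<>();
  // Sum of all values in `streams`; guarded by `lock`
  private long totalChunksBeingTransferred = 0L;

  void registerStream(long streamId) {
    synchronized (lock) {
      streams.putIfAbsent(streamId, 0L);
    }
  }

  void chunkBeingSent(long streamId) {
    synchronized (lock) {
      Long inFlight = streams.get(streamId);
      if (inFlight != null) {
        streams.put(streamId, inFlight + 1);
        totalChunksBeingTransferred++;
      }
    }
  }

  void chunkSent(long streamId) {
    synchronized (lock) {
      Long inFlight = streams.get(streamId);
      if (inFlight != null && inFlight > 0) {
        streams.put(streamId, inFlight - 1);
        totalChunksBeingTransferred--;
      }
    }
  }

  void connectionTerminated(long streamId) {
    synchronized (lock) {
      // Remove the stream and subtract its in-flight chunks in one step.
      Long inFlight = streams.remove(streamId);
      if (inFlight != null) {
        totalChunksBeingTransferred -= inFlight;
      }
    }
  }

  long chunksBeingTransferred() {
    synchronized (lock) {
      return totalChunksBeingTransferred;
    }
  }
}
```

The trade-off is that every chunk event now contends on a single lock, which is why measuring the performance impact matters.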

I have also run the test from the PR description, and this change has little
effect on performance. WDYT?

