wangyum opened a new pull request #31691: URL: https://github.com/apache/spark/pull/31691

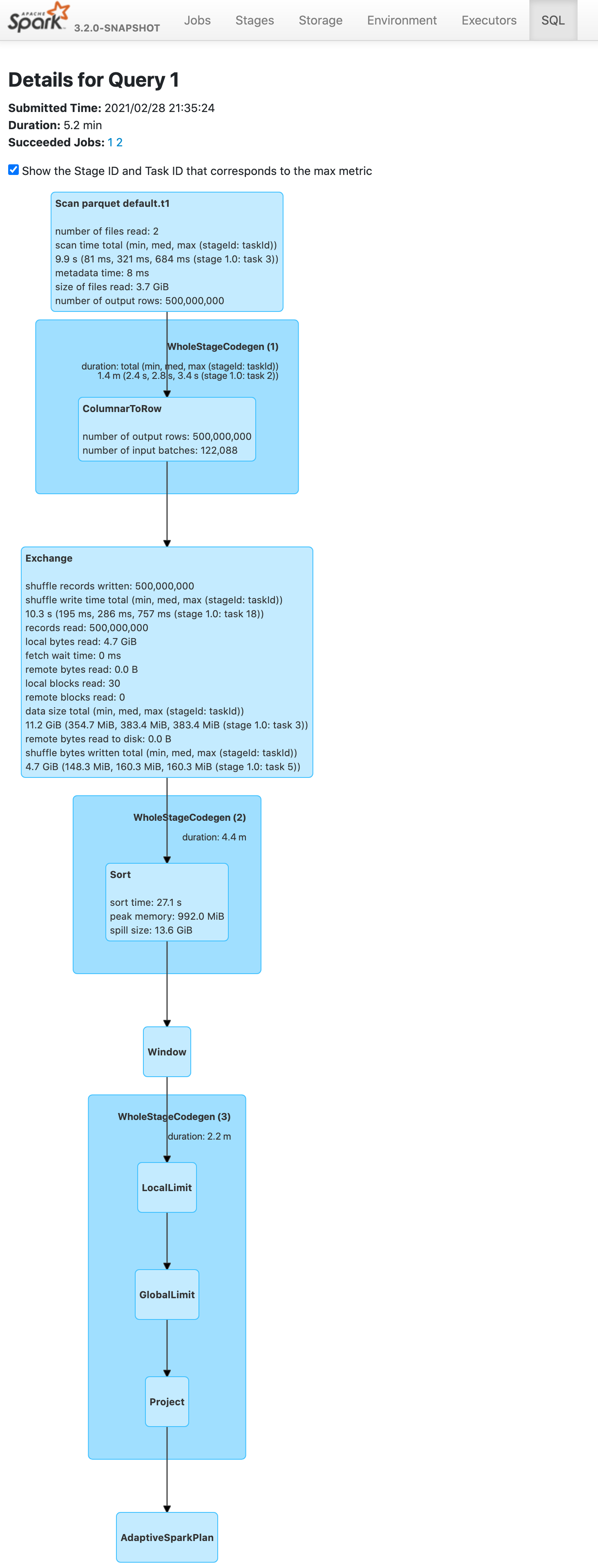

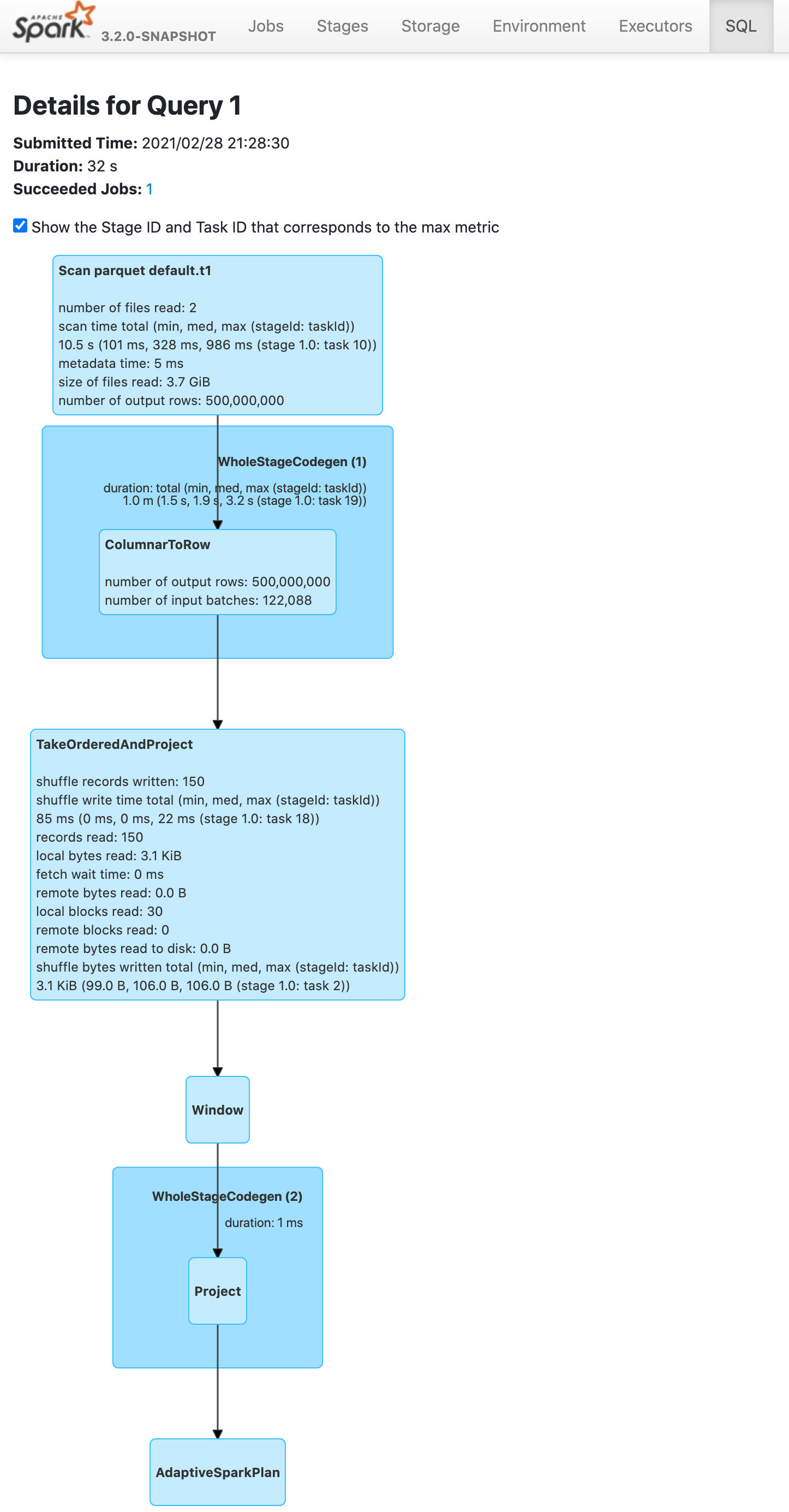

### What changes were proposed in this pull request?

Push down limit through `Window` when partitionSpec is empty and window function is range based. This is a real case from production:

### Why are the changes needed?

Improve query performance.

```scala
spark.range(500000000).selectExpr("id AS a", "id AS b").write.saveAsTable("t1")
spark.sql("SELECT *, ROW_NUMBER() OVER(ORDER BY a) AS rowId FROM t1 LIMIT 5").show
```

Before this pr | After this pr
-- | --
 | 

### Does this PR introduce _any_ user-facing change?

No.

### How was this patch tested?

Unit test.
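
As a rough illustration of why pushing the limit below the `Window` is safe for the `ROW_NUMBER() OVER(ORDER BY a) ... LIMIT 5` query above, the sketch below models both plan shapes with plain Scala collections. It is not the optimizer rule from this PR: the `Row` case class, the helper names, and the assumption that the rewritten plan sorts on the window ordering and applies the limit before numbering are all hypothetical and used only for illustration. It also assumes distinct sort keys, as in the `id AS a` example.

```scala
object LimitThroughWindowSketch {
  // Hypothetical row type mirroring the two columns of table t1.
  final case class Row(a: Long, b: Long)

  // Models the original shape: number every row by `a`, then keep the first n.
  def windowThenLimit(rows: Seq[Row], n: Int): Seq[(Row, Int)] =
    rows.sortBy(_.a).zipWithIndex.map { case (r, i) => (r, i + 1) }.take(n)

  // Models the assumed rewritten shape: sort by `a`, keep the first n,
  // then number only those n rows.
  def limitThenWindow(rows: Seq[Row], n: Int): Seq[(Row, Int)] =
    rows.sortBy(_.a).take(n).zipWithIndex.map { case (r, i) => (r, i + 1) }

  def main(args: Array[String]): Unit = {
    val rows = Seq(5L, 3L, 9L, 1L, 7L, 2L).map(id => Row(id, id))
    val before = windowThenLimit(rows, 3)
    val after = limitThenWindow(rows, 3)
    // Both shapes return the same rows with the same row numbers.
    assert(before == after)
    after.foreach(println)
  }
}
```

In this model the second form only numbers `n` rows instead of the whole input, which is the intuition behind the performance gain; with duplicate values of `a` the row numbering among ties is not deterministic in either form.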