Yikun opened a new pull request #33174: URL: https://github.com/apache/spark/pull/33174

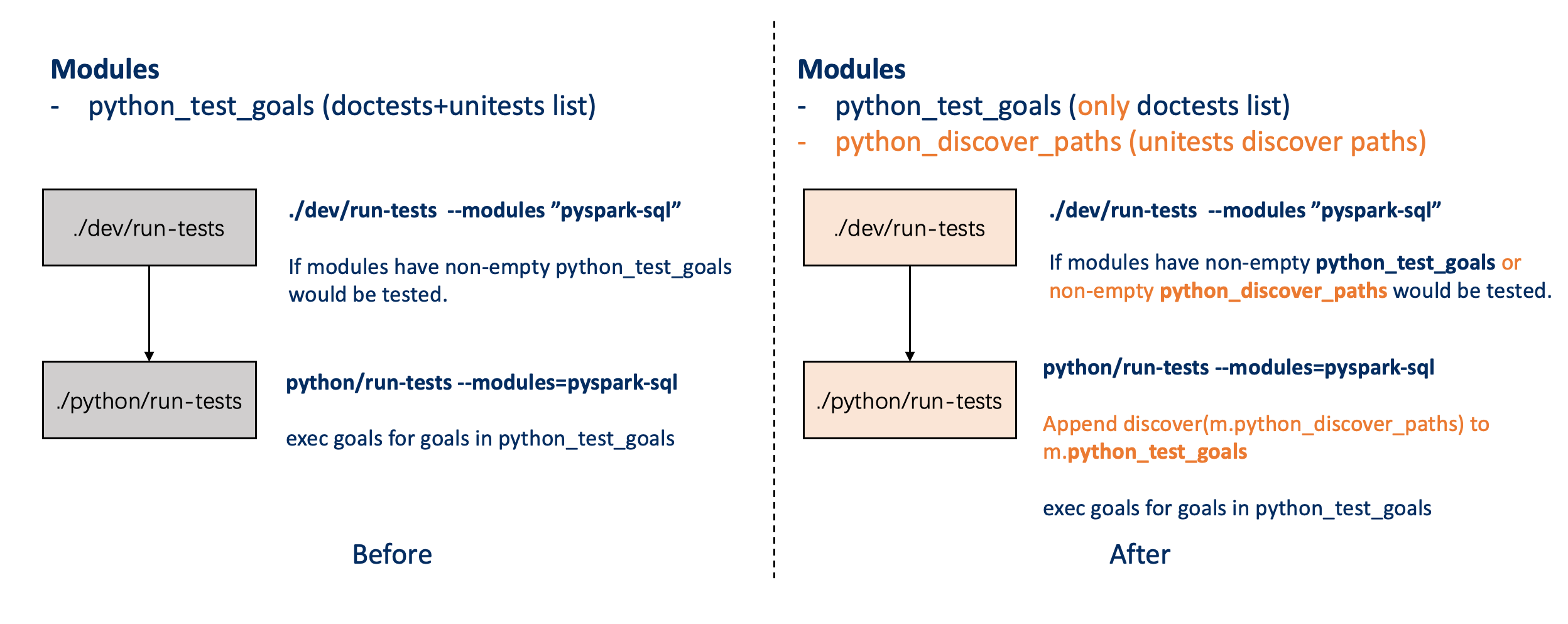

### What changes were proposed in this pull request?

Add path-level discovery for Python unit tests.

Change list:
- Introduce **python_discover_paths** in modules.
- Add a **_discover_python_unittests** function: it is called in python/run-tests.py to load the test modules.
- Add an **_append_discovred_goals** function: it calls _discover_python_unittests to refresh m.python_test_goals.
- A module that has either python_test_goals or **python_discover_paths** is now considered a Python test module.
- Fix: Move logging.basicConfig to the top so that logging is configured before anything can be logged.
- Fix: Change python/pyspark/testing/utils.py to obtain SPARK_HOME via _find_spark_home.
- Fix: Export the py4j PYTHONPATH before running the tests.

Note that test discovery actually imports every module, so all dependencies of the modules to be tested must be installed before running run-tests.

### Why are the changes needed?

Currently, every new Python test case has to be added to the module list manually. Sometimes we forget to add it, and the test case is never executed. For example, pyspark.tests.test_pin_thread in pyspark-core.

Thus we need a way to discover all test cases automatically rather than specifying every case manually.

Related: https://github.com/apache/spark/pull/32867

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

1. Add doctests for _discover_python_unittests.
2. Compare the CI results; see the diffs in:
   - Build modules: pyspark-sql, pyspark-mllib, pyspark-resource: https://www.diffchecker.com/CRtc3jph
   - Build modules: pyspark-core, pyspark-streaming, pyspark-ml: https://www.diffchecker.com/We0fGQnx
   - Build modules: pyspark-pandas: https://www.diffchecker.com/vbSh6LiP
   - Build modules: pyspark-pandas-slow: https://www.diffchecker.com/1DXA88iH
3. Local test for Python modules: `./dev/run-tests --parallelism 2 --modules "pyspark-sql"`
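The auto-discovery idea can be sketched with the standard library's `unittest.TestLoader.discover`. This is a minimal, hypothetical illustration, not the PR's `_discover_python_unittests`: the helper name `discover_test_modules`, the `test_*.py` file pattern, and the throwaway `demo_pkg` layout are all assumptions. The sketch turns every module under a directory that defines at least one test case into a dotted name, the same form as the entries in `python_test_goals` (e.g. `pyspark.tests.test_pin_thread`). Like the discovery described in the PR, it really imports each module, so a missing dependency surfaces as an import error.

```python
import os
import tempfile
import textwrap
import unittest


def _iter_tests(suite):
    """Recursively yield the individual test cases inside a nested TestSuite."""
    for item in suite:
        if isinstance(item, unittest.TestSuite):
            yield from _iter_tests(item)
        else:
            yield item


def discover_test_modules(start_dir, top_level_dir, pattern="test_*.py"):
    """Hypothetical helper (not the PR's _discover_python_unittests).

    Returns the sorted dotted names of modules under start_dir that define at
    least one unittest test case. unittest's discovery imports every matching
    module, so each module's dependencies must already be installed.
    """
    loader = unittest.TestLoader()
    suite = loader.discover(start_dir, pattern=pattern, top_level_dir=top_level_dir)
    if loader.errors:
        # Failed imports are recorded as non-fatal errors; fail loudly instead.
        raise RuntimeError("\n".join(loader.errors))
    return sorted({type(test).__module__ for test in _iter_tests(suite)})


def _write(path, source=""):
    """Create path (and its parent directories) containing the given source."""
    os.makedirs(os.path.dirname(path), exist_ok=True)
    with open(path, "w") as f:
        f.write(textwrap.dedent(source))


# Demo: build a throwaway package tree that loosely mimics pyspark/tests/.
root = tempfile.mkdtemp()
_write(os.path.join(root, "demo_pkg", "__init__.py"))
_write(os.path.join(root, "demo_pkg", "tests", "__init__.py"))
_write(os.path.join(root, "demo_pkg", "tests", "helper.py"))  # not a test file
for name in ("test_a", "test_pin_thread"):
    _write(
        os.path.join(root, "demo_pkg", "tests", name + ".py"),
        """
        import unittest

        class DemoTest(unittest.TestCase):
            def test_ok(self):
                self.assertTrue(True)
        """,
    )

print(discover_test_modules(os.path.join(root, "demo_pkg", "tests"), root))
# ['demo_pkg.tests.test_a', 'demo_pkg.tests.test_pin_thread']
```

Because discovery walks the directory tree, a newly added file such as `test_pin_thread.py` is picked up without editing any module list. The trade-off is that every discovered module must be importable in the environment that runs the tests.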