wangyum commented on a change in pull request #33522: URL: https://github.com/apache/spark/pull/33522#discussion_r743317327

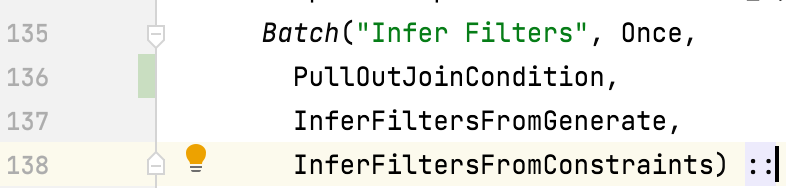

##########
File path: sql/catalyst/src/main/scala/org/apache/spark/sql/catalyst/optimizer/PullOutJoinCondition.scala
##########
@@ -0,0 +1,76 @@
+/*
+ * Licensed to the Apache Software Foundation (ASF) under one or more
+ * contributor license agreements. See the NOTICE file distributed with
+ * this work for additional information regarding copyright ownership.
+ * The ASF licenses this file to You under the Apache License, Version 2.0
+ * (the "License"); you may not use this file except in compliance with
+ * the License. You may obtain a copy of the License at
+ *
+ *    http://www.apache.org/licenses/LICENSE-2.0
+ *
+ * Unless required by applicable law or agreed to in writing, software
+ * distributed under the License is distributed on an "AS IS" BASIS,
+ * WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
+ * See the License for the specific language governing permissions and
+ * limitations under the License.
+ */
+
+package org.apache.spark.sql.catalyst.optimizer
+
+import org.apache.spark.sql.catalyst.expressions.{Alias, Expression, PredicateHelper}
+import org.apache.spark.sql.catalyst.plans.logical.{Join, LogicalPlan, Project}
+import org.apache.spark.sql.catalyst.rules.Rule
+import org.apache.spark.sql.catalyst.trees.TreePattern.JOIN
+
+/**
+ * This rule ensures that [[Join]] condition doesn't contain complex expressions in the
+ * optimization phase.
+ *
+ * Complex condition expressions are pulled out to a [[Project]] node under [[Join]] and are
+ * referenced in join condition.
+ *
+ * {{{
+ *   SELECT * FROM t1 JOIN t2 ON t1.a + 10 = t2.x   ==>
+ *   Project [a#0, b#1, x#2, y#3]
+ *   +- Join Inner, ((spark_catalog.default.t1.a + 10)#8 = x#2)
+ *      :- Project [a#0, b#1, (a#0 + 10) AS (spark_catalog.default.t1.a + 10)#8]
+ *      :  +- Filter isnotnull((a#0 + 10))
+ *      :     +- Relation default.t1[a#0,b#1] parquet
+ *      +- Filter isnotnull(x#2)
+ *         +- Relation default.t2[x#2,y#3] parquet
+ * }}}
+ */
+object PullOutJoinCondition extends Rule[LogicalPlan]
+  with JoinSelectionHelper with PredicateHelper {
+
+  def apply(plan: LogicalPlan): LogicalPlan = plan.transformWithPruning(_.containsPattern(JOIN)) {
+    case j @ Join(left, right, _, Some(condition), _)
+        if j.resolved && !canPlanAsBroadcastHashJoin(j, conf) =>

Review comment:
   Can we put it in `Infer Filters`? This can infer more filters mentioned in https://github.com/apache/spark/pull/28642:
   https://github.com/apache/spark/blob/7f6edf8118b39a5b1854c2d0a1fb9bbbb3c11f86/sql/core/src/test/scala/org/apache/spark/sql/SQLQuerySuite.scala#L4231-L4254

##########
File path: sql/catalyst/src/main/scala/org/apache/spark/sql/catalyst/optimizer/PullOutJoinCondition.scala
##########
@@ -0,0 +1,76 @@
+/*
+ * Licensed to the Apache Software Foundation (ASF) under one or more
+ * contributor license agreements. See the NOTICE file distributed with
+ * this work for additional information regarding copyright ownership.
+ * The ASF licenses this file to You under the Apache License, Version 2.0
+ * (the "License"); you may not use this file except in compliance with
+ * the License. You may obtain a copy of the License at
+ *
+ *    http://www.apache.org/licenses/LICENSE-2.0
+ *
+ * Unless required by applicable law or agreed to in writing, software
+ * distributed under the License is distributed on an "AS IS" BASIS,
+ * WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
+ * See the License for the specific language governing permissions and
+ * limitations under the License.
+ */
+
+package org.apache.spark.sql.catalyst.optimizer
+
+import org.apache.spark.sql.catalyst.expressions.{Alias, Expression, PredicateHelper}
+import org.apache.spark.sql.catalyst.plans.logical.{Join, LogicalPlan, Project}
+import org.apache.spark.sql.catalyst.rules.Rule
+import org.apache.spark.sql.catalyst.trees.TreePattern.JOIN
+
+/**
+ * This rule ensures that [[Join]] condition doesn't contain complex expressions in the
+ * optimization phase.
+ *
+ * Complex condition expressions are pulled out to a [[Project]] node under [[Join]] and are
+ * referenced in join condition.
+ *
+ * {{{
+ *   SELECT * FROM t1 JOIN t2 ON t1.a + 10 = t2.x   ==>
+ *   Project [a#0, b#1, x#2, y#3]
+ *   +- Join Inner, ((spark_catalog.default.t1.a + 10)#8 = x#2)
+ *      :- Project [a#0, b#1, (a#0 + 10) AS (spark_catalog.default.t1.a + 10)#8]
+ *      :  +- Filter isnotnull((a#0 + 10))
+ *      :     +- Relation default.t1[a#0,b#1] parquet
+ *      +- Filter isnotnull(x#2)
+ *         +- Relation default.t2[x#2,y#3] parquet
+ * }}}
+ */
+object PullOutJoinCondition extends Rule[LogicalPlan]
+  with JoinSelectionHelper with PredicateHelper {
+
+  def apply(plan: LogicalPlan): LogicalPlan = plan.transformWithPruning(_.containsPattern(JOIN)) {
+    case j @ Join(left, right, _, Some(condition), _)
+        if j.resolved && !canPlanAsBroadcastHashJoin(j, conf) =>

Review comment:
   Sorry.
   If we need stats, `PullOutJoinCondition` should be after `Early Filter and Projection Push-Down`:
   https://github.com/apache/spark/blob/36b3bbc0aa9f9c39677960cd93f32988c7d7aaca/sql/catalyst/src/main/scala/org/apache/spark/sql/catalyst/optimizer/Optimizer.scala#L211-L214

##########
File path: sql/core/src/test/scala/org/apache/spark/sql/JoinSuite.scala
##########
@@ -1057,7 +1057,7 @@ class JoinSuite extends QueryTest with SharedSparkSession with AdaptiveSparkPlan
       val pythonEvals = collect(joinNode.get) {
         case p: BatchEvalPythonExec => p
       }
-      assert(pythonEvals.size == 2)
+      assert(pythonEvals.size == 4)

Review comment:
   It is because we will infer two `isnotnull(cast(pythonUDF0 as int))`:
   ```
   == Optimized Logical Plan ==
   Project [a#225, b#226, c#236, d#237]
   +- Join Inner, (CAST(udf(cast(a as string)) AS INT)#250 = CAST(udf(cast(c as string)) AS INT)#251)
      :- Project [_1#220 AS a#225, _2#221 AS b#226, cast(pythonUDF0#253 as int) AS CAST(udf(cast(a as string)) AS INT)#250]
      :  +- BatchEvalPython [udf(cast(_1#220 as string))], [pythonUDF0#253]
      :     +- Project [_1#220, _2#221]
      :        +- Filter isnotnull(cast(pythonUDF0#252 as int))
      :           +- BatchEvalPython [udf(cast(_1#220 as string))], [pythonUDF0#252]
      :              +- LocalRelation [_1#220, _2#221]
      +- Project [_1#231 AS c#236, _2#232 AS d#237, cast(pythonUDF0#255 as int) AS CAST(udf(cast(c as string)) AS INT)#251]
         +- BatchEvalPython [udf(cast(_1#231 as string))], [pythonUDF0#255]
            +- Project [_1#231, _2#232]
               +- Filter isnotnull(cast(pythonUDF0#254 as int))
                  +- BatchEvalPython [udf(cast(_1#231 as string))], [pythonUDF0#254]
                     +- LocalRelation [_1#231, _2#232]
   ```

   Before this PR:
   ```
   == Optimized Logical Plan ==
   Project [a#225, b#226, c#236, d#237]
   +- Join Inner, (cast(pythonUDF0#250 as int) = cast(pythonUDF0#251 as int))
      :- BatchEvalPython [udf(cast(a#225 as string))], [pythonUDF0#250]
      :  +- Project [_1#220 AS a#225, _2#221 AS b#226]
      :     +- LocalRelation [_1#220, _2#221]
      +- BatchEvalPython [udf(cast(c#236 as string))], [pythonUDF0#251]
         +- Project [_1#231 AS c#236, _2#232 AS d#237]
            +- LocalRelation [_1#231, _2#232]
   ```

##########
File path: sql/core/src/test/scala/org/apache/spark/sql/JoinSuite.scala
##########
@@ -1057,7 +1057,7 @@ class JoinSuite extends QueryTest with SharedSparkSession with AdaptiveSparkPlan
       val pythonEvals = collect(joinNode.get) {
         case p: BatchEvalPythonExec => p
       }
-      assert(pythonEvals.size == 2)
+      assert(pythonEvals.size == 4)

Review comment:
   Yes.
   If we disable `spark.sql.constraintPropagation.enabled`, the plan is:
   ```
   == Optimized Logical Plan ==
   Project [a#225, b#226, c#236, d#237]
   +- Join Inner, (CAST(udf(cast(a as string)) AS INT)#250 = CAST(udf(cast(c as string)) AS INT)#251)
      :- Project [_1#220 AS a#225, _2#221 AS b#226, cast(pythonUDF0#252 as int) AS CAST(udf(cast(a as string)) AS INT)#250]
      :  +- BatchEvalPython [udf(cast(_1#220 as string))], [pythonUDF0#252]
      :     +- LocalRelation [_1#220, _2#221]
      +- Project [_1#231 AS c#236, _2#232 AS d#237, cast(pythonUDF0#253 as int) AS CAST(udf(cast(c as string)) AS INT)#251]
         +- BatchEvalPython [udf(cast(_1#231 as string))], [pythonUDF0#253]
            +- LocalRelation [_1#231, _2#232]
   ```

##########
File path: sql/catalyst/src/test/scala/org/apache/spark/sql/catalyst/optimizer/PullOutJoinConditionSuite.scala
##########
@@ -0,0 +1,133 @@
+/*
+ * Licensed to the Apache Software Foundation (ASF) under one or more
+ * contributor license agreements. See the NOTICE file distributed with
+ * this work for additional information regarding copyright ownership.
+ * The ASF licenses this file to You under the Apache License, Version 2.0
+ * (the "License"); you may not use this file except in compliance with
+ * the License. You may obtain a copy of the License at
+ *
+ *    http://www.apache.org/licenses/LICENSE-2.0
+ *
+ * Unless required by applicable law or agreed to in writing, software
+ * distributed under the License is distributed on an "AS IS" BASIS,
+ * WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
+ * See the License for the specific language governing permissions and
+ * limitations under the License.
+ */
+
+package org.apache.spark.sql.catalyst.optimizer
+
+import org.apache.spark.sql.catalyst.dsl.expressions._
+import org.apache.spark.sql.catalyst.dsl.plans._
+import org.apache.spark.sql.catalyst.expressions.{Alias, Coalesce, IsNull, Literal, Substring, Upper}
+import org.apache.spark.sql.catalyst.plans._
+import org.apache.spark.sql.catalyst.plans.logical._
+import org.apache.spark.sql.catalyst.rules._
+import org.apache.spark.sql.internal.SQLConf
+
+class PullOutJoinConditionSuite extends PlanTest {
+
+  private object Optimize extends RuleExecutor[LogicalPlan] {
+    val batches =
+      Batch("Pull out join condition", Once,
+        PullOutJoinCondition,
+        CollapseProject) :: Nil
+  }
+
+  private val testRelation = LocalRelation('a.string, 'b.int, 'c.int)
+  private val testRelation1 = LocalRelation('d.string, 'e.int)
+  private val x = testRelation.subquery('x)
+  private val y = testRelation1.subquery('y)
+
+  test("Pull out join keys evaluation(String expressions)") {
+    val joinType = Inner
+    Seq(Upper("y.d".attr), Substring("y.d".attr, 1, 5)).foreach { udf =>
+      val originalQuery = x.join(y, joinType, Option("x.a".attr === udf))
+        .select("x.a".attr, "y.e".attr)
+      val correctAnswer = x.select("x.a".attr, "x.b".attr, "x.c".attr)
+        .join(y.select("y.d".attr, "y.e".attr, Alias(udf, udf.sql)()),
+          joinType, Option("x.a".attr === s"`${udf.sql}`".attr)).select("x.a".attr, "y.e".attr)
+
+      comparePlans(Optimize.execute(originalQuery.analyze), correctAnswer.analyze)
+    }
+  }
+
+  test("Pull out join keys evaluation(null expressions)") {
+    val joinType = Inner
+    val udf = Coalesce(Seq("x.b".attr, "x.c".attr))
+    val originalQuery = x.join(y, joinType, Option(udf === "y.e".attr))
+      .select("x.a".attr, "y.e".attr)
+    val correctAnswer =
+      x.select("x.a".attr, "x.b".attr, "x.c".attr, Alias(udf, udf.sql)()).join(
+        y.select("y.d".attr, "y.e".attr),
+        joinType, Option(s"`${udf.sql}`".attr === "y.e".attr))
+        .select("x.a".attr, "y.e".attr)
+
+    comparePlans(Optimize.execute(originalQuery.analyze), correctAnswer.analyze)
+  }
+
+  test("Pull out join condition contains other predicates") {
+    val udf = Substring("y.d".attr, 1, 5)
+    val joinType = Inner
+    val originalQuery = x.join(y, joinType, Option("x.a".attr === udf && "x.b".attr > "y.e".attr))
+      .select("x.a".attr, "y.e".attr)
+    val correctAnswer =
+      x.select("x.a".attr, "x.b".attr, "x.c".attr).join(
+        y.select("y.d".attr, "y.e".attr, Alias(udf, udf.sql)()),
+        joinType, Option("x.a".attr === s"`${udf.sql}`".attr && "x.b".attr > "y.e".attr))
+        .select("x.a".attr, "y.e".attr)
+
+    comparePlans(Optimize.execute(originalQuery.analyze), correctAnswer.analyze)
+  }
+
+  test("Pull out EqualNullSafe join condition") {
+    withSQLConf(SQLConf.AUTO_BROADCASTJOIN_THRESHOLD.key -> "-1") {

Review comment:
   Removed it.

##########
File path: sql/catalyst/src/test/scala/org/apache/spark/sql/catalyst/optimizer/PullOutJoinConditionSuite.scala
##########
@@ -0,0 +1,133 @@
+/*
+ * Licensed to the Apache Software Foundation (ASF) under one or more
+ * contributor license agreements. See the NOTICE file distributed with
+ * this work for additional information regarding copyright ownership.
+ * The ASF licenses this file to You under the Apache License, Version 2.0
+ * (the "License"); you may not use this file except in compliance with
+ * the License. You may obtain a copy of the License at
+ *
+ *    http://www.apache.org/licenses/LICENSE-2.0
+ *
+ * Unless required by applicable law or agreed to in writing, software
+ * distributed under the License is distributed on an "AS IS" BASIS,
+ * WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
+ * See the License for the specific language governing permissions and
+ * limitations under the License.
+ */
+
+package org.apache.spark.sql.catalyst.optimizer
+
+import org.apache.spark.sql.catalyst.dsl.expressions._
+import org.apache.spark.sql.catalyst.dsl.plans._
+import org.apache.spark.sql.catalyst.expressions.{Alias, Coalesce, IsNull, Literal, Substring, Upper}
+import org.apache.spark.sql.catalyst.plans._
+import org.apache.spark.sql.catalyst.plans.logical._
+import org.apache.spark.sql.catalyst.rules._
+import org.apache.spark.sql.internal.SQLConf
+
+class PullOutJoinConditionSuite extends PlanTest {
+
+  private object Optimize extends RuleExecutor[LogicalPlan] {
+    val batches =
+      Batch("Pull out join condition", Once,
+        PullOutJoinCondition,
+        CollapseProject) :: Nil
+  }
+
+  private val testRelation = LocalRelation('a.string, 'b.int, 'c.int)
+  private val testRelation1 = LocalRelation('d.string, 'e.int)
+  private val x = testRelation.subquery('x)
+  private val y = testRelation1.subquery('y)
+
+  test("Pull out join keys evaluation(String expressions)") {
+    val joinType = Inner
+    Seq(Upper("y.d".attr), Substring("y.d".attr, 1, 5)).foreach { udf =>
+      val originalQuery = x.join(y, joinType, Option("x.a".attr === udf))
+        .select("x.a".attr, "y.e".attr)

Review comment:
   Removed it. But the UDF needs it, otherwise:
   ```
   == FAIL: Plans do not match ===
    'Project [a#0, e#0]                                    'Project [a#0, e#0]
   !+- 'Join Inner, (a#0 = upper(y.d)#0)                   +- 'Join Inner, (upper(d)#0 = a#0)
       :- Project [a#0, b#0, c#0]                             :- Project [a#0, b#0, c#0]
       :  +- LocalRelation <empty>, [a#0, b#0, c#0]           :  +- LocalRelation <empty>, [a#0, b#0, c#0]
   !   +- Project [d#0, e#0, upper(d#0) AS upper(y.d)#0]      +- Project [d#0, e#0, upper(d#0) AS upper(d)#0]
          +- LocalRelation <empty>, [d#0, e#0]                   +- LocalRelation <empty>, [d#0, e#0]
   ```

##########
File path: sql/catalyst/src/test/scala/org/apache/spark/sql/catalyst/optimizer/PullOutJoinConditionSuite.scala
##########
@@ -0,0 +1,133 @@
+/*
+ * Licensed to the Apache Software Foundation (ASF) under one or more
+ * contributor license agreements. See the NOTICE file distributed with
+ * this work for additional information regarding copyright ownership.
+ * The ASF licenses this file to You under the Apache License, Version 2.0
+ * (the "License"); you may not use this file except in compliance with
+ * the License. You may obtain a copy of the License at
+ *
+ *    http://www.apache.org/licenses/LICENSE-2.0
+ *
+ * Unless required by applicable law or agreed to in writing, software
+ * distributed under the License is distributed on an "AS IS" BASIS,
+ * WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
+ * See the License for the specific language governing permissions and
+ * limitations under the License.
+ */
+
+package org.apache.spark.sql.catalyst.optimizer
+
+import org.apache.spark.sql.catalyst.dsl.expressions._
+import org.apache.spark.sql.catalyst.dsl.plans._
+import org.apache.spark.sql.catalyst.expressions.{Alias, Coalesce, IsNull, Literal, Substring, Upper}
+import org.apache.spark.sql.catalyst.plans._
+import org.apache.spark.sql.catalyst.plans.logical._
+import org.apache.spark.sql.catalyst.rules._
+import org.apache.spark.sql.internal.SQLConf
+
+class PullOutJoinConditionSuite extends PlanTest {
+
+  private object Optimize extends RuleExecutor[LogicalPlan] {
+    val batches =
+      Batch("Pull out join condition", Once,
+        PullOutJoinCondition,
+        CollapseProject) :: Nil
+  }
+
+  private val testRelation = LocalRelation('a.string, 'b.int, 'c.int)
+  private val testRelation1 = LocalRelation('d.string, 'e.int)
+  private val x = testRelation.subquery('x)
+  private val y = testRelation1.subquery('y)
+
+  test("Pull out join keys evaluation(String expressions)") {
+    val joinType = Inner
+    Seq(Upper("y.d".attr), Substring("y.d".attr, 1, 5)).foreach { udf =>
+      val originalQuery = x.join(y, joinType, Option("x.a".attr === udf))
+        .select("x.a".attr, "y.e".attr)
+      val correctAnswer = x.select("x.a".attr, "x.b".attr, "x.c".attr)
+        .join(y.select("y.d".attr, "y.e".attr, Alias(udf, udf.sql)()),
+          joinType, Option("x.a".attr === s"`${udf.sql}`".attr)).select("x.a".attr, "y.e".attr)
+
+      comparePlans(Optimize.execute(originalQuery.analyze), correctAnswer.analyze)
+    }
+  }
+
+  test("Pull out join keys evaluation(null expressions)") {
+    val joinType = Inner
+    val udf = Coalesce(Seq("x.b".attr, "x.c".attr))

Review comment:
   Move this test to `SQLQuerySuite` to test that more `IsNotNull` filters are inferred:
   https://github.com/apache/spark/blob/4aebe0a19fdf84516e30ab917eb149d39c4d4ac6/sql/core/src/test/scala/org/apache/spark/sql/SQLQuerySuite.scala#L4231-L4253

-- 
This is an automated message from the Apache Git Service.
To respond to the message, please log on to GitHub and use the
URL above to go to the specific comment.
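
Reading aid for the thread above: the rewrite described in the `PullOutJoinCondition` Scaladoc (a complex join key is computed in a `Project` under the `Join` and then referenced by name) can be sketched on a toy tree. This is only an illustration under stated assumptions. Every type below (`Expr`, `Plan`, `Alias`, `Project`, and so on) is a hypothetical stand-in defined here, not a Catalyst class, and the sketch assumes a single equality condition whose left operand refers only to the left child and whose right operand refers only to the right child. It does not model broadcast-join checks, constraint propagation, or the `isnotnull` inference discussed in the comments.

```scala
// Toy model only: every type here is a hypothetical stand-in, not a Catalyst class.
object PullOutSketch {
  sealed trait Expr
  final case class Attr(name: String) extends Expr
  final case class Lit(value: Int) extends Expr
  final case class Add(left: Expr, right: Expr) extends Expr
  final case class EqualTo(left: Expr, right: Expr) extends Expr

  // A named expression computed by a Project node.
  final case class Alias(child: Expr, name: String)

  sealed trait Plan
  final case class Relation(name: String, columns: Seq[String]) extends Plan
  // Keeps every column of `child` and appends the aliased expressions.
  final case class Project(extra: Seq[Alias], child: Plan) extends Plan
  final case class Join(left: Plan, right: Plan, condition: Expr) extends Plan

  // Render an expression as text; used to derive the alias name.
  def sql(e: Expr): String = e match {
    case Attr(n)       => n
    case Lit(v)        => v.toString
    case Add(l, r)     => s"(${sql(l)} + ${sql(r)})"
    case EqualTo(l, r) => s"(${sql(l)} = ${sql(r)})"
  }

  // Column names produced by a plan node.
  def output(p: Plan): Seq[String] = p match {
    case Relation(_, cols) => cols
    case Project(extra, c) => output(c) ++ extra.map(_.name)
    case Join(l, r, _)     => output(l) ++ output(r)
  }

  // If one side of the equality is not a plain attribute, compute it in a
  // Project above `child` and refer to it by its alias name instead.
  private def pullOutSide(side: Expr, child: Plan): (Expr, Plan) = side match {
    case a: Attr => (a, child)
    case complex =>
      val alias = Alias(complex, sql(complex))
      (Attr(alias.name), Project(Seq(alias), child))
  }

  // Assumption: the left operand belongs to the left child, the right operand
  // to the right child. Any other condition shape is left untouched.
  def pullOut(join: Join): Join = join.condition match {
    case EqualTo(l, r) =>
      val (newL, newLeft) = pullOutSide(l, join.left)
      val (newR, newRight) = pullOutSide(r, join.right)
      Join(newLeft, newRight, EqualTo(newL, newR))
    case _ => join
  }

  def main(args: Array[String]): Unit = {
    val t1 = Relation("t1", Seq("a", "b"))
    val t2 = Relation("t2", Seq("x", "y"))
    // Toy equivalent of: SELECT * FROM t1 JOIN t2 ON t1.a + 10 = t2.x
    println(pullOut(Join(t1, t2, EqualTo(Add(Attr("a"), Lit(10)), Attr("x")))))
  }
}
```

In this toy version the left child gains one extra column named after the expression text, which mirrors why the quoted plans show an added `Project` with an aliased key on the side that had the complex expression. The alias is derived from the rendered expression here purely for readability.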