zhengruifeng edited a comment on pull request #34367:

URL: https://github.com/apache/spark/pull/34367#issuecomment-949516811

a simple skewed window example:

```
import org.apache.spark.sql.expressions.Window
import org.apache.spark.sql.functions._

// 90% of the rows share key1 = 123, so one window partition is heavily skewed
val df1 = spark.range(0, 100000000, 1, 9)
  .select(when('id < 90000000, 123).otherwise('id).as("key1"), 'id as "value1")
  .withColumn("hash1", abs(hash(col("key1"))).mod(1000))

// existing plan
df1.withColumn("rank", row_number().over(Window.partitionBy("hash1").orderBy("value1")))
  .where(col("rank") <= 1)
  .write.mode("overwrite").parquet("/tmp/tmp1")

// same query with the new RankLimit plan enabled
spark.conf.set("spark.sql.rankLimit.enabled", "true")

df1.withColumn("rank", row_number().over(Window.partitionBy("hash1").orderBy("value1")))
  .where(col("rank") <= 1)
  .write.mode("overwrite").parquet("/tmp/tmp2")
```

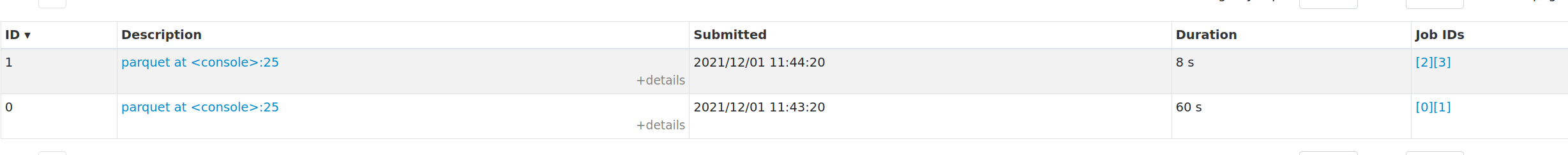

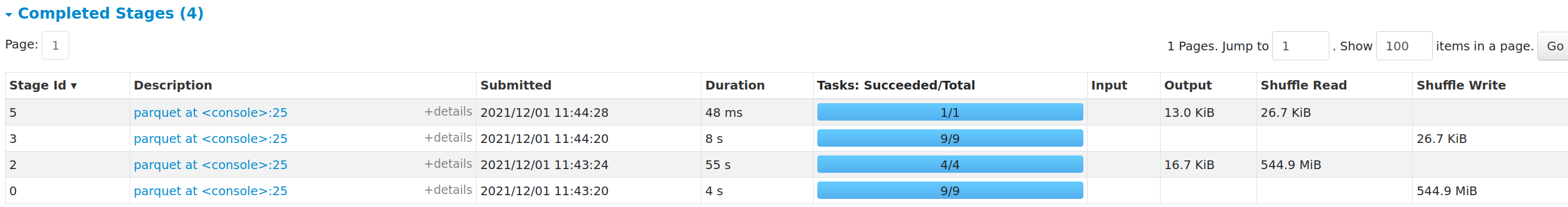

The existing plan took 60 sec, while the new plan with `RankLimit` took only 8 sec, and the shuffle write was reduced from 544.9 MiB to 26.7 KiB.
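
To illustrate why the shuffle write shrinks so much, here is a minimal plain-Scala sketch of a two-phase top-k per key: a partial step inside each input partition, then a final step after the rows are regrouped. It is only a model of the idea, not the PR's implementation. `Row`, `topKPerKey`, and `simulate` are hypothetical names, the row count is scaled down, and `key1 % numBuckets` is a stand-in for Spark's `hash`.

```scala
// Plain-Scala model of Partial -> shuffle -> Final top-k per key.
// All names here are hypothetical; none of this is a Spark API.
object RankLimitSketch {
  final case class Row(key1: Long, value1: Long, hash1: Int)

  // Keep the k rows with the smallest value1 per hash1 (i.e. row_number() <= k).
  def topKPerKey(rows: Seq[Row], k: Int): Seq[Row] =
    rows.groupBy(_.hash1).values.flatMap(_.sortBy(_.value1).take(k)).toSeq

  // Returns (rows shuffled without the partial step,
  //          rows shuffled with the partial step,
  //          final result).
  def simulate(numRows: Int, numSplits: Int, numBuckets: Int, k: Int): (Int, Int, Seq[Row]) = {
    val skewCutoff = numRows / 10 * 9 // first 90% of ids share key1 = 123
    val rows = (0 until numRows).map { i =>
      val id = i.toLong
      val key1 = if (i < skewCutoff) 123L else id
      Row(key1, id, (key1 % numBuckets).toInt) // stand-in for abs(hash(key1)) mod 1000
    }

    // Contiguous input partitions, like the splits of spark.range(...).
    val inputPartitions = rows.grouped(math.max(1, numRows / numSplits)).toSeq

    // Partial step: each input partition keeps at most k rows per hash1.
    val shuffled = inputPartitions.flatMap(topKPerKey(_, k))

    // Final step: after the shuffle, pick the top k per hash1 again.
    val result = topKPerKey(shuffled, k)

    (rows.size, shuffled.size, result)
  }

  def main(args: Array[String]): Unit = {
    val (before, after, result) = simulate(90000, 9, 1000, 1)
    println(s"rows shuffled without partial step: $before")
    println(s"rows shuffled with partial step:    $after")
    println(s"rows in final result:               ${result.size}")
  }
}
```

In this model the number of rows crossing the shuffle is bounded by `numSplits * numBuckets * k`, regardless of how skewed the input is. For the example above (9 splits, 1000 values of `hash1`, limit 1) that bound would be 9,000 rows instead of 100,000,000.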

```
== Physical Plan ==
Execute InsertIntoHadoopFsRelationCommand (20)
+- AdaptiveSparkPlan (19)
   +- == Final Plan ==
      * Filter (12)
      +- Window (11)
         +- RankLimit (10)
            +- * Sort (9)
               +- AQEShuffleRead (8)
                  +- ShuffleQueryStage (7)
                     +- Exchange (6)
                        +- RankLimit (5)
                           +- * Sort (4)
                              +- * Project (3)
                                 +- * Project (2)
                                    +- * Range (1)
   +- == Initial Plan ==
      Filter (18)
      +- Window (17)
         +- RankLimit (16)
            +- Sort (15)
               +- Exchange (14)
                  +- RankLimit (13)
                     +- Sort (4)
                        +- Project (3)
                           +- Project (2)
                              +- Range (1)

(1) Range [codegen id : 1]
Output [1]: [id#0L]
Arguments: Range (0, 100000000, step=1, splits=Some(9))

(2) Project [codegen id : 1]
Output [2]: [CASE WHEN (id#0L < 90000000) THEN 123 ELSE id#0L END AS key1#2L, id#0L AS value1#3L]
Input [1]: [id#0L]

(3) Project [codegen id : 1]
Output [3]: [key1#2L, value1#3L, (abs(hash(key1#2L, 42), false) % 1000) AS hash1#6]
Input [2]: [key1#2L, value1#3L]

(4) Sort [codegen id : 1]
Input [3]: [key1#2L, value1#3L, hash1#6]
Arguments: [hash1#6 ASC NULLS FIRST], false, 0

(5) RankLimit
Input [3]: [key1#2L, value1#3L, hash1#6]
Arguments: [hash1#6], [value1#3L ASC NULLS FIRST], row_number(), 1, Partial

(6) Exchange
Input [3]: [key1#2L, value1#3L, hash1#6]
Arguments: hashpartitioning(hash1#6, 200), ENSURE_REQUIREMENTS, [id=#119]

(7) ShuffleQueryStage
Output [3]: [key1#2L, value1#3L, hash1#6]
Arguments: 0

(8) AQEShuffleRead
Input [3]: [key1#2L, value1#3L, hash1#6]
Arguments: coalesced

(9) Sort [codegen id : 2]
Input [3]: [key1#2L, value1#3L, hash1#6]
Arguments: [hash1#6 ASC NULLS FIRST], false, 0

(10) RankLimit
Input [3]: [key1#2L, value1#3L, hash1#6]
Arguments: [hash1#6], [value1#3L ASC NULLS FIRST], row_number(), 1, Final

(11) Window
Input [3]: [key1#2L, value1#3L, hash1#6]
Arguments: [row_number() windowspecdefinition(hash1#6, value1#3L ASC NULLS FIRST, specifiedwindowframe(RowFrame, unboundedpreceding$(), currentrow$())) AS rank#21], [hash1#6], [value1#3L ASC NULLS FIRST]

(12) Filter [codegen id : 3]
Input [4]: [key1#2L, value1#3L, hash1#6, rank#21]
Condition : (rank#21 <= 1)

(13) RankLimit
Input [3]: [key1#2L, value1#3L, hash1#6]
Arguments: [hash1#6], [value1#3L ASC NULLS FIRST], row_number(), 1, Partial

(14) Exchange
Input [3]: [key1#2L, value1#3L, hash1#6]
Arguments: hashpartitioning(hash1#6, 200), ENSURE_REQUIREMENTS, [id=#100]

(15) Sort
Input [3]: [key1#2L, value1#3L, hash1#6]
Arguments: [hash1#6 ASC NULLS FIRST], false, 0

(16) RankLimit
Input [3]: [key1#2L, value1#3L, hash1#6]
Arguments: [hash1#6], [value1#3L ASC NULLS FIRST], row_number(), 1, Final

(17) Window
Input [3]: [key1#2L, value1#3L, hash1#6]
Arguments: [row_number() windowspecdefinition(hash1#6, value1#3L ASC NULLS FIRST, specifiedwindowframe(RowFrame, unboundedpreceding$(), currentrow$())) AS rank#21], [hash1#6], [value1#3L ASC NULLS FIRST]

(18) Filter
Input [4]: [key1#2L, value1#3L, hash1#6, rank#21]
Condition : (rank#21 <= 1)

(19) AdaptiveSparkPlan
Output [4]: [key1#2L, value1#3L, hash1#6, rank#21]
Arguments: isFinalPlan=true

(20) Execute InsertIntoHadoopFsRelationCommand
Input [4]: [key1#2L, value1#3L, hash1#6, rank#21]
Arguments: file:/tmp/tmp2, false, Parquet, [path=/tmp/tmp2], Overwrite, [key1, value1, hash1, rank]
```
