puneetguptanitj opened a new pull request, #40909:

URL: https://github.com/apache/spark/pull/40909

### What changes were proposed in this pull request?

The following describes the changes made. All changes are behind their respective configuration properties.

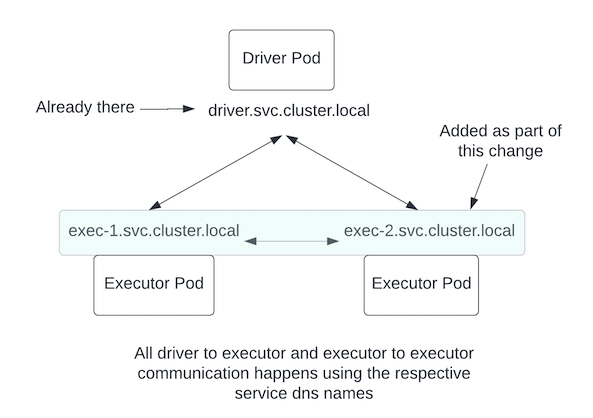

1. Followed the same model as the driver to create Service (SVC) records for executors as well. The lifecycle of each executor's SVC record is tied to that executor's lifecycle. When registering with the driver, executors now supply their SVC hostname. **Controlled by a new configuration (added as part of this PR): `spark.kubernetes.executor.service`**
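As a rough illustration of what a per-executor SVC record could look like, the Python sketch below builds a headless Service manifest (as a plain dict) that selects exactly one executor pod. It is not the PR's implementation. The naming scheme, the selector label keys (assumed to match the labels Spark puts on executor pods) and the port names are assumptions, and the `ownerReferences` entry is one common Kubernetes mechanism (assumed here) for tying the Service's lifecycle to the executor pod, so that the Service is garbage-collected when the pod is deleted.

```python
from typing import Dict


def executor_service_manifest(app_id: str, exec_id: str, namespace: str,
                              pod_name: str, pod_uid: str,
                              ports: Dict[str, int]) -> dict:
    """Build a headless Service that selects a single executor pod.

    Hypothetical sketch: names and label keys are assumptions.
    """
    name = f"{app_id}-exec-{exec_id}-svc"  # hypothetical naming scheme
    return {
        "apiVersion": "v1",
        "kind": "Service",
        "metadata": {
            "name": name,
            "namespace": namespace,
            # Owner reference: deleting the executor pod lets the Kubernetes
            # garbage collector delete this Service too.
            "ownerReferences": [{
                "apiVersion": "v1",
                "kind": "Pod",
                "name": pod_name,
                "uid": pod_uid,
            }],
        },
        "spec": {
            "clusterIP": "None",  # headless, like the driver's Service
            # Assumed pod labels identifying one executor of one application.
            "selector": {
                "spark-app-selector": app_id,
                "spark-exec-id": exec_id,
            },
            "ports": [{"name": n, "port": p, "targetPort": p}
                      for n, p in ports.items()],
        },
    }


def executor_service_hostname(manifest: dict) -> str:
    """In-cluster DNS name the executor could report to the driver."""
    md = manifest["metadata"]
    return f"{md['name']}.{md['namespace']}.svc"
```

For example, an executor with ID `1` in namespace `default` would report `spark-abc-exec-1-svc.default.svc` instead of its pod IP when registering with the driver. Real Service names must also be valid DNS-1035 labels (at most 63 characters), which this sketch does not check.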

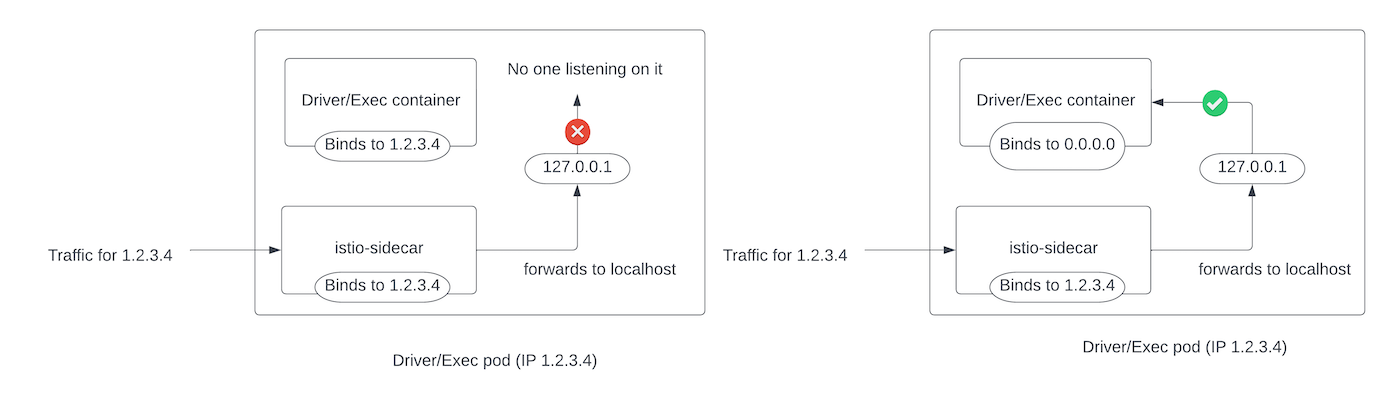

2. Allowed drivers and executors to bind to all IPs. **Controlled by the existing properties `spark.driver.bindAddress` and `spark.executor.bindAddress`. This PR makes `0.0.0.0` a permissible value.**
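To see why `0.0.0.0` matters, the Python sketch below listens on a given address and then connects through `127.0.0.1`, mimicking a sidecar that forwards inbound traffic to localhost. It is a self-contained simulation with no Istio or Spark involved, and `reachable_via_localhost` is a hypothetical name. On the Spark side, the corresponding settings would be `spark.driver.bindAddress=0.0.0.0` and `spark.executor.bindAddress=0.0.0.0`.

```python
import socket


def reachable_via_localhost(bind_addr: str) -> bool:
    """Listen on bind_addr, then try to connect via 127.0.0.1, the way a
    sidecar forwards inbound traffic to localhost."""
    srv = socket.socket(socket.AF_INET, socket.SOCK_STREAM)
    try:
        srv.bind((bind_addr, 0))  # port 0: let the OS pick a free port
        srv.listen(1)
        port = srv.getsockname()[1]
        try:
            # The kernel completes the TCP handshake for a listening socket,
            # so no accept() call is needed just to test reachability.
            with socket.create_connection(("127.0.0.1", port), timeout=1):
                return True
        except OSError:  # e.g. ConnectionRefusedError
            return False
    finally:
        srv.close()
```

`reachable_via_localhost("0.0.0.0")` returns `True`. Run inside a pod with the pod's own IP as `bind_addr`, the connection to `127.0.0.1` would be expected to be refused, because nothing is listening on the loopback interface for that port.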

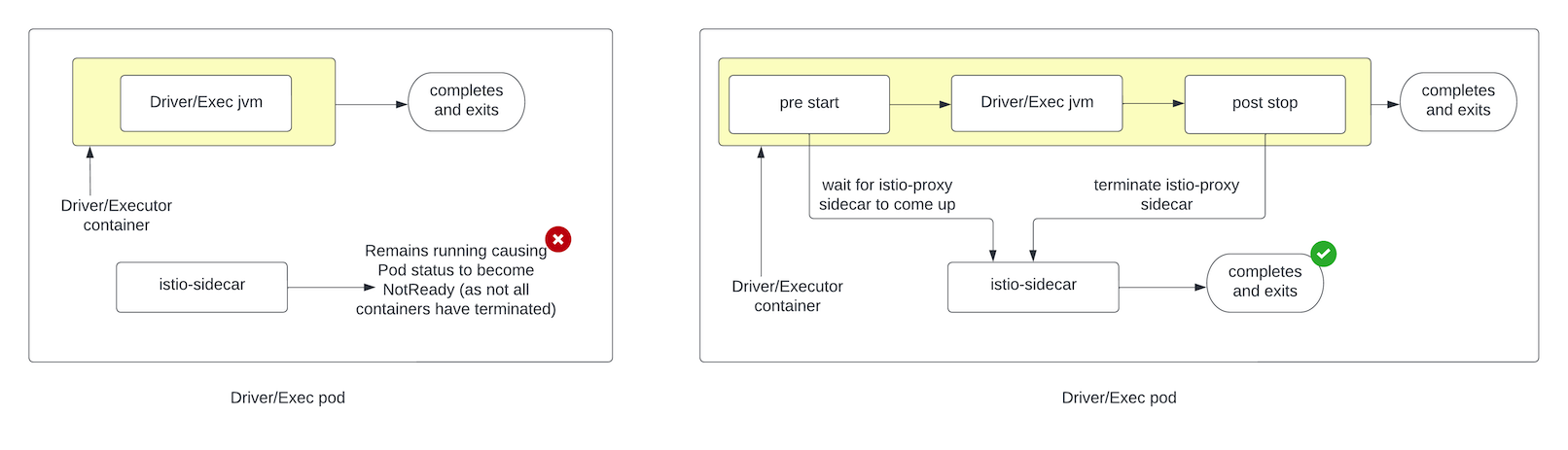

3. Added support for providing:

   1. A pre-start script, which is run before the driver/executor JVM is started. This script can do any setup, e.g. waiting for the istio-proxy sidecar to be up.
   2. A post-stop script, which is run after the driver/executor JVM completes. This script can do any cleanup; for example, in our case it makes a REST call to shut down the sidecar.

   These scripts are not part of the PR because the onus of providing any specialized cleanup lies with the client. In our case they are provided by Proton. **Controlled by new configurations (added as part of this PR): `spark.kubernetes.pre.start.script` and `spark.kubernetes.post.stop.script`, which, when set, are executed before and after the driver/executor JVM, respectively.**
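The pre-start/post-stop ordering can be sketched as a small Python wrapper around the JVM command. This is an assumption about the semantics, not the PR's entrypoint code. Hook commands are passed as argument lists, a failing pre-start script prevents the launch, and the post-stop script runs on every path. `run_with_hooks` is a hypothetical name.

```python
import subprocess
from typing import Optional, Sequence


def run_with_hooks(main_cmd: Sequence[str],
                   pre_start: Optional[Sequence[str]] = None,
                   post_stop: Optional[Sequence[str]] = None) -> int:
    """Run pre_start, then main_cmd, then post_stop.

    Returns main_cmd's exit code (hypothetical sketch).
    """
    try:
        if pre_start:
            # Setup, e.g. waiting for istio-proxy. A failure raises
            # CalledProcessError and the main command is never launched.
            subprocess.run(list(pre_start), check=True)
        return subprocess.run(list(main_cmd)).returncode
    finally:
        if post_stop:
            # Cleanup (e.g. stopping the sidecar) runs on every path,
            # including a failed pre-start or a failed main command.
            subprocess.run(list(post_stop))
```

Running the post-stop hook in a `finally` block is the key design choice: a sidecar-shutdown hook has to fire even when the driver or executor fails, otherwise the sidecar keeps the pod alive. The wrapper returns the main process's exit code, not the hook's, so the container status still reflects the JVM's outcome.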

### Why are the changes needed?

Spark allows using Kubernetes as the resource scheduler; however, out of the box it does not work with Kubernetes clusters using the Istio service mesh in strict mTLS mode because:

1. For Istio to work, it needs to know the network identity of all possible network paths. Currently, a network identity (through a K8s Service record) is created only for the driver pod, not for executors.

2. Istio adds an istio-proxy sidecar to every pod, and this sidecar handles all pod-to-pod networking. However, the sidecar binds to the Pod IP and then sends ingress traffic to localhost (if `PILOT_ENABLE_INBOUND_PASSTHROUGH` is set to false). Therefore, for ingress traffic to correctly reach application processes (like the driver and executor JVMs), the processes need to bind to all IPs and not just the Pod IP; otherwise, traffic routed to localhost by the sidecar would not reach them. Off the shelf, Spark allows the driver and executors to bind only to the Pod IP and therefore does not work with Istio.

3. Unlike the Istio sidecar, the driver/executor containers in the pod can finish. When they do, the pod enters the NotReady state while the sidecar continues to run. Therefore, once the driver/executor containers are done, they need to signal the Istio sidecar to terminate as well.
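For the third point, a post-stop script typically tells the sidecar to exit. In a typical Istio installation, the `pilot-agent` in the istio-proxy container accepts `POST /quitquitquit` on port 15020 for this purpose. The Python sketch below assumes that endpoint and port. `stop_sidecar` is a hypothetical helper and is not part of this PR, which leaves such scripts to the client.

```python
import urllib.request

# Assumed pilot-agent admin address in a typical Istio install.
ISTIO_AGENT = "http://127.0.0.1:15020"


def stop_sidecar(base_url: str = ISTIO_AGENT, timeout: float = 5.0) -> bool:
    """Ask the istio-proxy sidecar to exit; return True on an HTTP 2xx reply."""
    req = urllib.request.Request(base_url + "/quitquitquit", data=b"",
                                 method="POST")
    try:
        with urllib.request.urlopen(req, timeout=timeout) as resp:
            return 200 <= resp.status < 300
    except OSError:  # URLError/HTTPError (both OSError subclasses), timeouts
        return False
```

A matching pre-start script could poll the sidecar's readiness endpoint (commonly `http://127.0.0.1:15021/healthz/ready`) before launching the JVM. Check both ports against the Istio version in use.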

### Does this PR introduce *any* user-facing change?

Yes, it adds configs that can be used to run on a K8s cluster using the Istio service mesh with strict mTLS.
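Taken together, a submission against a strict-mTLS Istio cluster might pass the settings below. The Python sketch only assembles `spark-submit` `--conf` arguments. The property names come from this PR and are not necessarily present in a released Spark version. The value `true` for `spark.kubernetes.executor.service` and the script paths in the usage example are assumptions, and `istio_conf_args` is a hypothetical name.

```python
from typing import List


def istio_conf_args(pre_start_script: str, post_stop_script: str) -> List[str]:
    """Assemble spark-submit --conf arguments (hypothetical sketch)."""
    conf = {
        # New in this PR: one Service record per executor (boolean value assumed).
        "spark.kubernetes.executor.service": "true",
        # Existing properties; this PR makes 0.0.0.0 (all IPs) permissible.
        "spark.driver.bindAddress": "0.0.0.0",
        "spark.executor.bindAddress": "0.0.0.0",
        # New in this PR: hooks run before/after the driver/executor JVM.
        "spark.kubernetes.pre.start.script": pre_start_script,
        "spark.kubernetes.post.stop.script": post_stop_script,
    }
    args: List[str] = []
    for key, value in conf.items():
        args += ["--conf", f"{key}={value}"]
    return args
```

For example, `istio_conf_args("/opt/hooks/wait-for-sidecar.sh", "/opt/hooks/stop-sidecar.sh")` (hypothetical paths inside the container image) yields ten arguments to append to the `spark-submit` command line.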

### How was this patch tested?

- Added new unit tests

- Tested on a strict MTLS Istio Kubernetes cluster.

--

This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment.
