GitHub user cfangplus opened a pull request:

https://github.com/apache/spark/pull/22335

[SPARK-25091][SQL] reduce the storage memory in Executor Tab when unpersist rdd

@zsxwing

@vanzin

@attilapiros

## What changes were proposed in this pull request?

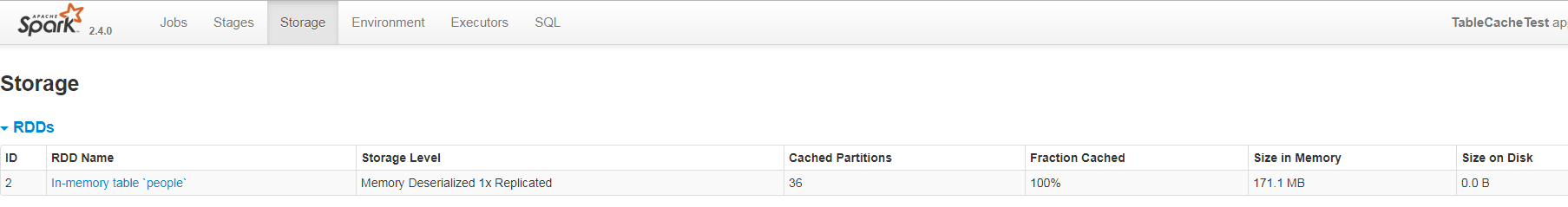

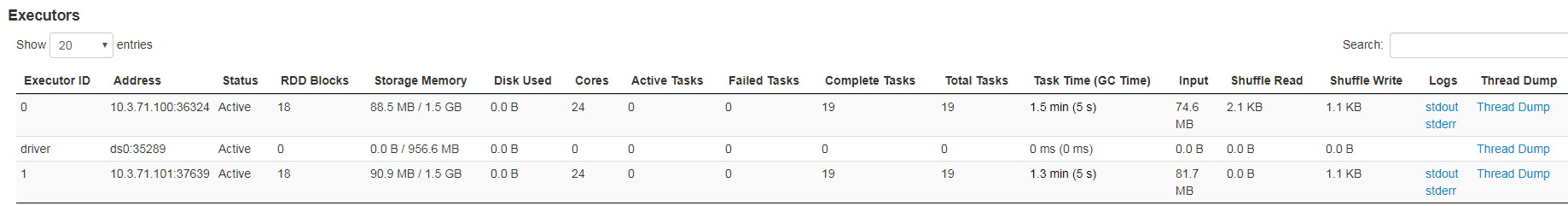

This is a UI issue. When an RDD is unpersisted or a table is uncached with UNCACHE TABLE, the storage memory size shown in the Executor tab is not reduced. This change fixes that as follows:

1. BlockManager sends the block status to BlockManagerMaster after it removes the block.

2. AppStatusListener receives the block-removal status and updates the LiveRDD and LiveExecutor info, so the storage memory size and RDD block count in the Executor tab change when an RDD is unpersisted.
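The two steps above can be illustrated with a small toy model. This is only a sketch of the bookkeeping idea, not the code in this patch and not Spark's actual classes: the class names are borrowed from the description, while every method name, field, and the convention that a size of 0 means "block removed" are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class LiveExecutor:
    """Toy stand-in for the per-executor state shown in the Executor tab."""
    executor_id: str
    memory_used: int = 0
    rdd_blocks: int = 0


@dataclass
class LiveRDD:
    """Toy stand-in for the per-RDD state shown in the Storage tab."""
    rdd_id: int
    memory_used: int = 0


class AppStatusListener:
    """Hypothetical listener: keeps UI state in sync with block updates."""

    def __init__(self):
        self.executors = {}
        self.rdds = {}
        self._sizes = {}  # (executor_id, rdd_id, partition) -> last reported bytes

    def on_block_updated(self, executor_id, rdd_id, partition, mem_size):
        # Assumption: mem_size == 0 means the block was removed.
        executor = self.executors.setdefault(executor_id, LiveExecutor(executor_id))
        rdd = self.rdds.setdefault(rdd_id, LiveRDD(rdd_id))
        key = (executor_id, rdd_id, partition)
        old_size = self._sizes.pop(key, 0)
        if mem_size > 0:
            self._sizes[key] = mem_size
        # Step 2: apply the difference, so a removal lowers the totals.
        delta = mem_size - old_size
        executor.memory_used += delta
        rdd.memory_used += delta
        executor.rdd_blocks += int(mem_size > 0) - int(old_size > 0)


class BlockManagerMaster:
    """Hypothetical master: forwards every status report to the listener."""

    def __init__(self, listener):
        self.listener = listener

    def update_block_info(self, executor_id, rdd_id, partition, mem_size):
        self.listener.on_block_updated(executor_id, rdd_id, partition, mem_size)


class BlockManager:
    """Hypothetical executor-side store of cached RDD blocks."""

    def __init__(self, executor_id, master):
        self.executor_id = executor_id
        self.master = master
        self.blocks = {}  # (rdd_id, partition) -> bytes

    def put_block(self, rdd_id, partition, mem_size):
        self.blocks[(rdd_id, partition)] = mem_size
        self.master.update_block_info(self.executor_id, rdd_id, partition, mem_size)

    def remove_rdd(self, rdd_id):
        for key in [k for k in self.blocks if k[0] == rdd_id]:
            del self.blocks[key]
            # Step 1: report the removal to the master after removing the block.
            self.master.update_block_info(self.executor_id, rdd_id, key[1], 0)
```

In this model, omitting the report inside `remove_rdd` reproduces the symptom described above: the blocks are gone from the executor, but the listener's `memory_used` and `rdd_blocks` stay at their old values.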

## How was this patch tested?

Cache a table, then unpersist it, and watch the Storage and Executor tabs in the Web UI.
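A manual check along these lines could be scripted roughly as follows. This is a sketch, not the procedure the author ran: the function name, the table name, the row count, and the `pause` hook are all hypothetical, and `spark` is assumed to be an existing PySpark `SparkSession`.

```python
def cache_then_uncache(spark, table="spark_25091_demo", rows=1_000_000,
                       pause=lambda message: None):
    """Cache a temp view, then uncache it, pausing so the Web UI can be inspected.

    `spark` is assumed to be a SparkSession; `pause` is a hypothetical hook
    that is called with a message at each point worth checking in the UI.
    """
    # Register some data under a name that SQL statements can refer to.
    spark.range(0, rows).createOrReplaceTempView(table)

    # CACHE TABLE is eager unless LAZY is given, so blocks are stored now.
    spark.sql(f"CACHE TABLE {table}")
    cached_after_cache = spark.catalog.isCached(table)
    pause("Cached: check storage memory in the Storage and Executor tabs.")

    # After this statement the storage memory in the Executor tab should drop.
    spark.sql(f"UNCACHE TABLE {table}")
    cached_after_uncache = spark.catalog.isCached(table)
    pause("Uncached: check that the Executor tab shows less storage memory.")

    return cached_after_cache, cached_after_uncache
```

Passing Python's built-in `input` as `pause` makes the script wait for Enter at each step, which leaves time to look at the Web UI before continuing.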

You can merge this pull request into a Git repository by running:

$ git pull https://github.com/cfangplus/spark master

Alternatively you can review and apply these changes as the patch at:

https://github.com/apache/spark/pull/22335.patch

To close this pull request, make a commit to your master/trunk branch

with (at least) the following in the commit message:

This closes #22335

----

commit 5955fa6c6daa3d345046fc1e1fdc6e7eb73cd5e2

Author: cfangplus <cfang1109@...>

Date: 2018-09-04T13:14:12Z

[SPARK-25091][WEB UI] reduce the storage memory in Executor Tab when

unpersist rdd

----
