Github user phegstrom commented on a diff in the pull request:

https://github.com/apache/spark/pull/22227#discussion_r215764191

--- Diff: python/pyspark/sql/functions.py ---

@@ -1669,20 +1669,33 @@ def repeat(col, n):

return Column(sc._jvm.functions.repeat(_to_java_column(col), n))

-@since(1.5)

+@since(2.4)

@ignore_unicode_prefix

-def split(str, pattern):

+def split(str, pattern, limit=-1):

"""

- Splits str around pattern (pattern is a regular expression).

+ Splits str around matches of the given pattern.

+

+ :param str: a string expression to split

+ :param pattern: a string representing a regular expression. The regex

string should be

+ a Java regular expression.

+ :param limit: an integer expression which controls the number of times

the pattern is applied.

- .. note:: pattern is a string represent the regular expression.

+ * ``limit > 0``: The resulting array's length will not be more

than `limit`, and the

+ resulting array's last entry will contain all

input beyond the last

+ matched pattern.

+ * ``limit <= 0``: `pattern` will be applied as many times as

possible, and the resulting

+ array can be of any size.

--- End diff --

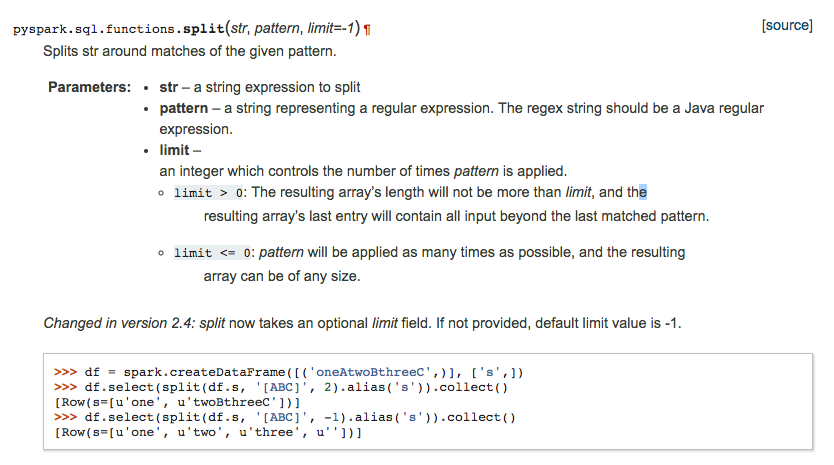

I did, see attached! Let me know what you think (unsure why initial

description of limit starts on a new line):

---

---------------------------------------------------------------------

To unsubscribe, e-mail: [email protected]

For additional commands, e-mail: [email protected]