Deegue commented on a change in pull request #23559: [SPARK-26630][SQL] Fix ClassCastException in TableReader while creating HadoopRDD

URL: https://github.com/apache/spark/pull/23559#discussion_r249310923

##########

File path: sql/hive/src/main/scala/org/apache/spark/sql/hive/TableReader.scala

##########

@@ -289,15 +287,28 @@ class HadoopTableReader(

}

/**

- * Creates a HadoopRDD based on the broadcasted HiveConf and other job properties that will be

+ * The entry of creating a RDD.

+ */

+ private def createHadoopRDD(

+ inputClassName: String, localTableDesc: TableDesc, inputPathStr: String): RDD[Writable] = {

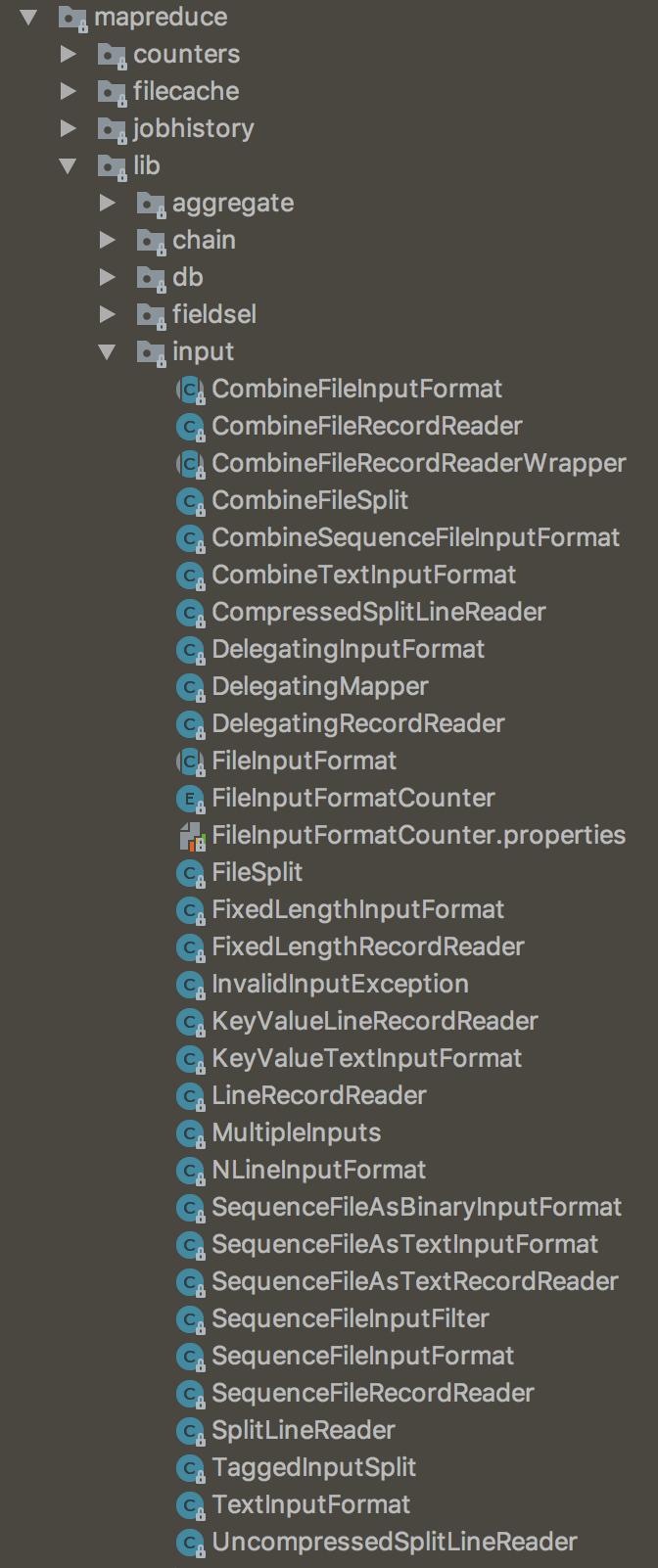

+ if (classOf[org.apache.hadoop.mapreduce.InputFormat[_, _]]

Review comment:

I think we need this check because `hiveTable.getInputFormatClass` can return many different classes.

It's difficult to enumerate every input format class, and similar usage already exists in `org.apache.spark.scheduler.InputFormatInfo` (lines 71 and 76).
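
To illustrate the idea, here is a minimal sketch of dispatching on the class hierarchy with `isAssignableFrom` instead of listing concrete input format classes. The traits and classes below (`OldApiInputFormat`, `NewApiInputFormat`, `MyOldTextFormat`, `MyNewTextFormat`, `InputFormatDispatch`) are hypothetical stand-ins so the example runs without Hadoop on the classpath; they are not the code in this PR.

```scala
// Hypothetical stand-ins for org.apache.hadoop.mapred.InputFormat (old API)
// and org.apache.hadoop.mapreduce.InputFormat (new API).
trait OldApiInputFormat[K, V]
abstract class NewApiInputFormat[K, V]

// Hypothetical concrete formats, standing in for whatever class
// a table's metadata happens to name.
class MyOldTextFormat extends OldApiInputFormat[Long, String]
class MyNewTextFormat extends NewApiInputFormat[Long, String]

object InputFormatDispatch {
  sealed trait Api
  case object OldApi extends Api
  case object NewApi extends Api

  // Decide which API family a class belongs to by checking its supertypes,
  // so new subclasses are handled without updating a hard-coded list.
  def apiOf(cls: Class[_]): Api =
    if (classOf[NewApiInputFormat[_, _]].isAssignableFrom(cls)) NewApi
    else if (classOf[OldApiInputFormat[_, _]].isAssignableFrom(cls)) OldApi
    else throw new IllegalArgumentException(s"Not an input format: ${cls.getName}")
}
```

With a check like this, any subclass of either base type is routed correctly, which is the reason for preferring it over matching on specific class names.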

