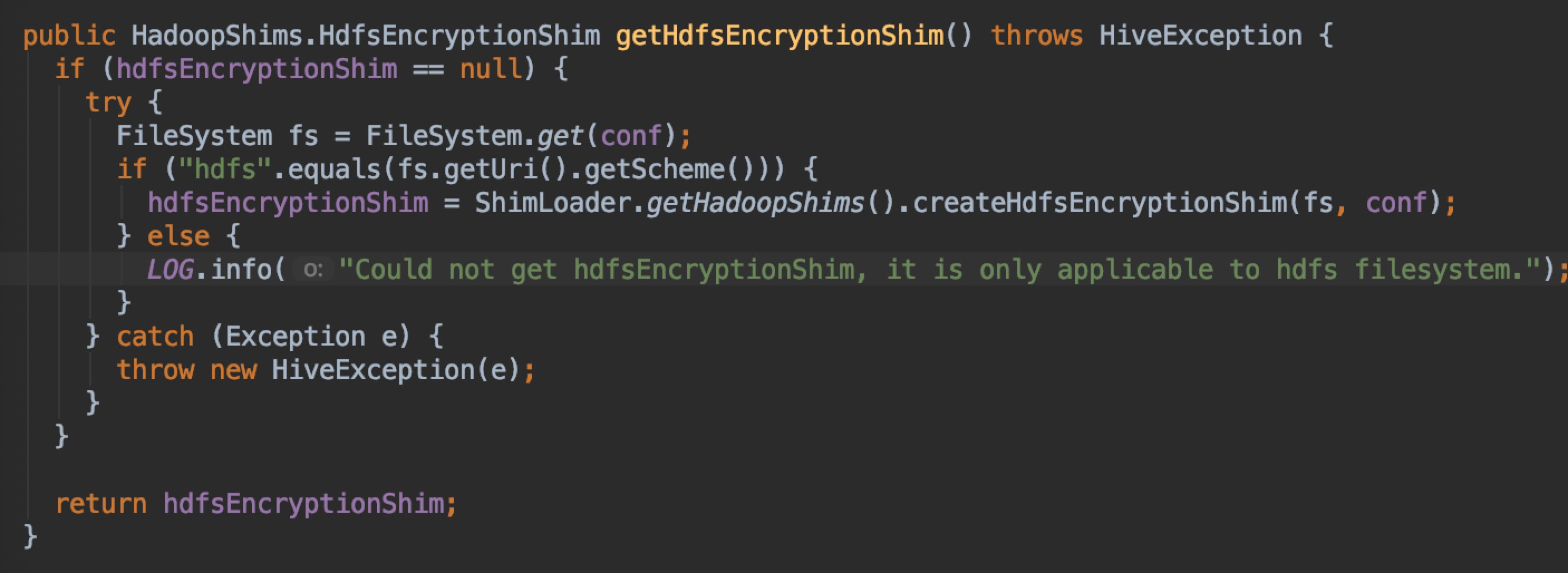

AngersZhuuuu opened a new pull request #25218: [SPARK-21067][SQL][FOLLOW-UP] fix sts FileSystem closed error when to call Hive.moveFile
URL: https://github.com/apache/spark/pull/25218

## What changes were proposed in this pull request?

Suppose a Spark Thrift Server (STS) session that has executed an insert-type SQL statement is closed. Any other session that then runs CTAS/INSERT and triggers `Hive.moveFile` fails: `DFSClient` runs `checkOpen` and throws `java.io.IOException: Filesystem closed`.

**Root cause**: The first time a CTAS/INSERT statement is executed, it calls `Hive.moveFile`, which initializes the field `SessionState.hdfsEncryptionShim`. Initializing this field creates a `FileSystem` instance. That `FileSystem` belongs to the current `HiveSessionImplwithUGI.sessionUgi`, so when this session is closed, `FileSystem.closeAllForUGI(sessionUgi)` closes it as well. When another session later runs CTAS/INSERT, it still goes through this already-closed `FileSystem`, and the error above is thrown.

Some readers may wonder why HiveServer2 does not have this problem:

- In HiveServer2, each session has its own `SessionState`, so closing the current session's `FileSystem` is fine.
- In Spark Thrift Server, all sessions interact with Hive through a single `HiveClientImpl`, which has only one `SessionState`. Every call into `HiveClientImpl` first goes through **withHiveState**, which sets `HiveClientImpl`'s `SessionState` as the current thread's session state. As a result, all sessions share the same `hdfsEncryptionShim`.

## How was this patch tested?

Manually tested.
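The object lifecycle described in the root cause can be reproduced with a small, self-contained Java model. This is a toy sketch under stated assumptions and is not Hadoop, Hive or Spark code. `ToyFileSystem`, `ToyFsCache`, `ToySessionState` and the toy `moveFile` are hypothetical stand-ins for the HDFS client, the per-user `FileSystem` cache, `SessionState` with its lazily initialized `hdfsEncryptionShim`, and `Hive.moveFile`. Users are modeled as plain strings rather than UGIs. The model runs the same sequence (one session inserts and is closed, then a second session inserts) twice. The first run uses a single shared session state, as in STS. The second run gives each session its own session state, as in HiveServer2.

```java
import java.io.IOException;
import java.util.HashMap;
import java.util.Map;

// Toy model (NOT Hadoop/Hive/Spark code); every name below is a hypothetical stand-in.
public class FsClosedDemo {

    // Stand-in for an HDFS client: each operation first checks the client is still open.
    static final class ToyFileSystem {
        private boolean open = true;

        void checkOpen() throws IOException {
            if (!open) {
                throw new IOException("Filesystem closed");
            }
        }

        void rename(String src, String dst) throws IOException {
            checkOpen(); // the toy models only the open check, not the rename itself
        }

        void close() {
            open = false;
        }
    }

    // Stand-in for a FileSystem cache with one entry per user.
    static final class ToyFsCache {
        private final Map<String, ToyFileSystem> byUser = new HashMap<>();

        ToyFileSystem get(String user) {
            return byUser.computeIfAbsent(user, u -> new ToyFileSystem());
        }

        // Closing a session closes (and evicts) the file system cached for its user.
        void closeAllForUser(String user) {
            ToyFileSystem fs = byUser.remove(user);
            if (fs != null) {
                fs.close();
            }
        }
    }

    // Stand-in for SessionState: the shim's file system is created lazily, only once.
    static final class ToySessionState {
        private ToyFileSystem cachedShimFs;

        ToyFileSystem shimFs(ToyFsCache cache, String currentUser) {
            if (cachedShimFs == null) {
                cachedShimFs = cache.get(currentUser); // bound to the FIRST caller's user
            }
            return cachedShimFs;
        }
    }

    // Stand-in for Hive.moveFile: it goes through the session state's shim file system.
    static void moveFile(ToySessionState state, ToyFsCache cache, String user) throws IOException {
        state.shimFs(cache, user).rename("/tmp/staging", "/warehouse/t");
    }

    // "alice" inserts and closes her session, then "bob" inserts.
    // Returns null on success, otherwise the message of the IOException that was thrown.
    static String run(boolean sharedSessionState) {
        ToyFsCache cache = new ToyFsCache();
        ToySessionState shared = new ToySessionState();
        ToySessionState aliceState = sharedSessionState ? shared : new ToySessionState();
        ToySessionState bobState = sharedSessionState ? shared : new ToySessionState();
        try {
            moveFile(aliceState, cache, "alice"); // first INSERT initializes the shim
            cache.closeAllForUser("alice");       // alice's session is closed
            moveFile(bobState, cache, "bob");     // another session's INSERT
            return null;
        } catch (IOException e) {
            return e.getMessage();
        }
    }

    public static void main(String[] args) {
        System.out.println("shared SessionState (STS-like): " + run(true));
        System.out.println("per-session SessionState (HiveServer2-like): " + run(false));
    }
}
```

Running `main` prints `Filesystem closed` for the shared case and `null` (no error) for the per-session case. The model deliberately covers only object lifetimes. It ignores real renames, encryption zones and UGI equality semantics.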

----------------------------------------------------------------
This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment.