[GitHub] zhongjiajie commented on a change in pull request #4535: [AIRFLOW-865] - Configure FTP connection mode

zhongjiajie commented on a change in pull request #4535: [AIRFLOW-865] - Configure FTP connection mode

URL: https://github.com/apache/airflow/pull/4535#discussion_r248946469

##

File path: tests/contrib/hooks/test_ftp_hook.py

##

@@ -125,5 +127,50 @@ def test_retrieve_file_with_callback(self):
         self.conn_mock.retrbinary.assert_called_once_with('RETR path', func)
+class TestIntegrationFTPHook(unittest.TestCase):
+
+    def setUp(self):
+        super(TestIntegrationFTPHook, self).setUp()
+        from airflow import configuration
+        from airflow.utils import db
+        from airflow import models
+
+        configuration.load_test_config()
+        db.merge_conn(
+            models.Connection(

Review comment:

The CI tests show an error like this:

```
41) ERROR: test_ftp_active_mode (tests.contrib.hooks.test_ftp_hook.TestIntegrationFTPHook)
--
Traceback (most recent call last):
  tests/contrib/hooks/test_ftp_hook.py line 140 in setUp
    models.Connection(
AttributeError: module 'airflow.models' has no attribute 'Connection'
==
```

I think it failed because of `models.Connection`.
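Since the failing test is `test_ftp_active_mode`, here is a minimal sketch of how an FTP hook could switch between passive and active mode using the standard library's `ftplib.FTP.set_pasv`. The `passive` key in the connection's extra JSON, its default of `True`, and the helper name `configure_transfer_mode` are assumptions for illustration, not necessarily what PR #4535 implements.

```python
import json
from ftplib import FTP


def configure_transfer_mode(ftp, extra_json):
    """Apply a passive/active setting read from a connection's extra field.

    Hypothetical helper: the "passive" key and its default of True are
    assumptions made for this sketch.
    """
    extra = json.loads(extra_json) if extra_json else {}
    passive = bool(extra.get("passive", True))
    # set_pasv(True) uses PASV (passive mode); set_pasv(False) uses PORT (active mode).
    ftp.set_pasv(passive)
    return passive


# FTP() with no host does not open a network connection, so this runs offline.
client = FTP()
configure_transfer_mode(client, '{"passive": false}')
print(client.passiveserver)
```

Defaulting to passive mode mirrors `ftplib`'s own default, so connections without the key would keep their current behaviour.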

This is an automated message from the Apache Git Service.

To respond to the message, please log on GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services

[GitHub] potiuk edited a comment on issue #4543: [AIRFLOW-3718] Multi-layered version of the docker image

potiuk edited a comment on issue #4543: [AIRFLOW-3718] Multi-layered version of the docker image URL: https://github.com/apache/airflow/pull/4543#issuecomment-455439058

Hey @Fokko, I pushed all the changes. Please let me know if you have more concerns about the caching and invalidation proposed there.

Additionally, if you are concerned about semi-regularly building everything from scratch, I have done a simple POC of a slight improvement to this approach, to accommodate automatically building and pushing not only the "latest" tag but also the tag/release/branch ones. With [advanced build settings of Dockerhub](https://docs.docker.com/docker-hub/builds/advanced/) we can very easily configure custom builds and have full control over caching and building images for both tags and master. Here is the work-in-progress POC (I will be testing it today): https://github.com/PolideaInternal/airflow/commit/16ffadaadf72eb598cb48de9c33168f804e38079

It allows building master -> airflow:latest images with incremental layers as described above, but every time a new release is created (i.e. a tag matching the appropriate regex is pushed), the whole image can be rebuilt from scratch based on the AIRFLOW_TAG custom build argument that you can pass. I put the AIRFLOW_TAG argument at the beginning of the Dockerfile, which means that it will invalidate the whole cache and rebuild the image from scratch for every different TAG we build. This way you can automatically re-test whether rebuilding the whole image from scratch still works when you do RC builds, without any additional effort or action from the release manager. It's just a matter of defining regex rules that match the tags or branches you want to build and subscribing to notifications about failed builds.

It also allows anyone to build their own copy of the Airflow image in their own Dockerhub, based on their own branches, which is very useful for anyone who makes changes to the Dockerfile.
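Why an early AIRFLOW_TAG argument forces a full rebuild can be illustrated with a toy model of the layer cache. This is a simplification, not Docker's real cache-key algorithm: it only encodes the documented rule that a changed ARG value causes a cache miss at its first usage, and that RUN instructions implicitly see every ARG declared before them. The instruction lists and the helpers `layer_keys` and `reused_layers` are hypothetical, and the sketch assumes the ARG is declared right after FROM.

```python
import hashlib


def layer_keys(instructions, build_args):
    """Toy cache keys: each key chains the parent key with the instruction text.

    RUN instructions also fold in the values of all ARGs declared so far,
    so changing an ARG value invalidates its first RUN and everything after it.
    """
    keys = []
    parent = ""
    args_in_scope = {}
    for instruction in instructions:
        kind, _, rest = instruction.partition(" ")
        if kind == "ARG":
            name, _, default = rest.partition("=")
            args_in_scope[name] = build_args.get(name, default)
        material = parent + "\n" + instruction
        if kind == "RUN":
            material += "\n" + repr(sorted(args_in_scope.items()))
        parent = hashlib.sha256(material.encode("utf-8")).hexdigest()
        keys.append(parent)
    return keys


def reused_layers(previous, current):
    """Count the leading layers whose keys match, i.e. toy cache hits."""
    count = 0
    for old, new in zip(previous, current):
        if old != new:
            break
        count += 1
    return count


tag_first = ["FROM python:3.6", "ARG AIRFLOW_TAG=master",
             "RUN pip install apache-airflow", "COPY . /opt/airflow"]
tag_last = ["FROM python:3.6", "RUN pip install apache-airflow",
            "COPY . /opt/airflow", "ARG AIRFLOW_TAG=master", "RUN echo built"]

for layout in (tag_first, tag_last):
    old = layer_keys(layout, {"AIRFLOW_TAG": "1.10.1"})
    new = layer_keys(layout, {"AIRFLOW_TAG": "1.10.2"})
    print(reused_layers(old, new), "of", len(layout), "layers reused")
```

With the argument near the top only the FROM and ARG steps stay cached (2 of 4), whereas placing it near the end keeps the expensive install layers cached (4 of 5).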

[GitHub] codecov-io edited a comment on issue #4543: [AIRFLOW-3718] Multi-layered version of the docker image

codecov-io edited a comment on issue #4543: [AIRFLOW-3718] Multi-layered version of the docker image URL: https://github.com/apache/airflow/pull/4543#issuecomment-454963037

# [Codecov](https://codecov.io/gh/apache/airflow/pull/4543?src=pr=h1) Report
> Merging [#4543](https://codecov.io/gh/apache/airflow/pull/4543?src=pr=desc) into [master](https://codecov.io/gh/apache/airflow/commit/0bd6d87e37f55a49768d30110d675e84a763103d?src=pr=desc) will **not change** coverage.
> The diff coverage is `n/a`.

```diff
@@           Coverage Diff           @@
##           master    #4543   +/-   ##
=======================================
  Coverage   74.08%   74.08%
=======================================
  Files         421      421
  Lines       27665    27665
=======================================
  Hits        20496    20496
  Misses       7169     7169
```

------

[Continue to review full report at Codecov](https://codecov.io/gh/apache/airflow/pull/4543?src=pr=continue).
> **Legend** - [Click here to learn more](https://docs.codecov.io/docs/codecov-delta)
> `Δ = absolute (impact)`, `ø = not affected`, `? = missing data`
> Powered by [Codecov](https://codecov.io/gh/apache/airflow/pull/4543?src=pr=footer). Last update [0bd6d87...a55814a](https://codecov.io/gh/apache/airflow/pull/4543?src=pr=lastupdated). Read the [comment docs](https://docs.codecov.io/docs/pull-request-comments).

[GitHub] codecov-io edited a comment on issue #4550: [Airflow 3714] Correct DAG name in docs/start.rst

codecov-io edited a comment on issue #4550: [Airflow 3714] Correct DAG name in docs/start.rst URL: https://github.com/apache/airflow/pull/4550#issuecomment-455434092

# [Codecov](https://codecov.io/gh/apache/airflow/pull/4550?src=pr=h1) Report
> Merging [#4550](https://codecov.io/gh/apache/airflow/pull/4550?src=pr=desc) into [master](https://codecov.io/gh/apache/airflow/commit/0bd6d87e37f55a49768d30110d675e84a763103d?src=pr=desc) will **not change** coverage.
> The diff coverage is `n/a`.

```diff
@@           Coverage Diff           @@
##           master    #4550   +/-   ##
=======================================
  Coverage   74.08%   74.08%
=======================================
  Files         421      421
  Lines       27665    27665
=======================================
  Hits        20496    20496
  Misses       7169     7169
```

| [Impacted Files](https://codecov.io/gh/apache/airflow/pull/4550?src=pr=tree) | Coverage Δ | |
|---|---|---|
| [airflow/models/\_\_init\_\_.py](https://codecov.io/gh/apache/airflow/pull/4550/diff?src=pr=tree#diff-YWlyZmxvdy9tb2RlbHMvX19pbml0X18ucHk=) | `92.13% <0%> (-0.05%)` | :arrow_down: |
| [airflow/contrib/operators/ssh\_operator.py](https://codecov.io/gh/apache/airflow/pull/4550/diff?src=pr=tree#diff-YWlyZmxvdy9jb250cmliL29wZXJhdG9ycy9zc2hfb3BlcmF0b3IucHk=) | `85% <0%> (+1.25%)` | :arrow_up: |

------

[Continue to review full report at Codecov](https://codecov.io/gh/apache/airflow/pull/4550?src=pr=continue).
> **Legend** - [Click here to learn more](https://docs.codecov.io/docs/codecov-delta)
> `Δ = absolute (impact)`, `ø = not affected`, `? = missing data`
> Powered by [Codecov](https://codecov.io/gh/apache/airflow/pull/4550?src=pr=footer). Last update [0bd6d87...2583223](https://codecov.io/gh/apache/airflow/pull/4550?src=pr=lastupdated). Read the [comment docs](https://docs.codecov.io/docs/pull-request-comments).

[GitHub] reubenvanammers commented on a change in pull request #4156: [AIRFLOW-3314] Changed auto inlets feature to work as described

reubenvanammers commented on a change in pull request #4156: [AIRFLOW-3314] Changed auto inlets feature to work as described

URL: https://github.com/apache/airflow/pull/4156#discussion_r248929437

##

File path: airflow/lineage/__init__.py

##

@@ -110,26 +114,31 @@ def wrapper(self, context, *args, **kwargs):
                       for i in inlets]
             self.inlets.extend(inlets)
-        if self._inlets['auto']:
-            # dont append twice
-            task_ids = set(self._inlets['task_ids']).symmetric_difference(
-                self.upstream_task_ids
-            )
-            inlets = self.xcom_pull(context,
-                                    task_ids=task_ids,
-                                    dag_id=self.dag_id,
-                                    key=PIPELINE_OUTLETS)
-            inlets = [item for sublist in inlets if sublist for item in sublist]
-            inlets = [DataSet.map_type(i['typeName'])(data=i['attributes'])
-                      for i in inlets]
-            self.inlets.extend(inlets)
-
-        if len(self._inlets['datasets']) > 0:
-            self.inlets.extend(self._inlets['datasets'])
+        if self._inlets["auto"]:
+            visited_task_ids = set(self._inlets["task_ids"])  # prevent double counting of outlets
+            stack = {self.task_id}
+            while stack:
+                task_id = stack.pop()
+                task = self._dag.task_dict[task_id]
+                visited_task_ids.add(task_id)
+                inlets = self.xcom_pull(

Review comment:

Hey @bolkedebruin, a while ago I changed the logic for how the database is called, so it now makes only a single DB call. Would you mind having another look?
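The traversal the new code starts (a stack of task ids plus a visited set seeded with the explicitly listed `task_ids`) can be sketched on a plain dictionary of upstream edges, followed by one batched lookup instead of one query per task. This is a minimal illustration, not the PR's code: `upstream_task_ids`, `fetch_outlets`, and the dict-based `store` standing in for an XCom pull are hypothetical, and because the hunk is truncated it is an assumption that the walk continues through all ancestors.

```python
def upstream_task_ids(upstream, start, already_counted=()):
    """Iterative DFS over upstream edges starting from `start`.

    `upstream` maps a task id to the ids of its direct upstream tasks.
    Ids in `already_counted` are pre-marked as visited (mirroring the
    "prevent double counting" set in the diff), so they are neither
    returned nor traversed.
    """
    visited = set(already_counted)
    visited.add(start)
    stack = [start]
    found = []
    while stack:
        task_id = stack.pop()
        for parent in upstream.get(task_id, ()):
            if parent not in visited:
                visited.add(parent)
                found.append(parent)
                stack.append(parent)
    return found


def fetch_outlets(store, task_ids):
    """One batched lookup for all ids, then flatten, skipping empty results."""
    results = [store.get(task_id) for task_id in task_ids]
    return [item for sublist in results if sublist for item in sublist]


edges = {"report": ["clean", "enrich"], "clean": ["extract"], "enrich": ["extract"]}
outlets = {"extract": ["raw_table"], "clean": None, "enrich": ["features"]}
ids = upstream_task_ids(edges, "report")
print(sorted(ids), sorted(fetch_outlets(outlets, ids)))
```

Collecting the ids first and fetching once is what keeps the database work to a single call, regardless of how many ancestors the walk visits.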

[GitHub] zhongjiajie opened a new pull request #4550: [Airflow 3714] Correct DAG name in docs/start.rst

zhongjiajie opened a new pull request #4550: [Airflow 3714] Correct DAG name in docs/start.rst

URL: https://github.com/apache/airflow/pull/4550

Make sure you have checked _all_ steps below.

### Jira

- [x] My PR addresses the following [Airflow Jira](https://issues.apache.org/jira/browse/AIRFLOW/) issues and references them in the PR title. For example, "\[AIRFLOW-XXX\] My Airflow PR"
- https://issues.apache.org/jira/browse/AIRFLOW-3714
- In case you are fixing a typo in the documentation, you can prepend your commit with \[AIRFLOW-XXX\]; code changes always need a Jira issue.

### Description

- [x] Correct the [quick-start](https://airflow.apache.org/start.html#quick-start) doc: change `example1` to `example_bash_operator` to match the command below.

### Tests

- [x] My PR does not need testing for this extremely good reason: it only corrects the docs.

### Commits

- [x] My commits all reference Jira issues in their subject lines, and I have squashed multiple commits if they address the same issue. In addition, my commits follow the guidelines from "[How to write a good git commit message](http://chris.beams.io/posts/git-commit/)":

1. Subject is separated from body by a blank line

1. Subject is limited to 50 characters (not including Jira issue reference)

1. Subject does not end with a period

1. Subject uses the imperative mood ("add", not "adding")

1. Body wraps at 72 characters

1. Body explains "what" and "why", not "how"
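The mechanical rules in this checklist can be checked automatically. The sketch below is a hypothetical helper, not part of Airflow's tooling: `check_commit_message` and the `[AIRFLOW-NNNN]` prefix pattern are assumptions, and the imperative-mood and "what and why" rules are left to human review because they cannot be checked reliably with string tests.

```python
import re

# Assumed Jira reference format, e.g. "[AIRFLOW-3714]" or the "[AIRFLOW-XXX]" placeholder.
JIRA_PREFIX = re.compile(r"^\[AIRFLOW-(?:\d+|XXX)\]\s*")


def check_commit_message(message):
    """Return a list of rule violations (an empty list means the message passes)."""
    lines = message.splitlines()
    if not lines:
        return ["empty message"]
    problems = []
    subject = lines[0]
    if not JIRA_PREFIX.match(subject):
        problems.append("subject does not reference a Jira issue")
    # The 50-character limit excludes the Jira reference.
    if len(JIRA_PREFIX.sub("", subject)) > 50:
        problems.append("subject longer than 50 characters")
    if subject.endswith("."):
        problems.append("subject ends with a period")
    if len(lines) > 1 and lines[1].strip():
        problems.append("subject not separated from body by a blank line")
    for number, line in enumerate(lines[2:], start=3):
        if len(line) > 72:
            problems.append(f"body line {number} longer than 72 characters")
    return problems


print(check_commit_message(
    "[AIRFLOW-3714] Correct DAG name in docs/start.rst\n\n"
    "The quick start referred to an example1 DAG that does not exist."))
```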

### Documentation

- [x] This PR only corrects the docs, so no additional documentation is needed.

### Code Quality

- [x] Passes `flake8`

[jira] [Resolved] (AIRFLOW-2843) ExternalTaskSensor: Add option to cease waiting immediately if the external DAG/task doesn't exist

[ https://issues.apache.org/jira/browse/AIRFLOW-2843?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

Xiaodong DENG resolved AIRFLOW-2843.
    Resolution: Resolved

> ExternalTaskSensor: Add option to cease waiting immediately if the external DAG/task doesn't exist
> --
>
> Key: AIRFLOW-2843
> URL: https://issues.apache.org/jira/browse/AIRFLOW-2843
> Project: Apache Airflow
> Issue Type: Improvement
> Components: operators
> Reporter: Xiaodong DENG
> Assignee: Xiaodong DENG
> Priority: Minor
>
> h2. Background
> *ExternalTaskSensor* will keep waiting (subject to retries, poke_interval, etc.), even if the specified external DAG/task doesn't exist at all. In some cases this waiting may still make sense, as a new DAG may backfill. But it may be good to provide an option to cease waiting immediately if the specified external DAG/task doesn't exist.
>
> h2. Proposal
> Provide an argument "check_existence". Set it to *True* to check whether the external DAG/task exists, and to cease waiting immediately if it does not.
> The default value is *False* (no check is made and waiting never ceases early), so it will not affect any existing DAGs or user expectations.

--
This message was sent by Atlassian JIRA
(v7.6.3#76005)
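The proposal above can be sketched as a single poke function. This is a minimal illustration, not the merged implementation: `poke`, `ExternalTaskMissing`, and the in-memory `known_tasks`/`completed` structures are hypothetical stand-ins for the sensor's metadata-database queries; only the `check_existence` flag name and its `False` default come from the issue.

```python
class ExternalTaskMissing(Exception):
    """Hypothetical error raised when the external DAG/task does not exist."""


def poke(known_tasks, completed, dag_id, task_id, check_existence=False):
    """One sensor poke: return True once dag_id.task_id has completed.

    known_tasks maps DAG ids to sets of task ids; completed is a set of
    (dag_id, task_id) pairs. With check_existence=False a missing DAG/task
    simply returns False, so the sensor keeps waiting as before.
    """
    if check_existence:
        if dag_id not in known_tasks:
            raise ExternalTaskMissing(f"DAG {dag_id!r} does not exist")
        if task_id not in known_tasks[dag_id]:
            raise ExternalTaskMissing(f"task {task_id!r} does not exist in DAG {dag_id!r}")
    return (dag_id, task_id) in completed


dags = {"etl": {"load"}}
print(poke(dags, set(), "reporting", "publish"))  # keeps waiting: False
try:
    poke(dags, set(), "reporting", "publish", check_existence=True)
except ExternalTaskMissing as err:
    print("stop waiting:", err)
```

Raising instead of returning `False` is what lets the sensor fail fast rather than burn through its retries.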

[jira] [Assigned] (AIRFLOW-3714) Quickstart references outdated DAG

[ https://issues.apache.org/jira/browse/AIRFLOW-3714?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

zhongjiajie reassigned AIRFLOW-3714:
    Assignee: zhongjiajie

> Quickstart references outdated DAG
> --
>
> Key: AIRFLOW-3714
> URL: https://issues.apache.org/jira/browse/AIRFLOW-3714
> Project: Apache Airflow
> Issue Type: Bug
> Components: Documentation
> Reporter: Steve Greenberg
> Assignee: zhongjiajie
> Priority: Minor
>
> [https://airflow.apache.org/start.html] mentions
>
> "You should be able to see the status of the jobs change in the example1 DAG as you run the commands below."
>
> There is no example1 DAG. This should probably say "example_bash_operator" instead.

[jira] [Created] (AIRFLOW-3727) Fix a wrong reference to timezone method

Kengo Seki created AIRFLOW-3727:

---

Summary: Fix a wrong reference to timezone method

Key: AIRFLOW-3727

URL: https://issues.apache.org/jira/browse/AIRFLOW-3727

Project: Apache Airflow

Issue Type: Bug

Components: Documentation

Reporter: Kengo Seki

[https://airflow.apache.org/timezone.html] says:

{quote}You can use timezone.is_aware() and timezone.is_naive() to determine whether datetimes are aware or naive.{quote}

However, the correct method name is {{timezone.is_localized}}, not {{timezone.is_aware}}.

(Or should we add {{timezone.is_aware}} as an alias of {{timezone.is_localized}}, to be consistent with {{timezone.make_aware}} and {{timezone.make_naive}}?)
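For context, here is a standard-library sketch of the aware/naive distinction and of the alias the issue proposes. It mirrors Python's own definition (a datetime is aware when it has a `tzinfo` whose `utcoffset()` is not `None`); it is an assumption that Airflow's `timezone.is_localized` and `timezone.is_naive` behave the same way, and the module-level `is_aware` alias is hypothetical.

```python
from datetime import datetime, timezone


def is_localized(value):
    """True if `value` is timezone-aware.

    datetime.utcoffset() returns None both when tzinfo is None and when the
    tzinfo cannot supply an offset, so this one check covers both cases.
    """
    return value.utcoffset() is not None


def is_naive(value):
    """True if `value` carries no usable timezone information."""
    return value.utcoffset() is None


# Hypothetical alias matching the make_aware/make_naive naming.
is_aware = is_localized

print(is_aware(datetime(2019, 1, 18, tzinfo=timezone.utc)), is_naive(datetime(2019, 1, 18)))
```

An alias costs nothing at runtime and would make the existing documentation correct without renaming the public function.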

[GitHub] dargueta edited a comment on issue #3723: [AIRFLOW-2876] Update Tenacity to 4.12

dargueta edited a comment on issue #3723: [AIRFLOW-2876] Update Tenacity to 4.12 URL: https://github.com/apache/airflow/pull/3723#issuecomment-455389403 @r39132 your crash is specific to macOS. Apple makes modifications to the vanilla install, resulting in the specific bug you're encountering; see [here](https://stackoverflow.com/questions/50953117/os-x-pip3-broken-with-brew-install-python3-6-5-1). If you install Python from source, you won't have this crash.

[GitHub] dargueta commented on issue #3723: [AIRFLOW-2876] Update Tenacity to 4.12

dargueta commented on issue #3723: [AIRFLOW-2876] Update Tenacity to 4.12 URL: https://github.com/apache/airflow/pull/3723#issuecomment-455389403 @r39132 your crash is specific to macOS. Apple makes modifications to the vanilla install, resulting in the specific bug you're encountering; see [here](https://stackoverflow.com/questions/50953117/os-x-pip3-broken-with-brew-install-python3-6-5-1). If you install Python from source, you won't have this problem.

[GitHub] mik-laj commented on issue #4514: [AIRFLOW-3698] Add documentation for AWS Connection

mik-laj commented on issue #4514: [AIRFLOW-3698] Add documentation for AWS Connection URL: https://github.com/apache/airflow/pull/4514#issuecomment-455387553 I do not think that it is worth writing a list of operators and hooks. Just mentioning the default conn_id is enough.

[GitHub] mik-laj edited a comment on issue #4514: [AIRFLOW-3698] Add documentation for AWS Connection

mik-laj edited a comment on issue #4514: [AIRFLOW-3698] Add documentation for AWS Connection URL: https://github.com/apache/airflow/pull/4514#issuecomment-455385856 This will already be described once my PR is accepted. I personally think that this is not enough. Unfortunately, people are lazy: if they have to write a long command, they would rather look for one just to copy it. I see it in myself. It's easy to make a mistake, so I search Google for a complete, ready-to-use command.

[GitHub] mik-laj edited a comment on issue #4514: [AIRFLOW-3698] Add documentation for AWS Connection

mik-laj edited a comment on issue #4514: [AIRFLOW-3698] Add documentation for AWS Connection URL: https://github.com/apache/airflow/pull/4514#issuecomment-455385856 This will already be described once my PR is accepted. I personally think that this is not enough. People are unfortunately lazy, and when they have to write a long command, they would rather look for it, only to copy it. I see it

[GitHub] mik-laj commented on issue #4514: [AIRFLOW-3698] Add documentation for AWS Connection

mik-laj commented on issue #4514: [AIRFLOW-3698] Add documentation for AWS Connection URL: https://github.com/apache/airflow/pull/4514#issuecomment-455385856 This will already be described once my PR is accepted.

[GitHub] dargueta edited a comment on issue #3723: [AIRFLOW-2876] Update Tenacity to 4.12

dargueta edited a comment on issue #3723: [AIRFLOW-2876] Update Tenacity to 4.12 URL: https://github.com/apache/airflow/pull/3723#issuecomment-455369056 I also can't reproduce the crash.

- `apache-airflow`: 1.10.1
- `tenacity`: I tried 4.11 _and_ 4.12.
- Python: 3.7.2 and 3.6.8

[GitHub] danabananarama commented on issue #4514: [AIRFLOW-3698] Add documentation for AWS Connection

danabananarama commented on issue #4514: [AIRFLOW-3698] Add documentation for AWS Connection URL: https://github.com/apache/airflow/pull/4514#issuecomment-455384494 Since the query parameter part is the same as for other Connection types, perhaps it makes more sense to document the general case in the "Creating a Connection with Environment Variables" section to avoid repetition? I'll add the general default `conn_id`, but I'm not sure it's worth listing all of the hooks and operators for this: there are quite a lot, and it would be tough to keep this doc section fully up to date going forward.

[GitHub] mik-laj commented on issue #4514: [AIRFLOW-3698] Add documentation for AWS Connection

mik-laj commented on issue #4514: [AIRFLOW-3698] Add documentation for AWS Connection URL: https://github.com/apache/airflow/pull/4514#issuecomment-455378999

> @mik-laj What specific things do you need?

The `schema` part must be equal to `aws`. The query parameters must contain the extra parameters. For example: `aws_access_key_id=AA_secret_access_key=BB=aws_account_id`. You should also state what the default `conn_id` is in the hooks and operators for this platform.
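As a rough illustration of the URI format discussed above, the sketch below builds an environment-variable connection for AWS with the extras passed as query parameters. It assumes Airflow's `AIRFLOW_CONN_<CONN_ID>` naming convention and uses `aws_default` as the conn_id (the default `aws_conn_id` of the AWS hooks in Airflow 1.10); the helper name `aws_connection_env` and the placeholder credential values are hypothetical. Using `urlencode` percent-encodes characters such as `/` and `+`, which can appear in secret keys.

```python
from urllib.parse import parse_qs, urlencode, urlsplit


def aws_connection_env(conn_id, extras):
    """Return (environment variable name, connection URI) for an AWS connection.

    The extras become URL-encoded query parameters after an "aws://" scheme.
    """
    uri = "aws://?" + urlencode(extras)
    return "AIRFLOW_CONN_" + conn_id.upper(), uri


name, uri = aws_connection_env(
    "aws_default",
    {"aws_access_key_id": "AA", "aws_secret_access_key": "B/B+"},  # placeholders
)
print(f"export {name}='{uri}'")

# Round-trip check: parsing the URI gives back the original extras.
parts = urlsplit(uri)
print(parts.scheme, parse_qs(parts.query))
```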

[GitHub] XD-DENG commented on issue #4547: [AIRFLOW-2843] Add flag in ExternalTaskSensor to check if external DAG/task exists

XD-DENG commented on issue #4547: [AIRFLOW-2843] Add flag in ExternalTaskSensor to check if external DAG/task exists URL: https://github.com/apache/airflow/pull/4547#issuecomment-455375389 Thanks @feng-tao

[jira] [Created] (AIRFLOW-3726) Change the description and the default value for broker_url in airflow.cfg

Kengo Seki created AIRFLOW-3726:

---

Summary: Change the description and the default value for broker_url in airflow.cfg

Key: AIRFLOW-3726

URL: https://issues.apache.org/jira/browse/AIRFLOW-3726

Project: Apache Airflow

Issue Type: Bug

Components: configuration

Reporter: Kengo Seki

Celery seems to have dropped SQLAlchemy support for its broker as of 4.0.0 (which was introduced in Airflow 1.9.0).

http://docs.celeryproject.org/en/4.0/whatsnew-4.0.html#features-removed-for-lack-of-funding

However, the default airflow.cfg can be read as saying it is still supported:

{code:title=airflow/config_templates/default_airflow.cfg}
# The Celery broker URL. Celery supports RabbitMQ, Redis and experimentally
# a sqlalchemy database. Refer to the Celery documentation for more
# information.
broker_url = sqla+mysql://airflow:airflow@localhost:3306/airflow
{code}
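A deployment could flag a leftover SQLAlchemy broker setting before starting Celery workers. The helper below is hypothetical and relies only on the `sqla+` transport prefix seen in the default config above; it makes no claim about which other transports Celery supports. The Redis URL is just an illustrative alternative value.

```python
from urllib.parse import urlsplit


def uses_sqlalchemy_broker(broker_url):
    """True if the Celery broker URL uses the "sqla+" (SQLAlchemy) transport prefix."""
    # urlsplit keeps "+" in the scheme, e.g. "sqla+mysql".
    return urlsplit(broker_url).scheme.startswith("sqla+")


for url in ("sqla+mysql://airflow:airflow@localhost:3306/airflow",
            "redis://localhost:6379/0"):
    print(url, "->", "SQLAlchemy broker" if uses_sqlalchemy_broker(url) else "ok")
```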

[GitHub] codecov-io edited a comment on issue #4321: incubator-airflow/scripts/ launchctl

codecov-io edited a comment on issue #4321: incubator-airflow/scripts/ launchctl URL: https://github.com/apache/airflow/pull/4321#issuecomment-453847975

# [Codecov](https://codecov.io/gh/apache/airflow/pull/4321?src=pr=h1) Report
> Merging [#4321](https://codecov.io/gh/apache/airflow/pull/4321?src=pr=desc) into [master](https://codecov.io/gh/apache/airflow/commit/fc440dc3c84ec7c5d3f39038090b214496f95c6a?src=pr=desc) will **increase** coverage by `2.57%`.
> The diff coverage is `77.98%`.

```diff
@@            Coverage Diff             @@
##           master    #4321      +/-   ##
==========================================
+ Coverage   74.02%    76.6%   +2.57%
==========================================
  Files         421      203     -218
  Lines       27644    16493   -11151
==========================================
- Hits        20463    12634    -7829
+ Misses       7181     3859    -3322
```

| [Impacted Files](https://codecov.io/gh/apache/airflow/pull/4321?src=pr=tree) | Coverage Δ | |
|---|---|---|
| [...ample\_dags/example\_branch\_python\_dop\_operator\_3.py](https://codecov.io/gh/apache/airflow/pull/4321/diff?src=pr=tree#diff-YWlyZmxvdy9leGFtcGxlX2RhZ3MvZXhhbXBsZV9icmFuY2hfcHl0aG9uX2RvcF9vcGVyYXRvcl8zLnB5) | `73.33% <ø> (ø)` | :arrow_up: |
| [airflow/operators/\_\_init\_\_.py](https://codecov.io/gh/apache/airflow/pull/4321/diff?src=pr=tree#diff-YWlyZmxvdy9vcGVyYXRvcnMvX19pbml0X18ucHk=) | `61.11% <ø> (-38.89%)` | :arrow_down: |
| [airflow/hooks/hive\_hooks.py](https://codecov.io/gh/apache/airflow/pull/4321/diff?src=pr=tree#diff-YWlyZmxvdy9ob29rcy9oaXZlX2hvb2tzLnB5) | `73.42% <ø> (-1.85%)` | :arrow_down: |
| [airflow/utils/email.py](https://codecov.io/gh/apache/airflow/pull/4321/diff?src=pr=tree#diff-YWlyZmxvdy91dGlscy9lbWFpbC5weQ==) | `100% <ø> (ø)` | :arrow_up: |
| [airflow/utils/sqlalchemy.py](https://codecov.io/gh/apache/airflow/pull/4321/diff?src=pr=tree#diff-YWlyZmxvdy91dGlscy9zcWxhbGNoZW15LnB5) | `81.42% <ø> (-0.39%)` | :arrow_down: |
| [airflow/operators/python\_operator.py](https://codecov.io/gh/apache/airflow/pull/4321/diff?src=pr=tree#diff-YWlyZmxvdy9vcGVyYXRvcnMvcHl0aG9uX29wZXJhdG9yLnB5) | `95.03% <ø> (-0.1%)` | :arrow_down: |
| [airflow/example\_dags/example\_http\_operator.py](https://codecov.io/gh/apache/airflow/pull/4321/diff?src=pr=tree#diff-YWlyZmxvdy9leGFtcGxlX2RhZ3MvZXhhbXBsZV9odHRwX29wZXJhdG9yLnB5) | `100% <ø> (ø)` | :arrow_up: |
| [airflow/hooks/druid\_hook.py](https://codecov.io/gh/apache/airflow/pull/4321/diff?src=pr=tree#diff-YWlyZmxvdy9ob29rcy9kcnVpZF9ob29rLnB5) | `88% <ø> (ø)` | :arrow_up: |
| [airflow/security/utils.py](https://codecov.io/gh/apache/airflow/pull/4321/diff?src=pr=tree#diff-YWlyZmxvdy9zZWN1cml0eS91dGlscy5weQ==) | `26.92% <ø> (ø)` | :arrow_up: |
| [airflow/hooks/base\_hook.py](https://codecov.io/gh/apache/airflow/pull/4321/diff?src=pr=tree#diff-YWlyZmxvdy9ob29rcy9iYXNlX2hvb2sucHk=) | `92.15% <ø> (ø)` | :arrow_up: |
| ... and [282 more](https://codecov.io/gh/apache/airflow/pull/4321/diff?src=pr=tree-more) | | |

------

[Continue to review full report at Codecov](https://codecov.io/gh/apache/airflow/pull/4321?src=pr=continue).
> **Legend** - [Click here to learn more](https://docs.codecov.io/docs/codecov-delta)
> `Δ = absolute (impact)`, `ø = not affected`, `? = missing data`
> Powered by [Codecov](https://codecov.io/gh/apache/airflow/pull/4321?src=pr=footer). Last update [fc440dc...d727783](https://codecov.io/gh/apache/airflow/pull/4321?src=pr=lastupdated). Read the [comment docs](https://docs.codecov.io/docs/pull-request-comments).

[GitHub] dargueta edited a comment on issue #3723: [AIRFLOW-2876] Update Tenacity to 4.12

dargueta edited a comment on issue #3723: [AIRFLOW-2876] Update Tenacity to 4.12 URL: https://github.com/apache/airflow/pull/3723#issuecomment-455369056 I also can't reproduce the crash. `apache-airflow`: 1.10.1 Python: 3.7.2 tenacity: I tried 4.11 _and_ 4.12.

[GitHub] dargueta edited a comment on issue #3723: [AIRFLOW-2876] Update Tenacity to 4.12

dargueta edited a comment on issue #3723: [AIRFLOW-2876] Update Tenacity to 4.12 URL: https://github.com/apache/airflow/pull/3723#issuecomment-455369056 I also can't reproduce the crash. `apache-airflow`: 1.10.1 `tenacity`: I tried 4.11 _and_ 4.12. Python: 3.7.2

[jira] [Updated] (AIRFLOW-3702) Reverse Backfilling(from current date to start date)

[ https://issues.apache.org/jira/browse/AIRFLOW-3702?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ] Kaxil Naik updated AIRFLOW-3702: Fix Version/s: (was: 1.10.2) 2.0.0 > Reverse Backfilling(from current date to start date) > > > Key: AIRFLOW-3702 > URL: https://issues.apache.org/jira/browse/AIRFLOW-3702 > Project: Apache Airflow > Issue Type: Improvement > Components: configuration, DAG, models >Affects Versions: 1.10.1 > Environment: MacOS High Sierra > python2.7 > Airflow-1.10.1 >Reporter: Shubham Gupta >Assignee: Tao Feng >Priority: Major > Labels: critical, improvement, priority > Fix For: 2.0.0 > > Original Estimate: 336h > Remaining Estimate: 336h > > Hello, > I think there is a need for a reverse backfilling option as well, because > recent jobs would take precedence over the historical jobs. We could come up > with a variable in the DAG such as dagrun_order_default = True/False. > This would help in many use cases in which the previous date's pipeline does not > depend on the current pipeline. > I saw this page which talks about this -> > http://mail-archives.apache.org/mod_mbox/airflow-dev/201804.mbox/%3CCAPUwX3M7_qrn=1bqysmkdv_ifjbta6lbtq7czhhexszmdjk...@mail.gmail.com%3E > Thanks! > Regards, > Shubham -- This message was sent by Atlassian JIRA (v7.6.3#76005)
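The reordering requested in the issue amounts to walking the schedule newest-first instead of oldest-first. A minimal sketch with a fixed daily interval: `backfill_dates` and its `newest_first` flag are hypothetical and only stand in for the proposed `dagrun_order_default` setting; neither is an Airflow API.

```python
from datetime import date, timedelta
from typing import List


def backfill_dates(start: date, end: date, step: timedelta = timedelta(days=1),
                   newest_first: bool = False) -> List[date]:
    """Every execution date from start to end (inclusive), optionally newest first."""
    dates = []
    current = start
    while current <= end:
        dates.append(current)
        current += step
    # Reversing the list is all a "reverse backfill" changes: the same runs, opposite order.
    return dates[::-1] if newest_first else dates


if __name__ == "__main__":
    for d in backfill_dates(date(2019, 1, 1), date(2019, 1, 3), newest_first=True):
        print(d.isoformat())
```

The set of runs is identical either way; only the order in which a scheduler would pick them up changes, which is why the reporter frames it as useful only when runs do not depend on earlier dates.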

[jira] [Commented] (AIRFLOW-3229) New install with python3.7 fail - Need to bump tenacity version

[

https://issues.apache.org/jira/browse/AIRFLOW-3229?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel=16745610#comment-16745610

]

Diego Argueta commented on AIRFLOW-3229:

After some experimenting I've been able to get this to work on Python 3.7 with tenacity 4.11.0 and greater. It'd be great if we could at least pin it to {{>=4.8, <5.0}}.

> New install with python3.7 fail - Need to bump tenacity version

> ---

>

> Key: AIRFLOW-3229

> URL: https://issues.apache.org/jira/browse/AIRFLOW-3229

> Project: Apache Airflow

> Issue Type: Bug

> Components: dependencies

>Reporter: Qj Chv

>Priority: Critical

> Original Estimate: 24h

> Remaining Estimate: 24h

>

> Current master requires tenacity==4.8 as a dependency, but [tenacity 4.8|https://github.com/jd/tenacity/tree/4.8.0/tenacity] is not compatible with Python 3.7 because of the `async` keyword.
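Why the `async` keyword breaks an older package on Python 3.7 can be shown with the standard library alone. A minimal sketch, assuming the incompatibility comes from tenacity 4.8 using `async` as a module name (imported as `tenacity.async`): `async` became a reserved keyword in Python 3.7, so any statement that uses it as an identifier no longer compiles. The import strings are only compiled, never executed, and the renamed module `tenacity._asyncio` is used purely for illustration.

```python
import keyword
import sys


def is_reserved(word: str) -> bool:
    """True if `word` is a hard keyword in the running interpreter."""
    return keyword.iskeyword(word)


def compiles(source: str) -> bool:
    """Compile (but do not run) `source` and report whether it is valid syntax."""
    try:
        compile(source, "<check>", "exec")
    except SyntaxError:
        return False
    return True


if __name__ == "__main__":
    print(sys.version_info[:2], "async reserved:", is_reserved("async"))
    # A module literally named `async` cannot be imported with ordinary syntax on 3.7+.
    print(compiles("from tenacity.async import AsyncRetrying"))
    # Renaming the module sidesteps the keyword entirely.
    print(compiles("from tenacity._asyncio import AsyncRetrying"))
```

Because this is a syntax error rather than a runtime failure, no amount of configuration in the importing project helps; the dependency itself has to be upgraded to a release without the clashing name.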


[GitHub] dargueta edited a comment on issue #3723: [AIRFLOW-2876] Update Tenacity to 4.12

dargueta edited a comment on issue #3723: [AIRFLOW-2876] Update Tenacity to 4.12 URL: https://github.com/apache/airflow/pull/3723#issuecomment-455369056 I also can't reproduce the crash. `apache-airflow`: 1.10.1 Python: 3.7.2 I installed `tenacity` using the constraints `>=4.11, <5.0` (gives `4.12`) and this works. I followed exactly the same steps as feluelle.

[GitHub] dargueta edited a comment on issue #3723: [AIRFLOW-2876] Update Tenacity to 4.12

dargueta edited a comment on issue #3723: [AIRFLOW-2876] Update Tenacity to 4.12 URL: https://github.com/apache/airflow/pull/3723#issuecomment-455369056 I also can't reproduce the crash. `apache-airflow`: 1.10.1 Python: 3.7.2 I installed `tenacity` using the constraints `>=4.11, <5.0`, which gives `4.12`, and this works. I followed exactly the same steps as feluelle.

[GitHub] dargueta edited a comment on issue #3723: [AIRFLOW-2876] Update Tenacity to 4.12

dargueta edited a comment on issue #3723: [AIRFLOW-2876] Update Tenacity to 4.12 URL: https://github.com/apache/airflow/pull/3723#issuecomment-455369056 I also can't reproduce the crash. `apache-airflow`: 1.10.1 Python: 3.7.2 `tenacity >=4.11, <5.0` gives `4.12` and this works. I followed exactly the same steps as feluelle.

[GitHub] dargueta commented on issue #3723: [AIRFLOW-2876] Update Tenacity to 4.12

dargueta commented on issue #3723: [AIRFLOW-2876] Update Tenacity to 4.12 URL: https://github.com/apache/airflow/pull/3723#issuecomment-455369056 I also can't reproduce the crash. `apache-airflow`: 1.10.1 Python: 3.7.2 tenacity >=4.11, <5.0 gives `4.12` and this works. I followed exactly the same steps as feluelle.

[jira] [Updated] (AIRFLOW-3720) GoogleCloudStorageToS3Operator - incorrect folder compare

[ https://issues.apache.org/jira/browse/AIRFLOW-3720?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ] Kaxil Naik updated AIRFLOW-3720: Fix Version/s: (was: 1.10.2) 2.0.0 > GoogleCloudStorageToS3Operator - incorrect folder compare > -- > > Key: AIRFLOW-3720 > URL: https://issues.apache.org/jira/browse/AIRFLOW-3720 > Project: Apache Airflow > Issue Type: Bug > Components: boto3 >Affects Versions: 1.10.0 >Reporter: Chaim >Assignee: Chaim >Priority: Major > Fix For: 2.0.0 > > > The code that compares folders from GCP to S3 is incorrect. > The code is: > files = set(files) - set(existing_files) > but the list from GCP has a "/" appended to the name, for example "myfolder/", while > in S3 the name has no trailing "/", so the folder is "myfolder". > The result is that the code tries to recopy the folder name but fails, since > it already exists.
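The mismatch described in the issue (GCS lists `myfolder/`, S3 lists `myfolder`) disappears if names are normalised before the set difference. A minimal sketch: `naive_diff` reproduces the comparison quoted in the issue, while `files_to_copy` is a hypothetical helper that strips a trailing `/` on both sides; it is not the operator's actual code or the fix that was eventually merged.

```python
from typing import Iterable, List


def naive_diff(gcs_files: Iterable[str], s3_existing: Iterable[str]) -> List[str]:
    """The comparison quoted in the issue: a plain set difference."""
    return sorted(set(gcs_files) - set(s3_existing))


def files_to_copy(gcs_files: Iterable[str], s3_existing: Iterable[str]) -> List[str]:
    """GCS objects not yet present in S3, ignoring a trailing '/' on folder names."""
    existing = {name.rstrip("/") for name in s3_existing}
    return sorted(name for name in set(gcs_files) if name.rstrip("/") not in existing)


if __name__ == "__main__":
    gcs = ["myfolder/", "myfolder/a.csv"]
    s3 = ["myfolder"]
    print(naive_diff(gcs, s3))     # the folder placeholder is wrongly scheduled for copy
    print(files_to_copy(gcs, s3))  # only the real file remains
```

Normalising on both sides keeps the comparison symmetric, so it behaves the same whichever store happens to report the trailing slash.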

[jira] [Commented] (AIRFLOW-2876) Bump version of Tenacity

[

https://issues.apache.org/jira/browse/AIRFLOW-2876?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel=16745616#comment-16745616

]

Diego Argueta commented on AIRFLOW-2876:

Would it be possible to pin this to {{>=4.11.0, <5.0}} for compatibility with projects that use Airflow in addition to other packages?
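The suggested pin `>=4.11.0, <5.0` admits 4.11.x and 4.12.x but rejects 4.8 and any 5.x release. A minimal sketch of that range check, assuming plain numeric `X.Y.Z` versions; `in_range` is a hypothetical helper and a simplified stand-in for pip's PEP 440 specifier handling (no pre-releases, epochs or local versions).

```python
from typing import Tuple


def parse_version(text: str) -> Tuple[int, ...]:
    """Turn '4.12.0' into (4, 12, 0); only plain numeric versions are supported."""
    return tuple(int(part) for part in text.split("."))


def in_range(version: str, lower: str, upper: str) -> bool:
    """True if lower <= version < upper, i.e. the pin `>=lower, <upper`."""
    parts = [parse_version(s) for s in (version, lower, upper)]
    width = max(len(p) for p in parts)
    # Pad with zeros so that "5.0" compares like "5.0.0".
    v, lo, hi = (p + (0,) * (width - len(p)) for p in parts)
    return lo <= v < hi


if __name__ == "__main__":
    for candidate in ("4.8.0", "4.11.0", "4.12.0", "5.0.0"):
        print(candidate, in_range(candidate, "4.11.0", "5.0"))
```

Comparing integer tuples rather than strings matters here: as plain strings, "4.8" sorts after "4.11".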

> Bump version of Tenacity

>

>

> Key: AIRFLOW-2876

> URL: https://issues.apache.org/jira/browse/AIRFLOW-2876

> Project: Apache Airflow

> Issue Type: Bug

>Reporter: Fokko Driesprong

>Priority: Major

>

> Since 4.8.0 is not Python 3.7 compatible, we want to bump the version to 4.12.0


[jira] [Commented] (AIRFLOW-3725) Bigquery_hook should be able to supply private_key to pandas_gbq.read_gbq

[ https://issues.apache.org/jira/browse/AIRFLOW-3725?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel=16745605#comment-16745605 ] ASF GitHub Bot commented on AIRFLOW-3725: - asawitt commented on pull request #4549: [AIRFLOW-3725] Add private_key to bigquery_hook get_pandas_df URL: https://github.com/apache/airflow/pull/4549 [AIRFLOW-3725] Bigquery Hook authentication currently defaults to Google User-account credentials, and the user is asked to authenticate with Pandas GBQ manually. This diff allows users to specify a private_key in either json or key_path form, in keeping with the Google Cloud Platform connection type. ### Tests The following unit tests have been added: TestPandasGbqPrivateKey.test_key_path_provided TestPandasGbqPrivateKey.test_key_json_provided TestPandasGbqPrivateKey.test_no_key_provided ### Code Quality - [✓] Passes `flake8` > Bigquery_hook should be able to supply private_key to pandas_gbq.read_gbq > - > > Key: AIRFLOW-3725 > URL: https://issues.apache.org/jira/browse/AIRFLOW-3725 > Project: Apache Airflow > Issue Type: Improvement >Reporter: Asa >Assignee: Asa >Priority: Minor > > Pandas_gbq.read_gbq uses Google application default credentials if a > private_key is not supplied. We should allow users to pass in this > private_key in order to allow key-based authentication.

[GitHub] asawitt opened a new pull request #4549: [AIRFLOW-3725] Add private_key to bigquery_hook get_pandas_df

asawitt opened a new pull request #4549: [AIRFLOW-3725] Add private_key to bigquery_hook get_pandas_df URL: https://github.com/apache/airflow/pull/4549 [AIRFLOW-3725] Bigquery Hook authentication currently defaults to Google User-account credentials, and the user is asked to authenticate with Pandas GBQ manually. This diff allows users to specify a private_key in either json or key_path form, in keeping with the Google Cloud Platform connection type. ### Tests The following unit tests have been added: TestPandasGbqPrivateKey.test_key_path_provided TestPandasGbqPrivateKey.test_key_json_provided TestPandasGbqPrivateKey.test_no_key_provided ### Code Quality - [✓] Passes `flake8`
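The three unit tests listed in the PR map onto three cases: a key file path, an inline JSON key, or no key at all. A minimal sketch of that selection, assuming the connection extras use Airflow's `extra__google_cloud_platform__key_path` and `extra__google_cloud_platform__keyfile_dict` fields and that a path wins when both are set. `resolve_private_key` is a hypothetical helper, not the code in the PR; its result would be what gets passed as `private_key` to `pandas_gbq.read_gbq`, and `None` leaves pandas-gbq on application default credentials, as the issue describes.

```python
import json
from typing import Mapping, Optional

KEY_PATH_FIELD = "extra__google_cloud_platform__key_path"
KEY_JSON_FIELD = "extra__google_cloud_platform__keyfile_dict"


def resolve_private_key(extras: Mapping[str, str]) -> Optional[str]:
    """Pick the value to hand to pandas_gbq as `private_key`, or None."""
    key_path = extras.get(KEY_PATH_FIELD)
    if key_path:
        return key_path  # assumption: a key file path takes precedence
    key_json = extras.get(KEY_JSON_FIELD)
    if key_json:
        json.loads(key_json)  # fail early if the inline key is not valid JSON
        return key_json
    return None  # no key: fall back to application default credentials


if __name__ == "__main__":
    print(resolve_private_key({KEY_PATH_FIELD: "/keys/service-account.json"}))
    print(resolve_private_key({}))
```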

[GitHub] danabananarama commented on issue #4514: [AIRFLOW-3698] Add documentation for AWS Connection

danabananarama commented on issue #4514: [AIRFLOW-3698] Add documentation for AWS Connection URL: https://github.com/apache/airflow/pull/4514#issuecomment-455352968 @mik-laj Oh cool, I didn't know you could do that! I'm afraid I haven't set up the AWS Connection that way before so I'm not aware of any AWS-specific things you might need to specify when setting it up that way.

[GitHub] potiuk edited a comment on issue #4543: [AIRFLOW-3718] Multi-layered version of the docker image

potiuk edited a comment on issue #4543: [AIRFLOW-3718] Multi-layered version of the docker image URL: https://github.com/apache/airflow/pull/4543#issuecomment-455242821 @fokko - I am pushing an updated version. I know that famous quote, but I think in this case cache invalidation works in our favour. That quote is really about never knowing when to invalidate, and in our case we will do very smart invalidation (as explained in detail in the thread on your question about implicit dependencies). PTAL and let me know if the strategy I explained makes sense to you. We could even build in some mechanism to invalidate such a cache automatically from time to time. My whole problem with the current image is that it should not be built the way it is today, where the whole image is always built from scratch. There is no need for that, and it has a nasty side effect for users: it pollutes their Docker lib directory with a lot of unused, frequently invalidated images. In this case the problem is in fact cache invalidation on the user side. Docker does not know when an already downloaded image will no longer be needed, so it keeps it until someone runs 'docker system prune'. Otherwise the /var/lib/docker directory will grow forever for anyone who regularly pulls Airflow images.

[GitHub] potiuk commented on a change in pull request #4543: [AIRFLOW-3718] Multi-layered version of the docker image

potiuk commented on a change in pull request #4543: [AIRFLOW-3718]

Multi-layered version of the docker image

URL: https://github.com/apache/airflow/pull/4543#discussion_r248747685

##

File path: Dockerfile

##

@@ -16,26 +16,83 @@

FROM python:3.6-slim

-COPY . /opt/airflow/

+SHELL ["/bin/bash", "-c"]

+

+# Make sure noninteractive Debian install is used

+ENV DEBIAN_FRONTEND=noninteractive

+

+# Increase the value to force reinstalling of all apt-get dependencies

+ENV FORCE_REINSTALL_APT_GET_DEPENDENCIES=1

+

+# Install core build dependencies

+RUN apt-get update \

+&& apt-get install -y --no-install-recommends \

+libkrb5-dev libsasl2-dev libssl-dev libffi-dev libpq-dev git \

+&& apt-get clean

+

+# Install useful utilities and other airflow required dependencies

+RUN apt-get update \

+&& apt-get install -y --no-install-recommends \

+libsasl2-dev freetds-bin build-essential default-libmysqlclient-dev apt-utils \

+curl rsync netcat locales \

+&& apt-get clean

ARG AIRFLOW_HOME=/usr/local/airflow

-ARG AIRFLOW_DEPS="all"

-ARG PYTHON_DEPS=""

-ARG buildDeps="freetds-dev libkrb5-dev libsasl2-dev libssl-dev libffi-dev libpq-dev git"

-ARG APT_DEPS="$buildDeps libsasl2-dev freetds-bin build-essential default-libmysqlclient-dev apt-utils curl rsync netcat locales"

+RUN mkdir -p $AIRFLOW_HOME

+

+# Airflow extras to be installed

+ARG AIRFLOW_EXTRAS="all"

+

+# Increase the value here to force reinstalling Apache Airflow pip dependencies

+ENV FORCE_REINSTALL_ALL_PIP_DEPENDENCIES=1

+

+# Speeds up building the image - cassandra driver without CYTHON saves around 10 minutes

+# of build time on a typical machine

+ARG CASS_DRIVER_NO_CYTHON_ARG=""

+

+# Build cassandra driver on multiple CPUs

+ENV CASS_DRIVER_BUILD_CONCURRENCY=8

+

+# Speeds up the installation of cassandra driver

+ENV CASS_DRIVER_NO_CYTHON=${CASS_DRIVER_NO_CYTHON_ARG}

+

+## Airflow requires this variable be set on installation to avoid a GPL dependency.

+ENV SLUGIFY_USES_TEXT_UNIDECODE yes

+

+# Airflow sources change frequently but dependency configuration won't change that often

+# We copy setup.py and other files needed to perform setup of dependencies

+# This way cache here will only be invalidated if any of the

+# version/setup configuration changes but not when airflow sources change

Review comment:

The dependencies should be resolved properly and minor updates should be installed as needed. It works as follows:

1) The first `pip install` installs the dependencies as they are at the moment the Dockerfile is built for the first time (line 74).

2) Whenever any of the Airflow sources change, the Docker cache at line 77 is invalidated and the image is rebuilt starting from that line. We then upgrade the apt-get dependencies to the latest ones (line 80) and run `pip install` again (without using the old cache) to see whether the transitive pip dependencies resolve to different versions of related packages. In most cases this will be a no-op, but if some transitive dependencies resolve differently, the new versions get installed at that point (line 89).

3) Whenever any of the setup.*, README, version.py or airflow script files change (all files related to setup), we go back one step further: the Docker cache is invalidated at one of lines 66-70 and the build continues from there, which means a completely fresh `pip install` is run from scratch.

4) We can always force installation from scratch by increasing the value of ENV FORCE_REINSTALL_APT_GET_DEPENDENCIES (currently = 1). If we manually increase it to 2 and commit such a Dockerfile, it will invalidate the Docker cache at line 25 and the whole installation is run from scratch.

5) The only thing I am not 100% sure about (but about 99%) is what happens when a new version of python:3.6-slim gets released. I believe it will trigger a build from scratch, but that's something we will have to confirm with DockerHub (it might depend on how they do caching, as this part might be done differently). It's not a big problem even if this is not the case, because at point 3) we do `apt-get upgrade` and at that time the Python version will get upgraded as well if a new one is released. So this is more of an optimisation question than a problem.

So eventually, whenever you make any change to Airflow sources, you can be sure that both `apt-get upgrade` and `pip install` have been called. Whenever you make a change to setup.py, you can be sure that `pip install` is run from scratch, and whenever you force it, you can run `apt-get install` from scratch.

I think this is fairly robust and expected behaviour.
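The invalidation rules above follow from how Docker's build cache chains layers: a layer is reused only if its parent layer was reused and its own instruction (plus, for COPY, the copied content) is unchanged. A minimal sketch of that behaviour under a simplified model: hashing the parent key, the instruction text and the COPY source content is an assumption for illustration, not Docker's exact algorithm, and `dockerfile()` is a hypothetical cut-down layout whose instruction strings are never executed.

```python
import hashlib
from typing import Dict, List, Optional


def dockerfile(force_reinstall: str = "1") -> List[str]:
    """Hypothetical, cut-down layering: apt deps, then setup files, then sources."""
    return [
        "FROM python:3.6-slim",
        f"ENV FORCE_REINSTALL_APT_GET_DEPENDENCIES={force_reinstall}",
        "RUN apt-get update && apt-get install -y --no-install-recommends git",
        "COPY setup.py /opt/airflow/",
        "RUN pip install -e /opt/airflow",
        "COPY airflow /opt/airflow/airflow",
        "RUN apt-get update && apt-get upgrade -y && pip install -e /opt/airflow",
    ]


def layer_cache_keys(instructions: List[str], files: Dict[str, str]) -> List[str]:
    """Each key hashes the parent key, the instruction text and, for COPY, the source content."""
    keys = []
    parent = ""
    for instruction in instructions:
        digest = hashlib.sha256()
        digest.update(parent.encode())
        digest.update(instruction.encode())
        if instruction.startswith("COPY "):
            source = instruction.split()[1]
            digest.update(files.get(source, "").encode())
        parent = digest.hexdigest()
        keys.append(parent)
    return keys


def first_rebuilt_layer(old: List[str], new: List[str]) -> Optional[int]:
    """Index of the first changed layer; every later layer is rebuilt as well."""
    for index, (before, after) in enumerate(zip(old, new)):
        if before != after:
            return index
    return None


if __name__ == "__main__":
    v1 = {"setup.py": "v1", "airflow": "src v1"}
    base = layer_cache_keys(dockerfile(), v1)
    print(first_rebuilt_layer(base, layer_cache_keys(dockerfile(), {**v1, "airflow": "src v2"})))
    print(first_rebuilt_layer(base, layer_cache_keys(dockerfile(), {**v1, "setup.py": "v2"})))
    print(first_rebuilt_layer(base, layer_cache_keys(dockerfile("2"), v1)))
```

In this model a source change only re-runs the last two layers, a setup change re-runs from the setup COPY, and bumping the ENV value re-runs nearly everything, which mirrors points 2) to 4) above. RUN layers are keyed only by their command text, which is why an explicit ENV bump is needed to force a fresh `apt-get install`.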


[GitHub] feng-tao commented on issue #4459: [AIRFLOW-3649] Feature to add extra labels to kubernetes worker pods

feng-tao commented on issue #4459: [AIRFLOW-3649] Feature to add extra labels to kubernetes worker pods URL: https://github.com/apache/airflow/pull/4459#issuecomment-455347596 once you rebase with master, it should be good @wyndhblb

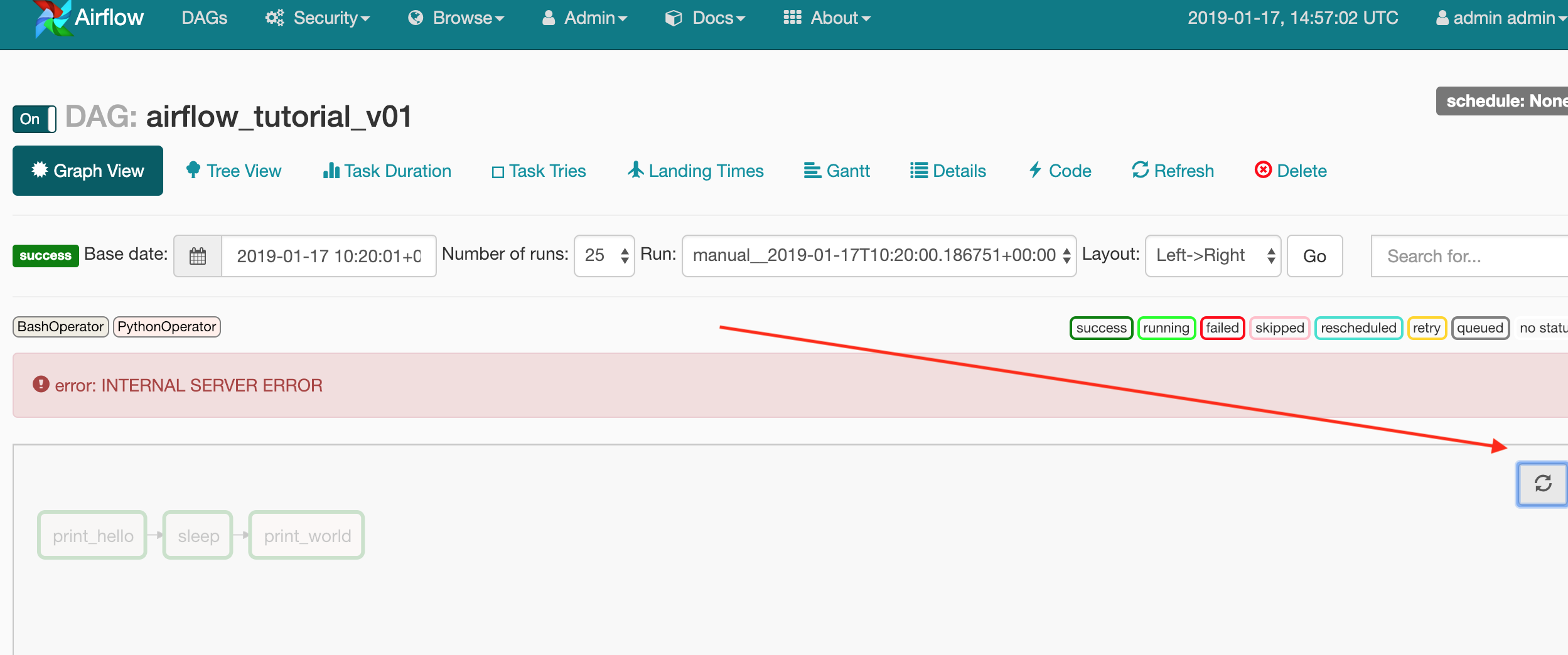

[jira] [Commented] (AIRFLOW-3724) Fix the broken refresh button on Graph View in RBAC UI

[ https://issues.apache.org/jira/browse/AIRFLOW-3724?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel=16745544#comment-16745544 ] ASF subversion and git services commented on AIRFLOW-3724: -- Commit 559d78de052227828a12ace6b01eb64ff66ffb72 in airflow's branch refs/heads/v1-10-stable from Kaxil Naik [ https://gitbox.apache.org/repos/asf?p=airflow.git;h=559d78d ] [AIRFLOW-3724] Fix the broken refresh button on Graph View in RBAC UI > Fix the broken refresh button on Graph View in RBAC UI > -- > > Key: AIRFLOW-3724 > URL: https://issues.apache.org/jira/browse/AIRFLOW-3724 > Project: Apache Airflow > Issue Type: Bug > Components: ui >Affects Versions: 1.10.2 >Reporter: Kaxil Naik >Assignee: Kaxil Naik >Priority: Minor > Fix For: 1.10.2 > > > Pressing on the refresh button in Graph View results in an error `error: > INTERNAL SERVER ERROR` as shown in the attachment.

[jira] [Commented] (AIRFLOW-3724) Fix the broken refresh button on Graph View in RBAC UI

[ https://issues.apache.org/jira/browse/AIRFLOW-3724?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel=16745543#comment-16745543 ] ASF subversion and git services commented on AIRFLOW-3724: -- Commit 559d78de052227828a12ace6b01eb64ff66ffb72 in airflow's branch refs/heads/v1-10-test from Kaxil Naik [ https://gitbox.apache.org/repos/asf?p=airflow.git;h=559d78d ] [AIRFLOW-3724] Fix the broken refresh button on Graph View in RBAC UI > Fix the broken refresh button on Graph View in RBAC UI > -- > > Key: AIRFLOW-3724 > URL: https://issues.apache.org/jira/browse/AIRFLOW-3724 > Project: Apache Airflow > Issue Type: Bug > Components: ui >Affects Versions: 1.10.2 >Reporter: Kaxil Naik >Assignee: Kaxil Naik >Priority: Minor > Fix For: 1.10.2 > > > Pressing on the refresh button in Graph View results in an error `error: > INTERNAL SERVER ERROR` as shown in the attachment.

svn commit: r32026 - /dev/airflow/1.10.2rc2/

Author: kaxilnaik Date: Thu Jan 17 21:49:15 2019 New Revision: 32026 Log: Add artefacts for Airflow 1.10.2rc2 Added: dev/airflow/1.10.2rc2/ dev/airflow/1.10.2rc2/apache-airflow-1.10.2rc2-bin.tar.gz (with props) dev/airflow/1.10.2rc2/apache-airflow-1.10.2rc2-bin.tar.gz.asc dev/airflow/1.10.2rc2/apache-airflow-1.10.2rc2-bin.tar.gz.md5 dev/airflow/1.10.2rc2/apache-airflow-1.10.2rc2-bin.tar.gz.sha512 dev/airflow/1.10.2rc2/apache-airflow-1.10.2rc2-source.tar.gz (with props) dev/airflow/1.10.2rc2/apache-airflow-1.10.2rc2-source.tar.gz.asc dev/airflow/1.10.2rc2/apache-airflow-1.10.2rc2-source.tar.gz.md5 dev/airflow/1.10.2rc2/apache-airflow-1.10.2rc2-source.tar.gz.sha512 Added: dev/airflow/1.10.2rc2/apache-airflow-1.10.2rc2-bin.tar.gz == Binary file - no diff available. Propchange: dev/airflow/1.10.2rc2/apache-airflow-1.10.2rc2-bin.tar.gz -- svn:mime-type = application/octet-stream Added: dev/airflow/1.10.2rc2/apache-airflow-1.10.2rc2-bin.tar.gz.asc == --- dev/airflow/1.10.2rc2/apache-airflow-1.10.2rc2-bin.tar.gz.asc (added) +++ dev/airflow/1.10.2rc2/apache-airflow-1.10.2rc2-bin.tar.gz.asc Thu Jan 17 21:49:15 2019 @@ -0,0 +1,11 @@ +-BEGIN PGP SIGNATURE- + +iQEzBAABCAAdFiEE99i2+6MdZAxeQM4i3XSEoCXxdJQFAlxA94UACgkQ3XSEoCXx +dJRzXQf/SlWqw6otq2GLZrKrpz879e4Kmohqt8w4SgmrPl8T9Y4asl90LZ6YDRX7 +Lyyfvh/Z55eNknYTrBcdoUOmFbsXTWxBNg/3KtDzHKWMQkeYVxaoBkXFiDAm5dY2 +XSWzKyC/arjIpnxBpuuW3M0K/oAeJ5cdgu1CgEjbbAcx+xC+RcC/dEh95Hqn7NC9 +nN3p5m/DWeYRA+73jei2KzPlHUaS7SUrhI9qsCAJhQtxyvKHsaDjHbet1oPI6162 +4e5xeianDEV2TXEP85X9GHqJz4mO/ewjw3CZs5d1SIS9jlSHcZxuL7nc5k747G6K +2iPgN2nGFPj38hnHTxX2TxijzzczhA== +=GmvN +-END PGP SIGNATURE- Added: dev/airflow/1.10.2rc2/apache-airflow-1.10.2rc2-bin.tar.gz.md5 == --- dev/airflow/1.10.2rc2/apache-airflow-1.10.2rc2-bin.tar.gz.md5 (added) +++ dev/airflow/1.10.2rc2/apache-airflow-1.10.2rc2-bin.tar.gz.md5 Thu Jan 17 21:49:15 2019 @@ -0,0 +1,2 @@ +apache-airflow-1.10.2rc2-bin.tar.gz: 1C 4A 4A A9 79 C1 4B 22 A5 72 FB CC CC 23 + B6 3F Added: 
dev/airflow/1.10.2rc2/apache-airflow-1.10.2rc2-bin.tar.gz.sha512 == --- dev/airflow/1.10.2rc2/apache-airflow-1.10.2rc2-bin.tar.gz.sha512 (added) +++ dev/airflow/1.10.2rc2/apache-airflow-1.10.2rc2-bin.tar.gz.sha512 Thu Jan 17 21:49:15 2019 @@ -0,0 +1,4 @@ +apache-airflow-1.10.2rc2-bin.tar.gz: E82778D9 8C8A59A9 EA1D2EDE 374E326F + A156E39B EAB77096 0CAFD617 7F501EFF + B4045CC7 9DD2A87A 8C8F8C40 910DDD4D + F2FFD7D7 CA2D5A5F 192C8553 4524A3A8 Added: dev/airflow/1.10.2rc2/apache-airflow-1.10.2rc2-source.tar.gz == Binary file - no diff available. Propchange: dev/airflow/1.10.2rc2/apache-airflow-1.10.2rc2-source.tar.gz -- svn:mime-type = application/octet-stream Added: dev/airflow/1.10.2rc2/apache-airflow-1.10.2rc2-source.tar.gz.asc == --- dev/airflow/1.10.2rc2/apache-airflow-1.10.2rc2-source.tar.gz.asc (added) +++ dev/airflow/1.10.2rc2/apache-airflow-1.10.2rc2-source.tar.gz.asc Thu Jan 17 21:49:15 2019 @@ -0,0 +1,11 @@ +-BEGIN PGP SIGNATURE- + +iQEzBAABCAAdFiEE99i2+6MdZAxeQM4i3XSEoCXxdJQFAlxA93sACgkQ3XSEoCXx +dJRM5wf6AodB3LEpAs2C8+Lo5sJRPo/0XOGCgsw1eAFa5+VnobY+hxx4FcY8xedR +/i9SpiQVT0V2wmUP4TPefpnag0Yhr3HUuVGMet08NcxF6YH0CW/877l71orga/CF +z+Iax+raIYx+qeOCjs6MQ8l90Ljoqca41frht7pjwKZFK5UGiXHokTT+OO5M6uvX +IgDW41woiYIcFbi8RhvMb1x+vOTv30JOiyO/U0siOClXgy5n3QwMaYVM0dqxCJ+q +IVMBvVPxAX7hcgx59Vs6KtXeXOkxE0tc0tMOb5m+fZFd7jIOZfifzMV9uPcr5CYS +zRP2tHtXNHkeVQu9xehicpgVoHynGw== +=cewv +-END PGP SIGNATURE- Added: dev/airflow/1.10.2rc2/apache-airflow-1.10.2rc2-source.tar.gz.md5 == --- dev/airflow/1.10.2rc2/apache-airflow-1.10.2rc2-source.tar.gz.md5 (added) +++ dev/airflow/1.10.2rc2/apache-airflow-1.10.2rc2-source.tar.gz.md5 Thu Jan 17 21:49:15 2019 @@ -0,0 +1,2 @@ +apache-airflow-1.10.2rc2-source.tar.gz: BE 8B 56 2C E6 87 24 9D BE 85 7B F4 98 +CB 10 9C Added: dev/airflow/1.10.2rc2/apache-airflow-1.10.2rc2-source.tar.gz.sha512 == --- dev/airflow/1.10.2rc2/apache-airflow-1.10.2rc2-source.tar.gz.sha512 (added) +++
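The `.sha512` and `.md5` files above record a file name followed by the digest in upper-case hex groups that may wrap over several lines, which looks like the output of `gpg --print-md`. A minimal sketch for checking a downloaded artefact against such a file, assuming exactly that `name: HEX HEX ...` layout; `parse_print_md` and `verify` are hypothetical helpers, not part of any release tooling.

```python
import hashlib
from typing import Tuple


def parse_print_md(text: str) -> Tuple[str, str]:
    """Split 'name: HEX HEX ...' (possibly wrapped over lines) into (name, lowercase hex)."""
    name, _, digest = text.partition(":")
    # Drop every space and newline between the hex groups.
    return name.strip(), "".join(digest.split()).lower()


def verify(data: bytes, checksum_text: str, algorithm: str = "sha512") -> bool:
    """Compare the digest of `data` with the one recorded in `checksum_text`."""
    _, expected = parse_print_md(checksum_text)
    return hashlib.new(algorithm, data).hexdigest() == expected


if __name__ == "__main__":
    payload = b"artefact bytes"
    hex_digest = hashlib.sha512(payload).hexdigest().upper()
    groups = [hex_digest[i:i + 8] for i in range(0, len(hex_digest), 8)]
    record = "example.tar.gz: " + " ".join(groups[:4]) + "\n    " + " ".join(groups[4:])
    print(verify(payload, record))
```

In practice `data` would be the bytes of the downloaded archive (for example `open(path, "rb").read()`); the detached `.asc` signature still has to be checked separately, e.g. with `gpg --verify`.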

[GitHub] idavison commented on issue #4294: [AIRFLOW-3451] Add UI button to refresh all dags

idavison commented on issue #4294: [AIRFLOW-3451] Add UI button to refresh all dags URL: https://github.com/apache/airflow/pull/4294#issuecomment-455342669 @ron819 rebased

[jira] [Commented] (AIRFLOW-3724) Fix the broken refresh button on Graph View in RBAC UI

[ https://issues.apache.org/jira/browse/AIRFLOW-3724?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel=16745524#comment-16745524 ] ASF GitHub Bot commented on AIRFLOW-3724: - kaxil commented on pull request #4548: [AIRFLOW-3724] Fix the broken refresh button on Graph View in RBAC UI URL: https://github.com/apache/airflow/pull/4548 > Fix the broken refresh button on Graph View in RBAC UI > -- > > Key: AIRFLOW-3724 > URL: https://issues.apache.org/jira/browse/AIRFLOW-3724 > Project: Apache Airflow > Issue Type: Bug > Components: ui >Affects Versions: 1.10.2 >Reporter: Kaxil Naik >Assignee: Kaxil Naik >Priority: Minor > > Pressing on the refresh button in Graph View results in an error `error: > INTERNAL SERVER ERROR` as shown in the attachment.

[jira] [Commented] (AIRFLOW-3724) Fix the broken refresh button on Graph View in RBAC UI

[ https://issues.apache.org/jira/browse/AIRFLOW-3724?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel=16745525#comment-16745525 ] ASF subversion and git services commented on AIRFLOW-3724: -- Commit 0bd6d87e37f55a49768d30110d675e84a763103d in airflow's branch refs/heads/master from Kaxil Naik [ https://gitbox.apache.org/repos/asf?p=airflow.git;h=0bd6d87 ] [AIRFLOW-3724] Fix the broken refresh button on Graph View in RBAC UI (#4548) > Fix the broken refresh button on Graph View in RBAC UI > -- > > Key: AIRFLOW-3724 > URL: https://issues.apache.org/jira/browse/AIRFLOW-3724 > Project: Apache Airflow > Issue Type: Bug > Components: ui >Affects Versions: 1.10.2 >Reporter: Kaxil Naik >Assignee: Kaxil Naik >Priority: Minor > > Pressing on the refresh button in Graph View results in an error `error: > INTERNAL SERVER ERROR` as shown in the attachment.

[jira] [Resolved] (AIRFLOW-3724) Fix the broken refresh button on Graph View in RBAC UI

[ https://issues.apache.org/jira/browse/AIRFLOW-3724?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ] Kaxil Naik resolved AIRFLOW-3724. - Resolution: Fixed Fix Version/s: 1.10.2 > Fix the broken refresh button on Graph View in RBAC UI > -- > > Key: AIRFLOW-3724 > URL: https://issues.apache.org/jira/browse/AIRFLOW-3724 > Project: Apache Airflow > Issue Type: Bug > Components: ui >Affects Versions: 1.10.2 >Reporter: Kaxil Naik >Assignee: Kaxil Naik >Priority: Minor > Fix For: 1.10.2 > > > Pressing on the refresh button in Graph View results in an error `error: > INTERNAL SERVER ERROR` as shown in the attachment.

[GitHub] kaxil merged pull request #4548: [AIRFLOW-3724] Fix the broken refresh button on Graph View in RBAC UI

kaxil merged pull request #4548: [AIRFLOW-3724] Fix the broken refresh button on Graph View in RBAC UI URL: https://github.com/apache/airflow/pull/4548

[GitHub] codecov-io commented on issue #4548: [AIRFLOW-3724] Fix the broken refresh button on Graph View in RBAC UI

codecov-io commented on issue #4548: [AIRFLOW-3724] Fix the broken refresh button on Graph View in RBAC UI URL: https://github.com/apache/airflow/pull/4548#issuecomment-455333956

# [Codecov](https://codecov.io/gh/apache/airflow/pull/4548?src=pr=h1) Report
> Merging [#4548](https://codecov.io/gh/apache/airflow/pull/4548?src=pr=desc) into [master](https://codecov.io/gh/apache/airflow/commit/3cc9f75da9e12642633aa30193e230dc96a4af89?src=pr=desc) will **increase** coverage by `0.01%`.
> The diff coverage is `n/a`.

```diff
@@            Coverage Diff             @@
##           master    #4548      +/-   ##
==========================================
+ Coverage   74.07%   74.08%   +0.01%
==========================================
  Files         421      421
  Lines       27649    27665      +16
==========================================
+ Hits        20481    20496      +15
- Misses       7168     7169       +1
```

| [Impacted Files](https://codecov.io/gh/apache/airflow/pull/4548?src=pr=tree) | Coverage Δ | |
|---|---|---|
| [airflow/sensors/external\_task\_sensor.py](https://codecov.io/gh/apache/airflow/pull/4548/diff?src=pr=tree#diff-YWlyZmxvdy9zZW5zb3JzL2V4dGVybmFsX3Rhc2tfc2Vuc29yLnB5) | `96.29% <0%> (-1.21%)` | :arrow_down: |
| [airflow/contrib/hooks/gcp\_spanner\_hook.py](https://codecov.io/gh/apache/airflow/pull/4548/diff?src=pr=tree#diff-YWlyZmxvdy9jb250cmliL2hvb2tzL2djcF9zcGFubmVyX2hvb2sucHk=) | `72.07% <0%> (ø)` | :arrow_up: |
| [airflow/contrib/hooks/gcp\_function\_hook.py](https://codecov.io/gh/apache/airflow/pull/4548/diff?src=pr=tree#diff-YWlyZmxvdy9jb250cmliL2hvb2tzL2djcF9mdW5jdGlvbl9ob29rLnB5) | `73.07% <0%> (ø)` | :arrow_up: |
| [airflow/contrib/hooks/gcp\_sql\_hook.py](https://codecov.io/gh/apache/airflow/pull/4548/diff?src=pr=tree#diff-YWlyZmxvdy9jb250cmliL2hvb2tzL2djcF9zcWxfaG9vay5weQ==) | `69.21% <0%> (ø)` | :arrow_up: |
| [airflow/contrib/hooks/gcp\_bigtable\_hook.py](https://codecov.io/gh/apache/airflow/pull/4548/diff?src=pr=tree#diff-YWlyZmxvdy9jb250cmliL2hvb2tzL2djcF9iaWd0YWJsZV9ob29rLnB5) | `93.22% <0%> (ø)` | :arrow_up: |
| [airflow/contrib/hooks/snowflake\_hook.py](https://codecov.io/gh/apache/airflow/pull/4548/diff?src=pr=tree#diff-YWlyZmxvdy9jb250cmliL2hvb2tzL3Nub3dmbGFrZV9ob29rLnB5) | `73.68% <0%> (+1.46%)` | :arrow_up: |

--
[Continue to review full report at Codecov](https://codecov.io/gh/apache/airflow/pull/4548?src=pr=continue).
> **Legend** - [Click here to learn more](https://docs.codecov.io/docs/codecov-delta)
> `Δ = absolute (impact)`, `ø = not affected`, `? = missing data`
> Powered by [Codecov](https://codecov.io/gh/apache/airflow/pull/4548?src=pr=footer). Last update [3cc9f75...bc20f4a](https://codecov.io/gh/apache/airflow/pull/4548?src=pr=lastupdated). Read the [comment docs](https://docs.codecov.io/docs/pull-request-comments).

[GitHub] mik-laj commented on a change in pull request #2455: [AIRFLOW-1423] Add logs to the scheduler DAG run decision logic

mik-laj commented on a change in pull request #2455: [AIRFLOW-1423] Add logs to the scheduler DAG run decision logic

URL: https://github.com/apache/airflow/pull/2455#discussion_r240830440

##

File path: airflow/jobs.py

##

@@ -786,6 +788,9 @@ def create_dag_run(self, dag, session=None):

)

# return if already reached maximum active runs and no timeout setting

if len(active_runs) >= dag.max_active_runs and not dag.dagrun_timeout:

+self.logger.info(

+"Dag reached maximum of {} active runs (no timeout)".

Review comment:

Formatting the message like this always creates a string object, which may

then never be used at all if the logger's level filters the message out.

Formatting parameters should be passed as arguments to the `info` method.

Example:

```python

self.log.info("The Table '%s' does not exists already.", self.table_id)

```
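A minimal sketch of the difference, using only the standard `logging` module. `CountingArg` and the logger name are hypothetical and exist only to count how often the argument is turned into a string; the logger's threshold is set to WARNING so the INFO calls are filtered out.

```python
import logging


class CountingArg:
    """Hypothetical helper that counts how often it is rendered as a string."""

    def __init__(self, value):
        self.value = value
        self.renders = 0

    def __str__(self):
        self.renders += 1
        return str(self.value)


# Hypothetical logger whose threshold is WARNING, so INFO messages are dropped.
logger = logging.getLogger("lazy_format_demo")
logger.setLevel(logging.WARNING)

eager = CountingArg(16)
lazy = CountingArg(16)

# Eager: str.format() builds the message before logger.info() is called,
# even though the record is then discarded.
logger.info("Dag reached maximum of {} active runs (no timeout)".format(eager))

# Lazy: logging checks the level first; the %s interpolation only happens
# later, when a handler formats a record that passed the level check.
logger.info("Dag reached maximum of %s active runs (no timeout)", lazy)

print(eager.renders, lazy.renders)  # 1 0
```

The eager call pays for building the string even though nothing is logged; the lazy call skips that work entirely.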


[GitHub] mik-laj commented on a change in pull request #4494: [AIRFLOW-3284] Azure Batch AI Operator

mik-laj commented on a change in pull request #4494: [AIRFLOW-3284] Azure Batch AI Operator

URL: https://github.com/apache/airflow/pull/4494#discussion_r248836913

##

File path: airflow/contrib/hooks/azure_batchai_hook.py

##

@@ -0,0 +1,111 @@

+# -*- coding: utf-8 -*-

+#

+# Licensed under the Apache License, Version 2.0 (the "License");

+# you may not use this file except in compliance with the License.

+# You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing, software

+# distributed under the License is distributed on an "AS IS" BASIS,

+# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+# See the License for the specific language governing permissions and

+# limitations under the License.

+#

+

+import os

+

+from airflow.hooks.base_hook import BaseHook

+from airflow.exceptions import AirflowException

+

+from azure.common.client_factory import get_client_from_auth_file

+from azure.common.credentials import ServicePrincipalCredentials

+

+from azure.mgmt.batchai import BatchAIManagementClient

+

+

+class AzureBatchAIHook(BaseHook):

+"""

+Interact with Azure Batch AI

+:param azure_batchai_conn_id: Reference to the Azure Batch AI connection

+:type azure_batchai_conn_id: str

+:param config_data: JSON Object with credential and subscription information

+:type config_data: str

+"""

+

+def __init__(self, azure_batchai_conn_id='azure_batchai_default', config_data=None):

+self.conn_id = azure_batchai_conn_id

+self.connection = self.get_conn()

+self.configData = config_data

+self.credentials = None

+self.subscription_id = None

+

+def get_conn(self):

+try:

+conn = self.get_connection(self.conn_id)

+key_path = conn.extra_dejson.get('key_path', False)

+if key_path:

+if key_path.endswith('.json'):

+self.log.info('Getting connection using a JSON key file.')

+return get_client_from_auth_file(BatchAIManagementClient,

+ key_path)

+else:

+raise AirflowException('Unrecognised extension for key file.')

+

+elif os.environ.get('AZURE_AUTH_LOCATION'):

Review comment:

The user can define a new connection using an `AIRFLOW_CONN_*` environment

variable, for example `AIRFLOW_CONN_AZURE_DEFAULT`. Official documentation

for this is missing, but you can look at this PR:

https://github.com/apache/airflow/pull/4523/files

I found another hook with very similar code, so if this logic really is

needed, it should reuse the code that is already in the project rather than

duplicate it.

https://github.com/apache/airflow/blob/master/airflow/contrib/hooks/azure_container_instance_hook.py
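A rough sketch of how such an environment variable maps onto connection fields, using only `urllib.parse`. This is not Airflow's implementation: `connection_from_env` is a hypothetical helper, the `azure` scheme and the `key_path` extra are illustrative, and passing extras as query parameters is an assumption. The variable name follows the `AIRFLOW_CONN_` prefix plus the upper-cased `conn_id`. The example sticks to a scheme without underscores, because `urlsplit` does not accept `_` in a URI scheme.

```python
import os
from urllib.parse import parse_qsl, unquote, urlsplit

# Prefix used when looking up connections in the environment.
CONN_ENV_PREFIX = "AIRFLOW_CONN_"


def conn_env_var_name(conn_id):
    """Environment variable name for a connection id, e.g. azure_default."""
    return CONN_ENV_PREFIX + conn_id.upper()


def connection_from_env(conn_id, environ=None):
    """Hypothetical helper: parse a connection URI from the environment.

    Returns a dict of connection fields, or None if the variable is unset.
    """
    environ = os.environ if environ is None else environ
    uri = environ.get(conn_env_var_name(conn_id))
    if uri is None:
        return None
    parts = urlsplit(uri)
    return {
        "conn_id": conn_id,
        "conn_type": parts.scheme,
        "host": parts.hostname,
        "login": unquote(parts.username) if parts.username else None,
        "password": unquote(parts.password) if parts.password else None,
        "port": parts.port,
        "schema": parts.path.lstrip("/"),
        # Assumption: extra settings such as key_path arrive as query params.
        "extra": dict(parse_qsl(parts.query)),
    }


env = {
    "AIRFLOW_CONN_AZURE_DEFAULT":
        "azure://user:secret@example.com/?key_path=%2Fopt%2Fauth.json",
}
conn = connection_from_env("azure_default", env)
print(conn["conn_type"], conn["login"], conn["extra"])
# azure user {'key_path': '/opt/auth.json'}
```

With a single variable like this, the credentials live outside the hook code, which is the reviewer's argument for not adding a separate `AZURE_AUTH_LOCATION` lookup.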


[GitHub] mik-laj commented on a change in pull request #4494: [AIRFLOW-3284] Azure Batch AI Operator

mik-laj commented on a change in pull request #4494: [AIRFLOW-3284] Azure Batch AI Operator

URL: https://github.com/apache/airflow/pull/4494#discussion_r248833770

##

File path: airflow/utils/db.py

##

@@ -280,6 +280,14 @@ def initdb(rbac=False):

Connection(

conn_id='azure_cosmos_default', conn_type='azure_cosmos',

extra='{"database_name": "", "collection_name": "" }'))

+merge_conn(

Review comment:

I suggest using `azure_default` here, and then preparing another PR that

changes the other operators to use it as well.

Look at it from the user's perspective: would you want to enter the same

password several times? That would not be convenient.
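A minimal, self-contained sketch of the reviewer's point, with no Airflow dependency. `Connection`, `merge_conn`, and the hook classes below are simplified stand-ins with hypothetical names, not Airflow's classes; this `merge_conn` only mirrors the add-if-missing idea of the `merge_conn` calls in the diff. Because both hooks default to `azure_default`, the credentials are stored once and shared.

```python
class Connection:
    """Simplified stand-in for airflow.models.Connection (not the real class)."""

    def __init__(self, conn_id, conn_type, password=None):
        self.conn_id = conn_id
        self.conn_type = conn_type
        self.password = password


def merge_conn(conn, registry):
    """Register conn only if its conn_id is not already present."""
    registry.setdefault(conn.conn_id, conn)
    return registry[conn.conn_id]


class AzureHookSketch:
    """Hypothetical base class: all Azure hooks share one default connection id."""

    default_conn_id = "azure_default"

    def __init__(self, registry, conn_id=None):
        self.connection = registry[conn_id or self.default_conn_id]


class BatchAIHookSketch(AzureHookSketch):
    pass


class ContainerInstanceHookSketch(AzureHookSketch):
    pass


registry = {}
merge_conn(Connection("azure_default", "azure", password="secret"), registry)
# A second default with the same id does not overwrite the first one.
merge_conn(Connection("azure_default", "azure"), registry)

batch = BatchAIHookSketch(registry)
containers = ContainerInstanceHookSketch(registry)
print(batch.connection is containers.connection)  # True
```

A hook that genuinely needs different credentials can still pass its own `conn_id`; the shared default only removes the need to enter the same secret once per hook.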


[GitHub] feng-tao commented on issue #4502: [AIRFLOW-3591] Fix start date, end date, duration for rescheduled tasks

feng-tao commented on issue #4502: [AIRFLOW-3591] Fix start date, end date, duration for rescheduled tasks URL: https://github.com/apache/airflow/pull/4502#issuecomment-455325072 LGTM, PTAL @ashb and see if he has any other comments.

[GitHub] mik-laj edited a comment on issue #4523: [AIRFLOW-3616] Add aliases for schema with underscore

mik-laj edited a comment on issue #4523: [AIRFLOW-3616] Add aliases for schema with underscore URL: https://github.com/apache/airflow/pull/4523#issuecomment-455198427 @ashb I have made all the requested changes.

[GitHub] kaxil commented on a change in pull request #4548: [AIRFLOW-3724] Fix the broken refresh button on Graph View in RBAC UI

kaxil commented on a change in pull request #4548: [AIRFLOW-3724] Fix the broken refresh button on Graph View in RBAC UI

URL: https://github.com/apache/airflow/pull/4548#discussion_r248829454

##

File path: airflow/www/templates/airflow/graph.html

##

@@ -112,8 +112,8 @@

// Below variables are being used in dag.js

var tasks = {{ tasks|safe }};

var task_instances = {{ task_instances|safe }};

-var getTaskInstanceURL = '{{ url_for("Airflow.task_instances", dag_id='+ dag.dag_id + ', execution_date=' + execution_date + ') }}';

+var getTaskInstanceURL = "{{ url_for('Airflow.task_instances', execution_date=execution_date)}}&dag_id={{dag.dag_id}}";

Review comment:

Updated as per your suggestion


[jira] [Closed] (AIRFLOW-2165) XCOM values are being saved as bytestring

[ https://issues.apache.org/jira/browse/AIRFLOW-2165?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

Kaxil Naik closed AIRFLOW-2165.
Resolution: Not A Problem

> XCOM values are being saved as bytestring
>
> Key: AIRFLOW-2165
> URL: https://issues.apache.org/jira/browse/AIRFLOW-2165
> Project: Apache Airflow
> Issue Type: Bug
> Components: xcom
> Affects Versions: 1.9.0
> Environment: Ubuntu
> Airflow 1.9.0 from PIP
> Reporter: Cong Qin
> Priority: Major
> Attachments: Screen Shot 2018-03-02 at 11.09.15 AM.png
>
> I noticed after upgrading to 1.9.0 that XCOM values are now being saved as
> byte strings that cannot be decoded. Once I downgraded back to 1.8.2 the
> "old" behavior is back.
> It means that when I'm storing certain values inside I cannot pull those
> values back out sometimes. I'm not sure if this was a documented change
> anywhere (I looked at the changelog between 1.8.2 and 1.9.0) and I couldn't
> find out if this was a config level change or something.

--
This message was sent by Atlassian JIRA
(v7.6.3#76005)

[jira] [Closed] (AIRFLOW-1850) Sqoop password is overwritten by reference