[jira] [Updated] (HDDS-4025) Add test for creating encrypted key

[ https://issues.apache.org/jira/browse/HDDS-4025?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

Bharat Viswanadham updated HDDS-4025:
-
Fix Version/s: 0.7.0
Resolution: Fixed
Status: Resolved (was: Patch Available)

> Add test for creating encrypted key
> ---
>
> Key: HDDS-4025
> URL: https://issues.apache.org/jira/browse/HDDS-4025
> Project: Hadoop Distributed Data Store
> Issue Type: Test
> Components: Ozone Manager
> Reporter: Attila Doroszlai
> Assignee: Attila Doroszlai
> Priority: Major
> Labels: pull-request-available
> Fix For: 0.7.0
>
> Add acceptance test to create a key in encrypted bucket.

--
This message was sent by Atlassian Jira
(v8.3.4#803005)

-
To unsubscribe, e-mail: ozone-issues-unsubscr...@hadoop.apache.org
For additional commands, e-mail: ozone-issues-h...@hadoop.apache.org

[GitHub] [hadoop-ozone] bharatviswa504 commented on pull request #1254: HDDS-4025. Add test for creating encrypted key

bharatviswa504 commented on pull request #1254: URL: https://github.com/apache/hadoop-ozone/pull/1254#issuecomment-663811868 Thank You @adoroszlai for the contribution and @xiaoyuyao for the review This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: ozone-issues-unsubscr...@hadoop.apache.org For additional commands, e-mail: ozone-issues-h...@hadoop.apache.org

[GitHub] [hadoop-ozone] bharatviswa504 merged pull request #1254: HDDS-4025. Add test for creating encrypted key

bharatviswa504 merged pull request #1254: URL: https://github.com/apache/hadoop-ozone/pull/1254

[GitHub] [hadoop-ozone] bharatviswa504 commented on pull request #1242: HDDS-4007. Generate encryption info for the bucket outside bucket lock.

bharatviswa504 commented on pull request #1242: URL: https://github.com/apache/hadoop-ozone/pull/1242#issuecomment-663810580 Thank You @adoroszlai for the review and test.

[jira] [Updated] (HDDS-3955) Unable to list intermediate paths on keys created using S3G.

[ https://issues.apache.org/jira/browse/HDDS-3955?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

Bharat Viswanadham updated HDDS-3955:
-
Issue Type: New Feature (was: Bug)

> Unable to list intermediate paths on keys created using S3G.
>
> Key: HDDS-3955
> URL: https://issues.apache.org/jira/browse/HDDS-3955
> Project: Hadoop Distributed Data Store
> Issue Type: New Feature
> Components: Ozone Manager
> Reporter: Aravindan Vijayan
> Assignee: Bharat Viswanadham
> Priority: Blocker
> Labels: pull-request-available
>
> Keys created via the S3 Gateway currently use the createKey OM API to create
> the Ozone key. Hence, when an HDFS client is used to list intermediate
> directories in the key, OM returns a "key not found" error. This was encountered
> while using fluentd to write Hive logs to Ozone via the S3 Gateway.
> cc [~bharat]
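The gap described in HDDS-3955 can be illustrated with a small sketch. A key written as one flat name, for example `logs/hive/2020/app.log`, implies several parent paths that a file-system client would expect to be able to list. The class and method names below (`IntermediatePaths`, `parentDirs`) are hypothetical and are not Ozone APIs; the sketch assumes an already normalized key name and says nothing about how the issue was eventually addressed.

```java
import java.util.ArrayList;
import java.util.List;

/** Hypothetical helper, not part of Ozone: lists the parent paths implied by a flat key name. */
final class IntermediatePaths {
  private IntermediatePaths() { }

  /** Returns every proper prefix of keyName that ends in '/', shortest first. */
  static List<String> parentDirs(String keyName) {
    List<String> dirs = new ArrayList<>();
    int slash = keyName.indexOf('/');
    while (slash >= 0) {
      // Skip the empty prefix of a leading '/' and the key itself if it ends in '/'.
      if (slash > 0 && slash < keyName.length() - 1) {
        dirs.add(keyName.substring(0, slash + 1));
      }
      slash = keyName.indexOf('/', slash + 1);
    }
    return dirs;
  }
}
```

If only the full key name is recorded, none of the prefixes returned by `parentDirs` exists as an entry of its own, which is consistent with the lookup failure the report describes.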

[jira] [Resolved] (HDDS-4007) Generate encryption info for the bucket outside bucket lock

[ https://issues.apache.org/jira/browse/HDDS-4007?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

Bharat Viswanadham resolved HDDS-4007.
--
Fix Version/s: 0.7.0
Resolution: Fixed

> Generate encryption info for the bucket outside bucket lock
> ---
>
> Key: HDDS-4007
> URL: https://issues.apache.org/jira/browse/HDDS-4007
> Project: Hadoop Distributed Data Store
> Issue Type: Improvement
> Components: Ozone Manager, Security
> Reporter: Bharat Viswanadham
> Assignee: Bharat Viswanadham
> Priority: Major
> Labels: pull-request-available
> Fix For: 0.7.0
>
> This Jira is to generate FileEncryption for a key outside the bucket lock.
> Right now, we hold the lock while making a network call to KMS to obtain
> encryption info.
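The idea behind HDDS-4007 is a general locking pattern: do the slow remote call first, then take the lock only for the in-memory update. The sketch below is not the Ozone Manager code. `EncryptionInfoOutsideLock`, `createKey`, and the `kmsCall` supplier are hypothetical stand-ins, and the "encryption info" is just a string.

```java
import java.util.concurrent.locks.ReentrantLock;
import java.util.function.Supplier;

/** Hypothetical sketch, not Ozone code: keep a slow remote call out of the critical section. */
final class EncryptionInfoOutsideLock {
  private final ReentrantLock bucketLock = new ReentrantLock();
  private String lastKeyInfo;

  /** kmsCall stands in for the network round trip to a KMS. */
  String createKey(String keyName, Supplier<String> kmsCall) {
    // 1. Slow, remote step first, with no lock held.
    String encryptionInfo = kmsCall.get();

    // 2. Only the short in-memory update runs under the lock.
    bucketLock.lock();
    try {
      lastKeyInfo = keyName + ":" + encryptionInfo;
      return lastKeyInfo;
    } finally {
      bucketLock.unlock();
    }
  }

  /** Exposed so a caller can observe whether the lock is held at a given moment. */
  boolean isLocked() {
    return bucketLock.isLocked();
  }
}
```

With this ordering, a slow or unreachable KMS delays only the request that needs it, rather than every request waiting on the same lock.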

[GitHub] [hadoop-ozone] bharatviswa504 merged pull request #1242: HDDS-4007. Generate encryption info for the bucket outside bucket lock.

bharatviswa504 merged pull request #1242: URL: https://github.com/apache/hadoop-ozone/pull/1242

[GitHub] [hadoop-ozone] codecov-commenter commented on pull request #1256: HDDS-4026. Dir rename failed when sets 'ozone.om.enable.filesystem.paths' to true

codecov-commenter commented on pull request #1256:
URL: https://github.com/apache/hadoop-ozone/pull/1256#issuecomment-663800442

# [Codecov](https://codecov.io/gh/apache/hadoop-ozone/pull/1256?src=pr=h1) Report
> Merging [#1256](https://codecov.io/gh/apache/hadoop-ozone/pull/1256?src=pr=desc) into [master](https://codecov.io/gh/apache/hadoop-ozone/commit/32ac7bf79529e30f2ec170456bf3a8803a380edd=desc) will **decrease** coverage by `0.12%`.
> The diff coverage is `100.00%`.

```diff
@@            Coverage Diff            @@
##           master    #1256     +/-   ##
- Coverage     73.75%   73.62%   -0.13%
+ Complexity    10122    10118       -4
  Files           974      974
  Lines         50123    50180      +57
  Branches       4881     4892      +11
- Hits          36967    36944      -23
- Misses        10824    10892      +68
- Partials       2332     2344      +12
```

| [Impacted Files](https://codecov.io/gh/apache/hadoop-ozone/pull/1256?src=pr=tree) | Coverage Δ | Complexity Δ | |
|---|---|---|---|
| [...adoop/ozone/om/request/key/OMKeyRenameRequest.java](https://codecov.io/gh/apache/hadoop-ozone/pull/1256/diff?src=pr=tree#diff-aGFkb29wLW96b25lL296b25lLW1hbmFnZXIvc3JjL21haW4vamF2YS9vcmcvYXBhY2hlL2hhZG9vcC9vem9uZS9vbS9yZXF1ZXN0L2tleS9PTUtleVJlbmFtZVJlcXVlc3QuamF2YQ==) | `93.10% <100.00%> (-0.45%)` | `10.00 <0.00> (ø)` | |
| [...x509/certificates/utils/SelfSignedCertificate.java](https://codecov.io/gh/apache/hadoop-ozone/pull/1256/diff?src=pr=tree#diff-aGFkb29wLWhkZHMvZnJhbWV3b3JrL3NyYy9tYWluL2phdmEvb3JnL2FwYWNoZS9oYWRvb3AvaGRkcy9zZWN1cml0eS94NTA5L2NlcnRpZmljYXRlcy91dGlscy9TZWxmU2lnbmVkQ2VydGlmaWNhdGUuamF2YQ==) | `76.59% <0.00%> (-15.95%)` | `7.00% <0.00%> (+1.00%)` | :arrow_down: |
| [...va/org/apache/hadoop/hdds/utils/db/RDBMetrics.java](https://codecov.io/gh/apache/hadoop-ozone/pull/1256/diff?src=pr=tree#diff-aGFkb29wLWhkZHMvZnJhbWV3b3JrL3NyYy9tYWluL2phdmEvb3JnL2FwYWNoZS9oYWRvb3AvaGRkcy91dGlscy9kYi9SREJNZXRyaWNzLmphdmE=) | `85.71% <0.00%> (-14.29%)` | `13.00% <0.00%> (-2.00%)` | |
| [...doop/hdds/scm/container/ContainerStateManager.java](https://codecov.io/gh/apache/hadoop-ozone/pull/1256/diff?src=pr=tree#diff-aGFkb29wLWhkZHMvc2VydmVyLXNjbS9zcmMvbWFpbi9qYXZhL29yZy9hcGFjaGUvaGFkb29wL2hkZHMvc2NtL2NvbnRhaW5lci9Db250YWluZXJTdGF0ZU1hbmFnZXIuamF2YQ==) | `81.53% <0.00%> (-6.93%)` | `31.00% <0.00%> (-3.00%)` | |
| [...java/org/apache/hadoop/hdds/utils/db/RDBTable.java](https://codecov.io/gh/apache/hadoop-ozone/pull/1256/diff?src=pr=tree#diff-aGFkb29wLWhkZHMvZnJhbWV3b3JrL3NyYy9tYWluL2phdmEvb3JnL2FwYWNoZS9oYWRvb3AvaGRkcy91dGlscy9kYi9SREJUYWJsZS5qYXZh) | `57.33% <0.00%> (-6.67%)` | `19.00% <0.00%> (-3.00%)` | |
| [...ozone/container/ozoneimpl/ContainerController.java](https://codecov.io/gh/apache/hadoop-ozone/pull/1256/diff?src=pr=tree#diff-aGFkb29wLWhkZHMvY29udGFpbmVyLXNlcnZpY2Uvc3JjL21haW4vamF2YS9vcmcvYXBhY2hlL2hhZG9vcC9vem9uZS9jb250YWluZXIvb3pvbmVpbXBsL0NvbnRhaW5lckNvbnRyb2xsZXIuamF2YQ==) | `63.15% <0.00%> (-5.27%)` | `11.00% <0.00%> (-1.00%)` | |
| [.../transport/server/ratis/ContainerStateMachine.java](https://codecov.io/gh/apache/hadoop-ozone/pull/1256/diff?src=pr=tree#diff-aGFkb29wLWhkZHMvY29udGFpbmVyLXNlcnZpY2Uvc3JjL21haW4vamF2YS9vcmcvYXBhY2hlL2hhZG9vcC9vem9uZS9jb250YWluZXIvY29tbW9uL3RyYW5zcG9ydC9zZXJ2ZXIvcmF0aXMvQ29udGFpbmVyU3RhdGVNYWNoaW5lLmphdmE=) | `71.07% <0.00%> (-5.16%)` | `62.00% <0.00%> (-3.00%)` | |
| [.../common/volume/RoundRobinVolumeChoosingPolicy.java](https://codecov.io/gh/apache/hadoop-ozone/pull/1256/diff?src=pr=tree#diff-aGFkb29wLWhkZHMvY29udGFpbmVyLXNlcnZpY2Uvc3JjL21haW4vamF2YS9vcmcvYXBhY2hlL2hhZG9vcC9vem9uZS9jb250YWluZXIvY29tbW9uL3ZvbHVtZS9Sb3VuZFJvYmluVm9sdW1lQ2hvb3NpbmdQb2xpY3kuamF2YQ==) | `80.95% <0.00%> (-4.77%)` | `5.00% <0.00%> (-1.00%)` | |
| [...e/commandhandler/CreatePipelineCommandHandler.java](https://codecov.io/gh/apache/hadoop-ozone/pull/1256/diff?src=pr=tree#diff-aGFkb29wLWhkZHMvY29udGFpbmVyLXNlcnZpY2Uvc3JjL21haW4vamF2YS9vcmcvYXBhY2hlL2hhZG9vcC9vem9uZS9jb250YWluZXIvY29tbW9uL3N0YXRlbWFjaGluZS9jb21tYW5kaGFuZGxlci9DcmVhdGVQaXBlbGluZUNvbW1hbmRIYW5kbGVyLmphdmE=) | `87.50% <0.00%> (-3.99%)` | `8.00% <0.00%> (ø%)` | |
| [...iner/common/transport/server/ratis/CSMMetrics.java](https://codecov.io/gh/apache/hadoop-ozone/pull/1256/diff?src=pr=tree#diff-aGFkb29wLWhkZHMvY29udGFpbmVyLXNlcnZpY2Uvc3JjL21haW4vamF2YS9vcmcvYXBhY2hlL2hhZG9vcC9vem9uZS9jb250YWluZXIvY29tbW9uL3RyYW5zcG9ydC9zZXJ2ZXIvcmF0aXMvQ1NNTWV0cmljcy5qYXZh) | `70.76% <0.00%> (-3.08%)` | `20.00% <0.00%>

[GitHub] [hadoop-ozone] prashantpogde opened a new pull request #1257: HDDS-3970. Enabling TestStorageContainerManager with all failures add…

prashantpogde opened a new pull request #1257:
URL: https://github.com/apache/hadoop-ozone/pull/1257

## What changes were proposed in this pull request?
ContainerStateManager: Addressing test failures

## What is the link to the Apache JIRA
https://issues.apache.org/jira/browse/HDDS-3970

## How was this patch tested?
Testing TestStorageContainerManager several times in a row for failures.

[jira] [Updated] (HDDS-3970) ContainerStateManager: invalid state transition state: CLOSING event: FINALIZE

[ https://issues.apache.org/jira/browse/HDDS-3970?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

ASF GitHub Bot updated HDDS-3970:
-
Labels: pull-request-available (was: )

> ContainerStateManager: invalid state transition state: CLOSING event: FINALIZE
> --
>
> Key: HDDS-3970
> URL: https://issues.apache.org/jira/browse/HDDS-3970
> Project: Hadoop Distributed Data Store
> Issue Type: Bug
> Components: SCM
> Reporter: Prashant Pogde
> Assignee: Prashant Pogde
> Priority: Major
> Labels: pull-request-available
> Attachments: TEST-org.apache.hadoop.ozone.TestStorageContainerManager.xml
>
> An invalid state transition was detected during the Storage Container Manager test.
> It does not happen on every run, only occasionally, so a timing issue is
> probably involved.
> Please see the details below:
> classname="org.apache.hadoop.ozone.TestStorageContainerManager" time="20.12">
> type="org.apache.hadoop.hdds.scm.exceptions.SCMException">org.apache.hadoop.hdds.scm.exceptions.SCMException:
> Failed to update container state #5, reason: invalid state transition from
> state: CLOSING upon event: FINALIZE.
>     at org.apache.hadoop.hdds.scm.container.ContainerStateManager.updateContainerState(ContainerStateManager.java:357)
>     at org.apache.hadoop.hdds.scm.container.SCMContainerManager.updateContainerState(SCMContainerManager.java:345)
>     at org.apache.hadoop.hdds.scm.container.SCMContainerManager.updateContainerState(SCMContainerManager.java:331)
>     at org.apache.hadoop.ozone.TestStorageContainerManager.testCloseContainerCommandOnRestart(TestStorageContainerManager.java:606)
>     at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)
>     at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)
>     at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)
>     at java.lang.reflect.Method.invoke(Method.java:498)
>     at org.junit.runners.model.FrameworkMethod$1.runReflectiveCall(FrameworkMethod.java:47)
>     at org.junit.internal.runners.model.ReflectiveCallable.run(ReflectiveCallable.java:12)
>     at org.junit.runners.model.FrameworkMethod.invokeExplosively(FrameworkMethod.java:44)
>     at org.junit.internal.runners.statements.InvokeMethod.evaluate(InvokeMethod.java:17)
>     at org.junit.internal.runners.statements.FailOnTimeout$StatementThread.run(FailOnTimeout.java:74)
>
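The error in HDDS-3970 has the shape of a table-driven state machine rejecting an event that is not defined for the current state. The sketch below is a simplified illustration only: `ContainerLifecycle`, its three states, and its two transitions are assumptions made for the example and are not SCM's actual transition table. It shows one way such a failure can surface intermittently, namely when something else has already moved the container to CLOSING before a test sends FINALIZE.

```java
import java.util.EnumMap;
import java.util.Map;

/** Hypothetical sketch; the states and transitions are a simplified assumption, not SCM's table. */
final class ContainerLifecycle {
  enum State { OPEN, CLOSING, CLOSED }
  enum Event { FINALIZE, CLOSE }

  private static final Map<State, Map<Event, State>> TRANSITIONS = new EnumMap<>(State.class);

  static {
    Map<Event, State> fromOpen = new EnumMap<>(Event.class);
    fromOpen.put(Event.FINALIZE, State.CLOSING);
    TRANSITIONS.put(State.OPEN, fromOpen);

    Map<Event, State> fromClosing = new EnumMap<>(Event.class);
    fromClosing.put(Event.CLOSE, State.CLOSED);
    TRANSITIONS.put(State.CLOSING, fromClosing);
  }

  private ContainerLifecycle() { }

  /** Returns the target state, or throws if the event is not defined for the current state. */
  static State next(State current, Event event) {
    Map<Event, State> allowed = TRANSITIONS.get(current);
    State target = (allowed == null) ? null : allowed.get(event);
    if (target == null) {
      throw new IllegalStateException(
          "invalid state transition from state: " + current + " upon event: " + event);
    }
    return target;
  }
}
```

In this model, `next(OPEN, FINALIZE)` succeeds, while a second FINALIZE against the resulting CLOSING state throws. Whether the flaky test fails for this reason is not established by the report.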

[jira] [Updated] (HDDS-4026) Dir rename failed when sets 'ozone.om.enable.filesystem.paths' to true

[

https://issues.apache.org/jira/browse/HDDS-4026?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel

]

ASF GitHub Bot updated HDDS-4026:

-

Labels: pull-request-available (was: )

> Dir rename failed when sets 'ozone.om.enable.filesystem.paths' to true

> --

>

> Key: HDDS-4026

> URL: https://issues.apache.org/jira/browse/HDDS-4026

> Project: Hadoop Distributed Data Store

> Issue Type: Bug

>Reporter: Rakesh Radhakrishnan

>Assignee: Bharat Viswanadham

>Priority: Blocker

> Labels: pull-request-available

>

> Set ozone.om.enable.filesystem.paths=true, then start the Ozone cluster.
> {code:java}
> [root~]$ ozone fs -mkdir o3fs://bucket2.vol2.ozone1/subdir2
> [root~]$ ozone fs -mv o3fs://bucket2.vol2.ozone1/subdir2 o3fs://bucket2.vol2.ozone1/subdir2-renamed
> mv: Key not found /vol2/bucket2/subdir2
> {code}

>


[GitHub] [hadoop-ozone] bharatviswa504 opened a new pull request #1256: HDDS-4026. Dir rename failed when sets 'ozone.om.enable.filesystem.paths' to true

bharatviswa504 opened a new pull request #1256:
URL: https://github.com/apache/hadoop-ozone/pull/1256

## What changes were proposed in this pull request?
Rename failed for directories when ozone.om.enable.filesystem.paths is set to true. As rename is not used by the ObjectStore API, we don't need to normalize the path when ozone.om.enable.filesystem.paths is set to true.

O3FS uses `deleteObjects` for deleting a directory and `deleteKey` for deleting a file/key. So normalizing the path when the config is enabled should be fine, as `deleteKey` is only used for deleting files. (Note: `deleteObject` does not normalize paths.)

A fix is still required for OFS delete, as it uses `deleteKey` for deleting directories. I will open a Jira to fix the issue.

## What is the link to the Apache JIRA
https://issues.apache.org/jira/browse/HDDS-4026

## How was this patch tested?
Added tests for rename and delete.

[GitHub] [hadoop-ozone] xiaoyuyao commented on pull request #1254: HDDS-4025. Add test for creating encrypted key

xiaoyuyao commented on pull request #1254: URL: https://github.com/apache/hadoop-ozone/pull/1254#issuecomment-663781002 Thanks @adoroszlai for working on this. LGTM, +1.

[jira] [Resolved] (HDDS-3997) Ozone certificate needs additional flags and SAN extension for GRPC TLS.

[ https://issues.apache.org/jira/browse/HDDS-3997?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

Xiaoyu Yao resolved HDDS-3997.
--
Fix Version/s: 0.6.0
Resolution: Fixed

> Ozone certificate needs additional flags and SAN extension for GRPC TLS.
>
> Key: HDDS-3997
> URL: https://issues.apache.org/jira/browse/HDDS-3997
> Project: Hadoop Distributed Data Store
> Issue Type: Improvement
> Reporter: Xiaoyu Yao
> Assignee: Xiaoyu Yao
> Priority: Major
> Labels: pull-request-available
> Fix For: 0.6.0
>
> Current Ozone certificates are good for signing/verifying tokens but cannot be
> used for an SSL handshake.
> Here are a few missing pieces:
> 1. Caused by: java.security.cert.CertificateException: No subject alternative
> names present
>     at java.base/sun.security.util.HostnameChecker.matchIP(HostnameChecker.java:137)
> 2. Caused by: sun.security.validator.ValidatorException: KeyUsage does not
> allow digital signatures
>     at java.base/sun.security.validator.EndEntityChecker.checkTLSServer(EndEntityChecker.java:278)
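The two failures listed in HDDS-3997 correspond to two properties that can be read from a certificate with the standard `java.security.cert.X509Certificate` API: the subject alternative names and the KeyUsage bits. The sketch below is a rough pre-flight check under stated assumptions, not the JDK's full validation and not the Ozone fix. `TlsCertChecks` and its method names are hypothetical; only `getKeyUsage()` and `getSubjectAlternativeNames()` are real JDK calls.

```java
import java.security.cert.CertificateParsingException;
import java.security.cert.X509Certificate;
import java.util.Collection;
import java.util.List;

/** Hypothetical helper: rough checks for the two problems named in the report. */
final class TlsCertChecks {
  private TlsCertChecks() { }

  /** Bit 0 of the KeyUsage array returned by getKeyUsage() is digitalSignature. */
  static boolean allowsDigitalSignature(boolean[] keyUsage) {
    // getKeyUsage() returns null when the certificate has no KeyUsage extension,
    // in which case the extension does not restrict the key.
    return keyUsage == null || (keyUsage.length > 0 && keyUsage[0]);
  }

  /** getSubjectAlternativeNames() returns null when the extension is absent. */
  static boolean hasSubjectAltNames(Collection<List<?>> sans) {
    return sans != null && !sans.isEmpty();
  }

  /** Combines both checks for a parsed certificate. */
  static boolean passesBasicChecks(X509Certificate cert) throws CertificateParsingException {
    return allowsDigitalSignature(cert.getKeyUsage())
        && hasSubjectAltNames(cert.getSubjectAlternativeNames());
  }
}
```

A certificate that fails `passesBasicChecks` would be a candidate for the kind of errors quoted above, although the real handshake applies further rules (for example, which SAN entry types match the peer address).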

[GitHub] [hadoop-ozone] xiaoyuyao merged pull request #1235: HDDS-3997. Ozone certificate needs additional flags and SAN extension…

xiaoyuyao merged pull request #1235: URL: https://github.com/apache/hadoop-ozone/pull/1235

[GitHub] [hadoop-ozone] xiaoyuyao commented on pull request #1235: HDDS-3997. Ozone certificate needs additional flags and SAN extension…

xiaoyuyao commented on pull request #1235: URL: https://github.com/apache/hadoop-ozone/pull/1235#issuecomment-663779372 Thanks @jnp for the review. I will merge the PR shortly.

[GitHub] [hadoop-ozone] xiaoyuyao edited a comment on pull request #1234: HDDS-3996. Missing TLS client configurations to allow ozone.grpc.tls.…

xiaoyuyao edited a comment on pull request #1234: URL: https://github.com/apache/hadoop-ozone/pull/1234#issuecomment-663773891 Thanks @jnp for the review. I will merge it shortly.

[jira] [Updated] (HDDS-3996) Missing TLS client configurations to allow ozone.grpc.tls.enabled.

[ https://issues.apache.org/jira/browse/HDDS-3996?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

Xiaoyu Yao updated HDDS-3996:
-
Reporter: Nilotpal Nandi (was: Xiaoyu Yao)

> Missing TLS client configurations to allow ozone.grpc.tls.enabled.
> --
>
> Key: HDDS-3996
> URL: https://issues.apache.org/jira/browse/HDDS-3996
> Project: Hadoop Distributed Data Store
> Issue Type: Improvement
> Reporter: Nilotpal Nandi
> Assignee: Xiaoyu Yao
> Priority: Major
> Labels: pull-request-available
> Fix For: 0.6.0
>
> As a result, when ozone.grpc.tls.enabled is set to true, RATIS THREE pipelines
> do not work, because the DN fails the SSL handshake without the TLS configuration.

[GitHub] [hadoop-ozone] xiaoyuyao merged pull request #1234: HDDS-3996. Missing TLS client configurations to allow ozone.grpc.tls.…

xiaoyuyao merged pull request #1234: URL: https://github.com/apache/hadoop-ozone/pull/1234

[jira] [Resolved] (HDDS-3996) Missing TLS client configurations to allow ozone.grpc.tls.enabled.

[ https://issues.apache.org/jira/browse/HDDS-3996?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

Xiaoyu Yao resolved HDDS-3996.
--
Fix Version/s: 0.6.0
Resolution: Fixed

> Missing TLS client configurations to allow ozone.grpc.tls.enabled.
> --
>
> Key: HDDS-3996
> URL: https://issues.apache.org/jira/browse/HDDS-3996
> Project: Hadoop Distributed Data Store
> Issue Type: Improvement
> Reporter: Xiaoyu Yao
> Assignee: Xiaoyu Yao
> Priority: Major
> Labels: pull-request-available
> Fix For: 0.6.0
>
> As a result, when ozone.grpc.tls.enabled is set to true, RATIS THREE pipelines
> do not work, because the DN fails the SSL handshake without the TLS configuration.

[GitHub] [hadoop-ozone] xiaoyuyao commented on pull request #1234: HDDS-3996. Missing TLS client configurations to allow ozone.grpc.tls.…

xiaoyuyao commented on pull request #1234: URL: https://github.com/apache/hadoop-ozone/pull/1234#issuecomment-663773891 Thanks @jnp for the review. I will merge it shortly.

[jira] [Updated] (HDDS-4021) Organize Recon DBs into a 'DBDefinition'.

[ https://issues.apache.org/jira/browse/HDDS-4021?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

ASF GitHub Bot updated HDDS-4021:
-
Labels: pull-request-available (was: )

> Organize Recon DBs into a 'DBDefinition'.
> -
>
> Key: HDDS-4021
> URL: https://issues.apache.org/jira/browse/HDDS-4021
> Project: Hadoop Distributed Data Store
> Issue Type: Bug
> Components: Ozone Recon
> Affects Versions: 0.5.0
> Reporter: Vivek Ratnavel Subramanian
> Assignee: Aravindan Vijayan
> Priority: Major
> Labels: pull-request-available
>
> * ReconNodeManager uses node db in an old format which is not part of
> ReconDBDefinition. Move the definition to ReconDBDefinition.
> * Create DB Definition for Recon Container DB.
> * Modify DBScanner tool to allow it to read Recon DBs.

[GitHub] [hadoop-ozone] avijayanhwx opened a new pull request #1255: HDDS-4021. Organize Recon DBs into a 'DBDefinition'.

avijayanhwx opened a new pull request #1255:
URL: https://github.com/apache/hadoop-ozone/pull/1255

## What changes were proposed in this pull request?
- ReconNodeManager uses node db in an old format which is not part of ReconDBDefinition. Move the definition to ReconDBDefinition.
- Create DB Definition for Recon Container DB.
- Modify DBScanner tool to allow it to read Recon DBs.

## What is the link to the Apache JIRA
https://issues.apache.org/jira/browse/HDDS-4021

## How was this patch tested?
Manually tested on cluster. Added unit test for DBScanner changes.

[jira] [Created] (HDDS-4029) Recon unable to add a new container which is in CLOSED state.

Aravindan Vijayan created HDDS-4029:

---

Summary: Recon unable to add a new container which is in CLOSED state.

Key: HDDS-4029

URL: https://issues.apache.org/jira/browse/HDDS-4029

Project: Hadoop Distributed Data Store

Issue Type: Bug

Components: Ozone Recon

Reporter: Aravindan Vijayan

Assignee: Aravindan Vijayan

{code}

2020-07-24 19:56:11,777 INFO org.apache.hadoop.ozone.recon.scm.ReconContainerManager: Exception while adding container #1 .
java.io.IOException: Pipeline PipelineID=ccfb3a54-848c-4ed2-91bf-a174267e3435 not found. Cannot add container #1
    at org.apache.hadoop.ozone.recon.scm.ReconContainerManager.addNewContainer(ReconContainerManager.java:119)
    at org.apache.hadoop.ozone.recon.scm.ReconContainerManager.checkAndAddNewContainer(ReconContainerManager.java:92)
    at org.apache.hadoop.ozone.recon.scm.ReconContainerReportHandler.onMessage(ReconContainerReportHandler.java:62)
    at org.apache.hadoop.ozone.recon.scm.ReconContainerReportHandler.onMessage(ReconContainerReportHandler.java:38)
    at org.apache.hadoop.hdds.server.events.SingleThreadExecutor.lambda$onMessage$1(SingleThreadExecutor.java:81)
    at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)
    at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)
    at java.lang.Thread.run(Thread.java:748)
2020-07-24 19:56:11,777 ERROR org.apache.hadoop.ozone.recon.scm.ReconContainerReportHandler: Exception while checking and adding new container.
org.apache.hadoop.hdds.scm.pipeline.PipelineNotFoundException: PipelineID=06bf6a83-9afb-4477-b18d-de4c6556ce4b not found
    at org.apache.hadoop.hdds.scm.pipeline.PipelineStateMap.removeContainerFromPipeline(PipelineStateMap.java:372)
    at org.apache.hadoop.hdds.scm.pipeline.PipelineStateManager.removeContainerFromPipeline(PipelineStateManager.java:111)
    at org.apache.hadoop.hdds.scm.pipeline.SCMPipelineManager.removeContainerFromPipeline(SCMPipelineManager.java:350)
    at org.apache.hadoop.ozone.recon.scm.ReconContainerManager.addNewContainer(ReconContainerManager.java:126)
    at org.apache.hadoop.ozone.recon.scm.ReconContainerManager.checkAndAddNewContainer(ReconContainerManager.java:92)
    at org.apache.hadoop.ozone.recon.scm.ReconContainerReportHandler.onMessage(ReconContainerReportHandler.java:62)
    at org.apache.hadoop.ozone.recon.scm.ReconContainerReportHandler.onMessage(ReconContainerReportHandler.java:38)
    at org.apache.hadoop.hdds.server.events.SingleThreadExecutor.lambda$onMessage$1(SingleThreadExecutor.java:81)
    at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)
    at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)
    at java.lang.Thread.run(Thread.java:748)

{code}


[jira] [Updated] (HDDS-4029) Recon unable to add a new container which is in CLOSED state.

[

https://issues.apache.org/jira/browse/HDDS-4029?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel

]

Aravindan Vijayan updated HDDS-4029:

Target Version/s: 0.6.0

> Recon unable to add a new container which is in CLOSED state.

> -

>

> Key: HDDS-4029

> URL: https://issues.apache.org/jira/browse/HDDS-4029

> Project: Hadoop Distributed Data Store

> Issue Type: Bug

> Components: Ozone Recon

>Reporter: Aravindan Vijayan

>Assignee: Aravindan Vijayan

>Priority: Blocker

>

> {code}

> 2020-07-24 19:56:11,777 INFO org.apache.hadoop.ozone.recon.scm.ReconContainerManager: Exception while adding container #1 .
> java.io.IOException: Pipeline PipelineID=ccfb3a54-848c-4ed2-91bf-a174267e3435 not found. Cannot add container #1
>     at org.apache.hadoop.ozone.recon.scm.ReconContainerManager.addNewContainer(ReconContainerManager.java:119)
>     at org.apache.hadoop.ozone.recon.scm.ReconContainerManager.checkAndAddNewContainer(ReconContainerManager.java:92)
>     at org.apache.hadoop.ozone.recon.scm.ReconContainerReportHandler.onMessage(ReconContainerReportHandler.java:62)
>     at org.apache.hadoop.ozone.recon.scm.ReconContainerReportHandler.onMessage(ReconContainerReportHandler.java:38)
>     at org.apache.hadoop.hdds.server.events.SingleThreadExecutor.lambda$onMessage$1(SingleThreadExecutor.java:81)
>     at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)
>     at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)
>     at java.lang.Thread.run(Thread.java:748)
> 2020-07-24 19:56:11,777 ERROR org.apache.hadoop.ozone.recon.scm.ReconContainerReportHandler: Exception while checking and adding new container.
> org.apache.hadoop.hdds.scm.pipeline.PipelineNotFoundException: PipelineID=06bf6a83-9afb-4477-b18d-de4c6556ce4b not found
>     at org.apache.hadoop.hdds.scm.pipeline.PipelineStateMap.removeContainerFromPipeline(PipelineStateMap.java:372)
>     at org.apache.hadoop.hdds.scm.pipeline.PipelineStateManager.removeContainerFromPipeline(PipelineStateManager.java:111)
>     at org.apache.hadoop.hdds.scm.pipeline.SCMPipelineManager.removeContainerFromPipeline(SCMPipelineManager.java:350)
>     at org.apache.hadoop.ozone.recon.scm.ReconContainerManager.addNewContainer(ReconContainerManager.java:126)
>     at org.apache.hadoop.ozone.recon.scm.ReconContainerManager.checkAndAddNewContainer(ReconContainerManager.java:92)
>     at org.apache.hadoop.ozone.recon.scm.ReconContainerReportHandler.onMessage(ReconContainerReportHandler.java:62)
>     at org.apache.hadoop.ozone.recon.scm.ReconContainerReportHandler.onMessage(ReconContainerReportHandler.java:38)
>     at org.apache.hadoop.hdds.server.events.SingleThreadExecutor.lambda$onMessage$1(SingleThreadExecutor.java:81)
>     at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)
>     at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)
>     at java.lang.Thread.run(Thread.java:748)

> {code}

--

This message was sent by Atlassian Jira

(v8.3.4#803005)

-

To unsubscribe, e-mail: ozone-issues-unsubscr...@hadoop.apache.org

For additional commands, e-mail: ozone-issues-h...@hadoop.apache.org

[jira] [Assigned] (HDDS-4026) Dir rename failed when sets 'ozone.om.enable.filesystem.paths' to true

[ https://issues.apache.org/jira/browse/HDDS-4026?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

Arpit Agarwal reassigned HDDS-4026:

---

Assignee: Bharat Viswanadham

> Dir rename failed when sets 'ozone.om.enable.filesystem.paths' to true

> --

>

> Key: HDDS-4026

> URL: https://issues.apache.org/jira/browse/HDDS-4026

> Project: Hadoop Distributed Data Store

> Issue Type: Bug

>Reporter: Rakesh Radhakrishnan

>Assignee: Bharat Viswanadham

>Priority: Blocker

>

> Set ozone.om.enable.filesystem.paths=true, then start the Ozone cluster.
> {code:java}
> [root~]$ ozone fs -mkdir o3fs://bucket2.vol2.ozone1/subdir2
> [root~]$ ozone fs -mv o3fs://bucket2.vol2.ozone1/subdir2 o3fs://bucket2.vol2.ozone1/subdir2-renamed
> mv: Key not found /vol2/bucket2/subdir2
> {code}

>


[jira] [Created] (HDDS-4028) Fix parser failure message for FS shell command put

Siyao Meng created HDDS-4028:

Summary: Fix parser failure message for FS shell command put

Key: HDDS-4028

URL: https://issues.apache.org/jira/browse/HDDS-4028

Project: Hadoop Distributed Data Store

Issue Type: Bug

Reporter: Siyao Meng

Assignee: Siyao Meng

The address was missing a trailing '/'. Even so, {{Fatal internal error}} is not the right response:

{code}

bash-4.2$ ozone fs -put README.md o3fs://buck1.vol1.om
-put: Fatal internal error
java.lang.StringIndexOutOfBoundsException: String index out of range: -1
at java.base/java.lang.String.substring(String.java:1841)
at org.apache.hadoop.fs.ozone.BasicOzoneFileSystem.pathToKey(BasicOzoneFileSystem.java:751)
at org.apache.hadoop.fs.ozone.BasicOzoneFileSystem.getFileStatus(BasicOzoneFileSystem.java:710)
at org.apache.hadoop.fs.FileSystem.exists(FileSystem.java:1683)
at org.apache.hadoop.fs.shell.PathData.parentExists(PathData.java:245)
at org.apache.hadoop.fs.shell.CommandWithDestination.processArguments(CommandWithDestination.java:224)
at org.apache.hadoop.fs.shell.CopyCommands$Put.processArguments(CopyCommands.java:290)
at org.apache.hadoop.fs.shell.FsCommand.processRawArguments(FsCommand.java:120)
at org.apache.hadoop.fs.shell.Command.run(Command.java:177)
at org.apache.hadoop.fs.FsShell.run(FsShell.java:327)
at org.apache.hadoop.util.ToolRunner.run(ToolRunner.java:76)
at org.apache.hadoop.util.ToolRunner.run(ToolRunner.java:90)
at org.apache.hadoop.fs.ozone.OzoneFsShell.main(OzoneFsShell.java:80)

{code}
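One way the crash could be avoided is sketched below. It assumes the exception comes from calling substring(1) on an empty URI path, which is consistent with the trace but not confirmed, since the pathToKey source is not shown here. The class PathToKeySketch and its pathToKey method are hypothetical names for illustration, not the real BasicOzoneFileSystem code.

```java
import java.net.URI;

/** Hypothetical helper for illustration; not the actual BasicOzoneFileSystem code. */
final class PathToKeySketch {
  private PathToKeySketch() { }

  /**
   * Converts a URI into a key name by dropping the leading '/'.
   * A URI with no path component (for example "o3fs://buck1.vol1.om")
   * maps to the empty key instead of failing in substring().
   */
  static String pathToKey(URI uri) {
    String path = uri.getPath();
    if (path == null || path.isEmpty()) {
      // Assumption: treat a missing path like the bucket root.
      return "";
    }
    if (!path.startsWith("/")) {
      throw new IllegalArgumentException("Expected an absolute path: " + path);
    }
    return path.substring(1);
  }

  public static void main(String[] args) {
    // The address from the report, without the trailing '/'.
    System.out.println("[" + pathToKey(URI.create("o3fs://buck1.vol1.om")) + "]");
    // A regular key path.
    System.out.println("[" + pathToKey(URI.create("o3fs://buck1.vol1.om/README.md")) + "]");
  }
}
```

Whether a missing path should map to the bucket root or be rejected with a clear usage message is a design decision for the actual fix; the sketch only shows that the unchecked substring call can be guarded.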


[jira] [Created] (HDDS-4027) Suppress ERROR message when SCM attempt to create additional pipelines

Xiaoyu Yao created HDDS-4027:

Summary: Suppress ERROR message when SCM attempt to create additional pipelines

Key: HDDS-4027

URL: https://issues.apache.org/jira/browse/HDDS-4027

Project: Hadoop Distributed Data Store

Issue Type: Improvement

Reporter: Xiaoyu Yao

Assignee: Xiaoyu Yao

These messages are false alarms and can flood the SCM log.

{code:java}

scm_1 | 2020-07-24 16:39:51,756 [RatisPipelineUtilsThread] ERROR pipeline.SCMPipelineManager: Failed to create pipeline of type RATIS and factor ONE. Exception: Cannot create pipeline of factor 1 using 0 nodes. Used 3 nodes. Healthy nodes 3
scm_1 | 2020-07-24 16:39:51,757 [RatisPipelineUtilsThread] ERROR pipeline.SCMPipelineManager: Failed to create pipeline of type RATIS and factor THREE. Exception: Pipeline creation failed because nodes are engaged in other pipelines and every node can only be engaged in max 2 pipelines. Required 3. Found 0

{code}


[jira] [Updated] (HDDS-4025) Add test for creating encrypted key

[ https://issues.apache.org/jira/browse/HDDS-4025?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

Attila Doroszlai updated HDDS-4025:
---
Status: Patch Available (was: In Progress)

> Add test for creating encrypted key
> ---
>
> Key: HDDS-4025
> URL: https://issues.apache.org/jira/browse/HDDS-4025
> Project: Hadoop Distributed Data Store
> Issue Type: Test
> Components: Ozone Manager
> Reporter: Attila Doroszlai
> Assignee: Attila Doroszlai
> Priority: Major
> Labels: pull-request-available
>
> Add acceptance test to create a key in encrypted bucket.

[jira] [Updated] (HDDS-4025) Add test for creating encrypted key

[ https://issues.apache.org/jira/browse/HDDS-4025?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

ASF GitHub Bot updated HDDS-4025:
---
Labels: pull-request-available (was: )

> Add test for creating encrypted key
> ---
>
> Key: HDDS-4025
> URL: https://issues.apache.org/jira/browse/HDDS-4025
> Project: Hadoop Distributed Data Store
> Issue Type: Test
> Components: Ozone Manager
> Reporter: Attila Doroszlai
> Assignee: Attila Doroszlai
> Priority: Major
> Labels: pull-request-available
>
> Add acceptance test to create a key in encrypted bucket.

[GitHub] [hadoop-ozone] adoroszlai opened a new pull request #1254: HDDS-4025. Add test for creating encrypted key

adoroszlai opened a new pull request #1254:
URL: https://github.com/apache/hadoop-ozone/pull/1254

## What changes were proposed in this pull request?

Add acceptance test case to create a key in encrypted bucket. Inspired by HDDS-4007.

https://issues.apache.org/jira/browse/HDDS-4025

## How was this patch tested?

```
Create Encrypted Bucket | PASS |
--
Create Key in Encrypted Bucket| PASS |
```

https://github.com/adoroszlai/hadoop-ozone/runs/906155913#step:7:2556

[jira] [Resolved] (HDDS-3999) OM NPE and shutdown while S3MultipartUploadCommitPartResponse#checkAndUpdateDB

[ https://issues.apache.org/jira/browse/HDDS-3999?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

Arpit Agarwal resolved HDDS-3999.
---
Fix Version/s: 0.6.0
Target Version/s: (was: 0.6.0)
Resolution: Fixed

> OM NPE and shutdown while S3MultipartUploadCommitPartResponse#checkAndUpdateDB
> ---
>
> Key: HDDS-3999
> URL: https://issues.apache.org/jira/browse/HDDS-3999
> Project: Hadoop Distributed Data Store
> Issue Type: Bug
> Components: Ozone Manager
> Affects Versions: 0.6.0
> Reporter: maobaolong
> Assignee: maobaolong
> Priority: Blocker
> Labels: pull-request-available
> Fix For: 0.6.0
>
> Attachments: OM-NPE-Full.log, screenshot-1.png, test-repro.patch
>
>
> The related bad code.
> !screenshot-1.png!
> The following is the part log of OM. If you want to see the full log, you can download the attachment.
> 2020-07-20 16:28:56,395 ERROR ratis.OzoneManagerDoubleBuffer (ExitUtils.java:terminate(133)) - Terminating with exit status 2: OMDoubleBuffer flush threadOMDoubleBufferFlushThreadencountered Throwable error
> java.lang.NullPointerException
> at org.apache.hadoop.ozone.om.response.s3.multipart.S3MultipartUploadCommitPartResponse.checkAndUpdateDB(S3MultipartUploadCommitPartResponse.java:99)
> at org.apache.hadoop.ozone.om.ratis.OzoneManagerDoubleBuffer.lambda$null$0(OzoneManagerDoubleBuffer.java:256)
> at org.apache.hadoop.ozone.om.ratis.OzoneManagerDoubleBuffer.addToBatchWithTrace(OzoneManagerDoubleBuffer.java:201)
> at org.apache.hadoop.ozone.om.ratis.OzoneManagerDoubleBuffer.lambda$flushTransactions$1(OzoneManagerDoubleBuffer.java:254)
> at java.util.Iterator.forEachRemaining(Iterator.java:116)
> at org.apache.hadoop.ozone.om.ratis.OzoneManagerDoubleBuffer.flushTransactions(OzoneManagerDoubleBuffer.java:250)
> at java.lang.Thread.run(Thread.java:748)
> 2020-07-20 16:28:56,426 INFO om.OzoneManagerStarter (StringUtils.java:lambda$startupShutdownMessage$0(124)) - SHUTDOWN_MSG:
> /
> SHUTDOWN_MSG: Shutting down OzoneManager at BAOLOONGMAO-MB0/10.78.32.49
> /

[GitHub] [hadoop-ozone] arp7 merged pull request #1244: HDDS-3999. OM Shutdown when Commit part tries to commit the part, after abort upload.

arp7 merged pull request #1244:
URL: https://github.com/apache/hadoop-ozone/pull/1244

[GitHub] [hadoop-ozone] arp7 commented on pull request #1244: HDDS-3999. OM Shutdown when Commit part tries to commit the part, after abort upload.

arp7 commented on pull request #1244:
URL: https://github.com/apache/hadoop-ozone/pull/1244#issuecomment-663608276

Thanks for contributing this patch @bharatviswa504 and thanks for the review @maobaolong. I plan to commit this shortly. If there are any remaining unaddressed comments, we can address them in a follow-up Jira.

[GitHub] [hadoop-ozone] jnp commented on pull request #1235: HDDS-3997. Ozone certificate needs additional flags and SAN extension…

jnp commented on pull request #1235:
URL: https://github.com/apache/hadoop-ozone/pull/1235#issuecomment-663603229

+1 for the patch.

[GitHub] [hadoop-ozone] jnp commented on pull request #1234: HDDS-3996. Missing TLS client configurations to allow ozone.grpc.tls.…

jnp commented on pull request #1234:
URL: https://github.com/apache/hadoop-ozone/pull/1234#issuecomment-663600025

+1 for the patch.

[jira] [Updated] (HDDS-4026) Dir rename failed when sets 'ozone.om.enable.filesystem.paths' to true

[ https://issues.apache.org/jira/browse/HDDS-4026?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

Arpit Agarwal updated HDDS-4026:

Target Version/s: 0.6.0

Priority: Blocker (was: Major)

> Dir rename failed when sets 'ozone.om.enable.filesystem.paths' to true

> --

>

> Key: HDDS-4026

> URL: https://issues.apache.org/jira/browse/HDDS-4026

> Project: Hadoop Distributed Data Store

> Issue Type: Bug

>Reporter: Rakesh Radhakrishnan

>Priority: Blocker

>

> Set ozone.om.enable.filesystem.paths=true, then start the Ozone cluster.
> {code:java}
> [root~]$ ozone fs -mkdir o3fs://bucket2.vol2.ozone1/subdir2
> [root~]$ ozone fs -mv o3fs://bucket2.vol2.ozone1/subdir2 o3fs://bucket2.vol2.ozone1/subdir2-renamed
> mv: Key not found /vol2/bucket2/subdir2
> {code}

>


[GitHub] [hadoop-ozone] maobaolong commented on a change in pull request #1096: HDDS-3833. Use Pipeline choose policy to choose pipeline from exist pipeline list

maobaolong commented on a change in pull request #1096:

URL: https://github.com/apache/hadoop-ozone/pull/1096#discussion_r460067221

##

File path:

hadoop-hdds/server-scm/src/main/java/org/apache/hadoop/hdds/scm/pipeline/choose/algorithms/PipelineChoosePolicyFactory.java

##

@@ -0,0 +1,71 @@

+/**

+ * Licensed to the Apache Software Foundation (ASF) under one

+ * or more contributor license agreements. See the NOTICE file

+ * distributed with this work for additional information

+ * regarding copyright ownership. The ASF licenses this file

+ * to you under the Apache License, Version 2.0 (the

+ * "License"); you may not use this file except in compliance

+ * with the License. You may obtain a copy of the License at

+ *

+ * http://www.apache.org/licenses/LICENSE-2.0

+ *

+ * Unless required by applicable law or agreed to in writing, software

+ * distributed under the License is distributed on an "AS IS" BASIS,

+ * WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+ * See the License for the specific language governing permissions and

+ * limitations under the License.

+ */

+

+package org.apache.hadoop.hdds.scm.pipeline.choose.algorithms;

+

+import org.apache.hadoop.hdds.conf.ConfigurationSource;

+import org.apache.hadoop.hdds.scm.PipelineChoosePolicy;

+import org.apache.hadoop.hdds.scm.ScmConfigKeys;

+import org.apache.hadoop.hdds.scm.exceptions.SCMException;

+import org.slf4j.Logger;

+import org.slf4j.LoggerFactory;

+

+import java.lang.reflect.Constructor;

+

+/**

+ * A factory to create pipeline choose policy instance based on configuration

+ * property {@link ScmConfigKeys#OZONE_SCM_PIPELINE_CHOOSE_IMPL_KEY}.

+ */

+public final class PipelineChoosePolicyFactory {

+ private static final Logger LOG =

+ LoggerFactory.getLogger(PipelineChoosePolicyFactory.class);

+

+ private static final Class

+ OZONE_SCM_PIPELINE_CHOOSE_IMPL_DEFAULT =

+ RandomPipelineChoosePolicy.class;

+

+ private PipelineChoosePolicyFactory() {

+ }

+

+ public static PipelineChoosePolicy getPolicy(

+ ConfigurationSource conf) throws SCMException {

+final Class policyClass = conf

+.getClass(ScmConfigKeys.OZONE_SCM_PIPELINE_CHOOSE_IMPL_KEY,

+OZONE_SCM_PIPELINE_CHOOSE_IMPL_DEFAULT,

+PipelineChoosePolicy.class);

+Constructor constructor;

+try {

+ constructor = policyClass.getDeclaredConstructor();

Review comment:

Currently the client address is not available while allocating a block. After this PR, we can create a new ticket to add a client parameter to the allocateblock method; then I can write a localFirstPolicy. I also plan to let each datanode report its utilization to SCM, so that I can implement a LowLoadFirstPolicy. We can also collect the pipeline vote count and batch size; with this information, we can write many kinds of pipeline choose policies.
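As a rough illustration of what a load-aware policy along these lines might look like, here is a minimal standalone sketch. Everything in it is an assumption made for illustration: `Candidate`, its `utilization` field, `Policy`, and `LowLoadFirst` are hypothetical names, and the utilization figure stands in for a datanode report that, according to the comment, does not exist yet. It is not the PipelineChoosePolicy interface from the PR.

```java
import java.util.Arrays;
import java.util.Collections;
import java.util.Comparator;
import java.util.List;

/** Hypothetical illustration of a pluggable choose policy; not code from the PR. */
final class ChoosePolicySketch {
  private ChoosePolicySketch() { }

  /** Stand-in for a pipeline together with an assumed load figure. */
  static final class Candidate {
    final String id;
    final double utilization;

    Candidate(String id, double utilization) {
      this.id = id;
      this.utilization = utilization;
    }
  }

  /** Picks one candidate from an existing list. */
  interface Policy {
    Candidate choose(List<Candidate> candidates);
  }

  /** Chooses the candidate with the lowest reported utilization. */
  static final class LowLoadFirst implements Policy {
    @Override
    public Candidate choose(List<Candidate> candidates) {
      if (candidates.isEmpty()) {
        throw new IllegalArgumentException("No candidates to choose from");
      }
      return Collections.min(candidates,
          Comparator.comparingDouble((Candidate c) -> c.utilization));
    }
  }

  public static void main(String[] args) {
    List<Candidate> candidates = Arrays.asList(
        new Candidate("p1", 0.9),
        new Candidate("p2", 0.1),
        new Candidate("p3", 0.5));
    System.out.println(new LowLoadFirst().choose(candidates).id);
  }
}
```

Keeping the policy behind a small interface means a random, local-first, or low-load-first choice can be swapped in by configuration without touching the caller.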


[GitHub] [hadoop-ozone] maobaolong commented on a change in pull request #1096: HDDS-3833. Use Pipeline choose policy to choose pipeline from exist pipeline list

maobaolong commented on a change in pull request #1096:

URL: https://github.com/apache/hadoop-ozone/pull/1096#discussion_r460063742

##

File path:

hadoop-hdds/common/src/main/java/org/apache/hadoop/hdds/scm/PipelineChoosePolicy.java

##

@@ -0,0 +1,36 @@

+/**

+ * Licensed to the Apache Software Foundation (ASF) under one or more

+ * contributor license agreements. See the NOTICE file distributed with this

+ * work for additional information regarding copyright ownership. The ASF

+ * licenses this file to you under the Apache License, Version 2.0 (the

+ * "License"); you may not use this file except in compliance with the License.

+ * You may obtain a copy of the License at

+ *

+ * http://www.apache.org/licenses/LICENSE-2.0

+ *

+ * Unless required by applicable law or agreed to in writing, software

+ * distributed under the License is distributed on an "AS IS" BASIS, WITHOUT

+ * WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied. See the

+ * License for the specific language governing permissions and limitations under

+ * the License.

+ */

+

+package org.apache.hadoop.hdds.scm;

+

+import org.apache.hadoop.hdds.scm.pipeline.Pipeline;

+

+import java.util.List;

+

+/**

+ * A {@link PipelineChoosePolicy} support choosing pipeline from exist list.

+ */

+public interface PipelineChoosePolicy {

+

+ /**

+ * Given an initial list of pipelines, return one of the pipelines.

+ *

+ * @param pipelineList list of pipelines.

+ * @return one of the pipelines.

+ */

+ Pipeline choosePipeline(List pipelineList);

Review comment:

@avijayanhwx Thank you for your suggestion. I added an arguments map; for extensibility, I use Object as the value type.


[jira] [Created] (HDDS-4026) Dir rename failed when sets 'ozone.om.enable.filesystem.paths' to true

Rakesh Radhakrishnan created HDDS-4026:

--

Summary: Dir rename failed when sets 'ozone.om.enable.filesystem.paths' to true

Key: HDDS-4026

URL: https://issues.apache.org/jira/browse/HDDS-4026

Project: Hadoop Distributed Data Store

Issue Type: Bug

Reporter: Rakesh Radhakrishnan

Set ozone.om.enable.filesystem.paths=true, then start the Ozone cluster.

{code:java}
[root~]$ ozone fs -mkdir o3fs://bucket2.vol2.ozone1/subdir2
[root~]$ ozone fs -mv o3fs://bucket2.vol2.ozone1/subdir2 o3fs://bucket2.vol2.ozone1/subdir2-renamed
mv: Key not found /vol2/bucket2/subdir2
{code}


[jira] [Commented] (HDDS-4026) Dir rename failed when sets 'ozone.om.enable.filesystem.paths' to true

[ https://issues.apache.org/jira/browse/HDDS-4026?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel=17164438#comment-17164438 ]

Rakesh Radhakrishnan commented on HDDS-4026:

*Analysis:* With the flag set to true, the trailing slash is not added to 'fromKeyName' and 'toKeyName', which results in KEY_NOT_FOUND from the KeyTable.

{code:java}
String fromKeyName = keyArgs.getKeyName();
// The value arrives as '/vol2/bucket2/subdir2'. For a directory, the key is
// stored with a trailing slash, so it should be '/vol2/bucket2/subdir2/'.
{code}

We can verify this case via the unit test [testFileSystem#testRenameDir()|https://github.com/apache/hadoop-ozone/blob/master/hadoop-ozone/integration-test/src/test/java/org/apache/hadoop/fs/ozone/TestOzoneFileSystem.java#L193] by setting the flag to true.
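The mismatch described in the analysis can be reproduced in isolation with a small sketch. The class DirKeySketch, the toDirectoryKey helper, and the HashMap standing in for the KeyTable are all hypothetical and exist only for illustration; this is not the Ozone Manager rename code.

```java
import java.util.HashMap;
import java.util.Map;

/** Hypothetical illustration; not the actual Ozone Manager rename code. */
final class DirKeySketch {
  private DirKeySketch() { }

  /** Appends a trailing slash unless the key already ends with one. */
  static String toDirectoryKey(String keyName) {
    return keyName.endsWith("/") ? keyName : keyName + "/";
  }

  public static void main(String[] args) {
    // Stand-in for the key table: the directory is stored with a trailing slash.
    Map<String, String> keyTable = new HashMap<>();
    keyTable.put("/vol2/bucket2/subdir2/", "directory entry");

    String fromKeyName = "/vol2/bucket2/subdir2";
    // Looking up the raw name misses the stored directory key.
    System.out.println(keyTable.containsKey(fromKeyName));
    // Looking up the normalized name finds it.
    System.out.println(keyTable.containsKey(toDirectoryKey(fromKeyName)));
  }
}
```

A real fix would also have to decide whether the source is a directory or a file before appending the slash; the sketch leaves that question out.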

> Dir rename failed when sets 'ozone.om.enable.filesystem.paths' to true

> --

>

> Key: HDDS-4026

> URL: https://issues.apache.org/jira/browse/HDDS-4026

> Project: Hadoop Distributed Data Store

> Issue Type: Bug

>Reporter: Rakesh Radhakrishnan

>Priority: Major

>

> Set ozone.om.enable.filesystem.paths=true, then start the Ozone cluster.
> {code:java}
> [root~]$ ozone fs -mkdir o3fs://bucket2.vol2.ozone1/subdir2
> [root~]$ ozone fs -mv o3fs://bucket2.vol2.ozone1/subdir2 o3fs://bucket2.vol2.ozone1/subdir2-renamed
> mv: Key not found /vol2/bucket2/subdir2
> {code}

>


[GitHub] [hadoop-ozone] codecov-commenter commented on pull request #1236: HDDS-4000. Split acceptance tests to reduce CI feedback time

codecov-commenter commented on pull request #1236: URL: https://github.com/apache/hadoop-ozone/pull/1236#issuecomment-663530749 # [Codecov](https://codecov.io/gh/apache/hadoop-ozone/pull/1236?src=pr=h1) Report > Merging [#1236](https://codecov.io/gh/apache/hadoop-ozone/pull/1236?src=pr=desc) into [master](https://codecov.io/gh/apache/hadoop-ozone/commit/a4f7e32b438a1ac74e23f70be3b24aac9a61e00c=desc) will **decrease** coverage by `0.05%`. > The diff coverage is `n/a`. [](https://codecov.io/gh/apache/hadoop-ozone/pull/1236?src=pr=tree) ```diff @@ Coverage Diff @@ ## master#1236 +/- ## - Coverage 73.73% 73.68% -0.06% + Complexity1012410113 -11 Files 974 974 Lines 5012350123 Branches 4881 4881 - Hits 3695836932 -26 - Misses1083510858 +23 - Partials 2330 2333 +3 ``` | [Impacted Files](https://codecov.io/gh/apache/hadoop-ozone/pull/1236?src=pr=tree) | Coverage Δ | Complexity Δ | | |---|---|---|---| | [...e/hadoop/ozone/recon/tasks/OMUpdateEventBatch.java](https://codecov.io/gh/apache/hadoop-ozone/pull/1236/diff?src=pr=tree#diff-aGFkb29wLW96b25lL3JlY29uL3NyYy9tYWluL2phdmEvb3JnL2FwYWNoZS9oYWRvb3Avb3pvbmUvcmVjb24vdGFza3MvT01VcGRhdGVFdmVudEJhdGNoLmphdmE=) | `75.00% <0.00%> (-8.34%)` | `6.00% <0.00%> (-1.00%)` | | | [.../transport/server/ratis/ContainerStateMachine.java](https://codecov.io/gh/apache/hadoop-ozone/pull/1236/diff?src=pr=tree#diff-aGFkb29wLWhkZHMvY29udGFpbmVyLXNlcnZpY2Uvc3JjL21haW4vamF2YS9vcmcvYXBhY2hlL2hhZG9vcC9vem9uZS9jb250YWluZXIvY29tbW9uL3RyYW5zcG9ydC9zZXJ2ZXIvcmF0aXMvQ29udGFpbmVyU3RhdGVNYWNoaW5lLmphdmE=) | `71.07% <0.00%> (-5.39%)` | `62.00% <0.00%> (-4.00%)` | | | [...ozone/container/ozoneimpl/ContainerController.java](https://codecov.io/gh/apache/hadoop-ozone/pull/1236/diff?src=pr=tree#diff-aGFkb29wLWhkZHMvY29udGFpbmVyLXNlcnZpY2Uvc3JjL21haW4vamF2YS9vcmcvYXBhY2hlL2hhZG9vcC9vem9uZS9jb250YWluZXIvb3pvbmVpbXBsL0NvbnRhaW5lckNvbnRyb2xsZXIuamF2YQ==) | `63.15% <0.00%> (-5.27%)` | `11.00% <0.00%> (-1.00%)` | | | 
[.../apache/hadoop/ozone/protocolPB/OzonePBHelper.java](https://codecov.io/gh/apache/hadoop-ozone/pull/1236/diff?src=pr=tree#diff-aGFkb29wLW96b25lL2NvbW1vbi9zcmMvbWFpbi9qYXZhL29yZy9hcGFjaGUvaGFkb29wL296b25lL3Byb3RvY29sUEIvT3pvbmVQQkhlbHBlci5qYXZh) | `90.00% <0.00%> (-5.00%)` | `6.00% <0.00%> (-1.00%)` | | | [.../common/volume/RoundRobinVolumeChoosingPolicy.java](https://codecov.io/gh/apache/hadoop-ozone/pull/1236/diff?src=pr=tree#diff-aGFkb29wLWhkZHMvY29udGFpbmVyLXNlcnZpY2Uvc3JjL21haW4vamF2YS9vcmcvYXBhY2hlL2hhZG9vcC9vem9uZS9jb250YWluZXIvY29tbW9uL3ZvbHVtZS9Sb3VuZFJvYmluVm9sdW1lQ2hvb3NpbmdQb2xpY3kuamF2YQ==) | `80.95% <0.00%> (-4.77%)` | `5.00% <0.00%> (-1.00%)` | | | [...e/commandhandler/CreatePipelineCommandHandler.java](https://codecov.io/gh/apache/hadoop-ozone/pull/1236/diff?src=pr=tree#diff-aGFkb29wLWhkZHMvY29udGFpbmVyLXNlcnZpY2Uvc3JjL21haW4vamF2YS9vcmcvYXBhY2hlL2hhZG9vcC9vem9uZS9jb250YWluZXIvY29tbW9uL3N0YXRlbWFjaGluZS9jb21tYW5kaGFuZGxlci9DcmVhdGVQaXBlbGluZUNvbW1hbmRIYW5kbGVyLmphdmE=) | `87.23% <0.00%> (-4.26%)` | `8.00% <0.00%> (ø%)` | | | [...iner/common/transport/server/ratis/CSMMetrics.java](https://codecov.io/gh/apache/hadoop-ozone/pull/1236/diff?src=pr=tree#diff-aGFkb29wLWhkZHMvY29udGFpbmVyLXNlcnZpY2Uvc3JjL21haW4vamF2YS9vcmcvYXBhY2hlL2hhZG9vcC9vem9uZS9jb250YWluZXIvY29tbW9uL3RyYW5zcG9ydC9zZXJ2ZXIvcmF0aXMvQ1NNTWV0cmljcy5qYXZh) | `70.76% <0.00%> (-3.08%)` | `20.00% <0.00%> (-1.00%)` | | | [.../statemachine/background/BlockDeletingService.java](https://codecov.io/gh/apache/hadoop-ozone/pull/1236/diff?src=pr=tree#diff-aGFkb29wLWhkZHMvY29udGFpbmVyLXNlcnZpY2Uvc3JjL21haW4vamF2YS9vcmcvYXBhY2hlL2hhZG9vcC9vem9uZS9jb250YWluZXIva2V5dmFsdWUvc3RhdGVtYWNoaW5lL2JhY2tncm91bmQvQmxvY2tEZWxldGluZ1NlcnZpY2UuamF2YQ==) | `75.73% <0.00%> (-1.48%)` | `12.00% <0.00%> (-1.00%)` | | | 
[...apache/hadoop/ozone/client/io/KeyOutputStream.java](https://codecov.io/gh/apache/hadoop-ozone/pull/1236/diff?src=pr=tree#diff-aGFkb29wLW96b25lL2NsaWVudC9zcmMvbWFpbi9qYXZhL29yZy9hcGFjaGUvaGFkb29wL296b25lL2NsaWVudC9pby9LZXlPdXRwdXRTdHJlYW0uamF2YQ==) | `79.58% <0.00%> (-1.25%)` | `46.00% <0.00%> (-2.00%)` | | | [...oop/ozone/recon/tasks/ReconTaskControllerImpl.java](https://codecov.io/gh/apache/hadoop-ozone/pull/1236/diff?src=pr=tree#diff-aGFkb29wLW96b25lL3JlY29uL3NyYy9tYWluL2phdmEvb3JnL2FwYWNoZS9oYWRvb3Avb3pvbmUvcmVjb24vdGFza3MvUmVjb25UYXNrQ29udHJvbGxlckltcGwuamF2YQ==) | `92.38% <0.00%> (-0.96%)` | `29.00% <0.00%>

[GitHub] [hadoop-ozone] leosunli commented on a change in pull request #1033: HDDS-3667. If we gracefully stop datanode it would be better to notify scm and r…

leosunli commented on a change in pull request #1033:

URL: https://github.com/apache/hadoop-ozone/pull/1033#discussion_r459992764

##

File path:

hadoop-hdds/server-scm/src/main/java/org/apache/hadoop/hdds/scm/node/NodeStateManager.java

##

@@ -708,6 +707,21 @@ private void updateNodeState(DatanodeInfo node,

Predicate condition,

}

}

+ /**

+ *

+ * @param datanodeDetails

+ */

+ public void stopNode(DatanodeDetails datanodeDetails)

+ throws NodeNotFoundException {

+UUID uuid = datanodeDetails.getUuid();

+NodeState state = nodeStateMap.getNodeState(uuid);

+DatanodeInfo node = nodeStateMap.getNodeInfo(uuid);

+nodeStateMap.updateNodeState(datanodeDetails.getUuid(), state,

+NodeState.DEAD);

+node.updateLastHeartbeatTime(0L);

Review comment:

Yeah, this problem really exists. I will create a new Jira to solve this problem.


[jira] [Created] (HDDS-4025) Add test for creating encrypted key

Attila Doroszlai created HDDS-4025:
--
Summary: Add test for creating encrypted key
Key: HDDS-4025
URL: https://issues.apache.org/jira/browse/HDDS-4025
Project: Hadoop Distributed Data Store
Issue Type: Test
Components: Ozone Manager
Reporter: Attila Doroszlai
Assignee: Attila Doroszlai

Add acceptance test to create a key in encrypted bucket.

[GitHub] [hadoop-ozone] elek commented on pull request #1226: HDDS-3989. Display revision and build date of DN in recon UI

elek commented on pull request #1226:
URL: https://github.com/apache/hadoop-ozone/pull/1226#issuecomment-663416483

Hi, thanks for the contribution @runitao, it's very useful for the UI.

It looked good to me, but when I analyzed the network traffic I found that the two new fields are added to all the requests, because the DatanodeInfo protocol is a shared type. For example, when I do `createKey` or block allocations, the new fields are added. This means that performance is worse in the case of many small keys.

I think it would be better to improve it further and add the fields only to the SCM registration type.

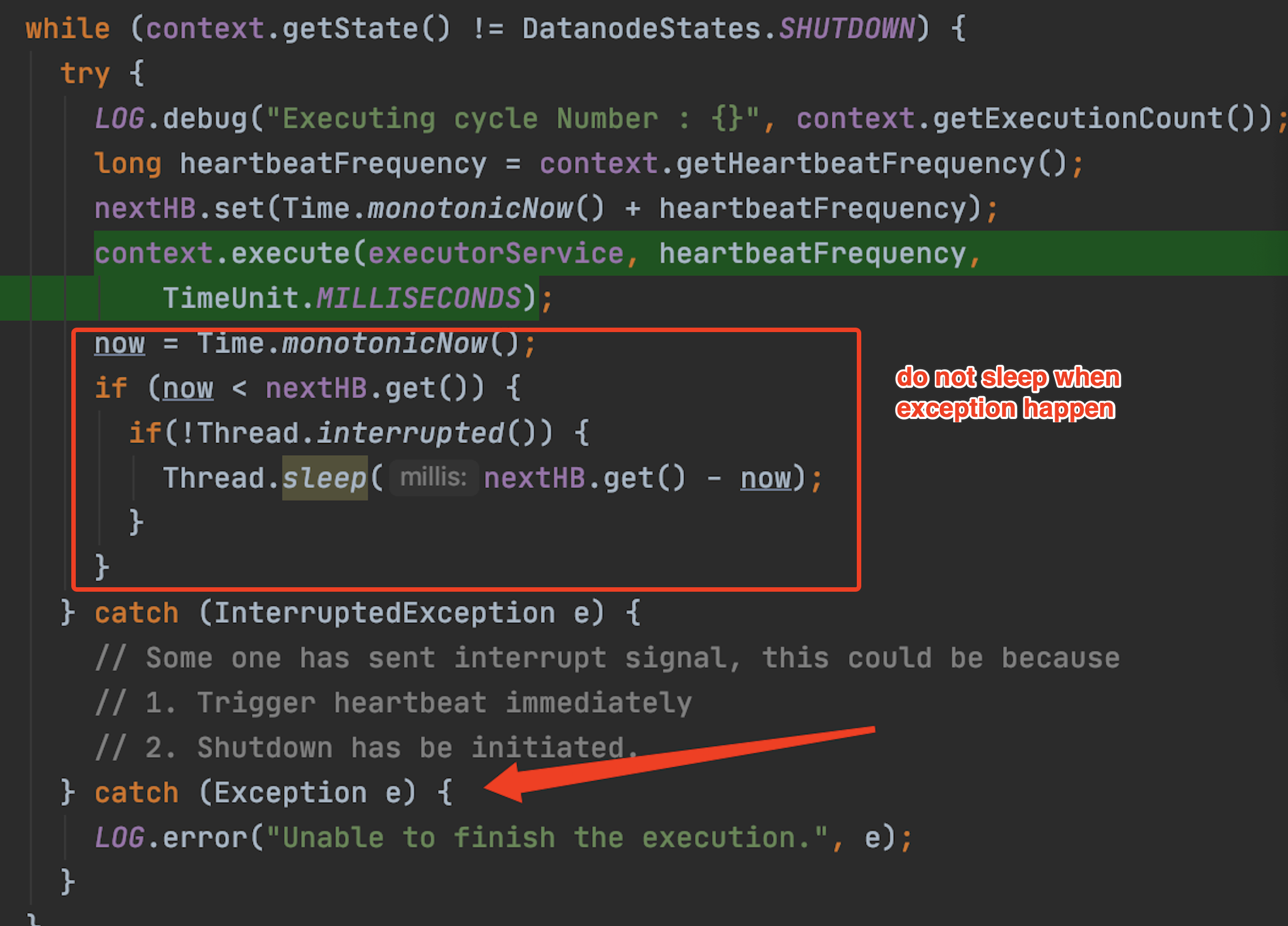

[GitHub] [hadoop-ozone] runzhiwang commented on a change in pull request #1253: HDDS-4024. Avoid while loop too soon when exception happen

runzhiwang commented on a change in pull request #1253:

URL: https://github.com/apache/hadoop-ozone/pull/1253#discussion_r459923041

##

File path:

hadoop-hdds/container-service/src/main/java/org/apache/hadoop/ozone/container/common/statemachine/DatanodeStateMachine.java

##

@@ -224,19 +224,25 @@ private void start() throws IOException {

nextHB.set(Time.monotonicNow() + heartbeatFrequency);

context.execute(executorService, heartbeatFrequency,

TimeUnit.MILLISECONDS);

-now = Time.monotonicNow();

-if (now < nextHB.get()) {

- if(!Thread.interrupted()) {

-Thread.sleep(nextHB.get() - now);

- }

-}

} catch (InterruptedException e) {

// Some one has sent interrupt signal, this could be because

// 1. Trigger heartbeat immediately

// 2. Shutdown has be initiated.

+LOG.warn("Interrupt the execution.", e);

Review comment:

@adoroszlai Thanks for review. I have updated the patch.


[GitHub] [hadoop-ozone] adoroszlai commented on a change in pull request #1253: HDDS-4024. Avoid while loop too soon when exception happen

adoroszlai commented on a change in pull request #1253:

URL: https://github.com/apache/hadoop-ozone/pull/1253#discussion_r459900072

##

File path:

hadoop-hdds/container-service/src/main/java/org/apache/hadoop/ozone/container/common/statemachine/DatanodeStateMachine.java

##

@@ -224,19 +224,25 @@ private void start() throws IOException {

nextHB.set(Time.monotonicNow() + heartbeatFrequency);

context.execute(executorService, heartbeatFrequency,

TimeUnit.MILLISECONDS);

-now = Time.monotonicNow();

-if (now < nextHB.get()) {

- if(!Thread.interrupted()) {

-Thread.sleep(nextHB.get() - now);

- }

-}

} catch (InterruptedException e) {

// Some one has sent interrupt signal, this could be because

// 1. Trigger heartbeat immediately

// 2. Shutdown has be initiated.

+LOG.warn("Interrupt the execution.", e);

Review comment:

Now that sleep is moved outside this `try`, I think interrupted state

needs to be restored here. Otherwise if `execute` is interrupted, this catch

clears interrupted state and the thread will sleep below.
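The point about restoring the interrupted state can be shown with a small standalone sketch. The names InterruptRestoreSketch, Step, and executeThenMaybeSleep are hypothetical and only mirror the shape of the loop under review (an interruptible step followed by a sleep outside the try); this is not the DatanodeStateMachine code.

```java
/** Hypothetical illustration of restoring the interrupt flag; not the patch itself. */
final class InterruptRestoreSketch {
  private InterruptRestoreSketch() { }

  /** An action that may be interrupted, standing in for the execute call. */
  interface Step {
    void run() throws InterruptedException;
  }

  /**
   * Runs the step, then sleeps unless the thread was interrupted.
   * Returns true if the sleep completed, false if it was skipped or cut short.
   */
  static boolean executeThenMaybeSleep(Step step, long sleepMillis) {
    try {
      step.run();
    } catch (InterruptedException e) {
      // The flag is clear once InterruptedException has been thrown,
      // so set it again for the code that runs after this catch block.
      Thread.currentThread().interrupt();
    }
    // Thread.interrupted() reads the flag and clears it.
    if (Thread.interrupted()) {
      return false;
    }
    try {
      Thread.sleep(sleepMillis);
      return true;
    } catch (InterruptedException e) {
      Thread.currentThread().interrupt();
      return false;
    }
  }

  public static void main(String[] args) {
    // An interrupted step: the sleep is skipped.
    System.out.println(executeThenMaybeSleep(
        () -> { throw new InterruptedException(); }, 10));
    // A normal step: the sleep runs.
    System.out.println(executeThenMaybeSleep(() -> { }, 1));
  }
}
```

Without the `Thread.currentThread().interrupt()` call in the first catch block, the check before the sleep would see a clear flag and the thread would sleep even though it had just been interrupted.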


[GitHub] [hadoop-ozone] maobaolong commented on a change in pull request #1033: HDDS-3667. If we gracefully stop datanode it would be better to notify scm and r…

maobaolong commented on a change in pull request #1033:

URL: https://github.com/apache/hadoop-ozone/pull/1033#discussion_r459897552

##

File path:

hadoop-hdds/server-scm/src/main/java/org/apache/hadoop/hdds/scm/node/NodeStateManager.java

##

@@ -708,6 +707,21 @@ private void updateNodeState(DatanodeInfo node,

Predicate condition,

}

}

+ /**

+ *

+ * @param datanodeDetails

+ */

+ public void stopNode(DatanodeDetails datanodeDetails)

+ throws NodeNotFoundException {

+UUID uuid = datanodeDetails.getUuid();

+NodeState state = nodeStateMap.getNodeState(uuid);

+DatanodeInfo node = nodeStateMap.getNodeInfo(uuid);

+nodeStateMap.updateNodeState(datanodeDetails.getUuid(), state,

+NodeState.DEAD);

+node.updateLastHeartbeatTime(0L);

Review comment:

Yeah, I got it. I agree that it can work, but the downside is that we lose the real lastHeartBeatTime.
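To make the trade-off concrete, here is a small illustrative sketch. It is not the Ozone `DatanodeInfo` or `NodeStateManager` API: `NodeRecordSketch` and all of its members are hypothetical, and the dead-node check is an assumed simplification. It compares zeroing the timestamp with keeping a separate stopped marker, which would be one possible way to keep the real value.

```java
/** Sketch: two ways to mark a node as stopped. All names here are hypothetical. */
final class NodeRecordSketch {
  private long lastHeartbeatMillis;
  private boolean stopped;

  NodeRecordSketch(long lastHeartbeatMillis) {
    this.lastHeartbeatMillis = lastHeartbeatMillis;
  }

  /** Approach under discussion: overwrite the timestamp, losing the real value. */
  void markStoppedByZeroing() {
    lastHeartbeatMillis = 0L;
  }

  /** Assumed alternative: keep the timestamp and record the stop separately. */
  void markStoppedWithFlag() {
    stopped = true;
  }

  /** Simplified check: dead if explicitly stopped or the heartbeat is too old. */
  boolean isConsideredDead(long nowMillis, long deadIntervalMillis) {
    return stopped || nowMillis - lastHeartbeatMillis > deadIntervalMillis;
  }

  long getLastHeartbeatMillis() {
    return lastHeartbeatMillis;
  }

  public static void main(String[] args) {
    NodeRecordSketch zeroed = new NodeRecordSketch(1_000L);
    zeroed.markStoppedByZeroing();
    NodeRecordSketch flagged = new NodeRecordSketch(1_000L);
    flagged.markStoppedWithFlag();
    System.out.println("zeroed:  dead=" + zeroed.isConsideredDead(1_500L, 10_000L)
        + " lastHeartbeat=" + zeroed.getLastHeartbeatMillis());
    System.out.println("flagged: dead=" + flagged.isConsideredDead(1_500L, 10_000L)
        + " lastHeartbeat=" + flagged.getLastHeartbeatMillis());
  }
}
```

Both variants report the node as dead in this simplified check; only the flagged one still returns the original heartbeat time.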


[jira] [Updated] (HDDS-4024) Avoid while loop too soon when exception happen

[ https://issues.apache.org/jira/browse/HDDS-4024?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

ASF GitHub Bot updated HDDS-4024:

Labels: pull-request-available (was: )

> Avoid while loop too soon when exception happen
> ---
>
> Key: HDDS-4024
> URL: https://issues.apache.org/jira/browse/HDDS-4024
> Project: Hadoop Distributed Data Store
> Issue Type: Bug
> Reporter: runzhiwang
> Assignee: runzhiwang
> Priority: Major
> Labels: pull-request-available

[GitHub] [hadoop-ozone] runzhiwang opened a new pull request #1253: HDDS-4024. Avoid while loop too soon when exception happen

runzhiwang opened a new pull request #1253:

URL: https://github.com/apache/hadoop-ozone/pull/1253

## What changes were proposed in this pull request?

When an exception is thrown in `context.execute(executorService, heartbeatFrequency, TimeUnit.MILLISECONDS)`, the thread does not sleep and immediately starts the next iteration of the while loop. This causes very high CPU usage and a huge log.

## What is the link to the Apache JIRA

https://issues.apache.org/jira/browse/HDDS-4024

## How was this patch tested?

No test is needed.
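The busy-loop behaviour described above can be shown with a minimal sketch. This is an illustration under stated assumptions, not the code from the pull request: `PacedLoopSketch`, `failingTask` and `pausesTaken` are hypothetical names, `failingTask` stands in for a `context.execute(...)` call that always throws, and the `pause` argument stands in for `Thread.sleep(...)` so the example stays deterministic.

```java
/** Sketch: where the pause sits decides whether a failing task causes a busy loop. */
final class PacedLoopSketch {

  /** Hypothetical stand-in for context.execute(...): always fails. */
  static void failingTask() {
    throw new IllegalStateException("simulated failure");
  }

  /**
   * Runs a fixed number of loop iterations and returns how many of them
   * reached the pacing step. The pause argument stands in for Thread.sleep(...).
   */
  static int pausesTaken(int iterations, boolean pauseOutsideTry, Runnable pause) {
    int pauses = 0;
    for (int i = 0; i < iterations; i++) {
      try {
        failingTask();
        if (!pauseOutsideTry) {
          // Pause inside the try: skipped whenever failingTask() throws.
          pause.run();
          pauses++;
        }
      } catch (IllegalStateException e) {
        // A real loop would log here; without a pause it repeats at full speed.
      }
      if (pauseOutsideTry) {
        // Pause after the try/catch: reached on every iteration, failure or not.
        pause.run();
        pauses++;
      }
    }
    return pauses;
  }

  public static void main(String[] args) {
    Runnable noOp = () -> { };
    System.out.println("pause inside try:  " + pausesTaken(5, false, noOp));
    System.out.println("pause outside try: " + pausesTaken(5, true, noOp));
  }
}
```

With the pause inside the `try`, none of the five failing iterations is paced; with the pause after the `try`/`catch`, all five are.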

[GitHub] [hadoop-ozone] adoroszlai commented on a change in pull request #1245: HDDS-4011. Update S3 related documentation.

adoroszlai commented on a change in pull request #1245:

URL: https://github.com/apache/hadoop-ozone/pull/1245#discussion_r459868266

##

File path:

hadoop-hdds/docs/content/start/StartFromDockerHub.md

##

@@ -72,7 +72,7 @@ connecting to the SCM's UI at [http://localhost:9876](http://localhost:9876).

The S3 gateway endpoint will be exposed at port 9878. You can use Ozone's S3 support as if you are working against the real S3. S3 buckets are stored under

-the `/s3v` volume, which needs to be created by an administrator first:

+the `/s3v` volume:

```

docker-compose exec scm ozone sh volume create /s3v

Review comment:

Thanks @bharatviswa504 for updating the patch. I think you missed this `volume create` command.

[jira] [Created] (HDDS-4024) Avoid while loop too soon when exception happen

runzhiwang created HDDS-4024:

Summary: Avoid while loop too soon when exception happen
Key: HDDS-4024
URL: https://issues.apache.org/jira/browse/HDDS-4024
Project: Hadoop Distributed Data Store
Issue Type: Bug
Reporter: runzhiwang
Assignee: runzhiwang

[jira] [Updated] (HDDS-4018) Datanode log spammed by NPE

[

https://issues.apache.org/jira/browse/HDDS-4018?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel

]

ASF GitHub Bot updated HDDS-4018:

-

Labels: pull-request-available (was: )

> Datanode log spammed by NPE

> ---

>

> Key: HDDS-4018

> URL: https://issues.apache.org/jira/browse/HDDS-4018

> Project: Hadoop Distributed Data Store

> Issue Type: Bug

> Components: Ozone Datanode

>Affects Versions: 0.6.0

>Reporter: Attila Doroszlai

>Assignee: Attila Doroszlai

>Priority: Blocker

> Labels: pull-request-available

> Fix For: 0.7.0

>

>

> {code}

> datanode_1 | 2020-07-22 13:11:47,845 [Datanode State Machine Thread - 0] WARN statemachine.StateContext: No available thread in pool for past 2 seconds.
> datanode_1 | 2020-07-22 13:11:47,846 [Datanode State Machine Thread - 0] ERROR statemachine.DatanodeStateMachine: Unable to finish the execution.
> datanode_1 | java.lang.NullPointerException
> datanode_1 | at org.apache.hadoop.ozone.container.common.states.datanode.RunningDatanodeState.await(RunningDatanodeState.java:218)
> datanode_1 | at org.apache.hadoop.ozone.container.common.states.datanode.RunningDatanodeState.await(RunningDatanodeState.java:50)
> datanode_1 | at org.apache.hadoop.ozone.container.common.statemachine.StateContext.execute(StateContext.java:451)
> datanode_1 | at org.apache.hadoop.ozone.container.common.statemachine.DatanodeStateMachine.start(DatanodeStateMachine.java:225)
> datanode_1 | at org.apache.hadoop.ozone.container.common.statemachine.DatanodeStateMachine.lambda$startDaemon$0(DatanodeStateMachine.java:396)
> datanode_1 | at java.base/java.lang.Thread.run(Thread.java:834)
> datanode_1 | 2020-07-22 13:11:47,847 [Datanode State Machine Thread - 0] ERROR statemachine.DatanodeStateMachine: Unable to finish the execution.
> datanode_1 | java.lang.NullPointerException
> datanode_1 | at org.apache.hadoop.ozone.container.common.states.datanode.RunningDatanodeState.await(RunningDatanodeState.java:218)
> datanode_1 | at org.apache.hadoop.ozone.container.common.states.datanode.RunningDatanodeState.await(RunningDatanodeState.java:50)
> datanode_1 | at org.apache.hadoop.ozone.container.common.statemachine.StateContext.execute(StateContext.java:451)
> datanode_1 | at org.apache.hadoop.ozone.container.common.statemachine.DatanodeStateMachine.start(DatanodeStateMachine.java:225)
> datanode_1 | at org.apache.hadoop.ozone.container.common.statemachine.DatanodeStateMachine.lambda$startDaemon$0(DatanodeStateMachine.java:396)
> datanode_1 | at java.base/java.lang.Thread.run(Thread.java:834)
> datanode_1 | 2020-07-22 13:11:47,848 [Datanode State Machine Thread - 0] ERROR statemachine.DatanodeStateMachine: Unable to finish the execution.
> datanode_1 | java.lang.NullPointerException
> datanode_1 | at org.apache.hadoop.ozone.container.common.states.datanode.RunningDatanodeState.await(RunningDatanodeState.java:218)
> datanode_1 | at org.apache.hadoop.ozone.container.common.states.datanode.RunningDatanodeState.await(RunningDatanodeState.java:50)
> datanode_1 | at org.apache.hadoop.ozone.container.common.statemachine.StateContext.execute(StateContext.java:451)
> datanode_1 | at org.apache.hadoop.ozone.container.common.statemachine.DatanodeStateMachine.start(DatanodeStateMachine.java:225)
> datanode_1 | at org.apache.hadoop.ozone.container.common.statemachine.DatanodeStateMachine.lambda$startDaemon$0(DatanodeStateMachine.java:396)
> datanode_1 | at java.base/java.lang.Thread.run(Thread.java:834)
> datanode_1 | 2020-07-22 13:11:47,848 [Datanode State Machine Thread - 0] ERROR statemachine.DatanodeStateMachine: Unable to finish the execution.
> datanode_1 | java.lang.NullPointerException
> datanode_1 | at org.apache.hadoop.ozone.container.common.states.datanode.RunningDatanodeState.await(RunningDatanodeState.java:218)
> datanode_1 | at org.apache.hadoop.ozone.container.common.states.datanode.RunningDatanodeState.await(RunningDatanodeState.java:50)
> datanode_1 | at org.apache.hadoop.ozone.container.common.statemachine.StateContext.execute(StateContext.java:451)
> datanode_1 | at org.apache.hadoop.ozone.container.common.statemachine.DatanodeStateMachine.start(DatanodeStateMachine.java:225)
> datanode_1 | at org.apache.hadoop.ozone.container.common.statemachine.DatanodeStateMachine.lambda$startDaemon$0(DatanodeStateMachine.java:396)
> datanode_1 | at java.base/java.lang.Thread.run(Thread.java:834)
> datanode_1 | 2020-07-22 13:11:47,851 [Datanode State Machine Thread - 0] ERROR statemachine.DatanodeStateMachine: Unable to finish the execution.

> datanode_1 | java.lang.NullPointerException

> datanode_1 | at

>

[GitHub] [hadoop-ozone] runzhiwang commented on pull request #1250: HDDS-4018. Datanode log spammed by NPE

runzhiwang commented on pull request #1250:

URL: https://github.com/apache/hadoop-ozone/pull/1250#issuecomment-663360990

@adoroszlai Thanks. I will file a new issue for that.

[jira] [Updated] (HDDS-4018) Datanode log spammed by NPE

[

https://issues.apache.org/jira/browse/HDDS-4018?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel

]

Attila Doroszlai updated HDDS-4018:

---

Labels: (was: pull-request-available)

> Datanode log spammed by NPE

> ---

>

> Key: HDDS-4018

> URL: https://issues.apache.org/jira/browse/HDDS-4018

> Project: Hadoop Distributed Data Store

> Issue Type: Bug

> Components: Ozone Datanode

>Affects Versions: 0.6.0

>Reporter: Attila Doroszlai

>Assignee: Attila Doroszlai

>Priority: Blocker

> Fix For: 0.7.0

>

>

> {code}

> datanode_1 | 2020-07-22 13:11:47,845 [Datanode State Machine Thread - 0]

> WARN statemachine.StateContext: No available thread in pool for past 2

> seconds.

> datanode_1 | 2020-07-22 13:11:47,846 [Datanode State Machine Thread - 0]

> ERROR statemachine.DatanodeStateMachine: Unable to finish the execution.

> datanode_1 | java.lang.NullPointerException

> datanode_1 | at

> org.apache.hadoop.ozone.container.common.states.datanode.RunningDatanodeState.await(RunningDatanodeState.java:218)

> datanode_1 | at

> org.apache.hadoop.ozone.container.common.states.datanode.RunningDatanodeState.await(RunningDatanodeState.java:50)

> datanode_1 | at