Re: [PR] [SPARK-48116][INFRA][FOLLOW-UP] Simplify the build with fixing the if condition [spark]

HyukjinKwon commented on PR #46381: URL: https://github.com/apache/spark/pull/46381#issuecomment-2094024562  vs  -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48116][INFRA][FOLLOW-UP] Simplify the build with fixing the if condition [spark]

HyukjinKwon commented on PR #46381: URL: https://github.com/apache/spark/pull/46381#issuecomment-2094022030 Tested at https://github.com/HyukjinKwon/spark/actions/runs/8948215777/job/24581131687 cc @dongjoon-hyun -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48116][INFRA][FOLLOWUP] Simplify the build with fixing the if condition [spark]

dongjoon-hyun commented on PR #46380: URL: https://github.com/apache/spark/pull/46380#issuecomment-2094017688 Oh, thank you for the fast recovery. Looking forward to seeing the new try. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48116][INFRA][FOLLOWUP] Simplify the build with fixing the if condition [spark]

HyukjinKwon commented on PR #46380: URL: https://github.com/apache/spark/pull/46380#issuecomment-2094016895 Reverted. I will make a new try with trying within my own fork. I cherry-picked yours and merged it. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48116][INFRA][FOLLOWUP] Simplify the build with fixing the if condition [spark]

HyukjinKwon closed pull request #46380: [SPARK-48116][INFRA][FOLLOWUP] Simplify the build with fixing the if condition URL: https://github.com/apache/spark/pull/46380 -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48116][INFRA][FOLLOWUP] Simplify the build with fixing the if condition [spark]

HyukjinKwon commented on PR #46380: URL: https://github.com/apache/spark/pull/46380#issuecomment-2094015219 Merged to master. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48127][INFRA] Fix `dev/scalastyle` to check `hadoop-cloud` and `jvm-profiler` modules [spark]

dongjoon-hyun closed pull request #46376: [SPARK-48127][INFRA] Fix `dev/scalastyle` to check `hadoop-cloud` and `jvm-profiler` modules URL: https://github.com/apache/spark/pull/46376 -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48127][INFRA] Fix `dev/scalastyle` to check `hadoop-cloud` and `jvm-profiler` modules [spark]

dongjoon-hyun commented on PR #46376: URL: https://github.com/apache/spark/pull/46376#issuecomment-2094014606 Thank you, @viirya ! -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48116][INFRA][FOLLOWUP] Fix `if` statement to check repository [spark]

dongjoon-hyun commented on PR #46379: URL: https://github.com/apache/spark/pull/46379#issuecomment-2094014537 Of course. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48116][INFRA][FOLLOWUP] Fix `if` statement to check repository [spark]

dongjoon-hyun closed pull request #46379: [SPARK-48116][INFRA][FOLLOWUP] Fix `if` statement to check repository URL: https://github.com/apache/spark/pull/46379 -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48127][INFRA] Fix `dev/scalastyle` to check `hadoop-cloud` and `jvm-profiler` modules [spark]

viirya commented on PR #46376: URL: https://github.com/apache/spark/pull/46376#issuecomment-2094014506 Good catch! -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48116][INFRA] Simplify the build with fixing the if condition [spark]

dongjoon-hyun commented on PR #46380: URL: https://github.com/apache/spark/pull/46380#issuecomment-2094014497 Feel free to merge at any time when this PR is ready ~ -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48116][INFRA][FOLLOWUP] Fix `if` statement to check repository [spark]

HyukjinKwon commented on PR #46379: URL: https://github.com/apache/spark/pull/46379#issuecomment-2094014303 @dongjoon-hyun Can we maybe just merge https://github.com/apache/spark/pull/46380 instead? -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48116][INFRA] Simplify the build with fixing the if condition [spark]

HyukjinKwon commented on PR #46380: URL: https://github.com/apache/spark/pull/46380#issuecomment-2094013225 cc @dongjoon-hyun -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48127][INFRA] Fix `dev/scalastyle` to check `hadoop-cloud` and `jvm-profiler` modules [spark]

dongjoon-hyun commented on PR #46376: URL: https://github.com/apache/spark/pull/46376#issuecomment-2094007120 Could you review this PR, @viirya ? -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48127][INFRA] Fix `dev/scalastyle` to check `hadoop-cloud` and `jvm-profiler` modules [spark]

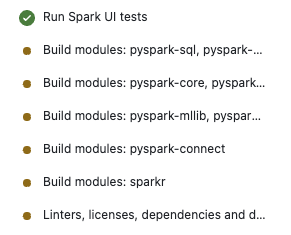

dongjoon-hyun commented on PR #46376: URL: https://github.com/apache/spark/pull/46376#issuecomment-2094006942 All tests passed. https://github.com/apache/spark/assets/9700541/0865ffb8-9bfa-467b-9bde-9bd7ef703b8f;> -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[PR] [SPARK-48116][INFRA][FOLLOWUP] Fix `if` statement to check repository [spark]

dongjoon-hyun opened a new pull request, #46379: URL: https://github.com/apache/spark/pull/46379 ### What changes were proposed in this pull request? ### Why are the changes needed? ### Does this PR introduce _any_ user-facing change? ### How was this patch tested? ### Was this patch authored or co-authored using generative AI tooling? -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48116][INFRA] Run `pyspark-pandas*` only in PR builder and Daily Python CIs [spark]

dongjoon-hyun commented on PR #46367: URL: https://github.com/apache/spark/pull/46367#issuecomment-2094000682 Merged to master~ -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48116][INFRA] Run `pyspark-pandas*` only in PR builder and Daily Python CIs [spark]

dongjoon-hyun closed pull request #46367: [SPARK-48116][INFRA] Run `pyspark-pandas*` only in PR builder and Daily Python CIs URL: https://github.com/apache/spark/pull/46367 -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48116][INFRA] Run `pyspark-pandas*` only in PR builder and Daily Python CIs [spark]

dongjoon-hyun commented on PR #46367: URL: https://github.com/apache/spark/pull/46367#issuecomment-2094000544 Thank you for the suggestion. Yes, I hope to do the deduplication later~ Let me merge this to monitor the reduced usage. Thank you, @HyukjinKwon -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48056][CONNECT][PYTHON] Re-execute plan if a SESSION_NOT_FOUND error is raised and no partial response was received [spark]

HyukjinKwon commented on PR #46297: URL: https://github.com/apache/spark/pull/46297#issuecomment-2093996045 Thanks for fixing -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48116][INFRA] Run `pyspark-pandas*` only in PR builder and Daily Python CIs [spark]

HyukjinKwon commented on PR #46367: URL: https://github.com/apache/spark/pull/46367#issuecomment-2093995678 LGTM,one comment but I can followup. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48116][INFRA] Run `pyspark-pandas*` only in PR builder and Daily Python CIs [spark]

HyukjinKwon commented on code in PR #46367: URL: https://github.com/apache/spark/pull/46367#discussion_r1589877623 ## .github/workflows/build_and_test.yml: ## @@ -347,12 +353,139 @@ jobs: pyspark-core, pyspark-errors, pyspark-streaming - >- pyspark-mllib, pyspark-ml, pyspark-ml-connect + - >- Review Comment: I'm fine with sikpping itself but wonder if we can deduplicate this by directly adding an if to the module based on the JSON input above. I can follow up. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48116][INFRA] Run `pyspark-pandas*` only in PR builder and Daily Python CIs [spark]

dongjoon-hyun commented on PR #46367: URL: https://github.com/apache/spark/pull/46367#issuecomment-2093993468 Could you review this PR, @gengliangwang ? -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48127][INFRA] Fix `dev/scalastyle` to check `hadoop-cloud` and `jvm-profiler` modules [spark]

dongjoon-hyun commented on PR #46376: URL: https://github.com/apache/spark/pull/46376#issuecomment-2093993155 cc @panbingkun and @gengliangwang -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48056][PYTHON][CONNECT][FOLLOW-UP] Use `assertEqual` instead of `assertEquals` for Python 3.12 [spark]

dongjoon-hyun closed pull request #46377: [SPARK-48056][PYTHON][CONNECT][FOLLOW-UP] Use `assertEqual` instead of `assertEquals` for Python 3.12 URL: https://github.com/apache/spark/pull/46377 -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48056][PYTHON][CONNECT][FOLLOW-UP] Use `assertEqual` instead of `assertEquals` for Python 3.12 [spark]

dongjoon-hyun commented on PR #46377: URL: https://github.com/apache/spark/pull/46377#issuecomment-2093992824 Let me merge this to recover the Python CI. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-47969][PYTHON][TESTS][FOLLOWUP] Make Test `test_creation_index` deterministic [spark]

dongjoon-hyun commented on PR #46378: URL: https://github.com/apache/spark/pull/46378#issuecomment-2093991946 Merged to master. Thank you, @zhengruifeng . -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-47969][PYTHON][TESTS][FOLLOWUP] Make Test `test_creation_index` deterministic [spark]

dongjoon-hyun closed pull request #46378: [SPARK-47969][PYTHON][TESTS][FOLLOWUP] Make Test `test_creation_index` deterministic URL: https://github.com/apache/spark/pull/46378 -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48056][CONNECT][PYTHON] Re-execute plan if a SESSION_NOT_FOUND error is raised and no partial response was received [spark]

dongjoon-hyun commented on PR #46297: URL: https://github.com/apache/spark/pull/46297#issuecomment-2093955454 This seems to be missed in another PR too. - #45242 -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[PR] [SPARK-47969][PYTHON][TESTS][FOLLOWUP] Make Test `test_creation_index` deterministic - followup [spark]

zhengruifeng opened a new pull request, #46378: URL: https://github.com/apache/spark/pull/46378 ### What changes were proposed in this pull request? followup https://github.com/apache/spark/pull/46200 ### Why are the changes needed? there is still non-deterministic codes in this test: ``` Traceback (most recent call last): File "/home/jenkins/python/pyspark/testing/pandasutils.py", line 91, in _assert_pandas_equal assert_frame_equal( File "/databricks/python3/lib/python3.11/site-packages/pandas/_testing/asserters.py", line 1257, in assert_frame_equal assert_index_equal( File "/databricks/python3/lib/python3.11/site-packages/pandas/_testing/asserters.py", line 407, in assert_index_equal raise_assert_detail(obj, msg, left, right) File "/databricks/python3/lib/python3.11/site-packages/pandas/_testing/asserters.py", line 665, in raise_assert_detail raise AssertionError(msg) AssertionError: DataFrame.index are different DataFrame.index values are different (75.0 %) [left]: DatetimeIndex(['2022-09-02', '2022-09-03', '2022-08-31', '2022-09-05'], dtype='datetime64[ns]', freq=None) [right]: DatetimeIndex(['2022-08-31', '2022-09-02', '2022-09-03', '2022-09-05'], dtype='datetime64[ns]', freq=None) ``` ### Does this PR introduce _any_ user-facing change? no, test only ### How was this patch tested? ci ### Was this patch authored or co-authored using generative AI tooling? no -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[PR] [SPARK-48056][PYTHON][CONNECT][FOLLOW-UP] Use `assertEqual` instead of `assertEquals` for Python 3.12 [spark]

dongjoon-hyun opened a new pull request, #46377: URL: https://github.com/apache/spark/pull/46377 … ### What changes were proposed in this pull request? ### Why are the changes needed? ### Does this PR introduce _any_ user-facing change? ### How was this patch tested? ### Was this patch authored or co-authored using generative AI tooling? -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48116][INFRA] Run `pyspark-pandas*` only in PR builder and Daily Python CIs [spark]

dongjoon-hyun commented on PR #46367: URL: https://github.com/apache/spark/pull/46367#issuecomment-2093951179 ASF Infra team requests GitHub Action usage control to Apache Spark PMC. - https://lists.apache.org/thread/rgy1cg17tkd3yox7qfq87ht12sqclkbg -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48116][INFRA] Run `pyspark-pandas*` only in PR builder and Daily Python CIs [spark]

dongjoon-hyun commented on PR #46367: URL: https://github.com/apache/spark/pull/46367#issuecomment-2093950583 Could you review this PR, @zhengruifeng ? -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-47594] Connector module: Migrate logInfo with variables to structured logging framework [spark]

dongjoon-hyun commented on code in PR #46022:

URL: https://github.com/apache/spark/pull/46022#discussion_r1589845846

##

connector/profiler/src/main/scala/org/apache/spark/executor/profiler/ExecutorJVMProfiler.scala:

##

@@ -25,7 +25,8 @@ import org.apache.hadoop.fs.{FileSystem, FSDataOutputStream,

Path}

import org.apache.spark.SparkConf

import org.apache.spark.deploy.SparkHadoopUtil

-import org.apache.spark.internal.Logging

+import org.apache.spark.internal.LogKey.PATH

+import org.apache.spark.internal.{Logging, MDC}

Review Comment:

Unfortunately, this import ordering issue was missed because

`dev/scalastyle` didn't include this module.

Here is the fix for `dev/scalastyle` and this.

- https://github.com/apache/spark/pull/46376

--

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-47594] Connector module: Migrate logInfo with variables to structured logging framework [spark]

dongjoon-hyun commented on code in PR #46022:

URL: https://github.com/apache/spark/pull/46022#discussion_r1589845864

##

connector/profiler/src/main/scala/org/apache/spark/executor/profiler/ExecutorProfilerPlugin.scala:

##

@@ -23,7 +23,8 @@ import scala.util.Random

import org.apache.spark.SparkConf

import org.apache.spark.api.plugin.{DriverPlugin, ExecutorPlugin,

PluginContext, SparkPlugin}

-import org.apache.spark.internal.Logging

+import org.apache.spark.internal.LogKey.EXECUTOR_ID

+import org.apache.spark.internal.{Logging, MDC}

Review Comment:

ditto. Import ordering issue.

--

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48058][SPARK-43727][PYTHON][CONNECT] `UserDefinedFunction.returnType` parse the DDL string [spark]

zhengruifeng commented on PR #46300: URL: https://github.com/apache/spark/pull/46300#issuecomment-2093941093 thanks @HyukjinKwon and @dongjoon-hyun for reviews! -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[PR] [SPARK-48127][INFRA] Fix `dev/scalastyle` to check `hadoop-cloud` and `jvm-profiler` modules [spark]

dongjoon-hyun opened a new pull request, #46376: URL: https://github.com/apache/spark/pull/46376 … ### What changes were proposed in this pull request? ### Why are the changes needed? ### Does this PR introduce _any_ user-facing change? ### How was this patch tested? ### Was this patch authored or co-authored using generative AI tooling? -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48049][BUILD] Upgrade Scala to 2.13.14 [spark]

panbingkun commented on PR #46288: URL: https://github.com/apache/spark/pull/46288#issuecomment-2093917835 Currently, this GA has turned green. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48049][BUILD] Upgrade Scala to 2.13.14 [spark]

panbingkun commented on PR #46288: URL: https://github.com/apache/spark/pull/46288#issuecomment-2093915493 > Thank you for the analysis and sharing, @panbingkun and @LuciferYang . > [INFO] Restricted to JDK 17 yet org.jline:jline:jar:3.25.1:compile contains org/jline/terminal/impl/ffm/CLibrary$termios.class targeted to 65.-257 > > Oh... It seems that `CLibrary$termios.class` is not compatible with Java 8. > > In jline 3.25.1, CLibrary references some implementations that were moved to the `java.lang.foreign` package in Java 21, but in Java 17, they were still in the `jdk.incubator.foreign` package. > > However, in jline 3.24.1, CLibrary also import classes in `java.lang.foreign` package, it's just that the final jline jar does not include the `terminal-ffm` module, so it won't trigger a compilation error from the `maven-enforcer-plugin`. > > So it seems that using jline 3.25.1 should not pose any issues. It's unclear whether it's possible to workaround this by modifying the rules of the `maven-enforcer-plugin`. @panbingkun Let me try, We should be able to refer to the following: https://github.com/mojohaus/extra-enforcer-rules/issues/46 https://github.com/apache/spark/assets/15246973/db42b4e1-650e-44c2-9cfb-d9f6dc9914b8;> But I'm not sure we really want to do this? We have 2 options: 1. Use `org.jline:jline:3.14.1` (exclude `org.jline:jline:3.15.1` from `org.scala-lang:scala-compiler:jar:2.13.14`) 2. Use `org.jline:jline:3.15.1` (Modify the rule `enforceBytecodeVersion` by configuring `ignoreClasses ` and bypass it ) WDYT @dongjoon-hyun -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48059][CORE] Implement the structured log framework on the java side [spark]

gengliangwang commented on PR #46301: URL: https://github.com/apache/spark/pull/46301#issuecomment-2093912796 @panbingkun Awesome, thanks for the work! -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48059][CORE] Implement the structured log framework on the java side [spark]

panbingkun commented on PR #46301: URL: https://github.com/apache/spark/pull/46301#issuecomment-2093912354 > Thanks, merging to master Thank you very much. Based on this, I will do log migration and auxiliary work on the java side. (eg, after the migration is completed, we can add a `rule` in `checkstyle` that prohibits importing `org.slf4j.Logger` and `org.slf4j.LoggerFactory`) -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-47578][CORE] Migrate logWarning with variables to structured logging framework [spark]

dtenedor commented on PR #46309: URL: https://github.com/apache/spark/pull/46309#issuecomment-2093911859 The two remaining test failures appear to be flaky/unrelated. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-46813][CORE] Don't set the executor id to "driver" when SparkContext is created by the executor side [spark]

github-actions[bot] commented on PR #44855: URL: https://github.com/apache/spark/pull/44855#issuecomment-2093908111 We're closing this PR because it hasn't been updated in a while. This isn't a judgement on the merit of the PR in any way. It's just a way of keeping the PR queue manageable. If you'd like to revive this PR, please reopen it and ask a committer to remove the Stale tag! -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [WIP][SQL] Avoid parquet footer reads twice [spark]

github-actions[bot] closed pull request #44853: [WIP][SQL] Avoid parquet footer reads twice URL: https://github.com/apache/spark/pull/44853 -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-46738][CONNECT][PYTHON] Make the display of `cast` in `Regular Spark` and `Spark Connect` consistent [spark]

github-actions[bot] closed pull request #44829: [SPARK-46738][CONNECT][PYTHON] Make the display of `cast` in `Regular Spark` and `Spark Connect` consistent URL: https://github.com/apache/spark/pull/44829 -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-46714][SQL] Overwrite a partition with custom location [spark]

github-actions[bot] closed pull request #44725: [SPARK-46714][SQL] Overwrite a partition with custom location URL: https://github.com/apache/spark/pull/44725 -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-42669][CONNECT] Short circuit local relation RPCs [spark]

github-actions[bot] closed pull request #40782: [SPARK-42669][CONNECT] Short circuit local relation RPCs URL: https://github.com/apache/spark/pull/40782 -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-47484][SQL] Allow trailing comma in column definition list [spark]

srielau commented on PR #45593: URL: https://github.com/apache/spark/pull/45593#issuecomment-2093902007 > Sorry for being late, @MaxGekk . > > * Is this ANSI SQL syntax? > * Do you have any reference for this syntax in other references? I only know of this for the SELECT list, where it is popularized by duckDB and now Snowflake. This is NOT ANSI SQL syntax and I'm not in favor of starting down this path for two reasons: 1. This will increase pressure on trailing commas in SELECT which we cannot do without a hard breaking change 2. Generally I think "nothing" after a comma is associated with an unset value (i.e. a NULL). E.g. (,,) should, if we were to support this be parsed as (NULL, NULL, NULL). -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-47097][CONNECT][TESTS][FOLLOWUP] Increase timeout to `1 minute` for `interrupt tag` test [spark]

dongjoon-hyun commented on PR #46374: URL: https://github.com/apache/spark/pull/46374#issuecomment-2093895463 Merged to master. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-47097][CONNECT][TESTS][FOLLOWUP] Increase timeout to `1 minute` for `interrupt tag` test [spark]

dongjoon-hyun closed pull request #46374: [SPARK-47097][CONNECT][TESTS][FOLLOWUP] Increase timeout to `1 minute` for `interrupt tag` test URL: https://github.com/apache/spark/pull/46374 -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-47097][CONNECT][TESTS][FOLLOWUP] Increase timeout to `1 minute` for `interrupt tag` test [spark]

dongjoon-hyun commented on PR #46374: URL: https://github.com/apache/spark/pull/46374#issuecomment-2093895254 Thank you! -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48123][Core] Provide a constant table schema for querying structured logs [spark]

gengliangwang commented on code in PR #46375:

URL: https://github.com/apache/spark/pull/46375#discussion_r1589812045

##

common/utils/src/main/scala/org/apache/spark/util/LogUtils.scala:

##

@@ -0,0 +1,35 @@

+/*

+ * Licensed to the Apache Software Foundation (ASF) under one or more

+ * contributor license agreements. See the NOTICE file distributed with

+ * this work for additional information regarding copyright ownership.

+ * The ASF licenses this file to You under the Apache License, Version 2.0

+ * (the "License"); you may not use this file except in compliance with

+ * the License. You may obtain a copy of the License at

+ *

+ *http://www.apache.org/licenses/LICENSE-2.0

+ *

+ * Unless required by applicable law or agreed to in writing, software

+ * distributed under the License is distributed on an "AS IS" BASIS,

+ * WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+ * See the License for the specific language governing permissions and

+ * limitations under the License.

+ */

+package org.apache.spark.util

+

+object LogUtils {

+ val LOG_SCHEMA = """|ts TIMESTAMP,

Review Comment:

Updated

##

common/utils/src/main/scala/org/apache/spark/util/LogUtils.scala:

##

@@ -0,0 +1,35 @@

+/*

+ * Licensed to the Apache Software Foundation (ASF) under one or more

+ * contributor license agreements. See the NOTICE file distributed with

+ * this work for additional information regarding copyright ownership.

+ * The ASF licenses this file to You under the Apache License, Version 2.0

+ * (the "License"); you may not use this file except in compliance with

+ * the License. You may obtain a copy of the License at

+ *

+ *http://www.apache.org/licenses/LICENSE-2.0

+ *

+ * Unless required by applicable law or agreed to in writing, software

+ * distributed under the License is distributed on an "AS IS" BASIS,

+ * WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+ * See the License for the specific language governing permissions and

+ * limitations under the License.

+ */

+package org.apache.spark.util

+

+object LogUtils {

+ val LOG_SCHEMA = """|ts TIMESTAMP,

+| level STRING,

Review Comment:

Thanks, updated

##

sql/core/src/test/scala/org/apache/spark/sql/LogQuerySuite.scala:

##

@@ -0,0 +1,86 @@

+/*

+ * Licensed to the Apache Software Foundation (ASF) under one or more

+ * contributor license agreements. See the NOTICE file distributed with

+ * this work for additional information regarding copyright ownership.

+ * The ASF licenses this file to You under the Apache License, Version 2.0

+ * (the "License"); you may not use this file except in compliance with

+ * the License. You may obtain a copy of the License at

+ *

+ *http://www.apache.org/licenses/LICENSE-2.0

+ *

+ * Unless required by applicable law or agreed to in writing, software

+ * distributed under the License is distributed on an "AS IS" BASIS,

+ * WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+ * See the License for the specific language governing permissions and

+ * limitations under the License.

+ */

+

+package org.apache.spark.sql

+

+import java.io.File

+

+import org.scalatest.BeforeAndAfterAll

+

+import org.apache.spark.internal.{Logging, LogKeys, MDC}

+import org.apache.spark.sql.test.SharedSparkSession

+import org.apache.spark.util.LogUtils.LOG_SCHEMA

+

+/**

+ * Test suite for querying Spark logs using SQL.

+ */

+class LogQuerySuite extends QueryTest

+with SharedSparkSession with BeforeAndAfterAll with Logging {

Review Comment:

Done

--

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48123][Core] Provide a constant table schema for querying structured logs [spark]

gengliangwang commented on code in PR #46375:

URL: https://github.com/apache/spark/pull/46375#discussion_r1589811109

##

common/utils/src/main/scala/org/apache/spark/util/LogUtils.scala:

##

@@ -0,0 +1,35 @@

+/*

+ * Licensed to the Apache Software Foundation (ASF) under one or more

+ * contributor license agreements. See the NOTICE file distributed with

+ * this work for additional information regarding copyright ownership.

+ * The ASF licenses this file to You under the Apache License, Version 2.0

+ * (the "License"); you may not use this file except in compliance with

+ * the License. You may obtain a copy of the License at

+ *

+ *http://www.apache.org/licenses/LICENSE-2.0

+ *

+ * Unless required by applicable law or agreed to in writing, software

+ * distributed under the License is distributed on an "AS IS" BASIS,

+ * WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+ * See the License for the specific language governing permissions and

+ * limitations under the License.

+ */

+package org.apache.spark.util

+

+object LogUtils {

Review Comment:

Sure, updated

--

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48123][Core] Provide a constant table schema for querying structured logs [spark]

ueshin commented on code in PR #46375:

URL: https://github.com/apache/spark/pull/46375#discussion_r1589805454

##

common/utils/src/main/scala/org/apache/spark/util/LogUtils.scala:

##

@@ -0,0 +1,35 @@

+/*

+ * Licensed to the Apache Software Foundation (ASF) under one or more

+ * contributor license agreements. See the NOTICE file distributed with

+ * this work for additional information regarding copyright ownership.

+ * The ASF licenses this file to You under the Apache License, Version 2.0

+ * (the "License"); you may not use this file except in compliance with

+ * the License. You may obtain a copy of the License at

+ *

+ *http://www.apache.org/licenses/LICENSE-2.0

+ *

+ * Unless required by applicable law or agreed to in writing, software

+ * distributed under the License is distributed on an "AS IS" BASIS,

+ * WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+ * See the License for the specific language governing permissions and

+ * limitations under the License.

+ */

+package org.apache.spark.util

+

+object LogUtils {

+ val LOG_SCHEMA = """|ts TIMESTAMP,

+| level STRING,

Review Comment:

nit:

```suggestion

val LOG_SCHEMA = """

| ts TIMESTAMP,

| level STRING,

```

--

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-47097][CONNECT][TESTS][FOLLOWUP] Increase timeout to 1 minute for `interrupt tag` test [spark]

dongjoon-hyun commented on PR #46374: URL: https://github.com/apache/spark/pull/46374#issuecomment-2093886085 Could you review this test PR, @gengliangwang ? -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48123][Core] Provide a constant table schema for querying structured logs [spark]

dongjoon-hyun commented on code in PR #46375:

URL: https://github.com/apache/spark/pull/46375#discussion_r1589807167

##

sql/core/src/test/scala/org/apache/spark/sql/LogQuerySuite.scala:

##

@@ -0,0 +1,86 @@

+/*

+ * Licensed to the Apache Software Foundation (ASF) under one or more

+ * contributor license agreements. See the NOTICE file distributed with

+ * this work for additional information regarding copyright ownership.

+ * The ASF licenses this file to You under the Apache License, Version 2.0

+ * (the "License"); you may not use this file except in compliance with

+ * the License. You may obtain a copy of the License at

+ *

+ *http://www.apache.org/licenses/LICENSE-2.0

+ *

+ * Unless required by applicable law or agreed to in writing, software

+ * distributed under the License is distributed on an "AS IS" BASIS,

+ * WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+ * See the License for the specific language governing permissions and

+ * limitations under the License.

+ */

+

+package org.apache.spark.sql

+

+import java.io.File

+

+import org.scalatest.BeforeAndAfterAll

+

+import org.apache.spark.internal.{Logging, LogKeys, MDC}

+import org.apache.spark.sql.test.SharedSparkSession

+import org.apache.spark.util.LogUtils.LOG_SCHEMA

+

+/**

+ * Test suite for querying Spark logs using SQL.

+ */

+class LogQuerySuite extends QueryTest

+with SharedSparkSession with BeforeAndAfterAll with Logging {

Review Comment:

Please remove `with BeforeAndAfterAll` because we have it already.

-

https://github.com/apache/spark/blob/5c01f196afc3ba75f10c4aedf2c8405b6f59336a/sql/core/src/test/scala/org/apache/spark/sql/test/SQLTestUtils.scala#L226

##

sql/core/src/test/scala/org/apache/spark/sql/LogQuerySuite.scala:

##

@@ -0,0 +1,86 @@

+/*

+ * Licensed to the Apache Software Foundation (ASF) under one or more

+ * contributor license agreements. See the NOTICE file distributed with

+ * this work for additional information regarding copyright ownership.

+ * The ASF licenses this file to You under the Apache License, Version 2.0

+ * (the "License"); you may not use this file except in compliance with

+ * the License. You may obtain a copy of the License at

+ *

+ *http://www.apache.org/licenses/LICENSE-2.0

+ *

+ * Unless required by applicable law or agreed to in writing, software

+ * distributed under the License is distributed on an "AS IS" BASIS,

+ * WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+ * See the License for the specific language governing permissions and

+ * limitations under the License.

+ */

+

+package org.apache.spark.sql

+

+import java.io.File

+

+import org.scalatest.BeforeAndAfterAll

+

+import org.apache.spark.internal.{Logging, LogKeys, MDC}

+import org.apache.spark.sql.test.SharedSparkSession

+import org.apache.spark.util.LogUtils.LOG_SCHEMA

+

+/**

+ * Test suite for querying Spark logs using SQL.

+ */

+class LogQuerySuite extends QueryTest

+with SharedSparkSession with BeforeAndAfterAll with Logging {

Review Comment:

Please remove `with BeforeAndAfterAll` because we have it already.

https://github.com/apache/spark/blob/5c01f196afc3ba75f10c4aedf2c8405b6f59336a/sql/core/src/test/scala/org/apache/spark/sql/test/SQLTestUtils.scala#L226

--

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48123][Core] Provide a constant table schema for querying structured logs [spark]

ueshin commented on code in PR #46375:

URL: https://github.com/apache/spark/pull/46375#discussion_r1589805454

##

common/utils/src/main/scala/org/apache/spark/util/LogUtils.scala:

##

@@ -0,0 +1,35 @@

+/*

+ * Licensed to the Apache Software Foundation (ASF) under one or more

+ * contributor license agreements. See the NOTICE file distributed with

+ * this work for additional information regarding copyright ownership.

+ * The ASF licenses this file to You under the Apache License, Version 2.0

+ * (the "License"); you may not use this file except in compliance with

+ * the License. You may obtain a copy of the License at

+ *

+ *http://www.apache.org/licenses/LICENSE-2.0

+ *

+ * Unless required by applicable law or agreed to in writing, software

+ * distributed under the License is distributed on an "AS IS" BASIS,

+ * WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+ * See the License for the specific language governing permissions and

+ * limitations under the License.

+ */

+package org.apache.spark.util

+

+object LogUtils {

+ val LOG_SCHEMA = """|ts TIMESTAMP,

+| level STRING,

Review Comment:

nit: Just my preference, you can ignore:

```suggestion

val LOG_SCHEMA = """

| ts TIMESTAMP,

| level STRING,

```

--

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48059][CORE] Implement the structured log framework on the java side [spark]

gengliangwang closed pull request #46301: [SPARK-48059][CORE] Implement the structured log framework on the java side URL: https://github.com/apache/spark/pull/46301 -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48059][CORE] Implement the structured log framework on the java side [spark]

gengliangwang commented on PR #46301: URL: https://github.com/apache/spark/pull/46301#issuecomment-2093883660 Thanks, merging to master -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48059][CORE] Implement the structured log framework on the java side [spark]

gengliangwang commented on code in PR #46301:

URL: https://github.com/apache/spark/pull/46301#discussion_r1589805794

##

common/utils/src/test/java/org/apache/spark/util/LoggerSuiteBase.java:

##

@@ -0,0 +1,248 @@

+/*

+ * Licensed to the Apache Software Foundation (ASF) under one or more

+ * contributor license agreements. See the NOTICE file distributed with

+ * this work for additional information regarding copyright ownership.

+ * The ASF licenses this file to You under the Apache License, Version 2.0

+ * (the "License"); you may not use this file except in compliance with

+ * the License. You may obtain a copy of the License at

+ *

+ *http://www.apache.org/licenses/LICENSE-2.0

+ *

+ * Unless required by applicable law or agreed to in writing, software

+ * distributed under the License is distributed on an "AS IS" BASIS,

+ * WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+ * See the License for the specific language governing permissions and

+ * limitations under the License.

+ */

+

+package org.apache.spark.util;

+

+import java.io.File;

+import java.io.IOException;

+import java.nio.file.Files;

+import java.util.List;

+

+import org.apache.commons.lang3.tuple.Pair;

+import org.apache.logging.log4j.Level;

+import org.junit.jupiter.api.Test;

+

+import org.apache.spark.internal.Logger;

+import org.apache.spark.internal.LogKeys;

+import org.apache.spark.internal.MDC;

+

+public abstract class LoggerSuiteBase {

Review Comment:

nit: ideally, we can have a followup to reduce the duplicated test code.

--

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48123][Core] Provide a constant table schema for querying structured logs [spark]

dongjoon-hyun commented on code in PR #46375:

URL: https://github.com/apache/spark/pull/46375#discussion_r1589805435

##

common/utils/src/main/scala/org/apache/spark/util/LogUtils.scala:

##

@@ -0,0 +1,35 @@

+/*

+ * Licensed to the Apache Software Foundation (ASF) under one or more

+ * contributor license agreements. See the NOTICE file distributed with

+ * this work for additional information regarding copyright ownership.

+ * The ASF licenses this file to You under the Apache License, Version 2.0

+ * (the "License"); you may not use this file except in compliance with

+ * the License. You may obtain a copy of the License at

+ *

+ *http://www.apache.org/licenses/LICENSE-2.0

+ *

+ * Unless required by applicable law or agreed to in writing, software

+ * distributed under the License is distributed on an "AS IS" BASIS,

+ * WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+ * See the License for the specific language governing permissions and

+ * limitations under the License.

+ */

+package org.apache.spark.util

+

+object LogUtils {

Review Comment:

Could you add `DeveloperApi` and `Since` tag officially?

--

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48123][Core] Provide a constant table schema for querying structured logs [spark]

dongjoon-hyun commented on code in PR #46375:

URL: https://github.com/apache/spark/pull/46375#discussion_r1589805250

##

common/utils/src/main/scala/org/apache/spark/util/LogUtils.scala:

##

@@ -0,0 +1,35 @@

+/*

+ * Licensed to the Apache Software Foundation (ASF) under one or more

+ * contributor license agreements. See the NOTICE file distributed with

+ * this work for additional information regarding copyright ownership.

+ * The ASF licenses this file to You under the Apache License, Version 2.0

+ * (the "License"); you may not use this file except in compliance with

+ * the License. You may obtain a copy of the License at

+ *

+ *http://www.apache.org/licenses/LICENSE-2.0

+ *

+ * Unless required by applicable law or agreed to in writing, software

+ * distributed under the License is distributed on an "AS IS" BASIS,

+ * WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+ * See the License for the specific language governing permissions and

+ * limitations under the License.

+ */

+package org.apache.spark.util

+

+object LogUtils {

+ val LOG_SCHEMA = """|ts TIMESTAMP,

Review Comment:

This looks a little weird. Could you reformat this?

--

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48121][K8S] Promote `KubernetesDriverConf` to `DeveloperApi` [spark]

dongjoon-hyun commented on PR #46373: URL: https://github.com/apache/spark/pull/46373#issuecomment-2093881734 Merged to master for Apache Spark 4.0.0-preview. Thank you, @jiangzho . -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48121][K8S] Promote `KubernetesDriverConf` to `DeveloperApi` [spark]

dongjoon-hyun closed pull request #46373: [SPARK-48121][K8S] Promote `KubernetesDriverConf` to `DeveloperApi` URL: https://github.com/apache/spark/pull/46373 -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[PR] [SPARK-48123][Core] Provide a constant table schema for querying structured logs [spark]

gengliangwang opened a new pull request, #46375:

URL: https://github.com/apache/spark/pull/46375

### What changes were proposed in this pull request?

Providing a table schema LOG_SCHEMA, so that users can load structured logs

with the following code:

```

import org.apache.spark.util.LogUtils.LOG_SCHEMA

val logDf = spark.read.schema(LOG_SCHEMA).json("path/to/logs")

```

### Why are the changes needed?

Provide a convenient way to query Spark logs using Spark SQL.

### Does this PR introduce _any_ user-facing change?

No

### How was this patch tested?

New UT

### Was this patch authored or co-authored using generative AI tooling?

No

--

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-47578][CORE] Migrate logWarning with variables to structured logging framework [spark]

dtenedor commented on code in PR #46309:

URL: https://github.com/apache/spark/pull/46309#discussion_r1589791573

##

core/src/test/scala/org/apache/spark/deploy/RPackageUtilsSuite.scala:

##

@@ -127,7 +127,7 @@ class RPackageUtilsSuite

RPackageUtils.checkAndBuildRPackage(jar.getAbsolutePath, new

BufferPrintStream,

verbose = true)

val output = lineBuffer.mkString("\n")

- assert(output.contains(RPackageUtils.RJarDoc))

+ assert(output.contains("Building R package"))

Review Comment:

Created https://issues.apache.org/jira/browse/SPARK-48122 to track it

separately.

--

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48121][K8S] Promote `KubernetesDriverConf` to `DeveloperApi` [spark]

dongjoon-hyun commented on code in PR #46373:

URL: https://github.com/apache/spark/pull/46373#discussion_r1589790988

##

resource-managers/kubernetes/core/src/main/scala/org/apache/spark/deploy/k8s/KubernetesConf.scala:

##

@@ -78,7 +79,16 @@ private[spark] abstract class KubernetesConf(val sparkConf:

SparkConf) {

def getOption(key: String): Option[String] = sparkConf.getOption(key)

}

-private[spark] class KubernetesDriverConf(

+

+/**

+ * :: DeveloperApi ::

+ *

+ * Used for K8s operations internally and Spark K8s operator.

+ */

+@Unstable

+@DeveloperApi

+@Since("4.0.0")

Review Comment:

Ya, I hope we can make it stable from 4.0.0.

--

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48121][K8S] Promote `KubernetesDriverConf` to `DeveloperApi` [spark]

dongjoon-hyun commented on code in PR #46373:

URL: https://github.com/apache/spark/pull/46373#discussion_r1589790586

##

resource-managers/kubernetes/core/src/main/scala/org/apache/spark/deploy/k8s/KubernetesConf.scala:

##

@@ -78,7 +79,16 @@ private[spark] abstract class KubernetesConf(val sparkConf:

SparkConf) {

def getOption(key: String): Option[String] = sparkConf.getOption(key)

}

-private[spark] class KubernetesDriverConf(

+

+/**

+ * :: DeveloperApi ::

+ *

+ * Used for K8s operations internally and Spark K8s operator.

+ */

+@Unstable

+@DeveloperApi

+@Since("4.0.0")

Review Comment:

Is this correct?

--

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-47578][CORE] Migrate logWarning with variables to structured logging framework [spark]

dtenedor commented on code in PR #46309:

URL: https://github.com/apache/spark/pull/46309#discussion_r1589790627

##

core/src/test/scala/org/apache/spark/deploy/RPackageUtilsSuite.scala:

##

@@ -127,7 +127,7 @@ class RPackageUtilsSuite

RPackageUtils.checkAndBuildRPackage(jar.getAbsolutePath, new

BufferPrintStream,

verbose = true)

val output = lineBuffer.mkString("\n")

- assert(output.contains(RPackageUtils.RJarDoc))

+ assert(output.contains("Building R package"))

Review Comment:

You are right, this change is not too great.

I reverted the changes to `RPackageUtils.scala` and this file for now.

I will figure out how to get the R packages installed on my local machine

and fix this file in a next step.

--

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48105][SS] Fix the race condition between state store unloading and snapshotting [spark]

HeartSaVioR commented on PR #46351: URL: https://github.com/apache/spark/pull/46351#issuecomment-2093861697 > @HeartSaVioR - do we also need to port to older Spark versions ? 3.5 maybe ? Ideally all non-EOL version lines, 3.5/3.4. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48121][K8S] Promote `KubernetesDriverConf` to `DeveloperApi` [spark]

dongjoon-hyun commented on code in PR #46373:

URL: https://github.com/apache/spark/pull/46373#discussion_r1589790522

##

resource-managers/kubernetes/core/src/main/scala/org/apache/spark/deploy/k8s/KubernetesConf.scala:

##

@@ -78,7 +79,16 @@ private[spark] abstract class KubernetesConf(val sparkConf:

SparkConf) {

def getOption(key: String): Option[String] = sparkConf.getOption(key)

}

-private[spark] class KubernetesDriverConf(

+

Review Comment:

Please remove this redundant empty line addition.

##

resource-managers/kubernetes/core/src/main/scala/org/apache/spark/deploy/k8s/KubernetesConf.scala:

##

@@ -78,7 +79,16 @@ private[spark] abstract class KubernetesConf(val sparkConf:

SparkConf) {

def getOption(key: String): Option[String] = sparkConf.getOption(key)

}

-private[spark] class KubernetesDriverConf(

+

+/**

+ * :: DeveloperApi ::

+ *

+ * Used for K8s operations internally and Spark K8s operator.

+ */

+@Unstable

+@DeveloperApi

+@Since("4.0.0")

Review Comment:

Is this correct?

--

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48120] Enable autolink to SPARK jira issue [spark-kubernetes-operator]

dongjoon-hyun closed pull request #11: [SPARK-48120] Enable autolink to SPARK jira issue URL: https://github.com/apache/spark-kubernetes-operator/pull/11 -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48120] Enable autolink to SPARK jira issue [spark-kubernetes-operator]

dongjoon-hyun commented on PR #11: URL: https://github.com/apache/spark-kubernetes-operator/pull/11#issuecomment-2093859360 Thank you for review and approval, @jiangzho ! -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48019][SQL][FOLLOWUP] Use primitive arrays over object arrays when nulls exist [spark]

gene-db commented on PR #46372: URL: https://github.com/apache/spark/pull/46372#issuecomment-2093857652 > Please revise the PR title and description. @dongjoon-hyun Updated the title and description. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] Promote `KubernetesDriverConf` to `DeveloperApi` [spark]

dongjoon-hyun commented on PR #46373: URL: https://github.com/apache/spark/pull/46373#issuecomment-2093854600 One more thing. If you have some time, please review the following PR on `Apache Spark K8s Operator` repository. - https://github.com/apache/spark-kubernetes-operator/pull/11 -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

Re: [PR] [SPARK-48019][SQL][FOLLOWUP] Set nulls correctly for primitive arrays [spark]