[GitHub] codecov-io edited a comment on issue #4279: [AIRFLOW-3444] Explicitly set transfer operator description.

codecov-io edited a comment on issue #4279: [AIRFLOW-3444] Explicitly set transfer operator description.
URL: https://github.com/apache/incubator-airflow/pull/4279#issuecomment-444370208

# [Codecov](https://codecov.io/gh/apache/incubator-airflow/pull/4279?src=pr=h1) Report
> Merging [#4279](https://codecov.io/gh/apache/incubator-airflow/pull/4279?src=pr=desc) into [master](https://codecov.io/gh/apache/incubator-airflow/commit/9c04e8f339a6d84b2fff983e6584af2b81249652?src=pr=desc) will **not change** coverage.
> The diff coverage is `n/a`.

```diff
@@           Coverage Diff           @@
##           master    #4279   +/-   ##
=======================================
  Coverage   78.08%   78.08%
=======================================
  Files         201      201
  Lines       16458    16458
=======================================
  Hits        12851    12851
  Misses       3607     3607
```

--

[Continue to review full report at Codecov](https://codecov.io/gh/apache/incubator-airflow/pull/4279?src=pr=continue).
> **Legend** - [Click here to learn more](https://docs.codecov.io/docs/codecov-delta)
> `Δ = absolute (impact)`, `ø = not affected`, `? = missing data`
> Powered by [Codecov](https://codecov.io/gh/apache/incubator-airflow/pull/4279?src=pr=footer). Last update [9c04e8f...3844805](https://codecov.io/gh/apache/incubator-airflow/pull/4279?src=pr=lastupdated). Read the [comment docs](https://docs.codecov.io/docs/pull-request-comments).

This is an automated message from the Apache Git Service.
To respond to the message, please log on GitHub and use the
URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:
us...@infra.apache.org

With regards,
Apache Git Services

[jira] [Commented] (AIRFLOW-3444) Expand templated fields in gcs transfer service operator

[

https://issues.apache.org/jira/browse/AIRFLOW-3444?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel=16709616#comment-16709616

]

ASF GitHub Bot commented on AIRFLOW-3444:

-

jmcarp opened a new pull request #4279: [AIRFLOW-3444] Explicitly set transfer

operator description.

URL: https://github.com/apache/incubator-airflow/pull/4279

Instead of passing transfer operator descriptions through generic

kwargs, accept description as a named parameter; no other parameters are

accepted by the API, so generic kwargs aren't useful. Also, add

description and object conditions to templated fields.

Make sure you have checked _all_ steps below.

### Jira

- [x] My PR addresses the following [Airflow

Jira](https://issues.apache.org/jira/browse/AIRFLOW/) issues and references

them in the PR title. For example, "\[AIRFLOW-XXX\] My Airflow PR"

- https://issues.apache.org/jira/browse/AIRFLOW-3444

- In case you are fixing a typo in the documentation you can prepend your

commit with \[AIRFLOW-XXX\], code changes always need a Jira issue.

### Description

- [x] Here are some details about my PR, including screenshots of any UI

changes:

The `S3ToGoogleCloudStorageTransferOperator` should support an explicit

`description` parameter and allow that parameter to be templated. This will

make it easier to set job descriptions, and we'll be able to drop the

`job_kwargs` attribute, which doesn't have any other use.
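The change described above can be pictured with a small stand-alone sketch. It does not import Airflow: `TransferOperatorSketch`, `render`, and the exact set of `template_fields` are hypothetical stand-ins chosen for illustration, and the `{{ name }}` substitution is a simplified imitation of Jinja templating, not the real rendering path. The bucket names and the `includePrefixes` value are likewise made up.

```python
import re

# Matches simple "{{ name }}" placeholders; a deliberately tiny
# imitation of Jinja, used only so this sketch runs without Airflow.
_PLACEHOLDER = re.compile(r"\{\{\s*(\w+)\s*\}\}")


def render(value, context):
    """Recursively fill {{ name }} placeholders from `context`."""
    if isinstance(value, str):
        return _PLACEHOLDER.sub(lambda m: str(context[m.group(1)]), value)
    if isinstance(value, dict):
        return {k: render(v, context) for k, v in value.items()}
    if isinstance(value, (list, tuple)):
        return type(value)(render(v, context) for v in value)
    return value


class TransferOperatorSketch:
    """Hypothetical stand-in for the transfer operator (not the real class)."""

    # Attributes named here are rendered before the task runs.
    # The PR text says description and object conditions are added.
    template_fields = ("s3_bucket", "gcs_bucket", "description", "object_conditions")

    def __init__(self, s3_bucket, gcs_bucket, description=None, object_conditions=None):
        # `description` is an explicit named parameter rather than
        # something tunnelled through a generic kwargs dict.
        self.s3_bucket = s3_bucket
        self.gcs_bucket = gcs_bucket
        self.description = description
        self.object_conditions = object_conditions or {}

    def render_template_fields(self, context):
        for name in self.template_fields:
            setattr(self, name, render(getattr(self, name), context))


op = TransferOperatorSketch(
    s3_bucket="source-bucket",
    gcs_bucket="dest-bucket",
    description="Daily transfer for {{ ds }}",
    object_conditions={"includePrefixes": ["exports/{{ ds }}/"]},
)
op.render_template_fields({"ds": "2018-12-05"})
print(op.description)
```

With a named, templated `description`, a DAG author can put the execution date into the job description directly, which is the convenience the issue asks for.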

### Tests

- [x] My PR adds the following unit tests __OR__ does not need testing for

this extremely good reason:

Updates existing tests

### Commits

- [x] My commits all reference Jira issues in their subject lines, and I

have squashed multiple commits if they address the same issue. In addition, my

commits follow the guidelines from "[How to write a good git commit

message](http://chris.beams.io/posts/git-commit/)":

1. Subject is separated from body by a blank line

1. Subject is limited to 50 characters (not including Jira issue reference)

1. Subject does not end with a period

1. Subject uses the imperative mood ("add", not "adding")

1. Body wraps at 72 characters

1. Body explains "what" and "why", not "how"

### Documentation

- [x] In case of new functionality, my PR adds documentation that describes

how to use it.

- When adding new operators/hooks/sensors, the autoclass documentation

generation needs to be added.

### Code Quality

- [x] Passes `flake8`

> Expand templated fields in gcs transfer service operator

>

>

> Key: AIRFLOW-3444

> URL: https://issues.apache.org/jira/browse/AIRFLOW-3444

> Project: Apache Airflow

> Issue Type: Improvement

>Reporter: Josh Carp

>Assignee: Josh Carp

>Priority: Trivial

>

> The `S3ToGoogleCloudStorageTransferOperator` should support an explicit

> `description` parameter and allow that parameter to be templated. This will

> make it easier to set job descriptions, and we'll be able to drop the

> `job_kwargs` attribute, which doesn't have any other use.

--

This message was sent by Atlassian JIRA

(v7.6.3#76005)

[jira] [Created] (AIRFLOW-3444) Expand templated fields in gcs transfer service operator

Josh Carp created AIRFLOW-3444:
--

Summary: Expand templated fields in gcs transfer service operator
Key: AIRFLOW-3444
URL: https://issues.apache.org/jira/browse/AIRFLOW-3444
Project: Apache Airflow
Issue Type: Improvement
Reporter: Josh Carp
Assignee: Josh Carp

The `S3ToGoogleCloudStorageTransferOperator` should support an explicit `description` parameter and allow that parameter to be templated. This will make it easier to set job descriptions, and we'll be able to drop the `job_kwargs` attribute, which doesn't have any other use.

--
This message was sent by Atlassian JIRA
(v7.6.3#76005)

[GitHub] codecov-io edited a comment on issue #4265: [AIRFLOW-3406] Implement an Azure CosmosDB operator

codecov-io edited a comment on issue #4265: [AIRFLOW-3406] Implement an Azure CosmosDB operator
URL: https://github.com/apache/incubator-airflow/pull/4265#issuecomment-444328155

# [Codecov](https://codecov.io/gh/apache/incubator-airflow/pull/4265?src=pr=h1) Report
> Merging [#4265](https://codecov.io/gh/apache/incubator-airflow/pull/4265?src=pr=desc) into [master](https://codecov.io/gh/apache/incubator-airflow/commit/9c04e8f339a6d84b2fff983e6584af2b81249652?src=pr=desc) will **decrease** coverage by `<.01%`.
> The diff coverage is `75%`.

```diff
@@            Coverage Diff             @@
##           master    #4265      +/-   ##
==========================================
- Coverage   78.08%   78.08%   -0.01%
==========================================
  Files         201      201
  Lines       16458    16462       +4
==========================================
+ Hits        12851    12854       +3
- Misses       3607     3608       +1
```

| [Impacted Files](https://codecov.io/gh/apache/incubator-airflow/pull/4265?src=pr=tree) | Coverage Δ | |
|---|---|---|
| [airflow/utils/db.py](https://codecov.io/gh/apache/incubator-airflow/pull/4265/diff?src=pr=tree#diff-YWlyZmxvdy91dGlscy9kYi5weQ==) | `33.33% <0%> (-0.27%)` | :arrow_down: |
| [airflow/models.py](https://codecov.io/gh/apache/incubator-airflow/pull/4265/diff?src=pr=tree#diff-YWlyZmxvdy9tb2RlbHMucHk=) | `92.34% <100%> (ø)` | :arrow_up: |

--

[Continue to review full report at Codecov](https://codecov.io/gh/apache/incubator-airflow/pull/4265?src=pr=continue).
> **Legend** - [Click here to learn more](https://docs.codecov.io/docs/codecov-delta)
> `Δ = absolute (impact)`, `ø = not affected`, `? = missing data`
> Powered by [Codecov](https://codecov.io/gh/apache/incubator-airflow/pull/4265?src=pr=footer). Last update [9c04e8f...42f8942](https://codecov.io/gh/apache/incubator-airflow/pull/4265?src=pr=lastupdated). Read the [comment docs](https://docs.codecov.io/docs/pull-request-comments).
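The report's headline can look contradictory, since coverage is shown as 78.08% on both sides yet is said to decrease. A quick check of the arithmetic resolves this, taking the report's totals as 16458 lines and 12851 hits on master versus 16462 lines and 12854 hits with the PR. The formula for diff coverage below (added hits divided by added lines) is an assumption that happens to match the reported `75%`, not a statement of how Codecov computes it internally.

```python
# Totals read from the Codecov report for PR #4265 (master vs. PR head).
BASE_LINES, BASE_HITS = 16458, 12851
HEAD_LINES, HEAD_HITS = 16462, 12854


def coverage_pct(hits, lines):
    """Return line coverage as a percentage."""
    return 100.0 * hits / lines


base = coverage_pct(BASE_HITS, BASE_LINES)
head = coverage_pct(HEAD_HITS, HEAD_LINES)
delta = head - base  # in percentage points

# Assumption: coverage of the diff itself is (added hits) / (added lines).
diff_coverage = coverage_pct(HEAD_HITS - BASE_HITS, HEAD_LINES - BASE_LINES)

print(f"base={base:.2f}% head={head:.2f}% delta={delta:+.4f} points")
print(f"diff coverage={diff_coverage:.0f}%")
```

Both totals round to 78.08%, and the true change is a small negative fraction of a percentage point, which is why the summary says `<.01%`. Three of the four added lines being hit is consistent with the `75%` diff coverage.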

[jira] [Commented] (AIRFLOW-3406) Implement an Azure CosmosDB operator

[

https://issues.apache.org/jira/browse/AIRFLOW-3406?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel=16709543#comment-16709543

]

ASF GitHub Bot commented on AIRFLOW-3406:

-

tmiller-msft opened a new pull request #4265: [AIRFLOW-3406] Implement an Azure

CosmosDB operator

URL: https://github.com/apache/incubator-airflow/pull/4265

Add an operator and hook to manipulate and use Azure

CosmosDB documents, including creation, deletion, and

updating documents and collections.

Includes sensor to detect documents being added to a

collection.

Make sure you have checked _all_ steps below.

### Jira

- [X] My PR addresses the following [Airflow

Jira](https://issues.apache.org/jira/browse/AIRFLOW/) issues and references

them in the PR title. For example, "\[AIRFLOW-XXX\] My Airflow PR"

- https://issues.apache.org/jira/browse/AIRFLOW-3406

- In case you are fixing a typo in the documentation you can prepend your

commit with \[AIRFLOW-XXX\], code changes always need a Jira issue.

### Description

- [X] Here are some details about my PR, including screenshots of any UI

changes:

An Azure CosmosDB hook, operator, and sensor for manipulating documents and

collections

### Tests

- [X] My PR adds the following unit tests __OR__ does not need testing for

this extremely good reason:

### Commits

- [X] My commits all reference Jira issues in their subject lines, and I

have squashed multiple commits if they address the same issue. In addition, my

commits follow the guidelines from "[How to write a good git commit

message](http://chris.beams.io/posts/git-commit/)":

1. Subject is separated from body by a blank line

1. Subject is limited to 50 characters (not including Jira issue reference)

1. Subject does not end with a period

1. Subject uses the imperative mood ("add", not "adding")

1. Body wraps at 72 characters

1. Body explains "what" and "why", not "how"

### Documentation

- [X] In case of new functionality, my PR adds documentation that describes

how to use it.

- When adding new operators/hooks/sensors, the autoclass documentation

generation needs to be added.

### Code Quality

- [X] Passes `flake8`

> Implement an Azure CosmosDB operator

> -

>

> Key: AIRFLOW-3406

> URL: https://issues.apache.org/jira/browse/AIRFLOW-3406

> Project: Apache Airflow

> Issue Type: New Feature

>Reporter: Tom Miller

>Assignee: Tom Miller

>Priority: Major

>

--

This message was sent by Atlassian JIRA

(v7.6.3#76005)

[jira] [Commented] (AIRFLOW-3406) Implement an Azure CosmosDB operator

[ https://issues.apache.org/jira/browse/AIRFLOW-3406?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel=16709526#comment-16709526 ]

ASF GitHub Bot commented on AIRFLOW-3406:
-

tmiller-msft closed pull request #4265: [AIRFLOW-3406] Implement an Azure CosmosDB operator
URL: https://github.com/apache/incubator-airflow/pull/4265

This is a PR merged from a forked repository. As GitHub hides the original diff on merge, it is displayed below for the sake of provenance:

As this is a foreign pull request (from a fork), the diff is supplied below (as it won't show otherwise due to GitHub magic):

> Implement an Azure CosmosDB operator
> -
>
> Key: AIRFLOW-3406
> URL: https://issues.apache.org/jira/browse/AIRFLOW-3406
> Project: Apache Airflow
> Issue Type: New Feature
> Reporter: Tom Miller
> Assignee: Tom Miller
> Priority: Major

--
This message was sent by Atlassian JIRA
(v7.6.3#76005)

[GitHub] XD-DENG commented on issue #4265: [AIRFLOW-3406] Implement an Azure CosmosDB operator

XD-DENG commented on issue #4265: [AIRFLOW-3406] Implement an Azure CosmosDB operator URL: https://github.com/apache/incubator-airflow/pull/4265#issuecomment-444327014 Thanks @tmiller-msft. Kindly take your time :-)

[GitHub] tmiller-msft commented on issue #4265: [AIRFLOW-3406] Implement an Azure CosmosDB operator

tmiller-msft commented on issue #4265: [AIRFLOW-3406] Implement an Azure CosmosDB operator URL: https://github.com/apache/incubator-airflow/pull/4265#issuecomment-444326544 Ah yes, I see what you mean. I will update to do that instead. And yes, I am fixing the rebase as well; give me an hour or three.

[GitHub] tmiller-msft commented on a change in pull request #4265: [AIRFLOW-3406] Implement an Azure CosmosDB operator

tmiller-msft commented on a change in pull request #4265: [AIRFLOW-3406] Implement an Azure CosmosDB operator

URL: https://github.com/apache/incubator-airflow/pull/4265#discussion_r238889159

##

File path: airflow/contrib/sensors/azure_cosmos_sensor.py

##

@@ -0,0 +1,68 @@

+# -*- coding: utf-8 -*-

+#

+# Licensed to the Apache Software Foundation (ASF) under one

+# or more contributor license agreements. See the NOTICE file

+# distributed with this work for additional information

+# regarding copyright ownership. The ASF licenses this file

+# to you under the Apache License, Version 2.0 (the

+# "License"); you may not use this file except in compliance

+# with the License. You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing,

+# software distributed under the License is distributed on an

+# "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY

+# KIND, either express or implied. See the License for the

+# specific language governing permissions and limitations

+# under the License.

+from airflow.contrib.hooks.azure_cosmos_hook import AzureCosmosDBHook

+from airflow.sensors.base_sensor_operator import BaseSensorOperator

+from airflow.utils.decorators import apply_defaults

+

+

+class AzureCosmosDocumentSensor(BaseSensorOperator):

+"""

+Checks for the existence of a document which

+matches the given query in CosmosDB. Example:

+

+>>> azure_cosmos_sensor = AzureCosmosDocumentSensor(database_name="somedatabase_name",

+...collection_name="somecollection_name",

+...document_id="unique-doc-id",

+...azure_cosmos_conn_id="azure_cosmos_default",

+...task_id="azure_cosmos_sensor")

+"""

+template_fields = ('database_name', 'collection_name', 'document_id')

+

+@apply_defaults

+def __init__(

+self,

+database_name,

+collection_name,

+document_id,

+azure_cosmos_conn_id="azure_cosmos_default",

+*args,

+**kwargs):

+"""

+Create a new AzureCosmosDocumentSensor

+

+:param database_name: Target CosmosDB database_name.

+:type database_name: str

+:param collection_name: Target CosmosDB collection_name.

+:type collection_name: str

+:param document_id: The ID of the target document.

+:type query: str

+:param azure_cosmos_conn_id: The connection ID to use

+ when connecting to CosmosDB.

Review comment:

Thanks, updated to mirror this parameter on the other classes, which also

addresses this since it no longer wraps at all.


[GitHub] tmiller-msft commented on a change in pull request #4265: [AIRFLOW-3406] Implement an Azure CosmosDB operator

tmiller-msft commented on a change in pull request #4265: [AIRFLOW-3406] Implement an Azure CosmosDB operator

URL: https://github.com/apache/incubator-airflow/pull/4265#discussion_r238889041

##

File path: airflow/contrib/operators/azure_cosmos_insertdocument_operator.py

##

@@ -0,0 +1,67 @@

+# -*- coding: utf-8 -*-

+#

+# Licensed to the Apache Software Foundation (ASF) under one

+# or more contributor license agreements. See the NOTICE file

+# distributed with this work for additional information

+# regarding copyright ownership. The ASF licenses this file

+# to you under the Apache License, Version 2.0 (the

+# "License"); you may not use this file except in compliance

+# with the License. You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing,

+# software distributed under the License is distributed on an

+# "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY

+# KIND, either express or implied. See the License for the

+# specific language governing permissions and limitations

+# under the License.

+

+from airflow.contrib.hooks.azure_cosmos_hook import AzureCosmosDBHook

+from airflow.models import BaseOperator

+from airflow.utils.decorators import apply_defaults

+

+

+class AzureCosmosInsertDocumentOperator(BaseOperator):

+"""

+Inserts a new document into the specified Cosmos database and collection

+It will create both the database and collection if they do not already exist

+

+:param database_name: The name of the database. (templated)

+:type database_name: str

+:param collection_name: The name of the collection. (templated)

+:type collection_name: str

+:param document: The document to insert

+:type document: json

+:param azure_cosmos_conn_id: reference to a CosmosDB connection.

+:type azure_cosmos_conn_id: str

+"""

+template_fields = ('database_name', 'collection_name')

+ui_color = '#e4f0e8'

+

+@apply_defaults

+def __init__(self,

+ database_name,

+ collection_name,

+ document,

+ azure_cosmos_conn_id='azure_cosmos_default',

+ *args,

+ **kwargs):

+super(AzureCosmosInsertDocumentOperator, self).__init__(*args, **kwargs)

+self.database_name = database_name

+self.collection_name = collection_name

+self.document = document

+self.azure_cosmos_conn_id = azure_cosmos_conn_id

+

+def execute(self, context):

+# Create the hook

+hook = AzureCosmosDBHook(azure_cosmos_conn_id=self.azure_cosmos_conn_id)

+

+# Create the DB if it doesn't already exist

+hook.create_database(self.database_name)

+

+# Create the collection as well

+hook.create_collection(self.collection_name, self.database_name)

Review comment:

Thanks! Updated to query for existing database and only creating when not

existing rather than using exception flow.
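The change described in this review comment (look up the database first, create it only when it is missing) can be sketched roughly as follows. This is a minimal illustration, not the code from the PR: `FakeCosmosClient`, `list_database_names`, and `ensure_database` are hypothetical names standing in for whatever the hook actually calls.

```python
class FakeCosmosClient:
    """In-memory stand-in for a CosmosDB client (hypothetical, illustration only)."""

    def __init__(self):
        self._databases = set()
        self.create_calls = 0

    def list_database_names(self):
        # Return the names of all databases known to this fake client.
        return sorted(self._databases)

    def create_database(self, name):
        # Creating a database twice is an error, as it would be on a real service.
        self.create_calls += 1
        if name in self._databases:
            raise ValueError("database already exists: %s" % name)
        self._databases.add(name)


def ensure_database(client, name):
    """Create the database only when a lookup shows it is missing.

    Returns True if the database was created, False if it already existed.
    """
    if name in client.list_database_names():
        return False
    client.create_database(name)
    return True


client = FakeCosmosClient()
first = ensure_database(client, "somedatabase_name")   # creates the database
second = ensure_database(client, "somedatabase_name")  # finds it, creates nothing
```

With this shape the "already exists" case is an ordinary branch rather than a caught exception, so a genuine failure from `create_database` is not mistaken for the benign case.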


[GitHub] tmiller-msft commented on a change in pull request #4265: [AIRFLOW-3406] Implement an Azure CosmosDB operator

tmiller-msft commented on a change in pull request #4265: [AIRFLOW-3406] Implement an Azure CosmosDB operator

URL: https://github.com/apache/incubator-airflow/pull/4265#discussion_r23991

##

File path: airflow/contrib/operators/azure_cosmos_insertdocument_operator.py

##

@@ -0,0 +1,67 @@

+# -*- coding: utf-8 -*-

+#

+# Licensed to the Apache Software Foundation (ASF) under one

+# or more contributor license agreements. See the NOTICE file

+# distributed with this work for additional information

+# regarding copyright ownership. The ASF licenses this file

+# to you under the Apache License, Version 2.0 (the

+# "License"); you may not use this file except in compliance

+# with the License. You may obtain a copy of the License at

+#

+# http://www.apache.org/licenses/LICENSE-2.0

+#

+# Unless required by applicable law or agreed to in writing,

+# software distributed under the License is distributed on an

+# "AS IS" BASIS, WITHOUT WARRANTIES OR CONDITIONS OF ANY

+# KIND, either express or implied. See the License for the

+# specific language governing permissions and limitations

+# under the License.

+

+from airflow.contrib.hooks.azure_cosmos_hook import AzureCosmosDBHook

+from airflow.models import BaseOperator

+from airflow.utils.decorators import apply_defaults

+

+

+class AzureCosmosInsertDocumentOperator(BaseOperator):

+"""

+Inserts a new document into the specified Cosmos database and collection

+It will create both the database and collection if they do not already exist

+

+:param database_name: The name of the database. (templated)

+:type database_name: str

+:param collection_name: The name of the collection. (templated)

+:type collection_name: str

+:param document: The document to insert

+:type document: json

+:param azure_cosmos_conn_id: reference to a CosmosDB connection.

+:type azure_cosmos_conn_id: str

+"""

+template_fields = ('database_name', 'collection_name')

+ui_color = '#e4f0e8'

+

+@apply_defaults

+def __init__(self,

+ database_name,

+ collection_name,

+ document,

+ azure_cosmos_conn_id='azure_cosmos_default',

+ *args,

+ **kwargs):

+super(AzureCosmosInsertDocumentOperator, self).__init__(*args, **kwargs)

+self.database_name = database_name

+self.collection_name = collection_name

+self.document = document

+self.azure_cosmos_conn_id = azure_cosmos_conn_id

+

+def execute(self, context):

+# Create the hook

+hook = AzureCosmosDBHook(azure_cosmos_conn_id=self.azure_cosmos_conn_id)

+

+# Create the DB if it doesn't already exist

+hook.create_database(self.database_name)

Review comment:

Thanks! Updated to query for existing database and only creating when not

existing rather than using exception flow.


[jira] [Closed] (AIRFLOW-3443) KubernetesPodOperator image_pull_secrets must be a valid parameter

[ https://issues.apache.org/jira/browse/AIRFLOW-3443?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ] Maxime Rauer closed AIRFLOW-3443. - Resolution: Duplicate > KubernetesPodOperator image_pull_secrets must be a valid parameter > -- > > Key: AIRFLOW-3443 > URL: https://issues.apache.org/jira/browse/AIRFLOW-3443 > Project: Apache Airflow > Issue Type: Bug > Components: kubernetes >Affects Versions: 1.10.1 >Reporter: Maxime Rauer >Assignee: Maxime Rauer >Priority: Blocker > Original Estimate: 24h > Remaining Estimate: 24h > > We've been successfully using the KubernetesPodOperator in our company with a > local Docker registry, but when switching to a private repository such as > Amazon ECR, Airflow wasn't able to pull the secrets from the cluster. > I have made a change in the *make_pod()* function on > *kubernetes_pod_operator.py* to support a new *image_pull_secrets* field. > This works great on our end, and the community could benefit from it for the > version 10.0.1 > -- This message was sent by Atlassian JIRA (v7.6.3#76005)

[GitHub] feluelle commented on issue #4121: [AIRFLOW-2568] Azure Container Instances operator

feluelle commented on issue #4121: [AIRFLOW-2568] Azure Container Instances operator URL: https://github.com/apache/incubator-airflow/pull/4121#issuecomment-444283991 Could you please add tests for the hook, too? :)

[jira] [Created] (AIRFLOW-3443) KubernetesPodOperator image_pull_secrets must be a valid parameter

Maxime Rauer created AIRFLOW-3443: - Summary: KubernetesPodOperator image_pull_secrets must be a valid parameter Key: AIRFLOW-3443 URL: https://issues.apache.org/jira/browse/AIRFLOW-3443 Project: Apache Airflow Issue Type: Bug Components: kubernetes Affects Versions: 1.10.1 Reporter: Maxime Rauer Assignee: Maxime Rauer We've been successfully using the KubernetesPodOperator in our company with a local Docker registry, but when switching to a private repository such as Amazon ECR, Airflow wasn't able to pull the secrets from the cluster. I have made a change in the *make_pod()* function on *kubernetes_pod_operator.py* to support a new *image_pull_secrets* field. This works great on our end, and the community could benefit from it for the version 10.0.1 -- This message was sent by Atlassian JIRA (v7.6.3#76005)
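For context, a Kubernetes pod spec references registry credentials through an `imagePullSecrets` list of `{"name": ...}` entries. The sketch below shows one way such a field could be filled in from an operator argument. It is an illustration only: the helper `with_image_pull_secrets` and the comma-separated input format are assumptions, not the change made to *make_pod()* in the ticket.

```python
def with_image_pull_secrets(pod_spec, image_pull_secrets):
    """Return a copy of pod_spec with imagePullSecrets set.

    image_pull_secrets is assumed (for this sketch) to be a comma-separated
    string of Kubernetes secret names, e.g. "ecr-creds,other-creds".
    """
    names = [n.strip() for n in (image_pull_secrets or "").split(",") if n.strip()]
    updated = dict(pod_spec)  # shallow copy; the caller's dict is left untouched
    if names:
        updated["imagePullSecrets"] = [{"name": n} for n in names]
    return updated


base_spec = {"containers": [{"name": "base", "image": "example/image:latest"}]}
spec_with_secret = with_image_pull_secrets(base_spec, "ecr-creds")
spec_without_secret = with_image_pull_secrets(base_spec, None)
```

The image name and secret name above are placeholders; the secret itself must already exist in the pod's namespace.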

[jira] [Commented] (AIRFLOW-3430) Document how to become a commiter

[

https://issues.apache.org/jira/browse/AIRFLOW-3430?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel=16709294#comment-16709294

]

Fokko Driesprong commented on AIRFLOW-3430:

---

Updated the existing documentation:

https://cwiki.apache.org/confluence/display/AIRFLOW/Committers

> Document how to become a commiter

> -

>

> Key: AIRFLOW-3430

> URL: https://issues.apache.org/jira/browse/AIRFLOW-3430

> Project: Apache Airflow

> Issue Type: Improvement

>Reporter: Ash Berlin-Taylor

>Assignee: Fokko Driesprong

>Priority: Major

>

> Add to our documents what the process is to become a committer (CO50):

> {quote}The way in which contributors can be granted more rights such as

> commit access or decision power is clearly documented and is the same for all

> contributors.

> {quote}


[GitHub] ashb commented on issue #3546: AIRFLOW-2664: Support filtering dag runs by id prefix in API.

ashb commented on issue #3546: AIRFLOW-2664: Support filtering dag runs by id prefix in API. URL: https://github.com/apache/incubator-airflow/pull/3546#issuecomment-444268426 Shouldn't our test setup already create those? If not, why aren't all the other RBAC tests failing?

[GitHub] codecov-io commented on issue #4278: [AIRFLOW-2524] Add SageMaker doc to AWS integration section

codecov-io commented on issue #4278: [AIRFLOW-2524] Add SageMaker doc to AWS integration section

URL: https://github.com/apache/incubator-airflow/pull/4278#issuecomment-444268064

# [Codecov](https://codecov.io/gh/apache/incubator-airflow/pull/4278?src=pr=h1) Report

> Merging [#4278](https://codecov.io/gh/apache/incubator-airflow/pull/4278?src=pr=desc) into [master](https://codecov.io/gh/apache/incubator-airflow/commit/9c04e8f339a6d84b2fff983e6584af2b81249652?src=pr=desc) will **not change** coverage.
> The diff coverage is `n/a`.

```diff
@@           Coverage Diff           @@
##           master    #4278   +/-   ##
=======================================
  Coverage   78.08%   78.08%
=======================================
  Files         201      201
  Lines       16458    16458
=======================================
  Hits        12851    12851
  Misses       3607     3607
```

--

[Continue to review full report at Codecov](https://codecov.io/gh/apache/incubator-airflow/pull/4278?src=pr=continue).

> **Legend** - [Click here to learn more](https://docs.codecov.io/docs/codecov-delta)
> `Δ = absolute (impact)`, `ø = not affected`, `? = missing data`
> Powered by [Codecov](https://codecov.io/gh/apache/incubator-airflow/pull/4278?src=pr=footer). Last update [9c04e8f...7f292c6](https://codecov.io/gh/apache/incubator-airflow/pull/4278?src=pr=lastupdated). Read the [comment docs](https://docs.codecov.io/docs/pull-request-comments).

[GitHub] shayan90 edited a comment on issue #4068: [AIRFLOW-2310]: Add AWS Glue Job Compatibility to Airflow

shayan90 edited a comment on issue #4068: [AIRFLOW-2310]: Add AWS Glue Job Compatibility to Airflow URL: https://github.com/apache/incubator-airflow/pull/4068#issuecomment-444252494 This is great, when are you guys planning to merge this? @oelesinsc24 it might be useful to fully leverage Glue API, two useful arguments are `AllocatedCapacity` for number of DPUs per Job Run and `SecurityConfiguration` would allow encryption at rest using KMS. https://github.com/apache/incubator-airflow/blob/71cb37eaedae50e2abe9a13591e31a56f2e3659e/airflow/contrib/hooks/aws_glue_job_hook.py#L67-L70
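The suggestion above amounts to forwarding two optional arguments when a job run is started. A rough sketch of how such a request could be assembled is shown here; `build_job_run_kwargs` is a hypothetical helper, not part of the hook in the PR, and the parameter names are taken from the comment and should be checked against the current Glue API reference before use.

```python
def build_job_run_kwargs(job_name, script_args=None,
                         allocated_capacity=None, security_configuration=None):
    """Assemble keyword arguments for a Glue job-run request.

    Optional values are only included when set, so the service defaults
    apply otherwise.
    """
    kwargs = {"JobName": job_name}
    if script_args:
        kwargs["Arguments"] = dict(script_args)
    if allocated_capacity is not None:
        # Number of DPUs to allocate to this job run.
        kwargs["AllocatedCapacity"] = int(allocated_capacity)
    if security_configuration:
        # Name of a security configuration, e.g. one enabling KMS encryption at rest.
        kwargs["SecurityConfiguration"] = security_configuration
    return kwargs


minimal = build_job_run_kwargs("example-job")
tuned = build_job_run_kwargs(
    "example-job",
    script_args={"--input": "s3://example-bucket/in"},
    allocated_capacity=10,
    security_configuration="example-kms-config",
)
```

The job name, bucket, and configuration name are placeholders. The resulting dictionary would then be passed to the client call that starts the run.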

[GitHub] Fokko commented on issue #3546: AIRFLOW-2664: Support filtering dag runs by id prefix in API.

Fokko commented on issue #3546: AIRFLOW-2664: Support filtering dag runs by id prefix in API. URL: https://github.com/apache/incubator-airflow/pull/3546#issuecomment-444262224 It looks like the `ab_` tables aren't properly initialized. ab is short for Flask App Builder: https://github.com/dpgaspar/Flask-AppBuilder These tables need to be initialized as well if you want to use the RBAC UI.

[GitHub] Fokko commented on issue #4207: [AIRFLOW-3367] Run celery integration test with redis broker.

Fokko commented on issue #4207: [AIRFLOW-3367] Run celery integration test with redis broker. URL: https://github.com/apache/incubator-airflow/pull/4207#issuecomment-444261366 LGTM, sorry for the delayed reply. I'm currently a bit short on time :(

[GitHub] Fokko closed pull request #2362: Make FileSensor detect file or folder

Fokko closed pull request #2362: Make FileSensor detect file or folder

URL: https://github.com/apache/incubator-airflow/pull/2362

This is a PR merged from a forked repository.

As GitHub hides the original diff on merge, it is displayed below for

the sake of provenance:

As this is a foreign pull request (from a fork), the diff is supplied

below (as it won't show otherwise due to GitHub magic):

diff --git a/airflow/contrib/operators/fs_operator.py b/airflow/contrib/operators/fs_operator.py

index 259648709d..b654682268 100644

--- a/airflow/contrib/operators/fs_operator.py

+++ b/airflow/contrib/operators/fs_operator.py

@@ -13,7 +13,7 @@

# limitations under the License.

#

-from os import walk

+import os

import logging

from airflow.operators.sensors import BaseSensorOperator

@@ -51,8 +51,5 @@ def poke(self, context):

full_path = "/".join([basepath, self.filepath])

logging.info(

'Poking for file {full_path} '.format(**locals()))

-try:

-files = [f for f in walk(full_path)]

-except:

-return False

-return True

+

+return os.path.exists(full_path)
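A note on why this diff changes behaviour: `os.walk()` ignores errors from the underlying directory listing unless an `onerror` callback is supplied, so walking a missing path yields nothing and raises nothing. The removed `try`/`except` therefore reported success even when the path was absent. The sketch below reproduces both variants outside Airflow; the function names are made up for the comparison, and `except Exception` stands in for the original bare `except`.

```python
import os
import tempfile


def poke_with_walk(full_path):
    """Old behaviour from the diff: treat a non-raising os.walk() as 'found'."""
    try:
        files = [f for f in os.walk(full_path)]
    except Exception:
        return False
    return True


def poke_with_exists(full_path):
    """New behaviour from the diff: check the path directly."""
    return os.path.exists(full_path)


with tempfile.TemporaryDirectory() as basepath:
    missing = os.path.join(basepath, "no_such_file")
    old_result = poke_with_walk(missing)          # True, although nothing is there
    new_result = poke_with_exists(missing)        # False
    existing_dir_result = poke_with_exists(basepath)  # True for a folder as well
```

`os.path.exists` returns True for both files and directories, which matches the PR title.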


[GitHub] Fokko commented on issue #2362: Make FileSensor detect file or folder

Fokko commented on issue #2362: Make FileSensor detect file or folder URL: https://github.com/apache/incubator-airflow/pull/2362#issuecomment-444255503 Please open a new PR @colinbreame if you still want to improve the behaviour of the FileSensor.

[jira] [Commented] (AIRFLOW-2524) Airflow integration with AWS Sagemaker

[

https://issues.apache.org/jira/browse/AIRFLOW-2524?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel=16709238#comment-16709238

]

ASF GitHub Bot commented on AIRFLOW-2524:

-

yangaws opened a new pull request #4278: [AIRFLOW-2524] Add SageMaker doc to AWS integration section

URL: https://github.com/apache/incubator-airflow/pull/4278

Make sure you have checked _all_ steps below.

### Jira

- [x] My PR addresses the following [Airflow

Jira](https://issues.apache.org/jira/browse/AIRFLOW/) issues and references

them in the PR title. For example, "\[AIRFLOW-XXX\] My Airflow PR"

- https://issues.apache.org/jira/browse/AIRFLOW-XXX

- In case you are fixing a typo in the documentation you can prepend your

commit with \[AIRFLOW-XXX\], code changes always need a Jira issue.

### Description

- [x] Here are some details about my PR, including screenshots of any UI

changes:

Add SageMaker doc to AWS integration section

### Tests

- [ ] My PR adds the following unit tests __OR__ does not need testing for

this extremely good reason:

### Commits

- [x] My commits all reference Jira issues in their subject lines, and I

have squashed multiple commits if they address the same issue. In addition, my

commits follow the guidelines from "[How to write a good git commit

message](http://chris.beams.io/posts/git-commit/)":

1. Subject is separated from body by a blank line

1. Subject is limited to 50 characters (not including Jira issue reference)

1. Subject does not end with a period

1. Subject uses the imperative mood ("add", not "adding")

1. Body wraps at 72 characters

1. Body explains "what" and "why", not "how"

### Documentation

- [ ] In case of new functionality, my PR adds documentation that describes

how to use it.

- When adding new operators/hooks/sensors, the autoclass documentation

generation needs to be added.

### Code Quality

- [x] Passes `flake8`


> Airflow integration with AWS Sagemaker

> --

>

> Key: AIRFLOW-2524

> URL: https://issues.apache.org/jira/browse/AIRFLOW-2524

> Project: Apache Airflow

> Issue Type: Improvement

> Components: aws, contrib

>Reporter: Rajeev Srinivasan

>Assignee: Yang Yu

>Priority: Major

> Labels: AWS

> Fix For: 1.10.1

>

> Time Spent: 10m

> Remaining Estimate: 0h

>

> Would it be possible to orchestrate an end to end AWS Sagemaker job using

> Airflow.


[GitHub] yangaws opened a new pull request #4278: [AIRFLOW-2524] Add SageMaker doc to AWS integration section

yangaws opened a new pull request #4278: [AIRFLOW-2524] Add SageMaker doc to AWS integration section

URL: https://github.com/apache/incubator-airflow/pull/4278

Make sure you have checked _all_ steps below.

### Jira

- [x] My PR addresses the following [Airflow

Jira](https://issues.apache.org/jira/browse/AIRFLOW/) issues and references

them in the PR title. For example, "\[AIRFLOW-XXX\] My Airflow PR"

- https://issues.apache.org/jira/browse/AIRFLOW-XXX

- In case you are fixing a typo in the documentation you can prepend your

commit with \[AIRFLOW-XXX\], code changes always need a Jira issue.

### Description

- [x] Here are some details about my PR, including screenshots of any UI

changes:

Add SageMaker doc to AWS integration section

### Tests

- [ ] My PR adds the following unit tests __OR__ does not need testing for

this extremely good reason:

### Commits

- [x] My commits all reference Jira issues in their subject lines, and I

have squashed multiple commits if they address the same issue. In addition, my

commits follow the guidelines from "[How to write a good git commit

message](http://chris.beams.io/posts/git-commit/)":

1. Subject is separated from body by a blank line

1. Subject is limited to 50 characters (not including Jira issue reference)

1. Subject does not end with a period

1. Subject uses the imperative mood ("add", not "adding")

1. Body wraps at 72 characters

1. Body explains "what" and "why", not "how"

### Documentation

- [ ] In case of new functionality, my PR adds documentation that describes

how to use it.

- When adding new operators/hooks/sensors, the autoclass documentation

generation needs to be added.

### Code Quality

- [x] Passes `flake8`


[GitHub] shayan90 edited a comment on issue #4068: [AIRFLOW-2310]: Add AWS Glue Job Compatibility to Airflow

shayan90 edited a comment on issue #4068: [AIRFLOW-2310]: Add AWS Glue Job Compatibility to Airflow URL: https://github.com/apache/incubator-airflow/pull/4068#issuecomment-444252494 This is great, when are you guys planning to merge this? also @oelesinsc24 it might be useful to fully leverage Glue API, two useful arguments are `AllocatedCapacity` for number of DPUs per Job Run and `SecurityConfiguration` would allow encryption at rest using KMS. https://github.com/apache/incubator-airflow/blob/71cb37eaedae50e2abe9a13591e31a56f2e3659e/airflow/contrib/hooks/aws_glue_job_hook.py#L67-L70

[GitHub] shayan90 edited a comment on issue #4068: [AIRFLOW-2310]: Add AWS Glue Job Compatibility to Airflow

shayan90 edited a comment on issue #4068: [AIRFLOW-2310]: Add AWS Glue Job Compatibility to Airflow URL: https://github.com/apache/incubator-airflow/pull/4068#issuecomment-444252494 This is great, when are you guys planning to merge this? also @oelesinsc24 it might be useful to fully leverage Glue API, two useful arguments are `AllocatedCapacity` allocate capacity for number of DPUs per Job Run and `SecurityConfiguration` would allow encryption at rest using KMS. https://github.com/apache/incubator-airflow/blob/71cb37eaedae50e2abe9a13591e31a56f2e3659e/airflow/contrib/hooks/aws_glue_job_hook.py#L67-L70

[GitHub] shayan90 commented on issue #4068: [AIRFLOW-2310]: Add AWS Glue Job Compatibility to Airflow

shayan90 commented on issue #4068: [AIRFLOW-2310]: Add AWS Glue Job Compatibility to Airflow URL: https://github.com/apache/incubator-airflow/pull/4068#issuecomment-444252494 This is great, when are you guys planning to merge this? also @oelesinsc24 it might be useful to fully leverage Glue API, two useful arguments are `AllocatedCapacity` allocate capacity for number of DPUs per Job Run and `SecurityConfiguration` would allow encryption at rest using KMS.

[GitHub] codecov-io commented on issue #4277: [AIRFLOW-3217] Airflow log view and code view should wrap

codecov-io commented on issue #4277: [AIRFLOW-3217] Airflow log view and code view should wrap

URL: https://github.com/apache/incubator-airflow/pull/4277#issuecomment-444199709

# [Codecov](https://codecov.io/gh/apache/incubator-airflow/pull/4277?src=pr=h1) Report

> Merging [#4277](https://codecov.io/gh/apache/incubator-airflow/pull/4277?src=pr=desc) into [master](https://codecov.io/gh/apache/incubator-airflow/commit/9c04e8f339a6d84b2fff983e6584af2b81249652?src=pr=desc) will **not change** coverage.
> The diff coverage is `n/a`.

```diff
@@           Coverage Diff           @@
##           master    #4277   +/-   ##
=======================================
  Coverage   78.08%   78.08%
=======================================
  Files         201      201
  Lines       16458    16458
=======================================
  Hits        12851    12851
  Misses       3607     3607
```

--

[Continue to review full report at Codecov](https://codecov.io/gh/apache/incubator-airflow/pull/4277?src=pr=continue).

> **Legend** - [Click here to learn more](https://docs.codecov.io/docs/codecov-delta)
> `Δ = absolute (impact)`, `ø = not affected`, `? = missing data`
> Powered by [Codecov](https://codecov.io/gh/apache/incubator-airflow/pull/4277?src=pr=footer). Last update [9c04e8f...d4fe8a7](https://codecov.io/gh/apache/incubator-airflow/pull/4277?src=pr=lastupdated). Read the [comment docs](https://docs.codecov.io/docs/pull-request-comments).

[GitHub] nmcalabroso commented on issue #2906: [AIRFLOW-1956] Add parameter whether the navbar clock time is UTC

nmcalabroso commented on issue #2906: [AIRFLOW-1956] Add parameter whether the navbar clock time is UTC URL: https://github.com/apache/incubator-airflow/pull/2906#issuecomment-444184660 Now with the introduction of `default_timezone` config, I think this PR is now relevant.

[jira] [Commented] (AIRFLOW-3217) Airflow log view and code view should wrap

[

https://issues.apache.org/jira/browse/AIRFLOW-3217?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel=16709011#comment-16709011

]

ASF GitHub Bot commented on AIRFLOW-3217:

-

Eronarn opened a new pull request #4277: [AIRFLOW-3217] Airflow log view and code view should wrap

URL: https://github.com/apache/incubator-airflow/pull/4277

### Jira

- [x] My PR addresses the following [Airflow Jira](https://issues.apache.org/jira/browse/AIRFLOW/) issues:
  - https://issues.apache.org/jira/browse/AIRFLOW-3217

### Description

- [x] Here are some details about my PR, including screenshots of any UI changes:

DAG code view before/after:

Log view before/after:

### Tests

- [x] My PR adds the following unit tests __OR__ does not need testing for this extremely good reason: it's css

### Commits

- [x] My commits all reference Jira issues in their subject lines, and I have squashed multiple commits if they address the same issue. In addition, my commits follow the guidelines from "[How to write a good git commit message](http://chris.beams.io/posts/git-commit/)":
  1. Subject is separated from body by a blank line
  1. Subject is limited to 50 characters (not including Jira issue reference)
  1. Subject does not end with a period
  1. Subject uses the imperative mood ("add", not "adding")
  1. Body wraps at 72 characters
  1. Body explains "what" and "why", not "how"

This is an automated message from the Apache Git Service.
To respond to the message, please log on GitHub and use the
URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:
us...@infra.apache.org

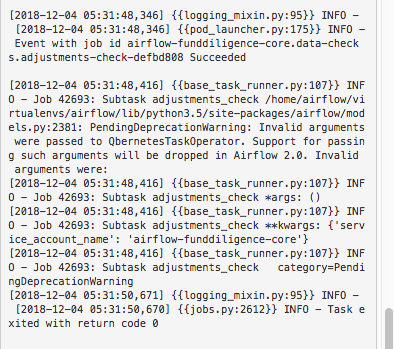

> Airflow log view and code view should wrap
> --
>
> Key: AIRFLOW-3217
> URL: https://issues.apache.org/jira/browse/AIRFLOW-3217
> Project: Apache Airflow
> Issue Type: Bug
> Components: logging
> Affects Versions: 1.10.0
> Reporter: James Meickle
> Priority: Minor
>
> Being able to look at logs and code in Airflow is great, but the Kubernetes operator in particular makes very long loglines. For example, a pod log might have something like this:
>
> `[2018-10-16 10:32:38,195] {{logging_mixin.py:95}} INFO - [2018-10-16 10:32:38,195] {{pod_launcher.py:95}} INFO - b'[2018-10-16 10:32:38.190104] INFO:`
>
> ...and that's before any log text! Since there is no wrapping or navigation on this screen, it's very challenging to make use of these logs.

--
This message was sent by Atlassian JIRA (v7.6.3#76005)

[GitHub] ashb commented on a change in pull request #4276: [AIRFLOW-1552] Airflow Filter_by_owner not working with password_auth

ashb commented on a change in pull request #4276: [AIRFLOW-1552] Airflow Filter_by_owner not working with password_auth
URL: https://github.com/apache/incubator-airflow/pull/4276#discussion_r238722905

##
File path: airflow/contrib/auth/backends/password_auth.py
##
@@ -106,8 +102,8 @@ def load_user(userid, session=None):
     if not userid or userid == 'None':
         return None
-    user = session.query(models.User).filter(models.User.id == int(userid)).first()
-    return PasswordUser(user)
+    user = session.query(PasswordUser).filter(PasswordUser.id == int(userid)).first()

Review comment:
No timing on 2.0.0 (Q1 or Q2 next year) but the "RBAC" based UI is available behind a config flag on 1.10.0 so you can start experimenting with it already:
https://github.com/apache/incubator-airflow/blob/master/UPDATING.md#new-webserver-ui-with-role-based-access-control

This is an automated message from the Apache Git Service.
To respond to the message, please log on GitHub and use the
URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:
us...@infra.apache.org

With regards,
Apache Git Services

[GitHub] thomasbrockmeier commented on a change in pull request #4276: [AIRFLOW-1552] Airflow Filter_by_owner not working with password_auth

thomasbrockmeier commented on a change in pull request #4276: [AIRFLOW-1552] Airflow Filter_by_owner not working with password_auth
URL: https://github.com/apache/incubator-airflow/pull/4276#discussion_r238721561

##
File path: airflow/contrib/auth/backends/password_auth.py
##
@@ -106,8 +102,8 @@ def load_user(userid, session=None):
     if not userid or userid == 'None':
         return None
-    user = session.query(models.User).filter(models.User.id == int(userid)).first()
-    return PasswordUser(user)
+    user = session.query(PasswordUser).filter(PasswordUser.id == int(userid)).first()

Review comment:
If it keeps the API from breaking, I can see if I can fix it in [views.py:2070](https://github.com/apache/incubator-airflow/blob/master/airflow/www/views.py#L2070) with something like

```
do_filter = FILTER_BY_OWNER and (not current_user.user.is_superuser())
```

Not the nicest way to handle this, I guess, but perhaps worth considering if it enables filtering DAGs by owner for the time being?

Do you have any indication when 2.0.0 is scheduled for release? Depending on the time frame, I may be able to invest a couple of hours to look into this further as this feature would make my life a lot easier :)

This is an automated message from the Apache Git Service.
To respond to the message, please log on GitHub and use the
URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:
us...@infra.apache.org

With regards,
Apache Git Services
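To make the effect of the proposed `do_filter` condition concrete, here is a minimal, self-contained sketch. The `Dag` and `User` classes and the `visible_dags` function are hypothetical stand-ins written for illustration; they are not Airflow's models or view code, and the real `views.py` logic may differ.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Dag:
    # Hypothetical stand-in for a DAG record; only the fields needed here.
    dag_id: str
    owner: str


@dataclass
class User:
    # Hypothetical stand-in for the logged-in user.
    username: str
    superuser: bool = False

    def is_superuser(self) -> bool:
        return self.superuser


def visible_dags(dags: List[Dag], user: User, filter_by_owner: bool) -> List[Dag]:
    # Same shape as the condition proposed in the comment above: filter only
    # when the setting is enabled and the current user is not a superuser.
    do_filter = filter_by_owner and not user.is_superuser()
    if not do_filter:
        return list(dags)
    return [dag for dag in dags if dag.owner == user.username]


dags = [Dag("etl_daily", "alice"), Dag("reports", "bob")]
print([d.dag_id for d in visible_dags(dags, User("alice"), True)])
print([d.dag_id for d in visible_dags(dags, User("root", superuser=True), True)])
```

With the setting enabled, a regular user sees only their own DAGs while a superuser still sees everything; with the setting disabled, nobody is filtered.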

[GitHub] ashb commented on a change in pull request #4276: [AIRFLOW-1552] Airflow Filter_by_owner not working with password_auth

ashb commented on a change in pull request #4276: [AIRFLOW-1552] Airflow Filter_by_owner not working with password_auth
URL: https://github.com/apache/incubator-airflow/pull/4276#discussion_r238710034

##
File path: airflow/contrib/auth/backends/password_auth.py
##
@@ -106,8 +102,8 @@ def load_user(userid, session=None):
     if not userid or userid == 'None':
         return None
-    user = session.query(models.User).filter(models.User.id == int(userid)).first()
-    return PasswordUser(user)
+    user = session.query(PasswordUser).filter(PasswordUser.id == int(userid)).first()

Review comment:
I suspect this will fail tests as https://github.com/apache/incubator-airflow/blob/b7a7fd66d693dbfbc471a6d08bc274441ee4841c/airflow/www/utils.py#L299 won't work anymore.

And this (admittedly silly) API is part of the external API that people have written custom auth backends against, so we can't change it.

If we were keeping these I'd say it's worth "fixing" this (as you have here), but since we're removing the custom auth backends in favour of Flask-AppBuilder in 2.0.0 it's probably worth just keeping the silliness.

This is an automated message from the Apache Git Service.
To respond to the message, please log on GitHub and use the
URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:
us...@infra.apache.org

With regards,
Apache Git Services

[GitHub] ashb commented on issue #4276: [AIRFLOW-1552] Airflow Filter_by_owner not working with password_auth

ashb commented on issue #4276: [AIRFLOW-1552] Airflow Filter_by_owner not working with password_auth
URL: https://github.com/apache/incubator-airflow/pull/4276#issuecomment-444139550

@thomasbrockmeier FYI: This auth mechanism is probably going away in Airflow 2.0.0

@kaxil one for 1.10.2 perhaps?

This is an automated message from the Apache Git Service.
To respond to the message, please log on GitHub and use the
URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:
us...@infra.apache.org

With regards,
Apache Git Services

[GitHub] EamonKeane commented on a change in pull request #4160: [AIRFLOW-3311] Allow pod operator to keep failed pods

EamonKeane commented on a change in pull request #4160: [AIRFLOW-3311] Allow pod operator to keep failed pods
URL: https://github.com/apache/incubator-airflow/pull/4160#discussion_r238708915

##
File path: tests/contrib/minikube/test_kubernetes_pod_operator.py
##
@@ -111,6 +111,21 @@ def test_delete_operator_pod():
         )
         k.execute(None)
+    def test_keep_failed_pod(self):
+        k = KubernetesPodOperator(
+            namespace='default',
+            image="ubuntu:16.04",
+            cmds=["bash", "-cx"],
+            arguments=["exit 1"],
+            labels={"foo": "bar"},
+            name="test",
+            task_id="task",
+            is_delete_operator_pod=True,
+            keep_failed_pod=True
+        )
+        with self.assertRaises(AirflowException):
+            k.execute(None)

Review comment:
The pod will enter a failed state instantly. We just need to confirm it is still there after the `Kubernetes Pod Operator` has gotten past the line below (which currently deletes all pods regardless of exit code):
https://github.com/apache/incubator-airflow/blob/a8203aa3263656c32ebc2e8090322b08be4e1ded/airflow/contrib/operators/kubernetes_pod_operator.py#L136

In practice waiting a second or two should suffice, or there may be another way to structure it if you have any ideas?
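One possible way to structure the "wait a second or two and confirm the pod is still there" check is a small polling helper rather than a single fixed sleep. This is only a sketch: `stays_true` is a hypothetical helper, not part of Airflow or the Kubernetes client, and the `check` callable it takes (for example, one that asks the cluster whether the pod named `test` still exists) is assumed to be supplied by the test.

```python
import time
from typing import Callable


def stays_true(check: Callable[[], bool],
               duration: float = 2.0,
               interval: float = 0.2) -> bool:
    """Return True if check() holds on every poll for `duration` seconds.

    Returns False as soon as check() fails, so a pod that gets deleted
    early is reported without waiting for the full duration.
    """
    deadline = time.monotonic() + duration
    while True:
        if not check():
            return False
        if time.monotonic() >= deadline:
            return True
        time.sleep(interval)


# Example with trivial checks standing in for a real "pod exists" lookup.
print(stays_true(lambda: True, duration=0.1, interval=0.02))
print(stays_true(lambda: False, duration=0.1, interval=0.02))
```

In the test above, the call would go after the `assertRaises` block, for instance `self.assertTrue(stays_true(pod_exists))`, where `pod_exists` is a hypothetical callable that queries the cluster for the pod.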

This is an automated message from the Apache Git Service.
To respond to the message, please log on GitHub and use the
URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:
us...@infra.apache.org

With regards,