[GitHub] [incubator-hudi] vinothchandar merged pull request #783: Updating site with latest content from docs folder

vinothchandar merged pull request #783: Updating site with latest content from docs folder URL: https://github.com/apache/incubator-hudi/pull/783 This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org With regards, Apache Git Services

[incubator-hudi] branch asf-site updated: Updating site with latest content from docs folder (#783)

This is an automated email from the ASF dual-hosted git repository. vinoth pushed a commit to branch asf-site in repository https://gitbox.apache.org/repos/asf/incubator-hudi.git The following commit(s) were added to refs/heads/asf-site by this push: new a9539c1 Updating site with latest content from docs folder (#783) a9539c1 is described below commit a9539c19fe1926e03d17b4ab660a3e882ee45933 Author: vinoth chandar AuthorDate: Thu Jul 11 23:04:53 2019 -0700 Updating site with latest content from docs folder (#783) - yotpo usage - hoodie-utilities-bundle jar replacement in deltastreamer commands --- content/Gemfile| 10 --- content/Gemfile.lock | 156 - content/contributing.html | 4 +- content/docker_demo.html | 14 +++- content/feed.xml | 4 +- content/powered_by.html| 14 +++- content/querying_data.html | 7 ++ content/writing_data.html | 6 +- docs/contributing.md | 2 +- 9 files changed, 39 insertions(+), 178 deletions(-) diff --git a/content/Gemfile b/content/Gemfile deleted file mode 100644 index b301eda..000 --- a/content/Gemfile +++ /dev/null @@ -1,10 +0,0 @@ -source "https://rubygems.org"; - - -gem "jekyll", "3.3.1" - - -group :jekyll_plugins do - gem "jekyll-feed", "~> 0.6" - gem 'github-pages', '~> 106' -end diff --git a/content/Gemfile.lock b/content/Gemfile.lock deleted file mode 100644 index b72b9b1..000 --- a/content/Gemfile.lock +++ /dev/null @@ -1,156 +0,0 @@ -GEM - remote: https://rubygems.org/ - specs: -activesupport (4.2.7) - i18n (~> 0.7) - json (~> 1.7, >= 1.7.7) - minitest (~> 5.1) - thread_safe (~> 0.3, >= 0.3.4) - tzinfo (~> 1.1) -addressable (2.4.0) -coffee-script (2.4.1) - coffee-script-source - execjs -coffee-script-source (1.12.2) -colorator (1.1.0) -concurrent-ruby (1.1.4) -ethon (0.12.0) - ffi (>= 1.3.0) -execjs (2.7.0) -faraday (0.15.4) - multipart-post (>= 1.2, < 3) -ffi (1.10.0) -forwardable-extended (2.6.0) -gemoji (2.1.0) -github-pages (106) - activesupport (= 4.2.7) - github-pages-health-check (= 1.2.0) - jekyll (= 3.3.1) - jekyll-avatar 
(= 0.4.2) - jekyll-coffeescript (= 1.0.1) - jekyll-feed (= 0.8.0) - jekyll-gist (= 1.4.0) - jekyll-github-metadata (= 2.2.0) - jekyll-mentions (= 1.2.0) - jekyll-paginate (= 1.1.0) - jekyll-redirect-from (= 0.11.0) - jekyll-relative-links (= 0.2.1) - jekyll-sass-converter (= 1.3.0) - jekyll-seo-tag (= 2.1.0) - jekyll-sitemap (= 0.12.0) - jekyll-swiss (= 0.4.0) - jemoji (= 0.7.0) - kramdown (= 1.11.1) - liquid (= 3.0.6) - listen (= 3.0.6) - mercenary (~> 0.3) - minima (= 2.0.0) - rouge (= 1.11.1) - terminal-table (~> 1.4) -github-pages-health-check (1.2.0) - addressable (~> 2.3) - net-dns (~> 0.8) - octokit (~> 4.0) - public_suffix (~> 1.4) - typhoeus (~> 0.7) -html-pipeline (2.10.0) - activesupport (>= 2) - nokogiri (>= 1.4) -i18n (0.9.5) - concurrent-ruby (~> 1.0) -jekyll (3.3.1) - addressable (~> 2.4) - colorator (~> 1.0) - jekyll-sass-converter (~> 1.0) - jekyll-watch (~> 1.1) - kramdown (~> 1.3) - liquid (~> 3.0) - mercenary (~> 0.3.3) - pathutil (~> 0.9) - rouge (~> 1.7) - safe_yaml (~> 1.0) -jekyll-avatar (0.4.2) - jekyll (~> 3.0) -jekyll-coffeescript (1.0.1) - coffee-script (~> 2.2) -jekyll-feed (0.8.0) - jekyll (~> 3.3) -jekyll-gist (1.4.0) - octokit (~> 4.2) -jekyll-github-metadata (2.2.0) - jekyll (~> 3.1) - octokit (~> 4.0, != 4.4.0) -jekyll-mentions (1.2.0) - activesupport (~> 4.0) - html-pipeline (~> 2.3) - jekyll (~> 3.0) -jekyll-paginate (1.1.0) -jekyll-redirect-from (0.11.0) - jekyll (>= 2.0) -jekyll-relative-links (0.2.1) - jekyll (~> 3.3) -jekyll-sass-converter (1.3.0) - sass (~> 3.2) -jekyll-seo-tag (2.1.0) - jekyll (~> 3.3) -jekyll-sitemap (0.12.0) - jekyll (~> 3.3) -jekyll-swiss (0.4.0) -jekyll-watch (1.5.1) - listen (~> 3.0) -jemoji (0.7.0) - activesupport (~> 4.0) - gemoji (~> 2.0) - html-pipeline (~> 2.2) - jekyll (>= 3.0) -json (2.1.0) -kramdown (1.11.1) -liquid (3.0.6) -listen (3.0.6) - rb-fsevent (>= 0.9.3) - rb-inotify (>= 0.9.7) -mercenary (0.3.6) -mini_portile2 (2.4.0) -minima (2.0.0) -minitest (5.11.3) -multipart-post (2.0.0) -net-dns 
(0.9.0) -nokogiri (1.10.1) - mini_portile2 (~> 2.4.0) -octokit (4.13.0) - sawyer (~> 0.8.0, >= 0.5.3) -pathutil (0.16.2) - forwardable-extended (~> 2.6) -public_suffix (1.5.3) -rb-fsevent (0.10.3) -rb-inotify (0.10.0) - ffi (~> 1.0) -rouge (1.11.1) -safe_yaml (1.0.

[GitHub] [incubator-hudi] vinothchandar opened a new pull request #783: Updating site with latest content from docs folder

vinothchandar opened a new pull request #783: Updating site with latest content from docs folder

URL: https://github.com/apache/incubator-hudi/pull/783

- yotpo usage
- hoodie-utilities-bundle jar replacement in deltastreamer commands

[GitHub] [incubator-hudi] vinothchandar commented on a change in pull request #780: Cleanup Maven POM/Classpath

vinothchandar commented on a change in pull request #780: Cleanup Maven

POM/Classpath

URL: https://github.com/apache/incubator-hudi/pull/780#discussion_r302763907

##

File path: hoodie-client/pom.xml

##

@@ -78,6 +79,71 @@

hoodie-timeline-service

${project.version}

+

+

+

+ log4j

+ log4j

+

+

+

+

+ org.apache.parquet

+ parquet-avro

+

+

+ org.apache.parquet

+ parquet-hadoop

+

+

+

+

+ org.apache.spark

+ spark-core_2.11

+

+

+ org.apache.spark

+ spark-sql_2.11

+

+

+

+

+ io.dropwizard.metrics

+ metrics-graphite

+

+

+ io.dropwizard.metrics

+ metrics-core

+

+

+

+ com.google.guava

+ guava

+

+

+

+ com.beust

+ jcommander

+ 1.48

+

+

+

+ org.htrace

Review comment:

removed already

[GitHub] [incubator-hudi] vinothchandar commented on a change in pull request #780: Cleanup Maven POM/Classpath

vinothchandar commented on a change in pull request #780: Cleanup Maven POM/Classpath

URL: https://github.com/apache/incubator-hudi/pull/780#discussion_r302762037

##

File path: hoodie-cli/pom.xml

##

@@ -29,8 +29,6 @@

1.2.0.RELEASE

org.springframework.shell.Bootstrap

-1.2.17

-4.10

Review comment:

already removed junit.

[GitHub] [incubator-hudi] vinothchandar commented on a change in pull request #780: Cleanup Maven POM/Classpath

vinothchandar commented on a change in pull request #780: Cleanup Maven

POM/Classpath

URL: https://github.com/apache/incubator-hudi/pull/780#discussion_r302763536

##

File path: hoodie-client/pom.xml

##

@@ -78,6 +79,71 @@

hoodie-timeline-service

${project.version}

+

+

+

+ log4j

+ log4j

+

+

+

+

+ org.apache.parquet

+ parquet-avro

+

+

+ org.apache.parquet

Review comment:

removed already

[GitHub] [incubator-hudi] vinothchandar commented on a change in pull request #780: Cleanup Maven POM/Classpath

vinothchandar commented on a change in pull request #780: Cleanup Maven

POM/Classpath

URL: https://github.com/apache/incubator-hudi/pull/780#discussion_r302762896

##

File path: hoodie-cli/pom.xml

##

@@ -159,67 +176,51 @@

spark-sql_2.11

+

- com.jakewharton.fliptables

- fliptables

- 1.0.2

+ commons-dbcp

+ commons-dbcp

- log4j

- log4j

- ${log4j.version}

+ org.springframework.shell

+ spring-shell

+ ${spring.shell.version}

- com.uber.hoodie

- hoodie-hive

- ${project.version}

+ de.vandermeer

+ asciitable

+ 0.2.5

- com.uber.hoodie

- hoodie-client

- ${project.version}

+ com.jakewharton.fliptables

+ fliptables

+ 1.0.2

+

+ joda-time

+ joda-time

Review comment:

removed version

[GitHub] [incubator-hudi] vinothchandar commented on a change in pull request #780: Cleanup Maven POM/Classpath

vinothchandar commented on a change in pull request #780: Cleanup Maven

POM/Classpath

URL: https://github.com/apache/incubator-hudi/pull/780#discussion_r302762792

##

File path: hoodie-cli/pom.xml

##

@@ -159,67 +176,51 @@

spark-sql_2.11

+

- com.jakewharton.fliptables

- fliptables

- 1.0.2

+ commons-dbcp

+ commons-dbcp

- log4j

- log4j

- ${log4j.version}

+ org.springframework.shell

+ spring-shell

+ ${spring.shell.version}

- com.uber.hoodie

- hoodie-hive

- ${project.version}

+ de.vandermeer

Review comment:

not needed. removed already

[GitHub] [incubator-hudi] yihua opened a new pull request #781: [HUDI-161] Remove --key-generator-class CLI arg in HoodieDeltaStreamer and use key generator class specified in datasource properties

yihua opened a new pull request #781: [HUDI-161] Remove --key-generator-class CLI arg in HoodieDeltaStreamer and use key generator class specified in datasource properties

URL: https://github.com/apache/incubator-hudi/pull/781

1. Removing the `--key-generator-class` CLI arg in HoodieDeltaStreamer.
2. Changing the method `createKeyGenerator()` in the `DataSourceUtils` class to get the class name of the key generator directly from the properties, instead of using the passed-in key generator class argument.
3. Adjusting the tests in `TestHoodieDeltaStreamer` to take the specified key generator class, and adding one test for an invalid key generator class name.
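
The lookup described in item 2 can be pictured with a minimal, self-contained sketch. It is not the code from the pull request: the `KeyGenerator` interface, the `SimpleKeyGenerator` class, and the helper's signature are hypothetical stand-ins, and the property key is an assumption that should be checked against `DataSourceWriteOptions`.

```java
import java.util.Properties;

public class KeyGeneratorFromProps {
    // Assumed property key, used here for illustration only.
    public static final String KEYGEN_CLASS_PROP = "hoodie.datasource.write.keygenerator.class";

    /** Hypothetical stand-in for the key generator base type. */
    public interface KeyGenerator {
        String getKey(String record);
    }

    /** Hypothetical implementation that exists only for this sketch. */
    public static class SimpleKeyGenerator implements KeyGenerator {
        public SimpleKeyGenerator(Properties props) {
            // A real generator would read its field names from props.
        }

        @Override
        public String getKey(String record) {
            return "key:" + record;
        }
    }

    /** Resolves the key generator class name from the properties and instantiates it reflectively. */
    public static KeyGenerator createKeyGenerator(Properties props) {
        String className = props.getProperty(KEYGEN_CLASS_PROP);
        if (className == null || className.isEmpty()) {
            throw new IllegalArgumentException("Missing property " + KEYGEN_CLASS_PROP);
        }
        try {
            return (KeyGenerator) Class.forName(className)
                    .getConstructor(Properties.class)
                    .newInstance(props);
        } catch (ReflectiveOperationException e) {
            // Covers an unknown class name as well as a missing or failing constructor.
            throw new IllegalArgumentException("Could not load key generator class " + className, e);
        }
    }

    public static void main(String[] args) {
        Properties props = new Properties();
        props.setProperty(KEYGEN_CLASS_PROP, SimpleKeyGenerator.class.getName());
        System.out.println(createKeyGenerator(props).getKey("r1"));
    }
}
```

Reading the class name in one place means an invalid name fails at construction time with a message that names the offending class, which is the case the new test in item 3 exercises.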

[GitHub] [incubator-hudi] thesuperzapper commented on issue #638: [HUDI-12] Upgrade to Spark 2.4, Avro 1.8.2, Parquet 1.10.0...

thesuperzapper commented on issue #638: [HUDI-12] Upgrade to Spark 2.4, Avro 1.8.2, Parquet 1.10.0...

URL: https://github.com/apache/incubator-hudi/pull/638#issuecomment-510649936

We are working on #780 first

[GitHub] [incubator-hudi] vinothchandar commented on issue #764: Hoodie 0.4.7: Error upserting bucketType UPDATE for partition #, No value present

vinothchandar commented on issue #764: Hoodie 0.4.7: Error upserting bucketType UPDATE for partition #, No value present

URL: https://github.com/apache/incubator-hudi/issues/764#issuecomment-510644864

@amaranathv again, the issue comes from this line, `.onParentPath(new Path(hoodieTable.getMetaClient().getBasePath(), partitionPath))`, where I suspect partitionPath is null. Can you please ensure your dataset contains non-null partition paths? (I am going to add an explicit exception to flag this case in the KeyGenerator subclasses or somewhere else.)
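
A rough sketch of the kind of explicit check mentioned above follows. The class and method names are hypothetical and are not part of Hudi; the sketch only shows the fail-fast idea of rejecting a null partition path before it reaches path construction.

```java
public class PartitionPathCheck {
    /**
     * Hypothetical helper, not Hudi API: rejects a null partition path up front
     * so that the error names the offending record key.
     */
    public static String requireNonNullPartitionPath(String recordKey, String partitionPath) {
        if (partitionPath == null) {
            throw new IllegalArgumentException(
                    "Partition path is null for record key '" + recordKey + "'");
        }
        return partitionPath;
    }

    public static void main(String[] args) {
        // A non-null path is returned unchanged.
        System.out.println(requireNonNullPartitionPath("id-1", "2019/07/11"));
        try {
            requireNonNullPartitionPath("id-2", null);
        } catch (IllegalArgumentException e) {
            System.out.println(e.getMessage());
        }
    }
}
```

This sketch lets an empty string through on purpose. The stack trace reported in this thread complains about an empty string rather than null, so whether an empty partition path should also be rejected depends on how non-partitioned datasets are meant to be handled.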

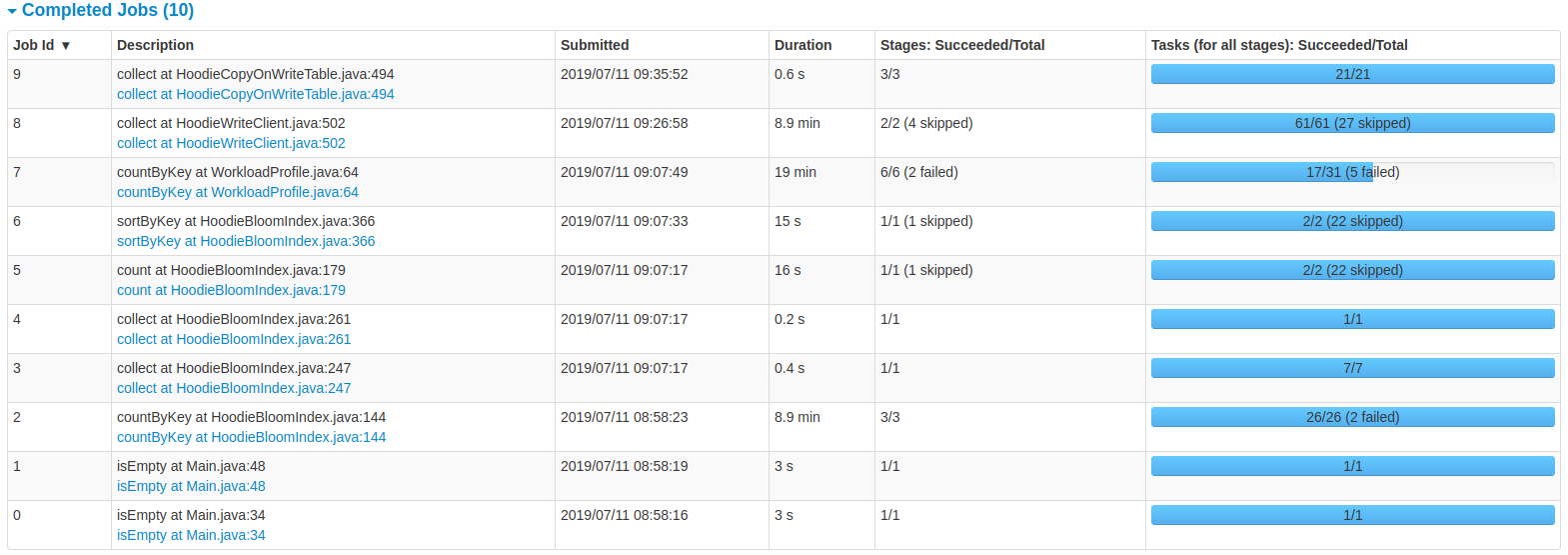

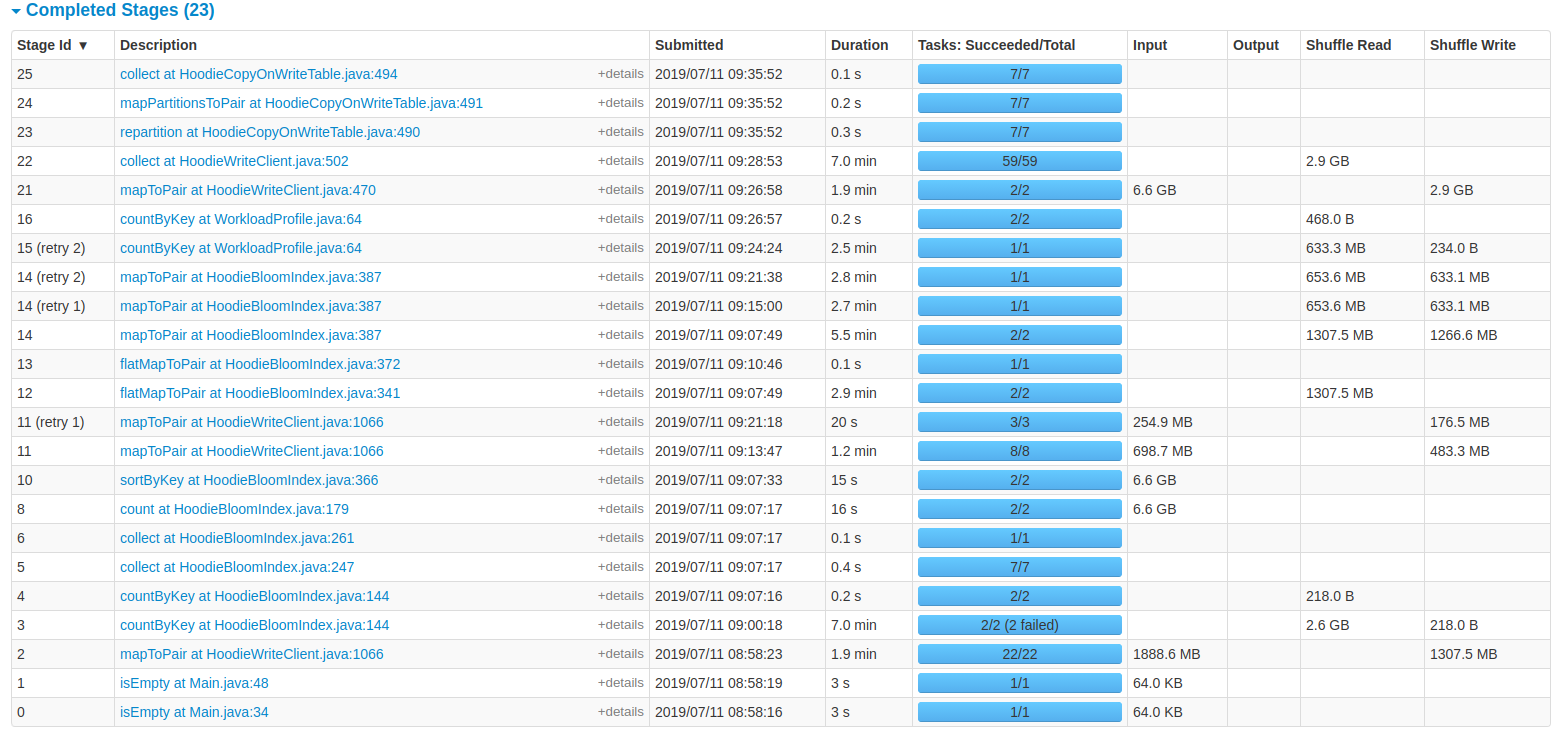

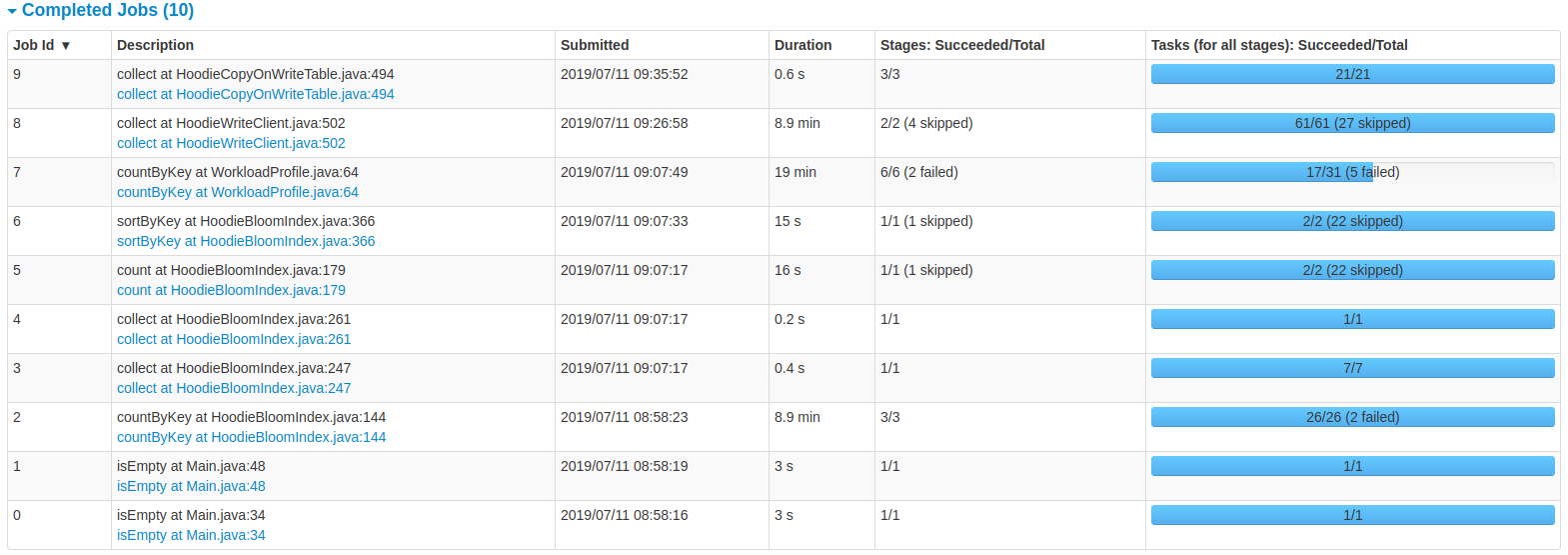

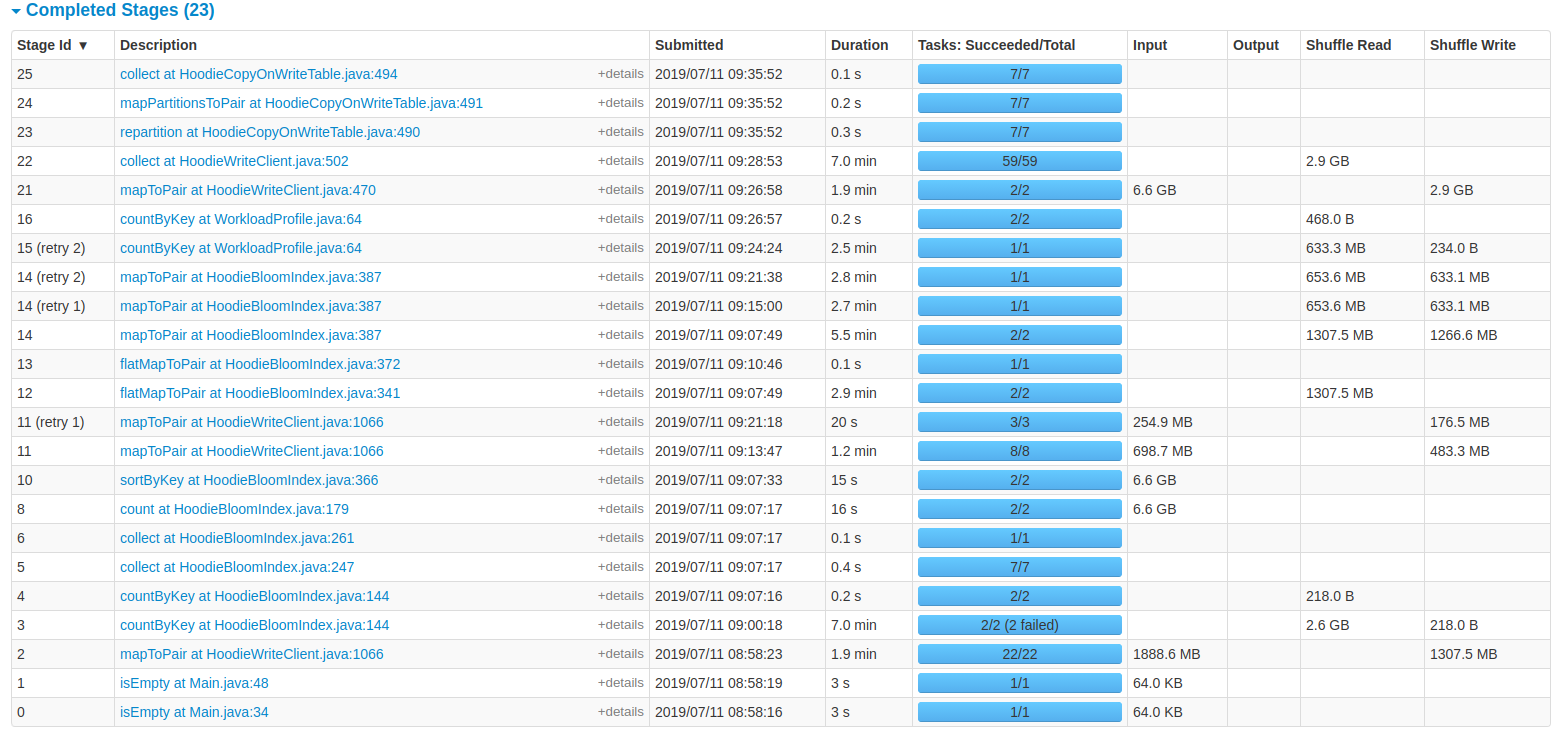

[GitHub] [incubator-hudi] vinothchandar commented on issue #714: Performance Comparison of HoodieDeltaStreamer and DataSourceAPI

vinothchandar commented on issue #714: Performance Comparison of HoodieDeltaStreamer and DataSourceAPI

URL: https://github.com/apache/incubator-hudi/issues/714#issuecomment-510636174

One thing I notice is that about 10 minutes are spent retrying the stages. This usually indicates that the job is running in a degraded state. Do you know what these failures are? Also, stage 3 simply parses the input and caches the hoodie records in memory. Not sure why this takes 7 minutes; it usually indicates input data parsing issues.

[GitHub] [incubator-hudi] amaranathv commented on issue #764: Hoodie 0.4.7: Error upserting bucketType UPDATE for partition #, No value present

amaranathv commented on issue #764: Hoodie 0.4.7: Error upserting bucketType UPDATE for partition #, No value present

URL: https://github.com/apache/incubator-hudi/issues/764#issuecomment-510609967

DataSourceWriteOptions.KEYGENERATOR_CLASS_OPT_KEY -> "com.uber.hoodie.NonpartitionedKeyGenerator"

[GitHub] [incubator-hudi] amaranathv commented on issue #764: Hoodie 0.4.7: Error upserting bucketType UPDATE for partition #, No value present

amaranathv commented on issue #764: Hoodie 0.4.7: Error upserting bucketType UPDATE for partition #, No value present

URL: https://github.com/apache/incubator-hudi/issues/764#issuecomment-510609856

yes

[GitHub] [incubator-hudi] vinothchandar commented on issue #764: Hoodie 0.4.7: Error upserting bucketType UPDATE for partition #, No value present

vinothchandar commented on issue #764: Hoodie 0.4.7: Error upserting bucketType UPDATE for partition #, No value present

URL: https://github.com/apache/incubator-hudi/issues/764#issuecomment-510606829

@amaranathv just to confirm, are you using `NonpartitionedKeyGenerator` as the key generator?

[GitHub] [incubator-hudi] amaranathv edited a comment on issue #764: Hoodie 0.4.7: Error upserting bucketType UPDATE for partition #, No value present

amaranathv edited a comment on issue #764: Hoodie 0.4.7: Error upserting

bucketType UPDATE for partition #, No value present

URL: https://github.com/apache/incubator-hudi/issues/764#issuecomment-510581260

I am getting the same error.

```
scala> .save("/datalake/888/888/888/hive/warehouse/test_hudi_spark_no_part_1_mor")
19/07/11 12:31:45 WARN TaskSetManager: Lost task 0.0 in stage 304.0 (TID 464, 8.uhc.com, executor 2): com.uber.hoodie.exception.HoodieUpsertException: Error upserting bucketType UPDATE for partition :0
    at com.uber.hoodie.table.HoodieCopyOnWriteTable.handleUpsertPartition(HoodieCopyOnWriteTable.java:274)
    at com.uber.hoodie.HoodieWriteClient.lambda$upsertRecordsInternal$7ef77fd$1(HoodieWriteClient.java:451)
    at org.apache.spark.api.java.JavaRDDLike$$anonfun$mapPartitionsWithIndex$1.apply(JavaRDDLike.scala:102)
    at org.apache.spark.api.java.JavaRDDLike$$anonfun$mapPartitionsWithIndex$1.apply(JavaRDDLike.scala:102)
    at org.apache.spark.rdd.RDD$$anonfun$mapPartitionsWithIndex$1$$anonfun$apply$26.apply(RDD.scala:844)
    at org.apache.spark.rdd.RDD$$anonfun$mapPartitionsWithIndex$1$$anonfun$apply$26.apply(RDD.scala:844)
    at org.apache.spark.rdd.MapPartitionsRDD.compute(MapPartitionsRDD.scala:38)
    at org.apache.spark.rdd.RDD.computeOrReadCheckpoint(RDD.scala:323)
    at org.apache.spark.rdd.RDD.iterator(RDD.scala:287)
    at org.apache.spark.rdd.MapPartitionsRDD.compute(MapPartitionsRDD.scala:38)
    at org.apache.spark.rdd.RDD.computeOrReadCheckpoint(RDD.scala:323)
    at org.apache.spark.rdd.RDD$$anonfun$8.apply(RDD.scala:336)
    at org.apache.spark.rdd.RDD$$anonfun$8.apply(RDD.scala:334)
    at org.apache.spark.storage.BlockManager$$anonfun$doPutIterator$1.apply(BlockManager.scala:1055)
    at org.apache.spark.storage.BlockManager$$anonfun$doPutIterator$1.apply(BlockManager.scala:1029)
    at org.apache.spark.storage.BlockManager.doPut(BlockManager.scala:969)
    at org.apache.spark.storage.BlockManager.doPutIterator(BlockManager.scala:1029)
    at org.apache.spark.storage.BlockManager.getOrElseUpdate(BlockManager.scala:760)
    at org.apache.spark.rdd.RDD.getOrCompute(RDD.scala:334)
    at org.apache.spark.rdd.RDD.iterator(RDD.scala:285)
    at org.apache.spark.rdd.MapPartitionsRDD.compute(MapPartitionsRDD.scala:38)
    at org.apache.spark.rdd.RDD.computeOrReadCheckpoint(RDD.scala:323)
    at org.apache.spark.rdd.RDD.iterator(RDD.scala:287)
    at org.apache.spark.scheduler.ResultTask.runTask(ResultTask.scala:87)
    at org.apache.spark.scheduler.Task.run(Task.scala:108)
    at org.apache.spark.executor.Executor$TaskRunner.run(Executor.scala:338)
    at java.util.concurrent.ThreadPoolExecutor.runWorker(ThreadPoolExecutor.java:1149)
    at java.util.concurrent.ThreadPoolExecutor$Worker.run(ThreadPoolExecutor.java:624)
    at java.lang.Thread.run(Thread.java:748)
Caused by: com.uber.hoodie.exception.HoodieUpsertException: Failed to initialize HoodieAppendHandle for FileId: 951d569b-188d-46e4-ad94-a32525fac797-0 on commit 20190711123144 on HDFS path /datalake/optum/optuminsight/udw/hive/warehouse/test_hudi_spark_no_part_1_mor
    at com.uber.hoodie.io.HoodieAppendHandle.init(HoodieAppendHandle.java:141)
    at com.uber.hoodie.io.HoodieAppendHandle.doAppend(HoodieAppendHandle.java:193)
    at com.uber.hoodie.table.HoodieMergeOnReadTable.handleUpdate(HoodieMergeOnReadTable.java:118)
    at com.uber.hoodie.table.HoodieCopyOnWriteTable.handleUpsertPartition(HoodieCopyOnWriteTable.java:266)
    ... 28 more
Caused by: java.lang.IllegalArgumentException: Can not create a Path from an empty string
    at org.apache.hadoop.fs.Path.checkPathArg(Path.java:130)
    at org.apache.hadoop.fs.Path.<init>(Path.java:138)
    at org.apache.hadoop.fs.Path.<init>(Path.java:92)
    at com.uber.hoodie.io.HoodieAppendHandle.createLogWriter(HoodieAppendHandle.java:277)
    at com.uber.hoodie.io.HoodieAppendHandle.init(HoodieAppendHandle.java:132)
    ... 31 more
19/07/11 12:31:45 ERROR TaskSetManager: Task 0 in stage 304.0 failed 4 times; aborting job
org.apache.spark.SparkException: Job aborted due to stage failure: Task 0 in stage 304.0 failed 4 times, most recent failure: Lost task 0.3 in stage 304.0 (TID 467, dbslt1829.uhc.com, executor 2): com.uber.hoodie.exception.HoodieUpsertException: Error upserting bucketType UPDATE for partition :0
    at com.uber.hoodie.table.HoodieCopyOnWriteTable.handleUpsertPartition(HoodieCopyOnWriteTable.java:274)
    at com.uber.hoodie.HoodieWriteClient.lambda$upsertRecordsInternal$7ef77fd$1(HoodieWriteClient.java:451)
    at org.apache.spark.api.java.JavaRDDLike$$anonfun$mapPartitionsWithIndex$1.apply(JavaRD
```

[GitHub] [incubator-hudi] vinothchandar commented on issue #774: Matching question of the version in Spark and Hive2

vinothchandar commented on issue #774: Matching question of the version in Spark and Hive2

URL: https://github.com/apache/incubator-hudi/issues/774#issuecomment-510605473

#751 has the changes in mostly final form. @cdmikechen we have been thinking about whether we should talk directly to the metastore to perform Hive sync, instead of talking to HiveServer. wdyt? Would it simplify a few things?

[GitHub] [incubator-hudi] vinothchandar closed issue #550: NoSuchMethodError: org.eclipse.jetty.servlet.ServletMapping.setDefault(Z)V

vinothchandar closed issue #550: NoSuchMethodError: org.eclipse.jetty.servlet.ServletMapping.setDefault(Z)V

URL: https://github.com/apache/incubator-hudi/issues/550

[GitHub] [incubator-hudi] vinothchandar commented on issue #550: NoSuchMethodError: org.eclipse.jetty.servlet.ServletMapping.setDefault(Z)V

vinothchandar commented on issue #550: NoSuchMethodError: org.eclipse.jetty.servlet.ServletMapping.setDefault(Z)V

URL: https://github.com/apache/incubator-hudi/issues/550#issuecomment-510590195

In #751, I actually found that the jetty jars are needed by the new embedded timeline server.

```
2019-07-11 17:54:10 INFO FileSystemViewManager:176 - Creating embedded rocks-db based Table View
Exception in thread "main" java.lang.NoClassDefFoundError: org/eclipse/jetty/util/log/Log
    at io.javalin.core.util.JettyServerUtil.(JettyServerUtil.kt:34)
    at io.javalin.Javalin.create(Javalin.java:106)
    at com.uber.hoodie.timeline.service.TimelineService.startService(TimelineService.java:101)
    at com.uber.hoodie.client.embedded.EmbeddedTimelineService.startServer(EmbeddedTimelineService.java:72)
    at com.uber.hoodie.AbstractHoodieClient.startEmbeddedServerView(AbstractHoodieClient.java:98)
    at com.uber.hoodie.AbstractHoodieClient.<init>(AbstractHoodieClient.java:66)
    at com.uber.hoodie.HoodieWriteClient.<init>(HoodieWriteClient.java:134)
    at com.uber.hoodie.HoodieWriteClient.<init>(HoodieWriteClient.java:129)
    at com.uber.hoodie.HoodieWriteClient.<init>(HoodieWriteClient.java:123)
    at com.uber.hoodie.utilities.deltastreamer.DeltaSync.setupWriteClient(DeltaSync.java:442)
    at com.uber.hoodie.utilities.deltastreamer.DeltaSync.<init>(DeltaSync.java:185)
    at com.uber.hoodie.utilities.deltastreamer.HoodieDeltaStreamer$DeltaSyncService.<init>(HoodieDeltaStreamer.java:362)
    at com.uber.hoodie.utilities.deltastreamer.HoodieDeltaStreamer.<init>(HoodieDeltaStreamer.java:101)
    at com.uber.hoodie.utilities.deltastreamer.HoodieDeltaStreamer.<init>(HoodieDeltaStreamer.java:95)
    at com.uber.hoodie.utilities.deltastreamer.HoodieDeltaStreamer.main(HoodieDeltaStreamer.java:289)
    at sun.reflect.NativeMethodAccessorImpl.invoke0(Native Method)
    at sun.reflect.NativeMethodAccessorImpl.invoke(NativeMethodAccessorImpl.java:62)
    at sun.reflect.DelegatingMethodAccessorImpl.invoke(DelegatingMethodAccessorImpl.java:43)
    at java.lang.reflect.Method.invoke(Method.java:498)
    at org.apache.spark.deploy.JavaMainApplication.start(SparkApplication.scala:52)
    at org.apache.spark.deploy.SparkSubmit$.org$apache$spark$deploy$SparkSubmit$$runMain(SparkSubmit.scala:894)
    at org.apache.spark.deploy.SparkSubmit$.doRunMain$1(SparkSubmit.scala:198)
    at org.apache.spark.deploy.SparkSubmit$.submit(SparkSubmit.scala:228)
    at org.apache.spark.deploy.SparkSubmit$.main(SparkSubmit.scala:137)
    at org.apache.spark.deploy.SparkSubmit.main(SparkSubmit.scala)
Caused by: java.lang.ClassNotFoundException: org.eclipse.jetty.util.log.Log
    at java.net.URLClassLoader.findClass(URLClassLoader.java:382)
    at java.lang.ClassLoader.loadClass(ClassLoader.java:424)
    at java.lang.ClassLoader.loadClass(ClassLoader.java:357)
```

But let's shade them.

[GitHub] [incubator-hudi] vinothchandar commented on issue #736: hoodie-hive-hundle don't have hive jars

vinothchandar commented on issue #736: hoodie-hive-hundle don't have hive jars

URL: https://github.com/apache/incubator-hudi/issues/736#issuecomment-510581625

@cdmikechen these jars are in the Hive installation, that's why we don't bundle them.

```
ls hive/lib/datanucleus-*
hive/lib/datanucleus-api-jdo-4.2.4.jar hive/lib/datanucleus-core-4.1.17.jar hive/lib/datanucleus-rdbms-4.1.19.jar
root@adhoc-2:/opt#
```

Is it possible that the script is just not picking them up? Are you able to repro this on top of #751 and see if this is still an issue?

[GitHub] [incubator-hudi] amaranathv commented on issue #764: Hoodie 0.4.7: Error upserting bucketType UPDATE for partition #, No value present

amaranathv commented on issue #764: Hoodie 0.4.7: Error upserting bucketType

UPDATE for partition #, No value present

URL: https://github.com/apache/incubator-hudi/issues/764#issuecomment-510581260

[GitHub] [incubator-hudi] vinothchandar closed issue #705: hadoop 2.8.x miss RecoveryInProgressException class

vinothchandar closed issue #705: hadoop 2.8.x miss RecoveryInProgressException class

URL: https://github.com/apache/incubator-hudi/issues/705

[GitHub] [incubator-hudi] vinothchandar commented on issue #735: Failed to instantiate SLF4J LoggerFactory

vinothchandar commented on issue #735: Failed to instantiate SLF4J LoggerFactory

URL: https://github.com/apache/incubator-hudi/issues/735#issuecomment-510579271

@Gowthamsb12 #751 is redoing the bundles, but for this issue, I think you should be able to find log4j if the classpath is set correctly?

[GitHub] [incubator-hudi] tooptoop4 commented on issue #638: [HUDI-12] Upgrade to Spark 2.4, Avro 1.8.2, Parquet 1.10.0...

tooptoop4 commented on issue #638: [HUDI-12] Upgrade to Spark 2.4, Avro 1.8.2, Parquet 1.10.0...
URL: https://github.com/apache/incubator-hudi/pull/638#issuecomment-510575991

@thesuperzapper @bvaradar gentle ping

[GitHub] [incubator-hudi] NetsanetGeb edited a comment on issue #714: Performance Comparison of HoodieDeltaStreamer and DataSourceAPI

NetsanetGeb edited a comment on issue #714: Performance Comparison of HoodieDeltaStreamer and DataSourceAPI
URL: https://github.com/apache/incubator-hudi/issues/714#issuecomment-510569606

**Benchmarking Hudi Upsert**

I am trying to benchmark the Hudi upsert operation. Ingesting 6 GB of data takes 38 minutes on the cluster I provided. How can I improve this?

For my specific use case, I used a spliced JSON data source whose schema has 20 columns. The settings I used, for a cluster with 30 GB of RAM and 100 GB of available disk, are:

spark.driver.memory = 4096m
spark.executor.memory = 6144m
spark.executor.instances = 3
spark.driver.cores = 1
spark.executor.cores = 1
hoodie.datasource.write.operation = "upsert"
hoodie.upsert.shuffle.parallellism = "1500"

You can see the details in the Spark UI of the job, provided below:

[Spark UI screenshot]

[GitHub] [incubator-hudi] NetsanetGeb commented on issue #714: Performance Comparison of HoodieDeltaStreamer and DataSourceAPI

NetsanetGeb commented on issue #714: Performance Comparison of HoodieDeltaStreamer and DataSourceAPI
URL: https://github.com/apache/incubator-hudi/issues/714#issuecomment-510569606

**Benchmarking Hudi Upsert**

I am trying to benchmark the Hudi upsert operation. Ingesting 6 GB of data takes 38 minutes on the cluster I provided. How can I improve this?

For my specific use case, I used a spliced JSON data source whose schema has 20 columns. The settings I used, for a cluster with 30 GB of RAM and 100 GB of available disk, are:

spark.driver.memory = 4096m
spark.executor.memory = 6144m
spark.executor.instances = 3
spark.driver.cores = 1
spark.executor.cores = 1
hoodie.datasource.write.operation = "upsert"
hoodie.upsert.shuffle.parallellism = "1500"

You can see the details in the Spark UI of the job, provided below:

[Spark UI screenshot]
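A rough back-of-the-envelope reading of the settings quoted in the comment, offered as a sketch rather than a diagnosis. It computes the effective ingest rate (6 GB in 38 minutes) and, assuming Spark's default of one CPU per task, how many tasks three single-core executors can run at once. That a shuffle parallelism of 1500 translates into roughly 1500 tasks in a shuffle stage is an assumption, not something stated in the thread, and the function names are purely illustrative.

```python
import math


def ingest_throughput_mb_per_s(data_gb, minutes):
    """Effective ingest rate in MB/s (1 GB taken as 1024 MB)."""
    return data_gb * 1024 / (minutes * 60)


def concurrent_task_slots(executor_instances, executor_cores):
    """Tasks that can run at once, assuming one CPU per task (Spark's default)."""
    return executor_instances * executor_cores


def task_waves(shuffle_parallelism, slots):
    """Sequential waves needed if each shuffle partition becomes one task (assumption)."""
    return math.ceil(shuffle_parallelism / slots)


# Values taken from the comment: 6 GB in 38 minutes, 3 executors x 1 core,
# shuffle parallelism of 1500.
throughput = ingest_throughput_mb_per_s(6, 38)
slots = concurrent_task_slots(3, 1)
waves = task_waves(1500, slots)

print(f"throughput: {throughput:.2f} MB/s")
print(f"concurrent task slots: {slots}")
print(f"task waves per shuffle stage: {waves}")
```

Under those assumptions, 1500 tasks on 3 slots run in about 500 sequential waves, so per-task scheduling overhead may account for part of the reported latency.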

[GitHub] [incubator-hudi] NetsanetGeb opened a new issue #714: Performance Comparison of HoodieDeltaStreamer and DataSourceAPI

NetsanetGeb opened a new issue #714: Performance Comparison of HoodieDeltaStreamer and DataSourceAPI
URL: https://github.com/apache/incubator-hudi/issues/714

I am trying to ingest data using both the DataSource API and HoodieDeltaStreamer. The ingestion latency of the DataSource API with HoodieSparkSQLWriter is high compared to the delta streamer. Both the datasource and the delta streamer use the same APIs. Why is it slow? Are there specific options we can set to minimize the ingestion latency?

For example, when I run the delta streamer, it takes about 1 minute to insert some data. If I use the DataSource API with HoodieSparkSQLWriter, it takes 4 minutes. How can we optimize this?

I am attaching the Spark UI of HoodieDeltaStreamer and the DataSource API respectively.

Spark UI of HoodieDeltaStreamer
Note: The class Main is the same as HoodieDeltaStreamer.
[Spark UI screenshot]

Spark UI of DataSourceAPI
Note: I am using the Hoodie delta streamer first to get the DataFrame and checkpoint, and then the Hoodie Spark SQL writer for ingestion.
[Spark UI screenshot]

[GitHub] [incubator-hudi] vinothchandar commented on issue #700: HUDI-138 - Meta Files handling also need to support consistency guard

vinothchandar commented on issue #700: HUDI-138 - Meta Files handling also need to support consistency guard
URL: https://github.com/apache/incubator-hudi/pull/700#issuecomment-510562983

@bvaradar is this ready for merging?

[GitHub] [incubator-hudi] vinothchandar commented on issue #780: Cleanup Maven POM/Classpath

vinothchandar commented on issue #780: Cleanup Maven POM/Classpath
URL: https://github.com/apache/incubator-hudi/pull/780#issuecomment-510547665

@thesuperzapper As for testing, if you can run the demo steps once and confirm there are no NoClassDefFound errors and such, it would be a good start.

[GitHub] [incubator-hudi] vinothchandar commented on issue #780: Cleanup Maven POM/Classpath

vinothchandar commented on issue #780: Cleanup Maven POM/Classpath
URL: https://github.com/apache/incubator-hudi/pull/780#issuecomment-510538727

@thesuperzapper Thanks! This is great. Let me review these. I have also been working on #751 around the same thing. Ideally, we would merge these changes onto a branch and test them there.

[GitHub] [incubator-hudi] thesuperzapper opened a new pull request #780: Cleanup Maven POM/Classpath

thesuperzapper opened a new pull request #780: Cleanup Maven POM/Classpath
URL: https://github.com/apache/incubator-hudi/pull/780

This PR cleans up the Maven pom.xml files. This is necessary if we want to bump the versions of core dependencies like Spark, Avro, and Parquet: in its current state, the Maven dependency structure is very fragile and makes many assumptions about exclusions and shading.

All unit and integration tests currently pass with this PR.

I would love input and testing, as these changes affect every part of Hudi, and I am sceptical that the current unit tests actually cover everything.

We can probably clean up even more than this, especially in the Maven plugin space, where we are running multiple versions of the same plugin in each sub-package.

*PS: yes, the diffs look disgusting, it's because of xml*
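One way to check the point about multiple versions of the same plugin is to scan the module POMs and group the declared plugin versions by artifactId. The sketch below is a hypothetical helper, not part of the PR. It uses only Python's standard library, and the sample POM fragments and version numbers are invented for illustration.

```python
import xml.etree.ElementTree as ET
from collections import defaultdict

# Namespace used by Maven POM 4.0.0 files.
POM_NS = {"m": "http://maven.apache.org/POM/4.0.0"}


def plugin_versions(pom_texts):
    """Map each plugin artifactId to the set of versions declared across the POMs."""
    versions = defaultdict(set)
    for text in pom_texts:
        root = ET.fromstring(text)
        for plugin in root.iterfind(".//m:plugin", POM_NS):
            artifact = plugin.findtext("m:artifactId", default="", namespaces=POM_NS)
            # A plugin without its own <version> inherits one from elsewhere.
            version = plugin.findtext("m:version", default="(inherited)", namespaces=POM_NS)
            versions[artifact].add(version)
    return versions


def conflicting_plugins(pom_texts):
    """Return only the plugins that are declared with more than one version."""
    return {a: sorted(v) for a, v in plugin_versions(pom_texts).items() if len(v) > 1}


def sample_pom(version):
    """Build a minimal, made-up POM declaring maven-jar-plugin at the given version."""
    return (
        '<project xmlns="http://maven.apache.org/POM/4.0.0"><build><plugins>'
        "<plugin><artifactId>maven-jar-plugin</artifactId>"
        f"<version>{version}</version></plugin>"
        "</plugins></build></project>"
    )


# Two hypothetical sub-module POMs that disagree on the plugin version.
print(conflicting_plugins([sample_pom("2.5"), sample_pom("3.1.1")]))
```

In practice the POM texts would be read from each sub-module's pom.xml. Plugins reported with more than one version are candidates for consolidation in a parent pluginManagement section.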