[GitHub] nifi issue #3215: NIFI-5889 - changed the wording about Destination URL in S...

Github user ijokarumawak commented on the issue: https://github.com/apache/nifi/pull/3215 LGTM +1, merging. Thanks @pvillard31! ---

[GitHub] nifi pull request #3214: NIFI-5890 Added a unit test that proves that 1.9 fi...

Github user ijokarumawak commented on a diff in the pull request:

https://github.com/apache/nifi/pull/3214#discussion_r240856001

--- Diff:

nifi-nar-bundles/nifi-elasticsearch-bundle/nifi-elasticsearch-processors/src/main/java/org/apache/nifi/processors/elasticsearch/PutElasticsearchHttpRecord.java

---

@@ -404,7 +404,7 @@ public void onTrigger(final ProcessContext context,

final ProcessSession session

reason =

itemNode.findPath("reason").asText();

}

errorReason = reason;

-logger.error("Failed to process {}

due to {}, transferring to failure",

+talogger.error("Failed to process

{} due to {}, transferring to failure",

--- End diff --

I believe this change is not intentional.

---

[GitHub] nifi issue #3205: NIFI-5875 Improve docs around the PriorityAttributePriorit...

Github user ijokarumawak commented on the issue: https://github.com/apache/nifi/pull/3205 Looks perfect, +1 merging. Thanks @wselwood ! ---

[GitHub] nifi issue #3208: NIFI-5872 - Added compression option to JsonRecordSetWrite...

Github user ijokarumawak commented on the issue: https://github.com/apache/nifi/pull/3208 The performance improvement result is impressing! Code looks good and I was able to compress records. +1 merging. Thanks @pvillard31 ---

[GitHub] nifi issue #3205: NIFI-5875 Improve docs around the PriorityAttributePriorit...

Github user ijokarumawak commented on the issue: https://github.com/apache/nifi/pull/3205 @wselwood Thanks for the update, looks much better! For the even better readability, can we improve structure a little bit more organized? How about something like this? Example output:  --- (As plain text) PriorityAttributePrioritizer: Given two FlowFiles that both have a "priority" attribute, the one that has the highest priority value will be processed first. - Note that an UpdateAttribute processor should be used to add the "priority" attribute to the FlowFiles before they reach a connection that has this prioritizer set. - If only one has that attribute it will go first. - Values for the "priority" attribute may be alphanumeric, where "a" is a higher priority than "z", and "1" is a higher priority than "9", for example. - If "priority" attribute cannot be parsed as a long, unicode string ordering will be used. For example: "99" and "100" will be ordered so the flowfile with "99" comes first, but "A-99" and "A-100" will sort so the flowfile with "A-100" comes first. ---

[GitHub] nifi pull request #3212: NIFI-5885 ArrayOutOfBoundsException at EL 'or' and ...

GitHub user ijokarumawak opened a pull request: https://github.com/apache/nifi/pull/3212 NIFI-5885 ArrayOutOfBoundsException at EL 'or' and 'and' functions EL 'or' and 'and' functions can be called multiple times within the same context using the same evaluator instance. That happens if their subject is derived from an IteratingEvaluator such as 'anyDelineatedValues'. And if the right hand side expression for such 'or' and 'and' contains another IteratingEvaluator, then it can be evaluated more than the number of its candidates, ultimately an ArrayOutOfBoundsException is thrown. This commit makes Or/AndEvaluator caching its right hand side result to prevent that happens. For 'or' and 'and' functions, the right hand side expression is independant from their subject boolean value. It's enough evaluating right hand side once, because it returns the same result even with different subjects. Thank you for submitting a contribution to Apache NiFi. In order to streamline the review of the contribution we ask you to ensure the following steps have been taken: ### For all changes: - [x] Is there a JIRA ticket associated with this PR? Is it referenced in the commit message? - [x] Does your PR title start with NIFI- where is the JIRA number you are trying to resolve? Pay particular attention to the hyphen "-" character. - [x] Has your PR been rebased against the latest commit within the target branch (typically master)? - [x] Is your initial contribution a single, squashed commit? ### For code changes: - [ ] Have you ensured that the full suite of tests is executed via mvn -Pcontrib-check clean install at the root nifi folder? - [x] Have you written or updated unit tests to verify your changes? - [ ] If adding new dependencies to the code, are these dependencies licensed in a way that is compatible for inclusion under [ASF 2.0](http://www.apache.org/legal/resolved.html#category-a)? - [ ] If applicable, have you updated the LICENSE file, including the main LICENSE file under nifi-assembly? - [ ] If applicable, have you updated the NOTICE file, including the main NOTICE file found under nifi-assembly? - [ ] If adding new Properties, have you added .displayName in addition to .name (programmatic access) for each of the new properties? ### For documentation related changes: - [ ] Have you ensured that format looks appropriate for the output in which it is rendered? ### Note: Please ensure that once the PR is submitted, you check travis-ci for build issues and submit an update to your PR as soon as possible. You can merge this pull request into a Git repository by running: $ git pull https://github.com/ijokarumawak/nifi nifi-5885 Alternatively you can review and apply these changes as the patch at: https://github.com/apache/nifi/pull/3212.patch To close this pull request, make a commit to your master/trunk branch with (at least) the following in the commit message: This closes #3212 commit 44988792b47472a491673cd642b0e8557c274649 Author: Koji Kawamura Date: 2018-12-10T05:31:16Z NIFI-5885 ArrayOutOfBoundsException at EL 'or' and 'and' functions EL 'or' and 'and' functions can be called multiple times within the same context using the same evaluator instance. That happens if their subject is derived from an IteratingEvaluator such as 'anyDelineatedValues'. And if the right hand side expression for such 'or' and 'and' contains another IteratingEvaluator, then it can be evaluated more than the number of its candidates, ultimately an ArrayOutOfBoundsException is thrown. This commit makes Or/AndEvaluator caching its right hand side result to prevent that happens. For 'or' and 'and' functions, the right hand side expression is independant from their subject boolean value. It's enough evaluating right hand side once, because it returns the same result even with different subjects. ---

[GitHub] nifi issue #3211: NIFI-5884 Bumping hbase-client version from 1.1.2 to 1.1.1...

Github user ijokarumawak commented on the issue: https://github.com/apache/nifi/pull/3211 I agree with the idea of using 1.1.x HBase client despite of having 1.1.2 in the component name. +1 merging. Thanks @bbende ! ---

[GitHub] nifi issue #3210: NIFI-5881: Enable to export the template for non-ascii nam...

Github user ijokarumawak commented on the issue: https://github.com/apache/nifi/pull/3210 Thanks @kemixkoo for your contribution! I confirmed that Japanese characters can be used in template file name with this fix. +1 merging! ---

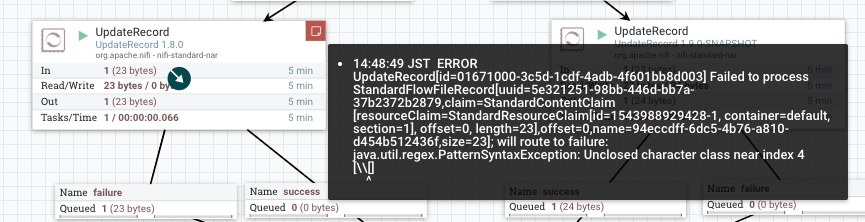

[GitHub] nifi issue #3200: NIFI-5826 Fix back-slash escaping at Lexers

Github user ijokarumawak commented on the issue: https://github.com/apache/nifi/pull/3200 @bdesert @ottobackwards Thanks for reviewing. I've added unit tests. As a reference, I run the added tests with Lexer before this PR. Following tests failed with current Lexer, but passes with the updated Lexer: This test failed with original Lexer because `[\s]` is converted to `[\\s]` by Lexer and didn't match. ``` [ERROR] testReplaceRegexEscapedCharacters(org.apache.nifi.record.path.TestRecordPath) Time elapsed: 0.007 s <<< FAILURE! org.junit.ComparisonFailure: Replacing whitespace to new line expected: but was: at org.apache.nifi.record.path.TestRecordPath.testReplaceRegexEscapedCharacters(TestRecordPath.java:1046) ``` This test failed with original Lexer because `\[` is converted to `\\[` by Lexer and produced RegEx syntax error. ``` [ERROR] testReplaceRegexEscapedBrackets(org.apache.nifi.record.path.TestRecordPath) Time elapsed: 0.001 s <<< ERROR! org.apache.nifi.record.path.exception.RecordPathException: java.util.regex.PatternSyntaxException: Unclosed character class near index 2 \\[ ^ at org.apache.nifi.record.path.TestRecordPath.testReplaceRegexEscapedBrackets(TestRecordPath.java:1149) ``` Both tests failed with the same cause, and fixed by this PR. ---

[GitHub] nifi pull request #3205: NIFI-5875 Improve docs around the PriorityAttribute...

Github user ijokarumawak commented on a diff in the pull request: https://github.com/apache/nifi/pull/3205#discussion_r239684255 --- Diff: nifi-docs/src/main/asciidoc/user-guide.adoc --- @@ -1091,7 +1091,7 @@ The following prioritizers are available: - *FirstInFirstOutPrioritizer*: Given two FlowFiles, the one that reached the connection first will be processed first. - *NewestFlowFileFirstPrioritizer*: Given two FlowFiles, the one that is newest in the dataflow will be processed first. - *OldestFlowFileFirstPrioritizer*: Given two FlowFiles, the one that is oldest in the dataflow will be processed first. 'This is the default scheme that is used if no prioritizers are selected'. -- *PriorityAttributePrioritizer*: Given two FlowFiles that both have a "priority" attribute, the one that has the highest priority value will be processed first. Note that an UpdateAttribute processor should be used to add the "priority" attribute to the FlowFiles before they reach a connection that has this prioritizer set. Values for the "priority" attribute may be alphanumeric, where "a" is a higher priority than "z", and "1" is a higher priority than "9", for example. +- *PriorityAttributePrioritizer*: Given two FlowFiles, an attribute called âpriorityâ will be extracted. If only one has that attribute it will go first. If the attributes can be parsed as a long, they will be ordered largest number first. For example 9 will come before 1 and 100 will be selected before 99. If only one of them parses as a long that one will be considered to have the higher priority. Otherwise, they will be ordered in alphanumeric order, where "a" is a higher priority than "z", and "1" is a higher priority than "9", for example. --- End diff -- >If the attributes can be parsed as a long, they will be ordered largest number first. For example 9 will come before 1 and 100 will be selected before 99 Is this true? As following test case shows, if `1` and `2` are compared, `1` goes first. https://github.com/apache/nifi/blob/master/nifi-nar-bundles/nifi-framework-bundle/nifi-framework/nifi-standard-prioritizers/src/test/java/org/apache/nifi/prioritizer/PriorityAttributePrioritizerTest.java#L91 ---

[GitHub] nifi pull request #3201: NIFI-4621

Github user ijokarumawak commented on a diff in the pull request: https://github.com/apache/nifi/pull/3201#discussion_r239324396 --- Diff: nifi-nar-bundles/nifi-standard-bundle/nifi-standard-processors/src/main/java/org/apache/nifi/processors/standard/ListFTP.java --- @@ -35,17 +36,21 @@ import org.apache.nifi.components.ValidationResult; import org.apache.nifi.components.state.Scope; import org.apache.nifi.context.PropertyContext; +import org.apache.nifi.expression.ExpressionLanguageScope; import org.apache.nifi.processor.ProcessContext; import org.apache.nifi.processor.util.list.ListedEntityTracker; import org.apache.nifi.processors.standard.util.FileTransfer; import org.apache.nifi.processors.standard.util.FTPTransfer; @PrimaryNodeOnly @TriggerSerially -@InputRequirement(Requirement.INPUT_FORBIDDEN) +@InputRequirement(Requirement.INPUT_ALLOWED) --- End diff -- Let's design how we improve this processors (or create other processors if necessary) first. I remember there was some discussion on JIRA or ML before. Please check those, too. If PATh can be supplied by incoming FlowFiles, then I personally prefer passing minimum timestamps by FlowFiles, too. ---

[GitHub] nifi pull request #3201: NIFI-4621

Github user ijokarumawak commented on a diff in the pull request:

https://github.com/apache/nifi/pull/3201#discussion_r239307533

--- Diff:

nifi-nar-bundles/nifi-standard-bundle/nifi-standard-processors/src/main/java/org/apache/nifi/processors/standard/ListFTP.java

---

@@ -35,17 +36,21 @@

import org.apache.nifi.components.ValidationResult;

import org.apache.nifi.components.state.Scope;

import org.apache.nifi.context.PropertyContext;

+import org.apache.nifi.expression.ExpressionLanguageScope;

import org.apache.nifi.processor.ProcessContext;

import org.apache.nifi.processor.util.list.ListedEntityTracker;

import org.apache.nifi.processors.standard.util.FileTransfer;

import org.apache.nifi.processors.standard.util.FTPTransfer;

@PrimaryNodeOnly

@TriggerSerially

-@InputRequirement(Requirement.INPUT_FORBIDDEN)

+@InputRequirement(Requirement.INPUT_ALLOWED)

@Tags({"list", "ftp", "remote", "ingest", "source", "input", "files"})

@CapabilityDescription("Performs a listing of the files residing on an FTP

server. For each file that is found on the remote server, a new FlowFile will

be created with the filename attribute "

+ "set to the name of the file on the remote server. This can then be

used in conjunction with FetchFTP in order to fetch those files.")

+@DynamicProperty(name = "Relationship Name", value = "Attribute Expression

Language",

+expressionLanguageScope =

ExpressionLanguageScope.FLOWFILE_ATTRIBUTES, description = "Routes FlowFiles

whose attributes match the "

++ "Attribute Expression Language specified in the Dynamic Property

Value to the Relationship specified in the Dynamic Property Key")

--- End diff --

What's this used for?

---

[GitHub] nifi pull request #3201: NIFI-4621

Github user ijokarumawak commented on a diff in the pull request: https://github.com/apache/nifi/pull/3201#discussion_r239310311 --- Diff: nifi-nar-bundles/nifi-standard-bundle/nifi-standard-processors/src/main/java/org/apache/nifi/processors/standard/ListFTP.java --- @@ -35,17 +36,21 @@ import org.apache.nifi.components.ValidationResult; import org.apache.nifi.components.state.Scope; import org.apache.nifi.context.PropertyContext; +import org.apache.nifi.expression.ExpressionLanguageScope; import org.apache.nifi.processor.ProcessContext; import org.apache.nifi.processor.util.list.ListedEntityTracker; import org.apache.nifi.processors.standard.util.FileTransfer; import org.apache.nifi.processors.standard.util.FTPTransfer; @PrimaryNodeOnly @TriggerSerially -@InputRequirement(Requirement.INPUT_FORBIDDEN) +@InputRequirement(Requirement.INPUT_ALLOWED) --- End diff -- Changing input requirement is not enough if we want to support incoming FlowFiles. Processors need to: 1. Take input FlowFiles, LogMessage processor can be the simplest example, please look [here](https://github.com/apache/nifi/blob/master/nifi-nar-bundles/nifi-standard-bundle/nifi-standard-processors/src/main/java/org/apache/nifi/processors/standard/LogMessage.java#L125) 2. Evaluate ExpressionLanguages using the FlowFiles, again LogMessage as [an example](https://github.com/apache/nifi/blob/master/nifi-nar-bundles/nifi-standard-bundle/nifi-standard-processors/src/main/java/org/apache/nifi/processors/standard/LogMessage.java#L130) Currently [FTPTransfer.getListing()](https://github.com/apache/nifi/blob/master/nifi-nar-bundles/nifi-standard-bundle/nifi-standard-processors/src/main/java/org/apache/nifi/processors/standard/util/FTPTransfer.java#L187) doesn't do above things. And we also need to support existing use-cases with no input connection. Also, we need to track listing state such as latest timestamps ... etc. We need to do that efficiently with dynamically changing target paths if it's configured by incoming FlowFiles attribute. There are more things need to be done. ---

[GitHub] nifi pull request #3183: NIFI-5826 Fix to escaped backslash

Github user ijokarumawak commented on a diff in the pull request: https://github.com/apache/nifi/pull/3183#discussion_r238943337 --- Diff: nifi-commons/nifi-record-path/src/main/java/org/apache/nifi/record/path/util/RecordPathUtils.java --- @@ -39,4 +39,52 @@ public static String getFirstStringValue(final RecordPathSegment segment, final return stringValue; } + +/** + * This method handles backslash sequences after ANTLR parser converts all backslash into double ones + * with exception for \t, \r and \n. See + * org/apache/nifi/record/path/RecordPathParser.g --- End diff -- @bdesert Thanks for sharing the example. I tried it out but result was different. I believe the content (attribute value) itself won't processed by the Lexer, so changing Lexer wouldn't affect that EL usages. Please look at #3200 for detail. I shared flow template and results. I hope I have set up the flow as you mentioned, but please let me know if I misunderstood! Providing generic util method such as `unescapeBackslash` is fine. But I think using it to address NIFI-5826 is not a good idea. As it would make just another confusion around how these actually work. @ottobackwards Agreed to add more unit tests and keep discussion going for both approaches with more examples. I created #3200 for comparison. ---

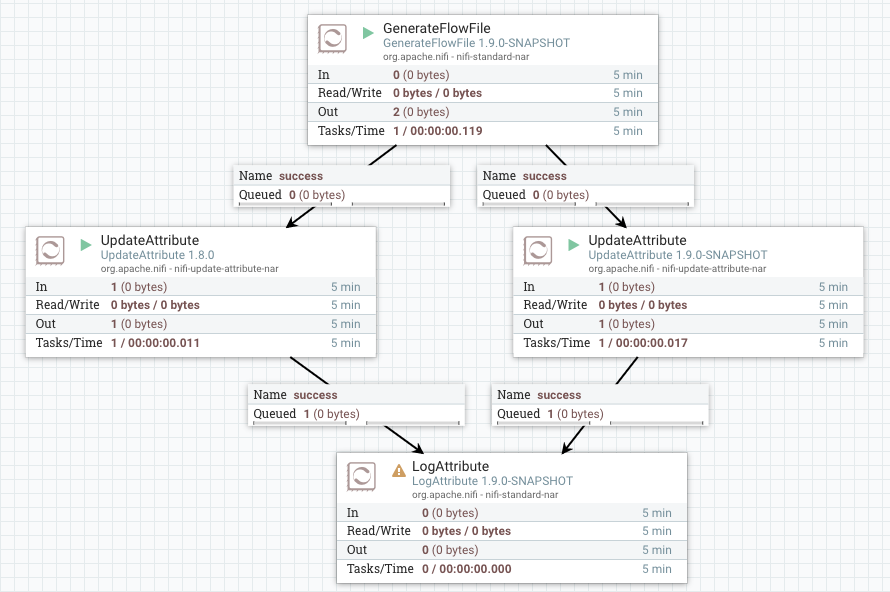

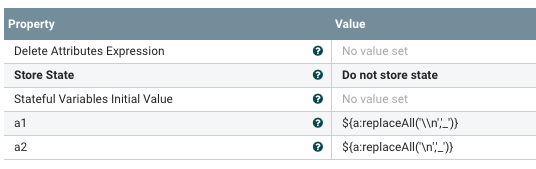

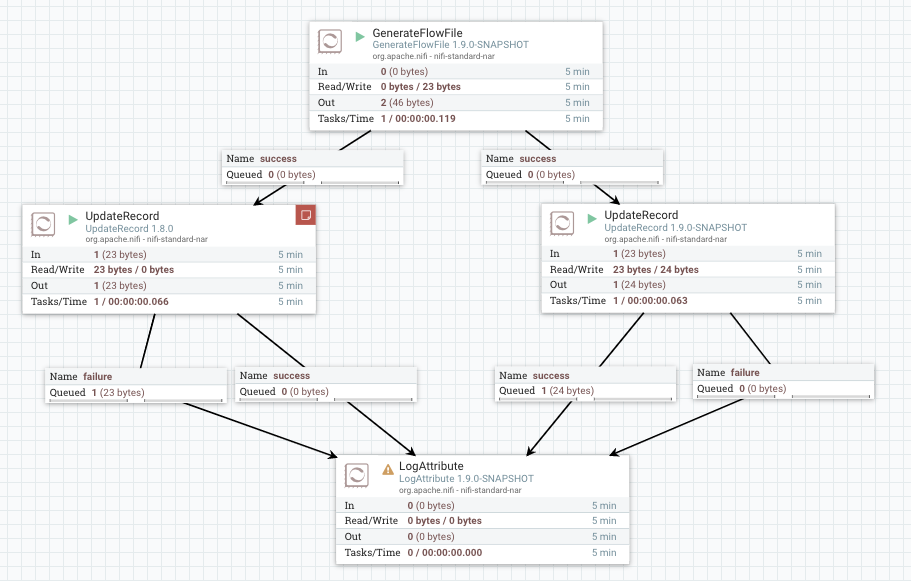

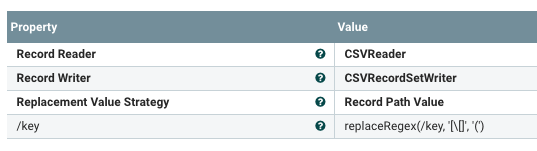

[GitHub] nifi pull request #3200: NIFI-5826 WIP Fix back-slash escaping at Lexers

GitHub user ijokarumawak opened a pull request:

https://github.com/apache/nifi/pull/3200

NIFI-5826 WIP Fix back-slash escaping at Lexers

## Summary

Current Lexers convert a back-slash plus another character sequence (e.g.

'\[') to double back-slash plus the next character (e.g. '\\[').

But from detailed analysis (see below), it seems the conversion is wrong

and it should leave such characters as it is.

## Details

I debugged how Lexer works, and found that:

- The `ESC` fragment handles an escaped special character in String

representation. I.e. String `\t` will be converted to actual tab character.

- The string values user input from NiFi UI are passed to

`RecordPath.compile` method as it is. E.g. the input string

`replaceRegex(/name, '\[', '')` is passed to as is, then the single back-slash

is converted to double back-slash by the ESC fragment line 155.

- I believe the line 153-156 is aimed to preserve escaped characters as it

is, because such escape doesn't mean anything for the RecordPath/AttrExpLang

spec. And those should be unescaped later by underlying syntaxes such as RegEx.

- And current line 155 does it wrongly. It should append a single

back-slash..

- Other Lexers (AttributeExpressionLexer.g and HL7QueryLexer.g) have

the same issue.

- So, I think we should fix all Lexers instead of adding another conversion.

Here is the [Lexer

code](https://github.com/apache/nifi/blob/master/nifi-commons/nifi-record-path/src/main/antlr3/org/apache/nifi/record/path/RecordPathLexer.g#L143)

for reference:

```

143 fragment

144 ESC

145 : '\\'

146 (

147 '"'{ setText("\""); }

148 | '\'' { setText("\'"); }

149 | 'r' { setText("\r"); }

150 | 'n' { setText("\n"); }

151 | 't' { setText("\t"); }

152 | '\\' { setText(""); }

153 | nextChar = ~('"' | '\'' | 'r' | 'n' | 't' | '\\')

154{

155 StringBuilder lBuf = new StringBuilder();

lBuf.append("").appendCodePoint(nextChar); setText(lBuf.toString());

156}

157)

158 ;

```

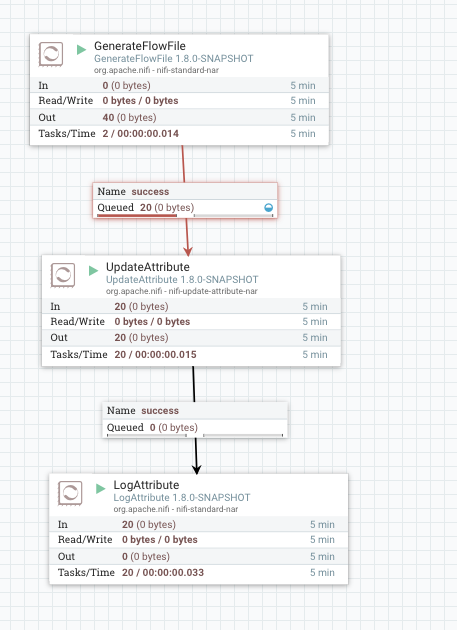

## NiFi template for test

Here is a NiFi flow template to test how before/after this change.

https://gist.github.com/ijokarumawak/b6bdca8074a4457bc4a425b90a6b17f0

In order to try the template, you need to build this PR as NiFi

1.9.0-SNAPSHOT, then download following 1.8.0 nars in your SNAPSHOT's lib dir,

so that both versions can be used in the flow.

-

https://repo.maven.apache.org/maven2/org/apache/nifi/nifi-standard-nar/1.8.0/nifi-standard-nar-1.8.0.nar

-

https://repo.maven.apache.org/maven2/org/apache/nifi/nifi-update-attribute-nar/1.8.0/nifi-update-attribute-nar-1.8.0.nar

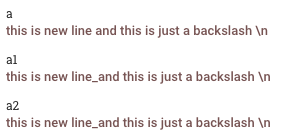

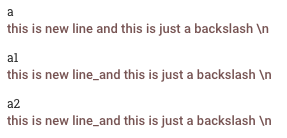

## Test result

### UpdateAttribute test for backward compatibility

GenerateFlowFile generates FlowFiles with attribute `a` whose value is:

```

this is new line

and this is just a backslash \n

```

Result

1.8.0

1.9.0-SNAPSHOT

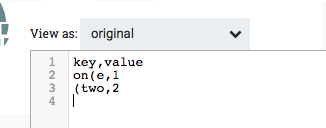

### UpdateRecord test illustrating the NIFI-5826 issue is addressed

GenerateFlowFile generates content:

```

key,value

on[e,1

[two,2

```

Result

1.8.0

Regex compilation error as reported

1.9.0-SNAPSHOT

The square brackets are converted successfully

---

Thank you for submitting a contribution to Apache NiFi.

In order to streamline the review of the contribution we ask you

to ensure the following steps have been taken:

### For all changes:

- [ ] Is there a JIRA ticket associated with this PR? Is it referenced

in the commit message?

- [ ] Does your PR title start with NIFI- where is the JIRA number

you are trying to resolve? Pay particular attention to the hyphen "-" character.

[GitHub] nifi issue #3199: NIFI-5863 Fixed AbstractFlowFileQueue logging

Github user ijokarumawak commented on the issue: https://github.com/apache/nifi/pull/3199 Example log output: ``` 2018-12-05 10:22:57,822 DEBUG [List FlowFiles for Connection 7bf5b85d-0167-1000-0fd3-42a79872] o.a.n.c.queue.AbstractFlowFileQueue org.apache.nifi.controller.queue.AbstractFlowFileQueue$1@4e4dd5ce Finished listing FlowFiles for active queue with a total of 100 results out of 1000 FlowFiles ``` ---

[GitHub] nifi pull request #3199: NIFI-5863 Fixed AbstractFlowFileQueue logging

GitHub user ijokarumawak opened a pull request: https://github.com/apache/nifi/pull/3199 NIFI-5863 Fixed AbstractFlowFileQueue logging Thank you for submitting a contribution to Apache NiFi. In order to streamline the review of the contribution we ask you to ensure the following steps have been taken: ### For all changes: - [x] Is there a JIRA ticket associated with this PR? Is it referenced in the commit message? - [x] Does your PR title start with NIFI- where is the JIRA number you are trying to resolve? Pay particular attention to the hyphen "-" character. - [x] Has your PR been rebased against the latest commit within the target branch (typically master)? - [x] Is your initial contribution a single, squashed commit? ### For code changes: - [ ] Have you ensured that the full suite of tests is executed via mvn -Pcontrib-check clean install at the root nifi folder? - [ ] Have you written or updated unit tests to verify your changes? - [ ] If adding new dependencies to the code, are these dependencies licensed in a way that is compatible for inclusion under [ASF 2.0](http://www.apache.org/legal/resolved.html#category-a)? - [ ] If applicable, have you updated the LICENSE file, including the main LICENSE file under nifi-assembly? - [ ] If applicable, have you updated the NOTICE file, including the main NOTICE file found under nifi-assembly? - [ ] If adding new Properties, have you added .displayName in addition to .name (programmatic access) for each of the new properties? ### For documentation related changes: - [ ] Have you ensured that format looks appropriate for the output in which it is rendered? ### Note: Please ensure that once the PR is submitted, you check travis-ci for build issues and submit an update to your PR as soon as possible. You can merge this pull request into a Git repository by running: $ git pull https://github.com/ijokarumawak/nifi NIFI-5863 Alternatively you can review and apply these changes as the patch at: https://github.com/apache/nifi/pull/3199.patch To close this pull request, make a commit to your master/trunk branch with (at least) the following in the commit message: This closes #3199 commit cef667cb3c19f742ab27a8041532a0df406089c5 Author: Koji Kawamura Date: 2018-12-05T01:23:57Z NIFI-5863 Fixed AbstractFlowFileQueue logging ---

[GitHub] nifi issue #3195: NIFI-5862 MockRecordParser Has Bad Logic for failAfterN

Github user ijokarumawak commented on the issue: https://github.com/apache/nifi/pull/3195 Thanks @patricker good catch! +1 merging to master. ---

[GitHub] nifi issue #3182: NIFI-5838 - Improve the schema validation method in Kite p...

Github user ijokarumawak commented on the issue: https://github.com/apache/nifi/pull/3182 Thanks @pvillard31 for confirming that. I'm +1 and going to merge this. Please update [Migration Guidance](https://cwiki.apache.org/confluence/display/NIFI/Migration+Guidance) to note about this behavioral change. A section title like "Migrating from 1.x.x to 1.9.0 (under development, not released yet, subject to be changed)" would be nice. ---

[GitHub] nifi pull request #3183: NIFI-5826 Fix to escaped backslash

Github user ijokarumawak commented on a diff in the pull request:

https://github.com/apache/nifi/pull/3183#discussion_r237764346

--- Diff:

nifi-commons/nifi-record-path/src/main/java/org/apache/nifi/record/path/util/RecordPathUtils.java

---

@@ -39,4 +39,52 @@ public static String getFirstStringValue(final

RecordPathSegment segment, final

return stringValue;

}

+

+/**

+ * This method handles backslash sequences after ANTLR parser converts

all backslash into double ones

+ * with exception for \t, \r and \n. See

+ * org/apache/nifi/record/path/RecordPathParser.g

--- End diff --

@bdesert Thanks for the explanation. I read through your comment over and

over, and tested different input string. However, that differs from what what

I'm seeing.

I debugged how Lexer works, too, and found that:

- The `ESC` fragment handles an escaped special character in String

representation. I.e. String `\t` will be converted to actual tab character.

- The string values user input from NiFi UI are passed to

`RecordPath.compile` method as it is. E.g. the input string

`replaceRegex(/name, '\[', '')` is passed to as is, then the single back-slash

is converted to double back-slash by the ESC fragment line 155.

- Since the escaped characters such as `\n` are already unescaped by this

Lexer, the `RecordPathUtils.unescapeBackslash` method added by this PR will not

see any **escaped** characters other than the doubled back-slash `//`. And the

doubled back-slash is converted back to a single back-slash by

`RecordPathUtils.unescapeBackslash`.

- I believe the line 153-156 is aimed to preserve escaped characters as it

is, because such escape doesn't mean anything for the RecordPath/AttrExpLang

spec. And those should be unescaped later by underlying syntaxes such as RegEx.

- And current line 155 does it wrongly. It should append a single

back-slash..

- Other Lexers (AttributeExpressionLexer.g and HL7QueryLexer.g) have

the same issue.

- So, I think we should fix all Lexers instead of adding another conversion.

Here is the [Lexer

code](https://github.com/apache/nifi/blob/master/nifi-commons/nifi-record-path/src/main/antlr3/org/apache/nifi/record/path/RecordPathLexer.g#L143)

for reference:

```

143 fragment

144 ESC

145 : '\\'

146 (

147 '"'{ setText("\""); }

148 | '\'' { setText("\'"); }

149 | 'r' { setText("\r"); }

150 | 'n' { setText("\n"); }

151 | 't' { setText("\t"); }

152 | '\\' { setText(""); }

153 | nextChar = ~('"' | '\'' | 'r' | 'n' | 't' | '\\')

154{

155 StringBuilder lBuf = new StringBuilder();

lBuf.append("").appendCodePoint(nextChar); setText(lBuf.toString());

156}

157)

158 ;

```

Can share any input String value that a user would set into a processor

property text box (or passed to RecordPath.compile method), that will go the

proposed `RecordPathUtils.unescapeBackslash` method lines of code for `\n`,

`\r` or `\t`? Current test case does have regex test cases using those escaped

characters, but coverage shows those branches are not used:

---

[GitHub] nifi pull request #3189: NIFI-5849: ListXXX can lose cluster state on proces...

GitHub user ijokarumawak opened a pull request: https://github.com/apache/nifi/pull/3189 NIFI-5849: ListXXX can lose cluster state on processor restart NIFI-5406 introduced the issue by trying to use the resetState variable for different purposes. AbstractListProcessor should have had a different variable to control whether to clear state for tracking entity strategy. Thank you for submitting a contribution to Apache NiFi. In order to streamline the review of the contribution we ask you to ensure the following steps have been taken: ### For all changes: - [x] Is there a JIRA ticket associated with this PR? Is it referenced in the commit message? - [x] Does your PR title start with NIFI- where is the JIRA number you are trying to resolve? Pay particular attention to the hyphen "-" character. - [x] Has your PR been rebased against the latest commit within the target branch (typically master)? - [x] Is your initial contribution a single, squashed commit? ### For code changes: - [ ] Have you ensured that the full suite of tests is executed via mvn -Pcontrib-check clean install at the root nifi folder? - [ ] Have you written or updated unit tests to verify your changes? - [ ] If adding new dependencies to the code, are these dependencies licensed in a way that is compatible for inclusion under [ASF 2.0](http://www.apache.org/legal/resolved.html#category-a)? - [ ] If applicable, have you updated the LICENSE file, including the main LICENSE file under nifi-assembly? - [ ] If applicable, have you updated the NOTICE file, including the main NOTICE file found under nifi-assembly? - [ ] If adding new Properties, have you added .displayName in addition to .name (programmatic access) for each of the new properties? ### For documentation related changes: - [ ] Have you ensured that format looks appropriate for the output in which it is rendered? ### Note: Please ensure that once the PR is submitted, you check travis-ci for build issues and submit an update to your PR as soon as possible. You can merge this pull request into a Git repository by running: $ git pull https://github.com/ijokarumawak/nifi nifi-5849 Alternatively you can review and apply these changes as the patch at: https://github.com/apache/nifi/pull/3189.patch To close this pull request, make a commit to your master/trunk branch with (at least) the following in the commit message: This closes #3189 commit 6dac106dc1c8c946db9dce7a5be9b7769a7ea6c8 Author: Koji Kawamura Date: 2018-11-29T08:44:44Z NIFI-5849: ListXXX can lose cluster state on processor restart NIFI-5406 introduced the issue by trying to use the resetState variable for different purposes. AbstractListProcessor should have had a different variable to control whether to clear state for tracking entity strategy. ---

[GitHub] nifi pull request #3183: NIFI-5826 Fix to escaped backslash

Github user ijokarumawak commented on a diff in the pull request: https://github.com/apache/nifi/pull/3183#discussion_r237373282 --- Diff: nifi-commons/nifi-record-path/src/main/java/org/apache/nifi/record/path/util/RecordPathUtils.java --- @@ -39,4 +39,52 @@ public static String getFirstStringValue(final RecordPathSegment segment, final return stringValue; } + +/** + * This method handles backslash sequences after ANTLR parser converts all backslash into double ones + * with exception for \t, \r and \n. See + * org/apache/nifi/record/path/RecordPathParser.g --- End diff -- I wonder you wanted to link to `RecordPathLexer.g` instead of `RecordPathParser.g`. ---

[GitHub] nifi pull request #3188: NIFI-5829 Create Lookup Controller Services for Rec...

Github user ijokarumawak commented on a diff in the pull request:

https://github.com/apache/nifi/pull/3188#discussion_r237331455

--- Diff:

nifi-nar-bundles/nifi-scripting-bundle/nifi-scripting-processors/src/main/java/org/apache/nifi/record/script/ScriptedRecordSetWriter.java

---

@@ -60,12 +61,16 @@ public void onEnabled(final ConfigurationContext

context) {

super.onEnabled(context);

}

-

@Override

public RecordSetWriter createWriter(ComponentLog logger, RecordSchema

schema, OutputStream out) throws SchemaNotFoundException, IOException {

+return createWriter(new HashMap<>(), logger, schema, out);

--- End diff --

`Collections.emptyMap()` would be better than `new HashMap<>()` to avoid

unnecessary instance creation.

---

[GitHub] nifi issue #3184: NIFI-5845: Add support for OTHER and SQLXML JDBC types to ...

Github user ijokarumawak commented on the issue: https://github.com/apache/nifi/pull/3184 LGTM +1. Merging. Thanks @mattyb149! ---

[GitHub] nifi pull request #3182: NIFI-5838 - Improve the schema validation method in...

Github user ijokarumawak commented on a diff in the pull request:

https://github.com/apache/nifi/pull/3182#discussion_r237317639

--- Diff:

nifi-nar-bundles/nifi-kite-bundle/nifi-kite-processors/src/main/java/org/apache/nifi/processors/kite/AbstractKiteProcessor.java

---

@@ -101,38 +100,38 @@ protected static Schema getSchema(String

uriOrLiteral, Configuration conf) {

return parseSchema(uriOrLiteral);

}

+if(uri.getScheme() == null) {

+throw new SchemaNotFoundException("If the schema is not a JSON

string, a scheme must be specified in the URI "

++ "(ex: dataset:, view:, resource:, file:, hdfs:,

etc).");

+}

+

try {

if ("dataset".equals(uri.getScheme()) ||

"view".equals(uri.getScheme())) {

return

Datasets.load(uri).getDataset().getDescriptor().getSchema();

} else if ("resource".equals(uri.getScheme())) {

-try (InputStream in =

Resources.getResource(uri.getSchemeSpecificPart())

-.openStream()) {

+try (InputStream in =

Resources.getResource(uri.getSchemeSpecificPart()).openStream()) {

return parseSchema(uri, in);

}

} else {

// try to open the file

Path schemaPath = new Path(uri);

-FileSystem fs = schemaPath.getFileSystem(conf);

-try (InputStream in = fs.open(schemaPath)) {

+try (InputStream in =

schemaPath.getFileSystem(conf).open(schemaPath)) {

--- End diff --

This statement doesn't make FileSystem.close gets called. Please change it

to

```

try (FileSystem fs = schemaPath.getFileSystem(conf); InputStream in =

fs.open(schemaPath)) {

```

This way, both in.close() and fs.close() will be called.

---

[GitHub] nifi pull request #3184: NIFI-5845: Add support for OTHER and SQLXML JDBC ty...

Github user ijokarumawak commented on a diff in the pull request:

https://github.com/apache/nifi/pull/3184#discussion_r236940096

--- Diff:

nifi-nar-bundles/nifi-hive-bundle/nifi-hive-processors/src/main/java/org/apache/nifi/util/hive/HiveJdbcCommon.java

---

@@ -402,6 +409,9 @@ public static long convertToCsvStream(final ResultSet

rs, final OutputStream out

rowValues.add("");

}

break;

+case SQLXML:

+

rowValues.add(StringEscapeUtils.escapeCsv(((SQLXML) value).getString()));

--- End diff --

Shouldn't we take care of null `value` here?

---

[GitHub] nifi pull request #3184: NIFI-5845: Add support for OTHER and SQLXML JDBC ty...

Github user ijokarumawak commented on a diff in the pull request:

https://github.com/apache/nifi/pull/3184#discussion_r236940080

--- Diff:

nifi-nar-bundles/nifi-hive-bundle/nifi-hive3-processors/src/main/java/org/apache/nifi/util/hive/HiveJdbcCommon.java

---

@@ -402,6 +409,9 @@ public static long convertToCsvStream(final ResultSet

rs, final OutputStream out

rowValues.add("");

}

break;

+case SQLXML:

+

rowValues.add(StringEscapeUtils.escapeCsv(((java.sql.SQLXML)

value).getString()));

--- End diff --

Shouldn't we take care of null `value` here, too?

---

[GitHub] nifi issue #3182: NIFI-5838 - Improve the schema validation method in Kite p...

Github user ijokarumawak commented on the issue: https://github.com/apache/nifi/pull/3182 @pvillard31 I understand the intent of this change and it looks good if we want to invalidate schema URL setting if it doesn't have URI.schema. Just wondering if we close the `FileSystem fs`, too, the lingering `BLOCKED on org.apache.hadoop.ipc.Client$Connection` threads can be discarded? Current code only closes opened input streams, but doesn't close FileSystem. ---

[GitHub] nifi pull request #3179: NIFI-5834: Restore default PutHiveQL error handling...

Github user ijokarumawak commented on a diff in the pull request:

https://github.com/apache/nifi/pull/3179#discussion_r236571657

--- Diff:

nifi-nar-bundles/nifi-hive-bundle/nifi-hive3-processors/src/main/java/org/apache/nifi/processors/hive/PutHive3QL.java

---

@@ -148,7 +148,23 @@ public void constructProcess() {

if (e instanceof SQLNonTransientException) {

return ErrorTypes.InvalidInput;

} else if (e instanceof SQLException) {

-return ErrorTypes.TemporalFailure;

+// Use the SQLException's vendor code for guidance -- see

Hive's ErrorMsg class for details on error codes

+int errorCode = ((SQLException) e).getErrorCode();

--- End diff --

I think that's a good idea :)

---

[GitHub] nifi pull request #3180: NIFI-5833 Treat GetTwitter API properties as sensit...

Github user ijokarumawak commented on a diff in the pull request:

https://github.com/apache/nifi/pull/3180#discussion_r235285563

--- Diff:

nifi-nar-bundles/nifi-social-media-bundle/nifi-twitter-processors/src/main/java/org/apache/nifi/processors/twitter/GetTwitter.java

---

@@ -56,24 +67,10 @@

import org.apache.nifi.processor.io.OutputStreamCallback;

import org.apache.nifi.processor.util.StandardValidators;

-import com.twitter.hbc.ClientBuilder;

-import com.twitter.hbc.core.Client;

-import com.twitter.hbc.core.Constants;

-import com.twitter.hbc.core.endpoint.Location ;

-import com.twitter.hbc.core.endpoint.Location.Coordinate ;

-import com.twitter.hbc.core.endpoint.StatusesFilterEndpoint;

-import com.twitter.hbc.core.endpoint.StatusesFirehoseEndpoint;

-import com.twitter.hbc.core.endpoint.StatusesSampleEndpoint;

-import com.twitter.hbc.core.endpoint.StreamingEndpoint;

-import com.twitter.hbc.core.event.Event;

-import com.twitter.hbc.core.processor.StringDelimitedProcessor;

-import com.twitter.hbc.httpclient.auth.Authentication;

-import com.twitter.hbc.httpclient.auth.OAuth1;

-

@SupportsBatching

@InputRequirement(Requirement.INPUT_FORBIDDEN)

@Tags({"twitter", "tweets", "social media", "status", "json"})

-@CapabilityDescription("Pulls status changes from Twitter's streaming API")

+@CapabilityDescription("Pulls status changes from Twitter's streaming API.

In versions starting with 1.9.0, the Consumer Key and Access Token are marked

as sensitive according to

https://developer.twitter.com/en/docs/basics/authentication/guides/securing-keys-and-tokens;)

--- End diff --

I'd move the additional note to each properties as it's not a capability

description.

---

[GitHub] nifi pull request #3180: NIFI-5833 Treat GetTwitter API properties as sensit...

Github user ijokarumawak commented on a diff in the pull request:

https://github.com/apache/nifi/pull/3180#discussion_r235285755

--- Diff:

nifi-nar-bundles/nifi-framework-bundle/nifi-framework/nifi-framework-core/src/test/groovy/org/apache/nifi/controller/serialization/FlowFromDOMFactoryTest.groovy

---

@@ -135,6 +140,83 @@ class FlowFromDOMFactoryTest {

assert msg.message =~ "Check that the ${KEY} value in

nifi.properties matches the value used to encrypt the flow.xml.gz file"

}

+@Test

+void

testShouldDecryptSensitiveFlowValueRegardlessOfPropertySensitiveStatus() throws

Exception {

+// Arrange

+

+// Create a mock Element object to be parsed

+

+// TODO: Mock call to StandardFlowSynchronizer#readFlowFromDisk()

+final String FLOW_XML = """

+

+ 10

+ 5

+

+

+32aeba59-0167-1000-fc76-847bf5d10d73

+NiFi Flow

+

+

+

+ 32af5e4e-0167-1000-ad5f-c79ff57c851e

+ Example Processor

+

+

+

+ org.apache.nifi.processors.test.ExampleProcessor

+

+org.apache.nifi

+nifi-test-nar

+1.9.0-SNAPSHOT

+

+ 1

+ 0 sec

+ 30 sec

+ 1 sec

+ WARN

+ false

+ STOPPED

+ TIMER_DRIVEN

+ ALL

+ 0

+

+Plaintext Property

+plain value

+

+

+Sensitive Property

+

enc{29077eedc9af7515cc3e0d2d29a93a5cbb059164876948458fd0c890009c8661}

+

+

+

+

+

+

+"""

+

+// TODO: Mock call to StandardFlowSynchronizer#parseFlowBytes()

--- End diff --

Do we still need this TODO comment?

---

[GitHub] nifi pull request #3180: NIFI-5833 Treat GetTwitter API properties as sensit...

Github user ijokarumawak commented on a diff in the pull request:

https://github.com/apache/nifi/pull/3180#discussion_r235285625

--- Diff:

nifi-nar-bundles/nifi-framework-bundle/nifi-framework/nifi-framework-core/src/test/groovy/org/apache/nifi/controller/serialization/FlowFromDOMFactoryTest.groovy

---

@@ -135,6 +140,83 @@ class FlowFromDOMFactoryTest {

assert msg.message =~ "Check that the ${KEY} value in

nifi.properties matches the value used to encrypt the flow.xml.gz file"

}

+@Test

+void

testShouldDecryptSensitiveFlowValueRegardlessOfPropertySensitiveStatus() throws

Exception {

+// Arrange

+

+// Create a mock Element object to be parsed

+

+// TODO: Mock call to StandardFlowSynchronizer#readFlowFromDisk()

--- End diff --

Do we still need this TODO comment?

---

[GitHub] nifi pull request #3179: NIFI-5834: Restore default PutHiveQL error handling...

Github user ijokarumawak commented on a diff in the pull request:

https://github.com/apache/nifi/pull/3179#discussion_r235277747

--- Diff:

nifi-nar-bundles/nifi-hive-bundle/nifi-hive3-processors/src/main/java/org/apache/nifi/processors/hive/PutHive3QL.java

---

@@ -148,7 +148,23 @@ public void constructProcess() {

if (e instanceof SQLNonTransientException) {

return ErrorTypes.InvalidInput;

} else if (e instanceof SQLException) {

-return ErrorTypes.TemporalFailure;

+// Use the SQLException's vendor code for guidance -- see

Hive's ErrorMsg class for details on error codes

+int errorCode = ((SQLException) e).getErrorCode();

--- End diff --

When a SQLException is thrown, NiFi logs don't show what the SQLException

error code is. I suggest adding a debug log to write errorCode here.

Alternatively we can update `PutHive3QL.onFlowFileError` method to add

errorCode in case the exception is SQLException.

Being able to know the actual error code will make investigation process

easier in the future.

---

[GitHub] nifi pull request #3179: NIFI-5834: Restore default PutHiveQL error handling...

Github user ijokarumawak commented on a diff in the pull request:

https://github.com/apache/nifi/pull/3179#discussion_r235274647

--- Diff:

nifi-nar-bundles/nifi-hive-bundle/nifi-hive3-processors/src/main/java/org/apache/nifi/processors/hive/PutHive3QL.java

---

@@ -148,7 +148,23 @@ public void constructProcess() {

if (e instanceof SQLNonTransientException) {

return ErrorTypes.InvalidInput;

} else if (e instanceof SQLException) {

-return ErrorTypes.TemporalFailure;

+// Use the SQLException's vendor code for guidance -- see

Hive's ErrorMsg class for details on error codes

+int errorCode = ((SQLException) e).getErrorCode();

+if (errorCode >= 1 && errorCode < 2) {

+return ErrorTypes.InvalidInput;

+} else if (errorCode >= 2 && errorCode < 3) {

+return ErrorTypes.TemporalFailure;

--- End diff --

Based on Hive source code and the detail of these errors, I think we should

map 2 error code to InvalidInput as retrying will not succeed.

> 2 to 2: Runtime errors where Hive believes that retries are

unlikely to succeed.

https://github.com/apache/hive/blob/master/ql/src/java/org/apache/hadoop/hive/ql/ErrorMsg.java#L48

---

[GitHub] nifi issue #3179: NIFI-5834: Restore default PutHiveQL error handling behavi...

Github user ijokarumawak commented on the issue: https://github.com/apache/nifi/pull/3179 Reviewing.. ---

[GitHub] nifi issue #3177: NIFI-5828: Documents behavior of ExecuteSQL attrs when Max...

Github user ijokarumawak commented on the issue: https://github.com/apache/nifi/pull/3177 LGTM +1, merging. Thanks @colindean ! ---

[GitHub] nifi issue #3172: NIFI-5823: Fixes typo in min idle connections property nam...

Github user ijokarumawak commented on the issue: https://github.com/apache/nifi/pull/3172 LGTM +1, merged to master. Good catch before releasing :) ---

[GitHub] nifi issue #3170: NIFI-5652: Fixed LogMessage when logging level is disabled

Github user ijokarumawak commented on the issue: https://github.com/apache/nifi/pull/3170 LGTM +1, merging. Thanks @mattyb149! ---

[GitHub] nifi issue #3169: NIFI-5815 - PutORC processor 'Restricted' still requires a...

Github user ijokarumawak commented on the issue: https://github.com/apache/nifi/pull/3169 LGTM +1, merging. Thanks @pvillard31! ---

[GitHub] nifi pull request #3169: NIFI-5815 - PutORC processor 'Restricted' still req...

Github user ijokarumawak commented on a diff in the pull request:

https://github.com/apache/nifi/pull/3169#discussion_r232895405

--- Diff:

nifi-nar-bundles/nifi-hive-bundle/nifi-hive3-processors/src/main/java/org/apache/nifi/processors/orc/PutORC.java

---

@@ -63,7 +65,11 @@

+ "the path is the directory that contains this ORC file

on HDFS. For example, this processor can send flow files downstream to

ReplaceText to set the content "

+ "to this DDL (plus the LOCATION clause as described),

then to PutHiveQL processor to create the table if it doesn't exist.")

})

-@Restricted("Provides operator the ability to write to any file that NiFi

has access to in HDFS or the local filesystem.")

+@Restricted(restrictions = {

+@Restriction(

+requiredPermission = RequiredPermission.WRITE_FILESYSTEM,

+explanation = "Provides operator the ability to delete any

file that NiFi has access to in HDFS or the local filesystem.")

--- End diff --

Is the term 'delete' correct?

---

[GitHub] nifi issue #3163: NIFI-5809: If QueryRecord has a single-column projection a...

Github user ijokarumawak commented on the issue: https://github.com/apache/nifi/pull/3163 LGTM, +1, merging. Thanks for fixing this @markap14! ---

[GitHub] nifi issue #3163: NIFI-5809: If QueryRecord has a single-column projection a...

Github user ijokarumawak commented on the issue: https://github.com/apache/nifi/pull/3163 Reviewing ---

[GitHub] nifi issue #3160: NIFI-5805: Pool the BinaryEncoders used by the WriteAvroRe...

Github user ijokarumawak commented on the issue: https://github.com/apache/nifi/pull/3160 @markap14 The updates look good to me. I'm +1 on this PR. However, there are some conflicts with the recent change and I think it's safe for you to resolve the conflict instead of me doing that. Please address conflicts and merge it. Thanks! ---

[GitHub] nifi pull request #3113: NIFI-5724 making the database connection autocommit...

Github user ijokarumawak commented on a diff in the pull request:

https://github.com/apache/nifi/pull/3113#discussion_r232159628

--- Diff:

nifi-nar-bundles/nifi-standard-bundle/nifi-standard-processors/src/main/java/org/apache/nifi/processors/standard/PutSQL.java

---

@@ -189,13 +201,40 @@

properties.add(CONNECTION_POOL);

properties.add(SQL_STATEMENT);

properties.add(SUPPORT_TRANSACTIONS);

+properties.add(AUTO_COMMIT);

properties.add(TRANSACTION_TIMEOUT);

properties.add(BATCH_SIZE);

properties.add(OBTAIN_GENERATED_KEYS);

properties.add(RollbackOnFailure.ROLLBACK_ON_FAILURE);

return properties;

}

+@Override

+protected final Collection

customValidate(ValidationContext context) {

+final Collection results = new ArrayList<>();

+final String support_transactions =

context.getProperty(SUPPORT_TRANSACTIONS).getValue();

+final String rollback_on_failure =

context.getProperty(RollbackOnFailure.ROLLBACK_ON_FAILURE).getValue();

+final String auto_commit =

context.getProperty(AUTO_COMMIT).getValue();

+

+if(auto_commit.equalsIgnoreCase("true")) {

+if(support_transactions.equalsIgnoreCase("true")) {

+results.add(new ValidationResult.Builder()

+

.subject(SUPPORT_TRANSACTIONS.getDisplayName())

+.explanation(format("'%s' cannot be set to

'true' when '%s' is also set to 'true'."

++ "Transactions for batch updates

cannot be supported when auto commit is set to 'true'",

SUPPORT_TRANSACTIONS.getDisplayName(), AUTO_COMMIT.getDisplayName()))

+.build());

+}

+if(rollback_on_failure.equalsIgnoreCase("true")) {

+results.add(new ValidationResult.Builder()

+

.subject(RollbackOnFailure.ROLLBACK_ON_FAILURE.getDisplayName())

+.explanation(format("'%s' cannot be set to 'true'

when '%s' is also set to 'true'."

++ "Transaction rollbacks for batch updates

cannot be supported when auto commit is set to 'true'",

RollbackOnFailure.ROLLBACK_ON_FAILURE.getDisplayName(),

AUTO_COMMIT.getDisplayName()))

--- End diff --

This line causes following check-style error. Please build with

`-Pcontrib-check` to confirm styles locally.

```

src/main/java/org/apache/nifi/processors/standard/PutSQL.java:[231] (sizes)

LineLength: Line is longer than 200 characters (found 217).

```

---

[GitHub] nifi pull request #3113: NIFI-5724 making the database connection autocommit...

Github user ijokarumawak commented on a diff in the pull request:

https://github.com/apache/nifi/pull/3113#discussion_r232166089

--- Diff:

nifi-nar-bundles/nifi-standard-bundle/nifi-standard-processors/src/main/java/org/apache/nifi/processors/standard/PutSQL.java

---

@@ -134,6 +138,14 @@

.expressionLanguageSupported(ExpressionLanguageScope.FLOWFILE_ATTRIBUTES)

.build();

+static final PropertyDescriptor AUTO_COMMIT = new

PropertyDescriptor.Builder()

+.name("database-session-autocommit")

+.displayName("Database session autocommit value")

--- End diff --

I'm going to change this displayName to "Database Session AutoCommit" to

align capitalization with other properties.

---

[GitHub] nifi issue #3161: Increase timeouts in unit tests to avoid frequent failures

Github user ijokarumawak commented on the issue: https://github.com/apache/nifi/pull/3161 @markap14 This PR has been merged to master branch, but still open since there was not 'This closes ...' comment. Do you mind closing this? Thanks! ---

[GitHub] nifi pull request #3160: NIFI-5805: Pool the BinaryEncoders used by the Writ...

Github user ijokarumawak commented on a diff in the pull request:

https://github.com/apache/nifi/pull/3160#discussion_r232119197

--- Diff:

nifi-nar-bundles/nifi-standard-services/nifi-record-serialization-services-bundle/nifi-record-serialization-services/src/main/java/org/apache/nifi/avro/WriteAvroResultWithExternalSchema.java

---

@@ -33,24 +28,34 @@

import org.apache.nifi.serialization.record.Record;

import org.apache.nifi.serialization.record.RecordSchema;

+import java.io.BufferedOutputStream;

+import java.io.IOException;

+import java.io.OutputStream;

+import java.util.Map;

+import java.util.concurrent.BlockingQueue;

+

public class WriteAvroResultWithExternalSchema extends

AbstractRecordSetWriter {

private final SchemaAccessWriter schemaAccessWriter;

private final RecordSchema recordSchema;

private final Schema avroSchema;

private final BinaryEncoder encoder;

private final OutputStream buffered;

private final DatumWriter datumWriter;

+private final BlockingQueue recycleQueue;

public WriteAvroResultWithExternalSchema(final Schema avroSchema,

final RecordSchema recordSchema,

-final SchemaAccessWriter schemaAccessWriter, final OutputStream

out) throws IOException {

+final SchemaAccessWriter schemaAccessWriter, final OutputStream

out, final BlockingQueue recycleQueue) {

super(out);

this.recordSchema = recordSchema;

this.schemaAccessWriter = schemaAccessWriter;

this.avroSchema = avroSchema;

this.buffered = new BufferedOutputStream(out);

+this.recycleQueue = recycleQueue;

+

+BinaryEncoder reusableEncoder = recycleQueue.poll();

+encoder = EncoderFactory.get().blockingBinaryEncoder(buffered,

reusableEncoder);

--- End diff --

Probably, we should add a debug log here to provide information whether

current number of pool size fits the actual usage. If there are more null

reusableEncorder and user want to improve performance, then they can increase

pool size ... etc.

---

[GitHub] nifi pull request #3160: NIFI-5805: Pool the BinaryEncoders used by the Writ...

Github user ijokarumawak commented on a diff in the pull request: https://github.com/apache/nifi/pull/3160#discussion_r232116819 --- Diff: nifi-nar-bundles/nifi-standard-services/nifi-record-serialization-services-bundle/nifi-record-serialization-services/src/main/java/org/apache/nifi/avro/AvroRecordSetWriter.java --- @@ -68,16 +76,40 @@ .required(true) .build(); +static final PropertyDescriptor ENCODER_POOL_SIZE = new Builder() --- End diff -- Since this configuration is performance related and depends on environment, I'd suggest supporting variable registry EL. ---

[GitHub] nifi pull request #3160: NIFI-5805: Pool the BinaryEncoders used by the Writ...

Github user ijokarumawak commented on a diff in the pull request:

https://github.com/apache/nifi/pull/3160#discussion_r232117307

--- Diff:

nifi-nar-bundles/nifi-standard-services/nifi-record-serialization-services-bundle/nifi-record-serialization-services/src/main/java/org/apache/nifi/avro/AvroRecordSetWriter.java

---

@@ -68,16 +76,40 @@

.required(true)

.build();

+static final PropertyDescriptor ENCODER_POOL_SIZE = new Builder()

+.name("encoder-pool-size")

+.displayName("Encoder Pool Size")

+.description("Avro Writers require the use of an Encoder. Creation

of Encoders is expensive, but once created, they can be reused. This property

controls the maximum number of Encoders that" +

+" can be pooled and reused. Setting this value too small can

result in degraded performance, but setting it higher can result in more heap

being used.")

--- End diff --

Just for clarification, I'd suggest adding a note mentioning that, this

property doesn't have any effect with 'Embed Avro Schema' strategy.

---

[GitHub] nifi issue #3159: NIFI-5794 Allow empty string demarcator in Consume/Publish...

Github user ijokarumawak commented on the issue:

https://github.com/apache/nifi/pull/3159

LGTM +1. I was able to confirm the expected benefit of using an empty

demarcator string to combine multiple Avro formatted Kafka messages into a

single FlowFile. Thanks @pvillard31!

For those who want to use this improvement with older NiFi versions, using

EL `${empty}` can mimic the non-empty validation and result the same effect .

This assumes there's no variable named `empty`, the EL evaluation result will

be an empty string while this is a valid configuration since it's not an empty

property value.

---

[GitHub] nifi pull request #3158: NIFI-5802: Add QueryRecord nullable field support

GitHub user ijokarumawak opened a pull request: https://github.com/apache/nifi/pull/3158 NIFI-5802: Add QueryRecord nullable field support Thank you for submitting a contribution to Apache NiFi. In order to streamline the review of the contribution we ask you to ensure the following steps have been taken: ### For all changes: - [x] Is there a JIRA ticket associated with this PR? Is it referenced in the commit message? - [x] Does your PR title start with NIFI- where is the JIRA number you are trying to resolve? Pay particular attention to the hyphen "-" character. - [x] Has your PR been rebased against the latest commit within the target branch (typically master)? - [x] Is your initial contribution a single, squashed commit? ### For code changes: - [x] Have you ensured that the full suite of tests is executed via mvn -Pcontrib-check clean install at the root nifi folder? - [x] Have you written or updated unit tests to verify your changes? - [ ] If adding new dependencies to the code, are these dependencies licensed in a way that is compatible for inclusion under [ASF 2.0](http://www.apache.org/legal/resolved.html#category-a)? - [ ] If applicable, have you updated the LICENSE file, including the main LICENSE file under nifi-assembly? - [ ] If applicable, have you updated the NOTICE file, including the main NOTICE file found under nifi-assembly? - [ ] If adding new Properties, have you added .displayName in addition to .name (programmatic access) for each of the new properties? ### For documentation related changes: - [ ] Have you ensured that format looks appropriate for the output in which it is rendered? ### Note: Please ensure that once the PR is submitted, you check travis-ci for build issues and submit an update to your PR as soon as possible. You can merge this pull request into a Git repository by running: $ git pull https://github.com/ijokarumawak/nifi nifi-5802 Alternatively you can review and apply these changes as the patch at: https://github.com/apache/nifi/pull/3158.patch To close this pull request, make a commit to your master/trunk branch with (at least) the following in the commit message: This closes #3158 commit 8bf29d4c56fb1f507d987c8aea774e5345b286f7 Author: Koji Kawamura Date: 2018-11-08T03:10:36Z NIFI-5802: Add QueryRecord nullable field support ---

[GitHub] nifi pull request #3113: NIFI-5724 making the database connection autocommit...

Github user ijokarumawak commented on a diff in the pull request:

https://github.com/apache/nifi/pull/3113#discussion_r231743426

--- Diff:

nifi-nar-bundles/nifi-standard-bundle/nifi-standard-processors/src/main/java/org/apache/nifi/processors/standard/PutSQL.java

---

@@ -134,6 +134,14 @@

.expressionLanguageSupported(ExpressionLanguageScope.FLOWFILE_ATTRIBUTES)

.build();

+static final PropertyDescriptor AUTO_COMMIT = new

PropertyDescriptor.Builder()

+.name("database-session-autocommit")

+.displayName("Database session autocommit value")

+.description("The autocommit mode to set on the database

connection being used.")

+.allowableValues("true", "false")

+.defaultValue("false")

+.build();

--- End diff --

@viswaug Thanks for the snowflake doc link and explanation. Look forward to

see new commits for further review. In order to update your PR, you just need

to:

1. Make code changes

2. Add the changed files for a commit: `git add ` or `git add -A`

(all changed files)

3. Commit with some comments: `git commit`, this makes a commit to your

local branch

4. Push the commit to your remote branch: `git push origin

configurable_autocommit_putsql`. This command adds the new commit to this PR.

---

[GitHub] nifi issue #3110: NIFI-5752: Load balancing fails with wildcard certs

Github user ijokarumawak commented on the issue: https://github.com/apache/nifi/pull/3110 It looks good. +1. Merging. Thanks, @kotarot! ---

[GitHub] nifi issue #3122: NIFI-5777: Update the tag and the property of LogMessage

Github user ijokarumawak commented on the issue: https://github.com/apache/nifi/pull/3122 LGTM, +1. Merging. Thanks @kotarot ! ---

[GitHub] nifi issue #3127: NIFI-5787 Update ReadMe doc to start Nifi on windows

Github user ijokarumawak commented on the issue: https://github.com/apache/nifi/pull/3127 LGTM, +1, thanks for your contribution @brandonJY! ---

[GitHub] nifi pull request #3110: NIFI-5752: Load balancing fails with wildcard certs

Github user ijokarumawak commented on a diff in the pull request:

https://github.com/apache/nifi/pull/3110#discussion_r231011542

--- Diff:

nifi-nar-bundles/nifi-framework-bundle/nifi-framework/nifi-framework-core/src/main/java/org/apache/nifi/controller/queue/clustered/server/ClusterLoadBalanceAuthorizer.java

---

@@ -33,14 +42,27 @@

private final ClusterCoordinator clusterCoordinator;

private final EventReporter eventReporter;

+private final HostnameVerifier hostnameVerifier;

public ClusterLoadBalanceAuthorizer(final ClusterCoordinator

clusterCoordinator, final EventReporter eventReporter) {

this.clusterCoordinator = clusterCoordinator;

this.eventReporter = eventReporter;

+this.hostnameVerifier = new DefaultHostnameVerifier();

}

@Override

-public String authorize(final Collection clientIdentities)

throws NotAuthorizedException {

+public String authorize(SSLSocket sslSocket) throws

NotAuthorizedException, IOException {

+final SSLSession sslSession = sslSocket.getSession();

+

+final Set clientIdentities;

+try {

+clientIdentities = getCertificateIdentities(sslSession);

+} catch (final CertificateException e) {

+throw new IOException("Failed to extract Client Certificate",

e);

+}

+

+logger.debug("Will perform authorization against Client Identities

'{}'", clientIdentities);

+

if (clientIdentities == null) {

--- End diff --

The existing log message indicating that this block is meant for the case

where NiFi clustering is not secured (not sending data via SSLSocket). This

block contradicts with the other block such as following

getCertificateIdentities method:

```

final Certificate[] certs = sslSession.getPeerCertificates();

if (certs == null || certs.length == 0) {

throw new SSLPeerUnverifiedException("No certificates found");

}

```

If we care about `clientIdentities` being null, then we should throw

`SSLPeerUnverifiedException("No client identities found");` instead of

authorizing it. Having said that, I believe removing this block is safe as

`clientIdentities` are populated using `Collectors.toSet`. If no SAN is found,

the value will be an empty set instead of null.

---

[GitHub] nifi issue #3125: NIFI-5677 Added note to clarify why modifying/creating var...

Github user ijokarumawak commented on the issue: https://github.com/apache/nifi/pull/3125 LGTM, +1. Merging. Thanks @andrewmlim! ---

[GitHub] nifi-registry pull request #146: NIFIREG-205: Allow Git repo to delete a flo...

GitHub user ijokarumawak opened a pull request: https://github.com/apache/nifi-registry/pull/146 NIFIREG-205: Allow Git repo to delete a flow with snapshot version 0 You can merge this pull request into a Git repository by running: $ git pull https://github.com/ijokarumawak/nifi-registry nifireg-205 Alternatively you can review and apply these changes as the patch at: https://github.com/apache/nifi-registry/pull/146.patch To close this pull request, make a commit to your master/trunk branch with (at least) the following in the commit message: This closes #146 commit e225f8db39c85467bb95271b495887b6cceba2d6 Author: Koji Kawamura Date: 2018-11-06T05:24:08Z NIFIREG-205: Allow Git repo to delete a flow with snapshot version 0 ---

[GitHub] nifi pull request #3134: NIFI-5792: Remember created versioned flow informat...

GitHub user ijokarumawak opened a pull request: https://github.com/apache/nifi/pull/3134 NIFI-5792: Remember created versioned flow information - Before this fix, NiFi loses information about created versioned flow in case of subsequent snapshot creation failure, and NiFi API returned an error response - This commit makes: - The created versioned Flow information is stored even if subsequent snapshot creation fails - NiFi API to return a successful 200 response in that case, but return versioned flow status as SYNC_FAILURE with an explanation. NiFi UI shows a popup error dialog with the explanation. - Versioned flow status will be LOCALLY_MODIFIED if the latest version is 0. Thank you for submitting a contribution to Apache NiFi. In order to streamline the review of the contribution we ask you to ensure the following steps have been taken: ### For all changes: - [ ] Is there a JIRA ticket associated with this PR? Is it referenced in the commit message? - [ ] Does your PR title start with NIFI- where is the JIRA number you are trying to resolve? Pay particular attention to the hyphen "-" character. - [ ] Has your PR been rebased against the latest commit within the target branch (typically master)? - [ ] Is your initial contribution a single, squashed commit? ### For code changes: - [ ] Have you ensured that the full suite of tests is executed via mvn -Pcontrib-check clean install at the root nifi folder? - [ ] Have you written or updated unit tests to verify your changes? - [ ] If adding new dependencies to the code, are these dependencies licensed in a way that is compatible for inclusion under [ASF 2.0](http://www.apache.org/legal/resolved.html#category-a)? - [ ] If applicable, have you updated the LICENSE file, including the main LICENSE file under nifi-assembly? - [ ] If applicable, have you updated the NOTICE file, including the main NOTICE file found under nifi-assembly? - [ ] If adding new Properties, have you added .displayName in addition to .name (programmatic access) for each of the new properties? ### For documentation related changes: - [ ] Have you ensured that format looks appropriate for the output in which it is rendered? ### Note: Please ensure that once the PR is submitted, you check travis-ci for build issues and submit an update to your PR as soon as possible. You can merge this pull request into a Git repository by running: $ git pull https://github.com/ijokarumawak/nifi nifi-5792 Alternatively you can review and apply these changes as the patch at: https://github.com/apache/nifi/pull/3134.patch To close this pull request, make a commit to your master/trunk branch with (at least) the following in the commit message: This closes #3134 commit 2eb5f2c42f0cbc6a793cd91b1e821c4acdd10d47 Author: Koji Kawamura Date: 2018-11-06T05:08:23Z NIFI-5792: Remember created versioned flow information - Before this fix, NiFi loses information about created versioned flow in case of subsequent snapshot creation failure, and NiFi API returned an error response - This commit makes: - The created versioned Flow information is stored even if subsequent snapshot creation fails - NiFi API to return a successful 200 response in that case, but return versioned flow status as SYNC_FAILURE with an explanation. NiFi UI shows a popup error dialog with the explanation. - Versioned flow status will be LOCALLY_MODIFIED if the latest version is 0. ---

[GitHub] nifi pull request #3125: NIFI-5677 Added note to clarify why modifying/creat...

Github user ijokarumawak commented on a diff in the pull request: https://github.com/apache/nifi/pull/3125#discussion_r230623728 --- Diff: nifi-docs/src/main/asciidoc/user-guide.adoc --- @@ -1928,7 +1928,9 @@ The following actions are not considered local changes: * modifying sensitive property values * modifying remote process group URLs * updating a processor that was referencing a non-existent controller service to reference an externally available controller service -* modifying variables +* creating or modifying variables --- End diff -- A bit nit-picky, but how about deleting variables? ---

[GitHub] nifi pull request #3122: NIFI-5777: Update the tag and the property of LogMe...

Github user ijokarumawak commented on a diff in the pull request:

https://github.com/apache/nifi/pull/3122#discussion_r230526767

--- Diff:

nifi-nar-bundles/nifi-standard-bundle/nifi-standard-processors/src/test/java/org/apache/nifi/processors/standard/TestLogMessage.java

---

@@ -57,7 +57,7 @@ MockComponentLog getMockComponentLog() {

public void before() throws InitializationException {

testableLogMessage = new TestableLogMessage();

runner = TestRunners.newTestRunner(testableLogMessage);

-

+runner.setValidateExpressionUsage(false);

--- End diff --

Sorry for the confusion. I was just looking at the source code. When I run

the processor, I realized that the processor doesn't allow EL. The logLevel is

shown as a free text input, and I can input EL, but validation failed because

of the configured allowable values.

I still think changing log level by EL would be nice:

My suggestion is, changing:

```

.description("The Log Level to use when logging the message")

.allowableValues(MessageLogLevel.values())

```

to

```

.description("The Log Level to use when logging the message: "

+ Arrays.toString(MessageLogLevel.values()))

.addValidator(StandardValidators.NON_EMPTY_VALIDATOR)

```

How do you guys think?

---

[GitHub] nifi pull request #3110: NIFI-5752: Load balancing fails with wildcard certs

Github user ijokarumawak commented on a diff in the pull request:

https://github.com/apache/nifi/pull/3110#discussion_r230287180

--- Diff:

nifi-nar-bundles/nifi-framework-bundle/nifi-framework/nifi-framework-core/src/main/java/org/apache/nifi/controller/queue/clustered/server/ClusterLoadBalanceAuthorizer.java

---

@@ -57,11 +79,35 @@ public String authorize(final Collection

clientIdentities) throws NotAut

}

}

-final String message = String.format("Authorization failed for

Client ID's %s to Load Balance data because none of the ID's are known Cluster

Node Identifiers",

-clientIdentities);

+// If there are no matches of Client IDs, try to verify it by

HostnameVerifier. In this way, we can support wildcard certificates.

+for (final String nodeId : nodeIds) {

+if (hostnameVerifier.verify(nodeId, sslSession)) {

+final String clientId =

sslSocket.getInetAddress().getHostName();

+logger.debug("The request was verified with node '{}'. The

hostname derived from the socket is '{}'. Authorizing Client to Load Balance

data", nodeId, clientId);

+return clientId;

+}

+}

+

+final String message = String.format("Authorization failed for

Client ID's to Load Balance data because none of the ID's are known Cluster

Node Identifiers");

--- End diff --

We don't have to use `String.format()` here, please the String to

`logger.warn()` directly.

---

[GitHub] nifi pull request #3110: NIFI-5752: Load balancing fails with wildcard certs

Github user ijokarumawak commented on a diff in the pull request:

https://github.com/apache/nifi/pull/3110#discussion_r230287682

--- Diff:

nifi-nar-bundles/nifi-framework-bundle/nifi-framework/nifi-framework-core/src/main/java/org/apache/nifi/controller/queue/clustered/server/ClusterLoadBalanceAuthorizer.java

---

@@ -33,14 +42,27 @@

private final ClusterCoordinator clusterCoordinator;

private final EventReporter eventReporter;

+private final HostnameVerifier hostnameVerifier;

public ClusterLoadBalanceAuthorizer(final ClusterCoordinator

clusterCoordinator, final EventReporter eventReporter) {

this.clusterCoordinator = clusterCoordinator;

this.eventReporter = eventReporter;

+this.hostnameVerifier = new DefaultHostnameVerifier();

}

@Override

-public String authorize(final Collection clientIdentities)

throws NotAuthorizedException {

+public String authorize(SSLSocket sslSocket) throws

NotAuthorizedException, IOException {

+final SSLSession sslSession = sslSocket.getSession();

+

+final Set clientIdentities;

+try {

+clientIdentities = getCertificateIdentities(sslSession);

+} catch (final CertificateException e) {

+throw new IOException("Failed to extract Client Certificate",

e);

+}

+

+logger.debug("Will perform authorization against Client Identities

'{}'", clientIdentities);

+

if (clientIdentities == null) {

--- End diff --

Now we only call this `authorize()` method if socket is a SSLSocket. We can

remove this block.

---

[GitHub] nifi pull request #3122: NIFI-5777: Update the tag and the property of LogMe...

Github user ijokarumawak commented on a diff in the pull request:

https://github.com/apache/nifi/pull/3122#discussion_r230257806

--- Diff:

nifi-nar-bundles/nifi-standard-bundle/nifi-standard-processors/src/test/java/org/apache/nifi/processors/standard/TestLogMessage.java

---

@@ -57,7 +57,7 @@ MockComponentLog getMockComponentLog() {

public void before() throws InitializationException {

testableLogMessage = new TestableLogMessage();

runner = TestRunners.newTestRunner(testableLogMessage);

-

+runner.setValidateExpressionUsage(false);

--- End diff --

Hmm, I think changing logLevel based on expression makes sense. For

example, if you want to debug for a particular type of FlowFiles, you'd use EL

to write WARN level log for specific attribute value and use TRACE/DEBUG for

others to minimize the noise. I don't think specifying log level by text is a

critical issue..

---

[GitHub] nifi-registry issue #144: NIFIREG-209 Rebuild metadata DB from FlowPersisten...

Github user ijokarumawak commented on the issue: https://github.com/apache/nifi-registry/pull/144 @bbende This is really cool! I was able to: 1. Setup two NiFi Registry environment using the same remote Git repository, but different branches, dev and prod. 2. Create new flow versions at dev and push it to remote origin/dev branch 3. Merge commits to origin/prod branch. Then remove database dir at prod environment to generate it from updated Git commits. 4. After restarting the prod NiFi Registry, I can import the new flows from dev to prod environment. 5. Updating existing flow scenario also worked. Even if I restored database from Git commits, NiFi was able to keep notice new version and change the deployed PG to a new version. Haven't looked at the detail of implementation, but from functional point of view, I'm a huge +1! Great job! ---

[GitHub] nifi pull request #3113: NIFI-5724 making the database connection autocommit...

Github user ijokarumawak commented on a diff in the pull request:

https://github.com/apache/nifi/pull/3113#discussion_r229960433

--- Diff:

nifi-nar-bundles/nifi-standard-bundle/nifi-standard-processors/src/main/java/org/apache/nifi/processors/standard/PutSQL.java

---

@@ -134,6 +134,14 @@

.expressionLanguageSupported(ExpressionLanguageScope.FLOWFILE_ATTRIBUTES)

.build();

+static final PropertyDescriptor AUTO_COMMIT = new

PropertyDescriptor.Builder()

+.name("database-session-autocommit")

+.displayName("Database session autocommit value")

+.description("The autocommit mode to set on the database

connection being used.")

+.allowableValues("true", "false")

+.defaultValue("false")

+.build();

--- End diff --

@viswaug That approach may work, too. However, I prefer exposing

auto-commit property and let user to enable it explicitly. And protect existing

logics work as expected by adding following custom validation:

- if auto-commit is enabled:

- `Support Fragmented Transactions` should be false

- && `Rollback On Failure` should be false

This way even if we forgot about other conditions that require auto-commit,

user can disable auto-commit to work-around. PutSQL has been there for long

time and used by so many flows. We can not introduce any degrading issue by

supporting auto-commit.

BTW, the description of Snowflake database issue is not clear enough to me

on how auto-commit setting relates to the issue.

> This is causing an issue with the snowflake DB where abruptly

disconnected sessions do not release the locks they have taken.

Do you have any existing Snowflake issue or resource that we can refer? Or

if not have you consider reporting the issue to Snowflake project?

And also, if you have any use-case (other than avoiding Snowflake issue)

where auto-commit is preferable, please add such to auto-commit property

description.

---

[GitHub] nifi pull request #3120: NIFI-5776: ListSFTP should log SSH_FXP_STATUS id va...