[GitHub] [kafka] mjsax merged pull request #9614: KAFKA-10500: Add failed-stream-threads metric for adding + removing stream threads

mjsax merged pull request #9614: URL: https://github.com/apache/kafka/pull/9614 This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. For queries about this service, please contact Infrastructure at: us...@infra.apache.org

[GitHub] [kafka] chia7712 commented on a change in pull request #9667: MINOR: Do not print log4j for memberId required

chia7712 commented on a change in pull request #9667:

URL: https://github.com/apache/kafka/pull/9667#discussion_r535900161

##

File path:

clients/src/main/java/org/apache/kafka/clients/consumer/internals/AbstractCoordinator.java

##

@@ -465,7 +465,11 @@ boolean joinGroupIfNeeded(final Timer timer) {

}

} else {

final RuntimeException exception = future.exception();

-log.info("Rebalance failed.", exception);

+

+if (!(exception instanceof MemberIdRequiredException)) {

Review comment:

Also, does this need a comment explaining why

```MemberIdRequiredException``` is excluded?

##

File path:

clients/src/main/java/org/apache/kafka/clients/consumer/internals/AbstractCoordinator.java

##

@@ -465,7 +465,11 @@ boolean joinGroupIfNeeded(final Timer timer) {

}

} else {

final RuntimeException exception = future.exception();

-log.info("Rebalance failed.", exception);

+

+if (!(exception instanceof MemberIdRequiredException)) {

Review comment:

Is ```JoinGroupResponseHandler``` a better place to log the error? For

example, the error ```UNKNOWN_MEMBER_ID``` is logged twice.

(https://github.com/apache/kafka/blob/trunk/clients/src/main/java/org/apache/kafka/clients/consumer/internals/AbstractCoordinator.java#L605)

[jira] [Assigned] (KAFKA-10017) Flaky Test EosBetaUpgradeIntegrationTest.shouldUpgradeFromEosAlphaToEosBeta

[ https://issues.apache.org/jira/browse/KAFKA-10017?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

Matthias J. Sax reassigned KAFKA-10017:

Assignee: Matthias J. Sax (was: A. Sophie Blee-Goldman)

> Flaky Test EosBetaUpgradeIntegrationTest.shouldUpgradeFromEosAlphaToEosBeta
>
> Key: KAFKA-10017
> URL: https://issues.apache.org/jira/browse/KAFKA-10017
> Project: Kafka
> Issue Type: Bug
> Components: streams
> Affects Versions: 2.6.0
> Reporter: A. Sophie Blee-Goldman
> Assignee: Matthias J. Sax
> Priority: Blocker
> Labels: flaky-test, unit-test
> Fix For: 2.8.0
>
> Creating a new ticket for this since the root cause is different than
> https://issues.apache.org/jira/browse/KAFKA-9966
>
> With injectError = true:
>
> h3. Stacktrace
> java.lang.AssertionError: Did not receive all 20 records from topic multiPartitionOutputTopic within 6 ms
> Expected: is a value equal to or greater than <20>
> but: <15> was less than <20>
> at org.hamcrest.MatcherAssert.assertThat(MatcherAssert.java:20)
> at org.apache.kafka.streams.integration.utils.IntegrationTestUtils.lambda$waitUntilMinKeyValueRecordsReceived$1(IntegrationTestUtils.java:563)
> at org.apache.kafka.test.TestUtils.retryOnExceptionWithTimeout(TestUtils.java:429)
> at org.apache.kafka.test.TestUtils.retryOnExceptionWithTimeout(TestUtils.java:397)
> at org.apache.kafka.streams.integration.utils.IntegrationTestUtils.waitUntilMinKeyValueRecordsReceived(IntegrationTestUtils.java:559)
> at org.apache.kafka.streams.integration.utils.IntegrationTestUtils.waitUntilMinKeyValueRecordsReceived(IntegrationTestUtils.java:530)
> at org.apache.kafka.streams.integration.EosBetaUpgradeIntegrationTest.readResult(EosBetaUpgradeIntegrationTest.java:973)
> at org.apache.kafka.streams.integration.EosBetaUpgradeIntegrationTest.verifyCommitted(EosBetaUpgradeIntegrationTest.java:961)
> at org.apache.kafka.streams.integration.EosBetaUpgradeIntegrationTest.shouldUpgradeFromEosAlphaToEosBeta(EosBetaUpgradeIntegrationTest.java:427)

-- This message was sent by Atlassian Jira (v8.3.4#803005)

[jira] [Commented] (KAFKA-2967) Move Kafka documentation to ReStructuredText

[ https://issues.apache.org/jira/browse/KAFKA-2967?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel=17243797#comment-17243797 ]

Matthias J. Sax commented on KAFKA-2967:

I don't know the details either. But I think it would be a huge improvement over HTML!

> Move Kafka documentation to ReStructuredText
>
> Key: KAFKA-2967
> URL: https://issues.apache.org/jira/browse/KAFKA-2967
> Project: Kafka
> Issue Type: Bug
> Reporter: Gwen Shapira
> Assignee: Gwen Shapira
> Priority: Major
> Labels: documentation
>
> Storing documentation as HTML is kind of BS :)
> * Formatting is a pain, and making it look good is even worse
> * It's just HTML, can't generate PDFs
> * Reading and editing is painful
> * Validating changes is hard because our formatting relies on all kinds of Apache Server features.
> I suggest:
> * Move to RST
> * Generate HTML and PDF during build using Sphinx plugin for Gradle.
> Lots of Apache projects are doing this.

[GitHub] [kafka] ijuma commented on a change in pull request #7409: MINOR: Skip conversion to `Struct` when serializing generated requests/responses

ijuma commented on a change in pull request #7409:

URL: https://github.com/apache/kafka/pull/7409#discussion_r535876326

##

File path:

clients/src/main/java/org/apache/kafka/common/requests/AbstractResponse.java

##

@@ -84,148 +95,151 @@ protected void updateErrorCounts(Map

errorCounts, Errors error)

errorCounts.put(error, count + 1);

}

-protected abstract Struct toStruct(short version);

+protected abstract Message data();

/**

* Parse a response from the provided buffer. The buffer is expected to

hold both

* the {@link ResponseHeader} as well as the response payload.

*/

-public static AbstractResponse parseResponse(ByteBuffer byteBuffer,

RequestHeader requestHeader) {

+public static AbstractResponse parseResponse(ByteBuffer buffer,

RequestHeader requestHeader) {

ApiKeys apiKey = requestHeader.apiKey();

short apiVersion = requestHeader.apiVersion();

-ResponseHeader responseHeader = ResponseHeader.parse(byteBuffer,

apiKey.responseHeaderVersion(apiVersion));

+ResponseHeader responseHeader = ResponseHeader.parse(buffer,

apiKey.responseHeaderVersion(apiVersion));

+AbstractResponse response = AbstractResponse.parseResponse(apiKey,

buffer, apiVersion);

+

+// We correlate after parsing the response to avoid spurious

correlation errors when receiving malformed

+// responses

Review comment:

TODO: Handle this better.

[GitHub] [kafka] g1geordie commented on pull request #9687: MINOR: Move lock method outside try block

g1geordie commented on pull request #9687: URL: https://github.com/apache/kafka/pull/9687#issuecomment-738601977 @chia7712 can you help me to take a look?

[GitHub] [kafka] g1geordie opened a new pull request #9687: MINOR: Move lock method outside try block

g1geordie opened a new pull request #9687:

URL: https://github.com/apache/kafka/pull/9687

If `Lock.lock` is called inside the try block and throws an exception,

the `finally` block still calls `Lock.unlock`, which may then throw as well.

I think it's nicer to move the call outside the try block, although the

`ReentrantLock.lock` implementation doesn't throw an exception.

```
import java.util.concurrent.locks.ReentrantLock;

class X {
    private final ReentrantLock lock = new ReentrantLock();
    // ...

    public void m() {
        lock.lock(); // block until condition holds
        try {
            // ... method body
        } finally {
            lock.unlock();
        }
    }
}
```

pattern recommended by

https://docs.oracle.com/javase/10/docs/api/java/util/concurrent/locks/ReentrantLock.html
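
To make the failure mode concrete, here is a minimal sketch. `FailingLock` is a hypothetical subclass whose `lock()` always throws; it exists only to simulate a failed acquisition, since `ReentrantLock.lock` itself does not throw. `LockPlacementDemo` and both helper methods are illustrative names and not part of the PR.

```java
import java.util.concurrent.locks.ReentrantLock;

class LockPlacementDemo {
    /** Hypothetical lock whose acquisition always fails (simulation only). */
    static class FailingLock extends ReentrantLock {
        @Override
        public void lock() {
            throw new IllegalStateException("acquisition failed");
        }
    }

    /** lock() inside try: finally runs unlock() even though the lock was never acquired. */
    static String lockInsideTry(ReentrantLock lock) {
        try {
            try {
                lock.lock();
                return "ok";
            } finally {
                // Throws IllegalMonitorStateException when the current thread
                // does not hold the lock, replacing the original exception.
                lock.unlock();
            }
        } catch (RuntimeException e) {
            return e.getClass().getSimpleName();
        }
    }

    /** lock() before try: unlock() only runs when the lock is actually held. */
    static String lockOutsideTry(ReentrantLock lock) {
        try {
            lock.lock();
            try {
                return "ok";
            } finally {
                lock.unlock();
            }
        } catch (RuntimeException e) {
            return e.getClass().getSimpleName();
        }
    }
}
```

With a working `ReentrantLock` both variants behave the same. With the failing lock, the first variant surfaces the `IllegalMonitorStateException` from `unlock()` and hides the real cause, while the second reports the original exception.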

[GitHub] [kafka] ning2008wisc commented on a change in pull request #9224: KAFKA-10304: refactor MM2 integration tests

ning2008wisc commented on a change in pull request #9224:

URL: https://github.com/apache/kafka/pull/9224#discussion_r535856935

##

File path:

connect/mirror/src/test/java/org/apache/kafka/connect/mirror/integration/MirrorConnectorsIntegrationBaseTest.java

##

@@ -0,0 +1,617 @@

+/*

+ * Licensed to the Apache Software Foundation (ASF) under one or more

+ * contributor license agreements. See the NOTICE file distributed with

+ * this work for additional information regarding copyright ownership.

+ * The ASF licenses this file to You under the Apache License, Version 2.0

+ * (the "License"); you may not use this file except in compliance with

+ * the License. You may obtain a copy of the License at

+ *

+ *http://www.apache.org/licenses/LICENSE-2.0

+ *

+ * Unless required by applicable law or agreed to in writing, software

+ * distributed under the License is distributed on an "AS IS" BASIS,

+ * WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+ * See the License for the specific language governing permissions and

+ * limitations under the License.

+ */

+package org.apache.kafka.connect.mirror.integration;

+

+import org.apache.kafka.clients.admin.Admin;

+import org.apache.kafka.clients.admin.Config;

+import org.apache.kafka.clients.admin.ConfigEntry;

+import org.apache.kafka.clients.admin.DescribeConfigsResult;

+import org.apache.kafka.clients.consumer.Consumer;

+import org.apache.kafka.clients.consumer.ConsumerRecords;

+import org.apache.kafka.clients.consumer.OffsetAndMetadata;

+import org.apache.kafka.clients.CommonClientConfigs;

+import org.apache.kafka.common.config.ConfigResource;

+import org.apache.kafka.common.config.TopicConfig;

+import org.apache.kafka.common.config.SslConfigs;

+import org.apache.kafka.common.config.types.Password;

+import org.apache.kafka.common.utils.Exit;

+import org.apache.kafka.common.TopicPartition;

+import org.apache.kafka.connect.connector.Connector;

+import org.apache.kafka.connect.mirror.MirrorClient;

+import org.apache.kafka.connect.mirror.MirrorHeartbeatConnector;

+import org.apache.kafka.connect.mirror.MirrorMakerConfig;

+import org.apache.kafka.connect.mirror.MirrorSourceConnector;

+import org.apache.kafka.connect.mirror.SourceAndTarget;

+import org.apache.kafka.connect.mirror.MirrorCheckpointConnector;

+import org.apache.kafka.connect.util.clusters.EmbeddedConnectCluster;

+import org.apache.kafka.connect.util.clusters.EmbeddedKafkaCluster;

+import org.apache.kafka.test.IntegrationTest;

+import static org.apache.kafka.test.TestUtils.waitForCondition;

+

+import java.time.Duration;

+import java.util.ArrayList;

+import java.util.Arrays;

+import java.util.Collection;

+import java.util.List;

+import java.util.Collections;

+import java.util.HashMap;

+import java.util.Iterator;

+import java.util.Map;

+import java.util.Properties;

+import java.util.concurrent.TimeUnit;

+import java.util.concurrent.atomic.AtomicBoolean;

+import java.util.concurrent.atomic.AtomicInteger;

+import java.util.stream.Collectors;

+

+import org.slf4j.Logger;

+import org.slf4j.LoggerFactory;

+

+import static org.junit.Assert.assertFalse;

+import static org.junit.Assert.assertEquals;

+import static org.junit.Assert.assertTrue;

+import static org.junit.Assert.assertNotNull;

+import org.junit.Test;

+import org.junit.experimental.categories.Category;

+

+import static org.apache.kafka.connect.mirror.TestUtils.generateRecords;

+

+/**

+ * Tests MM2 replication and failover/failback logic.

+ *

+ * MM2 is configured with active/active replication between two Kafka

clusters. Tests validate that

+ * records sent to either cluster arrive at the other cluster. Then, a

consumer group is migrated from

+ * one cluster to the other and back. Tests validate that consumer offsets are

translated and replicated

+ * between clusters during this failover and failback.

+ */

+@Category(IntegrationTest.class)

+public abstract class MirrorConnectorsIntegrationBaseTest {

+private static final Logger log =

LoggerFactory.getLogger(MirrorConnectorsIntegrationBaseTest.class);

+

+private static final int NUM_RECORDS_PER_PARTITION = 10;

+private static final int NUM_PARTITIONS = 10;

+private static final int NUM_RECORDS_PRODUCED = NUM_PARTITIONS *

NUM_RECORDS_PER_PARTITION;

+private static final int RECORD_TRANSFER_DURATION_MS = 30_000;

+private static final int CHECKPOINT_DURATION_MS = 20_000;

+private static final int RECORD_CONSUME_DURATION_MS = 20_000;

+private static final int OFFSET_SYNC_DURATION_MS = 30_000;

+private static final int NUM_WORKERS = 3;

+private static final int CONSUMER_POLL_TIMEOUT_MS = 500;

+private static final int BROKER_RESTART_TIMEOUT_MS = 10_000;

+private static final long DEFAULT_PRODUCE_SEND_DURATION_MS =

TimeUnit.SECONDS.toMillis(120);

+private static final String PRIMARY_CLUSTER_ALIAS = "primary";

+private static final String BACKUP_CLUSTER_ALIAS = "backup";

+private static final List>

[jira] [Commented] (KAFKA-10672) Restarting Kafka always takes a lot of time

[ https://issues.apache.org/jira/browse/KAFKA-10672?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel=17243730#comment-17243730 ]

Wenbing Shen commented on KAFKA-10672:

* We increased the number of batches read per IO operation
* After many tests, the startup speed increased by 50% on average
* See the attached files for the detailed code

> Restarting Kafka always takes a lot of time
>
> Key: KAFKA-10672
> URL: https://issues.apache.org/jira/browse/KAFKA-10672
> Project: Kafka
> Issue Type: Improvement
> Components: core
> Affects Versions: 2.0.0
> Environment: A cluster of 21 Kafka nodes;
> Each node has 12 disks;
> Each node has about 1500 partitions;
> There are approximately 700 leader partitions per node;
> Slow-loading partitions have about 1000 log segments;
> Reporter: Wenbing Shen
> Priority: Major
> Attachments: AbstractIterator.java, AbstractIteratorOfRestart.java, AbstractLegacyRecordBatch.java, ByteBufferLogInputStream.java, DefaultRecordBatch.java, FileLogInputStream.java, FileRecords.java, LazyDownConversionRecords.java, Log.scala, LogInputStream.java, LogManager.scala, LogSegment.scala, MemoryRecords.java, RecordBatchIterator.java, RecordBatchIteratorOfRestart.java, Records.java, server.log
>
> When the snapshot file does not exist, or the latest snapshot file is from before the current active period, restoring the state of producers traverses the log segments batch by batch. When a single broker node hosts many partitions, and therefore many logs, this causes a large number of IO operations, because each IO loads only one batch at a time and a log can contain tens of thousands of batches. I found that the code performs at least two IO operations for each batch. With the default batch size of 16 KB and a log segment of 1G, a segment holds 65,536 batches, so at least 65,536 * 2 = 131,072 IO operations are needed, which leads to a lot of time being spent in the Kafka startup process.
> We configured 15 log recovery threads in the production environment, and it still took more than 2 hours to load a partition. Can the community propose a way to handle or improve this situation? For detailed logs, see the section on the test-perf-18 partitions in the attached logs.
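
The estimate in the report can be reproduced with a short sketch. It assumes binary units (16 KB = 16 * 1024 bytes, 1G = 2^30 bytes), that every batch is exactly full, and the reporter's figure of at least two reads per batch; `RecoveryIoEstimate` and its methods are illustrative names only.

```java
class RecoveryIoEstimate {
    /** Number of batches in a segment, assuming every batch is exactly batchBytes long. */
    static long batchesPerSegment(long segmentBytes, long batchBytes) {
        return segmentBytes / batchBytes;
    }

    /** Lower bound on read operations when each batch costs at least ioPerBatch reads. */
    static long minIoOperations(long segmentBytes, long batchBytes, int ioPerBatch) {
        return batchesPerSegment(segmentBytes, batchBytes) * ioPerBatch;
    }
}
```

Calling `minIoOperations(1L << 30, 16L * 1024, 2)` gives the 131,072 reads per segment quoted above. Under the same assumptions, reading several batches per IO operation lowers that bound proportionally.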

[GitHub] [kafka] guozhangwang commented on pull request #9441: KAFKA-10614: Ensure group state (un)load is executed in the submitted order

guozhangwang commented on pull request #9441: URL: https://github.com/apache/kafka/pull/9441#issuecomment-738579702

Hey @tombentley Sorry for the late reply! I also checked the source code of `ScheduledThreadPoolExecutor` and I think what you've inferred is correct: although it does not keep a single thread alive all the time, but dynamically creates and stops the thread when no tasks have been scheduled for a long time (note we set num.thread == 1), the blocking queue should still guarantee a FIFO ordering. So what's possibly happening is that the thread handling a leaderISR request with the old controller epoch grabs the lock and proceeds first.

In that case, maybe we can simplify your proposed solution as follows:

* Note that, like @hachikuji mentioned, `onLeadershipChange` is actually protected by the `replicaStateChangeLock` inside `becomeLeaderOrFollower`, and hence we only need to pass the controller epoch into `groupCoordinator.onElection/onResignation`, just like what we did in `txnCoordinator`.
* Inside `groupCoordinator.onElection/onResignation`, which is lock-protected, we just remember the latest controller epoch at the `GroupCoordinator`, and then do one check against that controller epoch right before `scheduleLoadGroupAndOffsets/removeGroupsForPartition`; if the check does not pass, we can log and skip the loading / unloading function call.

WDYT?
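
The epoch check proposed in this comment could look roughly like the sketch below. It is not the actual `GroupCoordinator` code: `EpochGuardedCoordinator`, the `actions` list (standing in for logging and for the real load/unload calls), and the strict `<` comparison are all assumptions made for illustration.

```java
import java.util.ArrayList;
import java.util.List;

class EpochGuardedCoordinator {
    // Highest controller epoch seen so far; guarded by this object's monitor.
    private int latestControllerEpoch = -1;
    // Stand-in for the real load/unload calls and log output (hypothetical).
    private final List<String> actions = new ArrayList<>();

    synchronized void onElection(int partition, int controllerEpoch) {
        if (isStale(controllerEpoch)) {
            actions.add("skip load " + partition);
            return;
        }
        actions.add("load " + partition);
    }

    synchronized void onResignation(int partition, int controllerEpoch) {
        if (isStale(controllerEpoch)) {
            actions.add("skip unload " + partition);
            return;
        }
        actions.add("unload " + partition);
    }

    /** Returns true for a request older than the latest epoch; otherwise records the epoch. */
    private boolean isStale(int controllerEpoch) {
        if (controllerEpoch < latestControllerEpoch) {
            return true;
        }
        latestControllerEpoch = controllerEpoch;
        return false;
    }

    synchronized List<String> actions() {
        return new ArrayList<>(actions);
    }
}
```

In this sketch a resignation carrying epoch 4 that arrives after an election with epoch 5 is skipped, while one carrying epoch 6 proceeds, which is the ordering guarantee the comment is after.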

[jira] [Updated] (KAFKA-10672) Restarting Kafka always takes a lot of time

[ https://issues.apache.org/jira/browse/KAFKA-10672?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

Wenbing Shen updated KAFKA-10672:

Attachment: Records.java RecordBatchIteratorOfRestart.java RecordBatchIterator.java MemoryRecords.java LogSegment.scala LogManager.scala LogInputStream.java Log.scala LazyDownConversionRecords.java FileRecords.java FileLogInputStream.java DefaultRecordBatch.java ByteBufferLogInputStream.java AbstractLegacyRecordBatch.java AbstractIteratorOfRestart.java AbstractIterator.java

> Restarting Kafka always takes a lot of time
>
> Key: KAFKA-10672
> URL: https://issues.apache.org/jira/browse/KAFKA-10672
> Project: Kafka
> Issue Type: Improvement
> Components: core
> Affects Versions: 2.0.0
> Environment: A cluster of 21 Kafka nodes;
> Each node has 12 disks;
> Each node has about 1500 partitions;
> There are approximately 700 leader partitions per node;
> Slow-loading partitions have about 1000 log segments;
> Reporter: Wenbing Shen
> Priority: Major
> Attachments: AbstractIterator.java, AbstractIteratorOfRestart.java, AbstractLegacyRecordBatch.java, ByteBufferLogInputStream.java, DefaultRecordBatch.java, FileLogInputStream.java, FileRecords.java, LazyDownConversionRecords.java, Log.scala, LogInputStream.java, LogManager.scala, LogSegment.scala, MemoryRecords.java, RecordBatchIterator.java, RecordBatchIteratorOfRestart.java, Records.java, server.log
>
> When the snapshot file does not exist, or the latest snapshot file is from before the current active period, restoring the state of producers traverses the log segments batch by batch. When a single broker node hosts many partitions, and therefore many logs, this causes a large number of IO operations, because each IO loads only one batch at a time and a log can contain tens of thousands of batches. I found that the code performs at least two IO operations for each batch. With the default batch size of 16 KB and a log segment of 1G, a segment holds 65,536 batches, so at least 65,536 * 2 = 131,072 IO operations are needed, which leads to a lot of time being spent in the Kafka startup process.
> We configured 15 log recovery threads in the production environment, and it still took more than 2 hours to load a partition. Can the community propose a way to handle or improve this situation? For detailed logs, see the section on the test-perf-18 partitions in the attached logs.

[GitHub] [kafka] ning2008wisc commented on a change in pull request #9224: KAFKA-10304: refactor MM2 integration tests

ning2008wisc commented on a change in pull request #9224:

URL: https://github.com/apache/kafka/pull/9224#discussion_r535846145


[GitHub] [kafka] apovzner commented on a change in pull request #9628: KAFKA-10747: Implement APIs for altering and describing IP connection rate quotas

apovzner commented on a change in pull request #9628:

URL: https://github.com/apache/kafka/pull/9628#discussion_r535830690

##

File path: core/src/test/scala/unit/kafka/admin/ConfigCommandTest.scala

##

@@ -472,6 +512,134 @@ class ConfigCommandTest extends ZooKeeperTestHarness with

Logging {

EasyMock.reset(alterResult, describeResult)

}

+ @Test

+ def shouldNotAlterNonQuotaIpConfigsUsingBootstrapServer(): Unit = {

+// when using --bootstrap-server, it should be illegal to alter anything

that is not a connection quota

+// for ip entities

+val node = new Node(1, "localhost", 9092)

+val mockAdminClient = new

MockAdminClient(util.Collections.singletonList(node), node)

+

+def verifyCommand(entityType: String, alterOpts: String*): Unit = {

+ val opts = new ConfigCommandOptions(Array("--bootstrap-server",

"localhost:9092",

+"--entity-type", entityType, "--entity-name", "admin",

+"--alter") ++ alterOpts)

+ val e = intercept[IllegalArgumentException] {

+ConfigCommand.alterConfig(mockAdminClient, opts)

+ }

+ assertTrue(s"Unexpected exception: $e",

e.getMessage.contains("some_config"))

+}

+

+verifyCommand("ips", "--add-config",

"connection_creation_rate=1,some_config=10")

Review comment:

super tiny nit: `verifyCommand` name makes the code a bit confusing

since the command is expected to fail. What about `verifyCommandFails` or

`verifyFails`?

[GitHub] [kafka] apovzner commented on a change in pull request #9628: KAFKA-10747: Implement APIs for altering and describing IP connection rate quotas

apovzner commented on a change in pull request #9628:

URL: https://github.com/apache/kafka/pull/9628#discussion_r535829042

##

File path:

core/src/test/scala/integration/kafka/network/DynamicConnectionQuotaTest.scala

##

@@ -259,13 +264,19 @@ class DynamicConnectionQuotaTest extends BaseRequestTest {

}

private def updateIpConnectionRate(ip: Option[String], updatedRate: Int):

Unit = {

-adminZkClient.changeIpConfig(ip.getOrElse(ConfigEntityName.Default),

- CoreUtils.propsWith(DynamicConfig.Ip.IpConnectionRateOverrideProp,

updatedRate.toString))

+val initialConnectionCount = connectionCount

+val adminClient = createAdminClient()

Review comment:

`TestUtils.alterClientQuotas`, which is called before `adminClient.close`,

can throw an exception, in which case the client is never closed. Admin

extends AutoCloseable, so you can use try-with-resources.
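
As a rough illustration of the point about `AutoCloseable`, the sketch below uses a hypothetical `FakeAdmin` in place of a real admin client, with `alterQuotas` standing in for the quota update. It is written in Java; the file under review is Scala, where the same guarantee would need `try`/`finally` (or `scala.util.Using` on Scala 2.13+).

```java
class TryWithResourcesDemo {
    /** Hypothetical stand-in for an admin client; only AutoCloseable matters here. */
    static class FakeAdmin implements AutoCloseable {
        boolean closed = false;

        void alterQuotas(boolean fail) {
            if (fail) {
                throw new IllegalStateException("alter failed");
            }
        }

        @Override
        public void close() {
            closed = true;
        }
    }

    /** Returns whether the client was closed even though the call in the body threw. */
    static boolean closedAfterFailure() {
        FakeAdmin admin = new FakeAdmin();
        try (FakeAdmin a = admin) {
            a.alterQuotas(true);
        } catch (IllegalStateException e) {
            // Expected: close() has already run by the time we get here.
        }
        return admin.closed;
    }
}
```

`closedAfterFailure()` returns `true`: the resource is closed before the `catch` block runs, so a failing call cannot leak the client.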

[GitHub] [kafka] chia7712 merged pull request #9682: KAFKA-10803: Fix improper removal of bad dynamic config

chia7712 merged pull request #9682: URL: https://github.com/apache/kafka/pull/9682

[jira] [Assigned] (KAFKA-10803) Skip improper dynamic configs while initialization and include the rest correct ones

[ https://issues.apache.org/jira/browse/KAFKA-10803?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ] Chia-Ping Tsai reassigned KAFKA-10803: -- Assignee: Prateek Agarwal > Skip improper dynamic configs while initialization and include the rest > correct ones > > > Key: KAFKA-10803 > URL: https://issues.apache.org/jira/browse/KAFKA-10803 > Project: Kafka > Issue Type: Bug > Components: core >Affects Versions: 1.1.1, 2.6.0, 2.5.1, 2.7.0, 2.8.0 >Reporter: Prateek Agarwal >Assignee: Prateek Agarwal >Priority: Major > > There is [a > bug|https://github.com/apache/kafka/blob/trunk/core/src/main/scala/kafka/server/DynamicBrokerConfig.scala#L470] > in how incorrect dynamic config keys are removed from the original > Properties list, resulting in persisting the improper configs in the > properties list. > This eventually results in exception being thrown while parsing the list by > [KafkaConfig > ctor|https://github.com/apache/kafka/blob/trunk/core/src/main/scala/kafka/server/DynamicBrokerConfig.scala#L531], > resulting in skipping of the complete dynamic list (including the correct > ones). -- This message was sent by Atlassian Jira (v8.3.4#803005)

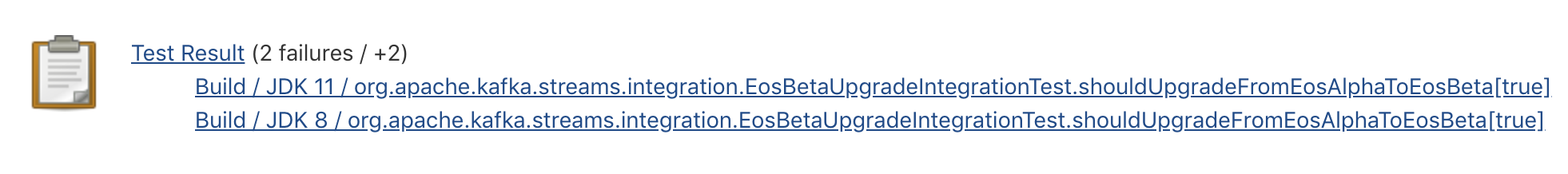

[GitHub] [kafka] chia7712 commented on pull request #9682: KAFKA-10803: Fix improper removal of bad dynamic config

chia7712 commented on pull request #9682: URL: https://github.com/apache/kafka/pull/9682#issuecomment-738549721

```
Build / JDK 11 / org.apache.kafka.streams.integration.EosBetaUpgradeIntegrationTest.shouldUpgradeFromEosAlphaToEosBeta[true]
```

known flaky. Will merge this to trunk

[GitHub] [kafka] chia7712 merged pull request #9679: MINOR: Make Histogram.clear more readable

chia7712 merged pull request #9679: URL: https://github.com/apache/kafka/pull/9679

[GitHub] [kafka] chia7712 opened a new pull request #9686: KAFKA-10804 add more subsets and exclude performance tests

chia7712 opened a new pull request #9686: URL: https://github.com/apache/kafka/pull/9686

This PR is a part of https://issues.apache.org/jira/browse/KAFKA-10804

The following tasks are included in this PR:

1. add more subsets
1. do not run all system tests - exclude performance tests

### Committer Checklist (excluded from commit message)

- [ ] Verify design and implementation
- [ ] Verify test coverage and CI build status
- [ ] Verify documentation (including upgrade notes)

[jira] [Assigned] (KAFKA-10804) Tune travis system tests to avoid timeouts

[ https://issues.apache.org/jira/browse/KAFKA-10804?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ] Chia-Ping Tsai reassigned KAFKA-10804: -- Assignee: Chia-Ping Tsai > Tune travis system tests to avoid timeouts > -- > > Key: KAFKA-10804 > URL: https://issues.apache.org/jira/browse/KAFKA-10804 > Project: Kafka > Issue Type: Bug >Reporter: Jason Gustafson >Assignee: Chia-Ping Tsai >Priority: Major > > Thanks to https://github.com/apache/kafka/pull/9652, we are now running > system tests for PRs. However, it looks like we need some tuning because many > of the subsets are timing out. For example: > https://travis-ci.com/github/apache/kafka/jobs/453241933. This might just be > a matter of adding more subsets or changing the timeout, but we should > probably also consider whether we want to run all system tests or if there is > a more useful subset of them. -- This message was sent by Atlassian Jira (v8.3.4#803005)

[GitHub] [kafka] dongjinleekr commented on a change in pull request #8404: KAFKA-10787: Introduce an import order in Java sources

dongjinleekr commented on a change in pull request #8404:

URL: https://github.com/apache/kafka/pull/8404#discussion_r535791013

##

File path:

clients/src/main/java/org/apache/kafka/clients/ClusterConnectionStates.java

##

@@ -16,21 +16,21 @@
*/
package org.apache.kafka.clients;
-import java.util.HashSet;
-import java.util.Set;
-
-import java.util.stream.Collectors;
import org.apache.kafka.common.errors.AuthenticationException;
import org.apache.kafka.common.utils.ExponentialBackoff;
import org.apache.kafka.common.utils.LogContext;
+
import org.slf4j.Logger;

Review comment:

@chia7712 Oh, the description I had at first on 2nd April is outdated; [After the discussion](https://lists.apache.org/thread.html/rf6f49c845a3d48efe8a91916c8fbaddb76da17742eef06798fc5b24d%40%3Cdev.kafka.apache.org%3E), we concluded that [the following three-group ordering](https://issues.apache.org/jira/browse/KAFKA-10787) would be better:

- `kafka`, `org.apache.kafka`
- `com`, `net`, `org`
- `java`, `javax`
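The three-group ordering above can be sketched as a small classifier plus a sort. This is only an illustration of the rule as stated in the comment, not the checkstyle configuration the PR uses; `ImportOrderSketch` and its methods are hypothetical, and treating every other prefix like the `com` / `net` / `org` group is an assumption.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.List;

final class ImportOrderSketch {
    // Group index: 0 = kafka / org.apache.kafka, 1 = com / net / org, 2 = java / javax.
    static int group(String importName) {
        if (importName.startsWith("kafka.") || importName.startsWith("org.apache.kafka.")) {
            return 0;
        }
        if (importName.startsWith("java.") || importName.startsWith("javax.")) {
            return 2;
        }
        // Assumption: anything else is treated like the com / net / org group.
        return 1;
    }

    // Sorts by group first, then alphabetically inside each group.
    static List<String> sorted(List<String> imports) {
        List<String> result = new ArrayList<>(imports);
        result.sort(Comparator.<String>comparingInt(ImportOrderSketch::group)
            .thenComparing(Comparator.<String>naturalOrder()));
        return result;
    }
}
```

Note that `org.apache.kafka` has to be checked before the generic `org` prefix, otherwise Kafka's own packages would land in the second group.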

[jira] [Commented] (KAFKA-10636) Bypass log validation for writes to raft log

[ https://issues.apache.org/jira/browse/KAFKA-10636?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel=17243646#comment-17243646 ] feyman commented on KAFKA-10636: [~hachikuji] Cool, thanks for the help! > Bypass log validation for writes to raft log > > > Key: KAFKA-10636 > URL: https://issues.apache.org/jira/browse/KAFKA-10636 > Project: Kafka > Issue Type: Sub-task >Reporter: Jason Gustafson >Assignee: feyman >Priority: Major > Labels: raft-log-management > > The raft leader is responsible for creating the records written to the log > (including assigning offsets and the epoch), so we can consider bypassing the > validation done in `LogValidator`. This lets us skip potentially expensive > decompression and the unnecessary recomputation of the CRC. -- This message was sent by Atlassian Jira (v8.3.4#803005)

[GitHub] [kafka] showuon commented on pull request #9627: KAFKA-10746: Change to Warn logs when necessary to notify users

showuon commented on pull request #9627: URL: https://github.com/apache/kafka/pull/9627#issuecomment-738505590 @abbccdda @guozhangwang , could you please help review this PR? Thanks.

[jira] [Created] (KAFKA-10807) AlterConfig should be validated by the target broker

Boyang Chen created KAFKA-10807: --- Summary: AlterConfig should be validated by the target broker Key: KAFKA-10807 URL: https://issues.apache.org/jira/browse/KAFKA-10807 Project: Kafka Issue Type: Sub-task Reporter: Boyang Chen After forwarding is enabled, AlterConfigs will no longer be sent to the target broker. This behavior bypasses some important config change validations, such as path existence, static config conflict, or even worse when the target broker is offline, the propagated result does not reflect a true applied result. We should gather those necessary cases, and decide whether to actually handle the AlterConfig request firstly on the target broker, and then forward, in a validate-forward-apply path. -- This message was sent by Atlassian Jira (v8.3.4#803005)

[jira] [Updated] (KAFKA-10345) Add file-watch based update for trust/key store paths

[ https://issues.apache.org/jira/browse/KAFKA-10345?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ] Boyang Chen updated KAFKA-10345: Description: With forwarding enabled, per-broker alter-config doesn't go to the target broker anymore, we need to have a mechanism to propagate in-place file update through file watch. (was: With forwarding enabled, per-broker alter-config doesn't go to the target broker anymore, we need to have a mechanism to propagate that update through ZK without affecting other config changes.) > Add file-watch based update for trust/key store paths > - > > Key: KAFKA-10345 > URL: https://issues.apache.org/jira/browse/KAFKA-10345 > Project: Kafka > Issue Type: Sub-task >Reporter: Boyang Chen >Assignee: Boyang Chen >Priority: Major > Fix For: 2.8.0 > > > With forwarding enabled, per-broker alter-config doesn't go to the target > broker anymore, we need to have a mechanism to propagate in-place file update > through file watch. -- This message was sent by Atlassian Jira (v8.3.4#803005)
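The issue does not say which mechanism the file watch would use. One standard option on the JVM is `java.nio.file.WatchService`, and the sketch below assumes that choice. `StoreFileWatchSketch` and `awaitChange` are hypothetical names; reloading the key store after a change is left out.

```java
import java.io.IOException;
import java.nio.file.Path;
import java.nio.file.StandardWatchEventKinds;
import java.nio.file.WatchEvent;
import java.nio.file.WatchKey;
import java.nio.file.WatchService;
import java.util.concurrent.TimeUnit;

final class StoreFileWatchSketch implements AutoCloseable {
    private final WatchService watcher;
    private final Path fileName;

    // WatchService watches directories, so register the parent of the store file.
    StoreFileWatchSketch(Path storeFile) throws IOException {
        Path absolute = storeFile.toAbsolutePath();
        this.fileName = absolute.getFileName();
        this.watcher = absolute.getFileSystem().newWatchService();
        absolute.getParent().register(watcher,
            StandardWatchEventKinds.ENTRY_CREATE,
            StandardWatchEventKinds.ENTRY_MODIFY);
    }

    // Returns true if the store file was created or modified before the timeout.
    boolean awaitChange(long timeout, TimeUnit unit) throws InterruptedException {
        long deadline = System.nanoTime() + unit.toNanos(timeout);
        while (true) {
            long remaining = deadline - System.nanoTime();
            if (remaining <= 0) {
                return false;
            }
            WatchKey key = watcher.poll(remaining, TimeUnit.NANOSECONDS);
            if (key == null) {
                return false;
            }
            boolean changed = false;
            for (WatchEvent<?> event : key.pollEvents()) {
                // OVERFLOW means events were lost; treat it as a possible change.
                if (event.kind() == StandardWatchEventKinds.OVERFLOW
                        || fileName.equals(event.context())) {
                    changed = true;
                }
            }
            key.reset();
            if (changed) {
                return true;
            }
        }
    }

    @Override
    public void close() throws IOException {
        watcher.close();
    }
}
```

Notification latency depends on the platform: some JDK implementations fall back to periodic polling, so a caller should not assume the change is reported immediately.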

[jira] [Updated] (KAFKA-10345) Add file-watch based update for trust/key store paths

[ https://issues.apache.org/jira/browse/KAFKA-10345?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ] Boyang Chen updated KAFKA-10345: Summary: Add file-watch based update for trust/key store paths (was: Add ZK-notification based update for trust/key store paths) > Add file-watch based update for trust/key store paths > - > > Key: KAFKA-10345 > URL: https://issues.apache.org/jira/browse/KAFKA-10345 > Project: Kafka > Issue Type: Sub-task >Reporter: Boyang Chen >Assignee: Boyang Chen >Priority: Major > Fix For: 2.8.0 > > > With forwarding enabled, per-broker alter-config doesn't go to the target > broker anymore, we need to have a mechanism to propagate that update through > ZK without affecting other config changes. -- This message was sent by Atlassian Jira (v8.3.4#803005)

[GitHub] [kafka] splett2 opened a new pull request #9685: KAFKA-10748: Add IP connection rate throttling metric

splett2 opened a new pull request #9685: URL: https://github.com/apache/kafka/pull/9685

This PR adds the IP throttling metric as described in KIP-612.

### Committer Checklist (excluded from commit message)

- [ ] Verify design and implementation
- [ ] Verify test coverage and CI build status
- [ ] Verify documentation (including upgrade notes)

[GitHub] [kafka] jolshan commented on a change in pull request #9684: KAFKA-10764: Add support for returning topic IDs on create, supplying topic IDs for delete

jolshan commented on a change in pull request #9684:

URL: https://github.com/apache/kafka/pull/9684#discussion_r535743912

##

File path: core/src/main/scala/kafka/server/KafkaApis.scala

##

@@ -1896,6 +1896,11 @@ class KafkaApis(val requestChannel: RequestChannel,
.setTopicConfigErrorCode(Errors.NONE.code)
}
}
+val topicIds = zkClient.getTopicIdsForTopics(results.asScala.map(result => result.name()).toSet)

Review comment:

If going to ZK here is too slow, another option is to provide a callback to adminManager.createTopics which can be called after createTopicWithAssignment. This callback would add the topic ID to the result. The idea is that createTopicWithAssignment (and writeTopicPartitionAssignment) would return the topic ID to avoid an extra call to ZK. I wasn't sure which option was better.

[GitHub] [kafka] jolshan commented on a change in pull request #9684: KAFKA-10764: Add support for returning topic IDs on create, supplying topic IDs for delete

jolshan commented on a change in pull request #9684:

URL: https://github.com/apache/kafka/pull/9684#discussion_r535743912

##

File path: core/src/main/scala/kafka/server/KafkaApis.scala

##

@@ -1896,6 +1896,11 @@ class KafkaApis(val requestChannel: RequestChannel,
.setTopicConfigErrorCode(Errors.NONE.code)
}
}
+val topicIds = zkClient.getTopicIdsForTopics(results.asScala.map(result => result.name()).toSet)

Review comment:

If going to ZK here is too slow, another option is to provide a callback to adminManager.createTopics which can be called after createTopicWithAssignment. The idea is that createTopicWithAssignment (and writeTopicPartitionAssignment) would return the topic ID to avoid an extra call to ZK. I wasn't sure which option was better.

[GitHub] [kafka] jolshan opened a new pull request #9684: KAFKA-10764: Add support for returning topic IDs on create, supplying topic IDs for delete

jolshan opened a new pull request #9684: URL: https://github.com/apache/kafka/pull/9684

Updated CreateTopicResponse, DeleteTopicsRequest/Response and added some new AdminClient methods and classes. Now the newly created topic ID will be returned in CreateTopicsResult and found in TopicAndMetadataConfig, and topics can be deleted by supplying topic IDs through deleteTopicsWithIds which will return DeleteTopicsWithIdsResult.

### Committer Checklist (excluded from commit message)

- [ ] Verify design and implementation
- [ ] Verify test coverage and CI build status
- [ ] Verify documentation (including upgrade notes)

[GitHub] [kafka] ableegoldman commented on a change in pull request #9668: MINOR: add test for repartition/source-topic/changelog optimization

ableegoldman commented on a change in pull request #9668:

URL: https://github.com/apache/kafka/pull/9668#discussion_r535724656

##

File path:

streams/src/test/java/org/apache/kafka/streams/StreamsBuilderTest.java

##

@@ -463,7 +463,36 @@ public void shouldReuseSourceTopicAsChangelogsWithOptimization20() {

}

@Test

-public void shouldNotReuseSourceTopicAsChangelogsByDefault() {

+public void shouldNotReuseRepartitionTopicAsChangelogs() {

+final String topic = "topic";

+builder.stream(topic).repartition().toTable(Materialized.as("store"));

+final Properties props = StreamsTestUtils.getStreamsConfig("appId");

+props.put(StreamsConfig.TOPOLOGY_OPTIMIZATION_CONFIG, StreamsConfig.OPTIMIZE);

+final Topology topology = builder.build(props);

+

+final InternalTopologyBuilder internalTopologyBuilder = TopologyWrapper.getInternalTopologyBuilder(topology);

+internalTopologyBuilder.rewriteTopology(new StreamsConfig(props));

+

+assertThat(

+internalTopologyBuilder.buildTopology().storeToChangelogTopic(),

+equalTo(Collections.singletonMap("store", "appId-store-changelog"))

+);

+assertThat(

+internalTopologyBuilder.stateStores().keySet(),

+equalTo(Collections.singleton("store"))

+);

+assertThat(

+internalTopologyBuilder.stateStores().get("store").loggingEnabled(),

+equalTo(true)

+);

+assertThat(

+internalTopologyBuilder.topicGroups().get(1).stateChangelogTopics.keySet(),

+equalTo(Collections.singleton("appId-store-changelog"))

+);

+}

+

+@Test

+public void shouldNotReuseRepartitionTopicAsChangelogsByDefault() {

Review comment:

This should still be called `shouldNotReuseSourceTopicAsChangelogsByDefault`, right?


[GitHub] [kafka] guozhangwang commented on a change in pull request #9676: KAFKA-10778: Fence appends after write failure

guozhangwang commented on a change in pull request #9676:

URL: https://github.com/apache/kafka/pull/9676#discussion_r535691107

##

File path: core/src/main/scala/kafka/log/Log.scala

##

@@ -1219,6 +1219,9 @@ class Log(@volatile private var _dir: File,

appendInfo.logAppendTime = duplicate.timestamp

appendInfo.logStartOffset = logStartOffset

case None =>

+ if (logDirFailureChannel.logDirIsOffline(parentDir)) {

+throw new KafkaStorageException(s"The log dir $parentDir has failed.");

Review comment:

Maybe we can leave a more informative error message here? Something like "... dir has failed due to a previous IO exception", just to indicate that the failure was not caused by the current call.


[GitHub] [kafka] guozhangwang merged pull request #9681: MINOR: Fix flaky test shouldQueryOnlyActivePartitionStoresByDefault

guozhangwang merged pull request #9681: URL: https://github.com/apache/kafka/pull/9681

[jira] [Updated] (KAFKA-10636) Bypass log validation for writes to raft log

[ https://issues.apache.org/jira/browse/KAFKA-10636?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ] Guozhang Wang updated KAFKA-10636: -- Labels: raft-log-management (was: ) > Bypass log validation for writes to raft log > > > Key: KAFKA-10636 > URL: https://issues.apache.org/jira/browse/KAFKA-10636 > Project: Kafka > Issue Type: Sub-task >Reporter: Jason Gustafson >Assignee: feyman >Priority: Major > Labels: raft-log-management > > The raft leader is responsible for creating the records written to the log > (including assigning offsets and the epoch), so we can consider bypassing the > validation done in `LogValidator`. This lets us skip potentially expensive > decompression and the unnecessary recomputation of the CRC. -- This message was sent by Atlassian Jira (v8.3.4#803005)

[jira] [Created] (KAFKA-10806) throwable from user callback on completeFutureAndFireCallbacks can lead to unhandled exceptions

xiongqi wu created KAFKA-10806: -- Summary: throwable from user callback on completeFutureAndFireCallbacks can lead to unhandled exceptions Key: KAFKA-10806 URL: https://issues.apache.org/jira/browse/KAFKA-10806 Project: Kafka Issue Type: Bug Reporter: xiongqi wu When kafka producer tries to complete/abort a batch, producer invokes user callback. However, "completeFutureAndFireCallbacks" only captures exceptions from user callback not all throwables. An uncaught throwable can prevent the batch from being freed. -- This message was sent by Atlassian Jira (v8.3.4#803005)
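The difference between catching `Exception` and catching `Throwable` around a user callback can be shown in isolation. The sketch below is not the producer's `completeFutureAndFireCallbacks`; `CallbackGuardSketch`, its `Callback` interface, and both methods are hypothetical and only demonstrate the Java semantics the report relies on.

```java
import java.util.ArrayList;
import java.util.List;

final class CallbackGuardSketch {
    interface Callback {
        void onCompletion();
    }

    // Catches only Exception: an Error thrown by a callback escapes the loop,
    // so later callbacks and any cleanup after the loop are skipped.
    static int fireAllCatchingException(List<Callback> callbacks) {
        int failed = 0;
        for (Callback cb : callbacks) {
            try {
                cb.onCompletion();
            } catch (Exception e) {
                failed++;
            }
        }
        return failed;
    }

    // Catches Throwable: every callback runs and the caller can still clean up.
    static List<Throwable> fireAllCatchingThrowable(List<Callback> callbacks) {
        List<Throwable> failures = new ArrayList<>();
        for (Callback cb : callbacks) {
            try {
                cb.onCompletion();
            } catch (Throwable t) {
                failures.add(t);
            }
        }
        return failures;
    }
}
```

Whether a real fix should swallow, log, or rethrow the collected throwables after cleanup is a separate design decision that the report does not settle.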

[jira] [Commented] (KAFKA-10636) Bypass log validation for writes to raft log

[ https://issues.apache.org/jira/browse/KAFKA-10636?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel=17243525#comment-17243525 ] Jason Gustafson commented on KAFKA-10636: - [~feyman] Sounds good, thanks for picking it up. It might just be a matter of taking advantage of the `AppendOrigin` which we added to `Log.appendAsLeader`. > Bypass log validation for writes to raft log > > > Key: KAFKA-10636 > URL: https://issues.apache.org/jira/browse/KAFKA-10636 > Project: Kafka > Issue Type: Sub-task >Reporter: Jason Gustafson >Assignee: feyman >Priority: Major > > The raft leader is responsible for creating the records written to the log > (including assigning offsets and the epoch), so we can consider bypassing the > validation done in `LogValidator`. This lets us skip potentially expensive > decompression and the unnecessary recomputation of the CRC. -- This message was sent by Atlassian Jira (v8.3.4#803005)

[jira] [Commented] (KAFKA-2967) Move Kafka documentation to ReStructuredText

[ https://issues.apache.org/jira/browse/KAFKA-2967?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel=17243518#comment-17243518 ] James Galasyn commented on KAFKA-2967: -- Thank you for the feedback, [~vvcephei]! I only suggested RTD because that's what we do for ksqlDB, but I'm very happy to use the existing infra. I think you're right about leveraging the existing job, but I don't know the deets. Maybe [~guozhang] or [~mjsax] can weigh in. So it seems like it's not a huge lift to make this happen. If everybody thinks it's worthwhile for me to investigate, I'd like to dive in for a couple of days and see what I come up with. > Move Kafka documentation to ReStructuredText > > > Key: KAFKA-2967 > URL: https://issues.apache.org/jira/browse/KAFKA-2967 > Project: Kafka > Issue Type: Bug >Reporter: Gwen Shapira >Assignee: Gwen Shapira >Priority: Major > Labels: documentation > > Storing documentation as HTML is kind of BS :) > * Formatting is a pain, and making it look good is even worse > * It's just HTML, can't generate PDFs > * Reading and editing is painful > * Validating changes is hard because our formatting relies on all kinds of > Apache Server features. > I suggest: > * Move to RST > * Generate HTML and PDF during build using Sphinx plugin for Gradle. > Lots of Apache projects are doing this. -- This message was sent by Atlassian Jira (v8.3.4#803005)

[GitHub] [kafka] mumrah merged pull request #9677: KAFKA-10799 AlterIsr utilizes ReplicaManager ISR metrics

mumrah merged pull request #9677: URL: https://github.com/apache/kafka/pull/9677

[GitHub] [kafka] mumrah commented on pull request #9677: KAFKA-10799 AlterIsr utilizes ReplicaManager ISR metrics

mumrah commented on pull request #9677: URL: https://github.com/apache/kafka/pull/9677#issuecomment-738303699 Failed tests are known flaky https://ci-builds.apache.org/job/Kafka/job/kafka-pr/job/PR-9677/8/

[jira] [Commented] (KAFKA-2967) Move Kafka documentation to ReStructuredText

[ https://issues.apache.org/jira/browse/KAFKA-2967?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel=17243502#comment-17243502 ] James Galasyn commented on KAFKA-2967: -- [~mjsax] Very cool, thank you for the tip! > Move Kafka documentation to ReStructuredText > > > Key: KAFKA-2967 > URL: https://issues.apache.org/jira/browse/KAFKA-2967 > Project: Kafka > Issue Type: Bug >Reporter: Gwen Shapira >Assignee: Gwen Shapira >Priority: Major > Labels: documentation > > Storing documentation as HTML is kind of BS :) > * Formatting is a pain, and making it look good is even worse > * It's just HTML, can't generate PDFs > * Reading and editing is painful > * Validating changes is hard because our formatting relies on all kinds of > Apache Server features. > I suggest: > * Move to RST > * Generate HTML and PDF during build using Sphinx plugin for Gradle. > Lots of Apache projects are doing this. -- This message was sent by Atlassian Jira (v8.3.4#803005)

[jira] [Commented] (KAFKA-2967) Move Kafka documentation to ReStructuredText

[ https://issues.apache.org/jira/browse/KAFKA-2967?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel=17243503#comment-17243503 ] John Roesler commented on KAFKA-2967: - Hi [~JimGalasyn] , Thanks for that information! It's very cool that you were able to convert the site to markdown. Thanks for actually proving that it can be done with a reasonable level of effort. I didn't understand the rationale for using ReadTheDocs. The docs are currently hosted as a static site by the ASF for free. If we have markdown, we should be able to use Jekyll or Hugo or something to render the markdown into HTML as part of the job that currently copies the site over to the static host, right? It seems like the ASF has some suggestions for how we could do this: https://infra.apache.org/project-site.html Thanks again, -John > Move Kafka documentation to ReStructuredText > > > Key: KAFKA-2967 > URL: https://issues.apache.org/jira/browse/KAFKA-2967 > Project: Kafka > Issue Type: Bug >Reporter: Gwen Shapira >Assignee: Gwen Shapira >Priority: Major > Labels: documentation > > Storing documentation as HTML is kind of BS :) > * Formatting is a pain, and making it look good is even worse > * It's just HTML, can't generate PDFs > * Reading and editing is painful > * Validating changes is hard because our formatting relies on all kinds of > Apache Server features. > I suggest: > * Move to RST > * Generate HTML and PDF during build using Sphinx plugin for Gradle. > Lots of Apache projects are doing this. -- This message was sent by Atlassian Jira (v8.3.4#803005)

[jira] [Commented] (KAFKA-2967) Move Kafka documentation to ReStructuredText

[ https://issues.apache.org/jira/browse/KAFKA-2967?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel=17243500#comment-17243500 ] Matthias J. Sax commented on KAFKA-2967: Btw: [https://infra.apache.org/project-site.html] > Move Kafka documentation to ReStructuredText > > > Key: KAFKA-2967 > URL: https://issues.apache.org/jira/browse/KAFKA-2967 > Project: Kafka > Issue Type: Bug >Reporter: Gwen Shapira >Assignee: Gwen Shapira >Priority: Major > Labels: documentation > > Storing documentation as HTML is kind of BS :) > * Formatting is a pain, and making it look good is even worse > * It's just HTML, can't generate PDFs > * Reading and editing is painful > * Validating changes is hard because our formatting relies on all kinds of > Apache Server features. > I suggest: > * Move to RST > * Generate HTML and PDF during build using Sphinx plugin for Gradle. > Lots of Apache projects are doing this. -- This message was sent by Atlassian Jira (v8.3.4#803005)

[GitHub] [kafka] bbejeck commented on pull request #9674: KAFKA-10665: close all kafkaStreams before purgeLocalStreamsState

bbejeck commented on pull request #9674: URL: https://github.com/apache/kafka/pull/9674#issuecomment-738292594

Java 11 failed with

```
> Task :streams:streams-scala:integrationTest FAILED
05:26:47 org.gradle.internal.remote.internal.ConnectException: Could not connect to server [704081bf-4ce4-4a82-ae43-69c7e855ca11 port:38063, addresses:[/127.0.0.1]]. Tried addresses: [/127.0.0.1].
```

[GitHub] [kafka] ijuma commented on a change in pull request #9512: KAFKA-10394: generate snapshot

ijuma commented on a change in pull request #9512:

URL: https://github.com/apache/kafka/pull/9512#discussion_r535574184

##

File path:

raft/src/main/java/org/apache/kafka/snapshot/FileRawSnapshotWriter.java

##

@@ -0,0 +1,108 @@

+/*

+ * Licensed to the Apache Software Foundation (ASF) under one or more

+ * contributor license agreements. See the NOTICE file distributed with

+ * this work for additional information regarding copyright ownership.

+ * The ASF licenses this file to You under the Apache License, Version 2.0

+ * (the "License"); you may not use this file except in compliance with

+ * the License. You may obtain a copy of the License at

+ *

+ *http://www.apache.org/licenses/LICENSE-2.0

+ *

+ * Unless required by applicable law or agreed to in writing, software

+ * distributed under the License is distributed on an "AS IS" BASIS,

+ * WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+ * See the License for the specific language governing permissions and

+ * limitations under the License.

+ */

+package org.apache.kafka.snapshot;

+

+import java.io.IOException;

+import java.nio.ByteBuffer;

+import java.nio.channels.FileChannel;

+import java.nio.file.Files;

+import java.nio.file.Path;

+import java.nio.file.StandardCopyOption;

+import java.nio.file.StandardOpenOption;

+import org.apache.kafka.common.utils.Utils;

+import org.apache.kafka.raft.OffsetAndEpoch;

+

+public final class FileRawSnapshotWriter implements RawSnapshotWriter {

+private final Path tempSnapshotPath;

+private final FileChannel channel;

+private final OffsetAndEpoch snapshotId;

+private boolean frozen = false;

+

+private FileRawSnapshotWriter(

+Path tempSnapshotPath,

+FileChannel channel,

+OffsetAndEpoch snapshotId

+) {

+this.tempSnapshotPath = tempSnapshotPath;

+this.channel = channel;

+this.snapshotId = snapshotId;

+}

+

+@Override

+public OffsetAndEpoch snapshotId() {

+return snapshotId;

+}

+

+@Override

+public long sizeInBytes() throws IOException {

+return channel.size();

+}

+

+@Override

+public void append(ByteBuffer buffer) throws IOException {

+if (frozen) {

+throw new IllegalStateException(

+String.format("Append not supported. Snapshot is already frozen: id = %s; temp path = %s", snapshotId, tempSnapshotPath)

+);

+}

+

+Utils.writeFully(channel, buffer);

+}

+

+@Override

+public boolean isFrozen() {

+return frozen;

+}

+

+@Override

+public void freeze() throws IOException {

+channel.close();

+frozen = true;

+

+// Set readonly and ignore the result

+if (!tempSnapshotPath.toFile().setReadOnly()) {

+throw new IOException(String.format("Unable to set file (%s) as read-only", tempSnapshotPath));

+}

+

+Path destination = Snapshots.moveRename(tempSnapshotPath, snapshotId);

+Files.move(tempSnapshotPath, destination, StandardCopyOption.ATOMIC_MOVE);

Review comment:

Also, I would not rely on reading the code to assume one way or another. You'd want to test it too.


[GitHub] [kafka] ijuma commented on a change in pull request #9512: KAFKA-10394: generate snapshot

ijuma commented on a change in pull request #9512:

URL: https://github.com/apache/kafka/pull/9512#discussion_r535572033

##

File path:

raft/src/main/java/org/apache/kafka/snapshot/FileRawSnapshotWriter.java

##

@@ -0,0 +1,108 @@

+/*

+ * Licensed to the Apache Software Foundation (ASF) under one or more

+ * contributor license agreements. See the NOTICE file distributed with

+ * this work for additional information regarding copyright ownership.

+ * The ASF licenses this file to You under the Apache License, Version 2.0

+ * (the "License"); you may not use this file except in compliance with

+ * the License. You may obtain a copy of the License at

+ *

+ *http://www.apache.org/licenses/LICENSE-2.0

+ *

+ * Unless required by applicable law or agreed to in writing, software

+ * distributed under the License is distributed on an "AS IS" BASIS,

+ * WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+ * See the License for the specific language governing permissions and

+ * limitations under the License.

+ */

+package org.apache.kafka.snapshot;

+

+import java.io.IOException;

+import java.nio.ByteBuffer;

+import java.nio.channels.FileChannel;

+import java.nio.file.Files;

+import java.nio.file.Path;

+import java.nio.file.StandardCopyOption;

+import java.nio.file.StandardOpenOption;

+import org.apache.kafka.common.utils.Utils;

+import org.apache.kafka.raft.OffsetAndEpoch;

+

+public final class FileRawSnapshotWriter implements RawSnapshotWriter {

+private final Path tempSnapshotPath;

+private final FileChannel channel;

+private final OffsetAndEpoch snapshotId;

+private boolean frozen = false;

+

+private FileRawSnapshotWriter(

+Path tempSnapshotPath,

+FileChannel channel,

+OffsetAndEpoch snapshotId

+) {

+this.tempSnapshotPath = tempSnapshotPath;

+this.channel = channel;

+this.snapshotId = snapshotId;

+}

+

+@Override

+public OffsetAndEpoch snapshotId() {

+return snapshotId;

+}

+

+@Override

+public long sizeInBytes() throws IOException {

+return channel.size();

+}

+

+@Override

+public void append(ByteBuffer buffer) throws IOException {

+if (frozen) {

+throw new IllegalStateException(

+String.format("Append not supported. Snapshot is already frozen: id = %s; temp path = %s", snapshotId, tempSnapshotPath)

+);

+}

+

+Utils.writeFully(channel, buffer);

+}

+

+@Override

+public boolean isFrozen() {

+return frozen;

+}

+

+@Override

+public void freeze() throws IOException {

+channel.close();

+frozen = true;

+

+// Set readonly and ignore the result

+if (!tempSnapshotPath.toFile().setReadOnly()) {

+throw new IOException(String.format("Unable to set file (%s) as read-only", tempSnapshotPath));

+}

+

+Path destination = Snapshots.moveRename(tempSnapshotPath, snapshotId);

+Files.move(tempSnapshotPath, destination, StandardCopyOption.ATOMIC_MOVE);

Review comment:

It's not only Windows, NFS also has some restrictions. Why don't you want to use the utility method?
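The comment does not name the utility method, so the following is only a sketch of the general pattern it points at: try an atomic move first and fall back to a plain move when the filesystem refuses it. `AtomicMoveSketch` and `moveWithFallback` are hypothetical names, and catching only `AtomicMoveNotSupportedException` is an assumption about which failure matters.

```java
import java.io.IOException;
import java.nio.file.AtomicMoveNotSupportedException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.StandardCopyOption;

final class AtomicMoveSketch {
    // Moves 'source' to 'target' atomically when the filesystem supports it,
    // otherwise falls back to a non-atomic move that replaces the target.
    static void moveWithFallback(Path source, Path target) throws IOException {
        try {
            Files.move(source, target, StandardCopyOption.ATOMIC_MOVE);
        } catch (AtomicMoveNotSupportedException e) {
            // Assumption: a non-atomic replace is acceptable as a last resort.
            Files.move(source, target, StandardCopyOption.REPLACE_EXISTING);
        }
    }
}
```

Which exception a given platform or network filesystem actually raises is the kind of detail the reviewer suggests verifying with a test rather than by reading the code.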


[GitHub] [kafka] mjsax commented on pull request #9683: MINOR: Fix KTable-KTable foreign-key join example

mjsax commented on pull request #9683: URL: https://github.com/apache/kafka/pull/9683#issuecomment-738284048 Merged to `trunk` and cherry-picked to `2.7` and `2.6` branches.

[GitHub] [kafka] mjsax merged pull request #9683: MINOR: Fix KTable-KTable foreign-key join example

mjsax merged pull request #9683: URL: https://github.com/apache/kafka/pull/9683

[GitHub] [kafka] JimGalasyn opened a new pull request #9683: DOCS-6115: Fix KTable-KTable foreign-key join example

JimGalasyn opened a new pull request #9683: URL: https://github.com/apache/kafka/pull/9683

[jira] [Assigned] (KAFKA-10555) Improve client state machine

[ https://issues.apache.org/jira/browse/KAFKA-10555?page=com.atlassian.jira.plugin.system.issuetabpanels:all-tabpanel ]

Walker Carlson reassigned KAFKA-10555:
--------------------------------------

Assignee: Walker Carlson

> Improve client state machine
> ----------------------------
>
> Key: KAFKA-10555
> URL: https://issues.apache.org/jira/browse/KAFKA-10555
> Project: Kafka
> Issue Type: Improvement
> Components: streams
> Reporter: Matthias J. Sax
> Assignee: Walker Carlson
> Priority: Major
> Labels: needs-kip
>
> The KafkaStreams client exposes its state to the user for monitoring purposes
> (i.e., RUNNING, REBALANCING, etc.). The state of the client depends on the
> state(s) of the internal StreamThreads, which have their own states.
> Furthermore, the client state affects what the user can do with the
> client. For example, active tasks can only be queried in the RUNNING state,
> among similar restrictions.
>
> With KIP-671 and KIP-663 we improved error handling capabilities and allowed
> stream threads to be added and removed dynamically. We allow adding/removing
> threads only in the RUNNING and REBALANCING states. This puts us in a "weird"
> position, because if we enter the ERROR state (i.e., if the last thread dies),
> we cannot add new threads any longer. However, if we have multiple threads and
> one dies, we don't enter the ERROR state and do allow the thread to be recovered.
>
> Before the KIPs the definition of the ERROR state was clear; however, with both
> KIPs it seems that we should revisit its semantics.

--
This message was sent by Atlassian Jira
(v8.3.4#803005)
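The asymmetry described in the ticket can be sketched as a small state check. This is a simplified illustration and not the actual KafkaStreams implementation: the class `ClientStateSketch`, its reduced `State` enum, and both method names are hypothetical. They encode only the two rules stated above: threads may be added or removed only in RUNNING or REBALANCING, and the client enters ERROR only when its last thread dies.

```java
import java.util.EnumSet;
import java.util.Set;

public final class ClientStateSketch {
    private ClientStateSketch() {}

    // Reduced, hypothetical subset of client states named in the ticket.
    public enum State { RUNNING, REBALANCING, ERROR }

    private static final Set<State> THREAD_CHANGE_ALLOWED =
        EnumSet.of(State.RUNNING, State.REBALANCING);

    // Rule 1: adding/removing threads is only allowed in RUNNING or REBALANCING.
    public static boolean canAddOrRemoveThread(State state) {
        return THREAD_CHANGE_ALLOWED.contains(state);
    }

    // Rule 2: the client enters ERROR only once no live threads remain.
    public static State afterThreadDeath(State current, int liveThreadsRemaining) {
        return liveThreadsRemaining == 0 ? State.ERROR : current;
    }
}
```

With these two rules, a client that loses one of several threads can still replace it, while a client that loses its only thread ends up in ERROR and can no longer add one. That is the inconsistency the ticket proposes to revisit.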

[jira] [Commented] (KAFKA-10805) More useful reporting from travis system tests

[ https://issues.apache.org/jira/browse/KAFKA-10805?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel=17243461#comment-17243461 ]

Jason Gustafson commented on KAFKA-10805:
-----------------------------------------

cc [~chia7712]

> More useful reporting from travis system tests
> ----------------------------------------------
>
> Key: KAFKA-10805
> URL: https://issues.apache.org/jira/browse/KAFKA-10805
> Project: Kafka
> Issue Type: Improvement
> Reporter: Jason Gustafson
> Priority: Major
>
> Inspecting system test output from travis is a bit painful at the moment
> because you have to check the build logs to find the tests that failed.
> Additionally, there is no logging available from the workers, which is often
> essential to debug a failure. We should look into how we can improve the
> build so that the output is more convenient and useful.

[jira] [Created] (KAFKA-10805) More useful reporting from travis system tests

Jason Gustafson created KAFKA-10805:
---------------------------------------

Summary: More useful reporting from travis system tests
Key: KAFKA-10805
URL: https://issues.apache.org/jira/browse/KAFKA-10805
Project: Kafka
Issue Type: Improvement
Reporter: Jason Gustafson

Inspecting system test output from travis is a bit painful at the moment because you have to check the build logs to find the tests that failed. Additionally, there is no logging available from the workers, which is often essential to debug a failure. We should look into how we can improve the build so that the output is more convenient and useful.

[jira] [Commented] (KAFKA-10804) Tune travis system tests to avoid timeouts

[ https://issues.apache.org/jira/browse/KAFKA-10804?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel=17243458#comment-17243458 ]

Jason Gustafson commented on KAFKA-10804:
-----------------------------------------

cc [~chia7712]

> Tune travis system tests to avoid timeouts
> ------------------------------------------
>
> Key: KAFKA-10804
> URL: https://issues.apache.org/jira/browse/KAFKA-10804
> Project: Kafka
> Issue Type: Bug
> Reporter: Jason Gustafson
> Priority: Major
>
> Thanks to https://github.com/apache/kafka/pull/9652, we are now running
> system tests for PRs. However, it looks like we need some tuning because many
> of the subsets are timing out. For example:
> https://travis-ci.com/github/apache/kafka/jobs/453241933. This might just be
> a matter of adding more subsets or changing the timeout, but we should
> probably also consider whether we want to run all system tests or if there is
> a more useful subset of them.

[jira] [Created] (KAFKA-10804) Tune travis system tests to avoid timeouts

Jason Gustafson created KAFKA-10804:
---------------------------------------

Summary: Tune travis system tests to avoid timeouts
Key: KAFKA-10804
URL: https://issues.apache.org/jira/browse/KAFKA-10804
Project: Kafka
Issue Type: Bug
Reporter: Jason Gustafson

Thanks to https://github.com/apache/kafka/pull/9652, we are now running system tests for PRs. However, it looks like we need some tuning because many of the subsets are timing out. For example: https://travis-ci.com/github/apache/kafka/jobs/453241933. This might just be a matter of adding more subsets or changing the timeout, but we should probably also consider whether we want to run all system tests or if there is a more useful subset of them.

[GitHub] [kafka] guozhangwang merged pull request #9276: KAFKA-10473: Add docs on partition size-on-disk, and other log-related metrics

guozhangwang merged pull request #9276: URL: https://github.com/apache/kafka/pull/9276

[GitHub] [kafka] guozhangwang commented on a change in pull request #9276: KAFKA-10473: Add docs on partition size-on-disk, and other log-related metrics

guozhangwang commented on a change in pull request #9276:
URL: https://github.com/apache/kafka/pull/9276#discussion_r535511409

##
File path: docs/ops.html
##
@@ -1129,6 +1128,26 @@ Security Considerations for Remote Mon
 kafka.server:type=BrokerTopicMetrics,name=ReassignmentBytesInPerSec
+
+Size of a partition on disk (in bytes)
+kafka.log:type=Log,name=Size,topic=([-.\w]+),partition=([0-9]+)
+The size of a partition on disk, measured in bytes.
+
+
+Number of log segments in a partition
+kafka.log:type=Log,name=NumLogSegments,topic=([-.\w]+),partition=([0-9]+)
+The number of log segments in a partition.

Review comment:
I think this is fine :)

##
File path: docs/ops.html
##
@@ -1129,6 +1128,26 @@ Security Considerations for Remote Mon
 kafka.server:type=BrokerTopicMetrics,name=ReassignmentBytesInPerSec
+
+Size of a partition on disk (in bytes)
+kafka.log:type=Log,name=Size,topic=([-.\w]+),partition=([0-9]+)
+The size of a partition on disk, measured in bytes.
+
+
+Number of log segments in a partition
+kafka.log:type=Log,name=NumLogSegments,topic=([-.\w]+),partition=([0-9]+)
+The number of log segments in a partition.
+
+
+First offset in a partition
+kafka.log:type=Log,name=LogStartOffset,topic=([-.\w]+),partition=([0-9]+)
+The first offset in a partition.
+
+
+Last offset in a partition
+kafka.log:type=Log,name=LogEndOffset,topic=([-.\w]+),partition=([0-9]+)
+The last offset in a partition.

Review comment:
This is the last uncommitted offset: it is incremented right after the local append, without waiting for other replicas to ack.
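The MBean name patterns documented in this diff can be checked against a concrete name with a small sketch. The class `LogMetricNameSketch` and its `parse` method are hypothetical. The capture group around the metric name is added for illustration, while the topic and partition groups are the ones shown in the docs. The sample topic name used in the usage note is made up.

```java
import java.util.Optional;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

public final class LogMetricNameSketch {
    private LogMetricNameSketch() {}

    // Topic and partition groups copied from the documented pattern; the
    // (\w+) group for the metric name is an illustrative addition.
    private static final Pattern LOG_METRIC = Pattern.compile(
        "kafka\\.log:type=Log,name=(\\w+),topic=([-.\\w]+),partition=([0-9]+)");

    // Returns {metricName, topic, partition} if the MBean name matches.
    public static Optional<String[]> parse(String mbeanName) {
        Matcher m = LOG_METRIC.matcher(mbeanName);
        if (!m.matches()) {
            return Optional.empty();
        }
        return Optional.of(new String[] {m.group(1), m.group(2), m.group(3)});
    }
}
```

For example, `parse("kafka.log:type=Log,name=LogEndOffset,topic=my-topic.v1,partition=3")` yields the metric name, topic, and partition as strings, whereas a `kafka.server` MBean name yields an empty result.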

[jira] [Comment Edited] (KAFKA-10774) Support Describe topic using topic IDs

[ https://issues.apache.org/jira/browse/KAFKA-10774?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel=17243454#comment-17243454 ]

Justine Olshan edited comment on KAFKA-10774 at 12/3/20, 7:16 PM:
------------------------------------------------------------------

I've updated the KIP to add another method like describeTopics that uses topic IDs instead [see here |https://cwiki.apache.org/confluence/display/KAFKA/KIP-516%3A+Topic+Identifiers#KIP516:TopicIdentifiers-AdminandKafkaAdminClient]

was (Author: jolshan):
I've updated the KIP to add another method like describeTopics that uses topic IDs instead [see here | https://cwiki.apache.org/confluence/display/KAFKA/KIP-516%3A+Topic+Identifiers#KIP516:TopicIdentifiers-AdminandKafkaAdminClient]

> Support Describe topic using topic IDs
> --------------------------------------
>
> Key: KAFKA-10774
> URL: https://issues.apache.org/jira/browse/KAFKA-10774
> Project: Kafka
> Issue Type: Sub-task
> Reporter: dengziming
> Assignee: dengziming
> Priority: Major
>
> Similar to KAFKA-10547, which adds topic IDs in MetadataResp, we add topic IDs
> to MetadataReq and can get TopicDesc by topic IDs

[jira] [Comment Edited] (KAFKA-10774) Support Describe topic using topic IDs

[ https://issues.apache.org/jira/browse/KAFKA-10774?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel=17243454#comment-17243454 ]

Justine Olshan edited comment on KAFKA-10774 at 12/3/20, 7:15 PM:
------------------------------------------------------------------

I've updated the KIP to add another method like describeTopics that uses topic IDs instead [see here| https://cwiki.apache.org/confluence/display/KAFKA/KIP-516%3A+Topic+Identifiers#KIP516:TopicIdentifiers-AdminandKafkaAdminClient]

was (Author: jolshan):
I've updated the KIP to add another method like describeTopics that uses topic IDs instead [see here| [https://cwiki.apache.org/confluence/display/KAFKA/KIP-516%3A+Topic+Identifiers#KIP516:TopicIdentifiers-AdminandKafkaAdminClient|http://example.com/]]

> Support Describe topic using topic IDs
> --------------------------------------
>
> Key: KAFKA-10774
> URL: https://issues.apache.org/jira/browse/KAFKA-10774
> Project: Kafka
> Issue Type: Sub-task
> Reporter: dengziming
> Assignee: dengziming
> Priority: Major
>
> Similar to KAFKA-10547, which adds topic IDs in MetadataResp, we add topic IDs
> to MetadataReq and can get TopicDesc by topic IDs

[jira] [Comment Edited] (KAFKA-10774) Support Describe topic using topic IDs

[ https://issues.apache.org/jira/browse/KAFKA-10774?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel=17243454#comment-17243454 ]

Justine Olshan edited comment on KAFKA-10774 at 12/3/20, 7:15 PM:
------------------------------------------------------------------

I've updated the KIP to add another method like describeTopics that uses topic IDs instead [see here| https://cwiki.apache.org/confluence/display/KAFKA/KIP-516%3A+Topic+Identifiers#KIP516:TopicIdentifiers-AdminandKafkaAdminClient]

was (Author: jolshan):
I've updated the KIP to add another method like describeTopics that uses topic IDs instead [see here| https://cwiki.apache.org/confluence/display/KAFKA/KIP-516%3A+Topic+Identifiers#KIP516:TopicIdentifiers-AdminandKafkaAdminClient]

> Support Describe topic using topic IDs
> --------------------------------------
>
> Key: KAFKA-10774
> URL: https://issues.apache.org/jira/browse/KAFKA-10774
> Project: Kafka
> Issue Type: Sub-task
> Reporter: dengziming
> Assignee: dengziming
> Priority: Major
>
> Similar to KAFKA-10547, which adds topic IDs in MetadataResp, we add topic IDs
> to MetadataReq and can get TopicDesc by topic IDs

[jira] [Comment Edited] (KAFKA-10774) Support Describe topic using topic IDs