Github user yunjzhang closed the pull request at:

https://github.com/apache/spark/pull/22177

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

Github user yunjzhang commented on the issue:

https://github.com/apache/spark/pull/22177

I respect your decision.

This PR does not fix the ordering issue at its root.

I saw differences in how jobs/tasks are shown between Spark 2.1 and 2.3.

The same insert...select was treated as 2 jobs

Github user yunjzhang commented on the issue:

https://github.com/apache/spark/pull/22177

My fault, just fixed.


Github user yunjzhang commented on the issue:

https://github.com/apache/spark/pull/22177

Thanks for the suggestion, I just renamed the PR.

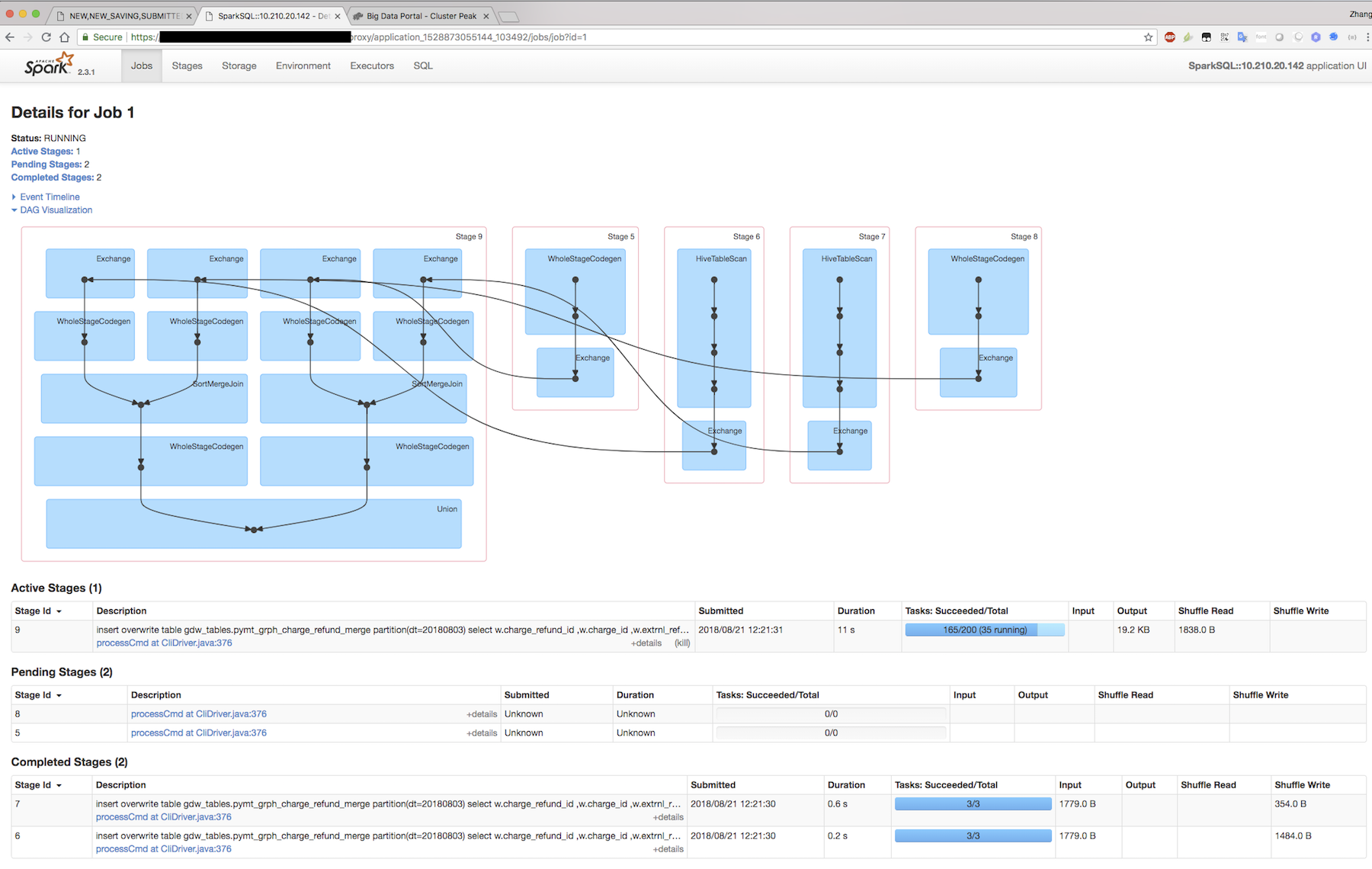

before fix

after fix

GitHub user yunjzhang opened a pull request:

https://github.com/apache/spark/pull/22177

Stages in wrong order within job page DAG chart

If a Spark job contains multiple tasks, the order in the DAG chart might be incorrect.

A sample and screenshots can be found in the JIRA ticket.