[GitHub] [spark] bjornjorgensen commented on pull request #40381: [SPARK-42761][BUILD] Upgrade `fabric8:kubernetes-client` to 6.5.0

bjornjorgensen commented on PR #40381: URL: https://github.com/apache/spark/pull/40381#issuecomment-1465158915 **The maintainers of the library contend that the application's trust would already have had to be compromised or established and therefore dispute the risk associated with this issue on the basis that there is a high bar for exploitation. Thus, no fix is expected.** https://security.snyk.io/vuln/SNYK-JAVA-ORGYAML-3152153 This is part of snakeyaml release notes 2.0 (2023-02-26) Fix #570: SafeConstructor ignores LoaderOptions setCodePointLimit() (thanks to Robert Patrick) Update #565: (Backwards-incompatible) Do not allow global tags by default to fix CVE-2022-1471 (thanks to Jonathan Leitschuh) 1.32 (2022-09-12) Fix #547: Set the limit for incoming data to prevent a CVE report in NIST. By default it is 3MB https://bitbucket.org/snakeyaml/snakeyaml/wiki/Changes -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] MaxGekk commented on pull request #39239: [SPARK-41730][PYTHON] Set tz to UTC while converting of timestamps to python's datetime

MaxGekk commented on PR #39239:

URL: https://github.com/apache/spark/pull/39239#issuecomment-1465148669

@HyukjinKwon @cloud-fan A problem of PySpark's timestamp_ltz is it is a

local timestamp, and not a physical timestamp that timestamp_ltz is supposed to

be. Let's see even Java 7 timestamp (it also has some issues but it is still a

physical time point not local timestamp):

```

➜ ~ TZ=America/Los_Angeles ./spark-3.3/bin/spark-shell

Welcome to

__

/ __/__ ___ _/ /__

_\ \/ _ \/ _ `/ __/ '_/

/___/ .__/\_,_/_/ /_/\_\ version 3.3.2

/_/

scala> spark.conf.set("spark.sql.session.timeZone", "Europe/Moscow")

scala> val df = sql("select timestamp'1970-01-01T00:00:00+'")

df: org.apache.spark.sql.DataFrame = [TIMESTAMP '1970-01-01 03:00:00':

timestamp]

scala> df.collect()(0).getTimestamp(0).toGMTString

res1: String = 1 Jan 1970 00:00:00 GMT

```

The timestamp still knows how to show itself in the UTC time zone because it

contains an offset from the epoch, and can render itself in any time zone.

Let's see at PySpark timestamp:

```

$ TZ=America/Los_Angeles ./spark-3.3/bin/pyspark

Welcome to

__

/ __/__ ___ _/ /__

_\ \/ _ \/ _ `/ __/ '_/

/__ / .__/\_,_/_/ /_/\_\ version 3.3.2

/_/

>>> spark.conf.set("spark.sql.session.timeZone", "Europe/Moscow")

>>>

>>> df = sql("select timestamp'1970-01-01T00:00:00+'")

>>> df.collect()[0][0].utctimetuple()

time.struct_time(tm_year=1969, tm_mon=12, tm_mday=31, tm_hour=16, tm_min=0,

tm_sec=0, tm_wday=2, tm_yday=365, tm_isdst=0)

```

Since python's timestamp becomes a local timestamp at the conversion:

```python

return datetime.datetime.fromtimestamp(ts //

100).replace(microsecond=ts % 100)

```

it cannot render itself in the UTC time zone correctly.

--

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] bjornjorgensen commented on pull request #40381: [SPARK-42761][BUILD] Upgrade `fabric8:kubernetes-client` to 6.5.0

bjornjorgensen commented on PR #40381: URL: https://github.com/apache/spark/pull/40381#issuecomment-1465141874 Well, the comment that you are refereeing to, have a link but I cant get in  3 weeks later they merged a PR https://github.com/fabric8io/kubernetes-client/commit/43b04f6cc2cde0b8cebb76c842c09de30c236780 that fix this issue. And yesterday SNYK open a PR to my repo for this issue. https://github.com/bjornjorgensen/spark/pull/102 I can always change the text for this PR, but I haven't seen anything that makes me believe that kubernets-client is not affected by this CVE. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] yabola commented on pull request #39950: [SPARK-42388][SQL] Avoid parquet footer reads twice when no filters in vectorized reader

yabola commented on PR #39950: URL: https://github.com/apache/spark/pull/39950#issuecomment-1465215240 @sunchao Hi~ Could you take a look at this PR? I think it will be useful when there are joined tables and filter conditions are on few tables. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] dongjoon-hyun commented on pull request #40386: [MINOR][SQL][FOLLOWUP] Fix scalastyle in LimitPushDownThroughWindow

dongjoon-hyun commented on PR #40386: URL: https://github.com/apache/spark/pull/40386#issuecomment-1465291603 cc @wangyum and @kenny-ddd -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] wangyum commented on pull request #40365: [MINOR][SQL] Fix incorrect comment in LimitPushDownThroughWindow

wangyum commented on PR #40365: URL: https://github.com/apache/spark/pull/40365#issuecomment-1465210746 Merged to master. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] bjornjorgensen commented on pull request #40386: [MINOR][SQL][FOLLOWUP] Fix scalastyle in LimitPushDownThroughWindow

bjornjorgensen commented on PR #40386: URL: https://github.com/apache/spark/pull/40386#issuecomment-1465304288 @dongjoon-hyun Thank you. Yes, this PR looks ok. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] bjornjorgensen commented on pull request #40386: [MINOR][SQL][FOLLOWUP] Fix scalastyle in LimitPushDownThroughWindow

bjornjorgensen commented on PR #40386: URL: https://github.com/apache/spark/pull/40386#issuecomment-1465316569 Seams to be working now :) -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] github-actions[bot] commented on pull request #38887: [SPARK-41368][SQL] Reorder the window partition expressions by expression stats

github-actions[bot] commented on PR #38887: URL: https://github.com/apache/spark/pull/38887#issuecomment-1465343346 We're closing this PR because it hasn't been updated in a while. This isn't a judgement on the merit of the PR in any way. It's just a way of keeping the PR queue manageable. If you'd like to revive this PR, please reopen it and ask a committer to remove the Stale tag! -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] github-actions[bot] closed pull request #38674: [SPARK-41160][YARN] Fix error when submitting a task to the yarn that enabled the timeline service

github-actions[bot] closed pull request #38674: [SPARK-41160][YARN] Fix error when submitting a task to the yarn that enabled the timeline service URL: https://github.com/apache/spark/pull/38674 -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] dongjoon-hyun commented on pull request #40365: [MINOR][SQL] Fix incorrect comment in LimitPushDownThroughWindow

dongjoon-hyun commented on PR #40365: URL: https://github.com/apache/spark/pull/40365#issuecomment-1465290797 Oh, it seems to break Scala style, @wangyum . ``` [error] /home/runner/work/spark/spark/sql/catalyst/src/main/scala/org/apache/spark/sql/catalyst/optimizer/LimitPushDownThroughWindow.scala:33: File line length exceeds 100 characters ``` -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] dongjoon-hyun commented on a diff in pull request #40365: [MINOR][SQL] Fix incorrect comment in LimitPushDownThroughWindow

dongjoon-hyun commented on code in PR #40365:

URL: https://github.com/apache/spark/pull/40365#discussion_r1133318969

##

sql/catalyst/src/main/scala/org/apache/spark/sql/catalyst/optimizer/LimitPushDownThroughWindow.scala:

##

@@ -30,7 +30,7 @@ import

org.apache.spark.sql.catalyst.trees.TreePattern.{LIMIT, WINDOW}

* }}}

*/

object LimitPushDownThroughWindow extends Rule[LogicalPlan] {

- // The window frame of RankLike and RowNumberLike can only be UNBOUNDED

PRECEDING to CURRENT ROW.

+ // The window frame of Rank, DenseRank and RowNumber can only be UNBOUNDED

PRECEDING to CURRENT ROW.

Review Comment:

This seems to exceed 100 line and this PR didn't pass the CI.

--

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] dongjoon-hyun commented on pull request #40381: [SPARK-42761][BUILD] Upgrade `fabric8:kubernetes-client` to 6.5.0

dongjoon-hyun commented on PR #40381: URL: https://github.com/apache/spark/pull/40381#issuecomment-1465293982 BTW, I have two additional questions for the following PR you referred. 1. Do you mean it's the evidence of the previous assessment? > SafeConstructor ignores LoaderOptions setCodePointLimit() (thanks to Robert Patrick) 2. Do you think the following major version change is safe to us? ``` - 1.33 + 2.5 ``` -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] dongjoon-hyun commented on pull request #40386: [MINOR][SQL][FOLLOWUP] Fix scalastyle in LimitPushDownThroughWindow

dongjoon-hyun commented on PR #40386: URL: https://github.com/apache/spark/pull/40386#issuecomment-1465298354 Scala linter passed.  -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] dongjoon-hyun commented on pull request #40386: [MINOR][SQL][FOLLOWUP] Fix scalastyle in LimitPushDownThroughWindow

dongjoon-hyun commented on PR #40386: URL: https://github.com/apache/spark/pull/40386#issuecomment-1465298419 Hi, @bjornjorgensen . Could you review this PR? -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] dongjoon-hyun commented on pull request #40365: [MINOR][SQL] Fix incorrect comment in LimitPushDownThroughWindow

dongjoon-hyun commented on PR #40365: URL: https://github.com/apache/spark/pull/40365#issuecomment-1465332828 Oh, no problem at all. I just wanted to share my old mistakes. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] StevenChenDatabricks opened a new pull request, #40385: [SPARK-42753] ReusedExchange refers to non-existent nodes

StevenChenDatabricks opened a new pull request, #40385: URL: https://github.com/apache/spark/pull/40385 ### What changes were proposed in this pull request? ### Why are the changes needed? ### Does this PR introduce _any_ user-facing change? ### How was this patch tested? -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] bjornjorgensen commented on pull request #40381: [SPARK-42761][BUILD] Upgrade `fabric8:kubernetes-client` to 6.5.0

bjornjorgensen commented on PR #40381: URL: https://github.com/apache/spark/pull/40381#issuecomment-1465294879 ok, I didn't know who this user was. I have updated the PR text now. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] dongjoon-hyun commented on pull request #40365: [MINOR][SQL] Fix incorrect comment in LimitPushDownThroughWindow

dongjoon-hyun commented on PR #40365: URL: https://github.com/apache/spark/pull/40365#issuecomment-1465294691 To @wangyum , sometimes, the contributor's GitHub Action doesn't work properly. I also made similar mistakes before by forgetting the check. It's our responsibility, the committers, are responsible to check it manually. :)  -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] dongjoon-hyun commented on pull request #40386: [MINOR][SQL][FOLLOWUP] Fix scalastyle in LimitPushDownThroughWindow

dongjoon-hyun commented on PR #40386: URL: https://github.com/apache/spark/pull/40386#issuecomment-1465305631 No, only the PR author can do that because we use the author's GitHub Actions for that PR. > I have seen this one time before. Can you restart tests on the PR that fails? For Apache Spark branch, of course, you can because you are the committer. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] dongjoon-hyun opened a new pull request, #40386: [MINOR][SQL][FOLLOWUP] Fix scalastyle in LimitPushDownThroughWindow

dongjoon-hyun opened a new pull request, #40386: URL: https://github.com/apache/spark/pull/40386 ### What changes were proposed in this pull request? ### Why are the changes needed? ### Does this PR introduce _any_ user-facing change? ### How was this patch tested? -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] dongjoon-hyun commented on pull request #40386: [MINOR][SQL][FOLLOWUP] Fix scalastyle in LimitPushDownThroughWindow

dongjoon-hyun commented on PR #40386: URL: https://github.com/apache/spark/pull/40386#issuecomment-1465300338 Could you review this in order to recover master branch, @sunchao ? -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] wangyum commented on pull request #40365: [MINOR][SQL] Fix incorrect comment in LimitPushDownThroughWindow

wangyum commented on PR #40365: URL: https://github.com/apache/spark/pull/40365#issuecomment-1465332554 @dongjoon-hyun Sorry. I will pay attention to check next time. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] dongjoon-hyun closed pull request #40381: [SPARK-42761][BUILD][K8S] Upgrade `kubernetes-client` to 6.5.0

dongjoon-hyun closed pull request #40381: [SPARK-42761][BUILD][K8S] Upgrade `kubernetes-client` to 6.5.0 URL: https://github.com/apache/spark/pull/40381 -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] srowen commented on pull request #40380: [SPARK-42760][DOCS][PYTHON] provide one format for writing to kafka

srowen commented on PR #40380: URL: https://github.com/apache/spark/pull/40380#issuecomment-1465301926 Wrong JIRA is linked, please adjust -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] bjornjorgensen commented on pull request #40386: [MINOR][SQL][FOLLOWUP] Fix scalastyle in LimitPushDownThroughWindow

bjornjorgensen commented on PR #40386: URL: https://github.com/apache/spark/pull/40386#issuecomment-1465304673 I have seen this one time before. Can you restart tests on the PR that fails? -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] dongjoon-hyun commented on pull request #40386: [MINOR][SQL][FOLLOWUP] Fix scalastyle in LimitPushDownThroughWindow

dongjoon-hyun commented on PR #40386: URL: https://github.com/apache/spark/pull/40386#issuecomment-1465304619 Thank you so much, @bjornjorgensen . -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

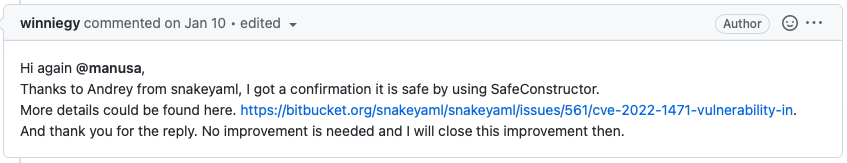

[GitHub] [spark] dongjoon-hyun commented on pull request #40381: [SPARK-42761][BUILD] Upgrade `fabric8:kubernetes-client` to 6.5.0

dongjoon-hyun commented on PR #40381: URL: https://github.com/apache/spark/pull/40381#issuecomment-1465293060 @bjornjorgensen . Do you mean you can not trust `winniegy`, the fabric8io community member's comment? He close the issue after that comment.  Hence, the migration happens independently from the CVE just for the future release. In short, the following claim is wrong according to the context. > 3 weeks later they merged a PR https://github.com/fabric8io/kubernetes-client/commit/43b04f6cc2cde0b8cebb76c842c09de30c236780 that fix this issue. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] bjornjorgensen commented on pull request #40381: [SPARK-42761][BUILD] Upgrade `fabric8:kubernetes-client` to 6.5.0

bjornjorgensen commented on PR #40381: URL: https://github.com/apache/spark/pull/40381#issuecomment-1465295815 Now that it turns out that the information that I have been given is not correct. This change the whole picture with why we should include this PR. I think we can wait until we are done with 3.4 so that we are on the safe side. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] dongjoon-hyun commented on pull request #40386: [MINOR][SQL][FOLLOWUP] Fix scalastyle in LimitPushDownThroughWindow

dongjoon-hyun commented on PR #40386: URL: https://github.com/apache/spark/pull/40386#issuecomment-1465302694 Since this is a comment only change, let me merge this to recover the master branch and PRs.  -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] dongjoon-hyun closed pull request #40386: [MINOR][SQL][FOLLOWUP] Fix scalastyle in LimitPushDownThroughWindow

dongjoon-hyun closed pull request #40386: [MINOR][SQL][FOLLOWUP] Fix scalastyle in LimitPushDownThroughWindow URL: https://github.com/apache/spark/pull/40386 -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] dongjoon-hyun commented on pull request #40386: [MINOR][SQL][FOLLOWUP] Fix scalastyle in LimitPushDownThroughWindow

dongjoon-hyun commented on PR #40386: URL: https://github.com/apache/spark/pull/40386#issuecomment-1465302800 Merged to master. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] wangyum closed pull request #40365: [MINOR][SQL] Fix incorrect comment in LimitPushDownThroughWindow

wangyum closed pull request #40365: [MINOR][SQL] Fix incorrect comment in LimitPushDownThroughWindow URL: https://github.com/apache/spark/pull/40365 -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] ivoson commented on pull request #40286: [SPARK-42577][CORE] Add max attempts limitation for stages to avoid potential infinite retry

ivoson commented on PR #40286: URL: https://github.com/apache/spark/pull/40286#issuecomment-1465226363 > Just a doc change I had missed last time around. Rest looks good to me - can you check the proposed change, and reformulate it to something similar ? I will merge it once done Hi @mridulm thanks for the suggestion. Updated. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] mridulm commented on pull request #40286: [SPARK-42577][CORE] Add max attempts limitation for stages to avoid potential infinite retry

mridulm commented on PR #40286: URL: https://github.com/apache/spark/pull/40286#issuecomment-1465327881 Can you update to latest @ivoson ? The style failure is not related to your change, but blocks build -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] zhengruifeng commented on a diff in pull request #40375: [SPARK-42755][CONNECT] Factor literal value conversion out to `connect-common`

zhengruifeng commented on code in PR #40375:

URL: https://github.com/apache/spark/pull/40375#discussion_r1133354786

##

connector/connect/server/src/main/scala/org/apache/spark/sql/connect/planner/LiteralExpressionProtoConverter.scala:

##

@@ -107,7 +107,7 @@ object LiteralValueProtoConverter {

ArrayType(DataTypeProtoConverter.toCatalystType(lit.getArray.getElementType)))

case _ =>

-throw InvalidPlanInput(

+throw new UnsupportedOperationException(

s"Unsupported Literal Type: ${lit.getLiteralTypeCase.getNumber}" +

s"(${lit.getLiteralTypeCase.name})")

Review Comment:

+1, let me revert here

--

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] zhengruifeng commented on a diff in pull request #40376: [SPARK-42756][CONNECT][PYTHON] Helper function to convert proto literal to value in Python Client

zhengruifeng commented on code in PR #40376:

URL: https://github.com/apache/spark/pull/40376#discussion_r1133369721

##

python/pyspark/sql/connect/expressions.py:

##

@@ -308,6 +308,43 @@ def _infer_type(cls, value: Any) -> DataType:

def _from_value(cls, value: Any) -> "LiteralExpression":

return LiteralExpression(value=value,

dataType=LiteralExpression._infer_type(value))

+@classmethod

+def _to_value(cls, literal: "proto.Expression.Literal") -> Any:

+if literal.HasField("null"):

+return None

+elif literal.HasField("binary"):

+return literal.binary

+elif literal.HasField("boolean"):

+return literal.boolean

+elif literal.HasField("byte"):

+return literal.byte

+elif literal.HasField("short"):

+return literal.short

+elif literal.HasField("integer"):

+return literal.integer

+elif literal.HasField("long"):

+return literal.long

+elif literal.HasField("float"):

+return literal.float

+elif literal.HasField("double"):

+return literal.double

+elif literal.HasField("decimal"):

+return decimal.Decimal(literal.decimal.value)

+elif literal.HasField("string"):

+return literal.string

+elif literal.HasField("date"):

+return DateType().fromInternal(literal.date)

+elif literal.HasField("timestamp"):

+return TimestampType().fromInternal(literal.timestamp)

+elif literal.HasField("timestamp_ntz"):

+return TimestampNTZType().fromInternal(literal.timestamp_ntz)

+elif literal.HasField("day_time_interval"):

+return

DayTimeIntervalType().fromInternal(literal.day_time_interval)

+elif literal.HasField("array"):

+return [LiteralExpression._to_value(v) for v in

literal.array.elements]

Review Comment:

that is fine

--

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] zhengruifeng commented on a diff in pull request #40376: [SPARK-42756][CONNECT][PYTHON] Helper function to convert proto literal to value in Python Client

zhengruifeng commented on code in PR #40376:

URL: https://github.com/apache/spark/pull/40376#discussion_r1133369822

##

python/pyspark/sql/connect/expressions.py:

##

@@ -308,6 +308,43 @@ def _infer_type(cls, value: Any) -> DataType:

def _from_value(cls, value: Any) -> "LiteralExpression":

return LiteralExpression(value=value,

dataType=LiteralExpression._infer_type(value))

+@classmethod

+def _to_value(cls, literal: "proto.Expression.Literal") -> Any:

+if literal.HasField("null"):

+return None

Review Comment:

`None` here is a valid value.

--

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] ulysses-you opened a new pull request, #40390: [SPARK-42768][SQL] Enable cached plan apply AQE by default

ulysses-you opened a new pull request, #40390: URL: https://github.com/apache/spark/pull/40390 ### What changes were proposed in this pull request? This pr enables the `spark.sql.optimizer.canChangeCachedPlanOutputPartitioning` by default. ### Why are the changes needed? We have fixed all known issues when enable cache + AQE since SPARK-42101. There is no reason to skip AQE optimizing cached plan. ### Does this PR introduce _any_ user-facing change? yes, the default config changed ### How was this patch tested? Pass CI -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] ulysses-you commented on a diff in pull request #40262: [SPARK-42651][SQL] Optimize global sort to driver sort

ulysses-you commented on code in PR #40262:

URL: https://github.com/apache/spark/pull/40262#discussion_r1133461951

##

sql/core/src/main/scala/org/apache/spark/sql/execution/DriverSortExec.scala:

##

@@ -0,0 +1,73 @@

+/*

+ * Licensed to the Apache Software Foundation (ASF) under one or more

+ * contributor license agreements. See the NOTICE file distributed with

+ * this work for additional information regarding copyright ownership.

+ * The ASF licenses this file to You under the Apache License, Version 2.0

+ * (the "License"); you may not use this file except in compliance with

+ * the License. You may obtain a copy of the License at

+ *

+ *http://www.apache.org/licenses/LICENSE-2.0

+ *

+ * Unless required by applicable law or agreed to in writing, software

+ * distributed under the License is distributed on an "AS IS" BASIS,

+ * WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+ * See the License for the specific language governing permissions and

+ * limitations under the License.

+ */

+

+package org.apache.spark.sql.execution

+

+import java.util.concurrent.TimeUnit.NANOSECONDS

+

+import org.apache.spark.rdd.RDD

+import org.apache.spark.sql.catalyst.InternalRow

+import org.apache.spark.sql.catalyst.expressions.{Attribute, NamedExpression,

SortOrder, UnsafeProjection}

+import

org.apache.spark.sql.catalyst.expressions.codegen.LazilyGeneratedOrdering

+import org.apache.spark.sql.catalyst.plans.physical.{Partitioning,

SinglePartition}

+import org.apache.spark.sql.execution.metric.{SQLMetric, SQLMetrics}

+

+/**

+ * A special operator for global [[SortExec]] if it is the root node

with/without [[ProjectExec]].

+ */

+case class DriverSortExec(

Review Comment:

We have a rule `EliminateLimits` to remove limit if it's large enough, i.e.,

the actually row count is less than or equal to limit number. So if the `Sort`

is the root node that means:

1. the user does not specify the limit

2. the limit has no meaning and has been eliminated

I think if the data size is small enough, `DriverSortExec` is faster than

`TakeOrderedAndProjectExec`:

- `DriverSortExec` only sort data once at driver side

- `TakeOrderedAndProjectExec` sort the data at executor side then merge the

sorted data at driver side

--

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] zhengruifeng commented on a diff in pull request #40376: [SPARK-42756][CONNECT][PYTHON] Helper function to convert proto literal to value in Python Client

zhengruifeng commented on code in PR #40376:

URL: https://github.com/apache/spark/pull/40376#discussion_r1133373330

##

python/pyspark/sql/connect/expressions.py:

##

@@ -308,6 +308,43 @@ def _infer_type(cls, value: Any) -> DataType:

def _from_value(cls, value: Any) -> "LiteralExpression":

return LiteralExpression(value=value,

dataType=LiteralExpression._infer_type(value))

+@classmethod

+def _to_value(cls, literal: "proto.Expression.Literal") -> Any:

+if literal.HasField("null"):

+return None

+elif literal.HasField("binary"):

+return literal.binary

+elif literal.HasField("boolean"):

+return literal.boolean

+elif literal.HasField("byte"):

+return literal.byte

+elif literal.HasField("short"):

+return literal.short

+elif literal.HasField("integer"):

+return literal.integer

+elif literal.HasField("long"):

+return literal.long

+elif literal.HasField("float"):

+return literal.float

+elif literal.HasField("double"):

+return literal.double

+elif literal.HasField("decimal"):

+return decimal.Decimal(literal.decimal.value)

+elif literal.HasField("string"):

+return literal.string

+elif literal.HasField("date"):

+return DateType().fromInternal(literal.date)

+elif literal.HasField("timestamp"):

+return TimestampType().fromInternal(literal.timestamp)

+elif literal.HasField("timestamp_ntz"):

+return TimestampNTZType().fromInternal(literal.timestamp_ntz)

+elif literal.HasField("day_time_interval"):

+return

DayTimeIntervalType().fromInternal(literal.day_time_interval)

+elif literal.HasField("array"):

+return [LiteralExpression._to_value(v) for v in

literal.array.elements]

Review Comment:

let me add one

--

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] navinvishy commented on pull request #38947: [SPARK-41233][SQL][PYTHON] Add `array_prepend` function

navinvishy commented on PR #38947: URL: https://github.com/apache/spark/pull/38947#issuecomment-1465390080 > @navinvishy sorry, it seems late for 3.4.0. would you mind changing the version from `3.4.0` to `3.5.0`? > > I am going to merge this PR to master this week if no more comments. I've updated the version. Thanks @zhengruifeng ! -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] xinrong-meng opened a new pull request, #40388: [SPARK-42765][CONNECT][PYTHON] Regulate the import path of `pandas_udf`

xinrong-meng opened a new pull request, #40388:

URL: https://github.com/apache/spark/pull/40388

### What changes were proposed in this pull request?

Regulate the import path of `pandas_udf`.

### Why are the changes needed?

Usability.

### Does this PR introduce _any_ user-facing change?

No. Only the error message is improved, as shown below.

FROM

```sh

>>> pyspark.sql.connect.functions.pandas_udf()

Traceback (most recent call last):

File "", line 1, in

File "/Users/xinrong.meng/spark/python/pyspark/sql/connect/functions.py",

line 2470, in pandas_udf

raise NotImplementedError("pandas_udf() is not implemented.")

NotImplementedError: pandas_udf() is not implemented.

```

TO

```sh

>>> pyspark.sql.connect.functions.pandas_udf()

Traceback (most recent call last):

File "", line 1, in

File "/Users/xinrong.meng/spark/python/pyspark/sql/connect/functions.py",

line 2470, in pandas_udf

raise NotImplementedError("Please import pandas_udf from

pyspark.sql.functions.")

NotImplementedError: Please import pandas_udf from pyspark.sql.functions

```

### How was this patch tested?

Unit test.

--

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] liang3zy22 commented on a diff in pull request #40347: [SPARK-42711][BUILD]Update usage info for sbt tool

liang3zy22 commented on code in PR #40347: URL: https://github.com/apache/spark/pull/40347#discussion_r1133395930 ## build/sbt: ## @@ -17,7 +17,7 @@ # limitations under the License. # -SELF=$(cd $(dirname $0) && pwd) +SELF=$(cd "$(dirname "$0")" && pwd) Review Comment: This is shellcheck error/warning fix. I think we can keep it. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] liang3zy22 commented on a diff in pull request #40347: [SPARK-42711][BUILD]Update usage info for sbt tool

liang3zy22 commented on code in PR #40347: URL: https://github.com/apache/spark/pull/40347#discussion_r1133397703 ## build/sbt: ## @@ -62,7 +63,7 @@ Usage: $script_name [options] -sbt-rc use an RC version of sbt -sbt-snapshot use a snapshot version of sbt - # java version (default: java from PATH, currently $(java -version 2>&1 | grep version)) + # JAVA_HOME (this will override default JAVA_HOME) Review Comment: Well, my words are not clear. I will rollback to old hint info. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] LuciferYang commented on pull request #40389: [SPARK-42767][CONNECT][TESTS] Add check condition to start connect server fallback with `in-memory` and auto ignored some tests strongly

LuciferYang commented on PR #40389: URL: https://github.com/apache/spark/pull/40389#issuecomment-1465482691 Will update pr description later -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] LuciferYang opened a new pull request, #40389: [SPARK-42767][CONNECT][TESTS] Add check condition to start connect server fallback with `in-memory` and auto ignored some tests strongly

LuciferYang opened a new pull request, #40389: URL: https://github.com/apache/spark/pull/40389 ### What changes were proposed in this pull request? ### Why are the changes needed? ### Does this PR introduce _any_ user-facing change? ### How was this patch tested? -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] aokolnychyi commented on a diff in pull request #40308: [SPARK-42151][SQL] Align UPDATE assignments with table attributes

aokolnychyi commented on code in PR #40308:

URL: https://github.com/apache/spark/pull/40308#discussion_r1133467299

##

sql/catalyst/src/main/scala/org/apache/spark/sql/catalyst/analysis/Analyzer.scala:

##

@@ -3344,43 +3345,6 @@ class Analyzer(override val catalogManager:

CatalogManager) extends RuleExecutor

} else {

v2Write

}

-

- case u: UpdateTable if !u.skipSchemaResolution && u.resolved =>

Review Comment:

The only reason I would consider adding a config is to avoid an implicit

behavior change as we currently check field compatibility for INSERTS during

analysis. What is the community policy on such things? Is it okay to just

transition to runtime checks in 3.5?

##

sql/catalyst/src/main/scala/org/apache/spark/sql/catalyst/analysis/Analyzer.scala:

##

@@ -3344,43 +3345,6 @@ class Analyzer(override val catalogManager:

CatalogManager) extends RuleExecutor

} else {

v2Write

}

-

- case u: UpdateTable if !u.skipSchemaResolution && u.resolved =>

Review Comment:

The only reason I would consider adding a config is to avoid an implicit

behavior change as we currently check field compatibility for INSERTs during

analysis. What is the community policy on such things? Is it okay to just

transition to runtime checks in 3.5?

--

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] dongjoon-hyun commented on pull request #40387: [SPARK-42764][K8S] Parameterize the max number of attempts for driver props fetcher in KubernetesExecutorBackend

dongjoon-hyun commented on PR #40387: URL: https://github.com/apache/spark/pull/40387#issuecomment-1465358231 Could you review this PR, @viirya ? -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] viirya commented on a diff in pull request #40308: [SPARK-42151][SQL] Align UPDATE assignments with table attributes

viirya commented on code in PR #40308:

URL: https://github.com/apache/spark/pull/40308#discussion_r1133355436

##

sql/catalyst/src/main/scala/org/apache/spark/sql/catalyst/analysis/AssignmentUtils.scala:

##

@@ -0,0 +1,275 @@

+/*

+ * Licensed to the Apache Software Foundation (ASF) under one or more

+ * contributor license agreements. See the NOTICE file distributed with

+ * this work for additional information regarding copyright ownership.

+ * The ASF licenses this file to You under the Apache License, Version 2.0

+ * (the "License"); you may not use this file except in compliance with

+ * the License. You may obtain a copy of the License at

+ *

+ *http://www.apache.org/licenses/LICENSE-2.0

+ *

+ * Unless required by applicable law or agreed to in writing, software

+ * distributed under the License is distributed on an "AS IS" BASIS,

+ * WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+ * See the License for the specific language governing permissions and

+ * limitations under the License.

+ */

+

+package org.apache.spark.sql.catalyst.analysis

+

+import scala.collection.mutable

+

+import org.apache.spark.sql.AnalysisException

+import org.apache.spark.sql.catalyst.SQLConfHelper

+import org.apache.spark.sql.catalyst.expressions.{Alias, Attribute,

AttributeReference, CreateNamedStruct, Expression, ExtractValue,

GetStructField, Literal, NamedExpression}

+import org.apache.spark.sql.catalyst.plans.logical.Assignment

+import org.apache.spark.sql.catalyst.util.CharVarcharUtils

+import org.apache.spark.sql.connector.catalog.CatalogV2Implicits._

+import org.apache.spark.sql.errors.QueryCompilationErrors

+import org.apache.spark.sql.internal.SQLConf.StoreAssignmentPolicy

+import org.apache.spark.sql.types.{ArrayType, DataType, MapType, StructType}

+

+object AssignmentUtils extends SQLConfHelper with CastSupport {

+

+ private case class ColumnUpdate(ref: Seq[String], expr: Expression)

+

+ /**

+ * Aligns assignments to match table columns.

+ *

+ * This method processes and reorders given assignments so that each target

column gets

+ * an expression it should be set to. If a column does not have a matching

assignment,

+ * it will be set to its current value. For example, if one passes table

attributes c1, c2

+ * and an assignment c2 = 1, this method will return c1 = c1, c2 = 1.

+ *

+ * This method also handles updates to nested columns. If there is an

assignment to a particular

+ * nested field, this method will construct a new struct with one field

updated preserving other

+ * fields that have not been modified. For example, if one passes table

attributes c1, c2

+ * where c2 is a struct with fields n1 and n2 and an assignment c2.n2 = 1,

this method will

+ * return c1 = c1, c2 = struct(c2.n1, 1).

+ *

+ * @param attrs table attributes

+ * @param assignments assignments to align

+ * @return aligned assignments that match table columns

Review Comment:

nit: a consistent description

```suggestion

* @param attrs table attributes

* @param assignments assignments to align

* @return aligned assignments that match table attributes

```

##

sql/catalyst/src/main/scala/org/apache/spark/sql/catalyst/analysis/Analyzer.scala:

##

@@ -3344,43 +3345,6 @@ class Analyzer(override val catalogManager:

CatalogManager) extends RuleExecutor

} else {

v2Write

}

-

- case u: UpdateTable if !u.skipSchemaResolution && u.resolved =>

Review Comment:

Hmm, I feel it is a bit more than required to have additional config for

this runtime error/analysis error behavior. It sounds like an internal decision

to databases, not sure if we need it to be configurable. Maybe I don't see too

much good with such config.

Ideally it should be an unified behavior, I agree this too. If there is

already some inconsistency. I think we can address them incrementally.

##

sql/catalyst/src/main/scala/org/apache/spark/sql/catalyst/analysis/Analyzer.scala:

##

@@ -319,6 +319,7 @@ class Analyzer(override val catalogManager: CatalogManager)

extends RuleExecutor

ResolveRandomSeed ::

ResolveBinaryArithmetic ::

ResolveUnion ::

+ AlignRowLevelCommandAssignments ::

Review Comment:

Maybe add a comment that this rule cannot be changed in order for now.

##

sql/catalyst/src/main/scala/org/apache/spark/sql/catalyst/analysis/AssignmentUtils.scala:

##

@@ -0,0 +1,275 @@

+/*

+ * Licensed to the Apache Software Foundation (ASF) under one or more

+ * contributor license agreements. See the NOTICE file distributed with

+ * this work for additional information regarding copyright ownership.

+ * The ASF licenses this file to You under the Apache License, Version 2.0

+ * (the "License"); you may not use this file except in compliance with

+ * the License. You may obtain a copy of the License at

+ *

+ *

[GitHub] [spark] zhengruifeng commented on pull request #38947: [SPARK-41233][SQL][PYTHON] Add `array_prepend` function

zhengruifeng commented on PR #38947: URL: https://github.com/apache/spark/pull/38947#issuecomment-1465385482 @navinvishy sorry, it seems late for 3.4.0. would you mind changing the version from `3.4.0` to `3.5.0`? I am going to merge this PR to master this week if no more comments. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] zhengruifeng commented on pull request #40376: [SPARK-42756][CONNECT][PYTHON] Helper function to convert proto literal to value in Python Client

zhengruifeng commented on PR #40376: URL: https://github.com/apache/spark/pull/40376#issuecomment-1465436796 > Do we intentionally ignore `YearMonthIntervalType`? it seems that we have not support `YearMonthIntervalType` in vanilla PySpark, and the Python Client is reusing PySpark's types. So it seems that we should add `YearMonthIntervalType` in PySpark first -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] cloud-fan commented on pull request #40359: [SPARK-42740][SQL] Fix the bug that pushdown offset or paging is invalid for some built-in dialect

cloud-fan commented on PR #40359: URL: https://github.com/apache/spark/pull/40359#issuecomment-1465448190 thanks, merged to master! can you open a backport PR for 3.4? -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] cloud-fan commented on a diff in pull request #40262: [SPARK-42651][SQL] Optimize global sort to driver sort

cloud-fan commented on code in PR #40262:

URL: https://github.com/apache/spark/pull/40262#discussion_r1133447713

##

sql/core/src/main/scala/org/apache/spark/sql/execution/DriverSortExec.scala:

##

@@ -0,0 +1,73 @@

+/*

+ * Licensed to the Apache Software Foundation (ASF) under one or more

+ * contributor license agreements. See the NOTICE file distributed with

+ * this work for additional information regarding copyright ownership.

+ * The ASF licenses this file to You under the Apache License, Version 2.0

+ * (the "License"); you may not use this file except in compliance with

+ * the License. You may obtain a copy of the License at

+ *

+ *http://www.apache.org/licenses/LICENSE-2.0

+ *

+ * Unless required by applicable law or agreed to in writing, software

+ * distributed under the License is distributed on an "AS IS" BASIS,

+ * WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

+ * See the License for the specific language governing permissions and

+ * limitations under the License.

+ */

+

+package org.apache.spark.sql.execution

+

+import java.util.concurrent.TimeUnit.NANOSECONDS

+

+import org.apache.spark.rdd.RDD

+import org.apache.spark.sql.catalyst.InternalRow

+import org.apache.spark.sql.catalyst.expressions.{Attribute, NamedExpression,

SortOrder, UnsafeProjection}

+import

org.apache.spark.sql.catalyst.expressions.codegen.LazilyGeneratedOrdering

+import org.apache.spark.sql.catalyst.plans.physical.{Partitioning,

SinglePartition}

+import org.apache.spark.sql.execution.metric.{SQLMetric, SQLMetrics}

+

+/**

+ * A special operator for global [[SortExec]] if it is the root node

with/without [[ProjectExec]].

+ */

+case class DriverSortExec(

Review Comment:

Can we use `TakeOrderedAndProjectExec` to replace it? They seem to be

similar. What if we give a large enough limit to `TakeOrderedAndProjectExec`?

--

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] ulysses-you commented on pull request #40390: [SPARK-42768][SQL] Enable cached plan apply AQE by default

ulysses-you commented on PR #40390: URL: https://github.com/apache/spark/pull/40390#issuecomment-1465534836 thank you @dongjoon-hyun , I'm fine to hold on this until next release. Another thought is I want to make sure all tests can be passed. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] cloud-fan commented on pull request #40314: [SPARK-42698][CORE] SparkSubmit should also stop SparkContext when exit program in yarn mode and pass exitCode to AM side

cloud-fan commented on PR #40314: URL: https://github.com/apache/spark/pull/40314#issuecomment-1465541487 @AngersZh can you comment on the original discussion thread and convince related people to add back `sc.stop`? https://github.com/apache/spark/pull/32081#discussion_r663434289 -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] dongjoon-hyun opened a new pull request, #40387: [SPARK-42764][K8S] Parameterize the max number of attempts for driver props fetcher in KubernetesExecutorBackend

dongjoon-hyun opened a new pull request, #40387: URL: https://github.com/apache/spark/pull/40387 … ### What changes were proposed in this pull request? ### Why are the changes needed? ### Does this PR introduce _any_ user-facing change? ### How was this patch tested? -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] cloud-fan commented on pull request #39624: [SPARK-42101][SQL] Make AQE support InMemoryTableScanExec

cloud-fan commented on PR #39624: URL: https://github.com/apache/spark/pull/39624#issuecomment-1465444732 thanks, merging to master! -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] zhengruifeng commented on a diff in pull request #40375: [SPARK-42755][CONNECT] Factor literal value conversion out to `connect-common`

zhengruifeng commented on code in PR #40375:

URL: https://github.com/apache/spark/pull/40375#discussion_r1133419950

##

connector/connect/server/src/main/scala/org/apache/spark/sql/connect/planner/LiteralExpressionProtoConverter.scala:

##

@@ -192,8 +173,7 @@ object LiteralValueProtoConverter {

} else if (elementType.hasArray) {

makeArrayData(v => toArrayData(v.getArray))

} else {

- throw InvalidPlanInput(s"Unsupported Literal Type: $elementType)")

+ throw new UnsupportedOperationException(s"Unsupported Literal Type:

$elementType)")

Review Comment:

thanks

--

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] cloud-fan commented on a diff in pull request #38947: [SPARK-41233][SQL][PYTHON] Add `array_prepend` function

cloud-fan commented on code in PR #38947:

URL: https://github.com/apache/spark/pull/38947#discussion_r1133450986

##

sql/catalyst/src/main/scala/org/apache/spark/sql/catalyst/expressions/collectionOperations.scala:

##

@@ -1399,6 +1399,151 @@ case class ArrayContains(left: Expression, right:

Expression)

copy(left = newLeft, right = newRight)

}

+// scalastyle:off line.size.limit

+@ExpressionDescription(

+ usage = """

+ _FUNC_(array, element) - Add the element at the beginning of the array

passed as first

+ argument. Type of element should be similar to type of the elements of

the array.

+ Null element is also prepended to the array. But if the array passed is

NULL

+ output is NULL

+""",

+ examples = """

+Examples:

+ > SELECT _FUNC_(array('b', 'd', 'c', 'a'), 'd');

+ ["d","b","d","c","a"]

+ > SELECT _FUNC_(array(1, 2, 3, null), null);

+ [null,1,2,3,null]

+ > SELECT _FUNC_(CAST(null as Array), 2);

+ NULL

+ """,

+ group = "array_funcs",

+ since = "3.5.0")

+case class ArrayPrepend(left: Expression, right: Expression)

+ extends BinaryExpression

+with ImplicitCastInputTypes

+with ComplexTypeMergingExpression

+with QueryErrorsBase {

+

+ override def nullable: Boolean = left.nullable

+

+ @transient protected lazy val elementType: DataType =

+inputTypes.head.asInstanceOf[ArrayType].elementType

+

+ override def eval(input: InternalRow): Any = {

+val value1 = left.eval(input)

+if (value1 == null) {

+ null

+} else {

+ val value2 = right.eval(input)

+ nullSafeEval(value1, value2)

+}

+ }

+ override def nullSafeEval(arr: Any, elementData: Any): Any = {

+val arrayData = arr.asInstanceOf[ArrayData]

+val numberOfElements = arrayData.numElements() + 1

+if (numberOfElements > ByteArrayMethods.MAX_ROUNDED_ARRAY_LENGTH) {

+ throw

QueryExecutionErrors.concatArraysWithElementsExceedLimitError(numberOfElements)

+}

+val finalData = new Array[Any](numberOfElements)

+finalData.update(0, elementData)

+arrayData.foreach(elementType, (i: Int, v: Any) => finalData.update(i + 1,

v))

+new GenericArrayData(finalData)

+ }

+ override protected def doGenCode(ctx: CodegenContext, ev: ExprCode):

ExprCode = {

+val leftGen = left.genCode(ctx)

+val rightGen = right.genCode(ctx)

+val f = (arr: String, value: String) => {

+ val newArraySize = ctx.freshName("newArraySize")

+ val newArray = ctx.freshName("newArray")

+ val i = ctx.freshName("i")

+ val iPlus1 = s"$i+1"

+ val zero = "0"

+ val allocation = CodeGenerator.createArrayData(

+newArray,

+elementType,

+newArraySize,

Review Comment:

do we really need this variable? can we just pass `s"$arr.numElements() +

1"`?

--

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] xinrong-meng commented on pull request #40376: [SPARK-42756][CONNECT][PYTHON] Helper function to convert proto literal to value in Python Client

xinrong-meng commented on PR #40376: URL: https://github.com/apache/spark/pull/40376#issuecomment-1465422914 Do we intentionally ignore `YearMonthIntervalType`? -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] cloud-fan closed pull request #39624: [SPARK-42101][SQL] Make AQE support InMemoryTableScanExec

cloud-fan closed pull request #39624: [SPARK-42101][SQL] Make AQE support InMemoryTableScanExec URL: https://github.com/apache/spark/pull/39624 -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] beliefer commented on pull request #40359: [SPARK-42740][SQL] Fix the bug that pushdown offset or paging is invalid for some built-in dialect

beliefer commented on PR #40359: URL: https://github.com/apache/spark/pull/40359#issuecomment-1465460479 > thanks, merged to master! can you open a backport PR for 3.4? Thank you! I will create it. -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] zhengruifeng commented on pull request #40372: [SPARK-42752][PYSPARK][SQL] Make PySpark exceptions printable during initialization

zhengruifeng commented on PR #40372: URL: https://github.com/apache/spark/pull/40372#issuecomment-1465459996 cc @itholic @HyukjinKwon -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] beliefer commented on a diff in pull request #40375: [SPARK-42755][CONNECT] Factor literal value conversion out to `connect-common`

beliefer commented on code in PR #40375:

URL: https://github.com/apache/spark/pull/40375#discussion_r1133416489

##

connector/connect/server/src/main/scala/org/apache/spark/sql/connect/planner/LiteralExpressionProtoConverter.scala:

##

@@ -192,8 +173,7 @@ object LiteralValueProtoConverter {

} else if (elementType.hasArray) {

makeArrayData(v => toArrayData(v.getArray))

} else {

- throw InvalidPlanInput(s"Unsupported Literal Type: $elementType)")

+ throw new UnsupportedOperationException(s"Unsupported Literal Type:

$elementType)")

Review Comment:

ditto

--

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] dongjoon-hyun commented on a diff in pull request #40387: [SPARK-42764][K8S] Parameterize the max number of attempts for driver props fetcher in KubernetesExecutorBackend

dongjoon-hyun commented on code in PR #40387:

URL: https://github.com/apache/spark/pull/40387#discussion_r1133444982

##

resource-managers/kubernetes/core/src/main/scala/org/apache/spark/scheduler/cluster/k8s/KubernetesExecutorBackend.scala:

##

@@ -82,7 +82,7 @@ private[spark] object KubernetesExecutorBackend extends

Logging {

clientMode = true)

var driver: RpcEndpointRef = null

- val nTries = 3

+ val nTries =

sys.env.getOrElse("EXECUTOR_DRIVER_PROPS_FETCHER_MAX_ATTEMPTS", 3).toInt

Review Comment:

Thank you for review. This is an internal parameter like `.internal` config.

However, this code path is invoked to get `SparkConf`. So, we cannot use

`SparkConf` value. We may document this env variable later in some debug

section.

--

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] ulysses-you commented on pull request #39037: [SPARK-41214][SQL] Fix AQE cache does not update plan and metrics

ulysses-you commented on PR #39037: URL: https://github.com/apache/spark/pull/39037#issuecomment-1465513263 close this in favor of https://github.com/apache/spark/pull/39624 -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] ulysses-you closed pull request #39037: [SPARK-41214][SQL] Fix AQE cache does not update plan and metrics

ulysses-you closed pull request #39037: [SPARK-41214][SQL] Fix AQE cache does not update plan and metrics URL: https://github.com/apache/spark/pull/39037 -- This is an automated message from the Apache Git Service. To respond to the message, please log on to GitHub and use the URL above to go to the specific comment. To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For queries about this service, please contact Infrastructure at: us...@infra.apache.org - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] cloud-fan commented on a diff in pull request #38947: [SPARK-41233][SQL][PYTHON] Add `array_prepend` function