Elphel Development Blog

July 21, 2018 1:32 PM

You have subscribed to these e-mail notices about new posts to the blog.

If you want to change your settings or unsubscribe please visit:

https://blog.elphel.com/post_notification_header/?code=cd9ad4791210eef4735d43f52cc21dd2&addr=support-list%40support.elphel.com&

CVPR 2018 - from Elphel's perspective

In this blog article we recall the most interesting results of Elphel's participation at the CVPR 2018 Expo: the conversations we had with visitors at the booth, the frequently asked and the unusual questions, and what we learned from them.

Day One: The best show ever!

While we are standing nervously at our booth, thinking: “Is there going to be any interest? Will people come, will they ask questions?”, the first poster session starts and a wave of visitors floods the exhibition floor. Our first guest at the booth spends 30 minutes, knowledgeably inquiring about Elphel’s long-range 3D technology, and leaves his business card, saying that he is very impressed. This was a good start to a very busy day full of technical discussions. CVPR is the first exhibition we have participated in where we did not have any problems explaining our projects.

The most common questions that were asked:

Q: Why does your camera have 4 lenses?

A: With 4 lenses we have 4 stereo-pairs: the horizontal stereo-pair is responsible for vertical features (it is the same as in a regular stereo camera); the vertical pair detects horizontal features, while the 2 diagonal pairs ensure that almost any edge is detected. (Elphel Presentation, p.8).

Q: Why don’t you use lidars?

A: Lidars are not capable of long-range distance measurements. The practical maximum range of

Day 2: Neural network focus.

Our current results:

- 500 meters, high resolution, 3D reconstruction with 5% accuracy with 150mm stereo-base (quad-stereo camera), passive (image-based)

- 2000 meters with 5% accuracy with 2 quad-stereo cameras based at 1256 mm from each other

- 3D-Reconstruction is done in post-processing with software simulated for FPGA porting. With FPGA – 12fps in 3D

- Train the neural network with the current 3D-image sets to achieve same results as in (2) – 2000 meter 3D reconstruction, with just one quad-sensor camera

- Port software to FPGA to achieve real-time 3D reconstruction (12 fps)

- Manufacture custom ASIC for 3D-reconstruction to reconstruct 3D scenes on the fly with video frame rate. The small size of the custom chip will allow us to mass produce a long-range 3D reconstruction camera for industrial and commercial purposes. Smaller form-factor, inexpensive, and fast.

- Scalable design: a smaller stereo-base with 4 sensors – phone application (200 meter accurate 3D reconstruction for consumer applications); larger stereo-base: extremely long range passive 3D reconstruction – military applications, drone applications, etc.
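The range figures in the list above can be related to the stereo base with a back-of-the-envelope calculation. The sketch below is not Elphel's published method: it assumes the textbook small-angle stereo model, in which the disparity angle is roughly B / Z for a base B and range Z, so the relative range error is Z * dtheta / B. The function names `angular_resolution` and `range_for_error` are hypothetical and used only for illustration.

```python
def angular_resolution(range_m, baseline_m, rel_error):
    """Disparity resolution (radians) implied by a relative range error.

    Assumed small-angle stereo model: theta ~ B / Z, so
    dZ / Z = Z * dtheta / B, which gives dtheta = rel_error * B / Z.
    """
    return rel_error * baseline_m / range_m


def range_for_error(baseline_m, dtheta_rad, rel_error):
    """Range (meters) at which the relative error reaches rel_error."""
    return rel_error * baseline_m / dtheta_rad


# Figures quoted in the list: 5% at 500 m with a 150 mm base,
# and 5% at 2000 m with two cameras 1256 mm apart.
quad = angular_resolution(500.0, 0.150, 0.05)
dual = angular_resolution(2000.0, 1.256, 0.05)

print(f"quad camera, 150 mm base: {quad:.3e} rad")
print(f"two cameras, 1256 mm base: {dual:.3e} rad")
```

Under this simplified model, the range reachable at a fixed relative error grows linearly with the stereo base, which is the reasoning behind the scalable-design item in the list.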

CNNs are not efficient for real-time, high-resolution 3D image processing.

Elphel’s approach to 3D reconstruction with the help of a neural network is different from the mainstream one. This is partially due to our expertise in building calibrated hardware, which already produces robust 3D measurements. Therefore we see the value in pre-processing the images before feeding them to the NN, similar to the eye pre-processing visual information before sending it to the brain. The human eye receives 150 MPix of data, processes it on the retina, and sends only 1 MPix to the brain – a 150-fold reduction.

One question came up twice, both times from conference attendees, about the difficulty the NN has in processing high-resolution images. The current approach to NN training recommends using low-resolution raw images (640×480), because the NN has to be trained on a large number of images (thousands). The last 6 slides of Elphel’s presentation talk about augmenting the NN with Elphel’s high-performance Tile Processor, which significantly reduces the amount of data while leaving the “decision making” to the network. We argue that the End-to-End approach is less efficient for high-resolution image processing than an NN augmented with training-tree, linear image processing.

Elphel training sets for Neural Network:

Elphel offers unique image sets for neural network training. These are high-resolution, space-invariant data sets. Elphel is seeking collaboration with machine learning research teams working in the area of ML applications for 3D object classification, localization and tracking.

Day 3: Autonomous Vehicle Focus:

The exhibition is slowing down. Some booths start packing at midday. Elphel, however, is busier

- Researcher from Apple: “You have the most unique technology on this Expo!”

- Autonomous Vehicle company A: “I cannot believe you can accurately measure distances at 500 meters with just a small base of 150mm? With just 5-10% error? It is simply impossible! I have to come see it!” After visiting our booth and seeing the demo, he asks: “Can you demonstrate your technology on the car? It has to be in Singapore!”

- Autonomous Vehicle company B: “We are happy with the use of the Lidars on the city streets, with close range perception and 30 mph driving speed. But on the highway, where the speed is much higher and the distance much farther, the Lidar’s output is too sparse. Passive, long-range 3D reconstruction would be a perfect solution.”

- Autonomous Vehicle company C: “How dense is your 3D scene? Oh, it is dense, then we can use it.”

- Autonomous Vehicle company D: “We are building the perception solution for self-driving trucks. We need long-range distance measurement.”

- Autonomous Vehicle company E: “We have 16 different types of sensors on the car right now. I don’t think we have room to put another camera. Besides, the area behind the windshield is very valuable, we already have 4 cameras there – there is no room. By the way, we re-evaluate our approach every 6 months, and we just did that, so let’s, maybe, talk in 6 months again?” Interesting thought – why would anyone need an unobstructed windshield in a self-driving car? They did not explain that.

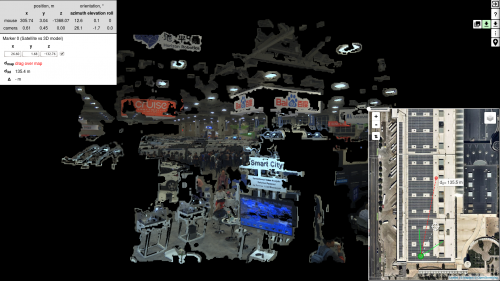

View of the CVPR 2018 Expo “From Elphel Perspective”:

One last thing to do before the show is over is to get the 3D-X-Cam up high and build the 3D model of the CVPR 2018 EXPO in “real-time”!