Gollum999 opened a new issue, #26069: URL: https://github.com/apache/airflow/issues/26069

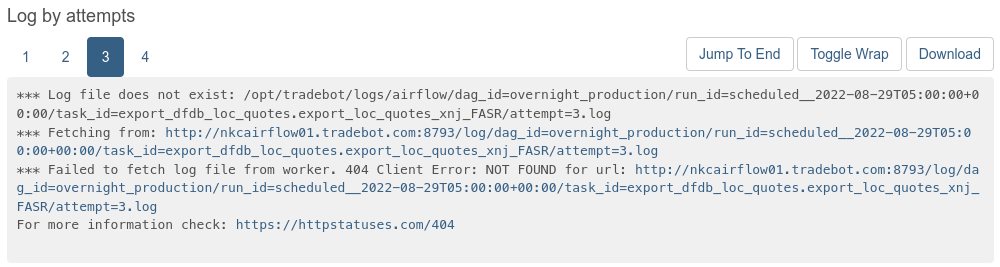

### Apache Airflow version

Other Airflow 2 version

### What happened

If a task gets scheduled on a different worker host from a previous run, logs from that previous run will be unavailable from the webserver.

From my observation, it's pretty clear that this is happening because Airflow is looking for logs at the _most recent_ hostname, not at the hostname that hosted the historical run. You can watch this happen "live" with the example DAG below: each time the task retries on a different worker, the URL that it attempts to use for historical logs changes to use the most recent hostname.

### What you think should happen instead

All previous logs should be available from the webserver (as long as the log file still exists on disk).

### How to reproduce

```
#!/usr/bin/env python3
import datetime
import logging
import socket

from airflow.decorators import dag, task

logger = logging.getLogger(__name__)


@dag(
    schedule_interval='@daily',
    start_date=datetime.datetime(2022, 8, 30),
    default_args={
        'retries': 9,
        'retry_delay': 10.0,
    },
)
def test_logs():
    @task
    def log():
        logger.info(f'running from {socket.gethostname()}')
        raise RuntimeError('force fail')

    log()


dag = test_logs()

if __name__ == '__main__':
    dag.cli()
```

### Operating System

CentOS Stream 8

### Versions of Apache Airflow Providers

None

### Deployment

Other

### Deployment details

Airflow v2.3.3 Self-hosted Postgres DB backend CeleryExecutor

### Anything else

Possibly related:

* #13483
* #561

### Are you willing to submit PR?

- [ ] Yes I am willing to submit a PR!

### Code of Conduct

- [X] I agree to follow this project's [Code of Conduct](https://github.com/apache/airflow/blob/main/CODE_OF_CONDUCT.md)
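
### Illustration of the suspected cause

The behavior described under "What happened" can be illustrated with a toy model. This is only a sketch under stated assumptions, not Airflow's actual code: `ToyTaskInstance`, `hostname_by_try`, both URL helper functions, and the `attempt=N.log` path are hypothetical names made up for this example. Port 8793 is assumed to be the worker log server port (the Airflow default). The sketch contrasts building a log URL from a single hostname field that is overwritten on every try with building it from a hostname recorded per try.

```python
from dataclasses import dataclass, field
from typing import Dict


@dataclass
class ToyTaskInstance:
    """Toy stand-in for a task instance. NOT Airflow's real model."""

    hostname: str = ""  # single field, overwritten on every try
    try_number: int = 0
    # Hypothetical per-try record; nothing in the report says Airflow stores this.
    hostname_by_try: Dict[int, str] = field(default_factory=dict)

    def run_on(self, host: str) -> None:
        """Simulate one try of the task running on the given worker host."""
        self.try_number += 1
        self.hostname = host
        self.hostname_by_try[self.try_number] = host


def log_url_latest_host(ti: ToyTaskInstance, try_number: int) -> str:
    # Suspected behavior: the most recent hostname is used for every try.
    return f"http://{ti.hostname}:8793/log/attempt={try_number}.log"


def log_url_per_try_host(ti: ToyTaskInstance, try_number: int) -> str:
    # Expected behavior: use the host that actually ran the requested try.
    return f"http://{ti.hostname_by_try[try_number]}:8793/log/attempt={try_number}.log"


ti = ToyTaskInstance()
ti.run_on("worker-a")  # try 1
ti.run_on("worker-b")  # try 2, scheduled on a different worker

print(log_url_latest_host(ti, 1))   # http://worker-b:8793/log/attempt=1.log
print(log_url_per_try_host(ti, 1))  # http://worker-a:8793/log/attempt=1.log
```

In this model the first lookup points at `worker-b`, which never ran try 1, matching the symptom where historical logs become unavailable once a retry lands on a different worker.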