JkSelf commented on pull request #28954: URL: https://github.com/apache/spark/pull/28954#issuecomment-652302783

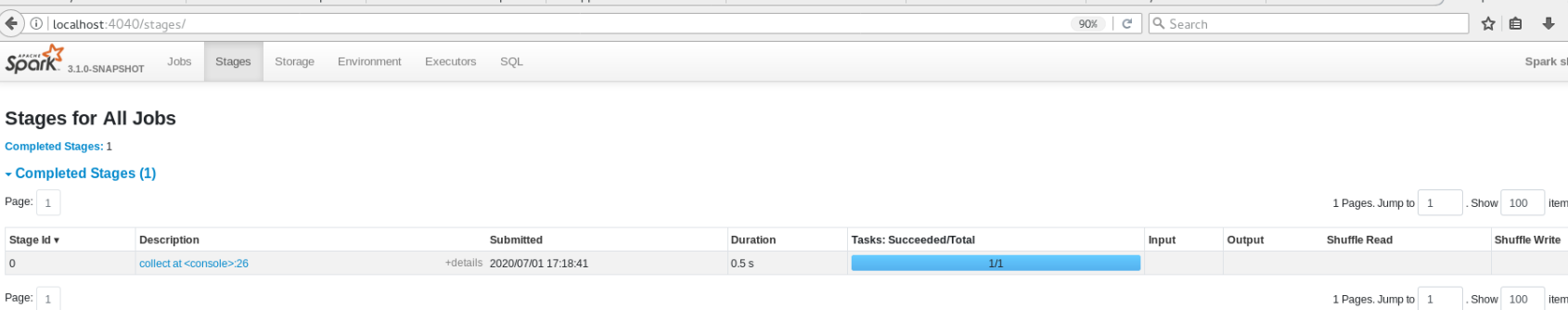

@manuzhang It seems there is still only one stage, and no unnecessary tasks are launched for empty partitions.

Related code:

```scala
// spark-shell imports this automatically; a standalone app needs it for the 'id symbol syntax.
import spark.implicits._

spark.conf.set("spark.sql.adaptive.enabled", "true")
// Disable broadcast joins so the join is planned as a shuffle-based join.
spark.conf.set("spark.sql.autoBroadcastJoinThreshold", "-1")

val df1 = spark.range(10).withColumn("a", 'id)
val df2 = spark.range(10).withColumn("b", 'id)
// ids are 0..9, so both filters remove every row and the shuffle inputs are empty.
val result = df1.where('a > 10).join(df2.where('b > 10), "id").groupBy('a).count()
result.collect()
```