[GitHub] [hadoop] hadoop-yetus commented on pull request #4488: HDFS-16640. RBF: Show datanode IP list when click DN histogram in Router

hadoop-yetus commented on PR #4488:

URL: https://github.com/apache/hadoop/pull/4488#issuecomment-1165211795

:confetti_ball: **+1 overall**

| Vote | Subsystem | Runtime | Logfile | Comment |
|:----:|----------:|--------:|:--------:|:-------:|
| +0 :ok: | reexec | 0m 52s | | Docker mode activated. |
|||| _ Prechecks _ |
| +1 :green_heart: | dupname | 0m 0s | | No case conflicting files found. |
| +0 :ok: | codespell | 0m 0s | | codespell was not available. |
| +0 :ok: | detsecrets | 0m 0s | | detect-secrets was not available. |
| +0 :ok: | xmllint | 0m 0s | | xmllint was not available. |
| +0 :ok: | jshint | 0m 0s | | jshint was not available. |
| +1 :green_heart: | @author | 0m 0s | | The patch does not contain any @author tags. |
|||| _ trunk Compile Tests _ |
| +0 :ok: | mvndep | 15m 6s | | Maven dependency ordering for branch |
| +1 :green_heart: | mvninstall | 28m 16s | | trunk passed |
| +1 :green_heart: | shadedclient | 66m 3s | | branch has no errors when building and testing our client artifacts. |
|||| _ Patch Compile Tests _ |
| +0 :ok: | mvndep | 0m 24s | | Maven dependency ordering for patch |
| +1 :green_heart: | mvninstall | 2m 2s | | the patch passed |
| +1 :green_heart: | blanks | 0m 0s | | The patch has no blanks issues. |
| +1 :green_heart: | shadedclient | 22m 1s | | patch has no errors when building and testing our client artifacts. |
|||| _ Other Tests _ |
| +1 :green_heart: | asflicense | 0m 41s | | The patch does not generate ASF License warnings. |
| | | 93m 53s | | |

| Subsystem | Report/Notes |
|----------:|:-------------|
| Docker | ClientAPI=1.41 ServerAPI=1.41 base: https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4488/3/artifact/out/Dockerfile |
| GITHUB PR | https://github.com/apache/hadoop/pull/4488 |
| Optional Tests | dupname asflicense shadedclient codespell detsecrets xmllint jshint |
| uname | Linux f4567394809c 4.15.0-175-generic #184-Ubuntu SMP Thu Mar 24 17:48:36 UTC 2022 x86_64 x86_64 x86_64 GNU/Linux |
| Build tool | maven |
| Personality | dev-support/bin/hadoop.sh |
| git revision | trunk / 550cf7145ea36948de7ada1e48f3c534d1760850 |
| Max. process+thread count | 578 (vs. ulimit of 5500) |
| modules | C: hadoop-hdfs-project/hadoop-hdfs hadoop-hdfs-project/hadoop-hdfs-rbf U: hadoop-hdfs-project |
| Console output | https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4488/3/console |
| versions | git=2.25.1 maven=3.6.3 |
| Powered by | Apache Yetus 0.14.0 https://yetus.apache.org |

This message was automatically generated.

--

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

To unsubscribe, e-mail: common-issues-unsubscr...@hadoop.apache.org

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

-

To unsubscribe, e-mail: common-issues-unsubscr...@hadoop.apache.org

For additional commands, e-mail: common-issues-h...@hadoop.apache.org

[GitHub] [hadoop] hadoop-yetus commented on pull request #4488: HDFS-16640. RBF: Show datanode IP list when click DN histogram in Router

hadoop-yetus commented on PR #4488:

URL: https://github.com/apache/hadoop/pull/4488#issuecomment-1165207837

:confetti_ball: **+1 overall**

| Vote | Subsystem | Runtime | Logfile | Comment |
|:----:|----------:|--------:|:--------:|:-------:|
| +0 :ok: | reexec | 0m 46s | | Docker mode activated. |
|||| _ Prechecks _ |
| +1 :green_heart: | dupname | 0m 0s | | No case conflicting files found. |
| +0 :ok: | codespell | 0m 0s | | codespell was not available. |
| +0 :ok: | detsecrets | 0m 0s | | detect-secrets was not available. |
| +0 :ok: | xmllint | 0m 0s | | xmllint was not available. |
| +0 :ok: | jshint | 0m 0s | | jshint was not available. |
| +1 :green_heart: | @author | 0m 0s | | The patch does not contain any @author tags. |
|||| _ trunk Compile Tests _ |
| +0 :ok: | mvndep | 15m 21s | | Maven dependency ordering for branch |
| +1 :green_heart: | mvninstall | 27m 0s | | trunk passed |
| +1 :green_heart: | shadedclient | 62m 4s | | branch has no errors when building and testing our client artifacts. |
|||| _ Patch Compile Tests _ |
| +0 :ok: | mvndep | 0m 32s | | Maven dependency ordering for patch |
| +1 :green_heart: | mvninstall | 2m 3s | | the patch passed |
| +1 :green_heart: | blanks | 0m 0s | | The patch has no blanks issues. |
| +1 :green_heart: | shadedclient | 19m 21s | | patch has no errors when building and testing our client artifacts. |
|||| _ Other Tests _ |
| +1 :green_heart: | asflicense | 0m 51s | | The patch does not generate ASF License warnings. |
| | | 87m 35s | | |

| Subsystem | Report/Notes |
|----------:|:-------------|
| Docker | ClientAPI=1.41 ServerAPI=1.41 base: https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4488/2/artifact/out/Dockerfile |
| GITHUB PR | https://github.com/apache/hadoop/pull/4488 |
| Optional Tests | dupname asflicense shadedclient codespell detsecrets xmllint jshint |
| uname | Linux bbd2d63a1905 4.15.0-169-generic #177-Ubuntu SMP Thu Feb 3 10:50:38 UTC 2022 x86_64 x86_64 x86_64 GNU/Linux |
| Build tool | maven |
| Personality | dev-support/bin/hadoop.sh |
| git revision | trunk / 550cf7145ea36948de7ada1e48f3c534d1760850 |
| Max. process+thread count | 558 (vs. ulimit of 5500) |
| modules | C: hadoop-hdfs-project/hadoop-hdfs hadoop-hdfs-project/hadoop-hdfs-rbf U: hadoop-hdfs-project |
| Console output | https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4488/2/console |
| versions | git=2.25.1 maven=3.6.3 |
| Powered by | Apache Yetus 0.14.0 https://yetus.apache.org |

This message was automatically generated.


[GitHub] [hadoop] wzhallright commented on pull request #4488: HDFS-16640. RBF: Show datanode IP list when click DN histogram in Router

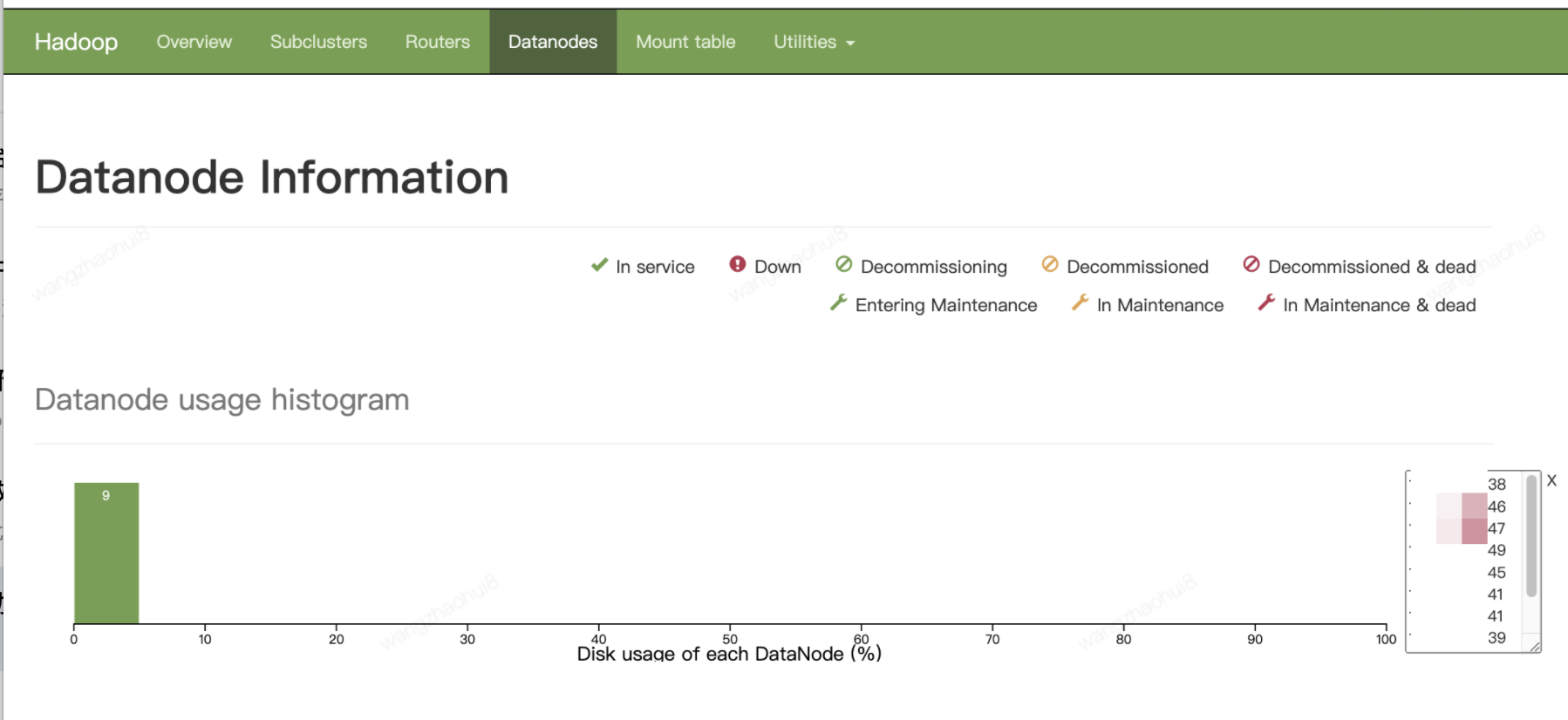

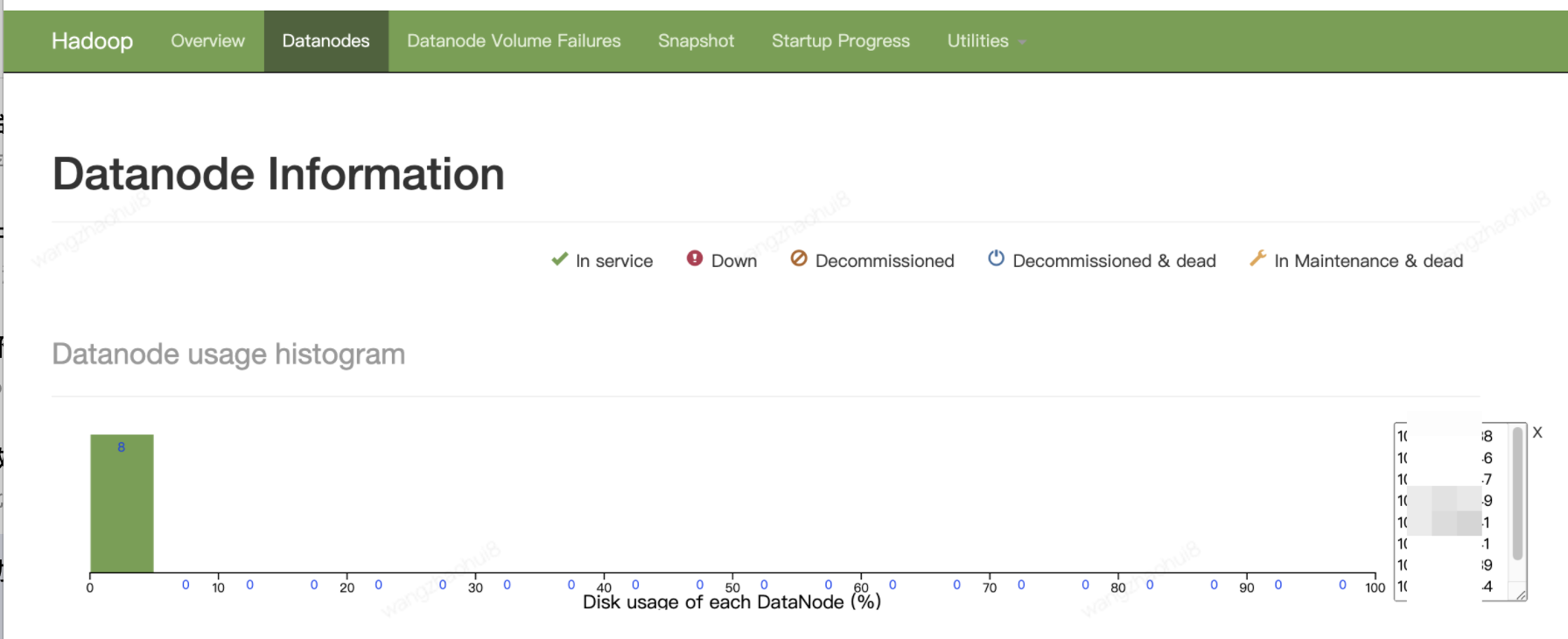

wzhallright commented on PR #4488:

URL: https://github.com/apache/hadoop/pull/4488#issuecomment-1165167793

@ayushtkn Added histogram-hostip.js; the screenshots were taken after the change. Please take a look, thanks!

Screenshots: RBF, NN.

[jira] [Work logged] (HADOOP-18044) Hadoop - Upgrade to JQuery 3.6.0

[

https://issues.apache.org/jira/browse/HADOOP-18044?focusedWorklogId=784405=com.atlassian.jira.plugin.system.issuetabpanels:worklog-tabpanel#worklog-784405

]

ASF GitHub Bot logged work on HADOOP-18044:

---

Author: ASF GitHub Bot

Created on: 24/Jun/22 03:26

Start Date: 24/Jun/22 03:26

Worklog Time Spent: 10m

Work Description: hadoop-yetus commented on PR #4495:

URL: https://github.com/apache/hadoop/pull/4495#issuecomment-1165155367

:broken_heart: **-1 overall**

| Vote | Subsystem | Runtime | Logfile | Comment |
|:----:|----------:|--------:|:--------:|:-------:|
| +0 :ok: | reexec | 10m 55s | | Docker mode activated. |
|||| _ Prechecks _ |
| +1 :green_heart: | dupname | 0m 0s | | No case conflicting files found. |
| +0 :ok: | codespell | 0m 0s | | codespell was not available. |
| +0 :ok: | jshint | 0m 0s | | jshint was not available. |
| +0 :ok: | shelldocs | 0m 1s | | Shelldocs was not available. |
| +1 :green_heart: | @author | 0m 0s | | The patch does not contain any @author tags. |
| -1 :x: | test4tests | 0m 0s | | The patch doesn't appear to include any new or modified tests. Please justify why no new tests are needed for this patch. Also please list what manual steps were performed to verify this patch. |
|||| _ branch-3.3.4 Compile Tests _ |
| +0 :ok: | mvndep | 5m 0s | | Maven dependency ordering for branch |
| +1 :green_heart: | mvninstall | 31m 23s | | branch-3.3.4 passed |
| +1 :green_heart: | compile | 18m 8s | | branch-3.3.4 passed |
| +1 :green_heart: | mvnsite | 25m 7s | | branch-3.3.4 passed |
| +1 :green_heart: | javadoc | 8m 12s | | branch-3.3.4 passed |
| +1 :green_heart: | shadedclient | 31m 15s | | branch has no errors when building and testing our client artifacts. |
|||| _ Patch Compile Tests _ |
| +0 :ok: | mvndep | 0m 34s | | Maven dependency ordering for patch |
| +1 :green_heart: | mvninstall | 24m 35s | | the patch passed |
| +1 :green_heart: | compile | 17m 23s | | the patch passed |
| +1 :green_heart: | javac | 17m 23s | | the patch passed |
| +1 :green_heart: | blanks | 0m 0s | | The patch has no blanks issues. |
| +1 :green_heart: | mvnsite | 20m 54s | | the patch passed |
| +1 :green_heart: | shellcheck | 0m 0s | | No new issues. |
| +1 :green_heart: | xml | 0m 2s | | The patch has no ill-formed XML file. |
| +1 :green_heart: | javadoc | 8m 3s | | the patch passed |
| +1 :green_heart: | shadedclient | 32m 48s | | patch has no errors when building and testing our client artifacts. |
|||| _ Other Tests _ |
| -1 :x: | unit | 749m 42s | [/patch-unit-root.txt](https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4495/1/artifact/out/patch-unit-root.txt) | root in the patch passed. |
| +1 :green_heart: | asflicense | 2m 53s | | The patch does not generate ASF License warnings. |
| | | 973m 36s | | |

| Reason | Tests |
|-------:|:------|
| Failed junit tests | hadoop.yarn.sls.TestReservationSystemInvariants |
| | hadoop.hdfs.server.namenode.ha.TestHAAppend |
| | hadoop.hdfs.server.datanode.TestBPOfferService |
| | hadoop.yarn.csi.client.TestCsiClient |
| | hadoop.yarn.server.resourcemanager.TestRMEmbeddedElector |

| Subsystem | Report/Notes |
|----------:|:-------------|
| Docker | ClientAPI=1.41 ServerAPI=1.41 base: https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4495/1/artifact/out/Dockerfile |
| GITHUB PR | https://github.com/apache/hadoop/pull/4495 |
| Optional Tests | dupname asflicense compile javac javadoc mvninstall mvnsite unit shadedclient codespell xml jshint shellcheck shelldocs |
| uname | Linux 1da82b6adfcb 4.15.0-65-generic #74-Ubuntu SMP Tue Sep 17 17:06:04 UTC 2019 x86_64 x86_64 x86_64 GNU/Linux |
| Build tool | maven |
| Personality | dev-support/bin/hadoop.sh |
| git revision | branch-3.3.4 / 4c22960836eebe082c01ea2545f9cea5eeb06526 |
| Default Java | Private Build-1.8.0_312-8u312-b07-0ubuntu1~18.04-b07 |
| Test Results | https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4495/1/testReport/ |
| Max. process+thread count | 2741 (vs. ulimit of 5500) |
| modules | C: hadoop-hdfs-project/hadoop-hdfs hadoop-hdfs-project/hadoop-hdfs-rbf hadoop-yarn-project/hadoop-yarn/hadoop-yarn-common . U: . |
| Console output | https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4495/1/console |
| versions | git=2.17.1 maven=3.6.0 shellcheck=0.4.6 |
| Powered by | Apache Yetus 0.14.0-SNAPSHOT https://yetus.apache.org |

This message was automatically generated.

Issue Time Tracking

---

Worklog Id:

[GitHub] [hadoop] hadoop-yetus commented on pull request #4495: HADOOP-18044. Hadoop - Upgrade to jQuery 3.6.0 (#3791)

hadoop-yetus commented on PR #4495:

URL: https://github.com/apache/hadoop/pull/4495#issuecomment-1165155367


[GitHub] [hadoop] hadoop-yetus commented on pull request #4406: HDFS-16619. Fix HttpHeaders.Values And HttpHeaders.Names Deprecated Import

hadoop-yetus commented on PR #4406:

URL: https://github.com/apache/hadoop/pull/4406#issuecomment-1165151975

:confetti_ball: **+1 overall**

| Vote | Subsystem | Runtime | Logfile | Comment |
|:----:|----------:|--------:|:--------:|:-------:|
| +0 :ok: | reexec | 0m 46s | | Docker mode activated. |
|||| _ Prechecks _ |
| +1 :green_heart: | dupname | 0m 1s | | No case conflicting files found. |
| +0 :ok: | codespell | 0m 0s | | codespell was not available. |
| +0 :ok: | detsecrets | 0m 0s | | detect-secrets was not available. |
| +1 :green_heart: | @author | 0m 0s | | The patch does not contain any @author tags. |
| +1 :green_heart: | test4tests | 0m 0s | | The patch appears to include 2 new or modified test files. |
|||| _ trunk Compile Tests _ |
| +1 :green_heart: | mvninstall | 37m 41s | | trunk passed |
| +1 :green_heart: | compile | 1m 38s | | trunk passed with JDK Private Build-11.0.15+10-Ubuntu-0ubuntu0.20.04.1 |
| +1 :green_heart: | compile | 1m 35s | | trunk passed with JDK Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| +1 :green_heart: | checkstyle | 1m 25s | | trunk passed |
| +1 :green_heart: | mvnsite | 1m 45s | | trunk passed |
| +1 :green_heart: | javadoc | 1m 24s | | trunk passed with JDK Private Build-11.0.15+10-Ubuntu-0ubuntu0.20.04.1 |
| +1 :green_heart: | javadoc | 1m 50s | | trunk passed with JDK Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| +1 :green_heart: | spotbugs | 3m 41s | | trunk passed |
| +1 :green_heart: | shadedclient | 23m 2s | | branch has no errors when building and testing our client artifacts. |
| -0 :warning: | patch | 23m 29s | | Used diff version of patch file. Binary files and potentially other changes not applied. Please rebase and squash commits if necessary. |
|||| _ Patch Compile Tests _ |
| +1 :green_heart: | mvninstall | 1m 23s | | the patch passed |
| +1 :green_heart: | compile | 1m 28s | | the patch passed with JDK Private Build-11.0.15+10-Ubuntu-0ubuntu0.20.04.1 |
| +1 :green_heart: | javac | 1m 28s | | hadoop-hdfs-project_hadoop-hdfs-jdkPrivateBuild-11.0.15+10-Ubuntu-0ubuntu0.20.04.1 with JDK Private Build-11.0.15+10-Ubuntu-0ubuntu0.20.04.1 generated 0 new + 911 unchanged - 26 fixed = 911 total (was 937) |
| +1 :green_heart: | compile | 1m 21s | | the patch passed with JDK Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| +1 :green_heart: | javac | 1m 21s | | hadoop-hdfs-project_hadoop-hdfs-jdkPrivateBuild-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 with JDK Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 generated 0 new + 890 unchanged - 26 fixed = 890 total (was 916) |
| +1 :green_heart: | blanks | 0m 0s | | The patch has no blanks issues. |
| +1 :green_heart: | checkstyle | 1m 0s | | the patch passed |
| +1 :green_heart: | mvnsite | 1m 23s | | the patch passed |
| +1 :green_heart: | javadoc | 0m 59s | | the patch passed with JDK Private Build-11.0.15+10-Ubuntu-0ubuntu0.20.04.1 |
| +1 :green_heart: | javadoc | 1m 30s | | the patch passed with JDK Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| +1 :green_heart: | spotbugs | 3m 20s | | the patch passed |
| +1 :green_heart: | shadedclient | 22m 28s | | patch has no errors when building and testing our client artifacts. |
|||| _ Other Tests _ |
| +1 :green_heart: | unit | 236m 26s | | hadoop-hdfs in the patch passed. |
| +1 :green_heart: | asflicense | 1m 16s | | The patch does not generate ASF License warnings. |
| | | 345m 49s | | |

| Subsystem | Report/Notes |
|----------:|:-------------|
| Docker | ClientAPI=1.41 ServerAPI=1.41 base: https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4406/10/artifact/out/Dockerfile |
| GITHUB PR | https://github.com/apache/hadoop/pull/4406 |
| Optional Tests | dupname asflicense compile javac javadoc mvninstall mvnsite unit shadedclient spotbugs checkstyle codespell detsecrets |
| uname | Linux 743797d72837 4.15.0-112-generic #113-Ubuntu SMP Thu Jul 9 23:41:39 UTC 2020 x86_64 x86_64 x86_64 GNU/Linux |
| Build tool | maven |
| Personality | dev-support/bin/hadoop.sh |
| git revision | trunk / 38082b886a768a6834f85ef5114a96969b2ed1d8 |
| Default Java | Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| Multi-JDK versions | /usr/lib/jvm/java-11-openjdk-amd64:Private Build-11.0.15+10-Ubuntu-0ubuntu0.20.04.1 /usr/lib/jvm/java-8-openjdk-amd64:Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| Test Results | https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4406/10/testReport/ |
| Max. process+thread count | 3523 (vs. ulimit of 5500) |

| modules | C:

[GitHub] [hadoop] wzhallright commented on pull request #4488: HDFS-16640. RBF: Show datanode IP list when click DN histogram in Router

wzhallright commented on PR #4488:

URL: https://github.com/apache/hadoop/pull/4488#issuecomment-1165134491

> I'm not good at JS... But I can try to modify

[jira] [Work logged] (HADOOP-18303) Remove shading exclusion of javax.ws.rs-api from hadoop-client-runtime

[ https://issues.apache.org/jira/browse/HADOOP-18303?focusedWorklogId=784395=com.atlassian.jira.plugin.system.issuetabpanels:worklog-tabpanel#worklog-784395 ]

ASF GitHub Bot logged work on HADOOP-18303:
---

Author: ASF GitHub Bot
Created on: 24/Jun/22 02:13
Start Date: 24/Jun/22 02:13
Worklog Time Spent: 10m
Work Description: virajjasani commented on PR #4461:
URL: https://github.com/apache/hadoop/pull/4461#issuecomment-1165109458

Even if HADOOP-18033 had not excluded javax.ws.rs-api from shading, as [mentioned here](https://github.com/apache/hadoop/pull/4461#pullrequestreview-1012678699), we would have caused subtle problems anyway by having only one version of each common class in the final client jar (as opposed to having different classes for both javax.ws.rs-api and jsr311-api).

Issue Time Tracking
---

Worklog Id: (was: 784395)
Time Spent: 3h 10m (was: 3h)

> Remove shading exclusion of javax.ws.rs-api from hadoop-client-runtime
> --
>
> Key: HADOOP-18303
> URL: https://issues.apache.org/jira/browse/HADOOP-18303
> Project: Hadoop Common
> Issue Type: Bug
> Reporter: Viraj Jasani
> Assignee: Viraj Jasani
> Priority: Critical
> Labels: pull-request-available
> Time Spent: 3h 10m
> Remaining Estimate: 0h
>
> As part of HADOOP-18033, we have excluded shading of javax.ws.rs-api from both hadoop-client-runtime and hadoop-client-minicluster. This has caused issues for downstreamers e.g. [https://github.com/apache/incubator-kyuubi/issues/2904], more discussions.
> We should put the shading back in hadoop-client-runtime to fix CNFE issues for downstreamers.
> cc [~ayushsaxena] [~pan3793]

--
This message was sent by Atlassian Jira
(v8.20.7#820007)
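For readers following the CNFE (ClassNotFoundException) discussion, a downstream project could check which class names are actually loadable from its classpath with a small probe. This is only a minimal sketch and is not code from the PR: the class `ClasspathProbe` and its method are hypothetical, and the relocated name `org.apache.hadoop.shaded.javax.ws.rs.core.Response` is an assumption about where a shaded client jar would place the class.

```java
public class ClasspathProbe {

    /** Returns true if the named class can be loaded (without initializing it). */
    static boolean isPresent(String className) {
        try {
            Class.forName(className, false, ClasspathProbe.class.getClassLoader());
            return true;
        } catch (ClassNotFoundException | LinkageError e) {
            // Missing class, or a class whose own dependencies are missing.
            return false;
        }
    }

    public static void main(String[] args) {
        String[] candidates = {
            // Unshaded JAX-RS API class.
            "javax.ws.rs.core.Response",
            // Assumed relocated name inside a shaded client jar (illustrative only).
            "org.apache.hadoop.shaded.javax.ws.rs.core.Response",
        };
        for (String name : candidates) {
            System.out.println(name + " -> " + (isPresent(name) ? "present" : "missing"));
        }
    }
}
```

Running the probe with only the client jars on the classpath would show whether code that references the unshaded name can resolve it at all.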

[GitHub] [hadoop] virajjasani commented on pull request #4461: HADOOP-18303. Remove shading exclusion of javax.ws.rs-api from hadoop-client-runtime

virajjasani commented on PR #4461:

URL: https://github.com/apache/hadoop/pull/4461#issuecomment-1165109458

Even if HADOOP-18033 had not excluded javax.ws.rs-api from shading, as [mentioned here](https://github.com/apache/hadoop/pull/4461#pullrequestreview-1012678699), we would have caused subtle problems anyway by having only one version of each common class in the final client jar (as opposed to having different classes for both javax.ws.rs-api and jsr311-api).

[jira] [Work logged] (HADOOP-18303) Remove shading exclusion of javax.ws.rs-api from hadoop-client-runtime

[ https://issues.apache.org/jira/browse/HADOOP-18303?focusedWorklogId=784392=com.atlassian.jira.plugin.system.issuetabpanels:worklog-tabpanel#worklog-784392 ]

ASF GitHub Bot logged work on HADOOP-18303:
---

Author: ASF GitHub Bot
Created on: 24/Jun/22 02:08
Start Date: 24/Jun/22 02:08
Worklog Time Spent: 10m
Work Description: virajjasani commented on PR #4461:
URL: https://github.com/apache/hadoop/pull/4461#issuecomment-1165107308

While HADOOP-18033 was released in 3.3.2, HADOOP-18178 has also made its way to 3.3.3.

Issue Time Tracking
---

Worklog Id: (was: 784392)
Time Spent: 3h (was: 2h 50m)

> Remove shading exclusion of javax.ws.rs-api from hadoop-client-runtime
> --
>
> Key: HADOOP-18303
> URL: https://issues.apache.org/jira/browse/HADOOP-18303
> Project: Hadoop Common
> Issue Type: Bug
> Reporter: Viraj Jasani
> Assignee: Viraj Jasani
> Priority: Critical
> Labels: pull-request-available
> Time Spent: 3h
> Remaining Estimate: 0h
>
> As part of HADOOP-18033, we have excluded shading of javax.ws.rs-api from both hadoop-client-runtime and hadoop-client-minicluster. This has caused issues for downstreamers e.g. [https://github.com/apache/incubator-kyuubi/issues/2904], more discussions.
> We should put the shading back in hadoop-client-runtime to fix CNFE issues for downstreamers.
> cc [~ayushsaxena] [~pan3793]

[GitHub] [hadoop] virajjasani commented on pull request #4461: HADOOP-18303. Remove shading exclusion of javax.ws.rs-api from hadoop-client-runtime

virajjasani commented on PR #4461:

URL: https://github.com/apache/hadoop/pull/4461#issuecomment-1165107308

While HADOOP-18033 was released in 3.3.2, HADOOP-18178 has also made its way to 3.3.3.

[jira] [Commented] (HADOOP-18290) Fix some compatibility issues with 3.3.3 release notes

[ https://issues.apache.org/jira/browse/HADOOP-18290?page=com.atlassian.jira.plugin.system.issuetabpanels:comment-tabpanel=17558284#comment-17558284 ]

JiangHua Zhu commented on HADOOP-18290:
---

I appreciate your suggestion, [~ste...@apache.org].
Automating builds is very important work, thank you very much for your work.

> Fix some compatibility issues with 3.3.3 release notes
> --
>
> Key: HADOOP-18290
> URL: https://issues.apache.org/jira/browse/HADOOP-18290
> Project: Hadoop Common
> Issue Type: Improvement
> Components: build, documentation
> Affects Versions: 3.3.3
> Reporter: JiangHua Zhu
> Priority: Major
> Attachments: image-2022-06-14-10-27-23-027.png, image-2022-06-14-10-28-53-822.png
>
> 3.3.3 Release Notes:
> https://hadoop.apache.org/docs/r3.3.3/hadoop-project-dist/hadoop-common/release/3.3.3/RELEASENOTES.3.3.3.html
> There are some compatibility issues here. E.g:
> !image-2022-06-14-10-27-23-027.png!
> I think this is happening due to a syntax issue.
> It would be more appropriate to change it to this:
> !image-2022-06-14-10-28-53-822.png!

[jira] [Work logged] (HADOOP-18254) Add in configuration option to enable prefetching

[ https://issues.apache.org/jira/browse/HADOOP-18254?focusedWorklogId=784390=com.atlassian.jira.plugin.system.issuetabpanels:worklog-tabpanel#worklog-784390 ]

ASF GitHub Bot logged work on HADOOP-18254:
---

Author: ASF GitHub Bot
Created on: 24/Jun/22 01:55
Start Date: 24/Jun/22 01:55
Worklog Time Spent: 10m
Work Description: ashutoshcipher commented on PR #4469:
URL: https://github.com/apache/hadoop/pull/4469#issuecomment-1165100507

LGTM +1(non-binding)

Issue Time Tracking
---

Worklog Id: (was: 784390)
Time Spent: 1h (was: 50m)

> Add in configuration option to enable prefetching
> -
>
> Key: HADOOP-18254
> URL: https://issues.apache.org/jira/browse/HADOOP-18254
> Project: Hadoop Common
> Issue Type: Sub-task
> Reporter: Ahmar Suhail
> Assignee: Ahmar Suhail
> Priority: Minor
> Labels: pull-request-available
> Time Spent: 1h
> Remaining Estimate: 0h
>
> Currently prefetching is enabled by default, we should instead add in a config option to enable it.
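The issue description asks for an opt-in switch, which in general terms means reading a boolean option whose default is `false`. The sketch below illustrates that pattern with plain `java.util.Properties` so it runs without Hadoop on the classpath. It is not the PR's implementation: the class name and the key `example.prefetch.enabled` are hypothetical placeholders, not the names used by the patch.

```java
import java.util.Properties;

public class PrefetchConfigSketch {

    // Hypothetical key, for illustration only; not the name used by the PR.
    static final String PREFETCH_ENABLED_KEY = "example.prefetch.enabled";

    // Opt-in: prefetching stays off unless the option is set explicitly.
    static final boolean PREFETCH_ENABLED_DEFAULT = false;

    static boolean isPrefetchEnabled(Properties conf) {
        String raw = conf.getProperty(PREFETCH_ENABLED_KEY);
        return raw == null ? PREFETCH_ENABLED_DEFAULT : Boolean.parseBoolean(raw.trim());
    }

    public static void main(String[] args) {
        Properties conf = new Properties();
        System.out.println("unset: " + isPrefetchEnabled(conf));

        conf.setProperty(PREFETCH_ENABLED_KEY, "true");
        System.out.println("set to true: " + isPrefetchEnabled(conf));
    }
}
```

Keeping the default in one named constant makes the "disabled unless requested" behavior easy to find and to test.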

[GitHub] [hadoop] ashutoshcipher commented on pull request #4469: HADOOP-18254. Disable prefetching by default.

ashutoshcipher commented on PR #4469:

URL: https://github.com/apache/hadoop/pull/4469#issuecomment-1165100507

LGTM +1(non-binding)

[GitHub] [hadoop] ashutoshcipher commented on a diff in pull request #4248: MAPREDUCE-7370. Parallelize MultipleOutputs#close call

ashutoshcipher commented on code in PR #4248:

URL: https://github.com/apache/hadoop/pull/4248#discussion_r905654385

##

hadoop-mapreduce-project/hadoop-mapreduce-client/hadoop-mapreduce-client-core/src/main/java/org/apache/hadoop/mapreduce/lib/output/MultipleOutputs.java:

##

@@ -570,8 +570,14 @@ public void setStatus(String status) {
    */
   @SuppressWarnings("unchecked")
   public void close() throws IOException, InterruptedException {
-    for (RecordWriter writer : recordWriters.values()) {
-      writer.close(context);
-    }
+    recordWriters.values().parallelStream().forEach(writer -> {

Review Comment:

Thanks @cnauroth and @steveloughran for comments. I will make the required changes. Thanks.
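The hunk above is cut off after the opening of the lambda, so the PR's actual body is not visible here. One general difficulty with this pattern is that a lambda passed to `forEach` cannot throw checked exceptions such as `IOException`. The sketch below shows one common way to handle that, assuming plain `java.io.Closeable` resources in place of Hadoop's `RecordWriter`; the class and method names are hypothetical and this is not the code from the PR.

```java
import java.io.Closeable;
import java.io.IOException;
import java.io.UncheckedIOException;
import java.util.Arrays;
import java.util.Collection;
import java.util.concurrent.atomic.AtomicInteger;

public class ParallelCloseSketch {

    /** Closes all resources using a parallel stream, rethrowing an IOException if a close fails. */
    static void closeAll(Collection<? extends Closeable> resources) throws IOException {
        try {
            resources.parallelStream().forEach(resource -> {
                try {
                    resource.close();
                } catch (IOException e) {
                    // Lambdas in forEach cannot throw checked exceptions, so wrap it.
                    throw new UncheckedIOException(e);
                }
            });
        } catch (UncheckedIOException e) {
            // Unwrap so callers still see the original checked exception.
            throw e.getCause();
        }
    }

    public static void main(String[] args) throws IOException {
        AtomicInteger closed = new AtomicInteger();
        Closeable counting = closed::incrementAndGet;
        closeAll(Arrays.asList(counting, counting, counting));
        System.out.println("closed " + closed.get() + " resources");
    }
}
```

With this approach, when one `close()` fails the exception reaches the caller, but the remaining resources are not guaranteed to have been closed; collecting failures and closing every resource regardless would need extra handling.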


[jira] [Work logged] (HADOOP-18309) Upgrade bundled Tomcat to 8.5.76 or higher

[

https://issues.apache.org/jira/browse/HADOOP-18309?focusedWorklogId=784386=com.atlassian.jira.plugin.system.issuetabpanels:worklog-tabpanel#worklog-784386

]

ASF GitHub Bot logged work on HADOOP-18309:

---

Author: ASF GitHub Bot

Created on: 24/Jun/22 00:12

Start Date: 24/Jun/22 00:12

Worklog Time Spent: 10m

Work Description: aajisaka commented on PR #4479:

URL: https://github.com/apache/hadoop/pull/4479#issuecomment-1165034064

Thank you @ashutoshcipher for the contribution and thank you @iwasakims for

testing and merging the PR.

Issue Time Tracking

---

Worklog Id: (was: 784386)

Time Spent: 40m (was: 0.5h)

> Upgrade bundled Tomcat to 8.5.76 or higher

> --

>

> Key: HADOOP-18309

> URL: https://issues.apache.org/jira/browse/HADOOP-18309

> Project: Hadoop Common

> Issue Type: Improvement

> Components: httpfs, kms

>Affects Versions: 2.10.1, 2.10.2

>Reporter: Ashutosh Gupta

>Assignee: Ashutosh Gupta

>Priority: Major

> Labels: pull-request-available

> Fix For: 2.10.3

>

> Time Spent: 40m

> Remaining Estimate: 0h

>

> Currently we are using 8.5.75, which is affected by

> CVE-2022-25762.

> More details:

> [https://lists.apache.org/thread/qzkqh2819x6zsmj7vwdf14ng2fdgckw7]

> Let's upgrade to 8.5.76 or higher.

>

>

--

This message was sent by Atlassian Jira

(v8.20.7#820007)

-

To unsubscribe, e-mail: common-issues-unsubscr...@hadoop.apache.org

For additional commands, e-mail: common-issues-h...@hadoop.apache.org

[GitHub] [hadoop] aajisaka commented on pull request #4479: HADOOP-18309.Upgrade bundled Tomcat to 8.5.81

aajisaka commented on PR #4479:

URL: https://github.com/apache/hadoop/pull/4479#issuecomment-1165034064

Thank you @ashutoshcipher for the contribution and thank you @iwasakims for

testing and merging the PR.

[jira] [Work logged] (HADOOP-18303) Remove shading exclusion of javax.ws.rs-api from hadoop-client-runtime

[ https://issues.apache.org/jira/browse/HADOOP-18303?focusedWorklogId=784376&page=com.atlassian.jira.plugin.system.issuetabpanels:worklog-tabpanel#worklog-784376 ]

ASF GitHub Bot logged work on HADOOP-18303:

---

Author: ASF GitHub Bot

Created on: 23/Jun/22 23:13

Start Date: 23/Jun/22 23:13

Worklog Time Spent: 10m

Work Description: ayushtkn commented on PR #4461:

URL: https://github.com/apache/hadoop/pull/4461#issuecomment-116433

Yeps, if people let us do that, that jira is already in a released version

Issue Time Tracking

---

Worklog Id: (was: 784376)

Time Spent: 2h 50m (was: 2h 40m)

> Remove shading exclusion of javax.ws.rs-api from hadoop-client-runtime

> --

>

> Key: HADOOP-18303

> URL: https://issues.apache.org/jira/browse/HADOOP-18303

> Project: Hadoop Common

> Issue Type: Bug

> Reporter: Viraj Jasani

> Assignee: Viraj Jasani

> Priority: Critical

> Labels: pull-request-available

> Time Spent: 2h 50m

> Remaining Estimate: 0h

>

> As part of HADOOP-18033, we have excluded shading of javax.ws.rs-api from

> both hadoop-client-runtime and hadoop-client-minicluster. This has caused

> issues for downstreamers e.g.

> [https://github.com/apache/incubator-kyuubi/issues/2904], more discussions.

> We should put the shading back in hadoop-client-runtime to fix CNFE issues

> for downstreamers.

> cc [~ayushsaxena] [~pan3793]

[GitHub] [hadoop] ayushtkn commented on pull request #4461: HADOOP-18303. Remove shading exclusion of javax.ws.rs-api from hadoop-client-runtime

ayushtkn commented on PR #4461:

URL: https://github.com/apache/hadoop/pull/4461#issuecomment-116433

Yeps, if people let us do that, that jira is already in a released version

[jira] [Work logged] (HADOOP-18310) Add option and make 400 bad request retryable

[ https://issues.apache.org/jira/browse/HADOOP-18310?focusedWorklogId=784372&page=com.atlassian.jira.plugin.system.issuetabpanels:worklog-tabpanel#worklog-784372 ]

ASF GitHub Bot logged work on HADOOP-18310:

---

Author: ASF GitHub Bot

Created on: 23/Jun/22 22:46

Start Date: 23/Jun/22 22:46

Worklog Time Spent: 10m

Work Description: mukund-thakur commented on PR #4483:

URL: https://github.com/apache/hadoop/pull/4483#issuecomment-1164984485

Do we really need to introduce this config? Seems like an overkill.

I think 400 Bad Request are supposed to be non retry-able.

Issue Time Tracking

---

Worklog Id: (was: 784372)

Time Spent: 1h 50m (was: 1h 40m)

> Add option and make 400 bad request retryable

> -

>

> Key: HADOOP-18310

> URL: https://issues.apache.org/jira/browse/HADOOP-18310

> Project: Hadoop Common

> Issue Type: Bug

> Components: fs/s3

>Affects Versions: 3.3.4

>Reporter: Tak-Lon (Stephen) Wu

>Priority: Major

> Labels: pull-request-available

> Time Spent: 1h 50m

> Remaining Estimate: 0h

>

> When using a custom credential provider via

> fs.s3a.aws.credentials.provider, e.g.

> org.apache.hadoop.fs.s3a.TemporaryAWSCredentialsProvider, the credential

> supplied by this pluggable provider can expire, and S3 then returns error

> code 400 (bad request).

> In that case the current S3ARetryPolicy fails immediately and does not retry

> at the S3A level.

> In our recent HBase use case, this could cause a Region Server to be

> abandoned immediately on this exception, without any retry, while the file

> system is trying to open a file or S3AInputStream is trying to reopen it.

> For the S3AInputStream case in particular, we could not find a good way to

> retry outside of the file system semantics (because a failure in an ongoing

> stream is currently considered an irreparable state), so we came up with

> this optional flag for retrying in S3A.

> {code}

> Caused by: com.amazonaws.services.s3.model.AmazonS3Exception: The provided token has expired. (Service: Amazon S3; Status Code: 400; Error Code: ExpiredToken; Request ID: XYZ; S3 Extended Request ID: ABC; Proxy: null), S3 Extended Request ID: 123
> at com.amazonaws.http.AmazonHttpClient$RequestExecutor.handleErrorResponse(AmazonHttpClient.java:1862)
> at com.amazonaws.http.AmazonHttpClient$RequestExecutor.handleServiceErrorResponse(AmazonHttpClient.java:1415)
> at com.amazonaws.http.AmazonHttpClient$RequestExecutor.executeOneRequest(AmazonHttpClient.java:1384)
> at com.amazonaws.http.AmazonHttpClient$RequestExecutor.executeHelper(AmazonHttpClient.java:1154)
> at com.amazonaws.http.AmazonHttpClient$RequestExecutor.doExecute(AmazonHttpClient.java:811)
> at com.amazonaws.http.AmazonHttpClient$RequestExecutor.executeWithTimer(AmazonHttpClient.java:779)
> at com.amazonaws.http.AmazonHttpClient$RequestExecutor.execute(AmazonHttpClient.java:753)
> at com.amazonaws.http.AmazonHttpClient$RequestExecutor.access$500(AmazonHttpClient.java:713)
> at com.amazonaws.http.AmazonHttpClient$RequestExecutionBuilderImpl.execute(AmazonHttpClient.java:695)
> at com.amazonaws.http.AmazonHttpClient.execute(AmazonHttpClient.java:559)
> at com.amazonaws.http.AmazonHttpClient.execute(AmazonHttpClient.java:539)
> at com.amazonaws.services.s3.AmazonS3Client.invoke(AmazonS3Client.java:5453)
> at com.amazonaws.services.s3.AmazonS3Client.invoke(AmazonS3Client.java:5400)
> at com.amazonaws.services.s3.AmazonS3Client.getObject(AmazonS3Client.java:1524)
> at org.apache.hadoop.fs.s3a.S3AFileSystem$InputStreamCallbacksImpl.getObject(S3AFileSystem.java:1506)
> at org.apache.hadoop.fs.s3a.S3AInputStream.lambda$reopen$0(S3AInputStream.java:217)
> at org.apache.hadoop.fs.s3a.Invoker.once(Invoker.java:117)
> ... 35 more

> {code}
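
The optional flag described in the issue can be pictured as a retry decision that treats HTTP 400 as retryable only when explicitly enabled. What follows is a minimal sketch under stated assumptions, not the actual `S3ARetryPolicy` code: `BadRequestRetrySketch`, `shouldRetry`, and the `retryBadRequest` parameter are hypothetical names, no real configuration key is implied, and treating only 5xx responses as transient is a simplification made for this example.

```java
// Hypothetical sketch; not the S3ARetryPolicy implementation.
final class BadRequestRetrySketch {

  // Decides whether a failed request should be retried.
  // statusCode: HTTP status of the failure; attempt: retries already made.
  static boolean shouldRetry(int statusCode, int attempt, int maxRetries,
                             boolean retryBadRequest) {
    if (attempt >= maxRetries) {
      return false; // retry budget exhausted
    }
    if (statusCode == 400) {
      // Off by default: a 400 normally signals a client error that a
      // retry cannot fix. When enabled, a retry gives the credential
      // provider a chance to supply a fresh token.
      return retryBadRequest;
    }
    // Assumption for this sketch: only 5xx responses count as transient.
    return statusCode >= 500;
  }
}
```

Keeping the flag off by default preserves the existing fail-fast behaviour for genuine bad requests, which is the concern raised in the review comment on this PR.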


[GitHub] [hadoop] mukund-thakur commented on pull request #4483: HADOOP-18310 Add option and make 400 bad request retryable

mukund-thakur commented on PR #4483:

URL: https://github.com/apache/hadoop/pull/4483#issuecomment-1164984485

Do we really need to introduce this config? Seems like an overkill.

I think 400 Bad Request are supposed to be non retry-able.

[jira] [Work logged] (HADOOP-18306) Warnings should not be shown on cli console when linux user not present on client

[ https://issues.apache.org/jira/browse/HADOOP-18306?focusedWorklogId=784363&page=com.atlassian.jira.plugin.system.issuetabpanels:worklog-tabpanel#worklog-784363 ]

ASF GitHub Bot logged work on HADOOP-18306:

---

Author: ASF GitHub Bot

Created on: 23/Jun/22 22:22

Start Date: 23/Jun/22 22:22

Worklog Time Spent: 10m

Work Description: hadoop-yetus commented on PR #4474:

URL: https://github.com/apache/hadoop/pull/4474#issuecomment-1164971077


[jira] [Work logged] (HADOOP-18215) Enhance WritableName to be able to return aliases for classes that use serializers

[ https://issues.apache.org/jira/browse/HADOOP-18215?focusedWorklogId=784364&page=com.atlassian.jira.plugin.system.issuetabpanels:worklog-tabpanel#worklog-784364 ]

ASF GitHub Bot logged work on HADOOP-18215:

---

Author: ASF GitHub Bot

Created on: 23/Jun/22 22:22

Start Date: 23/Jun/22 22:22

Worklog Time Spent: 10m

Work Description: hadoop-yetus commented on PR #4215:

URL: https://github.com/apache/hadoop/pull/4215#issuecomment-1164971314


[GitHub] [hadoop] hadoop-yetus commented on pull request #4215: HADOOP-18215. Enhance WritableName to be able to return aliases for classes that use serializers

hadoop-yetus commented on PR #4215:

URL: https://github.com/apache/hadoop/pull/4215#issuecomment-1164971314

:confetti_ball: **+1 overall**

| Vote | Subsystem | Runtime | Logfile | Comment |
|:----:|----------:|--------:|:--------:|:-------:|
| +0 :ok: | reexec | 0m 39s | | Docker mode activated. |
|||| _ Prechecks _ |
| +1 :green_heart: | dupname | 0m 0s | | No case conflicting files found. |
| +0 :ok: | codespell | 0m 0s | | codespell was not available. |
| +0 :ok: | detsecrets | 0m 0s | | detect-secrets was not available. |
| +1 :green_heart: | @author | 0m 0s | | The patch does not contain any @author tags. |
| +1 :green_heart: | test4tests | 0m 0s | | The patch appears to include 2 new or modified test files. |
|||| _ trunk Compile Tests _ |
| +1 :green_heart: | mvninstall | 37m 50s | | trunk passed |
| +1 :green_heart: | compile | 23m 15s | | trunk passed with JDK Private Build-11.0.15+10-Ubuntu-0ubuntu0.20.04.1 |
| +1 :green_heart: | compile | 20m 39s | | trunk passed with JDK Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| +1 :green_heart: | checkstyle | 1m 48s | | trunk passed |
| +1 :green_heart: | mvnsite | 2m 14s | | trunk passed |
| +1 :green_heart: | javadoc | 1m 36s | | trunk passed with JDK Private Build-11.0.15+10-Ubuntu-0ubuntu0.20.04.1 |
| +1 :green_heart: | javadoc | 1m 22s | | trunk passed with JDK Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| +1 :green_heart: | spotbugs | 3m 11s | | trunk passed |
| +1 :green_heart: | shadedclient | 23m 34s | | branch has no errors when building and testing our client artifacts. |
|||| _ Patch Compile Tests _ |
| +1 :green_heart: | mvninstall | 1m 7s | | the patch passed |
| +1 :green_heart: | compile | 22m 23s | | the patch passed with JDK Private Build-11.0.15+10-Ubuntu-0ubuntu0.20.04.1 |
| +1 :green_heart: | javac | 22m 23s | | the patch passed |
| +1 :green_heart: | compile | 20m 41s | | the patch passed with JDK Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| +1 :green_heart: | javac | 20m 41s | | the patch passed |
| +1 :green_heart: | blanks | 0m 0s | | The patch has no blanks issues. |
| +1 :green_heart: | checkstyle | 1m 39s | | the patch passed |
| +1 :green_heart: | mvnsite | 2m 9s | | the patch passed |
| +1 :green_heart: | javadoc | 1m 38s | | the patch passed with JDK Private Build-11.0.15+10-Ubuntu-0ubuntu0.20.04.1 |
| +1 :green_heart: | javadoc | 1m 20s | | the patch passed with JDK Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| +1 :green_heart: | spotbugs | 3m 8s | | the patch passed |
| +1 :green_heart: | shadedclient | 23m 27s | | patch has no errors when building and testing our client artifacts. |
|||| _ Other Tests _ |
| +1 :green_heart: | unit | 18m 47s | | hadoop-common in the patch passed. |
| +1 :green_heart: | asflicense | 1m 35s | | The patch does not generate ASF License warnings. |
| | | 215m 49s | | |

| Subsystem | Report/Notes |
|----------:|:-------------|
| Docker | ClientAPI=1.41 ServerAPI=1.41 base: https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4215/6/artifact/out/Dockerfile |
| GITHUB PR | https://github.com/apache/hadoop/pull/4215 |
| Optional Tests | dupname asflicense compile javac javadoc mvninstall mvnsite unit shadedclient spotbugs checkstyle codespell detsecrets |
| uname | Linux 0cb34f79ee1f 4.15.0-112-generic #113-Ubuntu SMP Thu Jul 9 23:41:39 UTC 2020 x86_64 x86_64 x86_64 GNU/Linux |
| Build tool | maven |
| Personality | dev-support/bin/hadoop.sh |
| git revision | trunk / adf3de0112c97cb637b72c19aacddb0662bdee4d |
| Default Java | Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| Multi-JDK versions | /usr/lib/jvm/java-11-openjdk-amd64:Private Build-11.0.15+10-Ubuntu-0ubuntu0.20.04.1 /usr/lib/jvm/java-8-openjdk-amd64:Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| Test Results | https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4215/6/testReport/ |
| Max. process+thread count | 2466 (vs. ulimit of 5500) |
| modules | C: hadoop-common-project/hadoop-common U: hadoop-common-project/hadoop-common |
| Console output | https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4215/6/console |
| versions | git=2.25.1 maven=3.6.3 spotbugs=4.2.2 |
| Powered by | Apache Yetus 0.14.0 https://yetus.apache.org |

This message was automatically generated.


[GitHub] [hadoop] hadoop-yetus commented on pull request #4474: HADOOP-18306: Warnings should not be shown on cli console when linux user not present on client

hadoop-yetus commented on PR #4474:

URL: https://github.com/apache/hadoop/pull/4474#issuecomment-1164971077

:broken_heart: **-1 overall**

| Vote | Subsystem | Runtime | Logfile | Comment |
|:----:|----------:|--------:|:--------:|:-------:|
| +0 :ok: | reexec | 0m 55s | | Docker mode activated. |
|||| _ Prechecks _ |
| +1 :green_heart: | dupname | 0m 0s | | No case conflicting files found. |
| +0 :ok: | codespell | 0m 0s | | codespell was not available. |
| +0 :ok: | detsecrets | 0m 0s | | detect-secrets was not available. |
| +1 :green_heart: | @author | 0m 0s | | The patch does not contain any @author tags. |
| -1 :x: | test4tests | 0m 0s | | The patch doesn't appear to include any new or modified tests. Please justify why no new tests are needed for this patch. Also please list what manual steps were performed to verify this patch. |
|||| _ trunk Compile Tests _ |
| +1 :green_heart: | mvninstall | 40m 24s | | trunk passed |
| +1 :green_heart: | compile | 25m 10s | | trunk passed with JDK Private Build-11.0.15+10-Ubuntu-0ubuntu0.20.04.1 |
| +1 :green_heart: | compile | 21m 37s | | trunk passed with JDK Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| +1 :green_heart: | checkstyle | 1m 31s | | trunk passed |
| +1 :green_heart: | mvnsite | 1m 58s | | trunk passed |
| +1 :green_heart: | javadoc | 1m 31s | | trunk passed with JDK Private Build-11.0.15+10-Ubuntu-0ubuntu0.20.04.1 |
| +1 :green_heart: | javadoc | 1m 4s | | trunk passed with JDK Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| +1 :green_heart: | spotbugs | 3m 6s | | trunk passed |
| +1 :green_heart: | shadedclient | 25m 52s | | branch has no errors when building and testing our client artifacts. |
|||| _ Patch Compile Tests _ |
| +1 :green_heart: | mvninstall | 1m 6s | | the patch passed |
| +1 :green_heart: | compile | 24m 13s | | the patch passed with JDK Private Build-11.0.15+10-Ubuntu-0ubuntu0.20.04.1 |
| +1 :green_heart: | javac | 24m 13s | | the patch passed |
| +1 :green_heart: | compile | 21m 45s | | the patch passed with JDK Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| +1 :green_heart: | javac | 21m 45s | | the patch passed |
| +1 :green_heart: | blanks | 0m 0s | | The patch has no blanks issues. |
| +1 :green_heart: | checkstyle | 1m 24s | | the patch passed |
| +1 :green_heart: | mvnsite | 1m 56s | | the patch passed |
| +1 :green_heart: | javadoc | 1m 22s | | the patch passed with JDK Private Build-11.0.15+10-Ubuntu-0ubuntu0.20.04.1 |
| +1 :green_heart: | javadoc | 1m 4s | | the patch passed with JDK Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| +1 :green_heart: | spotbugs | 3m 3s | | the patch passed |
| +1 :green_heart: | shadedclient | 25m 37s | | patch has no errors when building and testing our client artifacts. |
|||| _ Other Tests _ |
| +1 :green_heart: | unit | 18m 17s | | hadoop-common in the patch passed. |
| +1 :green_heart: | asflicense | 1m 16s | | The patch does not generate ASF License warnings. |
| | | 224m 51s | | |

| Subsystem | Report/Notes |
|----------:|:-------------|
| Docker | ClientAPI=1.41 ServerAPI=1.41 base: https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4474/2/artifact/out/Dockerfile |
| GITHUB PR | https://github.com/apache/hadoop/pull/4474 |
| JIRA Issue | HADOOP-18306 |
| Optional Tests | dupname asflicense compile javac javadoc mvninstall mvnsite unit shadedclient spotbugs checkstyle codespell detsecrets |
| uname | Linux 472d91b4539c 4.15.0-175-generic #184-Ubuntu SMP Thu Mar 24 17:48:36 UTC 2022 x86_64 x86_64 x86_64 GNU/Linux |
| Build tool | maven |
| Personality | dev-support/bin/hadoop.sh |
| git revision | trunk / 370df9b21f54462fd0da45aa1a5cf2c87bbd0757 |
| Default Java | Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| Multi-JDK versions | /usr/lib/jvm/java-11-openjdk-amd64:Private Build-11.0.15+10-Ubuntu-0ubuntu0.20.04.1 /usr/lib/jvm/java-8-openjdk-amd64:Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |
| Test Results | https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4474/2/testReport/ |
| Max. process+thread count | 1803 (vs. ulimit of 5500) |
| modules | C: hadoop-common-project/hadoop-common U: hadoop-common-project/hadoop-common |
| Console output | https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4474/2/console |
| versions | git=2.25.1 maven=3.6.3 spotbugs=4.2.2 |
| Powered by | Apache Yetus 0.14.0 https://yetus.apache.org |

This message was automatically generated.


[GitHub] [hadoop] slfan1989 commented on pull request #4464: YARN-11169. Support moveApplicationAcrossQueues, getQueueInfo API's for Federation.

slfan1989 commented on PR #4464:

URL: https://github.com/apache/hadoop/pull/4464#issuecomment-1164896098

@goiri Please help me to review the code, Thank you very much!

[GitHub] [hadoop] hadoop-yetus commented on pull request #4155: HDFS-16533. COMPOSITE_CRC failed between replicated file and striped …

hadoop-yetus commented on PR #4155:

URL: https://github.com/apache/hadoop/pull/4155#issuecomment-1164882298

:confetti_ball: **+1 overall**

| Vote | Subsystem | Runtime | Logfile | Comment |

|::|--:|:|::|:---:|

| +0 :ok: | reexec | 0m 39s | | Docker mode activated. |

_ Prechecks _ |

| +1 :green_heart: | dupname | 0m 0s | | No case conflicting files

found. |

| +0 :ok: | codespell | 0m 0s | | codespell was not available. |

| +0 :ok: | detsecrets | 0m 0s | | detect-secrets was not available.

|

| +1 :green_heart: | @author | 0m 0s | | The patch does not contain

any @author tags. |

| +1 :green_heart: | test4tests | 0m 0s | | The patch appears to

include 1 new or modified test files. |

_ trunk Compile Tests _ |

| +0 :ok: | mvndep | 14m 52s | | Maven dependency ordering for branch |

| +1 :green_heart: | mvninstall | 25m 59s | | trunk passed |

| +1 :green_heart: | compile | 6m 17s | | trunk passed with JDK

Private Build-11.0.15+10-Ubuntu-0ubuntu0.20.04.1 |

| +1 :green_heart: | compile | 6m 0s | | trunk passed with JDK

Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |

| +1 :green_heart: | checkstyle | 1m 37s | | trunk passed |

| +1 :green_heart: | mvnsite | 3m 9s | | trunk passed |

| +1 :green_heart: | javadoc | 2m 27s | | trunk passed with JDK

Private Build-11.0.15+10-Ubuntu-0ubuntu0.20.04.1 |

| +1 :green_heart: | javadoc | 2m 52s | | trunk passed with JDK

Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |

| +1 :green_heart: | spotbugs | 6m 30s | | trunk passed |

| +1 :green_heart: | shadedclient | 22m 55s | | branch has no errors

when building and testing our client artifacts. |

_ Patch Compile Tests _ |

| +0 :ok: | mvndep | 0m 31s | | Maven dependency ordering for patch |

| +1 :green_heart: | mvninstall | 2m 20s | | the patch passed |

| +1 :green_heart: | compile | 6m 0s | | the patch passed with JDK

Private Build-11.0.15+10-Ubuntu-0ubuntu0.20.04.1 |

| +1 :green_heart: | javac | 6m 0s | | the patch passed |

| +1 :green_heart: | compile | 5m 44s | | the patch passed with JDK

Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |

| +1 :green_heart: | javac | 5m 44s | | the patch passed |

| +1 :green_heart: | blanks | 0m 0s | | The patch has no blanks

issues. |

| +1 :green_heart: | checkstyle | 1m 15s | | the patch passed |

| +1 :green_heart: | mvnsite | 2m 30s | | the patch passed |

| +1 :green_heart: | javadoc | 1m 46s | | the patch passed with JDK

Private Build-11.0.15+10-Ubuntu-0ubuntu0.20.04.1 |

| +1 :green_heart: | javadoc | 2m 19s | | the patch passed with JDK

Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |

| +1 :green_heart: | spotbugs | 6m 9s | | the patch passed |

| +1 :green_heart: | shadedclient | 22m 37s | | patch has no errors

when building and testing our client artifacts. |

_ Other Tests _ |

| +1 :green_heart: | unit | 2m 40s | | hadoop-hdfs-client in the patch

passed. |

| +1 :green_heart: | unit | 237m 20s | | hadoop-hdfs in the patch

passed. |

| +1 :green_heart: | asflicense | 1m 17s | | The patch does not

generate ASF License warnings. |

| | | 384m 46s | | |

| Subsystem | Report/Notes |

|----------:|:-------------|

| Docker | ClientAPI=1.41 ServerAPI=1.41 base: https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4155/4/artifact/out/Dockerfile |

| GITHUB PR | https://github.com/apache/hadoop/pull/4155 |

| Optional Tests | dupname asflicense compile javac javadoc mvninstall mvnsite unit shadedclient spotbugs checkstyle codespell detsecrets |

| uname | Linux 7bdf891ec394 4.15.0-156-generic #163-Ubuntu SMP Thu Aug 19 23:31:58 UTC 2021 x86_64 x86_64 x86_64 GNU/Linux |

| Build tool | maven |

| Personality | dev-support/bin/hadoop.sh |

| git revision | trunk / 91fd09d2c3759c8cbdb376a2caa22f64ddd41875 |

| Default Java | Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |

| Multi-JDK versions | /usr/lib/jvm/java-11-openjdk-amd64:Private Build-11.0.15+10-Ubuntu-0ubuntu0.20.04.1 /usr/lib/jvm/java-8-openjdk-amd64:Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |

| Test Results | https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4155/4/testReport/ |

| Max. process+thread count | 3092 (vs. ulimit of 5500) |

| modules | C: hadoop-hdfs-project/hadoop-hdfs-client hadoop-hdfs-project/hadoop-hdfs U: hadoop-hdfs-project |

| Console output | https://ci-hadoop.apache.org/job/hadoop-multibranch/job/PR-4155/4/console |

| versions | git=2.25.1 maven=3.6.3 spotbugs=4.2.2 |

| Powered by | Apache Yetus 0.14.0 https://yetus.apache.org |

This message was

[jira] [Work logged] (HADOOP-18303) Remove shading exclusion of javax.ws.rs-api from hadoop-client-runtime

[ https://issues.apache.org/jira/browse/HADOOP-18303?focusedWorklogId=784342=com.atlassian.jira.plugin.system.issuetabpanels:worklog-tabpanel#worklog-784342 ]

ASF GitHub Bot logged work on HADOOP-18303:

---

Author: ASF GitHub Bot

Created on: 23/Jun/22 19:37

Start Date: 23/Jun/22 19:37

Worklog Time Spent: 10m

Work Description: sunchao commented on PR #4461:

URL: https://github.com/apache/hadoop/pull/4461#issuecomment-1164795939

In that case should we consider reverting it for now until we are ready to upgrade both jersey and jackson together at some point?

Issue Time Tracking

---

Worklog Id: (was: 784342)

Time Spent: 2h 40m (was: 2.5h)

> Remove shading exclusion of javax.ws.rs-api from hadoop-client-runtime
> --
>
> Key: HADOOP-18303
> URL: https://issues.apache.org/jira/browse/HADOOP-18303
> Project: Hadoop Common
> Issue Type: Bug
> Reporter: Viraj Jasani
> Assignee: Viraj Jasani
> Priority: Critical
> Labels: pull-request-available
> Time Spent: 2h 40m
> Remaining Estimate: 0h
>
> As part of HADOOP-18033, we have excluded shading of javax.ws.rs-api from
> both hadoop-client-runtime and hadoop-client-minicluster. This has caused
> issues for downstreamers e.g.
> [https://github.com/apache/incubator-kyuubi/issues/2904], more discussions.
> We should put the shading back in hadoop-client-runtime to fix CNFE issues
> for downstreamers.
> cc [~ayushsaxena] [~pan3793]

--

This message was sent by Atlassian Jira (v8.20.7#820007)

---

To unsubscribe, e-mail: common-issues-unsubscr...@hadoop.apache.org

For additional commands, e-mail: common-issues-h...@hadoop.apache.org

[GitHub] [hadoop] sunchao commented on pull request #4461: HADOOP-18303. Remove shading exclusion of javax.ws.rs-api from hadoop-client-runtime

sunchao commented on PR #4461:

URL: https://github.com/apache/hadoop/pull/4461#issuecomment-1164795939

In that case should we consider reverting it for now until we are ready to upgrade both jersey and jackson together at some point?

--

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the URL above to go to the specific comment.

To unsubscribe, e-mail: common-issues-unsubscr...@hadoop.apache.org

For queries about this service, please contact Infrastructure at: us...@infra.apache.org

---

To unsubscribe, e-mail: common-issues-unsubscr...@hadoop.apache.org

For additional commands, e-mail: common-issues-h...@hadoop.apache.org

[jira] [Work logged] (HADOOP-18044) Hadoop - Upgrade to JQuery 3.6.0

[ https://issues.apache.org/jira/browse/HADOOP-18044?focusedWorklogId=784341=com.atlassian.jira.plugin.system.issuetabpanels:worklog-tabpanel#worklog-784341 ]

ASF GitHub Bot logged work on HADOOP-18044:

---

Author: ASF GitHub Bot

Created on: 23/Jun/22 19:23

Start Date: 23/Jun/22 19:23

Worklog Time Spent: 10m

Work Description: steveloughran commented on PR #4495:

URL: https://github.com/apache/hadoop/pull/4495#issuecomment-1164783134

Merged. This doesn't need Yetus to validate it fully, as it's a cherry-pick and I'd verified that this branch built correctly.

Issue Time Tracking

---

Worklog Id: (was: 784341)

Time Spent: 2h 10m (was: 2h)

> Hadoop - Upgrade to JQuery 3.6.0
>
> Key: HADOOP-18044
> URL: https://issues.apache.org/jira/browse/HADOOP-18044
> Project: Hadoop Common
> Issue Type: Improvement
> Reporter: Yuan Luo
> Assignee: Yuan Luo
> Priority: Major
> Labels: pull-request-available
> Fix For: 3.4.0, 3.3.4
> Time Spent: 2h 10m
> Remaining Estimate: 0h
>
> jQuery 3.6.0 was released a few months ago:
> http://blog.jquery.com/2021/03/02/jquery-3-6-0-released/
> We can upgrade jquery-3.5.1.min.js to jquery-3.6.0.min.js in the Hadoop project.

[GitHub] [hadoop] steveloughran commented on pull request #4495: HADOOP-18044. Hadoop - Upgrade to jQuery 3.6.0 (#3791)

steveloughran commented on PR #4495:

URL: https://github.com/apache/hadoop/pull/4495#issuecomment-1164783134

Merged. This doesn't need Yetus to validate it fully, as it's a cherry-pick and I'd verified that this branch built correctly.

[jira] [Work logged] (HADOOP-18044) Hadoop - Upgrade to JQuery 3.6.0

[ https://issues.apache.org/jira/browse/HADOOP-18044?focusedWorklogId=784340=com.atlassian.jira.plugin.system.issuetabpanels:worklog-tabpanel#worklog-784340 ]

ASF GitHub Bot logged work on HADOOP-18044:

---

Author: ASF GitHub Bot

Created on: 23/Jun/22 19:22

Start Date: 23/Jun/22 19:22

Worklog Time Spent: 10m

Work Description: steveloughran merged PR #4495:

URL: https://github.com/apache/hadoop/pull/4495

Issue Time Tracking

---

Worklog Id: (was: 784340)

Time Spent: 2h (was: 1h 50m)

> Hadoop - Upgrade to JQuery 3.6.0
>
> Key: HADOOP-18044
> URL: https://issues.apache.org/jira/browse/HADOOP-18044
> Project: Hadoop Common
> Issue Type: Improvement
> Reporter: Yuan Luo
> Assignee: Yuan Luo
> Priority: Major
> Labels: pull-request-available
> Fix For: 3.4.0, 3.3.4
> Time Spent: 2h
> Remaining Estimate: 0h
>
> jQuery 3.6.0 was released a few months ago:
> http://blog.jquery.com/2021/03/02/jquery-3-6-0-released/
> We can upgrade jquery-3.5.1.min.js to jquery-3.6.0.min.js in the Hadoop project.

[GitHub] [hadoop] steveloughran merged pull request #4495: HADOOP-18044. Hadoop - Upgrade to jQuery 3.6.0 (#3791)

steveloughran merged PR #4495:

URL: https://github.com/apache/hadoop/pull/4495

[jira] [Work logged] (HADOOP-18215) Enhance WritableName to be able to return aliases for classes that use serializers

[

https://issues.apache.org/jira/browse/HADOOP-18215?focusedWorklogId=784339=com.atlassian.jira.plugin.system.issuetabpanels:worklog-tabpanel#worklog-784339

]

ASF GitHub Bot logged work on HADOOP-18215:

---

Author: ASF GitHub Bot

Created on: 23/Jun/22 19:21

Start Date: 23/Jun/22 19:21

Worklog Time Spent: 10m

Work Description: hadoop-yetus commented on PR #4215:

URL: https://github.com/apache/hadoop/pull/4215#issuecomment-1164781759

:confetti_ball: **+1 overall**

| Vote | Subsystem | Runtime | Logfile | Comment |

|:----:|----------:|--------:|:--------:|:-------:|

| +0 :ok: | reexec | 0m 44s | | Docker mode activated. |

_ Prechecks _ |

| +1 :green_heart: | dupname | 0m 0s | | No case conflicting files found. |

| +0 :ok: | codespell | 0m 0s | | codespell was not available. |

| +0 :ok: | detsecrets | 0m 0s | | detect-secrets was not available. |

| +1 :green_heart: | @author | 0m 0s | | The patch does not contain any @author tags. |

| +1 :green_heart: | test4tests | 0m 0s | | The patch appears to include 2 new or modified test files. |

_ trunk Compile Tests _ |

| +1 :green_heart: | mvninstall | 38m 41s | | trunk passed |

| +1 :green_heart: | compile | 23m 6s | | trunk passed with JDK Private Build-11.0.15+10-Ubuntu-0ubuntu0.20.04.1 |

| +1 :green_heart: | compile | 21m 1s | | trunk passed with JDK Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |

| +1 :green_heart: | checkstyle | 1m 17s | | trunk passed |

| +1 :green_heart: | mvnsite | 1m 41s | | trunk passed |

| +1 :green_heart: | javadoc | 1m 12s | | trunk passed with JDK Private Build-11.0.15+10-Ubuntu-0ubuntu0.20.04.1 |

| +1 :green_heart: | javadoc | 0m 46s | | trunk passed with JDK Private Build-1.8.0_312-8u312-b07-0ubuntu1~20.04-b07 |

| +1 :green_heart: | spotbugs | 2m 44s | | trunk passed |

| +1 :green_heart: | shadedclient | 22m 33s | | branch has no errors when building and testing our client artifacts. |

_ Patch Compile Tests _ |

| +1 :green_heart: | mvninstall | 0m 59s | | the patch passed |

| +1 :green_heart: | compile | 23m 18s | | the patch passed with JDK