[GitHub] spark pull request #22329: [SPARK-25328][PYTHON] Add an example for having t...

Github user asfgit closed the pull request at: https://github.com/apache/spark/pull/22329 --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #22329: [SPARK-25328][PYTHON] Add an example for having t...

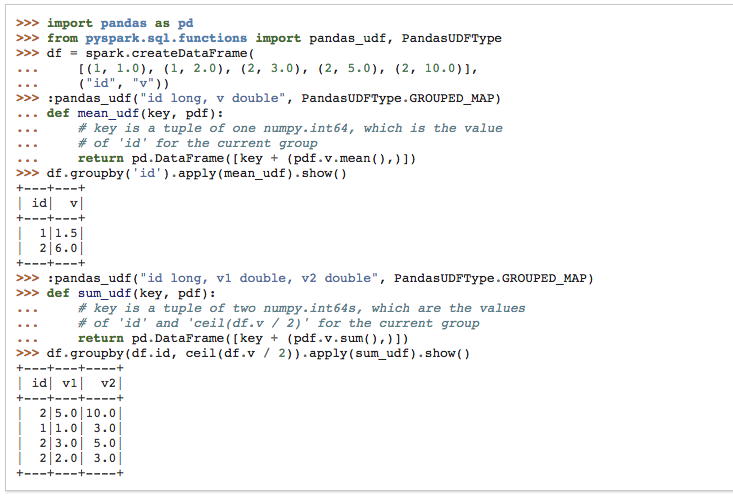

Github user BryanCutler commented on a diff in the pull request:

https://github.com/apache/spark/pull/22329#discussion_r215345817

--- Diff: python/pyspark/sql/functions.py ---
@@ -2804,6 +2804,22 @@ def pandas_udf(f=None, returnType=None, functionType=None):
| 1|1.5|
| 2|6.0|
+---+---+
+ >>> @pandas_udf(
+ ..."id long, additional_key double, v double",
--- End diff --

Sorry, I know you just changed it, but I think just naming the column "ceil(v1 / 2)" with a type `long` would be a little more clear. Although "additional_key" is ok too, if you guys want to keep that.

[GitHub] spark pull request #22329: [SPARK-25328][PYTHON] Add an example for having t...

Github user icexelloss commented on a diff in the pull request:

https://github.com/apache/spark/pull/22329#discussion_r215267320

--- Diff: python/pyspark/sql/functions.py ---
@@ -2804,6 +2804,22 @@ def pandas_udf(f=None, returnType=None, functionType=None):
| 1|1.5|
| 2|6.0|
+---+---+
+ >>> @pandas_udf(
+ ..."id long, additional_key double, v double",
--- End diff --

Do you mind changing the type of additional_key to long? It seems like the type coercion here is not necessary.

[GitHub] spark pull request #22329: [SPARK-25328][PYTHON] Add an example for having t...

Github user icexelloss commented on a diff in the pull request:

https://github.com/apache/spark/pull/22329#discussion_r214940744

--- Diff: python/pyspark/sql/functions.py ---

@@ -2804,6 +2804,20 @@ def pandas_udf(f=None, returnType=None, functionType=None):

| 1|1.5|

| 2|6.0|

+---+---+

+ >>> @pandas_udf("id long, v1 double, v2 double", PandasUDFType.GROUPED_MAP) # doctest: +SKIP

--- End diff --

It took me a while to realize `v1` is a grouping key. It is also a bit uncommon to use a double value as a grouping key. How about something like this?

`id long, additional_key long, v double`


[GitHub] spark pull request #22329: [SPARK-25328][PYTHON] Add an example for having t...

GitHub user HyukjinKwon opened a pull request:

https://github.com/apache/spark/pull/22329

[SPARK-25328][PYTHON] Add an example for having two columns as the grouping key in group aggregate pandas UDF

## What changes were proposed in this pull request?

This PR proposes to add another example for multiple grouping keys in group aggregate pandas UDF, since users could still find this feature confusing.

## How was this patch tested?

Manually tested and documentation built.

You can merge this pull request into a Git repository by running:

$ git pull https://github.com/HyukjinKwon/spark SPARK-25328

Alternatively you can review and apply these changes as the patch at:

https://github.com/apache/spark/pull/22329.patch

To close this pull request, make a commit to your master/trunk branch with (at least) the following in the commit message:

This closes #22329

commit 36a7ccc37374a42a2c9cf67f3f1748df638eb937
Author: hyukjinkwon
Date: 2018-09-04T10:00:34Z

Add an example for having two columns as the grouping key in group aggregate pandas UDF
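
For readers following the thread, the idea of a two-column grouping key can be sketched without Spark. The snippet below is only a plain-Python emulation, not the example added by the pull request: the sample rows, the helper names `group_by_two_keys` and `sum_group`, and the choice of `ceil(v / 2)` as the second key (borrowed from the review comments) are all assumptions made for illustration. It shows that each group is identified by a tuple of two integer values and that the per-group function can return the key columns followed by an aggregate of `v`.

```python
import math
from collections import defaultdict

# Hypothetical sample rows of (id, v); not taken from the pull request.
rows = [(1, 1.0), (1, 2.0), (2, 3.0), (2, 5.0), (2, 10.0)]


def group_by_two_keys(rows):
    """Group the v values by the two-column key (id, ceil(v / 2))."""
    groups = defaultdict(list)
    for id_, v in rows:
        # math.ceil returns an int, so both key parts are integer-typed.
        key = (id_, math.ceil(v / 2))
        groups[key].append(v)
    return groups


def sum_group(key, values):
    """Stand-in for a per-group function taking (key, data).

    Returns the two key columns followed by the sum of v for the group.
    """
    return key + (sum(values),)


# Apply the per-group function to every group and sort for stable output.
result = sorted(sum_group(k, vs) for k, vs in group_by_two_keys(rows).items())
for row in result:
    print(row)
```

In this emulation the second key is an integer, which is in line with the reviewers' suggestion to declare the additional key column as `long` rather than `double`.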