shahidki31 commented on a change in pull request #25369: [SPARK-28638][WebUI] Task summary metrics are wrong when there are running tasks

URL: https://github.com/apache/spark/pull/25369#discussion_r312271621

##########

File path: core/src/main/scala/org/apache/spark/status/AppStatusStore.scala

##########

 @@ -156,7 +162,8 @@ private[spark] class AppStatusStore(
      // cheaper for disk stores (avoids deserialization).
      val count = {
        Utils.tryWithResource(
 -        if (store.isInstanceOf[InMemoryStore]) {
 +        if (isInMemoryStore) {

Review comment:

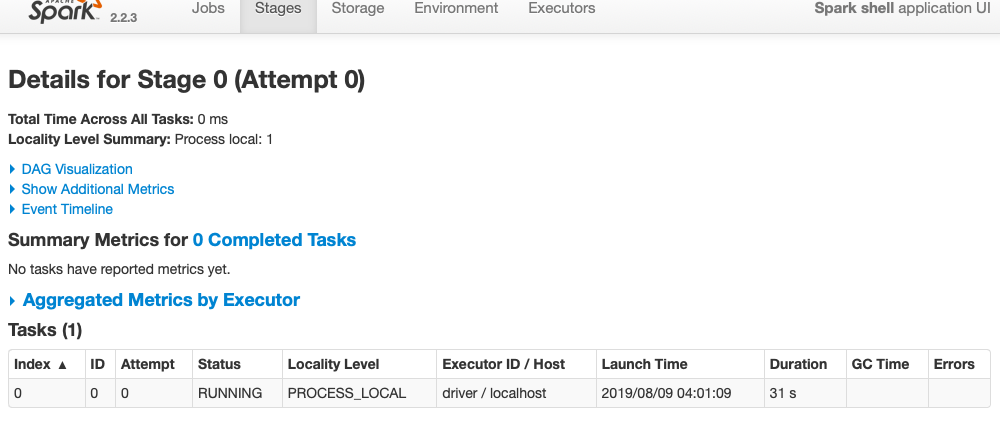

@vanzin In Spark 2.2, as the line linked below shows, the task summary does not
count the metrics of running tasks. That was the intent behind #23088 (which
eventually fixes this issue as well).

https://github.com/apache/spark/blob/7c7d7f6a878b02ece881266ee538f3e1443aa8c1/core/src/main/scala/org/apache/spark/ui/jobs/StagePage.scala#L340

I also verified this locally with:

`sc.parallelize(1 to 160, 1).map(i => Thread.sleep(1000)).collect()`
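
To make the intended behavior concrete, here is a minimal sketch of summary quantiles computed over finished tasks only. It is not the actual `AppStatusStore` code: `TaskInfo`, `TaskSummarySketch`, `quantile`, and `durationQuantiles` are hypothetical names, the status strings are assumed, and the index rule (`min(floor(q * n), n - 1)`) is an assumption chosen for illustration.

```scala
// Hypothetical, simplified task model; not Spark's real TaskData class.
final case class TaskInfo(id: Long, status: String, durationMs: Long)

object TaskSummarySketch {
  // Pick the value at index min(floor(q * n), n - 1) from an already sorted sequence.
  // The index rule is an assumption made for this sketch.
  def quantile(sorted: IndexedSeq[Long], q: Double): Long = {
    require(sorted.nonEmpty, "quantile needs at least one value")
    val idx = math.min((q * sorted.size).toLong, sorted.size - 1L).toInt
    sorted(idx)
  }

  // Only tasks with status "SUCCESS" contribute; running tasks are skipped,
  // so their partial (often zero) metrics cannot skew the summary.
  def durationQuantiles(tasks: Seq[TaskInfo], qs: Seq[Double]): Option[Seq[Long]] = {
    val finished = tasks
      .filter(_.status == "SUCCESS")
      .map(_.durationMs)
      .sorted
      .toIndexedSeq
    if (finished.isEmpty) None else Some(qs.map(q => quantile(finished, q)))
  }
}
```

With two finished tasks (100 ms and 200 ms) and one running task reporting 0 ms, the minimum in this sketch is 100 ms rather than 0 ms, which is the behavior described above for Spark 2.2.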

