[GitHub] [spark] shahidki31 commented on a change in pull request #25369: [SPARK-28638][WebUI] Task summary metrics are wrong when there are running tasks

shahidki31 commented on a change in pull request #25369: [SPARK-28638][WebUI]

Task summary metrics are wrong when there are running tasks

URL: https://github.com/apache/spark/pull/25369#discussion_r312271621

##

File path: core/src/main/scala/org/apache/spark/status/AppStatusStore.scala

##

@@ -156,7 +162,8 @@ private[spark] class AppStatusStore(

// cheaper for disk stores (avoids deserialization).

val count = {

Utils.tryWithResource(

-if (store.isInstanceOf[InMemoryStore]) {

+if (isInMemoryStore) {

Review comment:

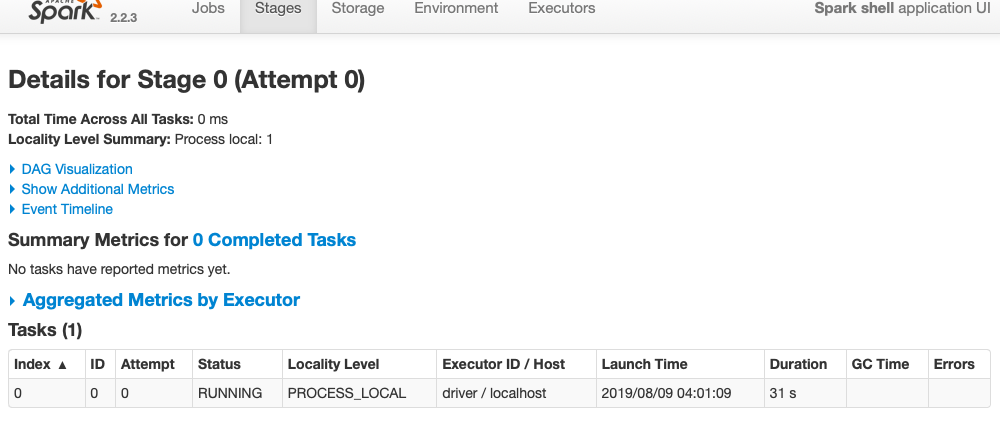

@vanzin In Spark 2.2, as per the line linked below, the summary doesn't count the metrics of a running task. That was the intent behind #23088 (which eventually fixes this issue as well).

https://github.com/apache/spark/blob/7c7d7f6a878b02ece881266ee538f3e1443aa8c1/core/src/main/scala/org/apache/spark/ui/jobs/StagePage.scala#L340

I also verified this locally with:

`sc.parallelize(1 to 160, 1).map( i => Thread.sleep(1000)).collect()`

This is an automated message from the Apache Git Service.

To respond to the message, please log on to GitHub and use the

URL above to go to the specific comment.

For queries about this service, please contact Infrastructure at:

us...@infra.apache.org

With regards,

Apache Git Services

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] [spark] shahidki31 commented on a change in pull request #25369: [SPARK-28638][WebUI] Task summary metrics are wrong when there are running tasks

shahidki31 commented on a change in pull request #25369: [SPARK-28638][WebUI]

Task summary metrics are wrong when there are running tasks

URL: https://github.com/apache/spark/pull/25369#discussion_r312271621

##

File path: core/src/main/scala/org/apache/spark/status/AppStatusStore.scala

##

@@ -156,7 +162,8 @@ private[spark] class AppStatusStore(

// cheaper for disk stores (avoids deserialization).

val count = {

Utils.tryWithResource(

-if (store.isInstanceOf[InMemoryStore]) {

+if (isInMemoryStore) {

Review comment:

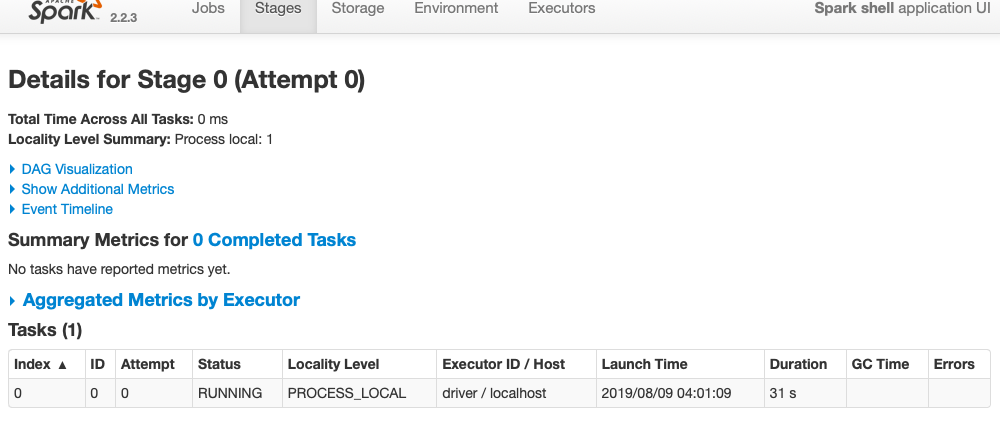

@vanzin In Spark-2.2, as per this line, it doesn't count metrics of a

running task.

https://github.com/apache/spark/blob/7c7d7f6a878b02ece881266ee538f3e1443aa8c1/core/src/main/scala/org/apache/spark/ui/jobs/StagePage.scala#L340

verified locally also,

`sc.parallelize(1 to 160, 1).map( i => Thread.sleep(1000)).collect()`


[GitHub] [spark] shahidki31 commented on a change in pull request #25369: [SPARK-28638][WebUI] Task summary metrics are wrong when there are running tasks

shahidki31 commented on a change in pull request #25369: [SPARK-28638][WebUI]

Task summary metrics are wrong when there are running tasks

URL: https://github.com/apache/spark/pull/25369#discussion_r311719400

##

File path: core/src/main/scala/org/apache/spark/status/AppStatusStore.scala

##

@@ -156,7 +162,8 @@ private[spark] class AppStatusStore(

// cheaper for disk stores (avoids deserialization).

val count = {

Utils.tryWithResource(

-if (store.isInstanceOf[InMemoryStore]) {

+if (isInMemoryStore) {

Review comment:

@gengliangwang If I understand correctly, #23088 actually fixes this issue, right? (At least in the history server case.)

`count` always filters out the running tasks, because `ExecutorRunTime` is defined only for finished tasks. But `scan tasks` contains all the tasks, including running tasks.

https://github.com/apache/spark/blob/a59fdc4b5783be591a236bfc60d1107caa818412/core/src/main/scala/org/apache/spark/status/AppStatusStore.scala#L169

https://github.com/apache/spark/blob/a59fdc4b5783be591a236bfc60d1107caa818412/core/src/main/scala/org/apache/spark/status/AppStatusStore.scala#L263-L265

So reverting #23088, and hence this PR, would not fix the issue? Please correct me if I am wrong.
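A toy model of the mismatch described above may help. It assumes that quantile positions are derived from `count` and then looked up in the scanned values. The `Task` class, the `0` placeholder for a running task, and the index formula are illustrative assumptions, not the actual `AppStatusStore` code.

```scala
object SummaryMismatchSketch {
  // Hypothetical stand-in for a stored task; None means the task is still running.
  final case class Task(id: Long, executorRunTime: Option[Long])

  // Mimics `count`: only tasks with a defined run time (finished tasks) are counted.
  def finishedCount(tasks: Seq[Task]): Int =
    tasks.count(_.executorRunTime.isDefined)

  // Mimics a scan over all tasks: running tasks contribute a placeholder of 0 (assumption).
  def scanAll(tasks: Seq[Task]): IndexedSeq[Long] =
    tasks.map(_.executorRunTime.getOrElse(0L)).sorted.toIndexedSeq

  // A scan restricted to finished tasks, for comparison.
  def scanFinished(tasks: Seq[Task]): IndexedSeq[Long] =
    tasks.flatMap(_.executorRunTime).sorted.toIndexedSeq

  // Looks up quantile q at a position computed from `count` (assumed formula).
  def quantile(sorted: IndexedSeq[Long], count: Int, q: Double): Long = {
    val idx = math.min((q * count).toLong, count - 1L).toInt
    sorted(idx)
  }
}
```

With four finished tasks (run times 100, 200, 300, 400) and four running tasks, `count` is 4. Indexing the full scan at the median position returns a placeholder from a running task, while indexing the finished-only scan returns 300. In this model, the summary is only correct when the count and the scanned values cover the same set of tasks.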






[GitHub] [spark] shahidki31 commented on a change in pull request #25369: [SPARK-28638][WebUI] Task summary metrics are wrong when there are running tasks

shahidki31 commented on a change in pull request #25369: [SPARK-28638][WebUI]

Task summary metrics are wrong when there are running tasks

URL: https://github.com/apache/spark/pull/25369#discussion_r311479709

##

File path: core/src/main/scala/org/apache/spark/status/AppStatusStore.scala

##

@@ -156,7 +162,8 @@ private[spark] class AppStatusStore(

// cheaper for disk stores (avoids deserialization).

val count = {

Utils.tryWithResource(

-if (store.isInstanceOf[InMemoryStore]) {

+if (isInMemoryStore) {

Review comment:

Thanks @gengliangwang. The history server uses the `InMemory` store by default. For the disk store case, this isn't an optimal way of finding successful tasks. I have yet to raise a PR to support the disk store case.

I think you just need to add:

`isInMemoryStore: Boolean = listener.isDefined || store.isInstanceOf[InMemoryStore]`

I will test your code with all the scenarios.

In any case, this would be temporary, as we need to support the disk store as well.
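A self-contained sketch of how the suggested flag behaves, on the assumption that a live application wraps its in-memory store in another store type, so that the `isInstanceOf` check alone would miss it. All classes here (`KVStore`, `TrackingWrapper`, `DiskBackedStore`, `Listener`, `StatusStore`) are hypothetical stand-ins, not Spark's real types.

```scala
object StoreCheckSketch {
  trait KVStore
  final class InMemoryStore extends KVStore
  // Hypothetical disk-backed store.
  final class DiskBackedStore extends KVStore
  // Hypothetical wrapper that hides the underlying store type (assumed live-UI setup).
  final class TrackingWrapper(val underlying: KVStore) extends KVStore
  // Hypothetical live listener; assumed present only for a running application.
  final class Listener

  final class StatusStore(val store: KVStore, val listener: Option[Listener] = None) {
    // The suggested check: a live listener implies an in-memory store,
    // otherwise fall back to inspecting the store type directly.
    val isInMemoryStore: Boolean =
      listener.isDefined || store.isInstanceOf[InMemoryStore]
  }
}
```

In this model, a wrapped store with a listener and a bare `InMemoryStore` without one are both treated as in-memory, while a disk-backed store without a listener is not.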
