[GitHub] spark pull request #20803: [SPARK-23653][SQL] Show sql statement in spark SQ...

Github user LantaoJin closed the pull request at: https://github.com/apache/spark/pull/20803 --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #20803: [SPARK-23653][SQL] Show sql statement in spark SQ...

Github user LantaoJin commented on a diff in the pull request:

https://github.com/apache/spark/pull/20803#discussion_r176320495

--- Diff: sql/core/src/main/scala/org/apache/spark/sql/SparkSession.scala

---

@@ -635,7 +637,8 @@ class SparkSession private(

* @since 2.0.0

*/

def sql(sqlText: String): DataFrame = {

-Dataset.ofRows(self, sessionState.sqlParser.parsePlan(sqlText))

+Dataset.ofRows(self, sessionState.sqlParser.parsePlan(sqlText),

+ substitutor.substitute(sqlText))

--- End diff --

> the following case shows a misleading and wrong SQL statement instead of

real executed SQL plan.

Yes. We know this, so current implementation which bind sql text to DF is

not good.

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #20803: [SPARK-23653][SQL] Show sql statement in spark SQ...

Github user dongjoon-hyun commented on a diff in the pull request:

https://github.com/apache/spark/pull/20803#discussion_r176316669

--- Diff: sql/core/src/main/scala/org/apache/spark/sql/SparkSession.scala

---

@@ -635,7 +637,8 @@ class SparkSession private(

* @since 2.0.0

*/

def sql(sqlText: String): DataFrame = {

-Dataset.ofRows(self, sessionState.sqlParser.parsePlan(sqlText))

+Dataset.ofRows(self, sessionState.sqlParser.parsePlan(sqlText),

+ substitutor.substitute(sqlText))

--- End diff --

BTW, in general, the initial SQL texts easily become meaningless when

another operations are added. In your example, the following case shows a

misleading and wrong SQL statement instead of *real executed SQL plan*.

```scala

val df = spark.sql("x")

df.filter(...).collect() // shows sql text "x"

```

As another example, please try the following. It will show you `select a,b

from t1`.

```

scala> spark.sql("select a,b from t1").select("a").show

```

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #20803: [SPARK-23653][SQL] Show sql statement in spark SQ...

Github user dongjoon-hyun commented on a diff in the pull request:

https://github.com/apache/spark/pull/20803#discussion_r176313994

--- Diff: sql/core/src/main/scala/org/apache/spark/sql/SparkSession.scala

---

@@ -635,7 +637,8 @@ class SparkSession private(

* @since 2.0.0

*/

def sql(sqlText: String): DataFrame = {

-Dataset.ofRows(self, sessionState.sqlParser.parsePlan(sqlText))

+Dataset.ofRows(self, sessionState.sqlParser.parsePlan(sqlText),

+ substitutor.substitute(sqlText))

--- End diff --

You may want to refactor this PR into `ParserExtension` and UI part. I

think that will be less intrusive than the current implementation.

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #20803: [SPARK-23653][SQL] Show sql statement in spark SQ...

Github user dongjoon-hyun commented on a diff in the pull request:

https://github.com/apache/spark/pull/20803#discussion_r176313767

--- Diff: sql/core/src/main/scala/org/apache/spark/sql/SparkSession.scala

---

@@ -635,7 +637,8 @@ class SparkSession private(

* @since 2.0.0

*/

def sql(sqlText: String): DataFrame = {

-Dataset.ofRows(self, sessionState.sqlParser.parsePlan(sqlText))

+Dataset.ofRows(self, sessionState.sqlParser.parsePlan(sqlText),

+ substitutor.substitute(sqlText))

--- End diff --

Hi, @LantaoJin .

What you need is just grapping the initial SQL text here, you can use Spark

extension. Please refer [Spark Atlas

Connector](https://github.com/hortonworks-spark/spark-atlas-connector/blob/master/spark-atlas-connector/src/main/scala/com/hortonworks/spark/atlas/sql/SparkExtension.scala)

for a sample code.

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #20803: [SPARK-23653][SQL] Show sql statement in spark SQ...

Github user LantaoJin commented on a diff in the pull request:

https://github.com/apache/spark/pull/20803#discussion_r176102381

--- Diff: sql/core/src/main/scala/org/apache/spark/sql/Dataset.scala ---

@@ -166,20 +168,28 @@ private[sql] object Dataset {

class Dataset[T] private[sql](

@transient val sparkSession: SparkSession,

@DeveloperApi @InterfaceStability.Unstable @transient val

queryExecution: QueryExecution,

-encoder: Encoder[T])

+encoder: Encoder[T],

+val sqlText: String = "")

--- End diff --

I have decoupled the sqlText with sql execution. In current implementation,

when user invoke spark.sql(xx), it will create a new

SparkListenerSQLTextCaptured event to listenerbus. Then in

SQLAppStatusListener, the information will be stored and all the sql sentences

will display in AllExecutionPage in order with submission time, instead of in

each ExecutionPage. I will upload the commit after testing.

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #20803: [SPARK-23653][SQL] Show sql statement in spark SQ...

Github user LantaoJin commented on a diff in the pull request:

https://github.com/apache/spark/pull/20803#discussion_r175975380

--- Diff: sql/core/src/main/scala/org/apache/spark/sql/Dataset.scala ---

@@ -166,20 +168,28 @@ private[sql] object Dataset {

class Dataset[T] private[sql](

@transient val sparkSession: SparkSession,

@DeveloperApi @InterfaceStability.Unstable @transient val

queryExecution: QueryExecution,

-encoder: Encoder[T])

+encoder: Encoder[T],

+val sqlText: String = "")

--- End diff --

Your speculation is almost right. First call val df = spark.sql(), then

separates the sql text with pattern matching to there type: count, limit and

other. if count, then invoke the df.showString(2,20). if limit, just invoke

df.limit(1).foreach, the last type other will do noting.

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #20803: [SPARK-23653][SQL] Show sql statement in spark SQ...

Github user cloud-fan commented on a diff in the pull request:

https://github.com/apache/spark/pull/20803#discussion_r175885690

--- Diff: sql/core/src/main/scala/org/apache/spark/sql/Dataset.scala ---

@@ -166,20 +168,28 @@ private[sql] object Dataset {

class Dataset[T] private[sql](

@transient val sparkSession: SparkSession,

@DeveloperApi @InterfaceStability.Unstable @transient val

queryExecution: QueryExecution,

-encoder: Encoder[T])

+encoder: Encoder[T],

+val sqlText: String = "")

--- End diff --

> spark-submit --master yarn-cluster --class com.ebay.SQLFramework -s

biz.sql

How does `com.ebay.SQLFramework` process the sql file? just call

`spark.sql().show` or other stuff?

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #20803: [SPARK-23653][SQL] Show sql statement in spark SQ...

Github user LantaoJin commented on a diff in the pull request:

https://github.com/apache/spark/pull/20803#discussion_r175683700

--- Diff: sql/core/src/main/scala/org/apache/spark/sql/Dataset.scala ---

@@ -166,20 +168,28 @@ private[sql] object Dataset {

class Dataset[T] private[sql](

@transient val sparkSession: SparkSession,

@DeveloperApi @InterfaceStability.Unstable @transient val

queryExecution: QueryExecution,

-encoder: Encoder[T])

+encoder: Encoder[T],

+val sqlText: String = "")

--- End diff --

Thanks for your review. I agree this comment. Before the discuss, let me

reproduce the scenario our company met. Team A developed a framework to submit

application with sql sentences in a file

> spark-submit --master yarn-cluster --class com.ebay.SQLFramework -s

biz.sql

In the biz.sql, there are many sql sentences like

> create or replace temporary view view_a select xx from table

${old_db}.table_a where dt=${check_date};

> insert overwrite table ${new_db}.table_a select xx from view_a join

${new_db}.table_b;

> ...

There is no case like

`val df = spark.sql("x")`

`spark.range(10).collect()`

`df.filter(..).count() `

Team B (Platform) need to capture the really sql sentences which are

executed in whole cluster, as the sql files from Team A contains many

variables. A better way is recording the really sql sentence in EventLog.

Ok, back to the discussion. The original purpose is to display the sql

sentence which user inputs. `spark.range(10).collect()` isn't a sql sentence

user inputs, either `df.filter(..).count() `. Only "x" is. So I have two

proposals.

1. Change the display behavior, only displays the sql which can trigger

action. like "create table", "insert overwrite", etc. Do not care about the

select sentence. That won't propagate sql text any more. The test case above

won't show anything in SQL ui.

2. Add a SQLCommandEvent and post an event with sql sentence in method

SparkSession.sql(), then in the EventLoggingListener, just logging this to

eventlog.

3. Open another ticket to add a command option `--sqlfile biz.sql` in

spark-submit command. biz.sql must be a file consist by sql sentence. Base this

implementation, not only client mode but also cluster mode can use pure sql.

How do you think? @cloud-fan

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #20803: [SPARK-23653][SQL] Show sql statement in spark SQ...

Github user cloud-fan commented on a diff in the pull request:

https://github.com/apache/spark/pull/20803#discussion_r175609377

--- Diff: sql/core/src/main/scala/org/apache/spark/sql/Dataset.scala ---

@@ -166,20 +168,28 @@ private[sql] object Dataset {

class Dataset[T] private[sql](

@transient val sparkSession: SparkSession,

@DeveloperApi @InterfaceStability.Unstable @transient val

queryExecution: QueryExecution,

-encoder: Encoder[T])

+encoder: Encoder[T],

+val sqlText: String = "")

--- End diff --

And how does the SQL shell execute commands? like `SELECT * FROM ...`, does

it display all the rows or add a LIMIT before displaying? Generally we should

not propagate sql text, as a new DataFrame usually means the plan is changed,

the SQL text is not accurate anymore.

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #20803: [SPARK-23653][SQL] Show sql statement in spark SQ...

Github user cloud-fan commented on a diff in the pull request:

https://github.com/apache/spark/pull/20803#discussion_r175608866

--- Diff: sql/core/src/main/scala/org/apache/spark/sql/Dataset.scala ---

@@ -166,20 +168,28 @@ private[sql] object Dataset {

class Dataset[T] private[sql](

@transient val sparkSession: SparkSession,

@DeveloperApi @InterfaceStability.Unstable @transient val

queryExecution: QueryExecution,

-encoder: Encoder[T])

+encoder: Encoder[T],

+val sqlText: String = "")

--- End diff --

what's the exact rule you defined to decide whether or not we should

propagate the sql text?

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #20803: [SPARK-23653][SQL] Show sql statement in spark SQ...

Github user cloud-fan commented on a diff in the pull request:

https://github.com/apache/spark/pull/20803#discussion_r174677799

--- Diff: sql/core/src/main/scala/org/apache/spark/sql/SparkSession.scala

---

@@ -635,6 +637,7 @@ class SparkSession private(

* @since 2.0.0

*/

def sql(sqlText: String): DataFrame = {

+SQLExecution.setSqlText(substitutor.substitute(sqlText))

--- End diff --

It's better to answer the list first. Strictly speaking, except `collect`,

most of the dataframe operations will create another dataframe and execute.

e.g. `.count()` creates a new dataframe with aggregate, `.show()` creates a new

dataframe with limit.

It seems like `df.count` should not show the SQL, but `df.show` should as

it's very common.

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #20803: [SPARK-23653][SQL] Show sql statement in spark SQ...

Github user LantaoJin commented on a diff in the pull request:

https://github.com/apache/spark/pull/20803#discussion_r174662445

--- Diff: sql/core/src/main/scala/org/apache/spark/sql/SparkSession.scala

---

@@ -635,6 +637,7 @@ class SparkSession private(

* @since 2.0.0

*/

def sql(sqlText: String): DataFrame = {

+SQLExecution.setSqlText(substitutor.substitute(sqlText))

--- End diff --

@cloud-fan, Bind sql text to DataFrame is a good idea. Trying to fix the

list you mentioned above.

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #20803: [SPARK-23653][SQL] Show sql statement in spark SQ...

Github user LantaoJin commented on a diff in the pull request:

https://github.com/apache/spark/pull/20803#discussion_r174662131

--- Diff:

sql/core/src/main/scala/org/apache/spark/sql/execution/SQLExecution.scala ---

@@ -34,6 +34,16 @@ object SQLExecution {

private val executionIdToQueryExecution = new ConcurrentHashMap[Long,

QueryExecution]()

+ private val executionIdToSqlText = new ConcurrentHashMap[Long, String]()

+

+ def setSqlText(sqlText: String): Unit = {

+executionIdToSqlText.putIfAbsent(_nextExecutionId.get(), sqlText)

+ }

+

+ def getSqlText(executionId: Long): String = {

+executionIdToSqlText.get(executionId)

--- End diff --

It shows nothing

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #20803: [SPARK-23653][SQL] Show sql statement in spark SQ...

Github user cloud-fan commented on a diff in the pull request:

https://github.com/apache/spark/pull/20803#discussion_r174600066

--- Diff: sql/core/src/main/scala/org/apache/spark/sql/SparkSession.scala

---

@@ -635,6 +637,7 @@ class SparkSession private(

* @since 2.0.0

*/

def sql(sqlText: String): DataFrame = {

+SQLExecution.setSqlText(substitutor.substitute(sqlText))

--- End diff --

I think the most difficult part is, how to connect the SQL text to the

execution. I don't think the current one works, e.g.

```

val df = spark.sql("x")

spark.range(10).count()

```

You set the SQL text for the next execution, but the next execution may not

happen on this dataframe.

I think SQL text should belong to a DataFrame, and executions on this

dataframe show the SQL text. e.g.

```

val df = spark.sql("xx")

df.collect() // this should show sql text on the UI

df.count() // shall we shall sql text?

df.show() // this adds a limit on top of the query plan, but ideally we

should shall the sql text.

df.filter(...).collect() // how about this?

```

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #20803: [SPARK-23653][SQL] Show sql statement in spark SQ...

Github user cloud-fan commented on a diff in the pull request:

https://github.com/apache/spark/pull/20803#discussion_r174596852

--- Diff:

sql/core/src/main/scala/org/apache/spark/sql/execution/SQLExecution.scala ---

@@ -34,6 +34,16 @@ object SQLExecution {

private val executionIdToQueryExecution = new ConcurrentHashMap[Long,

QueryExecution]()

+ private val executionIdToSqlText = new ConcurrentHashMap[Long, String]()

+

+ def setSqlText(sqlText: String): Unit = {

+executionIdToSqlText.putIfAbsent(_nextExecutionId.get(), sqlText)

+ }

+

+ def getSqlText(executionId: Long): String = {

+executionIdToSqlText.get(executionId)

--- End diff --

what if this execution doesn't have SQL text?

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #20803: [SPARK-23653][SQL] Show sql statement in spark SQ...

Github user jerryshao commented on a diff in the pull request:

https://github.com/apache/spark/pull/20803#discussion_r174109194

--- Diff:

sql/core/src/main/scala/org/apache/spark/sql/execution/SQLExecution.scala ---

@@ -34,6 +34,16 @@ object SQLExecution {

private val executionIdToQueryExecution = new ConcurrentHashMap[Long,

QueryExecution]()

+ private val executionIdToSqlText = new ConcurrentHashMap[Long, String]()

+

+ def setSqlText(sqlText: String): Unit = {

+executionIdToSqlText.putIfAbsent(_nextExecutionId.get(), sqlText)

--- End diff --

Ohh, I see. Sorry I misunderstood it.

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #20803: [SPARK-23653][SQL] Show sql statement in spark SQ...

Github user LantaoJin commented on a diff in the pull request:

https://github.com/apache/spark/pull/20803#discussion_r174105959

--- Diff:

sql/core/src/main/scala/org/apache/spark/sql/execution/SQLExecution.scala ---

@@ -34,6 +34,16 @@ object SQLExecution {

private val executionIdToQueryExecution = new ConcurrentHashMap[Long,

QueryExecution]()

+ private val executionIdToSqlText = new ConcurrentHashMap[Long, String]()

+

+ def setSqlText(sqlText: String): Unit = {

+executionIdToSqlText.putIfAbsent(_nextExecutionId.get(), sqlText)

--- End diff --

`setSqlText` is invoked before `withNewExecutionId`. First time

`_nextExecutionId` is 0 by default, so `setSqlText` store (0, x) in map. When

`withNewExecutionId` is invoked, the code `val executionId =

SQLExecution.nextExecutionId` increase the execution id and return the previous

execution id, 0. Then `val sqlText = getSqlText(executionId)` will return the

sql text which 0 mapped, x. Next time when `setSqlText` is invoked,

_nextExecutionId.get() return the next value, 1. So the new sql text store with

in map like (1, y).

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #20803: [SPARK-23653][SQL] Show sql statement in spark SQ...

Github user jerryshao commented on a diff in the pull request:

https://github.com/apache/spark/pull/20803#discussion_r174038997

--- Diff:

sql/core/src/main/scala/org/apache/spark/sql/execution/SQLExecution.scala ---

@@ -34,6 +34,16 @@ object SQLExecution {

private val executionIdToQueryExecution = new ConcurrentHashMap[Long,

QueryExecution]()

+ private val executionIdToSqlText = new ConcurrentHashMap[Long, String]()

+

+ def setSqlText(sqlText: String): Unit = {

+executionIdToSqlText.putIfAbsent(_nextExecutionId.get(), sqlText)

--- End diff --

Does the executionId used here match the current execution? IIUC, the

execution id is incremented in `withNewExecutionId`, and the one you used here

mostly refers to the previous execution, please correct me if I'm wrong.

---

-

To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org

For additional commands, e-mail: reviews-h...@spark.apache.org

[GitHub] spark pull request #20803: [SPARK-23653][SQL] Show sql statement in spark SQ...

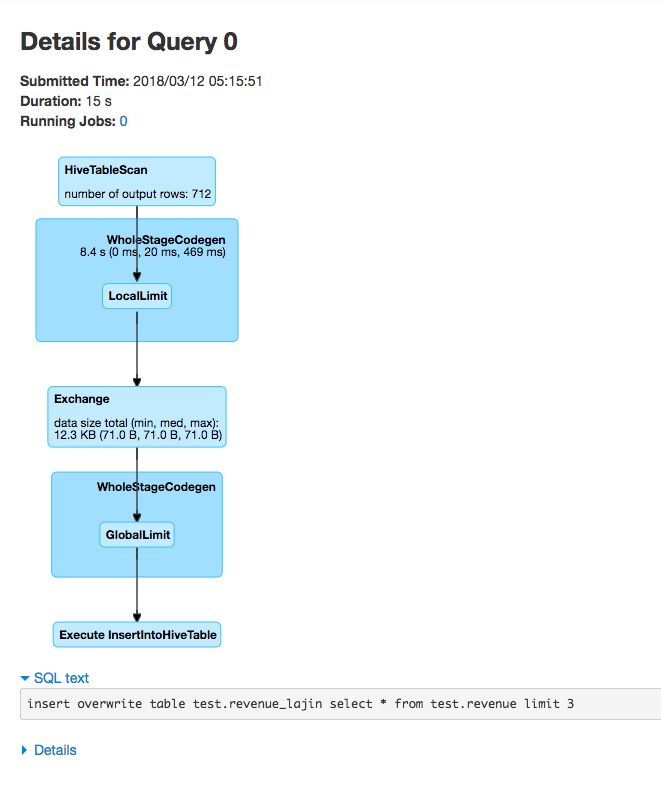

GitHub user LantaoJin opened a pull request: https://github.com/apache/spark/pull/20803 [SPARK-23653][SQL] Show sql statement in spark SQL UI ## What changes were proposed in this pull request? [SPARK-4871](https://issues.apache.org/jira/browse/SPARK-4871) had already added the sql statement in job description for using spark-sql. But it has some problems: 1. long sql statement cannot be displayed in description column.  2. sql statement submitted in spark-shell or spark-submit cannot be covered.  ## How was this patch tested?  You can merge this pull request into a Git repository by running: $ git pull https://github.com/LantaoJin/spark SPARK-23653 Alternatively you can review and apply these changes as the patch at: https://github.com/apache/spark/pull/20803.patch To close this pull request, make a commit to your master/trunk branch with (at least) the following in the commit message: This closes #20803 commit 9d2098db3e082de0deb1c6cfea7527d04f3f5d03 Author: LantaoJin Date: 2018-03-12T13:43:06Z [SPARK-23653][SQL] Show sql statement in spark SQL UI --- - To unsubscribe, e-mail: reviews-unsubscr...@spark.apache.org For additional commands, e-mail: reviews-h...@spark.apache.org